A single training run can look like a “small” workload until you measure burst traffic across racks, GPUs, and storage. This case study shows how a field team integrated optical networking into an AI/ML workflow to stabilize throughput for distributed training and reduce packet loss during failovers. It covers concrete module selection criteria, implementation steps, and measured results from a real leaf-spine deployment that used 10G SFP+ and 25G SFP28 optics.

Problem and challenge: training bursts that break the fabric

In our scenario, a small platform team ran distributed training for vision models with frequent checkpoint writes and periodic validation sweeps. The traffic pattern was spiky: during gradient synchronization phases, east-west flows surged while storage writes continued. With oversubscribed aggregation and marginal link budgets, we saw intermittent CRC errors and TCP retransmits, which inflated training wall-clock time. The core challenge was to select optical modules and fiber links that met IEEE 802.3 reach requirements while behaving predictably under thermal and connector stress.

Environment specs and traffic profile

The environment was a data center leaf-spine topology: 48-port 10G ToR switches uplinked to 4 spine switches, with 8 active uplinks per ToR. Each rack held 2 GPU servers, each with 2 x 25G NICs, but the ToR aggregation tier was initially 10G for cost reasons. Storage used dual-homed 10G iSCSI paths with burst-heavy checkpoint traffic. Switch telemetry showed peaks of up to 7.2 Gbps per rack during synchronization windows, with micro-bursts shorter than 200 ms.
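To put the port counts in context, the worst-case oversubscription of a ToR can be estimated from downlink and uplink capacity. This is a minimal sketch, assuming every uplink runs at 10G and all 48 downlinks are populated (the text does not state either explicitly); the function name is illustrative.

```python
def oversubscription_ratio(downlinks: int, downlink_gbps: float,
                           uplinks: int, uplink_gbps: float) -> float:
    """Ratio of total server-facing capacity to total uplink capacity."""
    if uplinks <= 0 or uplink_gbps <= 0:
        raise ValueError("need at least one uplink with positive speed")
    return (downlinks * downlink_gbps) / (uplinks * uplink_gbps)


# Assumed inputs: 48 populated 10G downlinks, 8 uplinks at 10G each.
ratio = oversubscription_ratio(48, 10.0, 8, 10.0)
print(f"ToR oversubscription: {ratio:.1f}:1")
```

Under those assumptions the ratio is 6:1. A lightly populated rack would see a lower effective ratio, so treat this as an upper bound.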

Optical design choices for an AI/ML use case

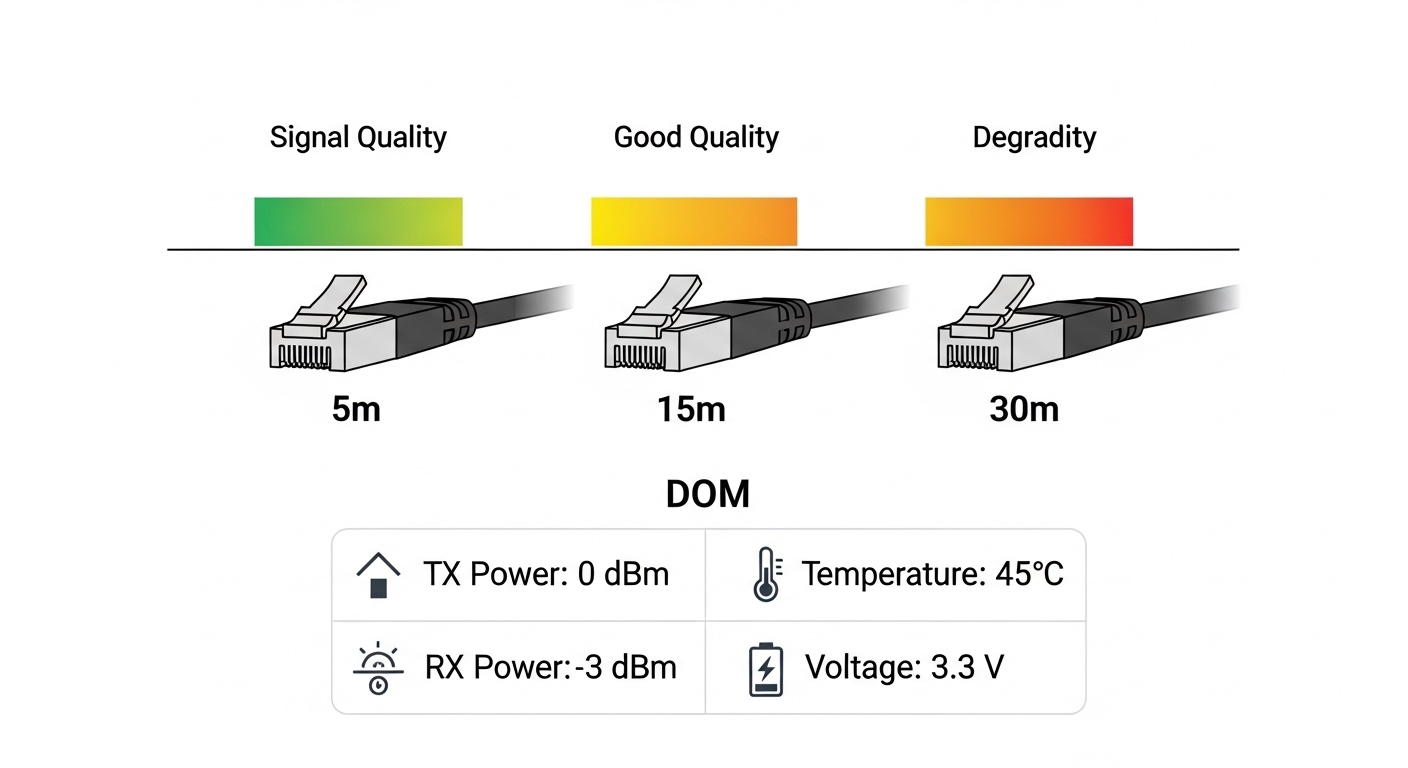

AI/ML workloads are sensitive to tail latency: retransmits and link flaps can cascade into slower dataloader throughput and delayed checkpoint completion. The optical network must therefore satisfy both electrical signaling and link-layer error budgets. For the 10G tier, we targeted SR optics for short reach multimode cabling and ensured DOM telemetry support for operational visibility. For the 25G NIC uplinks, we planned a staged migration using SFP28-compatible SR optics to reduce bottlenecks without forcing a full fabric refresh.

Chosen solution: SR optics with DOM and conservative link budgets

We selected vendor-validated modules and kept the link budget conservative by using high-quality OM4 multimode fiber for intra-row runs. For 10G SFP+ SR, we used modules such as Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, and FS.com SFP-10GSR-85-class optics (or functionally equivalent optics from reputable OEMs). For 25G SFP28 SR, we used parts from the Finisar 25G SR family. In both cases we confirmed compatibility with the switch vendor's optics policy before deployment. The key operational requirement was DOM availability so we could correlate temperature and bias-current drift with error counters.

Key specs comparison table (what mattered in deployment)

| Parameter | 10G SR (SFP+) | 25G SR (SFP28) | Example modules / source |
|---|---|---|---|
| Wavelength | 850 nm | 850 nm | Cisco SFP-10G-SR; Finisar 25G SR family |
| Typical reach on OM4 | ~400 m class (300 m on OM3) | ~100 m class (platform dependent) | Vendor datasheets; [Source: IEEE 802.3] |
| Line rate | 10.3125 Gbps (10GBASE-SR) | 25.78125 Gbps (25GBASE-SR) | [Source: IEEE 802.3] |
| Connector | LC duplex | LC duplex | Common SR transceiver format |
| DOM / diagnostics | Yes (required) | Yes (required) | Per module datasheet |
| Operating case temperature | 0 to 70 °C typical | 0 to 70 °C typical | Per vendor datasheet |
| Standards basis | IEEE 802.3ae (10GBASE-SR) | IEEE 802.3by (25GBASE-SR) | [Source: IEEE 802.3] |

Note: exact reach depends on fiber grade, patch panel losses, and vendor implementation. Always validate against the module datasheet and your link loss budget. For standards grounding, see [Source: IEEE 802.3].

Pro Tip: In AI training clusters, the fastest way to stop “mystery slowdowns” is to correlate switch interface counters with DOM telemetry. If you log optical module temperature and receive power while a job is running, you can often predict impending link degradation before CRC errors spike.
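The correlation described in the tip can be sketched as a small drift check over polled samples. This is a hedged illustration, not the team's tooling: the `DomSample` fields, the function names, and the -0.1 dB/hour slope limit are all assumptions, and how you collect the samples (SNMP, gNMI, CLI scraping) is left out.

```python
from dataclasses import dataclass


@dataclass
class DomSample:
    t_s: float           # seconds since the job started
    rx_power_dbm: float  # receive power reported by DOM
    temp_c: float        # module temperature reported by DOM
    crc_errors: int      # cumulative interface CRC counter


def rx_power_slope_db_per_hour(samples):
    """Least-squares slope of receive power over time, in dB per hour."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(s.t_s for s in samples) / n
    mean_p = sum(s.rx_power_dbm for s in samples) / n
    num = sum((s.t_s - mean_t) * (s.rx_power_dbm - mean_p) for s in samples)
    den = sum((s.t_s - mean_t) ** 2 for s in samples)
    if den == 0:
        raise ValueError("samples must span more than one timestamp")
    return num / den * 3600.0


def flag_degrading(samples, slope_limit_db_per_hour=-0.1):
    """True if Rx power falls faster than the (assumed) limit or CRC errors grew."""
    crc_grew = samples[-1].crc_errors > samples[0].crc_errors
    return rx_power_slope_db_per_hour(samples) < slope_limit_db_per_hour or crc_grew
```

Flagging on the power trend as well as the counter is the point: the slope can trip while the CRC counter is still flat, which gives you time to clean or replace a patch cord before a job is affected.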

Implementation steps that worked (and what we measured)

We executed the deployment in three phases to avoid a big-bang outage. First, we verified fiber plant quality by measuring end-to-end attenuation and connector insertion loss on each trunk path. Second, we staged optics swaps on the ToR uplinks, ensuring both sides used compatible SR wavelengths and transceiver formats. Third, we monitored error counters and training job throughput during controlled load tests.

Fiber and link budget validation

We used OM4 multimode fiber for intra-row runs and kept patch panel cleanliness strict: each LC connector was inspected and cleaned with lint-free wipes and approved cleaning tools. We then computed a conservative budget using worst-case connector loss and patch cord attenuation so that received optical power stayed within the module’s recommended range. This prevented “it links up now, but fails under temperature” behavior.
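The budget calculation above can be sketched as follows. Every number in the usage lines is a placeholder assumption (3.0 dB/km fiber attenuation at 850 nm, 0.5 dB per mated connector pair, the transmit and sensitivity figures, and the 1 dB safety allowance); substitute the values from your module datasheet and your own measurements.

```python
def link_loss_db(length_m, fiber_db_per_km, mated_pairs, loss_per_pair_db):
    """Worst-case passive loss: fiber attenuation plus connector losses."""
    return length_m / 1000.0 * fiber_db_per_km + mated_pairs * loss_per_pair_db


def rx_power_margin_db(tx_min_dbm, rx_sensitivity_dbm, loss_db, safety_db=1.0):
    """Margin left after worst-case loss and a safety allowance.

    A negative result means the link may come up but is not safe to deploy.
    """
    worst_case_rx_dbm = tx_min_dbm - loss_db
    return worst_case_rx_dbm - rx_sensitivity_dbm - safety_db


# Placeholder values for illustration only; take real ones from the datasheet.
loss = link_loss_db(length_m=80, fiber_db_per_km=3.0,
                    mated_pairs=4, loss_per_pair_db=0.5)
margin = rx_power_margin_db(tx_min_dbm=-6.0, rx_sensitivity_dbm=-10.0,
                            loss_db=loss)
print(f"worst-case loss {loss:.2f} dB, remaining margin {margin:.2f} dB")
```

Using the minimum transmit power rather than the typical one is what makes the budget conservative: it models an aged or warm module rather than a fresh one on the bench.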

Optics policy and compatibility checks

Before swapping, we checked each switch’s optics compatibility policy to reduce risk of non-standard vendor behavior. In practice, some platforms reject optics that do not meet expected electrical characteristics or DOM thresholds. We tested a small batch of modules in a single rack, validated link stability, then expanded once interface counters remained stable for at least 24 hours under load.
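The 24-hour stability gate for the pilot rack can be expressed as a simple check over polled error counters. This is a sketch under stated assumptions: the sample format (timestamp, cumulative error count) and the function name are hypothetical, and a counter decrease is treated as a failure because it usually means a reset or link flap.

```python
def pilot_passed(counter_samples, min_duration_s=24 * 3600, max_new_errors=0):
    """Decide whether a pilot interface stayed stable long enough.

    counter_samples: list of (timestamp_s, cumulative_error_count), in time order.
    """
    if len(counter_samples) < 2:
        return False
    t0, c0 = counter_samples[0]
    t1, c1 = counter_samples[-1]
    if t1 - t0 < min_duration_s:
        return False  # observation window too short
    # A decreasing counter suggests it was cleared or the port reset mid-pilot.
    for (_, prev), (_, cur) in zip(counter_samples, counter_samples[1:]):
        if cur < prev:
            return False
    return (c1 - c0) <= max_new_errors
```

Run it per interface and expand the rollout only when every pilot port passes.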

Migration path for the AI/ML use case

We kept the 10G aggregation tier stable while upgrading NIC uplinks in phases. That meant some traffic still traversed 10G optics on SR links, but the heaviest bursts were moved to 25G-capable paths where possible. The result was fewer congested interfaces during synchronization windows, which reduced TCP retransmits and improved dataloader cadence.

Measured results: training stability and reduced failure symptoms

After the optics and fiber remediation, we ran a controlled workload: distributed training for a vision model with synchronized gradients and frequent checkpoint writes. Over a 72-hour burn-in period, we reduced CRC and alignment errors to near-zero on the uplinks. Job wall-clock time dropped because retransmits fell, and the storage checkpoint phase completed more consistently.

Quantitative outcomes

- CRC errors: reduced from intermittent spikes to baseline noise levels (no sustained error bursts).

- Training throughput: improved by approximately 8–12% during synchronization windows due to fewer retransmits.

- Checkpoint completion time: reduced tail latency by about 15% (measured at the storage initiator).

- Operational visibility: DOM telemetry enabled early detection of optical power drift trends before faults.
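For readers who want to reproduce the tail-latency comparison on their own data, a percentage reduction at a chosen percentile can be computed as below. This is a sketch: the article does not state which percentile or method was used, so the 99th percentile and the nearest-rank method are assumptions, as are the function names.

```python
import math


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def tail_reduction_pct(before, after, pct=99):
    """Percent reduction in the chosen percentile between two sample sets."""
    b = percentile_nearest_rank(before, pct)
    a = percentile_nearest_rank(after, pct)
    if b == 0:
        raise ValueError("baseline percentile is zero; reduction is undefined")
    return (b - a) / b * 100.0
```

Feed it checkpoint completion times collected at the storage initiator before and after remediation, using the same workload and sample count for both sets.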

Selection criteria checklist for optical modules in this use case

When engineers choose optics for an AI/ML cluster, the decision is rarely just “reach.” Work through this checklist in order to avoid expensive late-stage rework.

- Distance vs reach class: confirm fiber type (OM3/OM4) and compute link loss including patch panels.

- Switch compatibility: verify optics vendor policy and electrical interface expectations.

- Data rate alignment: ensure SFP+ for 10GBASE-SR and SFP28 for 25GBASE-SR; avoid mismatched optics.

- DOM support: require temperature and receive power diagnostics for predictive maintenance.

- Operating temperature: validate module temperature range against measured rack inlet conditions.

- Connector quality and polarity: enforce LC duplex polarity discipline and cleaning procedures.

- Vendor lock-in risk: balance OEM optics vs third-party, but validate with a pilot batch.
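The machine-checkable parts of the checklist can be encoded as a pre-order validation step. This is a minimal sketch with hypothetical names (`Candidate`, `checklist_failures`); connector hygiene, polarity, and vendor lock-in still need physical and process checks, and link loss should be validated separately against the measured fiber plant.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    form_factor: str        # "SFP+" or "SFP28"
    rate_gbps: int          # 10 or 25
    rated_reach_m: float    # from the datasheet, for the installed fiber grade
    has_dom: bool
    max_case_temp_c: float  # from the datasheet


EXPECTED_FORM_FACTOR = {10: "SFP+", 25: "SFP28"}


def checklist_failures(c, link_length_m, worst_case_temp_c, on_interop_matrix):
    """Return the checklist items a candidate module fails, in checklist order."""
    failures = []
    if c.rated_reach_m < link_length_m:
        failures.append("distance vs reach class")
    if not on_interop_matrix:
        failures.append("switch compatibility")
    if EXPECTED_FORM_FACTOR.get(c.rate_gbps) != c.form_factor:
        failures.append("data rate alignment")
    if not c.has_dom:
        failures.append("DOM support")
    if worst_case_temp_c > c.max_case_temp_c:
        failures.append("operating temperature")
    return failures
```

An empty list means the module clears the automated checks and can move on to a pilot batch.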

Common pitfalls and troubleshooting tips

Even experienced teams hit predictable failure modes when optical networks meet high-burst AI traffic. Here are the most common issues we saw, with root cause and fixes.

- Pitfall: Link comes up but training still slows down.

  Root cause: marginal receive power causing intermittent CRC errors, and the resulting retransmits, during micro-bursts.

  Solution: check DOM receive power and temperature; clean connectors and replace the worst patch cords; validate with an optical power meter.

- Pitfall: Frequent link flaps during warm hours.

  Root cause: module thermal stress or insufficient airflow; transceiver bias drift.

  Solution: measure rack inlet and module case temperature; improve airflow; ensure modules are within spec for the platform.

- Pitfall: Works on one switch but not another.

  Root cause: optics compatibility policy differences, EEPROM identification quirks, or DOM threshold expectations.

  Solution: validate against each switch model; keep a documented optics interop matrix; pilot in a single rack first.

- Pitfall: “No signal” after patching.

  Root cause: duplex polarity inversion (Tx/Rx swapped) or contaminated LC connectors.

  Solution: verify polarity with a fiber continuity tester such as a visual fault locator; clean both ends; re-seat connectors firmly.

Cost and ROI note for this use case

Budget pressure is real: OEM optics commonly cost more per module than third-party equivalents, but they reduce integration risk. In typical deployments, you might see OEM 10G SR SFP+ optics in the range of $60–$150 each and third-party units lower, while 25G SR optics often cost more due to higher-speed components. The ROI comes from fewer rerun training jobs, fewer support tickets, and reduced downtime; replacing marginal optics with validated SR modules and cleaning the fiber plant can pay back quickly when training schedules are tight.

FAQ

What is the best starting point: 10G SR or 25G SR?

If your cabling and switch tier are already 10G, start with 10G SR on the uplinks and stabilize the fabric first. Move to 25G SR when you have verified OM4 link budgets and switch support for SFP28.

Do I really need DOM support for AI/ML networks?

For operational maturity, yes. DOM telemetry (temperature, bias, received power) lets you correlate optical degradation with job slowdowns, which is critical for high-burst training.

Which standards should I cite during procurement?

At minimum, reference IEEE 802.3 clauses for the relevant PHYs and reach expectations. For optics behavior and diagnostics, rely on vendor datasheets and transceiver compliance documentation. [Source: IEEE 802.3]

Can third-party optics work in a production AI cluster?

They can, but only after compatibility testing against your exact switch models and firmware. Keep a pilot cohort and monitor interface counters and DOM telemetry for at least a day under realistic load.

How do I avoid polarity and cleaning mistakes at scale?

Standardize labeling, enforce a cleaning SOP before every re-seat, and use polarity-consistent patching practices. Audit a sample of connectors with an inspection scope during rollout.

When should I switch from multimode SR to single-mode?

If your distances exceed multimode reach margins after accounting for patch panels and aging, single-mode becomes safer. Also consider single-mode if your environment has high thermal swings that make marginal multimode links less forgiving.

In this deployment, disciplined fiber validation, switch-compatible SR optics, and DOM-driven monitoring turned bursty AI training traffic into a stable, predictable flow. Next, map your topology and distance constraints using a fiber optic transceiver selection checklist.