Industrial networks in plants, ports, and rail yards fail in predictable ways: connector contamination, thermal stress, and firmware or optics mismatches. This article helps reliability and network engineers choose industrial optical modules that fit IEEE 802.3 link budgets, survive environmental limits, and reduce failure-driven downtime. You will get practical selection criteria, a specs comparison table, common failure modes, and a short FAQ for procurement and operations teams.

Why industrial optical modules behave differently in the field

In enterprise rooms, you can often treat fiber transceivers as “plug and forget.” In harsh environments, the module is part of a system that includes enclosure airflow, vibration profile, cable routing, and grounding strategy. For example, a leaf-spine network in an aluminum smelter may see ambient swings from -10 C to 60 C near a conveyor motor and periodic mechanical shock from maintenance. In those conditions, marginal optics, weak thermal design, or insufficient EMC filtering can shrink the eye margin and raise BER, even when link power looks acceptable at room temperature.

Industrial-grade modules typically target wider operating temperature ranges, more robust mechanical housings, and tighter control of optical output power drift under thermal cycling. They also tend to include monitoring features (DOM or vendor-specific equivalents) that let operations teams detect aging before a hard failure. When evaluating vendors, treat the module as a reliability component with measurable acceptance criteria: optical output power, receiver sensitivity, and compliance with the relevant interface specifications (for SFP+, commonly SFF-8431 for the electrical interface and SFF-8432 for the mechanical form factor, as implemented by the host switch).

Pro Tip: Many field failures are not “dead optics,” but BER creeping upward due to connector contamination plus thermal expansion. If your switch shows link up but rising CRC errors after a temperature cycle, inspect and clean MPO/LC connectors before replacing modules.

Key specs that determine link success and reliability

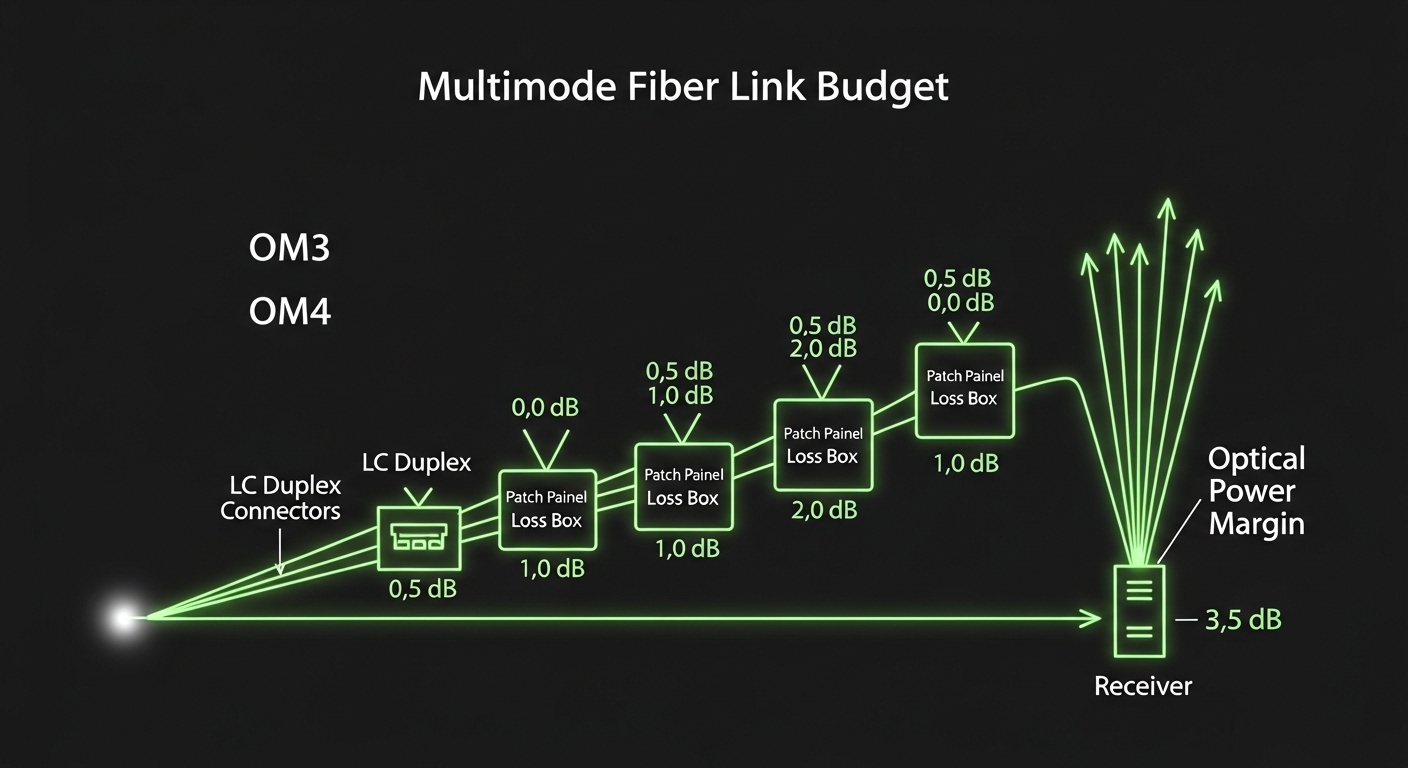

Before comparing part numbers, map your target link to the correct Ethernet PHY and reach class. IEEE 802.3 defines the optical PHY behavior, while vendor datasheets define the transceiver parameters that make the PHY work over your fiber plant. For multi-mode links, the effective reach depends on fiber bandwidth, modal dispersion (especially for OM3 vs OM4), and connector loss; for single-mode, it depends on span loss and chromatic dispersion tolerance at the selected wavelength.

Technical specifications table (common industrial choices)

The table below compares typical industrial optical module classes you will encounter when building or upgrading plant networks. Values are representative of widely sold products; always confirm the exact datasheet for your specific vendor and temperature grade.

| Module class | Typical wavelength | Reach target | Fiber type / connector | Data rate | Typical TX power class | Operating temperature | DOM / monitoring |
|---|---|---|---|---|---|---|---|
| 10G SFP+ SR | 850 nm | Up to 300 m (OM3) | MMF OM3/OM4, LC | 10G Ethernet | Low mW class | -40 C to 85 C (varies) | Often yes (DOM) |
| 10G SFP+ LR | 1310 nm | Up to 10 km | SMF, LC | 10G Ethernet | Higher mW class | -40 C to 85 C (varies) | Often yes (DOM) |
| 25G SFP28 SR | 850 nm | Up to 100 m (OM4) | MMF OM4, LC | 25G Ethernet | Low mW class | -40 C to 85 C (varies) | Commonly yes |
| 25G SFP28 LR | 1310 nm | Up to 10 km | SMF, LC | 25G Ethernet | Higher mW class | -40 C to 85 C (varies) | Often yes |
| 100G QSFP28 SR4 | 850 nm | Up to 100 m (OM4) | MMF OM4, MPO | 100G Ethernet | Low mW per lane | -40 C to 85 C (varies) | Commonly yes |

When you see “industrial” in marketing, validate it against the actual temperature and compliance claims in the datasheet and the module’s form factor spec. For example, a typical “industrial” 10G SFP+ LR may be sold with -40 C to 85 C operation, while standard commercial-grade variants usually guarantee only 0 C to 70 C, and parts labeled “extended” often fall somewhere in between. That difference can be the margin that keeps the laser bias stable during cold-start and high-ambient runtime.

For electrical compatibility, check whether the module is designed for the host’s expected SFP/QSFP behavior and whether it supports DOM signaling in the expected format. Host switches vary in how they interpret thresholds and alarms; a module with DOM can still be “compatible but noisy” if threshold defaults do not match the host’s monitoring logic.
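The “compatible but noisy” case can be illustrated with a minimal sketch. It assumes a four-level alarm/warning scheme of the kind DOM implementations commonly expose; the `Thresholds` class, the `classify` function, and every dBm value below are hypothetical and not taken from any datasheet or switch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    """Four-level alarm/warning limits, in the same unit as the reading."""
    low_alarm: float
    low_warn: float
    high_warn: float
    high_alarm: float


def classify(value: float, limits: Thresholds) -> str:
    """Return the severity a monitoring system would assign to one reading."""
    if value <= limits.low_alarm or value >= limits.high_alarm:
        return "alarm"
    if value <= limits.low_warn or value >= limits.high_warn:
        return "warning"
    return "ok"


# Hypothetical RX power limits in dBm: module defaults vs. a stricter site policy.
module_defaults = Thresholds(low_alarm=-18.0, low_warn=-16.0, high_warn=1.0, high_alarm=2.0)
site_policy = Thresholds(low_alarm=-14.0, low_warn=-12.0, high_warn=0.0, high_alarm=1.0)

rx_power_dbm = -13.0  # illustrative reading
print(classify(rx_power_dbm, module_defaults))  # ok
print(classify(rx_power_dbm, site_policy))      # warning
```

The same reading is quiet under one threshold set and raises a warning under the other, which is why it helps to record both sets in the compatibility matrix.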

Industrial deployment scenario: choosing modules for a rail yard

Consider a rail yard with a 3-tier Ethernet architecture: access switches at trackside cabinets, aggregation switches in a maintenance building, and a core switch in a hardened control room. You run 25G SFP28 for uplinks between cabinets and aggregation over single-mode fiber with an average span loss of 0.35 dB/km, plus 1.0 dB connector loss per end and 2.0 dB patch panel losses. The farthest uplink is 7.5 km, so you need a module with adequate transmitter power and receiver sensitivity for that distance at the operating temperature extremes. The cabinets sit outdoors with forced ventilation that can stall during heatwaves, so the modules must tolerate elevated internal temperature without exceeding the vendor’s specified laser bias stability limits.
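The loss arithmetic for the farthest uplink can be sketched as follows. The sketch assumes one connector at each end of the span and treats the patch panel loss as a single lumped figure; the transmitter power and receiver sensitivity values are placeholders, not datasheet numbers, and should be replaced with the worst-case figures for your module at temperature extremes.

```python
def total_link_loss_db(length_km, fiber_db_per_km, connector_db_per_end,
                       patch_panel_db, splice_db=0.0):
    """Sum passive losses for a point-to-point span with a connector at each end."""
    return (length_km * fiber_db_per_km
            + 2 * connector_db_per_end
            + patch_panel_db
            + splice_db)


def link_margin_db(tx_min_dbm, rx_sensitivity_dbm, loss_db, penalties_db=0.0):
    """Power budget (worst-case TX minus RX sensitivity) minus losses and penalties."""
    return (tx_min_dbm - rx_sensitivity_dbm) - loss_db - penalties_db


# Values from the rail yard scenario above.
loss = total_link_loss_db(length_km=7.5, fiber_db_per_km=0.35,
                          connector_db_per_end=1.0, patch_panel_db=2.0)
print(f"Estimated passive loss: {loss:.3f} dB")  # 6.625 dB

# Placeholder module figures (assumptions for illustration only).
margin = link_margin_db(tx_min_dbm=-5.0, rx_sensitivity_dbm=-14.0, loss_db=loss)
print(f"Margin with placeholder optics: {margin:.3f} dB")
```

At 6.625 dB of passive loss the span is well inside a 10 km reach class by distance, but the connector and patch panel terms dominate the budget, so the margin depends heavily on the actual module figures and on measured plant loss.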

In this scenario, the engineering team selects 25G SFP28 LR modules with DOM support so operations can trend optical power and detect drift. During commissioning, they run a full BER validation using the switch’s built-in optics diagnostics and a controlled traffic generator, then repeat tests after thermal soak. They also standardize on a cleaning SOP for LC connectors and MPO trunks, because the first month of outages typically correlates with installation dust rather than optical aging. The result is fewer truck-roll incidents and more predictable maintenance windows.
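When planning the BER validation, it helps to know how long an error-free run must last before it supports a BER claim. A minimal sketch, using the standard zero-error confidence relation n = -ln(1 - CL) / BER; the 1e-12 target and 95% confidence level are assumptions chosen for illustration, and the line rate is the 25.78125 Gb/s signaling rate of 25G Ethernet.

```python
import math


def bits_required(target_ber: float, confidence: float) -> float:
    """Error-free bits needed to claim BER below target_ber at the given confidence."""
    return -math.log(1.0 - confidence) / target_ber


def required_run_time_s(target_ber: float, confidence: float,
                        line_rate_bps: float) -> float:
    """Seconds of error-free traffic needed at a sustained line rate."""
    return bits_required(target_ber, confidence) / line_rate_bps


LINE_RATE_25G_BPS = 25.78125e9  # 25G Ethernet signaling rate

seconds = required_run_time_s(target_ber=1e-12, confidence=0.95,
                              line_rate_bps=LINE_RATE_25G_BPS)
print(f"Error-free run needed: about {seconds:.0f} s")
```

Under these assumptions the run is on the order of two minutes per link, which makes it practical to repeat the test after thermal soak. Any observed error extends the required duration, and a traffic generator that does not sustain line rate needs proportionally longer.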

Selection checklist engineers use before buying industrial optical modules

Procurement and reliability teams often disagree on what matters most. The best practice is to score candidates against a checklist that links technical feasibility to operational risk. Use the ordered factors below so you do not discover compatibility issues during installation.

- Distance and link budget: compute attenuation, connector loss, splice loss, and any patch panel penalty; verify module reach class against the IEEE 802.3 PHY requirements.

- Fiber type and bandwidth: for MMF, confirm OM3 vs OM4 and ensure the planned reach aligns with the module’s SR class; for SMF, confirm wavelength and dispersion tolerance.

- Host switch compatibility: confirm the form factor (SFP+, SFP28, QSFP28), electrical interface behavior, and DOM interpretation; test with at least one spare in the target switch.

- DOM support and monitoring thresholds: ensure the module provides optical power, temperature, and bias telemetry in a format the host can read and alert on.

- Operating temperature and thermal design margin: verify the module’s specified temperature range and consider enclosure airflow derating; include cold-start time and high-ambient runtime.

- EMC and mechanical robustness: evaluate vibration/shock claims for the module and the host cage; inspect grounding and cable strain relief.

- Vendor lock-in risk: weigh OEM modules versus qualified third-party modules; demand documented performance guarantees and a return/RMA process that meets your downtime tolerance.

- Reliability acceptance criteria: require burn-in or qualification data, and define your own acceptance tests (optical power checks, DOM alarms, and BER verification).
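One way to turn the checklist into a comparable score is a weighted sum with host compatibility as a hard gate. This is only a sketch: the factor names, the weights, the 0-5 rating scale, and both candidates are hypothetical and should be replaced with values your procurement and reliability teams agree on.

```python
# Hypothetical weights (sum to 1.0) for the scored checklist factors.
WEIGHTS = {
    "link_budget_margin": 0.25,
    "thermal_margin": 0.20,
    "dom_support": 0.15,
    "mechanical_emc": 0.15,
    "vendor_rma": 0.15,
    "qualification_data": 0.10,
}


def score(candidate: dict) -> float:
    """Weighted 0-5 score; a candidate that fails the host compatibility gate scores 0."""
    if not candidate["host_compatible"]:
        return 0.0
    return sum(WEIGHTS[name] * candidate["ratings"][name] for name in WEIGHTS)


# Illustrative candidates rated 0-5 per factor (made-up values).
module_a = {
    "host_compatible": True,
    "ratings": {name: 4 for name in WEIGHTS},
}
module_b = {
    "host_compatible": False,  # fails in the target switch, so ratings are moot
    "ratings": {name: 5 for name in WEIGHTS},
}

for label, candidate in (("module_a", module_a), ("module_b", module_b)):
    print(f"{label}: {score(candidate):.2f}")
```

Treating compatibility as a gate rather than a weighted factor reflects the checklist's intent: a module that does not work in the host cannot be rescued by a good price or strong datasheet numbers.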

Concrete part numbers to anchor compatibility discussions

In real networks, teams often standardize on known-good examples while validating industrial temperature grades. For instance, Cisco SFP-10G-SR is commonly referenced for 10G SR MMF links, while Finisar transceivers such as FTLX8571D3BCL are commonly used as compatible alternatives in many ecosystems. For 10G SR in multi-vendor deployments, FS.com also sells options such as SFP-10GSR-85, with industrial-grade variants depending on the SKU (confirm availability and temperature grade for the exact part). Treat these as starting points for your compatibility matrix, not as guarantees.

When you compare candidates, confirm wavelength (850 nm vs 1310 nm), reach class, and DOM presence. Also verify that the connector type matches your patch cords: LC for single links and MPO for high-density multi-lane QSFP28 SR implementations.

Reliability testing and quality controls for industrial optics

ISO 9001 thinking pushes you to define acceptance criteria and traceability, not just “buy a module.” For industrial optical modules, practical QA includes verifying that the vendor’s production controls match your environmental profile. A typical reliability test plan includes temperature cycling, optical power drift measurement, and post-test BER validation under representative link conditions.
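An acceptance check for that kind of test plan can be sketched in a few lines. The drift limit, BER limit, and sample readings below are hypothetical; take the real limits from your own acceptance criteria and the vendor datasheet.

```python
def power_drift_db(tx_power_dbm_readings):
    """Peak-to-peak TX power change across a temperature cycle, in dB."""
    return max(tx_power_dbm_readings) - min(tx_power_dbm_readings)


def passes_thermal_cycle(tx_power_dbm_readings, max_drift_db,
                         post_test_ber, ber_limit):
    """Pass only if drift stays within the limit and post-test BER meets the limit."""
    drift_ok = power_drift_db(tx_power_dbm_readings) <= max_drift_db
    ber_ok = post_test_ber <= ber_limit
    return drift_ok and ber_ok


# Illustrative TX power readings (dBm) logged at cold, ambient, and hot dwell points.
readings = [-2.4, -2.0, -2.1, -2.9]

print(f"Drift: {power_drift_db(readings):.1f} dB")  # 0.9 dB
print(passes_thermal_cycle(readings, max_drift_db=1.5,
                           post_test_ber=1e-13, ber_limit=1e-12))  # True
```

Keeping the raw readings alongside the pass/fail result gives you the traceable test evidence that ISO 9001-style records call for.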

In the field, we often see that “it passed in the lab” can still fail if the module was never validated for your connector and patch cord ecosystem. For example, a module might meet sensitivity specs at nominal temperature but underperform when connectors are slightly misaligned or contaminated. That is why you should include installation-grade controls: connector inspection with a scope, cleaning verification, and a standardized patch cord type.

For standards context, reference IEEE 802.3 for the Ethernet PHY behavior and vendor datasheets for link budgets and optical operating limits. For transceiver mechanical and electrical expectations, use the SFF specifications that match the form factor, such as SFF-8431/SFF-8432 for SFP+ and SFF-8636 for the QSFP28 management interface, along with SFF-8472 and the vendor’s DOM documentation for threshold interpretation. For broader reliability framing, ISO 9001 emphasizes risk-based planning and controlled production and service processes; apply it to your spares management, RMA workflow, and test evidence retention.

External references: the IEEE 802.3 standard and the SFF specifications for transceiver form factors and management interfaces.

Common mistakes and troubleshooting for industrial optical modules

Even experienced teams make predictable errors. Below are concrete failure modes you can use to shorten mean time to repair (MTTR).

Mistake 1: Assuming link up equals correct optical quality

Root cause: The link may establish due to sufficient power at room temperature, but BER can rise after thermal cycling or with slightly contaminated connectors. This often appears as intermittent CRC errors rather than a total link drop. Solution: Use DOM telemetry and switch counters to check for CRC/FCS errors, then clean connectors using approved lint-free wipes and solvent-free cleaning tools. Verify with a fiber scope and re-run a BER test after a temperature soak.

Mistake 2: Mixing MMF and SMF without confirming wavelength and reach class

Root cause: An SR module (850 nm) connected to the wrong fiber type (SMF) loses most of its launch power, because its multimode launch overfills the much smaller single-mode core, so the link either stays down or runs with almost no margin. Conversely, an LR module (1310 nm) launched into MMF excites multiple modes, and the resulting modal dispersion limits reach to far less than the SMF rating. Solution: Confirm fiber type labeling, wavelength compatibility, and patch cord origin. Use OTDR or a certified loss test to validate the actual installed plant loss.

Mistake 3: Buying “extended temperature” without validating the actual host behavior

Root cause: Some modules claim -40 C to 85 C operation, but the DOM alarm thresholds or reset behavior may not match the host switch’s expectations. The result is nuisance alarms, throttling behavior, or module resets under marginal thermal conditions. Solution: Validate candidate modules in the exact host model and firmware revision. Capture logs for module insertions and DOM alarms across the temperature range.

Mistake 4: Neglecting MPO polarity and lane mapping for QSFP28 SR4

Root cause: MPO polarity errors or incorrect lane mapping can produce link failures that look like “bad optics.” Solution: Verify MPO polarity using a polarity tester or by following the documented MPO method for your patching scheme. Re-terminate or re-map lanes before replacing transceivers.
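A polarity check can be reasoned about as a position mapping. The sketch below assumes a single 12-fiber MPO trunk between two SR4 modules, the common convention of transmit lanes on positions 1-4 and receive lanes on positions 9-12, and the usual description of Type A trunks as straight-through and Type B trunks as position-reversed. Cassettes, breakout harnesses, and Type C trunks are out of scope, so confirm against your own patching documentation.

```python
TX_POSITIONS = {1, 2, 3, 4}      # assumed SR4 transmit positions on the MPO-12
RX_POSITIONS = {9, 10, 11, 12}   # assumed SR4 receive positions on the MPO-12


def far_end_position(position: int, trunk_type: str) -> int:
    """Map a near-end fiber position to the far-end position for one trunk."""
    if not 1 <= position <= 12:
        raise ValueError("MPO-12 positions run from 1 to 12")
    if trunk_type == "A":   # straight-through: 1 -> 1, 12 -> 12
        return position
    if trunk_type == "B":   # reversed: 1 -> 12, 12 -> 1
        return 13 - position
    raise ValueError(f"unsupported trunk type: {trunk_type!r}")


def tx_lands_on_rx(trunk_type: str) -> bool:
    """True if every transmit lane arrives on a receive position at the far end."""
    return all(far_end_position(p, trunk_type) in RX_POSITIONS
               for p in TX_POSITIONS)


print(tx_lands_on_rx("A"))  # False: transmit lanes face transmit lanes
print(tx_lands_on_rx("B"))  # True: transmit lanes face receive lanes
```

Under these assumptions a straight-through trunk on its own leaves transmitters facing transmitters, which produces exactly the "bad optics" symptom described above even though both modules are healthy.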

Cost and ROI: OEM vs third-party in industrial optical modules

Pricing varies widely by data rate, reach, and temperature grade. As a realistic planning range, 10G SR SFP+ industrial modules can land around 25 to 80 USD each, while 25G SFP28 LR variants can be 150 to 450 USD depending on vendor and temperature bin. 100G QSFP28 SR4 modules typically cost more per unit than either.

ROI is driven less by purchase price and more by downtime and replacement logistics. If a module failure causes a truck roll or extended outage, even a small improvement in MTBF or a better RMA process can dominate total cost of ownership. Third-party modules can reduce unit cost, but only if you enforce qualification testing, maintain traceable acceptance criteria, and keep spares compatibility validated. For ISO 9001-aligned procurement, require documented performance evidence and define service-level expectations for RMAs.
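The trade-off can be made concrete with a simple annualized cost model. Every number below is a placeholder chosen to illustrate the arithmetic, not a claim about real OEM or third-party prices or failure rates; substitute your own purchase prices, observed failure rates, and per-incident costs.

```python
def annual_cost_usd(unit_price, units, annual_failure_rate,
                    cost_per_failure, amortization_years=5):
    """Amortized purchase cost plus expected yearly failure cost for a module fleet."""
    purchase = unit_price * units / amortization_years
    failures = units * annual_failure_rate * cost_per_failure
    return purchase + failures


# Placeholder fleets of 100 modules; cost_per_failure lumps truck roll and downtime.
option_x = annual_cost_usd(unit_price=400, units=100,
                           annual_failure_rate=0.01, cost_per_failure=5000)
option_y = annual_cost_usd(unit_price=200, units=100,
                           annual_failure_rate=0.02, cost_per_failure=5000)

print(f"Option X: {option_x:.0f} USD/year")
print(f"Option Y: {option_y:.0f} USD/year")
```

With these placeholder inputs the cheaper module ends up costing more per year because the failure term outweighs the purchase term. With a lower per-incident cost the result reverses, which is why the inputs need to come from your own incident history.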

FAQ: industrial optical modules in real procurement and operations

What does “industrial” mean for optical modules?

In practice, it usually means a wider specified operating temperature range, stronger mechanical design intent, and better control of optical output drift under thermal stress. Always verify the exact temperature range, DOM availability, and optical power and receiver sensitivity specs in the datasheet.

How do I confirm compatibility with my switch?

Check the form factor (SFP+, SFP28, QSFP28), DOM signaling support, and host switch transceiver compatibility guidance. Then validate with a small batch in the exact host model and firmware version before scaling deployment.

Are DOM readings reliable for predicting failures?

DOM telemetry is useful for trending laser bias, transmit power, and temperature, which can correlate with aging and link margin changes. However, thresholds vary by host and vendor, so align alarm thresholds with your acceptance tests rather than assuming defaults.
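As a rough illustration of trending, the sketch below fits a straight line to logged TX power and estimates how long until it crosses an alarm threshold. It assumes drift is approximately linear in dBm over the observation window, which is a simplification; the readings and the -4.0 dBm threshold are made up.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares slope and intercept for paired samples."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_until_threshold(days, tx_power_dbm, threshold_dbm):
    """Days after the last sample until the fitted trend reaches the threshold.

    Returns None when the trend is flat or rising, i.e. no crossing is projected.
    """
    slope, intercept = linear_fit(days, tx_power_dbm)
    if slope >= 0:
        return None
    crossing_day = (threshold_dbm - intercept) / slope
    return max(0.0, crossing_day - days[-1])


# Illustrative monthly DOM samples (day number, TX power in dBm).
sample_days = [0, 30, 60, 90]
sample_tx_dbm = [-2.0, -2.1, -2.2, -2.3]

remaining = days_until_threshold(sample_days, sample_tx_dbm, threshold_dbm=-4.0)
print(f"Projected days until threshold: {remaining:.0f}")
```

A projection like this is best used to schedule inspection, not to predict a failure date, since contamination or a thermal event can change the slope abruptly.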

Can I mix OEM and third-party industrial optical modules in the same network?

Yes, but only after you confirm electrical and optical compatibility, and after you test for correct DOM interpretation and stable link behavior. Mixed fleets increase validation workload, so maintain a versioned compatibility matrix and spares plan.

What is the fastest troubleshooting path for intermittent link issues?

Start with fiber inspection and cleaning, then check switch error counters and DOM optical power trends. Validate the link budget with measured loss (OTDR or certified attenuation tests) before replacing modules.

How should I plan spares for harsh sites?

Use your failure history and environmental severity to set stocking levels, and include at least one validated spare per critical link type. Track RMA turnaround times and keep cleaning tools and a fiber scope available to reduce MTTR.
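One common way to turn failure history into a stocking level is a Poisson model of failures during the replenishment lead time. The sketch below assumes a constant failure rate and independent failures; the fleet size, MTBF, and lead time are hypothetical inputs.

```python
import math


def spares_needed(units, mtbf_hours, lead_time_hours, confidence=0.95):
    """Smallest spare count covering failures over the lead time at the given confidence."""
    expected_failures = units * lead_time_hours / mtbf_hours
    term = math.exp(-expected_failures)  # Poisson probability of zero failures
    cumulative = term
    spares = 0
    while cumulative < confidence:
        spares += 1
        term *= expected_failures / spares  # next Poisson term
        cumulative += term
    return spares


# Hypothetical site: 200 modules of one type, 1,000,000 h MTBF, 30-day RMA lead time.
count = spares_needed(units=200, mtbf_hours=1_000_000, lead_time_hours=30 * 24)
print(f"Spares to stock: {max(1, count)}")
```

Taking `max(1, count)` keeps the result consistent with the rule of at least one validated spare per critical link type, even when the model says zero is statistically enough.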

If you treat industrial optical modules as a reliability-managed subsystem, you can reduce downtime, improve link margin visibility, and avoid costly rework. Next, review your fiber plant loss and host compatibility matrix, then validate candidates with a small controlled deployment using the selection checklist above.

Author bio: I am a reliability engineer who has validated SFP and QSFP optics in industrial cabinets using BER testing, thermal cycling, and connector contamination controls. I write QA-first deployment guidance aligned with ISO 9001 evidence practices and field MTBF optimization.