5G rollouts fail for surprisingly mundane reasons: a picky switch, a fiber that was “close enough,” or a transceiver that runs hot like it is auditioning for a space heater. This article collects practical case studies and translates them into engineering decisions you can reuse when building fronthaul and backhaul links. It helps network engineers, field techs, and procurement folks who need reliable optics with measurable performance, not vibes.

Fronthaul vs backhaul case studies: where optics win (and where they do not)

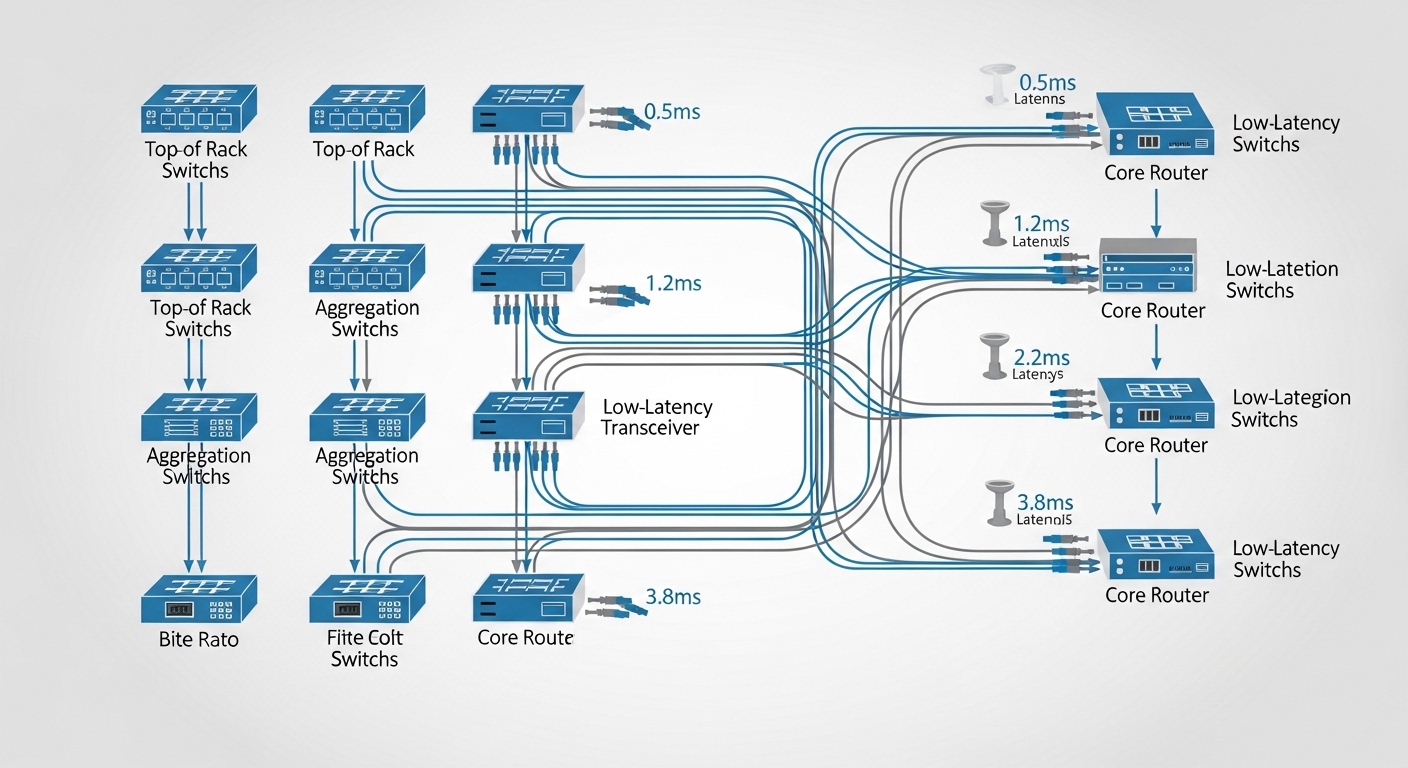

In real deployments, optical transceivers show up in two places: fronthaul (tight latency and timing budgets between radios and baseband) and backhaul (capacity aggregation toward the core). In one common pattern, operators use 25G/50G Ethernet optics over single-mode fiber (SMF) for backhaul, while fronthaul often leans on specialized timing-aware configurations and strict compliance with vendor optics behavior. The key lesson from multiple operator field notes is that the “right” transceiver depends less on marketing reach and more on whether the entire link budget and timing chain stay inside tolerance.

Typical backhaul case studies involve leaf-spine or TOR-to-aggregation topologies where 10G/25G uplinks are consolidated, then transported over SMF with LC connectors and strict link monitoring. Fronthaul case studies are more sensitive: fiber runs may be shorter in dense urban sites, but the requirements around jitter, deterministic behavior, and alarm handling are strict. The optics still matter, but the surrounding ecosystem (switch PHY behavior, timing distribution, and optics DOM alarms) often decides success or failure.

What engineers measure in the field

When I validate these links during deployments, I typically capture optical power levels (receive power in dBm), link error counters, and transceiver diagnostic events via DOM. For example, during acceptance testing of a 25G SMF link, a stable receive power window like -8 to -1 dBm (exact values depend on the transceiver and fiber plant) and low error rates over a 30-minute soak test are strong indicators the link will survive normal temperature swings. If the receive power is near the module’s minimum sensitivity, you can see intermittent CRC errors that vanish in the lab but reappear after the network is “done.”
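The acceptance check described above can be sketched as a small pass/fail function. This is a minimal illustration, not a standard procedure: the `SoakResult` fields, the function name, and the default thresholds (the -8 to -1 dBm window and 30-minute soak from the example, plus a zero-CRC-error limit) are assumptions that you would replace with values from your transceiver datasheet and acceptance plan.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class SoakResult:
    """Data captured during one soak test (field names are illustrative)."""
    duration_s: float          # how long the soak ran, in seconds
    rx_power_dbm: List[float]  # periodic DOM receive-power samples
    crc_errors: int            # CRC error counter delta over the soak


def accept_link(result: SoakResult,
                rx_min_dbm: float = -8.0,
                rx_max_dbm: float = -1.0,
                min_duration_s: float = 30 * 60,
                max_crc_errors: int = 0) -> Tuple[bool, List[str]]:
    """Return (passed, reasons); reasons is empty when the link passes."""
    reasons = []
    if result.duration_s < min_duration_s:
        reasons.append("soak shorter than required")
    if not result.rx_power_dbm:
        reasons.append("no receive-power samples")
    else:
        lowest = min(result.rx_power_dbm)
        highest = max(result.rx_power_dbm)
        if lowest < rx_min_dbm:
            reasons.append(f"rx power dipped to {lowest:.1f} dBm")
        if highest > rx_max_dbm:
            reasons.append(f"rx power peaked at {highest:.1f} dBm")
    if result.crc_errors > max_crc_errors:
        reasons.append(f"{result.crc_errors} CRC errors during soak")
    return (not reasons, reasons)


# Hypothetical readings: one healthy link, one sitting at the edge of the window.
good = SoakResult(duration_s=1800, rx_power_dbm=[-4.2, -4.3, -4.1], crc_errors=0)
marginal = SoakResult(duration_s=1800, rx_power_dbm=[-7.9, -8.4, -7.8], crc_errors=12)
print(accept_link(good))
print(accept_link(marginal))
```

Checking the minimum and maximum sample, rather than the average, is deliberate: intermittent dips are exactly what an average hides.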

Pro Tip: In many “mystery outage” case studies, the root cause is not the optics at all; it is connector contamination or micro-bending introduced during patch panel rework. Always inspect with a fiber microscope and re-test after any rack move. Transceivers faithfully report DOM alarms, but they cannot fix dirty glass.

Head-to-head: 10G SR vs 25G SR/ER vs 25G LR in real 5G backhaul

Engineers usually start with distance and bandwidth targets, then map them to transceiver families. In 5G backhaul case studies, the recurring trade-off is between cost per port, optical reach, and how much margin you preserve across a messy fiber plant. Short-reach (SR) modules over OM3/OM4 multimode fiber are common in data centers and near-site aggregation. Long-reach (LR) modules over SMF are common when you need to traverse campus or meet a rooftop-to-hub distance without re-terminating everything.

Below is a practical comparison using representative module classes you will see from vendors like Cisco, Finisar, and FS.com. Exact parameters vary by part number, but the decision logic stays consistent: pick the transceiver class that keeps you away from the edge of the optical budget and away from compatibility landmines.

| Module class (examples) | Typical wavelength | Data rate | Reach (typical) | Connector | Power (typical) | Operating temp | Best fit for |
|---|---|---|---|---|---|---|---|
| SFP+ 10G SR (e.g., Cisco SFP-10G-SR, Finisar/others) | 850 nm | 10G | ~300 m on OM3 / ~400 m on OM4 | LC duplex | ~1 W class | 0 to 70 °C (commercial) or -40 to 85 °C (extended, model dependent) | In-rack or short patch-panel runs |
| SFP28 25G SR (e.g., FS.com SFP-25G-SR, Finisar class) | 850 nm | 25G | ~70 m on OM3 / ~100 m on OM4 (class dependent) | LC duplex | ~1.5 to 2 W class | -5 to 70 °C typical (module dependent) | Data center and near-site aggregation |
| SFP28 25G ER (e.g., 25GBASE-ER class) | ~1310 nm | 25G | ~30 to 40 km (varies by spec) | LC duplex | ~2 W class | -40 to 85 °C (often available) | Campus backhaul, metro edges |
| SFP28 25G LR (e.g., 25GBASE-LR class) | ~1310 nm | 25G | ~10 km (varies by spec) | LC duplex | ~2 W class | -40 to 85 °C (often available) | SMF when you need margin without highest cost ER |

For standards context: Ethernet over fiber is governed by the IEEE 802.3 specifications for link behavior and signaling, while optics form factors and management are defined by the SFP/SFP28/QSFP multi-source agreements (for example, SFF-8472 for digital diagnostics) and vendor datasheets. The IEEE 802.3 clauses covering 10GBASE-SR and 25GBASE-SR/LR/ER define transmitter and receiver characteristics, but real-world acceptance hinges on vendor-specific implementation details and switch transceiver compatibility matrices. [Source: IEEE 802.3]

Compatibility case studies: when “it fits” still breaks at 3 a.m.

One of the most expensive case studies I have seen involved “functionally compatible” optics that still failed during live traffic. The transceiver physically inserted, DOM readouts looked sane, and then the link flapped under specific temperature conditions. In cases like this, the underlying cause is usually a mismatch between module behavior and switch PHY expectations: signal detect thresholds, vendor-specific calibration profiles, or optics firmware quirks that only appear under load.

In practice, you should treat optical transceivers as part of a system, not a commodity. Switches often enforce compatibility checks based on the vendor OUI, vendor name, and part number stored in the module EEPROM, and sometimes on DOM alarm ranges. If you are using third-party modules, it is not enough to “meet the standard class”; you need to validate with the exact switch model and firmware version. In 5G rollouts, this becomes critical because you may be deploying hundreds of sites where a single incompatibility pattern turns into a fleet-wide midnight ticket storm.
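One way to keep that validation honest across a fleet is to record which part numbers were actually tested against each switch model and firmware pair, then audit inventory against that record. The sketch below assumes a simple in-memory table; the switch names, firmware strings, part numbers, and dictionary keys are all hypothetical placeholders, and a real deployment would load them from its own inventory system.

```python
# Hypothetical qualification records:
# (switch model, firmware version) -> part numbers validated in the lab.
QUALIFIED = {
    ("example-switch-a", "1.2.3"): {"EX-25G-LR", "EX-25G-SR"},
    ("example-switch-a", "1.3.0"): {"EX-25G-LR"},
}


def is_qualified(switch_model, firmware, part_number, qualified=QUALIFIED):
    """True only if this exact switch/firmware/part combination was validated."""
    return part_number in qualified.get((switch_model, firmware), set())


def audit_fleet(ports, qualified=QUALIFIED):
    """Return the port records whose optics were never validated.

    ports: iterable of dicts with keys
           'site', 'switch_model', 'firmware', 'part_number'.
    """
    return [
        p for p in ports
        if not is_qualified(p["switch_model"], p["firmware"],
                            p["part_number"], qualified)
    ]


# Illustrative inventory: the second site runs newer firmware on which
# the SR module was not re-tested.
fleet = [
    {"site": "site-001", "switch_model": "example-switch-a",
     "firmware": "1.2.3", "part_number": "EX-25G-SR"},
    {"site": "site-002", "switch_model": "example-switch-a",
     "firmware": "1.3.0", "part_number": "EX-25G-SR"},
]
for port in audit_fleet(fleet):
    print("unvalidated combination at", port["site"])
```

Keying on firmware as well as switch model means a firmware upgrade automatically shows up as unvalidated optics until someone re-tests them.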

Quick DOM sanity checks that save weekends

When you bring a module up, validate at least: laser bias current, received optical power, temperature, and the presence of any vendor-defined warnings. If you see high laser bias drift coupled with temperature rise, you may be operating near the module’s thermal limits. If you see receive power near the minimum sensitivity, plan for connector cleaning and budget headroom rather than “hoping it stays stable.”
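As a rough illustration, those two checks can be expressed as a comparison between a baseline DOM reading taken at turn-up and a later reading. The `DomReading` fields, the function name, and every threshold here (20% bias drift, 15 °C temperature rise, 3 dB of receive headroom) are assumptions chosen for the example, not values from any datasheet.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DomReading:
    """A subset of DOM values, already converted to engineering units."""
    temperature_c: float
    tx_bias_ma: float
    rx_power_dbm: float


def dom_sanity(baseline: DomReading,
               current: DomReading,
               rx_sensitivity_dbm: float,
               headroom_db: float = 3.0,
               bias_drift_pct: float = 20.0,
               temp_rise_c: float = 15.0) -> List[str]:
    """Return findings; an empty list means nothing looked suspicious."""
    findings = []

    # Bias current climbing together with temperature suggests the module
    # is working harder to hold output power near its thermal limits.
    drift_pct = 100.0 * (current.tx_bias_ma - baseline.tx_bias_ma) / baseline.tx_bias_ma
    rise_c = current.temperature_c - baseline.temperature_c
    if drift_pct > bias_drift_pct and rise_c > temp_rise_c:
        findings.append(
            f"thermal: bias up {drift_pct:.0f}% with a {rise_c:.0f} C rise")

    # Receive power close to sensitivity leaves no room for dirt or aging.
    margin_db = current.rx_power_dbm - rx_sensitivity_dbm
    if margin_db < headroom_db:
        findings.append(f"margin: only {margin_db:.1f} dB above sensitivity")

    return findings


# Hypothetical readings for a module with -14 dBm receive sensitivity.
turn_up = DomReading(temperature_c=40.0, tx_bias_ma=6.0, rx_power_dbm=-5.0)
later = DomReading(temperature_c=60.0, tx_bias_ma=8.0, rx_power_dbm=-12.5)
for finding in dom_sanity(turn_up, later, rx_sensitivity_dbm=-14.0):
    print(finding)
```

Comparing against a per-module baseline, rather than only against fixed alarm thresholds, is what lets drift show up before the module raises an alarm of its own.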

Selection criteria checklist for 5G optics case studies

Engineers don’t choose optics by staring at a spec sheet like it is a horoscope. They use a repeatable checklist that accounts for link budget, equipment compatibility, operational temperature, and lifecycle risk. Below is the decision guide I recommend, based on what consistently shows up in successful 5G deployments.

- Distance and fiber type: Confirm OM3/OM4/OS2 and measure actual end-to-end loss, not just installed length. Include patch panel loss and splice loss.

- Bandwidth and port speed: Match optics to switch port speed (10G vs 25G) and confirm whether the switch supports the module type at that speed.

- Optical power budget margin: Ensure receive power lands comfortably inside the module’s supported range with headroom for aging and cleaning variability.

- Switch compatibility: Check the switch vendor’s optics compatibility list and test with the exact firmware revision used in the field.

- DOM support and alarm behavior: Verify that DOM is readable and that thresholds behave as expected for monitoring and automated remediation.

- Operating temperature and airflow: Model rack airflow. Outdoor cabinets can push optics beyond the commercial temperature range, which calls for extended-temperature modules or derating.

- Vendor lock-in risk: If you buy OEM, verify long-term availability and lead times. If you buy third-party, validate interoperability to avoid fleet-wide surprises.

Pro Tip: For 5G sites, plan for dust and connector rework. Even if your initial insertion loss looks fine, a single patch-panel re-termination can shift receive power by a few dB, which is enough to trigger marginal error performance on high-utilization links.

Cost and ROI note: OEM vs third-party optics in fleet deployments

In real budgets, optics are rarely “just a transceiver.” They drive total cost of ownership (TCO) through failure rates, replacement logistics, compatibility testing, and power/thermal effects that impact cooling. OEM optics are often priced higher but can reduce integration risk when paired with specific switch models. Third-party optics can be cheaper per module, but you must budget time for interoperability testing and keep an inventory of known-good part numbers to avoid experimenting during rollouts.

Typical price ranges (ballpark, varies by volume and region) you might see: SR modules often land in the tens to low hundreds of dollars per unit, while LR/ER modules can be higher, especially when demand spikes. TCO should include: expected replacement cycle (field return rates), truck-roll and labor cost, and downtime impact. In several case studies, the cheapest optics option wins on paper but loses when a compatibility issue causes repeat dispatches, because “savings” evaporate faster than a misconfigured link can flap.
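To make the “wins on paper, loses in the field” point concrete, here is a deliberately simple TCO sketch. Every input below (prices, return rates, dispatch cost, qualification cost) is a made-up placeholder rather than market data, and the model ignores downtime impact and discounting; it only shows how the terms listed above combine.

```python
def fleet_tco(unit_price, units, annual_return_rate, years,
              dispatch_cost, qualification_cost=0.0):
    """Rough total cost of ownership for one optics option.

    purchase      = unit_price * units
    replacements  = expected failures * (replacement unit + truck roll)
    qualification = one-off interoperability testing cost
    """
    purchase = unit_price * units
    expected_failures = units * annual_return_rate * years
    replacements = expected_failures * (unit_price + dispatch_cost)
    return purchase + replacements + qualification_cost


# Hypothetical 1000-module fleet over five years.
oem = fleet_tco(unit_price=300, units=1000, annual_return_rate=0.01,
                years=5, dispatch_cost=500)
third_party = fleet_tco(unit_price=100, units=1000, annual_return_rate=0.08,
                        years=5, dispatch_cost=500, qualification_cost=20000)
print(f"OEM: {oem:,.0f}  third-party: {third_party:,.0f}")
```

With these invented inputs the cheaper module ends up costing more overall, because repeat dispatches dominate the purchase price. Plug in your own return rates before drawing any conclusion; with a low field return rate the comparison flips.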

Common mistakes and troubleshooting tips from optics case studies

Here are the failure modes I have repeatedly seen in 5G deployments, along with the root cause and how to fix them without performing interpretive dance on the fiber.

Link flaps after a rack move

Root cause: Micro-bending or dirty connectors introduced during patch-panel rework; receive power dips intermittently. Solution: Inspect with a fiber microscope, clean connectors properly, re-seat LC connectors, and re-measure end-to-end loss. Then run an error counter test under typical traffic load.

“Works in the lab” but fails in outdoor temperature swings

Root cause: Module thermal limits crossed, causing laser output power drift and receiver sensitivity margin collapse. Solution: Confirm the module’s extended temperature rating supports your cabinet environment. Improve airflow, verify fan operation, and consider modules specified for the deployment temperature envelope.

CRC errors that correlate with specific firmware versions

Root cause: An interaction between switch PHY behavior and optics DOM thresholds; some third-party modules behave slightly differently in power ramp-up or signal detect logic. Solution: Validate optics against the exact switch model and firmware revision. If needed, use the vendor-recommended optics list or lock to a known-good transceiver part number.

Buying the “right reach” but wrong fiber grade

Root cause: Mislabeling OM3 vs OM4 or assuming a campus cable is SMF when it is actually multimode. Solution: Verify fiber type with documentation and field testing. Then select SR vs LR/ER accordingly and update the link budget.

Decision matrix for 5G optics: choose SR, LR, or ER with fewer regrets

This matrix helps map your scenario to a module class. It is not a substitute for vendor qualification, but it aligns with the patterns seen across successful case studies.

| Your situation | Most likely module class | Why it fits | Main risk to watch |
|---|---|---|---|
| Short runs inside data halls, OM3/OM4 available | 10G SR or 25G SR | Lower cost and easy patching | Fiber grade mismatch and connector cleanliness |
| Campus backhaul over SMF up to about 10 km | 25G LR | Good balance of reach and cost | Insufficient optical budget headroom |
| Longer metro segments or constrained fiber routes | 25G ER | More reach margin | Higher module cost and stricter power budget |
| Fleet-wide deployment with many switch models | OEM or pre-qualified third-party | Compatibility confidence | Vendor lock-in vs integration overhead |
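The first three rows of the matrix can be turned into a first-pass lookup. The sketch below uses the nominal reach figures from the comparison table earlier in the article (with the conservative 30 km end of the ER range); the function name and the choice to prefer 25G SR over 10G SR when both fit are assumptions for illustration. It works on distance alone, so it is a starting point and not a replacement for a measured link budget.

```python
# Nominal reach per module class in metres (typical values, vary by part).
MMF_REACH_M = {
    "25G SR": {"OM3": 70, "OM4": 100},
    "10G SR": {"OM3": 300, "OM4": 400},
}
SMF_REACH_M = [("25G LR", 10_000), ("25G ER", 30_000)]


def suggest_module_class(fiber_type, distance_m):
    """Return a candidate module class, or None if no listed class reaches.

    fiber_type: 'OM3', 'OM4' (multimode) or 'OS2' (single-mode).
    """
    fiber = fiber_type.upper()
    if fiber in ("OM3", "OM4"):
        # Assumption: prefer the faster class when the run is short enough.
        for module in ("25G SR", "10G SR"):
            if distance_m <= MMF_REACH_M[module][fiber]:
                return module
        return None
    if fiber == "OS2":
        for module, reach_m in SMF_REACH_M:
            if distance_m <= reach_m:
                return module
        return None
    raise ValueError(f"unknown fiber type: {fiber_type}")


print(suggest_module_class("OM4", 80))
print(suggest_module_class("OS2", 8_000))
print(suggest_module_class("OS2", 25_000))
```

A `None` result means the run needs a different fiber plan or a module class outside this article's table, which is worth knowing before anyone orders optics.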

Which option should you choose? (SR vs LR vs ER, and OEM vs third-party)

If you are deploying a 5G backhaul with short patch-panel distances and verified OM3/OM4, start with SR modules and invest in connector hygiene. If your links run across campuses with SMF and you need a practical reach ceiling, choose LR to preserve margin without paying ER pricing. If you must span longer metro routes, or the measured loss leaves LR without enough budget headroom, ER gives you the extra reach, at the cost of more expensive optics and tighter monitoring discipline.

For OEM vs third-party: if you are on a tight rollout schedule or you have multiple switch models with limited lab time, OEM often reduces integration risk. If you have a strong test pipeline and can lock to pre-qualified part numbers, third-party optics can cut procurement cost while still meeting reliability targets. Either way, treat compatibility validation as part of the deployment, not an afterthought; that is the common thread in the best optics case studies.

FAQ

Q: What do case studies usually show about optics reliability in 5G?

A: They show that reliability is dominated by link margin, connector cleanliness, and compatibility behavior with specific switch firmware. The “reach” number is only the starting point; DOM alarms and receive power stability are what matter.

Q: How do I calculate whether my fiber plant supports the transceiver reach?

A: Use an optical link budget: fiber attenuation (dB/km), splice loss, connector insertion loss, and any patch-panel components. Then compare the resulting expected receive power to the transceiver’s supported receive power range from the datasheet.
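A worked version of that calculation, as a minimal sketch: expected receive power is transmit power minus the sum of fiber, connector, and splice losses. The function names and all numeric inputs below (0 dBm launch power, 0.4 dB/km, 0.5 dB per connector, 0.1 dB per splice, a -12 to +2 dBm receive range) are illustrative assumptions; take the real values from your datasheet and your measured plant.

```python
def expected_rx_power_dbm(tx_power_dbm, length_km, fiber_loss_db_per_km,
                          n_connectors, connector_loss_db,
                          n_splices, splice_loss_db):
    """Transmit power minus total passive loss along the link."""
    total_loss_db = (length_km * fiber_loss_db_per_km
                     + n_connectors * connector_loss_db
                     + n_splices * splice_loss_db)
    return tx_power_dbm - total_loss_db


def check_budget(rx_power_dbm, rx_min_dbm, rx_max_dbm, headroom_db=3.0):
    """Classify a link against the module's supported receive range."""
    if rx_power_dbm > rx_max_dbm:
        return "overload: add attenuation"
    if rx_power_dbm < rx_min_dbm:
        return "fail: below sensitivity"
    if rx_power_dbm - rx_min_dbm < headroom_db:
        return "marginal: inside range but short on headroom"
    return "ok"


# Hypothetical 8 km single-mode link with four connectors and two splices.
rx = expected_rx_power_dbm(tx_power_dbm=0.0, length_km=8.0,
                           fiber_loss_db_per_km=0.4,
                           n_connectors=4, connector_loss_db=0.5,
                           n_splices=2, splice_loss_db=0.1)
print(f"expected rx power: {rx:.1f} dBm")
print(check_budget(rx, rx_min_dbm=-12.0, rx_max_dbm=2.0))
```

For these inputs the losses add up to 3.2 + 2.0 + 0.2 = 5.4 dB, so the expected receive power is about -5.4 dBm, which leaves 6.6 dB above the assumed -12 dBm sensitivity. The overload branch matters on short links with long-reach optics, where too much light is as much a problem as too little.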

Q: Are third-party optics safe for 5G networks?

A: They can be, but only after interoperability testing with the exact switch model and firmware revision. In practice, fleet risk drops sharply when you standardize on a small set of validated part numbers.

Q: What is the fastest troubleshooting path for high CRC errors?

A: Check receive power and DOM temperature/laser bias first, then inspect/clean connectors, then verify fiber type and patching. Finally, correlate errors with traffic load and environmental changes to identify whether you are dealing with margin collapse or compatibility quirks.

Q: Should I prefer SR, LR, or ER for new 5G backhaul?

A: SR is best for short, verified multimode runs; LR is the default for many SMF campus links; ER is for longer distances or when you need additional margin. Your decision should be driven by measured loss and required operational headroom.

Q: What standards should I reference when validating optics?

A: Start with IEEE 802.3 for Ethernet PHY behavior and the relevant optics form-factor specifications, then rely on vendor datasheets for DOM and optical power characteristics. For operational monitoring, ensure your NMS supports DOM fields and alarm thresholds.

Those are the real lessons from case studies in optical transceivers for 5G: engineering success is mostly about margin, compatibility, and disciplined field validation. Next step: review the optical link budget for your 5G backhaul links and build a repeatable acceptance test you can run across every site.

Author bio: I am a senior software and hardware engineer with 10+ years deploying high-availability networking, including optics qualification, switch PHY validation, and field troubleshooting across metro and data center environments. I write like a field tech with a screwdriver and a laptop: precise enough to pass acceptance, witty enough to survive it.