When a leaf-spine fabric starts saturating during peak backup windows or AI training bursts, engineers are forced to do bandwidth math fast. This article helps network and optical engineers evaluate 400G vs 800G optical modules by mapping real link budgets, transceiver power, and switch compatibility into an actionable selection path. It is written for operators planning upgrades on production trunks, where a wrong optics choice can cause link instability, marginal BER, or costly re-cabling.

Top 7 decisions when choosing 400G vs 800G optics

Both 400G and 800G are viable, but they stress different parts of the system: optics density, host switching ASIC lanes, and power delivery. In practice, the “right” choice depends on whether you are expanding port counts, upgrading uplinks, or reducing oversubscription. The sections below break the decision into concrete, field-relevant criteria.

Throughput and lane architecture: what 400G really maps to

Most 400G links inside data centers use direct-detect PAM4 optics (for example, 400GBASE-SR8, DR4, or FR4). Coherent 400G (such as 400ZR) is mainly used for longer-reach data center interconnect. In both cases the module aggregates multiple lanes internally: for example, 8x50G PAM4 electrical lanes, or 4x100G on newer hosts, depending on the module family. The key operational implication is that host switch line cards must support the exact electrical interface, lane mapping and reversal rules, and control signaling that the module vendor expects. If the switch expects a specific modulation format or optical reach class, modules that are “electrically compatible” can still fail to establish stable links.

For engineers, the most important question is not just “is it 400G,” but “does the switch expect this module’s lane map and FEC mode.” IEEE 802.3 standardizes Ethernet framing, FEC, and the standard optical PMDs. However, module implementations, host FEC configuration, and vendor qualification still differ, so do not treat optics as interchangeable across vendors unless the switch vendor explicitly lists them as supported.
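To make the lane-map question concrete, here is a minimal Python sketch that checks whether a module's host-side lane configuration matches what a switch port expects. The `HostInterface` type, its field names, and the example profiles are hypothetical illustrations and do not come from any vendor API. Real values would come from the switch vendor's compatibility matrix and the module datasheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HostInterface:
    """Hypothetical description of a host-side electrical interface."""
    lanes: int            # number of electrical lanes
    gbps_per_lane: int    # nominal rate per lane, e.g. 50 or 100
    fec: str              # FEC mode label, e.g. "RS(544,514)"

    @property
    def aggregate_gbps(self) -> int:
        return self.lanes * self.gbps_per_lane


def compatibility_issues(port: HostInterface, module: HostInterface) -> list[str]:
    """Return reasons the module may not link up on the port (empty list = no mismatch found)."""
    issues = []
    if port.aggregate_gbps != module.aggregate_gbps:
        issues.append(f"aggregate rate {module.aggregate_gbps}G != port {port.aggregate_gbps}G")
    if (port.lanes, port.gbps_per_lane) != (module.lanes, module.gbps_per_lane):
        issues.append(
            f"lane map {module.lanes}x{module.gbps_per_lane}G "
            f"!= port {port.lanes}x{port.gbps_per_lane}G"
        )
    if port.fec != module.fec:
        issues.append(f"FEC {module.fec} != port {port.fec}")
    return issues


# Both sides are "400G", but the lane maps differ (8x50G port vs 4x100G module).
port_400g = HostInterface(lanes=8, gbps_per_lane=50, fec="RS(544,514)")
module_400g = HostInterface(lanes=4, gbps_per_lane=100, fec="RS(544,514)")
print(compatibility_issues(port_400g, module_400g))
```

A real host may support several lane configurations, or gearbox between them. The sketch therefore treats any difference as a flag for review against the vendor's supported-optics list, not as a guaranteed failure.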

- Best-fit: uplinks where you need incremental capacity with minimal cabling changes.

- Pros: broad ecosystem support; predictable interoperability when using supported part numbers.

- Cons: if you are constrained by switch port counts, you may still hit saturation sooner than expected.

800G optics: density gains and the hidden lane-map risk

800G is typically deployed as higher aggregation: most commonly 8x100G PAM4 electrical lanes, with 4x200G groupings appearing on newer platforms. That aggregation increases pressure on host SerDes lane budgets and can amplify sensitivity to marginal connectors and patch panel cleanliness. If coherent optics are used, it can also amplify sensitivity to polarization and phase stability. The operational risk is that two modules can both advertise “800G” yet still differ in how they present lane-level signaling to the host.

In field deployments, the most common 800G failure pattern is not “no light,” but “link flaps under thermal or load changes.” This can happen when the module is within spec at room conditions but violates effective receiver sensitivity or FEC margin after connector contamination or when the cage airflow is less than planned. Always validate with the switch vendor’s compatibility matrix and perform a link margin test using vendor tools, not only module diagnostics.
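One way to turn “FEC margin” into a number is to compare the measured pre-FEC bit error ratio against the correction threshold of the FEC in use. The Python sketch below assumes the RS(544,514) FEC used by 400G and 800G PAM4 Ethernet. It also assumes the commonly cited pre-FEC BER limit of about 2.4e-4 and a one-decade alert margin. Treat the threshold, the alert margin, and the sample readings as assumptions, and replace them with figures from your switch and module vendors.

```python
import math

# Assumption: commonly cited pre-FEC BER limit for RS(544,514) links; confirm with vendor docs.
PRE_FEC_BER_LIMIT = 2.4e-4


def fec_margin_decades(pre_fec_ber: float, limit: float = PRE_FEC_BER_LIMIT) -> float:
    """Orders of magnitude between the measured pre-FEC BER and the limit (higher is better)."""
    if pre_fec_ber <= 0:
        return math.inf  # no errors observed in the sample window
    return math.log10(limit / pre_fec_ber)


def worst_margin(samples: list[float], min_decades: float = 1.0) -> tuple[float, bool]:
    """Evaluate the worst sample (e.g. one taken under peak load or high temperature)."""
    worst = min(fec_margin_decades(s) for s in samples)
    return worst, worst >= min_decades


# Hypothetical pre-FEC BER readings: idle, peak traffic, peak traffic with warm cage air.
readings = [1e-8, 3e-7, 6e-5]
margin, ok = worst_margin(readings)
print(f"worst margin: {margin:.2f} decades, ok={ok}")
```

Checking the worst sample rather than the average reflects the failure pattern described above. A link that looks healthy at room conditions can lose most of its margin under heat or after connector contamination.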

- Best-fit: fabrics where you must expand east-west bandwidth without adding more switch ports.

- Pros: fewer physical ports for the same total bandwidth; can simplify cabling counts per rack pair.

- Cons: tighter compatibility requirements; higher sensitivity to optics and interconnect quality.

Specs that matter: reach, wavelength, power, and temperature

Engineers often compare “reach” in marketing tables, but the real selection hinges on wavelength, connector type, and module power draw that affects PSU sizing and airflow. For short-reach deployments, the common practical constraint is thermal headroom inside dense line-card bays. For longer reach, the constraint shifts to optical budget, dispersion tolerance, and FEC performance under real fiber plant conditions.

Below is a representative comparison using typical industry classes for short-reach deployments. Exact values vary by vendor and part number. Treat this as a decision framework, then confirm against the specific datasheets and the switch compatibility list. IEEE 802.3 defines Ethernet framing, FEC, and the standard optical PMD specifications, but module implementations (and non-IEEE MSA or proprietary variants) differ in the details. Consult [Source: IEEE 802.3] and module vendor datasheets for the definitive numbers.

| Spec | Typical 400G (short-reach class) | Typical 800G (short-reach class) |

|---|---|---|

| Data rate | 400G aggregate | 800G aggregate |

| Wavelength | Common: 850 nm (MMF) | Common: 850 nm (MMF) |

| Reach (representative) | Up to 100 m MMF (OM4/OM5 class varies) | Up to 100 m MMF (OM4/OM5 class varies) |

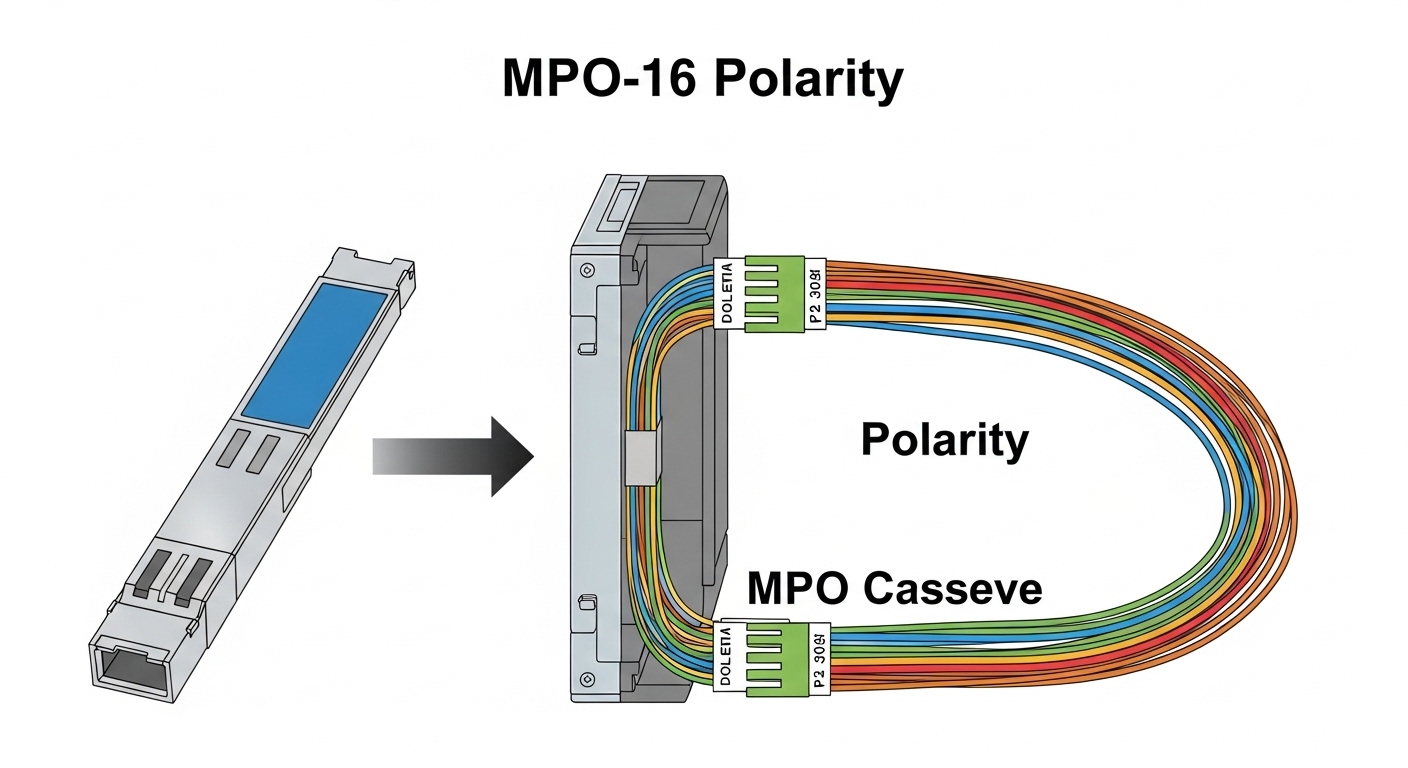

| Connector | Common: LC (or MPO-style depending on form factor) | Common: MPO or multi-fiber arrays (varies by module family) |

| Typical module power | Often on the order of 8–15 W (datasheet dependent) | Often on the order of 15–25 W (datasheet dependent) |

| Operating temperature | Common: 0 to 70 C (commercial) or wider variants | Common: 0 to 70 C (commercial) or wider variants |

| Diagnostics | Digital monitoring via vendor interface; DOM supported if allowed | Digital monitoring via vendor interface; DOM supported if allowed |

- Pros: a structured spec comparison prevents “reach surprises” during rollout.

- Cons: spec tables hide FEC and receiver sensitivity differences that drive BER under stress.
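Module power feeds directly into PSU sizing and airflow planning, so it helps to compare options at equal aggregate bandwidth rather than per module. This Python sketch uses hypothetical per-module wattages chosen from inside the representative ranges in the table above. Replace them with datasheet values for your actual part numbers.

```python
import math


def optics_power(total_gbps: int, port_gbps: int, watts_per_module: float) -> dict:
    """Module count, total optics power, and W per 100G needed to carry total_gbps."""
    modules = math.ceil(total_gbps / port_gbps)
    return {
        "modules": modules,
        "total_w": modules * watts_per_module,
        "w_per_100g": watts_per_module / (port_gbps / 100),
    }


# Hypothetical line card carrying 12.8 Tbps of optics; wattages are assumed, not measured.
opt_400g = optics_power(12_800, 400, watts_per_module=12.0)
opt_800g = optics_power(12_800, 800, watts_per_module=18.0)
print("400G:", opt_400g)
print("800G:", opt_800g)
```

With these assumed numbers, the 800G option draws more per module but less in total and per 100G. Whether that holds for your parts depends on the real datasheet figures, and the higher per-module wattage still concentrates more heat in each cage.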

DOM, optics monitoring, and operational guardrails

Digital Optical Monitoring (DOM) is not just for dashboards; it is your early-warning system for failing lasers, elevated bias currents, and thermal drift. When choosing between 400G and 800G, confirm that the module exposes the specific DOM fields your operations tooling expects (for example, temperature, supply voltage, bias current, received power, and alarms). Some platforms can read DOM but will not map alarms into their event workflows if the module’s management interface differs from what the platform supports.

In field practice, we recommend setting automated thresholds and tying them to maintenance windows. For example, if received power drops more than a configured delta over baseline after a patch panel change, you should schedule cleaning and re-inspection before the margin collapses. This is especially important for 800G, where optical budgets and interconnect tolerances can be less forgiving.

Pro Tip: In high-density deployments, the first reliable indicator of a developing connector problem is often DOM-reported received power trending downward over days, not sudden link loss. If you alert only on link-down events, you will usually detect the issue after the margin has already been consumed.
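The baseline-delta rule and the slow-drift pattern described above can both be checked from periodic DOM received-power samples. This Python sketch is a minimal illustration that assumes the samples are already converted to dBm and collected once per day. The 1.0 dB delta and the -0.1 dB/day slope thresholds are hypothetical values to tune against your own baselines; they are not vendor recommendations.

```python
import math
from statistics import median


def mw_to_dbm(mw: float) -> float:
    """Convert optical power from milliwatts to dBm (some DOM readouts are in mW)."""
    return 10 * math.log10(mw)


def slope_per_sample(values: list[float]) -> float:
    """Least-squares slope in dB per sample interval (one day in this example)."""
    n = len(values)
    if n < 2:
        return 0.0
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


def rx_power_alerts(daily_dbm: list[float], baseline_days: int = 3,
                    max_drop_db: float = 1.0, max_slope_db_per_day: float = -0.1) -> list[str]:
    """Flag a drop below baseline and a sustained downward trend (thresholds are hypothetical)."""
    baseline = median(daily_dbm[:baseline_days])
    alerts = []
    drop = baseline - daily_dbm[-1]
    if drop > max_drop_db:
        alerts.append(f"rx power {drop:.2f} dB below baseline")
    slope = slope_per_sample(daily_dbm)
    if slope < max_slope_db_per_day:
        alerts.append(f"rx power trending {slope:.2f} dB/day")
    return alerts


# Hypothetical lane readings after a patch panel change: slow decline while the link stays up.
readings = [-1.0, -1.0, -1.1, -1.3, -1.5, -1.7, -1.9, -2.2]
print(rx_power_alerts(readings))
```

The trend check catches the slow degradation described in the Pro Tip days before the delta check fires, which leaves time to schedule cleaning inside a maintenance window.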

- Best-fit: any environment with automated NOC telemetry and planned optical maintenance.

- Pros: faster fault isolation; fewer truck rolls.

- Cons: DOM compatibility varies by platform; validate before standardizing.

Switch compatibility and firmware gating

Optics selection is constrained by the host switch. Many operators discover late that a module type is “supported” only for certain firmware revisions, and some require a specific optics profile or FEC mode handshake. That matters more for 800G because the host often uses more aggressive line-rate signaling and stricter lane-level constraints.

Before ordering, pull the switch vendor’s optics compatibility matrix and confirm: (1) exact module part number, (2) supported DOM behavior, (3) maximum reach class, and (4) any required firmware or configuration knobs. If the switch vendor provides “known good” optics lists, use them; if you must deviate, run a pilot in a staging fabric that mirrors production airflow and cabling.
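The four pre-order checks above can be encoded as a small gate in provisioning tooling. The sketch below is a hypothetical Python illustration. The matrix layout, part number, reach class, and firmware versions are invented placeholders, because every switch vendor publishes its compatibility data in its own format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MatrixEntry:
    """One row of a hypothetical optics compatibility matrix."""
    part_number: str
    min_firmware: tuple[int, int, int]
    max_reach_m: int
    dom_supported: bool


def parse_version(text: str) -> tuple[int, int, int]:
    """Parse a dotted 'major.minor.patch' firmware string (format is an assumption)."""
    major, minor, patch = (int(p) for p in text.split("."))
    return major, minor, patch


def preorder_check(matrix: dict[str, MatrixEntry], part_number: str,
                   firmware: str, planned_reach_m: int, need_dom: bool = True) -> list[str]:
    """Return blocking problems for ordering part_number; an empty list means no problem found."""
    entry = matrix.get(part_number)
    if entry is None:
        return [f"{part_number} not in compatibility matrix"]
    problems = []
    if parse_version(firmware) < entry.min_firmware:
        required = ".".join(map(str, entry.min_firmware))
        problems.append(f"firmware {firmware} below required {required}")
    if planned_reach_m > entry.max_reach_m:
        problems.append(f"planned reach {planned_reach_m} m exceeds {entry.max_reach_m} m class")
    if need_dom and not entry.dom_supported:
        problems.append("DOM not supported on this platform")
    return problems


# Placeholder data; real entries come from the switch vendor's published matrix.
matrix = {
    "EXAMPLE-800G-SR8": MatrixEntry("EXAMPLE-800G-SR8", (10, 2, 1), 100, True),
}
print(preorder_check(matrix, "EXAMPLE-800G-SR8", "10.1.4", planned_reach_m=60))
```

Comparing versions as integer tuples avoids the string-comparison trap where "10.10.0" would sort before "10.2.1".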

- Best-fit: planned upgrades with a controlled firmware change window.

- Pros: reduces link flap risk and support escalation time.

- Cons: can introduce vendor lock-in and procurement lead-time dependencies.

Real-world deployment scenario: leaf-spine with surge traffic

Consider a 3-tier data center leaf-spine topology with 48-port 10G/25G edge-to-leaf and 100G-to-spine uplinks initially, then a migration to higher east-west capacity. In one common upgrade pattern, the operator replaces leaf uplinks with 400G links first to relieve oversubscription, then moves to 800G on the spine side when AI training jobs increase north-south and east-west concurrency. Suppose the fabric requires ~6 Tbps aggregate spine capacity during peak and you have 8 spine pairs available; moving from 400G to 800G can reduce the number of active uplink ports per leaf by roughly half, but only if your cabling plant and patch panels can support the higher-density connectorization.
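The port-count arithmetic in this scenario is easy to check. The Python sketch below assumes that the ~6 Tbps figure is the total leaf-to-spine uplink capacity to provision and that uplinks must be spread evenly across spines for ECMP. The spine counts are illustrative parameters, because the number of spines that “8 spine pairs” represents depends on the design.

```python
import math


def uplinks_needed(capacity_gbps: int, link_gbps: int, spines: int) -> int:
    """Minimum uplinks to carry capacity_gbps, rounded up to an even spread across spines."""
    raw = math.ceil(capacity_gbps / link_gbps)
    per_spine = math.ceil(raw / spines)
    return per_spine * spines


for spines in (8, 16):
    n400 = uplinks_needed(6_000, 400, spines)
    n800 = uplinks_needed(6_000, 800, spines)
    print(f"{spines} spines: 400G -> {n400} links, 800G -> {n800} links")
```

With 8 spines, the 800G option halves the uplink count (16 links to 8), matching the estimate above. With 16 spines, the one-link-per-spine floor means 800G adds headroom instead of saving ports, so check which case your topology falls into before counting on the port reduction.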

Operationally, the engineer will measure transceiver and cage temperatures during peak load. If the module temperature approaches the upper range due to constrained airflow, the 800G optics may show elevated error counters before outright link failure. The fix is often mechanical: reposition baffles, increase fan speed within acoustic constraints, and re-check that the cage airflow path is unobstructed by cable bundles.
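To make the peak-load temperature check repeatable, you can compare each module's peak DOM temperature against its rated case-temperature maximum. This Python sketch assumes the 70 °C commercial upper limit from the spec table and a hypothetical 5 °C minimum headroom. The port names and readings are invented.

```python
def thermal_report(peak_temps_c: dict[str, float], max_case_c: float = 70.0,
                   min_headroom_c: float = 5.0) -> dict[str, float]:
    """Return ports (with remaining headroom in °C) whose peak leaves less than min_headroom_c."""
    return {
        port: round(max_case_c - temp, 1)
        for port, temp in peak_temps_c.items()
        if max_case_c - temp < min_headroom_c
    }


# Hypothetical peak module temperatures collected during a training burst.
peaks = {"Ethernet1/1": 58.5, "Ethernet1/2": 66.2, "Ethernet1/3": 61.0}
print(thermal_report(peaks))
```

Running this report before and after a baffle or fan-profile change gives a simple way to confirm that the mechanical fix actually restored headroom.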

- Pros: validates that the “bandwidth math” matches thermal and optical realities.

- Cons: requires disciplined staging testing to avoid downtime.

Cost, ROI, and total cost of ownership under real procurement

Pricing varies heavily by reach class and vendor, but a realistic budget usually looks like this: 400G optics cost less per module than 800G optics, yet the 800G premium can be offset by fewer ports, fewer line-card resources, and less cabling churn. The ROI question is not “which module is cheaper,” but “which option reduces the number of switch ports and upgrade cycles.”

From a TCO standpoint, include: module unit price, expected failure rate and warranty handling, cleaning and inspection labor, spares inventory, and the operational cost of troubleshooting link flaps. Third-party optics can be cost-effective, but they may carry higher compatibility risk. OEM optics often have smoother support paths and better alignment with the vendor’s firmware expectations, which can reduce downtime costs even if unit price is higher.
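The TCO components listed above can be rolled into a rough per-option comparison. Every price, failure rate, labor cost, and energy cost in this Python sketch is a hypothetical placeholder, not market data. The point is the structure of the calculation, which charges each option for modules, spares, switch ports, energy, and expected incidents over the planning horizon.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class OpticsOption:
    """Cost inputs for one optics choice; every value used below is a placeholder."""
    name: str
    link_gbps: int
    module_price: float         # USD per module
    port_cost: float            # share of switch/line-card cost per occupied port
    watts: float                # per module
    annual_failure_rate: float  # fraction of installed modules failing per year
    cost_per_incident: float    # cleaning, swap, and troubleshooting labor


def tco(opt: OpticsOption, capacity_gbps: int, years: int = 5,
        usd_per_kwh: float = 0.12, spares_fraction: float = 0.05) -> float:
    """Rough total cost of ownership for carrying capacity_gbps with this option."""
    links = math.ceil(capacity_gbps / opt.link_gbps)
    modules = 2 * links                                # one module at each end of a link
    spares = math.ceil(modules * spares_fraction)      # spares inventory
    capex = (modules + spares) * opt.module_price + modules * opt.port_cost
    energy_kwh = modules * opt.watts * 24 * 365 * years / 1000
    incidents = modules * opt.annual_failure_rate * years
    opex = energy_kwh * usd_per_kwh + incidents * opt.cost_per_incident
    return capex + opex


option_400g = OpticsOption("400G", 400, module_price=1000, port_cost=500, watts=12,
                           annual_failure_rate=0.02, cost_per_incident=200)
option_800g = OpticsOption("800G", 800, module_price=1800, port_cost=700, watts=18,
                           annual_failure_rate=0.03, cost_per_incident=200)
for opt in (option_400g, option_800g):
    print(f"{opt.name}: ${tco(opt, 12_800):,.0f} over 5 years")
```

Under these invented inputs, the 800G option comes out cheaper overall despite a higher module price, because it needs half the ports. Different inputs can reverse the result, especially a higher incident cost from unsupported optics.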

- Best-fit: budgeting for a multi-year capacity plan with known warranty and support terms.

- Pros: fewer ports per unit of bandwidth can reduce capex in later expansions.

- Cons: higher power and tighter compatibility can increase operational risk if you deviate from supported lists.

Common mistakes / troubleshooting when comparing 400G vs 800G

Most field failures come from mismatched assumptions: reach class versus actual fiber plant, connector cleanliness, and switch optics profile behavior. Below are concrete pitfalls, with root causes and fixes.

- Mistake: Installing 800G optics without confirming the switch firmware optics profile and FEC mode handshake.

  Root cause: The module may negotiate a mode that the host cannot sustain under load, leading to link flaps or rising error counters.

  Solution: Verify the exact module part number in the vendor compatibility matrix and align firmware and configuration before deployment.

- Mistake: Assuming OM4/OM5 reach from a datasheet without measuring actual installed fiber loss and connector penalties.

  Root cause: Patch panel degradation, dirty connectors, and aging can consume margin; 800G is typically less tolerant of tight budgets.

  Solution: Perform OTDR and end-to-end loss testing, then clean and re-terminate if margins are insufficient.

- Mistake: Skipping transceiver thermal validation in high-density cages.

  Root cause: Elevated module temperature reduces laser output power or receiver sensitivity over time, causing errors under peak traffic.

  Solution: Measure cage airflow and module temperature during peak load; adjust baffles and fan profiles within vendor limits.

- Mistake: Replacing a failed optic with a “same-looking” third-party module and expecting identical DOM alarm behavior.

  Root cause: DOM fields and alarm thresholds may not map to the platform’s monitoring logic.

  Solution: Validate DOM support and alarm mapping in staging; confirm telemetry ingestion before rolling to production.
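For the reach mistake above, the margin check itself is simple arithmetic once you have measured or estimated losses. This Python sketch compares a channel's insertion loss (fiber attenuation times length, plus each mated connection) against a channel insertion-loss budget. The 1.9 dB budget, 3.0 dB/km attenuation, and 0.5 dB per connection are assumed placeholder values. Substitute the limits from your module datasheet or the applicable IEEE 802.3 PMD clause, and use your measured OTDR and loss-test results.

```python
def channel_loss_db(length_m: float, atten_db_per_km: float,
                    connection_losses_db: list[float]) -> float:
    """Estimated channel insertion loss: fiber attenuation plus each mated connection."""
    return length_m / 1000 * atten_db_per_km + sum(connection_losses_db)


def loss_margin_db(budget_db: float, length_m: float, atten_db_per_km: float,
                   connection_losses_db: list[float]) -> float:
    """Remaining margin in dB (positive = within budget)."""
    return budget_db - channel_loss_db(length_m, atten_db_per_km, connection_losses_db)


# Assumed values: 1.9 dB budget, 3.0 dB/km MMF attenuation, three patch-panel connections.
clean = loss_margin_db(1.9, 80, 3.0, [0.5, 0.5, 0.5])
dirty = loss_margin_db(1.9, 80, 3.0, [0.5, 0.9, 0.5])  # one contaminated connector
print(f"clean: {clean:.2f} dB, dirty: {dirty:.2f} dB")
```

In this example, an 80 m run that is comfortably inside a “100 m” reach class still goes over budget because a single connector picks up an extra 0.4 dB. This is why measured loss, not datasheet reach, should drive the decision.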

FAQ: 400G vs 800G optical module buying questions

Is 800G always better than 400G for fabric bandwidth?

Not always. 800G vs 400G is a system-level decision: the host ASIC lane support, optics profile, power budget, and interconnect quality can make 800G harder to deploy reliably. If your constraints are port count or oversubscription, 800G can help; if your constraints are reach margin or operational risk, 400G may be safer.

What reach should I plan for in a typical data center?

Most migrations start with short-reach MMF classes (often 850 nm), but the actual plan must be based on measured installed loss and connector penalties. Treat any “up to X meters” claim as contingent on fiber type (OM4/OM5), patch panel quality, and cleaning discipline.

Do coherent optics change the 400G vs 800G decision?

They can. Coherent systems tolerate dispersion better and allow longer reach, but they also introduce more complex DSP/FEC behavior and tighter optical performance requirements. If your architecture requires extended reach, compare the reach classes of the candidate coherent modules at each speed and the host’s supported FEC/DSP modes.

Are third-party optics a good ROI choice?

They can be, but only after compatibility and DOM validation. The operational risk of unsupported optics profiles can increase downtime and troubleshooting time, which can erase unit-cost savings. For high-throughput 800G rollouts, pilot in staging with your exact firmware and monitoring stack.

How do I prevent link flaps after installing 800G modules?

Start with the switch vendor’s supported optics list, then validate thermal and optical margins under peak traffic. Clean connectors, inspect MPO polarity and keying, verify patch panel loss, and confirm that DOM alarms are being ingested correctly.

What standards should I cite in internal approval documents?

For Ethernet framing and baseline PHY/MAC expectations, cite IEEE 802.3. For optical module interoperability, cite vendor datasheets and the switch vendor’s compatibility documentation; those sources define the real operational constraints that govern link stability.

Summary: choose 400G vs 800G by combining reach and loss realities, switch optics gating, DOM/telemetry support, and thermal headroom—not by headline throughput alone. Next step: shortlist candidate part numbers from your switch compatibility matrix, then run a staging pilot that matches production cabling and airflow; see the related planning checklist at Optics planning for data center upgrades.

Disclaimer: This article is for technical information only and does not constitute legal advice. For compliance and contract interpretation, consult qualified counsel and the relevant vendor warranties and terms.

Author bio: I have deployed high-density Ethernet fabrics using 400G and 800G optical modules in production data centers, validating link budgets with OTDR and monitoring DOM telemetry under peak load. I write from field experience coordinating firmware compatibility, connector hygiene processes, and failure-mode analysis.