AI teams often blame model code when throughput stalls, but the bottleneck is frequently the network. This article connects optical networking design decisions to machine learning training and inference performance in real deployments, helping platform engineers, network architects, and field technicians diagnose and prevent latency, jitter, and reliability issues. You will get actionable selection criteria, a spec comparison table, and troubleshooting patterns tied to common failures in fiber transceiver and cabling setups.

Why optical networking matters for machine learning traffic

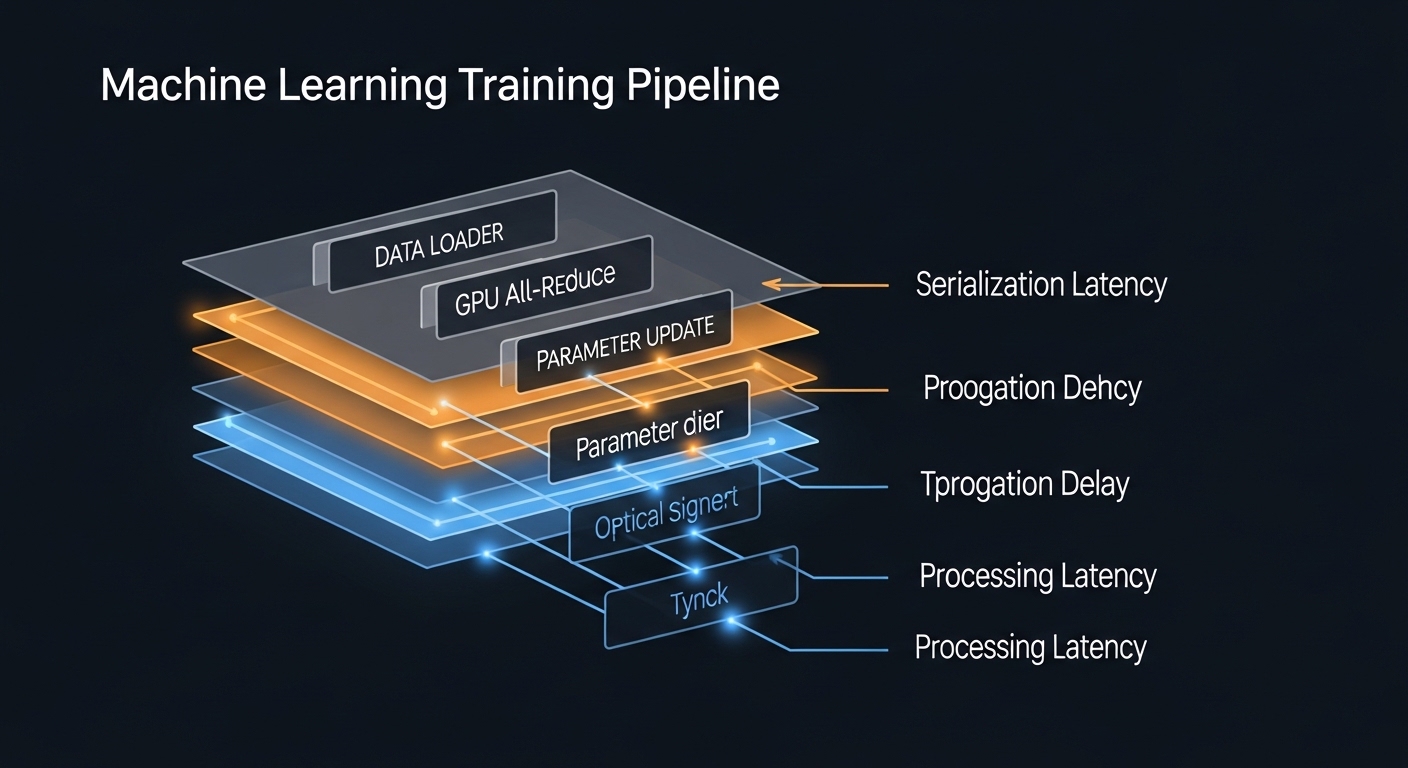

Machine learning workloads move massive volumes of data between GPUs, parameter servers, and storage tiers. In practice, the network contributes to end-to-end time through serialization delay, queueing delay, and loss-driven retransmission. Optical links avoid the reach limits of copper and can lower power per bit compared with long copper runs, especially in leaf-spine and pod-scale designs.
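To make the serialization term concrete, here is a minimal Python sketch. The function names are illustrative, and the 5 ns per metre propagation figure is a common rule of thumb for glass fiber rather than a value from any datasheet. Queueing delay and retransmission depend on live traffic and are not modeled.

```python
def serialization_delay_us(payload_bytes: int, link_gbps: float) -> float:
    """Time to clock payload_bytes onto a link running at link_gbps, in microseconds."""
    if link_gbps <= 0:
        raise ValueError("link rate must be positive")
    bits = payload_bytes * 8
    return bits / (link_gbps * 1e9) * 1e6


def fiber_propagation_delay_us(length_m: float, ns_per_m: float = 5.0) -> float:
    """Approximate one-way propagation delay in fiber (assumes about 5 ns per metre)."""
    return length_m * ns_per_m / 1000.0


if __name__ == "__main__":
    # Compare how long a 9000-byte jumbo frame occupies the wire at each rate.
    for rate in (10, 25, 100):
        delay = serialization_delay_us(9000, rate)
        print(f"{rate}G: {delay:.2f} us to serialize a 9000-byte frame")
    print(f"100 m of fiber: {fiber_propagation_delay_us(100):.2f} us one way")
```

At rack and pod distances both terms are small, which is why queueing and loss-driven retransmission tend to dominate when a link is congested or marginal.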

For training, bursty all-reduce and shuffle traffic is sensitive to congestion and micro-bursts, which can amplify tail latency. For inference, the system is often latency-optimized and may run smaller batches, making link stability and deterministic behavior more important than raw bandwidth. IEEE 802.3 defines Ethernet PHY behaviors that optics must meet; vendors publish compliance and link performance guidance in datasheets and transceiver documentation. (Source: IEEE 802.3 Standard.)

Transceiver and fiber choices that align with machine learning workloads

Optical performance is not just reach; it is also optical power budget, receiver sensitivity, link margin, and temperature behavior. In Ethernet, the optics must maintain BER targets across the specified operating range. Typical deployments use SR-class optics on multimode fiber for short reach and LR/ER-class optics on single-mode fiber for longer reach, selected to match rack distance, patch panel layout, and cabling plant loss.

Key optical parameters engineers validate

- Wavelength and type: Commonly 850 nm for SR on multimode; 1310/1550 nm for longer reach on single-mode.

- Reach: Stated maximum reach depends on fiber grade (OM3/OM4/OS2) and link loss assumptions.

- Connector: LC is most common; ensure patch panels and cross-connect hardware match.

- Data rate and form factor: 10G SFP+, 25G SFP28, 40G QSFP+, 100G QSFP28, 400G QSFP-DD.

- Power and thermal limits: Operating temperature affects laser bias and receiver sensitivity.

- DOM support: Digital Optical Monitoring enables telemetry for drift and degradation detection.
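When comparing DOM readings against datasheet limits, it helps to have both in the same unit. Datasheets usually state optical power in dBm, while some tools expose it in milliwatts. The sketch below shows the standard conversion; the function names are illustrative and not part of any switch API.

```python
import math


def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power from milliwatts to dBm (0 dBm equals 1 mW)."""
    if power_mw <= 0:
        raise ValueError("power must be positive to express in dBm")
    return 10.0 * math.log10(power_mw)


def dbm_to_mw(power_dbm: float) -> float:
    """Convert optical power from dBm back to milliwatts."""
    return 10.0 ** (power_dbm / 10.0)
```

Halving the received power costs about 3 dB, so a reading that falls from 0.5 mW to 0.25 mW has moved from roughly -3 dBm to roughly -6 dBm.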

Spec comparison for common AI cluster links

The table below compares representative transceiver classes often used between ToR switches, aggregation, and GPU servers. Exact values vary by vendor and revision; confirm in the specific datasheet before purchase.

| Module example | Data rate / form factor | Wavelength | Typical reach | Fiber / connector | DOM | Operating temperature |
|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR | 10G / SFP+ | 850 nm | Up to ~300 m on OM3 (vendor-dependent) | MMF / LC | Yes (per platform) | Often commercial range (confirm datasheet) |
| Finisar FTLX8571D3BCL | 10G / SFP+ | 850 nm | Up to ~300 m on OM3 (vendor-dependent) | MMF / LC | Varies by part | Commercial/extended (confirm datasheet) |
| FS.com SFP-10GSR-85 | 10G / SFP+ | 850 nm | Up to ~400 m on OM4 (class-dependent) | MMF / LC | Varies by part | Commercial/industrial options (confirm) |
| 100G QSFP28 SR (typical) | 100G / QSFP28 | ~850 nm | Up to ~100 m on OM4 (class-dependent) | MMF / MPO-12 | Usually yes | 0 to 70 °C typical (confirm) |

Pro Tip: In machine learning clusters, many “mysterious” training stalls trace back to links whose optics telemetry showed receive power margin eroding days before the incident. If your switch supports it, ingest DOM alarms into your observability stack and correlate them with GPU job retries and switch queue depth spikes rather than waiting for full link flaps.
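One way to act on the tip above is to fit a trend to periodic receive power samples and estimate when a link will cross its low-power warning threshold. The following is a minimal sketch using an ordinary least-squares slope. The function names, the sample values, and the threshold are hypothetical; real thresholds come from the module's own DOM alarm and warning fields.

```python
from typing import Optional, Sequence


def slope_per_day(days: Sequence[float], rx_dbm: Sequence[float]) -> float:
    """Least-squares slope of receive power (dBm) against time (days)."""
    n = len(days)
    if n < 2 or n != len(rx_dbm):
        raise ValueError("need two or more samples, with matching lengths")
    mean_x = sum(days) / n
    mean_y = sum(rx_dbm) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("all samples share the same timestamp")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, rx_dbm))
    return sxy / sxx


def days_until_threshold(
    days: Sequence[float], rx_dbm: Sequence[float], low_warn_dbm: float
) -> Optional[float]:
    """Estimate days until the latest reading decays to low_warn_dbm.

    Returns None when the trend is flat or improving.
    """
    slope = slope_per_day(days, rx_dbm)
    if slope >= 0:
        return None
    latest = rx_dbm[-1]
    if latest <= low_warn_dbm:
        return 0.0
    return (low_warn_dbm - latest) / slope


if __name__ == "__main__":
    # Hypothetical daily samples losing 0.5 dB per day.
    sample_days = [0, 1, 2, 3]
    sample_rx = [-5.0, -5.5, -6.0, -6.5]
    print(days_until_threshold(sample_days, sample_rx, low_warn_dbm=-9.0))
```

A linear fit is a deliberate simplification. Contamination and connector damage often produce step changes rather than smooth decay, so treat the estimate as a prompt to inspect the link, not as a prediction.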

Designing optical paths for training and inference latency

Optical topology choices should reflect traffic patterns. For training, traffic is often synchronized and can trigger congestion when oversubscription is too aggressive. For inference, steady-state load may be lower but spikier per service, so link stability and consistently low packet loss matter.

In a leaf-spine data center topology with 48-port 10G ToR switches feeding 2x 100G spine uplinks per rack, teams commonly place SR optics on short runs between ToR and server NICs, then use higher-rate optics for spine uplinks. If your rack-to-spine distance forces single-mode, migrate the uplinks to LR optics on OS2 and keep multimode only where OM4 plant loss is proven. This reduces the risk of marginal links that behave well under low utilization but fail under peak congestion and temperature drift.
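The oversubscription implied by that example rack can be checked with simple arithmetic. This sketch assumes every downlink port is populated and running at line rate, which is the worst case; the function name is illustrative.

```python
def oversubscription_ratio(
    down_ports: int, down_gbps: float, up_ports: int, up_gbps: float
) -> float:
    """Ratio of total server-facing bandwidth to total uplink bandwidth."""
    uplink = up_ports * up_gbps
    if uplink <= 0:
        raise ValueError("uplink capacity must be positive")
    return (down_ports * down_gbps) / uplink


if __name__ == "__main__":
    # 48 x 10G down, 2 x 100G up, as in the example rack above.
    ratio = oversubscription_ratio(48, 10, 2, 100)
    print(f"{ratio:.1f}:1")  # 480 Gb/s down over 200 Gb/s up
```

With 480 Gb/s of server-facing capacity and 200 Gb/s of uplink, the rack is 2.4:1 oversubscribed, so synchronized all-reduce bursts that leave the rack can queue at the uplinks even when every optical link is healthy.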

Selection checklist: matching optics to machine learning requirements

- Distance and fiber grade: Measure end-to-end loss including patch cords and adapters; confirm OM4 or OS2 compliance with your actual plant test results.

- Switch compatibility: Validate transceiver interoperability with the specific switch/line card model and firmware; some platforms enforce stricter optical parameter thresholds.

- Data rate and encoding: Ensure the module supports the exact Ethernet PHY and lane mapping your platform uses (for example, 25G vs 10G optics on different breakout modes).

- DOM and telemetry needs: If you plan automated drift detection, verify DOM support and how the switch exposes alarms.

- Operating temperature: Confirm the transceiver’s guaranteed range matches your rack ambient and airflow design; consider hot-aisle recirculation effects.

- Vendor lock-in risk: OEM-only sourcing can reduce compatibility risk but raises purchase cost; third-party modules can work well if validated, but plan a qualification process and keep spares.

- Power budget and margin: Use vendor receiver sensitivity and transmitter power specs with your measured fiber attenuation to ensure adequate margin.
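The power budget item in the checklist above reduces to a subtraction: available budget (minimum transmit power minus receiver sensitivity) minus total path loss. Below is a minimal sketch. All numeric values in the example are placeholders chosen for easy arithmetic, not figures from any datasheet, and the parameter names are illustrative.

```python
def link_margin_db(
    tx_min_dbm: float,
    rx_sensitivity_dbm: float,
    fiber_km: float,
    fiber_db_per_km: float,
    connector_count: int,
    db_per_connector: float,
    penalties_db: float = 0.0,
) -> float:
    """Remaining margin in dB after fiber, connector, and other losses."""
    budget = tx_min_dbm - rx_sensitivity_dbm
    path_loss = (
        fiber_km * fiber_db_per_km
        + connector_count * db_per_connector
        + penalties_db
    )
    return budget - path_loss


if __name__ == "__main__":
    # Placeholder inputs: substitute datasheet specs and measured plant loss.
    margin = link_margin_db(
        tx_min_dbm=-6.0,
        rx_sensitivity_dbm=-10.0,
        fiber_km=0.1,
        fiber_db_per_km=3.0,
        connector_count=4,
        db_per_connector=0.5,
    )
    print(f"margin: {margin:.1f} dB")
```

In this placeholder case the four connectors account for 2.0 dB of the 2.3 dB path loss, which illustrates why extra patch panels erode margin faster than extra fiber length on short multimode runs. Where measured end-to-end loss is available, use it in place of the per-connector estimate.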

Common mistakes and troubleshooting patterns in machine learning networks

Below are frequent failure modes that show up during AI training runs, with root causes and fixes.

- Symptom: Intermittent link drops during peak load. Root cause: Dirty MPO or LC connectors causing transient receive errors. Solution: Implement connector cleaning SOPs, inspect with microscope, and replace damaged jumpers; re-test with an optical power meter.

- Symptom: High retransmissions and training throughput collapse without full link flaps. Root cause: Optical margin too tight due to unaccounted patch panel loss or mismatched fiber grade. Solution: Re-measure link loss end-to-end, compare to vendor power budget, and replace with higher-margin optics or shorter patch paths.

- Symptom: Works at idle, fails under temperature cycling. Root cause: Transceiver operating outside guaranteed thermal conditions or airflow dead zones near cages. Solution: Verify rack ambient and airflow, adjust baffles, and move modules to compliant cooling profiles; consider industrial-temperature optics if required.

- Symptom: “Unsupported transceiver” alarms. Root cause: Platform compatibility filters rejecting certain EEPROM identifiers or DOM implementations. Solution: Use vendor-validated module lists, update firmware, and qualify third-party modules with a staged rollout.
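Several of the patterns above show up as rising CRC/FCS errors before any link flap. A simple way to quantify this is to diff two interface counter snapshots and compute a frame error ratio for the interval. The sketch below assumes `rx_frames` counts all received frames including errored ones, which varies by platform. The `CounterSnapshot` type and its field names are hypothetical, not a real switch API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CounterSnapshot:
    """Hypothetical snapshot of cumulative interface counters."""
    rx_frames: int   # all frames received, including errored (assumption)
    fcs_errors: int  # frames that failed the frame check sequence


def frame_error_ratio(before: CounterSnapshot, after: CounterSnapshot) -> float:
    """Fraction of frames received between two snapshots that failed FCS."""
    frames = after.rx_frames - before.rx_frames
    errors = after.fcs_errors - before.fcs_errors
    if frames < 0 or errors < 0:
        raise ValueError("counters went backwards (reset or wrap)")
    if frames == 0:
        return 0.0
    return errors / frames


if __name__ == "__main__":
    t0 = CounterSnapshot(rx_frames=1_000, fcs_errors=2)
    t1 = CounterSnapshot(rx_frames=1_001_000, fcs_errors=12)
    print(frame_error_ratio(t0, t1))
```

Comparing the ratio at idle and at peak load, or before and after cleaning a connector, gives a more useful signal than the raw cumulative counter.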

Cost and ROI: what to budget for optical upgrades in machine learning environments

Pricing varies by speed and distance, but in many markets short-reach 10G SFP+ optics often land in the tens of dollars each, while 25G and 100G modules can run from the hundreds into the low thousands of dollars depending on OEM vs third-party sourcing and DOM features. The ROI is not only purchase price; it includes downtime risk, spares strategy, and the cost of qualification.

OEM modules typically reduce compatibility and compliance risk, which matters when you have strict maintenance windows and production training schedules. Third-party optics can lower CapEx, but you should include qualification time, burn-in testing, and a documented acceptance checklist. TCO usually favors the option that minimizes mean time to repair and avoids repeated escalations caused by marginal optical budgets or platform rejection events.

FAQ

Which optical transceiver types are most common for machine learning clusters?

Most clusters start with SR-class optics for short runs within racks and pods, then move to LR/ER-class optics for longer aggregation and inter-rack paths. The exact choice depends on fiber type (OM3/OM4 vs OS2) and measured link loss. Validate against your switch model and transceiver datasheets before standardizing.

How does DOM actually help with machine learning uptime?

DOM provides telemetry like transmit bias and receive power, which can reveal degradation before total failure. When correlated with switch error counters and job retry metrics, it helps teams schedule proactive maintenance rather than reacting to outages. This is especially valuable during long training jobs where intermittent packet loss triggers expensive retries.

What is the biggest cause of optical link errors in practice?

The top recurring cause is contamination or damage on fiber connectors, especially MPO and LC endfaces. Even a small amount of dirt can create enough loss to push the link beyond margin under temperature or vibration. Connector inspection and cleaning SOPs usually deliver faster improvements than swapping optics blindly.

Can third-party optics be used without breaking machine learning workloads?

Often yes, but only after qualification on your exact switch hardware, firmware version, and cabling plant. Some platforms apply stricter transceiver validation, and DOM implementations can differ. Plan a staged rollout with monitoring of link errors, CRC counts, and telemetry alarms.

How should we decide between multimode and single-mode for AI networking?

Use multimode when distances are short and your OM4 plant loss is well characterized, because it is cost-effective for pod-scale cabling. Use single-mode when you need longer reach, better scalability, or when plant uncertainty makes margin management difficult. Always base the choice on measured loss and vendor power budgets.

What should we monitor during a machine learning training incident?

Track link flaps, optical DOM alarms, interface error counters (CRC/FCS), and queue depth or ECN-related signals if your platform exposes them. Correlate these with training job timelines to identify whether the network is causing retries or throttling. This avoids misattributing the issue to model code.
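The correlation step can start as a plain timestamp join: for each job retry, check whether a network event (DOM alarm, CRC spike, link flap) occurred shortly before it. Here is a minimal sketch. The function name and the 60-second default window are assumptions to tune for your environment, and both timestamp lists are assumed to share one clock and timezone.

```python
from datetime import datetime, timedelta
from typing import List, Sequence


def retries_near_events(
    retry_times: Sequence[datetime],
    event_times: Sequence[datetime],
    window: timedelta = timedelta(seconds=60),
) -> List[datetime]:
    """Return retries preceded by a network event within the window."""
    matched = []
    for retry in retry_times:
        # An event counts if it happened at or before the retry, within the window.
        if any(timedelta(0) <= retry - event <= window for event in event_times):
            matched.append(retry)
    return matched


if __name__ == "__main__":
    base = datetime(2024, 1, 1, 12, 0, 0)
    events = [base]
    retries = [base + timedelta(seconds=30), base + timedelta(minutes=10)]
    print(retries_near_events(retries, events))
```

If most retries have a preceding network event, the network is a strong suspect. If few do, the evidence points back toward the job, the host, or storage.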

Optical networking choices directly affect the latency, stability, and error profile that machine learning systems depend on, especially at scale. Next, map your current fiber loss measurements and transceiver compatibility constraints (see optical-networking-fiber-loss-testing) so you can standardize links with enough margin for real training and inference conditions.

Author bio: I work as a network performance consultant and have deployed optics-based AI fabrics, including DOM telemetry integrations and fiber qualification workflows. I focus on measured link budgets, switch transceiver interoperability, and operational playbooks that reduce training downtime.