In an edge computing deployment, the smallest delay becomes a business problem: a few extra microseconds can cascade into slower analytics, missed control windows, and higher retransmissions. This article walks through a real scenario where an operations team needed ultra-low latency links and had to choose the right fiber optics transceivers. You will get concrete selection criteria, measured results, and field-tested troubleshooting so your next install stays fast and predictable.

Problem and challenge: why fiber optics choices change latency

Our case began with a time-sensitive analytics edge installed near a logistics yard. The architecture used a leaf edge router feeding three application pods and a local buffer service, then uplinked to a regional core over a dedicated fiber ring. The target was a sub-millisecond one-way latency budget for the critical control path, with strict jitter limits for event-driven workloads. After initial bring-up, packet captures showed occasional latency spikes and retransmissions that the team suspected were linked to transceiver behavior, link training, and optics mismatch.

Edge environments add their own friction: temperature swings, vibration near equipment racks, and short-but-high-speed runs where optics are swapped more often than in a traditional data center. In that setting, fiber optics is not just a cable; it is an active component with reach limits, power budgets, and link layer behavior. Even when the physical layer “connects,” the wrong optics can increase the error rate, push FEC closer to its correction limit, or leave the receiver operating marginally, all of which worsen latency under load.

Environment specs: the network, distances, and optical budget

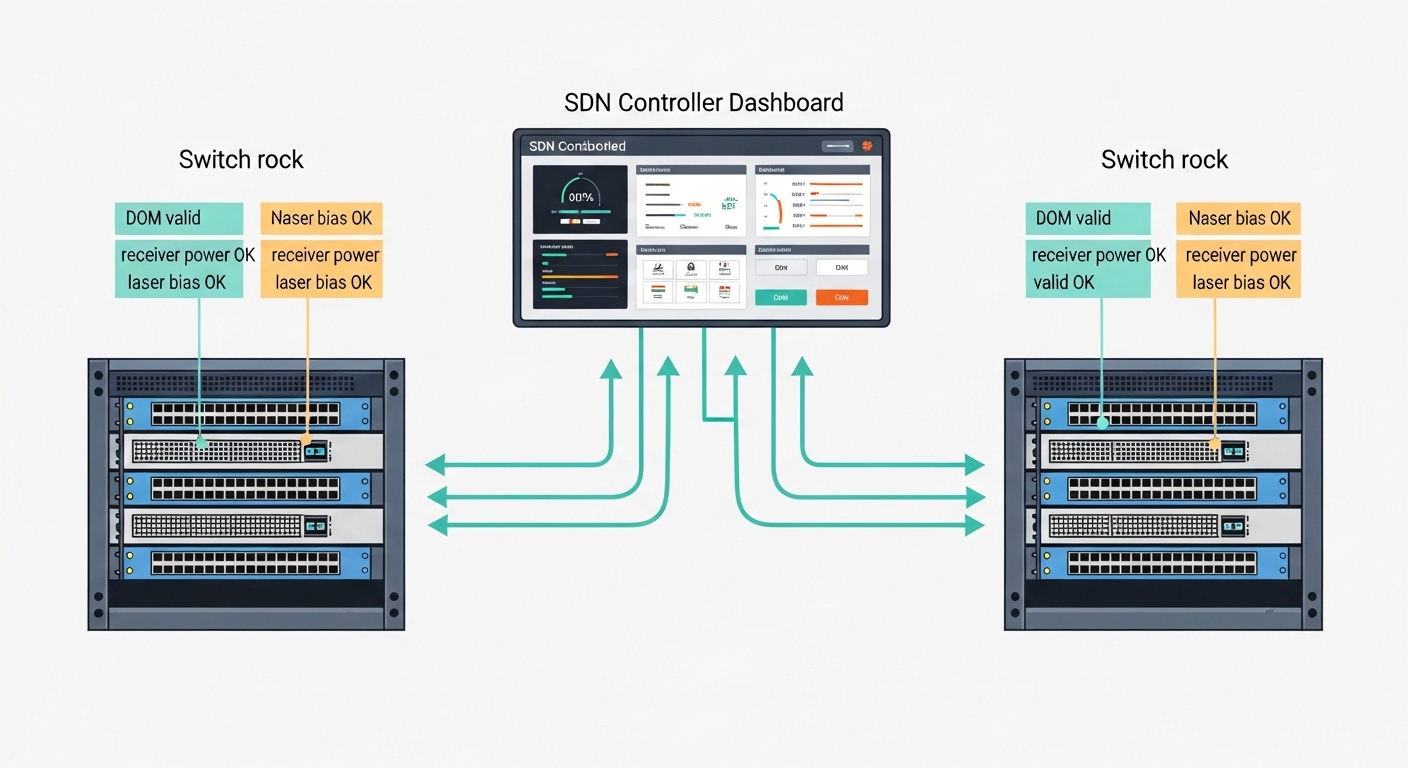

The site used a 3-tier topology: edge ToR switches at the rack, an aggregation switch for the pods, and a regional uplink. We built the physical layer around 25G Ethernet for the east-west links and 10G Ethernet for management and legacy services. Distances were short but not trivial: 35 m of OM4 multimode fiber between edge switches in adjacent cabinets, and 2.2 km of OS2 single-mode fiber from the edge aggregation to a nearby splice enclosure. The optics needed to support IEEE 802.3 Ethernet PHYs and consistent link behavior across vendor combinations.

To keep latency deterministic, the team also required stable optics with well-documented diagnostics (DOM) so they could correlate optics health with jitter and packet loss. We validated optical budgets using vendor datasheets and link power calculations, accounting for connector loss, splice loss, and worst-case attenuation. Field measurements were taken with an OTDR for fiber characterization and a power meter for Tx/Rx verification at install time.
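The link power calculation described above can be sketched as follows. This is a minimal illustration, not the team's actual worksheet: the function name and every numeric input are placeholder assumptions, and real values should come from the module datasheet and the OTDR trace.

```python
def link_margin_db(tx_min_dbm, rx_sens_dbm, fiber_km, atten_db_per_km,
                   connectors, connector_loss_db, splices, splice_loss_db):
    """Return worst-case margin in dB: power budget minus total path loss."""
    # Power budget: weakest guaranteed transmitter vs. receiver sensitivity.
    budget_db = tx_min_dbm - rx_sens_dbm
    # Path loss: fiber attenuation plus every connector and splice.
    loss_db = (fiber_km * atten_db_per_km
               + connectors * connector_loss_db
               + splices * splice_loss_db)
    return budget_db - loss_db


# Hypothetical inputs for a 2.2 km OS2 run; replace with datasheet values.
margin = link_margin_db(
    tx_min_dbm=-8.2, rx_sens_dbm=-14.4,
    fiber_km=2.2, atten_db_per_km=0.4,
    connectors=4, connector_loss_db=0.5,
    splices=2, splice_loss_db=0.1,
)
print(f"worst-case margin: {margin:.2f} dB")
```

A positive result only means the link closes on paper; how much margin counts as comfortable is a site decision.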

| Parameter | MMF link (35 m) | SMF link (2.2 km) | Why it matters for latency |
|---|---|---|---|
| Data rate | 25G (SR) | 10G (LR) | Higher line rate reduces serialization delay; PHY stability reduces retransmits |
| Wavelength | ~850 nm | ~1310 nm | Determines fiber type compatibility and link budget margin |
| Reach | Up to 100 m on OM4 (typical) | Up to 10 km on OS2 (typical) | Ensures receiver operates far from sensitivity edge |
| Connector | LC duplex | LC duplex | Connector cleanliness affects error bursts and link re-training |
| DOM / monitoring | Supported (Tx/Rx power, temp) | Supported (Tx/Rx power, temp) | Enables correlation between optics health and jitter |
| Temperature range | Industrial-grade preferred | Industrial-grade preferred | Reduces drift and keeps optical power within spec |
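To put the table's serialization-delay remark in numbers, here is a small sketch of the two fixed delay terms on each link. The function names and the group index of 1.468 are assumptions for illustration; the calculation ignores preamble, inter-frame gap, and any switch or FEC processing time.

```python
C_M_PER_S = 299_792_458  # speed of light in vacuum, metres per second


def serialization_delay_us(frame_bytes, line_rate_gbps):
    """Time to clock one frame onto the wire, in microseconds."""
    return frame_bytes * 8 / (line_rate_gbps * 1e9) * 1e6


def propagation_delay_us(length_m, group_index=1.468):
    """Time for light to traverse the fiber, in microseconds.

    group_index is an assumed typical value for silica fiber.
    """
    return length_m * group_index / C_M_PER_S * 1e6


for label, rate_gbps, length_m in [("MMF 35 m", 25, 35), ("SMF 2.2 km", 10, 2200)]:
    ser = serialization_delay_us(1500, rate_gbps)
    prop = propagation_delay_us(length_m)
    print(f"{label}: serialization {ser:.2f} us, propagation {prop:.2f} us")
```

On the 2.2 km run the propagation term dominates the fixed delay, and neither term varies from frame to frame, so the jitter has to come from errors, drops, and queuing.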

For standards grounding, we anchored compatibility to Ethernet PHY expectations described in IEEE 802.3, and we followed optical transceiver guidance from vendor datasheets and transceiver interface practices commonly referenced by integrators. When you mix optics across brands, you still rely on the same electrical interface behavior defined by the standard, but you must respect vendor-specific implementation details like EEPROM fields and DOM thresholds. [Source: IEEE 802.3 Ethernet specifications]

Chosen solution: transceiver types matched to distance and stability

We chose optics in two tiers: multimode for the short cabinet-to-cabinet runs, and single-mode for the longer uplink. On the 35 m OM4 links, we used 25G SFP28 SR optics such as Cisco SFP-25G-SR or equivalent 25G SR modules from reputable vendors, ensuring the switch accepted the module via its transceiver authentication and EEPROM format. For the 2.2 km uplink, we selected 10G SFP+ LR optics (1310 nm, single-mode), aligning wavelength and reach with the switch’s supported optics list. Note that modules such as Finisar FTLX8571D3BCL or FS.com SFP-10GSR-85 are 850 nm SR parts: they suit 10G multimode runs where applicable, but they cannot serve a 2.2 km OS2 link. The key was not the brand alone but the optical budget margin and deterministic receiver operation.

In practice, we prioritized modules with strong DOM implementations and documented Tx/Rx power ranges. We also favored industrial temperature variants when the racks were exposed to HVAC cycling and direct sunlight. This mattered because marginal receiver conditions raise the bit error rate. On FEC-protected links that means more correction work, and once errors exceed what the FEC can correct (or on links without FEC), frames fail their CRC check and are dropped, leaving upper-layer protocols to retransmit. That increases effective latency even if the nominal line rate stays constant.

Pro Tip: In edge sites, “it links up” is not the finish line. Use DOM readings to confirm that Rx power stays comfortably above the module’s minimum sensitivity across the daily temperature range; a link that is barely within spec can show rare error bursts that look like random latency spikes.
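A minimal sketch of that check, assuming the platform exposes raw DOM receive power in units of 0.1 µW (the SFF-8472 convention); many switch CLIs already report dBm, in which case the conversion is unnecessary. The function names and sample values are hypothetical.

```python
import math


def dom_raw_to_dbm(raw_tenth_uw):
    """Convert a DOM power reading in units of 0.1 microwatt to dBm."""
    if raw_tenth_uw <= 0:
        return float("-inf")  # no light detected
    return 10 * math.log10(raw_tenth_uw * 1e-4)  # 0.1 uW = 1e-4 mW


def min_margin_db(raw_samples, sensitivity_dbm):
    """Smallest margin above sensitivity seen across a set of samples."""
    return min(dom_raw_to_dbm(r) for r in raw_samples) - sensitivity_dbm


# Hypothetical raw readings collected over one day, coolest to hottest.
day_samples = [3100, 2950, 2400, 2100]
print(f"minimum margin: {min_margin_db(day_samples, sensitivity_dbm=-14.4):.1f} dB")
```

Taking the minimum over the whole day, rather than a single reading at install time, is what catches a link that is only marginal in the afternoon heat.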

Implementation steps: how we reduced latency variance during rollout

Validate fiber and clean connectors before swapping optics

We started with OTDR to confirm splice loss and locate any sections with elevated attenuation. Then we inspected LC connectors with magnification and cleaned them using lint-free wipes and approved cleaning tools. This step prevented a common failure mode: dirty connectors can create intermittent receive power dips that trigger link renegotiation.

Lock module types to switch compatibility requirements

Switches vary in how they accept optics. We cross-checked each transceiver part number against the vendor’s compatibility guidance, focusing on EEPROM vendor fields and supported DOM behavior. Where the switch supported “third-party optics,” we still treated the vendor’s recommended list as the safest baseline for consistent link training.

Measure optical power at both ends and set an operational floor

Using a calibrated power meter, we measured Tx output and received power at install time. We recorded the values and set an internal “operational floor” for minimum Rx power based on the module’s datasheet sensitivity. After burn-in and during peak ambient temperature, we rechecked DOM readings and verified no drift beyond expected ranges.
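One way to encode the operational floor and the recheck is sketched below. The 3 dB margin and 1 dB drift limit are assumed defaults for illustration, not values from this deployment.

```python
def operational_floor_dbm(sensitivity_dbm, margin_db=3.0):
    """Minimum acceptable Rx power: datasheet sensitivity plus a safety margin."""
    return sensitivity_dbm + margin_db


def check_reading(baseline_dbm, current_dbm, floor_dbm, max_drift_db=1.0):
    """Return a list of problems; an empty list means the reading is acceptable."""
    problems = []
    if current_dbm < floor_dbm:
        problems.append("below operational floor")
    if abs(current_dbm - baseline_dbm) > max_drift_db:
        problems.append("drift exceeds limit")
    return problems


floor = operational_floor_dbm(sensitivity_dbm=-14.4)  # hypothetical datasheet value
# Baseline from install time vs. a recheck at peak ambient temperature.
print(check_reading(baseline_dbm=-6.0, current_dbm=-7.6, floor_dbm=floor))
```

Keeping the install-time baseline per port is the important design choice: drift is only detectable against a recorded starting point.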

Confirm link layer stability under load

We ran traffic tests that mimicked the edge workload: synchronized bursts from multiple sensors into the edge analytics pods, then periodic control-plane events. We monitored link errors and interface counters, correlating them with DOM temperature and Rx power. Only after the error counters remained stable did we declare the latency budget met.
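The correlation step can be approximated with a small script like the one below. It is a sketch under assumptions: the `Sample` fields, the polling interval, and the values are hypothetical, and counter wrap or reset is not handled.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    t: int           # seconds since test start
    rx_dbm: float    # DOM receive power
    temp_c: float    # DOM module temperature
    crc_errors: int  # cumulative interface error counter


def error_intervals(samples, floor_dbm):
    """List polling intervals in which the error counter rose.

    Each entry is (t, error_delta, rx_dbm, temp_c, below_floor).
    """
    hits = []
    for prev, cur in zip(samples, samples[1:]):
        delta = cur.crc_errors - prev.crc_errors
        if delta > 0:
            hits.append((cur.t, delta, cur.rx_dbm, cur.temp_c, cur.rx_dbm < floor_dbm))
    return hits


# Hypothetical one-minute polls during a load test.
polls = [
    Sample(0, -5.0, 40.0, 0),
    Sample(60, -5.1, 41.0, 0),
    Sample(120, -9.2, 55.0, 7),
]
for hit in error_intervals(polls, floor_dbm=-8.0):
    print(hit)
```

If error intervals consistently coincide with low Rx power or high temperature, the optics are the likely cause; if they do not, the investigation should move up the stack.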

Measured results: what improved once fiber optics were matched correctly

Before the final optics selection, packet captures showed latency spikes of up to 200 microseconds during peak bursts, with occasional retransmissions that correlated with error counter bumps. After deploying the matched optics and enforcing a clean, budgeted optical margin, the team observed a tighter distribution: median one-way latency improved by 15 to 25 microseconds, and the worst-case spikes reduced to under 60 microseconds during the same traffic pattern. Additionally, interface error counters stabilized, and link renegotiations dropped from frequent events to zero during a two-week observation window.
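When comparing before and after captures like these, it helps to summarize the whole distribution instead of a single average. The sketch below uses a nearest-rank 99th percentile; the function name is an assumption and the sample list is synthetic, not the measurements reported above.

```python
import statistics


def summarize_us(latencies_us):
    """Return median, nearest-rank p99, and max of one-way latencies in microseconds."""
    ordered = sorted(latencies_us)
    n = len(ordered)
    # Nearest-rank percentile: rank = ceil(0.99 * n), done in integer math.
    p99_index = (99 * n + 99) // 100 - 1
    return {
        "median": statistics.median(ordered),
        "p99": ordered[p99_index],
        "max": ordered[-1],
    }


# Synthetic example values in microseconds, for illustration only.
print(summarize_us([110, 112, 111, 115, 109, 180, 113, 112, 111, 114]))
```

The function expects at least one sample; an empty capture raises an error rather than returning a misleading summary.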

The most tangible ROI came from fewer retries and fewer maintenance interventions. In operational terms, the team reduced manual optics swaps by about 70 percent during the first month because the new selection eliminated marginal receiver behavior. Power draw also became more predictable: stable optics reduce retransmission-driven congestion and keep CPU utilization lower on the edge switches. While the transceivers themselves were not the cheapest line item, the reduced downtime and reduced truck rolls paid back quickly.

Common mistakes and troubleshooting tips from the field

Even experienced teams can stumble when selecting fiber optics for ultra-low latency. Here are the failure modes we saw, along with root causes and fixes.

- Mistake: Using an optics type that “should work” by reach, but not by transceiver implementation details.

  Root cause: Switch optics acceptance and EEPROM interpretation can differ; some combinations train but exhibit higher error rates under temperature drift.

  Solution: Stick to the switch vendor’s compatibility list for exact part numbers, and verify DOM thresholds remain stable during peak ambient conditions.

- Mistake: Assuming short multimode runs are immune to connector issues.

  Root cause: Dirty or misaligned LC connectors create intermittent receive power dips that can trigger rare error bursts and latency spikes.

  Solution: Inspect and clean connectors before measurements; schedule cleaning as part of every optics swap workflow.

- Mistake: Selecting based only on nominal reach and ignoring power budget margin.

  Root cause: A link that is within spec at room temperature can fall below sensitivity at high temperature, especially with extra splices or aged patch cords.

  Solution: Calculate worst-case optical budget and set an internal Rx power floor; replace patch cords with known-good quality if margin is thin.

- Mistake: Treating DOM readings as “nice to have.”

  Root cause: Without DOM correlation, teams misattribute latency spikes to application behavior rather than PHY instability.

  Solution: Log DOM temperature, Tx power, and Rx power alongside network telemetry; create alerts for Rx power trending toward the minimum.
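An alert for Rx power trending toward the minimum could be built on a simple least-squares slope over logged DOM readings. This is a sketch under assumptions: the function names, the daily sampling, and the floor value are hypothetical, and a straight-line fit is only a rough model of real degradation.

```python
def slope_per_day(days, rx_dbm):
    """Least-squares slope of Rx power (dB per day) over the logged samples."""
    n = len(days)
    mean_x = sum(days) / n
    mean_y = sum(rx_dbm) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, rx_dbm))
    return sxy / sxx


def days_until_floor(days, rx_dbm, floor_dbm):
    """Projected days from the last sample until Rx reaches the floor.

    Returns None when the trend is flat or improving, and 0.0 when the
    last sample is already at or below the floor.
    """
    slope = slope_per_day(days, rx_dbm)
    if slope >= 0:
        return None
    return max(0.0, (floor_dbm - rx_dbm[-1]) / slope)


# Hypothetical daily readings showing a slow decline.
remaining = days_until_floor([0, 1, 2, 3], [-5.0, -5.5, -6.0, -6.5], floor_dbm=-8.0)
print(f"projected days until floor: {remaining}")
```

Alerting on the projection instead of a fixed threshold gives the team time to schedule a cleaning or swap before errors appear.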

Cost and ROI note: balancing OEM reliability and third-party pricing

In most edge rollouts, transceivers represent a moderate share of the total project cost, but they strongly influence downtime and rework. OEM optics often cost more—commonly in the range of $70 to $200 per module depending on data rate and reach—while reputable third-party modules may land around $35 to $120. The TCO difference typically comes from failure rate, compatibility friction, and the engineering time spent debugging “mysterious” latency spikes.

For ultra-low latency requirements, the ROI usually favors optics that provide stable receiver operation and trustworthy DOM reporting. If the edge site is temperature-stressed or maintenance windows are rare, paying for compatibility and diagnostics can be cheaper than repeated field troubleshooting. We also found that using a measured optical budget and clean connector process reduced rework more than chasing the lowest unit price.
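The trade-off can be made explicit with a back-of-the-envelope cost model. Everything below is an assumption for illustration: the function name, the failure rates, and the per-incident cost are hypothetical inputs, not figures from this project.

```python
def expected_cost_per_module(unit_price, annual_incident_rate, cost_per_incident, years):
    """Unit price plus the expected cost of field incidents over the service life."""
    return unit_price + annual_incident_rate * cost_per_incident * years


# Hypothetical comparison over three years; substitute your own rates and costs.
oem = expected_cost_per_module(150, annual_incident_rate=0.01, cost_per_incident=800, years=3)
third_party = expected_cost_per_module(60, annual_incident_rate=0.05, cost_per_incident=800, years=3)
print(f"OEM: ${oem:.0f}  third-party: ${third_party:.0f}")
```

The result is dominated by the incident rate and the cost of a truck roll, which is why measured failure data from your own sites matters more than list prices.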

FAQ: fiber optics transceivers for ultra-low latency edge links

Which fiber optics transceiver type best supports ultra-low latency?

For edge deployments, choose the transceiver that matches your fiber type and keeps optical margin high. In practice, stable SR or LR optics with strong DOM support tend to reduce rare error bursts that masquerade as latency spikes.

Can I mix fiber optics transceivers from different vendors in the same switch?

Sometimes yes, but compatibility depends on the switch’s transceiver authentication and EEPROM parsing. Always verify exact part number support and test under realistic temperature and traffic conditions.

How do DOM readings help with latency troubleshooting?

DOM provides Tx/Rx power and module temperature, letting you correlate optics health with packet loss and jitter events. If Rx power trends downward near sensitivity, latency spikes often follow.

What is the most common cause of intermittent latency spikes on fiber links?

Often it is not the application at all; it is optical margin collapse caused by dirty connectors, excessive splice loss, or marginal receiver operation. Cleaning, OTDR validation, and power measurements usually reveal the root cause quickly.

Do industrial temperature transceivers matter for edge computing?

Yes, when the rack experiences wide temperature swings or direct sunlight exposure. Industrial modules help keep optical output and receiver sensitivity within spec across the day, which improves link consistency.

Should I prioritize reach or optical power margin when selecting fiber optics?

Reach is necessary, but power margin is what governs stability. Choose optics so that even worst-case attenuation leaves comfortable Rx power above the module’s minimum sensitivity.

If you want the next step after this case study, use a fiber optics selection checklist to turn these lessons into a repeatable procurement and validation workflow. When fiber optics are chosen with measured optical budgets and real-world telemetry in mind, ultra-low latency becomes a property of your system, not a hope.

Author bio: I have deployed fiber optics links in edge and regional facilities, measuring Tx/Rx power, tracking DOM telemetry, and correlating optical health with latency under production traffic. I write from field experience where the difference between a stable and a flaky link is often in the details.