Smart city networks fail when fiber connectivity is an afterthought

In smart city deployments, fiber connectivity is the hidden dependency behind cameras, adaptive traffic signals, environmental sensors, and public Wi-Fi. A leaf-spine or ring topology can look perfect on paper, but real outages often trace back to transceiver mismatch, DOM misreads, or margin collapse from overlooked loss. This article helps network engineers, data center and edge site leads, and field technicians design fiber connectivity for smart cities with practical transceiver selection and operational checks.

How smart city fiber connectivity differs from typical data center links

Smart city networks blend controlled indoor switching rooms with harsh edge enclosures in cabinets, lamp posts, and roadside junction boxes. You routinely deal with temperature swings, vibration, intermittent power quality, and long aerial or ducted fiber routes with variable attenuation. IEEE 802.3 specifies optical and electrical behavior, but in the field, link stability is governed by the link budget, connector cleanliness, and the tolerances on transceiver launch power and receiver sensitivity.

For example, a municipal traffic corridor may use a pair of ruggedized switches in an equipment room, then extend to intersections over single-mode fiber (SMF) runs of 10 km to 25 km. In that scenario, choosing the right wavelength and reach class (1310 nm LR optics are typically rated for 10 km, 1550 nm ER optics for 40 km), using the correct connector polish (UPC vs APC), and confirming that DOM readings stay within the module's warning and alarm thresholds can be the difference between stable 10G links and repeated link flaps.

Smart city optical link budget basics you can audit

Engineers usually start with the fiber attenuation and add deterministic losses: splice loss, connector loss, patch panel loss, and any splitters or WDM filters. Then they add a margin for aging and cleaning variability. A practical approach is to treat the transceiver budget as a starting point, then enforce a conservative margin—especially for wet-environment enclosures where connector care is inconsistent.

When you cannot guarantee cleaning discipline at every field handoff, you should assume higher connector loss variability than lab specs. That means the “reach” number on a datasheet should not be treated as a guaranteed installation limit for fiber connectivity under real municipal maintenance workflows.
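The budget arithmetic above can be sketched as a small function. This is a minimal illustration, not a standards calculation: the 15 dB power budget, the attenuation values (0.35 dB/km at 1310 nm, 0.25 dB/km at 1550 nm), the splice and connector losses, and the 3 dB aging allowance are all hypothetical planning assumptions. Replace them with your survey data and the budget from your transceiver datasheet.

```python
# Link-budget margin sketch. Every numeric value in the example is a
# hypothetical planning assumption, not a datasheet or standards figure.


def link_margin_db(power_budget_db, length_km, atten_db_per_km,
                   splices, splice_loss_db, connectors, connector_loss_db,
                   other_loss_db=0.0, aging_margin_db=3.0):
    """Return the margin (dB) left after deterministic losses and an aging/cleaning allowance.

    A negative result means the link does not close under these assumptions.
    """
    deterministic_loss = (length_km * atten_db_per_km      # fiber attenuation
                          + splices * splice_loss_db        # fusion splices
                          + connectors * connector_loss_db  # mated connector pairs
                          + other_loss_db)                  # patch panels, WDM filters, splitters
    return power_budget_db - deterministic_loss - aging_margin_db


if __name__ == "__main__":
    BUDGET_DB = 15.0  # hypothetical transceiver power budget
    # Hypothetical 25 km route with 6 splices and 4 mated connector pairs.
    for label, atten in (("1310 nm", 0.35), ("1550 nm", 0.25)):
        # "lab" assumes clean connectors; "field" assumes a pessimistic 0.75 dB each.
        for case, conn_loss in (("lab", 0.3), ("field", 0.75)):
            margin = link_margin_db(BUDGET_DB, 25, atten, 6, 0.1, 4, conn_loss)
            print(f"{label} {case:5s} margin: {margin:+.2f} dB")
```

Under these assumptions the 1310 nm case drops from about +1.45 dB to -0.35 dB once connector loss rises to field levels, while the 1550 nm case keeps about +2.15 dB. That swing is why the datasheet reach number alone is not a safe installation limit.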


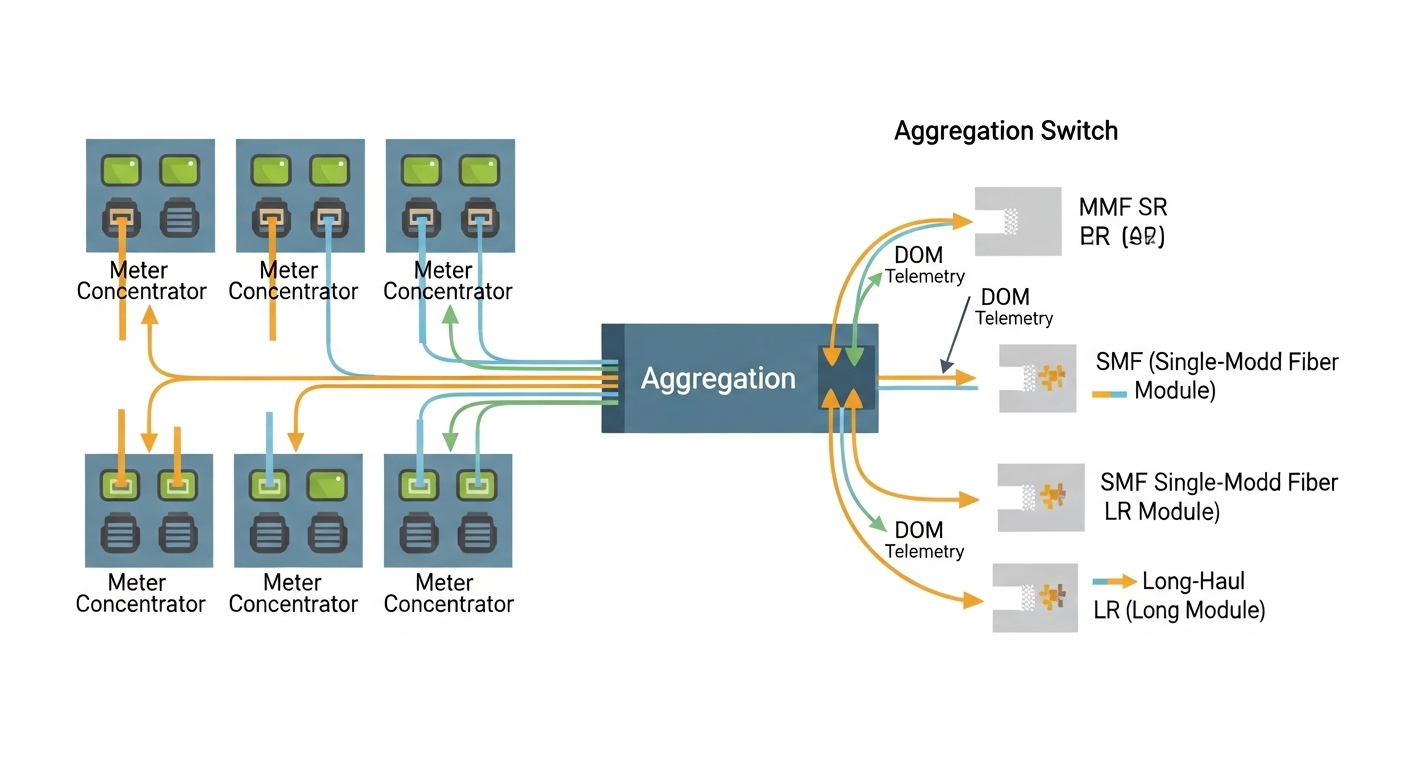

Transceivers that actually fit smart city fiber connectivity constraints

Smart city links commonly run 1G, 10G, and emerging 25G in aggregation and edge. Your transceiver choice must align with switch port type, optical fiber type, target reach, and environmental operating range. You also need to verify DOM support, because many operators use DOM telemetry for proactive maintenance and to trigger truck-rolls before failure.

Common transceiver families and where they land

Short-reach links inside buildings often use SFP/SFP+ SR optics over OM3 or OM4 multimode fiber, typically at 850 nm. Longer smart city routes usually use SMF optics such as LR (typically 10 km at 1310 nm) or ER (typically 40 km at 1550 nm); exact reach and power budget depend on the vendor part. In dense aggregation where power and heat are critical, SFP28 (25G) optics, or QSFP28 (100G, or 4x25G breakout on platforms that support it), reduce port count while preserving throughput.

In practice, the most frequent “it worked in the lab” failure is selecting SR optics when the installed fiber is effectively longer than expected, or when the multimode plant has higher-than-assumed attenuation due to patching, poor terminations, or legacy cable damage.

Key specifications comparison you should require from vendors

Below is a comparison of representative modules frequently seen in network refresh projects. Always validate the exact part number against your switch vendor compatibility matrix and the transceiver datasheet for DOM and temperature support.

| Module example | Data rate | Fiber type | Wavelength | Typical reach | Connector | Optical budget / laser class | Operating temp |
|---|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR | 10G | OM3/OM4 MMF | 850 nm | 300 m (OM3) / 400 m (OM4) | LC | Class 1M; vendor-defined budget | 0 to 70 °C |
| Finisar FTLX8571D3BCL | 10G | OM4 MMF | 850 nm | 400 m | LC | Vendor-defined 10G budget | 0 to 70 °C |
| FS.com SFP-10GSR-85 (example class) | 10G | OM4 MMF | 850 nm | 400 m | LC | Vendor-defined budget | 0 to 70 °C (verify per listing) |
| Common 10G LR class (1310 nm) | 10G | SMF | 1310 nm | 10 km | LC | Vendor-defined budget | -5 to 70 °C (varies by vendor) |
| Common 10G ER class (1550 nm) | 10G | SMF | 1550 nm | 40 km | LC | Vendor-defined budget | -5 to 75 °C (varies by vendor) |

Note: the table uses representative families; exact power levels, sensitivity, and DOM telemetry fields must be confirmed in the vendor datasheet for your selected part number. For standards background, you should align to IEEE 802.3 optical link requirements and transceiver performance expectations. [Source: IEEE 802.3-2022][Source: vendor transceiver datasheets]

Pro Tip: In smart city field work, optical budget failures often come from connector cleanliness and undocumented patch loss, not from “reach.” Require an inspection with a fiber microscope before blaming the transceiver, and treat DOM readings as a trend signal: if RX power margin is shrinking over weeks, you likely have a contamination or aging connector rather than an outright module defect.
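Treating DOM readings as a trend signal can be as simple as fitting a least-squares line to periodic RX power samples and flagging a sustained decline. The sketch below assumes you already export RX power in dBm with a timestamp in days. The 0.1 dB/week threshold and the function names are hypothetical choices, not vendor guidance.

```python
# DOM RX-power trend sketch. The 0.1 dB/week threshold is a hypothetical
# example; tune it against your own commissioning baselines.


def rx_trend_db_per_week(days, rx_dbm):
    """Ordinary least-squares slope of RX power (dBm) vs. time, in dB per week."""
    n = len(days)
    if n < 2 or n != len(rx_dbm):
        raise ValueError("need at least two paired samples")
    mean_x = sum(days) / n
    mean_y = sum(rx_dbm) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("samples must span more than one point in time")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, rx_dbm))
    return sxy / sxx * 7.0  # slope is dB/day; convert to dB/week


def margin_shrinking(days, rx_dbm, max_drop_db_per_week=0.1):
    """True when RX power is falling faster than the allowed rate."""
    return rx_trend_db_per_week(days, rx_dbm) < -max_drop_db_per_week


if __name__ == "__main__":
    days = [0, 7, 14, 21, 28]
    degrading = [-5.0, -5.2, -5.4, -5.6, -5.8]   # steady 0.2 dB/week loss
    stable = [-5.0, -5.02, -4.99, -5.01, -5.0]   # day-to-day noise only
    print(margin_shrinking(days, degrading), margin_shrinking(days, stable))
```

A slope over several weeks is less sensitive to single noisy readings than comparing today's value with yesterday's, which fits the slow contamination and aging pattern described above.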


Selection checklist for smart city fiber connectivity projects

Engineering teams often rush to “match the port speed” and forget the operational details that govern uptime. Use this decision checklist to reduce field churn and keep fiber connectivity stable across temperature, power events, and maintenance cycles.

- Distance and fiber type: confirm SMF vs MMF, measure actual installed length, and validate fiber plant type (OM3/OM4 grading and SMF core/cladding specs).

- Wavelength and reach class: choose 850 nm SR for short indoor links; choose 1310 nm LR or 1550 nm ER for long outdoor routes with higher attenuation risk.

- Switch compatibility: verify transceiver interoperability with your exact switch model, including any vendor firmware constraints. Some platforms enforce vendor-specific EEPROM fields.

- DOM support and telemetry mapping: ensure DOM fields you rely on (temperature, bias current, laser power, RX power) are exposed and readable by your monitoring system.

- Operating temperature range: outdoor enclosures can exceed lab assumptions; pick modules with extended temperature ratings when cabinets see direct sun or poor airflow.

- Power and thermal constraints: check module power draw and airflow assumptions in the enclosure; transceiver cages may have derating curves at high ambient.

- Connector standard and polish: confirm the connector type (typically LC) and the polish (UPC vs APC). Mismatched polish adds insertion loss and reflections that degrade receiver margin.

- Vendor lock-in risk and spares strategy: decide whether to standardize on OEM optics, third-party optics with known compatibility, or a qualified hybrid approach for cost control.

- Maintenance workflow: if the city maintenance team cannot reliably follow cleaning procedures, design for extra optical margin and build a fast swap plan.
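The distance, fiber type, and temperature items in the checklist can be turned into a first-pass filter over your approved optics catalog. This is a sketch under stated assumptions: the catalog entries reuse the representative reach and temperature values from the comparison table (which vary by vendor), and the 80% reach derating factor is a hypothetical rule of thumb. A passing result still needs a loss-budget check and switch compatibility validation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpticClass:
    name: str
    fiber: str          # "OM3", "OM4", or "SMF"
    reach_m: int        # nominal reach from the comparison table
    max_temp_c: float   # upper operating temperature (verify per part number)


# Representative classes from the comparison table; real values vary by vendor.
CATALOG = [
    OpticClass("10G SR (OM3)", "OM3", 300, 70.0),
    OpticClass("10G SR (OM4)", "OM4", 400, 70.0),
    OpticClass("10G LR 1310 nm", "SMF", 10_000, 70.0),
    OpticClass("10G ER 1550 nm", "SMF", 40_000, 75.0),
]


def candidate_optics(fiber, measured_length_m, max_enclosure_c, reach_headroom=0.8):
    """First-pass filter on fiber type, derated reach, and temperature rating.

    reach_headroom is a hypothetical derating factor (use at most 80% of nominal
    reach). Comparing enclosure temperature with the module rating is a
    simplification: module ratings usually refer to case temperature.
    """
    return [o.name for o in CATALOG
            if o.fiber == fiber
            and measured_length_m <= o.reach_m * reach_headroom
            and max_enclosure_c <= o.max_temp_c]


if __name__ == "__main__":
    print(candidate_optics("OM4", 350, 45))     # SR exceeds derated reach
    print(candidate_optics("SMF", 12_000, 60))  # beyond derated LR reach
    print(candidate_optics("SMF", 6_000, 60))   # LR and ER both pass the filter
```

When a long-reach class such as ER passes the filter on a short span, also check the receiver overload specification in the datasheet; short links may need an attenuator.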


Common mistakes and troubleshooting patterns in fiber connectivity

When smart city links flap or go dark, the fastest path to recovery is disciplined fault isolation. Below are field-proven failure modes and how to address them without guessing.

Selecting SR optics for a link that is effectively longer than expected

Root cause: The installed fiber length or patching path loss exceeds the SR reach budget, especially when multiple patch cords and connectors were added after the original survey. Multimode performance is sensitive to modal distribution and connector quality.

Solution: Re-measure end-to-end loss with an OTDR or calibrated OLTS, then compare measured values to the transceiver budget. If you need margin, move from SR over MMF to LR/ER over SMF, or re-terminate and reduce connector count.
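One way to structure that comparison is to check the OLTS end-to-end loss against the budget and, at the same time, list the OTDR events that exceed per-event limits, since those are the candidates for re-termination. The sketch assumes the commonly cited TIA-568 maximums of 0.75 dB per mated connector pair and 0.3 dB per splice (confirm them against the edition you work to). The event list and the 2.6 dB budget in the example are hypothetical.

```python
# Loss-audit sketch for a suspect SR link. Per-event limits are the commonly
# cited TIA-568 maximums; the example events and budget are hypothetical.

EVENT_LIMITS_DB = {"connector": 0.75, "splice": 0.3}


def audit_link(measured_loss_db, events, budget_db):
    """Compare measured end-to-end loss to the budget and list suspect OTDR events.

    events: iterable of (distance_km, kind, loss_db) tuples read from the OTDR trace.
    Event kinds without a limit in EVENT_LIMITS_DB are never flagged.
    """
    suspects = [(km, kind, loss) for km, kind, loss in events
                if loss > EVENT_LIMITS_DB.get(kind, float("inf"))]
    return {
        "margin_db": budget_db - measured_loss_db,
        "over_budget": measured_loss_db > budget_db,
        "suspects": suspects,
    }


if __name__ == "__main__":
    events = [
        (0.002, "connector", 0.3),   # patch panel at the switch
        (0.045, "connector", 0.9),   # patch cord added after the survey
        (0.120, "splice", 0.1),
        (0.290, "connector", 0.4),   # far-end termination
    ]
    print(audit_link(2.9, events, budget_db=2.6))
```

Here the link is about 0.3 dB over budget, and the 0.9 dB connector at 45 m is the obvious first target before moving the link to SMF.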

DOM telemetry mismatch leading to false alarms or ignored degradation

Root cause: Some third-party optics expose DOM fields but not in the way your monitoring stack expects, or they use different thresholds. Operators then either ignore warnings or interpret them incorrectly, delaying response until hard failures occur.

Solution: Validate DOM readout paths during commissioning: confirm sensor channels, units, and threshold logic. For example, verify laser bias and RX power trends under stable link conditions. Align alert thresholds with observed baseline values, not only datasheet defaults.
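Unit mismatches are easier to catch if you decode the raw diagnostics yourself once during commissioning and compare them with what the monitoring stack shows. The sketch below follows the SFF-8472 layout for SFP/SFP+ modules (page A2h, bytes 96 to 105) and assumes the module is internally calibrated; externally calibrated modules need the calibration constants from the same page applied first, which this sketch does not do. How you obtain the raw bytes depends on your platform and is not shown.

```python
# Sketch: decode SFF-8472 real-time diagnostics (page A2h, bytes 96-105)
# for an internally calibrated module only.
import math
import struct


def mw_to_dbm(mw: float) -> float:
    """dBm = 10 * log10(P / 1 mW); zero optical power maps to -inf."""
    return 10 * math.log10(mw) if mw > 0 else float("-inf")


def decode_dom(raw: bytes) -> dict:
    """Convert the 10-byte diagnostic block (A2h bytes 96-105) into engineering units."""
    # Big-endian: signed temperature, then unsigned Vcc, TX bias, TX power, RX power.
    temp_raw, vcc_raw, bias_raw, tx_raw, rx_raw = struct.unpack(">hHHHH", raw)
    return {
        "temp_c": temp_raw / 256.0,             # 1/256 degC per LSB
        "vcc_v": vcc_raw * 0.0001,              # 100 uV per LSB
        "bias_ma": bias_raw * 0.002,            # 2 uA per LSB
        "tx_dbm": mw_to_dbm(tx_raw * 0.0001),   # 0.1 uW per LSB, converted via mW
        "rx_dbm": mw_to_dbm(rx_raw * 0.0001),
    }


if __name__ == "__main__":
    # Synthetic sample: 35.5 degC, 3.3 V, 6 mA bias, 0.5 mW TX, 0.25 mW RX.
    sample = struct.pack(">hHHHH", 9088, 33000, 3000, 5000, 2500)
    for key, value in decode_dom(sample).items():
        print(f"{key}: {value:.2f}")
```

If your monitoring system reports RX power in mW while alert rules are written in dBm (or the reverse), decoding a known module this way makes the mismatch visible before it turns into ignored or false alarms.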

Connector contamination after field maintenance

Root cause: Re-seat cycles, dust ingress through outdoor enclosures, and incomplete cleaning steps create micro-contaminants that attenuate or reflect optical power. This is more common when a cabinet is serviced in windy or high-dust conditions.

Solution: Use a fiber microscope to inspect before and after cleaning. Clean with lint-free wipes or purpose-made fiber cleaning tools, then re-test optical power and link error counters. If reflections persist, verify polish type and check for damaged endfaces.

Using the wrong connector polish type for the environment

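Root cause: Mating a UPC connector to an APC connector leaves an air gap between the endfaces, which adds insertion loss and worsens return loss (more light is reflected back toward the transmitter). This degrades receiver performance, particularly at higher data rates, and the reflections can also couple into the transceiver optics and create instability.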

Solution: Standardize polish types across a route. For long-distance SMF, ensure the expected return loss profile matches the transceiver and receiver design. Confirm with connector labeling and physical inspection.

Cost, TCO, and ROI realities for smart city fiber connectivity

Transceiver pricing varies widely by speed, reach class, and whether you choose OEM or third-party. In typical procurement cycles, OEM 10G optics may cost roughly $80 to $250 per module, while qualified third-party modules often land around $30 to $120, depending on temperature rating and DOM behavior.

The hidden cost is not the module purchase; it is the operational cost of failures, truck-rolls, and extended downtime. If you standardize on optics that are not fully compatible with your switches, you may see increased port disable events, higher error rates, and more frequent replacement. A conservative TCO model should include spares stocking, commissioning time, and the labor cost of microscope-based cleaning and re-termination.
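A rough TCO model makes that trade-off concrete. In this sketch the unit prices are midpoints of the ranges quoted above ($165 OEM, $75 third-party); the fleet size, spares count, failure rates, truck-roll cost, commissioning hours, and labor rate are hypothetical placeholders, not measured data. Each failure is charged one truck-roll plus one replacement module.

```python
# Hypothetical five-year TCO comparison; replace every input with your own data.


def fleet_tco(modules, spares, unit_price, failures_per_year,
              truck_roll_cost, commissioning_hours, labor_rate, years=5):
    """Purchase (including spares) + commissioning labor + failure handling."""
    purchase = (modules + spares) * unit_price
    commissioning = modules * commissioning_hours * labor_rate
    # Each failure costs a truck-roll plus a replacement module.
    failures = failures_per_year * years * (truck_roll_cost + unit_price)
    return purchase + commissioning + failures


if __name__ == "__main__":
    # Third-party optics get extra commissioning time for compatibility testing.
    oem = fleet_tco(200, 20, 165, 2, 600, 0.5, 90)
    third_party = fleet_tco(200, 20, 75, 6, 600, 0.75, 90)
    print(f"OEM: ${oem:,.0f}  third-party: ${third_party:,.0f}")
```

With these placeholders the third-party fleet comes out about $2,700 cheaper over five years, but the advantage disappears at roughly 6.8 failures per year. Operational reliability, not purchase price, decides the outcome.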

ROI improves when you combine a vetted transceiver catalog with measurable commissioning: document link loss, record DOM baseline readings, and define thresholds for maintenance action. This approach reduces mean time to repair and keeps fiber connectivity stable through seasonal temperature cycles.
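Baseline-driven thresholds can be derived directly from commissioning readings. This sketch takes the median of baseline RX power samples and places warning and alarm levels a fixed number of dB below it, but never below the datasheet low-warning and low-alarm values. The 2 dB and 4 dB offsets and the datasheet thresholds in the example are hypothetical.

```python
# Baseline-driven RX power thresholds. Offsets and datasheet values in the
# example are hypothetical; use your module's actual low-warning/low-alarm levels.
from statistics import median


def rx_thresholds(baseline_dbm, datasheet_low_warn_dbm, datasheet_low_alarm_dbm,
                  warn_drop_db=2.0, alarm_drop_db=4.0):
    """Return (baseline, warn, alarm) in dBm.

    Taking the max of the two candidates selects the stricter (earlier-firing)
    threshold for a low-power condition.
    """
    base = median(baseline_dbm)
    warn = max(base - warn_drop_db, datasheet_low_warn_dbm)
    alarm = max(base - alarm_drop_db, datasheet_low_alarm_dbm)
    return base, warn, alarm


def classify(rx_dbm, warn, alarm):
    """Map a live RX reading to a maintenance state."""
    if rx_dbm < alarm:
        return "alarm"
    if rx_dbm < warn:
        return "warning"
    return "ok"


if __name__ == "__main__":
    base, warn, alarm = rx_thresholds([-4.1, -4.0, -4.2, -3.9, -4.0], -11.0, -14.0)
    print(base, warn, alarm, classify(-6.5, warn, alarm))
```

A healthy link at -4 dBm gets a warning at -6 dBm, long before a datasheet default near -11 dBm would fire. That earlier signal gives the maintenance team time to schedule a cleaning visit instead of responding to an outage.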

FAQ: Fiber connectivity transceivers for smart city edge links

What fiber connectivity distance should I plan for when choosing SR vs LR?

Use measured installed length plus a conservative margin for patching and connector loss. As a rule, SR over multimode is best for short indoor segments; for outdoor routes and uncertain patch paths, plan LR over SMF to preserve receiver margin.

Do I need DOM support for smart city monitoring?

If your operations team uses telemetry to detect degradation, DOM is valuable. However, you must validate that your monitoring system reads DOM fields correctly for the specific transceiver model and that alert thresholds match your baseline.

Can third-party transceivers work in production?

Yes, but only after compatibility validation against your exact switch model and firmware revision. Test in a commissioning window by verifying link stability, DOM readout, and error counters under expected ambient temperatures.

What is the fastest troubleshooting step for a link that goes down?

Start with optical layer checks: inspect connectors with a microscope, clean if needed, then verify link partner and interface settings. After that, compare DOM RX power against baseline and check error counters to distinguish optical margin loss from configuration or electrical issues.
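The order of checks in this answer can be written down as a small triage helper so that every technician follows the same sequence. This is a hypothetical sketch: the inputs are assumed to come from the microscope inspection, an interface settings comparison, DOM readings, and an error-counter delta over a fixed interval. The 3 dB drop limit is an illustrative value.

```python
# Hypothetical triage helper mirroring the check order described above.


def triage(endface_clean, settings_match, rx_dbm, baseline_dbm, crc_delta,
           drop_limit_db=3.0):
    """Return the next action for a down or flapping link.

    rx_dbm may be float("-inf") when the receiver sees no light.
    crc_delta is the error-counter increase over the observation interval.
    """
    if not endface_clean:
        return "clean connectors, re-inspect, then re-test"
    if not settings_match:
        return "fix link-partner or interface settings"
    if baseline_dbm - rx_dbm > drop_limit_db:
        return "optical margin loss: audit patching, connectors, and fiber"
    if crc_delta > 0:
        return "RX power near baseline but errors rising: suspect module, port, or electrical issue"
    return "optical layer looks healthy: escalate to higher-layer checks"


if __name__ == "__main__":
    print(triage(True, True, -9.5, -4.0, 0))
    print(triage(True, True, -4.5, -4.0, 12))
```

Encoding the sequence keeps the "clean first, then compare against baseline" discipline consistent across crews and leaves a record of which branch each incident took.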

How do I reduce fiber connectivity outages during maintenance visits?

Standardize cleaning kits, microscope inspection, and a documented “clean-and-reseat” procedure. Also maintain a spares strategy with pre-validated modules so swaps do not introduce new compatibility variables.

Which standards should I reference for optical Ethernet behavior?

IEEE 802.3 provides the Ethernet physical layer framework and optical performance expectations. For implementation details, always rely on the transceiver datasheet, your switch vendor documentation, and relevant ANSI/TIA guidance on fiber cabling practices.

For smart city fiber connectivity, success comes from engineering discipline: correct reach class, verified switch compatibility, and operational margin backed by DOM telemetry and connector hygiene. If you are planning the next rollout, review edge cabling and rack power planning to ensure your cooling, power, and transceiver density decisions align with the optical design.

Author Bio: I am a data center engineer who has deployed and troubleshot high-density fiber connectivity in edge and aggregation environments, including ruggedized outdoor cabinets and leaf-spine refreshes. My work focuses on rack-level power, cooling airflow, fiber plant validation, and transceiver commissioning for measurable uptime.