You can plan compute clusters perfectly, yet still lose throughput if the fiber plant under your AI infrastructure is mismatched to transceivers, reach, and latency budgets. This article helps data center architects, field telecom engineers, and NOC teams choose the right fiber types and associated optical interfaces for predictable performance. It also covers how the same decisions ripple into DWDM, SDH transport, and PON access when AI workloads expand.

Top 1: Single-mode fiber for long-reach AI infrastructure links

When AI infrastructure traffic must traverse campus routes, meet strict latency budgets across buildings, or support DWDM aggregation, single-mode fiber (SMF) is the default choice. In practice, I have seen SMF used for leaf-spine interconnects across separate halls with 100G/200G coherent optics, where latency stability matters more than raw link distance. Typical plant specs target attenuation of roughly 0.3 to 0.4 dB/km at 1310 nm and 0.20 to 0.25 dB/km at 1550 nm, with core/cladding geometry per ITU-T G.652.
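To see how those per-kilometer figures become a span estimate, here is a minimal Python sketch. It adds fiber attenuation to connector and splice losses. The attenuation values (0.35 dB/km at 1310 nm, 0.22 dB/km at 1550 nm), the 0.5 dB per mated connector pair, and the 0.1 dB per fusion splice are illustrative planning assumptions, not figures from a specific datasheet. The `SmfSpan` type and the example route are hypothetical.

```python
from dataclasses import dataclass

# Illustrative planning assumptions; replace with your cable and hardware datasheet values.
ATTENUATION_DB_PER_KM = {1310: 0.35, 1550: 0.22}
CONNECTOR_PAIR_LOSS_DB = 0.5   # per mated connector pair
SPLICE_LOSS_DB = 0.1           # per fusion splice


@dataclass
class SmfSpan:
    """Hypothetical description of one SMF route."""
    length_km: float
    connector_pairs: int
    splices: int


def span_loss_db(span: SmfSpan, wavelength_nm: int) -> float:
    """Estimate end-to-end insertion loss for an SMF span at one wavelength."""
    fiber_loss = span.length_km * ATTENUATION_DB_PER_KM[wavelength_nm]
    connector_loss = span.connector_pairs * CONNECTOR_PAIR_LOSS_DB
    splice_loss = span.splices * SPLICE_LOSS_DB
    return fiber_loss + connector_loss + splice_loss


if __name__ == "__main__":
    # Hypothetical 2 km inter-building route: 4 connector pairs, 2 splices.
    route = SmfSpan(length_km=2.0, connector_pairs=4, splices=2)
    for wl in (1310, 1550):
        print(f"{wl} nm: {span_loss_db(route, wl):.2f} dB")
```

For the hypothetical 2 km route, this gives about 2.9 dB at 1310 nm and 2.64 dB at 1550 nm. Connectors account for 2 dB of either figure, so connector hygiene still matters on km-scale SMF.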

Key specs and interface fit

SMF supports coherent and long-reach optics over tens of kilometers, depending on transceiver class and dispersion tolerance. If you are running DWDM, SMF is what lets you stack wavelengths without fighting modal dispersion. For Ethernet transport, SMF pairs naturally with 10GBASE-LR, 40GBASE-LR4, 100GBASE-LR4, and coherent pluggables. The fiber choice affects only the physical layer, so MAC and higher-layer behavior still follows IEEE 802.3 Ethernet.
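Here is what "stacking wavelengths" means in practice. The ITU-T G.694.1 DWDM grid is anchored at 193.1 THz, and channels sit at fixed spacings such as 100 GHz or 50 GHz. The Python sketch below converts grid channel numbers to vacuum wavelengths using λ = c/f. The eight-channel, 100 GHz plan in the example is an arbitrary illustration, not a recommended channel plan.

```python
# Speed of light in vacuum, m/s (exact by SI definition).
C_M_PER_S = 299_792_458
GRID_ANCHOR_HZ = 193.1e12  # ITU-T G.694.1 anchor frequency


def channel_frequency_hz(n: int, spacing_hz: float = 100e9) -> float:
    """Frequency of grid channel n (n may be negative) for a fixed channel spacing."""
    return GRID_ANCHOR_HZ + n * spacing_hz


def wavelength_nm(frequency_hz: float) -> float:
    """Convert an optical frequency to vacuum wavelength in nanometres."""
    return C_M_PER_S / frequency_hz * 1e9


if __name__ == "__main__":
    # Hypothetical plan: 8 channels on a 100 GHz grid starting at the anchor.
    for n in range(8):
        f = channel_frequency_hz(n)
        print(f"n={n:+d}  {f / 1e12:.2f} THz  {wavelength_nm(f):.2f} nm")
```

Channel 0 lands at about 1552.52 nm. Each 100 GHz step moves the wavelength by roughly 0.8 nm near 1550 nm, and a higher frequency means a shorter wavelength.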

- Best fit: campus, inter-building, DWDM aggregation, coherent links

- Pros: long reach, low attenuation, DWDM-ready

- Cons: requires SM optics and careful connector hygiene

Top 2: OM4 multimode fiber for cost-effective short-reach AI clusters

For dense AI infrastructure inside one building, multimode fiber (MMF) can reduce cost and simplify optics procurement. OM4 is commonly used with 25G/50G/100G short-reach transceivers over patch panels. Runs must stay short: IEEE 802.3 specifies 25GBASE-SR and 100GBASE-SR4 to 100 m on OM4, while 10GBASE-SR reaches 400 m. In a typical hall, I have seen OM4 support 400G over parallel optics when the channel budget and connector losses are tightly controlled.

Key specs and interface fit

OM4 is designed for higher modal bandwidth than OM3: an effective modal bandwidth of 4700 MHz·km at 850 nm, versus 2000 MHz·km. Real-world selection starts with your expected link budget: connector loss, patch panel insertion loss, and worst-case transmitter power versus receiver sensitivity. Many deployments also validate with a field launch-and-receive test plan rather than relying on “as-built” fiber attenuation.
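The budget check described above reduces to simple arithmetic. The worst-case power budget (minimum transmitter launch power minus receiver sensitivity) must exceed total channel loss, with margin left over. The Python sketch below uses hypothetical numbers throughout. The transmitter and receiver values stand in for whatever your module datasheet specifies. The 3.0 dB/km multimode attenuation and the 1.0 dB minimum design margin are assumed planning values.

```python
from typing import Sequence


def channel_loss_db(length_m: float,
                    connector_losses_db: Sequence[float],
                    fiber_db_per_km: float = 3.0) -> float:
    """Fiber attenuation plus every connector/patch-panel insertion loss in the path."""
    return length_m / 1000.0 * fiber_db_per_km + sum(connector_losses_db)


def link_margin_db(tx_min_dbm: float, rx_sensitivity_dbm: float, loss_db: float) -> float:
    """Worst-case margin: (minimum launch power - receiver sensitivity) - channel loss."""
    power_budget_db = tx_min_dbm - rx_sensitivity_dbm
    return power_budget_db - loss_db


if __name__ == "__main__":
    # Hypothetical 70 m OM4 run through two patch panels (4 mated interfaces).
    loss = channel_loss_db(70, [0.35, 0.5, 0.5, 0.35])
    margin = link_margin_db(tx_min_dbm=-6.0, rx_sensitivity_dbm=-9.5, loss_db=loss)
    print(f"channel loss {loss:.2f} dB, margin {margin:.2f} dB")
    if margin < 1.0:  # assumed minimum design margin
        print("insufficient margin: reduce mated connectors or shorten the run")
```

With these placeholder values, the link keeps about 1.59 dB of margin. One additional 0.5 dB mated pair would consume roughly a third of it, which is how an aged or reterminated patch cord can push a link that passed at turn-up out of budget.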

- Best fit: same-room ToR-to-spine, short-reach aggregation, fast turn-up

- Pros: lower-cost optics than long-reach or coherent SMF optics, easier testing

- Cons: modal dispersion limits reach; MM optics must be rated for OM4 at the planned run length

Top 3: OM5 wideband multimode for future-proofing AI infrastructure growth

AI infrastructure plans rarely stay still; you add GPUs, then you add bandwidth. OM5 (wideband multimode) is a practical upgrade path when you anticipate using multiple wavelengths over MMF without ripping out the plant. I have deployed OM5 in a staging facility where initial 10G and 25G links used conventional MM optics, then later migrated to higher-speed transceivers with improved wavelength utilization.

Key specs and interface fit

OM5 supports operation across multiple wavelengths in the 850 nm to 950 nm range, which suits multi-wavelength MM optics such as SWDM4 modules. The main risk is procurement mismatch: if your vendor’s transceivers assume OM4 rather than OM5 (for example, single-wavelength 850 nm optics), you may not realize the benefit.
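One way to catch that procurement mismatch before purchase is to compare each module's lane wavelengths with the fiber grade it will run on. In the Python sketch below, the 850 to 950 nm window follows the range given above. The wavelength sets are hypothetical examples: a four-wavelength SWDM-style module and a single-wavelength SR module. Use the values from your vendor's datasheet.

```python
from typing import Iterable

OM5_BAND_NM = (850, 950)  # wideband window as described in the text


def uses_wideband(lane_wavelengths_nm: Iterable[float]) -> bool:
    """True if the module transmits on more than one distinct wavelength inside the OM5 band."""
    lo, hi = OM5_BAND_NM
    in_band = {wl for wl in lane_wavelengths_nm if lo <= wl <= hi}
    return len(in_band) > 1


def om5_benefit(fiber_grade: str, lane_wavelengths_nm: Iterable[float]) -> str:
    """Classify whether an OM5 plant is actually exercised by a given module."""
    multi = uses_wideband(lane_wavelengths_nm)
    if fiber_grade == "OM5" and multi:
        return "benefit: multi-wavelength optic on wideband fiber"
    if fiber_grade == "OM5":
        return "no extra benefit: single-wavelength optic, OM4-equivalent use"
    if multi:
        return "check reach: multi-wavelength optic on non-OM5 fiber"
    return "conventional 850 nm link"


if __name__ == "__main__":
    swdm_style = [850, 880, 910, 940]  # hypothetical 4-wavelength module
    sr_style = [850]                   # hypothetical single-wavelength SR module
    print(om5_benefit("OM5", swdm_style))
    print(om5_benefit("OM5", sr_style))
    print(om5_benefit("OM4", swdm_style))
```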

- Best fit: buildings with known mid-term upgrade cycles, mixed-speed optics plans

- Pros: helps avoid early fiber replacement, supports multi-wavelength MM

- Cons: less universal than SMF for long reach; verify transceiver compatibility

Top 4: PON-aware fiber planning for AI infrastructure at the edge

Not all AI infrastructure is centralized. When you push AI inference to branches, retail sites, or industrial edges, you often encounter PON architectures. In those environments, fiber choice still affects optical budgets, split ratios, and maintenance windows. The PON itself runs on SMF toward the splitters, but edge sites may still use MMF for short in-building runs behind the access equipment.

Key specs and interface fit

PON performance is driven by optical power budget, connector/splice losses, and the number of splits. Practically, you must model worst-case aging and cleaning failures, because field technicians sometimes reuse patch cords with unknown cleanliness. If your AI edge nodes depend on consistent latency for telemetry, you should also consider how transport overlays behave over SDH or Ethernet backhaul.
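A worst-case PON loss check combines those terms. An ideal 1:N power split costs 10·log10(N) dB (about 15 dB for 1:32), and real splitters add excess loss on top. The Python sketch below is a planning aid under stated assumptions. The per-stage splitter excess, feeder attenuation, connector, splice, and aging values are hypothetical planning numbers. The 28 dB budget is only an example figure (GPON Class B+ optics are commonly quoted at 28 dB), so use the budget class of your actual OLT/ONT optics.

```python
import math

# Hypothetical planning values; replace with your plant records and optics class.
FIBER_DB_PER_KM = 0.35        # worst case, upstream near 1310 nm
CONNECTOR_DB = 0.5
SPLICE_DB = 0.1
SPLITTER_EXCESS_DB = 1.5      # excess over the ideal split loss, per splitter stage
AGING_MARGIN_DB = 1.0


def split_loss_db(ratio: int) -> float:
    """Ideal 1:N split loss plus the assumed excess loss for one splitter stage."""
    return 10 * math.log10(ratio) + SPLITTER_EXCESS_DB


def pon_path_loss_db(km: float, split_ratios: list[int], connectors: int, splices: int) -> float:
    """Worst-case OLT-to-ONT loss for a path through one or more cascaded splitters."""
    return (km * FIBER_DB_PER_KM
            + sum(split_loss_db(r) for r in split_ratios)
            + connectors * CONNECTOR_DB
            + splices * SPLICE_DB
            + AGING_MARGIN_DB)


if __name__ == "__main__":
    budget_db = 28.0  # example budget, e.g. a GPON Class B+ optic
    # Hypothetical edge site: 12 km feeder, 1:4 then 1:8 cascade, 6 connectors, 8 splices.
    loss = pon_path_loss_db(12, [4, 8], connectors=6, splices=8)
    print(f"path loss {loss:.1f} dB, headroom {budget_db - loss:.1f} dB")
```

With these assumed values, the cascaded 1:32 path leaves under 1 dB of headroom, so one dirty connector could consume it. Each cascade stage also adds its own excess loss.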

- Best fit: edge inference sites, branch aggregation with splitters

- Pros: one feeder fiber and splitter tree serve many endpoints; predictable access model

- Cons: tight power budget; a fiber mismatch or a minor loss increase, such as a dirty connector, can break service

Top 5: Match fiber type to transceiver class and validation method

Fiber type alone does not guarantee AI infrastructure performance. The real win comes from matching fiber to the transceiver class and then validating with measurement tools that reflect how the link truly behaves. I have seen “it should work” installations fail because the connector loss was fine on paper but exceeded the channel budget once patch cords aged or were reterminated.
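The matching step can be expressed as a lookup from transceiver class to supported fiber and nominal reach. The Python table below uses IEEE 802.3 nominal reaches for a few common PMDs. Examples include 10GBASE-SR at 300 m on OM3 and 400 m on OM4, 100GBASE-SR4 at 70 m and 100 m, and 10 km on SMF for the LR/LR4 variants. Treating OM5 like OM4 for single-wavelength 850 nm optics is an assumption, and the function and dictionary names are hypothetical. Nominal reach is only the first gate: the measured loss still has to fit the channel budget.

```python
# Nominal reach in metres per PMD and fiber type (IEEE 802.3 values for common PMDs).
NOMINAL_REACH_M = {
    "10GBASE-SR":   {"OM3": 300, "OM4": 400},
    "25GBASE-SR":   {"OM3": 70,  "OM4": 100},
    "40GBASE-SR4":  {"OM3": 100, "OM4": 150},
    "100GBASE-SR4": {"OM3": 70,  "OM4": 100},
    "10GBASE-LR":   {"SMF": 10_000},
    "40GBASE-LR4":  {"SMF": 10_000},
    "100GBASE-LR4": {"SMF": 10_000},
}


def check_link(pmd: str, fiber: str, length_m: float) -> list[str]:
    """Return a list of problems; an empty list means the link passes this first gate."""
    reaches = NOMINAL_REACH_M.get(pmd)
    if reaches is None:
        return [f"unknown PMD {pmd}: check the module datasheet"]
    # Assumption: OM5 behaves like OM4 for single-wavelength 850 nm optics.
    lookup = "OM4" if fiber == "OM5" and "OM4" in reaches else fiber
    if lookup not in reaches:
        return [f"{pmd} is not specified for {fiber}"]
    problems = []
    if length_m > reaches[lookup]:
        problems.append(f"{length_m} m exceeds {reaches[lookup]} m nominal reach on {fiber}")
    return problems


if __name__ == "__main__":
    print(check_link("100GBASE-SR4", "OM4", 120))  # too long for OM4
    print(check_link("10GBASE-LR", "OM4", 50))     # SMF optic on MM fiber
    print(check_link("25GBASE-SR", "OM5", 90))     # passes
```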

Comparison table: what engineers actually check

| Item | Single-mode (SMF) | OM4 Multimode | OM5 Wideband Multimode |
|---|---|---|---|
| Typical wavelength use | 1310 nm / 1550 nm (and coherent bands) | 850 nm class | 850 to 950 nm bands |
| Reach expectation (typical) | km-scale for LR and coherent optics | 100 m for 25G/100G SR, up to a few hundred meters for 10G SR | Similar to OM4 for many MM short-reach plans |
| Core concept | Single optical path; no modal dispersion | Higher modal bandwidth for short reach | Wideband MM for multi-wavelength support |
| Connector/installation sensitivity | High: cleaning and return loss still matter | High: patch panel loss and bend radius matter | High: verify optics wavelength support |
| Temperature range (typical pluggable spec) | Often 0 to 70 °C for standard modules | Often 0 to 70 °C for standard modules | Often 0 to 70 °C for standard modules |
| Operational validation | OTDR + optical power + BER/eye where available | OLTS + end-face inspection + link BER checks | OLTS + wavelength compatibility verification |

Pro Tip: In high-speed AI infrastructure links, treat connector cleanliness like a software regression. I have watched a single dusty MPO cassette trigger intermittent CRC errors that disappeared only after end-face inspection, microfiber replacement, and a full patch-cord re-clean schedule. The fiber type was correct; the optical interface quality was the limiting factor.

Selection criteria checklist for AI infrastructure fiber and optics

- Distance and topology: within-rack, across-row, across-building, or campus routing.

- Bandwidth target and interface class: 10G/25G/50G/100G/200G/400G; Ethernet over SMF or MMF; coherent where needed.

- Budget and optics availability: compare OEM pluggables versus third-party; verify compliance with IEEE 802.3 channel requirements.

- Switch and transceiver compatibility: confirm vendor support lists for specific models like Cisco SFP-10G-SR or Finisar FTLX8571D3BCL; test with your exact platform.

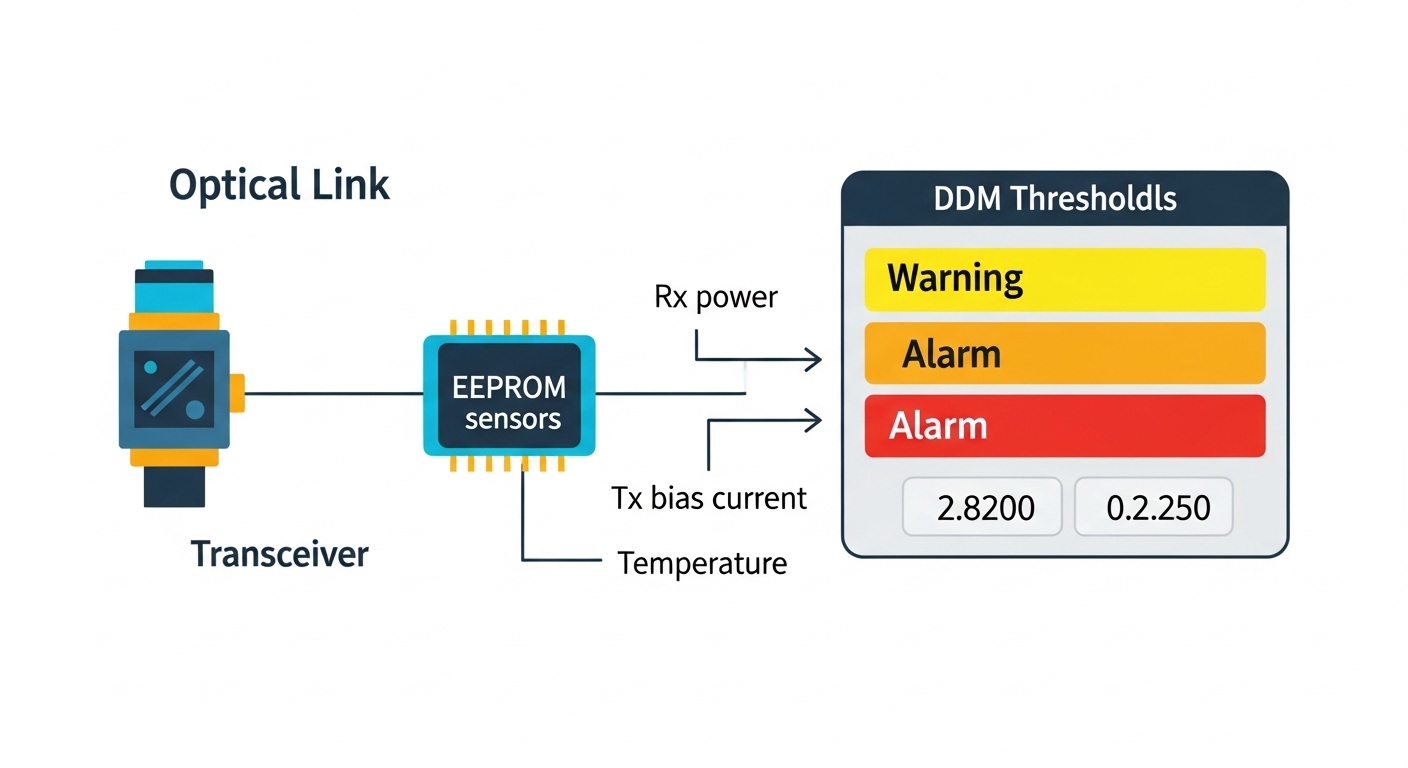

- DOM support and monitoring: ensure Digital Optical Monitoring works with your NMS and that alarms map correctly.

- Operating temperature and airflow: validate whether the site exceeds transceiver rated conditions under full-load racks.

- Vendor lock-in risk: plan for optics lifecycle; third-party SFP/QSFP modules can reduce capex but may create support friction.
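For the operating-temperature item, a quick check is to log DOM module temperature during a full-load soak and compare the peak with the module's rated range. The Python sketch assumes the common 0 to 70 °C commercial range listed in the comparison table and an arbitrary 5 °C safety margin. The sample readings are made up, and collecting DOM data from your platform is outside its scope.

```python
from statistics import mean

RATED_MIN_C, RATED_MAX_C = 0.0, 70.0   # assumed commercial-temperature module
SAFETY_MARGIN_C = 5.0                  # assumed design margin


def thermal_report(samples_c: list[float]) -> dict:
    """Summarise DOM temperature samples captured under full rack load."""
    peak = max(samples_c)
    return {
        "mean_c": round(mean(samples_c), 1),
        "peak_c": peak,
        "headroom_c": round(RATED_MAX_C - peak, 1),
        # Pass only if every sample stays inside the rated range minus the margin.
        "ok": RATED_MIN_C + SAFETY_MARGIN_C <= min(samples_c)
              and peak <= RATED_MAX_C - SAFETY_MARGIN_C,
    }


if __name__ == "__main__":
    # Hypothetical readings from a top-of-rack module during a GPU burn-in.
    print(thermal_report([48.2, 55.9, 61.4, 66.8, 64.0]))
```

In this made-up run, the module stays within its rating but leaves only 3.2 °C of headroom, so the check fails the assumed 5 °C margin. That result would prompt an airflow review before adding more load.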

Further reading: IEEE 802.3 overview; ITU-T G.652 guidance for SMF; ITU-T G.651 guidance for MMF.

Common mistakes and troubleshooting in AI infrastructure fiber choices

1) Wrong fiber type for the transceiver wavelength. Root cause: the permanent link is OM4, as the 850 nm MM transceiver expects, but the patch cords come from mixed stock or a connector pair has been swapped onto an SMF run. Solution: verify fiber labels end to end, then run OTDR/OLTS tests and confirm link behavior with the actual module installed.

2) Exceeding bend radius or insertion loss in patch panels. Root cause: tight routing behind cabinets or reuse of old patch panels with degraded ferrules. Solution: inspect bend radii, reterminate or replace patch cords, and re-run link loss tests before declaring the transceiver faulty.

3) Assuming “it passed once” means the channel is stable. Root cause: thermal cycling and partial cleaning issues cause intermittent CRC/BER spikes. Solution: implement end-face inspection SOP, schedule microfiber replacement, and monitor DOM telemetry for bias current and optical power drift.

4) Mixing optics vendors without validating DOM and thresholds. Root cause: DOM scaling differences and threshold tolerances can trigger alarms even when BER is acceptable. Solution: test in the lab with the exact switch model, then align your NMS alarm thresholds to the observed telemetry range.
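The DOM-related fixes in items 3 and 4 can be approached the same way. Record a per-port baseline at turn-up, alert on drift relative to that baseline, and derive NMS thresholds from the telemetry range actually observed in the lab. In the Python sketch below, the 1.0 dB drift limit and 2.0 dB threshold padding are assumed values for illustration. The `RxSample` type, port names, and readings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RxSample:
    """Hypothetical DOM receive-power reading for one port."""
    port: str
    rx_power_dbm: float


def drifted_ports(baseline: dict[str, float], current: list[RxSample],
                  max_drift_db: float = 1.0) -> dict[str, float]:
    """Ports whose received power fell more than max_drift_db below their turn-up baseline."""
    drift = {}
    for s in current:
        if s.port in baseline:
            delta = baseline[s.port] - s.rx_power_dbm
            if delta > max_drift_db:
                drift[s.port] = round(delta, 2)
    return drift


def thresholds_from_lab(observed_dbm: list[float], pad_db: float = 2.0) -> tuple[float, float]:
    """Low/high warning thresholds padded around the observed lab range.

    Keep the result inside the module's datasheet alarm limits.
    """
    return min(observed_dbm) - pad_db, max(observed_dbm) + pad_db


if __name__ == "__main__":
    baseline = {"Eth1/1": -2.1, "Eth1/2": -2.4}
    now = [RxSample("Eth1/1", -2.3), RxSample("Eth1/2", -3.9)]
    print(drifted_ports(baseline, now))               # only Eth1/2 has drifted
    print(thresholds_from_lab([-1.8, -2.1, -2.6, -3.0]))
```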

Cost and ROI note: what tends to dominate TCO

In AI infrastructure projects, fiber plant capex is usually smaller than optics spend over a multi-year lifecycle, but it can dominate if you need re-cabling. SMF links often cost more per run than MMF once optics are included, and coherent optics can be significantly more expensive than short-reach pluggables. Typical field experience: third-party optics can be 20 to 40 percent cheaper than OEM, but total cost depends on support tickets, return rates, and downtime. Plan for spares of the specific transceiver types, not just generic “compatible” modules. Also budget for test-equipment time (OTDR/OLTS), because that reduces rework.
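The purchase discount alone does not settle the question. A back-of-the-envelope way to show this is to fold failure and downtime costs into a lifecycle figure. In the Python sketch below, every input is a hypothetical placeholder: unit prices, annual failure rates, downtime hours per failure, handling cost, cost per hour, fleet size, and years. The inputs are chosen only to show how a 30 percent unit-price discount can be narrowed or erased by higher return rates and support friction.

```python
from dataclasses import dataclass


@dataclass
class OpticsOption:
    name: str
    unit_price: float
    annual_failure_rate: float         # fraction of modules failing per year
    downtime_hours_per_failure: float
    handling_cost_per_failure: float   # RMA, truck roll, ticket time


def lifecycle_cost(opt: OpticsOption, modules: int, years: int,
                   downtime_cost_per_hour: float) -> float:
    """Initial capex plus expected failure-related cost; replacements bought at unit price."""
    expected_failures = modules * opt.annual_failure_rate * years
    per_failure = (opt.unit_price
                   + opt.handling_cost_per_failure
                   + opt.downtime_hours_per_failure * downtime_cost_per_hour)
    return modules * opt.unit_price + expected_failures * per_failure


if __name__ == "__main__":
    oem = OpticsOption("OEM", 1000, 0.01, 2, 150)
    third = OpticsOption("third-party", 700, 0.03, 6, 300)
    for opt in (oem, third):
        print(opt.name, round(lifecycle_cost(opt, modules=200, years=5, downtime_cost_per_hour=400)))
```

With these placeholder inputs, the third-party option costs more over five years (242,000 versus 219,500) despite the lower unit price. If its failure and downtime figures matched the OEM's, the discount would survive. The structure of the comparison matters, not these particular numbers.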

Summary ranking: best fiber choice by AI infrastructure scenario

| Rank | Scenario | Best fiber pick | Why it wins |
|---|---|---|---|
| 1 | Campus or inter-building AI infrastructure links | Single-mode fiber | Low loss over km and DWDM/coherent readiness |
| 2 | In-room high-density 25G/50G/100G | OM4 multimode | Cost-effective short reach with mature optics ecosystem |
| 3 | Mid-term expansion with multi-wavelength MM plans | OM5 wideband multimode | Helps avoid early replacement when wavelengths diversify |
| 4 | Edge AI with PON backhaul and split ratios | Hybrid planning, usually SMF toward splits | Power budget control and manageable maintenance |

FAQ

Q: For AI infrastructure, is multimode ever better than single-mode?

Yes for short-reach inside a building, especially when you want lower-cost optics and can keep insertion loss and connector quality tightly controlled. OM4 is usually the practical baseline, while OM5 helps when multi-wavelength MM