You are planning the next upgrade for leaf-spine or core uplinks, and you need to decide whether to standardize on 400G or move straight to 800G. This article helps network and infrastructure teams evaluate cost, performance, optics compatibility, and operational risk so you can fund the right ports first. It is written for engineers who must match transceivers to switch line cards, meet reach requirements, and estimate power and failure impacts.

What changes when you scale from 400G to 800G

The jump from 400G to 800G is not just “double bandwidth.” It changes port density planning, oversubscription math, and the optics ecosystem you will standardize on. The two generations can differ in electrical lane counts, FEC/PCS behavior, and optics form factors, all of which affect interoperability and lead time. From a performance standpoint, both can deliver the same aggregate application throughput, but 800G does it with fewer physical uplinks at a higher per-port transceiver cost and power draw.

Throughput and oversubscription reality

In a typical leaf-spine or 3-tier data center, uplinks are sized for bursty east-west traffic plus north-south spikes. If you currently run 400G uplinks and see persistent congestion at specific times, moving to 800G can reduce the number of congested links without changing your routing policy. However, if congestion is driven by oversubscription at the fabric or by microburst sensitivity, “more speed per port” may not eliminate the root cause.
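To make the oversubscription math concrete, here is a minimal Python sketch. The leaf layout (48 x 100G server-facing ports) and the uplink counts are hypothetical values chosen for illustration, not figures from any specific platform.

```python
def oversubscription_ratio(downlink_gbps: float, uplink_count: int, uplink_gbps: float) -> float:
    """Ratio of server-facing bandwidth to uplink bandwidth on one leaf switch."""
    if uplink_count <= 0 or uplink_gbps <= 0:
        raise ValueError("uplink_count and uplink_gbps must be positive")
    return downlink_gbps / (uplink_count * uplink_gbps)


# Hypothetical leaf: 48 server-facing ports at 100G each.
downlink_gbps = 48 * 100

# Same total uplink bandwidth, delivered as 8 x 400G or 4 x 800G.
ratio_8x400 = oversubscription_ratio(downlink_gbps, uplink_count=8, uplink_gbps=400)
ratio_4x800 = oversubscription_ratio(downlink_gbps, uplink_count=4, uplink_gbps=800)

# Keeping 8 uplinks but moving them to 800G is what actually changes the ratio.
ratio_8x800 = oversubscription_ratio(downlink_gbps, uplink_count=8, uplink_gbps=800)

print(f"8 x 400G: {ratio_8x400:.2f}:1")
print(f"4 x 800G: {ratio_4x800:.2f}:1")
print(f"8 x 800G: {ratio_8x800:.2f}:1")
```

The point of the comparison is that replacing 400G uplinks with half as many 800G uplinks leaves the ratio unchanged; only adding aggregate uplink bandwidth lowers it.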

Optics and FEC implications

Optics selection is tightly coupled to switch vendor validation. For 400G, common choices include QSFP-DD and OSFP variants depending on platform generation; for 800G, you typically see OSFP or QSFP-DD800 modules, which may run as a single 800G port or as 2x400G depending on the silicon and the optics type. In practice, you must match the transceiver to the switch’s required FEC mode and supported DOM reporting so the control plane can monitor temperature, bias current, and link diagnostics.

| Spec | 400G (example: SR) | 800G (typical approach) |
|---|---|---|
| Target data rate | 400G Ethernet (e.g., 8x50G or 4x100G lanes depending on PHY) | 800G Ethernet over aggregated lanes (commonly 8x100G) |
| Wavelength | 850 nm (MMF) | 850 nm (MMF) in short-reach designs |
| Reach (MMF) | Up to ~70 m on OM3 and ~100 m on OM4/OM5 is commonly cited for SR8; confirm against the module datasheet | Often similar short-reach constraints, but exact reach depends on implementation and vendor validation |
| Connector | Multi-fiber MPO (typical for parallel SR optics, e.g., MPO-16 for SR8) | Multi-fiber MPO; exact fiber mapping depends on optics type |
| Operating temperature | Commercial and industrial grades vary by vendor; many pluggables support ~0 to 70 °C or extended ranges | Depends on optics SKU; validate against your site spec |
| DOM / monitoring | Supported by most modern optics; ensure switch compatibility | Same requirement; DOM mapping may differ by vendor |
| Energy impact | Lower per port than 800G, but more ports may be needed | Fewer ports, but higher per-port transceiver and line-card power |

Cost modeling that actually matches procurement and operations

When you model ROI, separate optics cost from platform cost and from operational risk. A typical 400G MMF transceiver line item is often meaningfully cheaper than an equivalent validated 800G optics line item, but 800G may reduce the number of ports required on the switching layer. Teams also underestimate spares: the larger the portfolio of optics SKUs, the higher the safety stock you must hold to avoid downtime.

Realistic price bands and TCO levers

In many enterprise and wholesale deployments, third-party 400G SR optics can be purchased at a noticeable discount versus OEM validated optics, while 800G pricing tends to be less forgiving due to newer validation cycles. Your total cost of ownership should include transceiver failure rate assumptions, expected replacement cycles, and the cost of labor to swap optics during maintenance windows. Power is also non-trivial: if 800G reduces the number of active ports, you may save power at the switching layer, but you must confirm the measured wattage of both the line card and the optical module.

Pro Tip: Before committing to 800G, run a pilot that measures actual port power and link error counters under your real traffic profile. Vendor datasheets rarely reflect your exact FEC settings, cable plant, and temperature gradients, and the gap can flip the ROI conclusion.
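The TCO levers described in this section can be combined into a simple model. The sketch below is a rough illustration only: the `UplinkOption` fields, the function name, and every number in the example are hypothetical placeholders, so substitute your own quotes, measured wattage from the pilot, and observed failure rates.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class UplinkOption:
    name: str
    ports: int                  # number of uplink ports (one optic each)
    optic_price: float          # purchase price per transceiver
    port_watts: float           # measured optic + line-card power per port
    annual_failure_rate: float  # fraction of optics replaced per year
    swap_labor_cost: float      # labor cost for one replacement


def optics_tco(option: UplinkOption, years: float, price_per_kwh: float) -> float:
    """Purchase cost + energy cost + expected replacement cost over `years`."""
    capex = option.ports * option.optic_price
    energy_kwh = option.ports * option.port_watts * HOURS_PER_YEAR * years / 1000
    power_cost = energy_kwh * price_per_kwh
    expected_swaps = option.ports * option.annual_failure_rate * years
    replacement_cost = expected_swaps * (option.optic_price + option.swap_labor_cost)
    return capex + power_cost + replacement_cost


# Placeholder inputs for illustration only; not real market prices or wattages.
options = [
    UplinkOption("8 x 400G", ports=8, optic_price=1.0, port_watts=1.0,
                 annual_failure_rate=0.02, swap_labor_cost=1.0),
    UplinkOption("4 x 800G", ports=4, optic_price=1.0, port_watts=1.0,
                 annual_failure_rate=0.02, swap_labor_cost=1.0),
]

for opt in options:
    print(opt.name, round(optics_tco(opt, years=5, price_per_kwh=0.10), 2))
```

The model deliberately leaves out platform cost and spares inventory so that the optics line item can be compared on its own, as the section recommends.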

Selection criteria checklist for 400G vs 800G

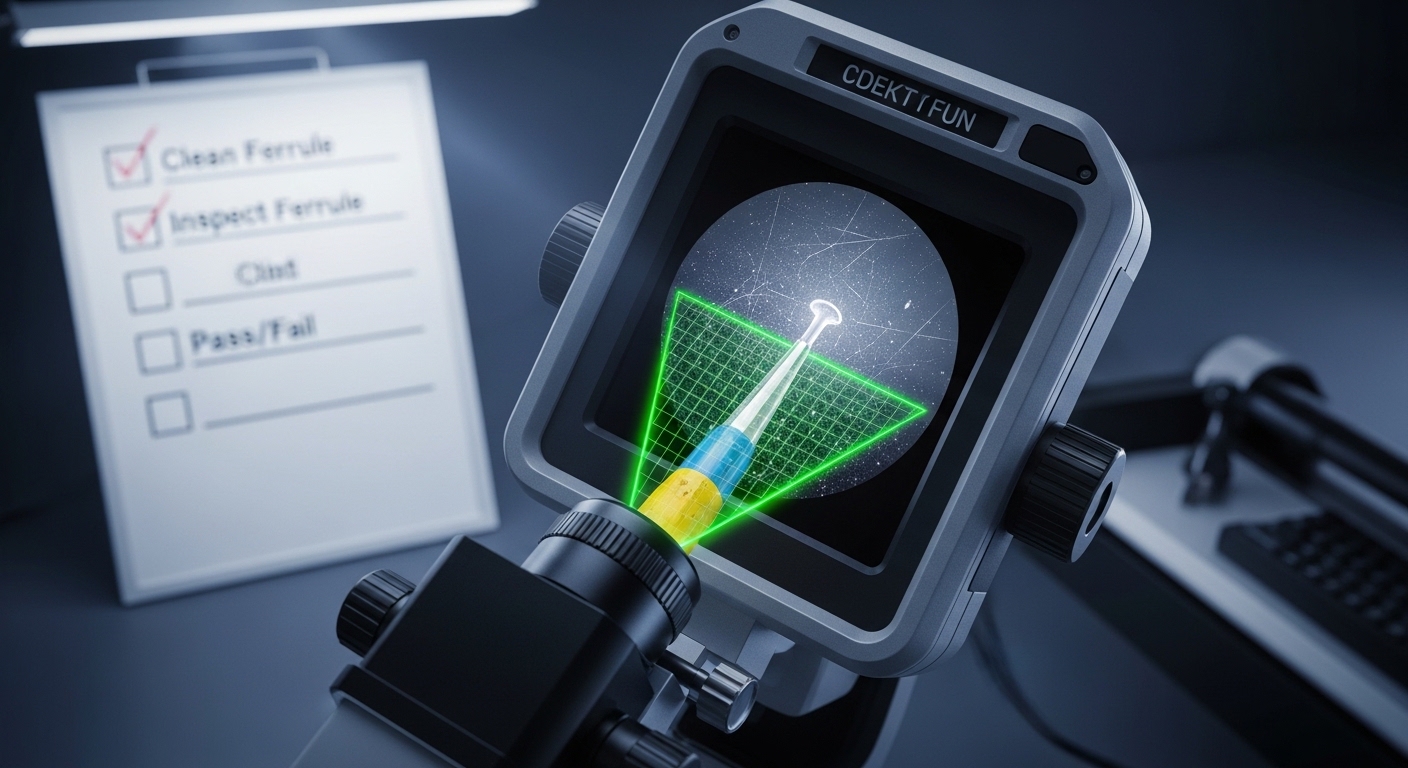

- Distance and fiber plant: confirm OM4 vs OM5, end-face cleanliness, patch panel losses, and link budget margin for SR optics.

- Switch compatibility: verify transceiver part numbers are supported by your exact switch model and line card revision.

- DOM and alarm behavior: ensure the switch reads DOM thresholds correctly and that alerts integrate with your monitoring stack.

- FEC mode: confirm the required FEC/PCS mode is supported by both the switch and the module; a mismatch can cause link flaps or degraded performance.

- Operating temperature: validate optics temperature rating against your rack inlet temperature and airflow constraints.

- Vendor lock-in risk: evaluate how much of your optics spend is constrained to OEM versus third-party, and what that means during shortages.

- Upgrade path: consider whether 400G gives you a smoother migration timeline while 800G optics mature.
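The link budget margin mentioned in the first checklist item is a simple subtraction, sketched here in Python. The function name and all numeric inputs (power budget, attenuation per km, per-connector loss) are placeholders for illustration; take the real values from the module datasheet and from measurements of your own fiber plant.

```python
def link_margin_db(power_budget_db: float,
                   fiber_length_m: float,
                   fiber_loss_db_per_km: float,
                   connector_losses_db: list[float]) -> float:
    """Remaining margin after fiber attenuation and connector/patch losses."""
    fiber_loss_db = fiber_length_m / 1000 * fiber_loss_db_per_km
    total_loss_db = fiber_loss_db + sum(connector_losses_db)
    return power_budget_db - total_loss_db


# Placeholder values: replace with datasheet and measured figures.
margin = link_margin_db(
    power_budget_db=1.9,             # allowed channel insertion loss (assumed)
    fiber_length_m=60,               # measured run length
    fiber_loss_db_per_km=3.0,        # MMF attenuation at 850 nm (assumed)
    connector_losses_db=[0.5, 0.5],  # two mated pairs through patch panels (assumed)
)

print(f"Link margin: {margin:.2f} dB")
if margin < 0:
    print("Link budget exceeded: shorten the run or remove a patch point.")
```

Because short-reach budgets are small, connector and patch panel losses usually dominate the result, which is why the checklist calls out end-face cleanliness alongside fiber grade.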

Common pitfalls and troubleshooting outcomes

These issues show up frequently when teams compare 400G and 800G rollouts.

- Pitfall: Link comes up, then intermittently drops after thermal soak.

  Root cause: optics running near temperature limits or bias current instability due to poor airflow.

  Solution: measure rack inlet and module temperature, improve airflow, and set alarms based on vendor DOM thresholds.

- Pitfall: High CRC/FEC errors after swapping to a cheaper third-party module.

  Root cause: mismatch between switch-required FEC settings and module behavior, or insufficient link budget due to patch loss.

  Solution: validate supported transceiver SKUs for the switch model; clean connectors and re-measure loss with an OTDR or certified tester.

- Pitfall: 800G ports underperform during congestion events despite “correct” link rate.

  Root cause: traffic engineering and queue scheduling bottlenecks inside the fabric; port speed alone does not fix microburst handling.

  Solution: review queue profiles, ECMP behavior, and congestion telemetry; test with production-like traffic patterns before scaling.
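For the thermal pitfall above, alarming on DOM thresholds can be as simple as a range check. This Python sketch assumes DOM readings have already been collected into a dictionary by your monitoring stack; the field names (`temperature_c`, `tx_bias_ma`) and threshold values are hypothetical, and real thresholds should come from the module's own DOM data or the vendor datasheet.

```python
def dom_alarms(reading: dict[str, float],
               thresholds: dict[str, tuple[float, float]]) -> list[str]:
    """Return a message for each monitored field outside its (low, high) range."""
    alarms = []
    for field, (low, high) in thresholds.items():
        value = reading.get(field)
        if value is None:
            alarms.append(f"{field}: no reading")
        elif value < low:
            alarms.append(f"{field}: {value} below {low}")
        elif value > high:
            alarms.append(f"{field}: {value} above {high}")
    return alarms


# Hypothetical thresholds and a hypothetical reading taken after thermal soak.
thresholds = {"temperature_c": (0, 70), "tx_bias_ma": (2, 12)}
reading = {"temperature_c": 72, "tx_bias_ma": 6.5}

for message in dom_alarms(reading, thresholds):
    print("ALARM", message)
```

Treating a missing field as an alarm is a deliberate choice here: a module that stops reporting DOM data is itself a compatibility signal worth investigating.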

FAQ: 400G vs 800G for buyers and field teams

Is 800G always better if my switches support it?

No. 800G can reduce port count, but it can increase optics cost and spares complexity. If your congestion is caused by oversubscription or microbursts, 400G may deliver better ROI when paired with correct queue tuning.

For short reach, what optics should I evaluate first?

For typical intra-rack and top-of-rack distances, evaluate 850 nm MMF pluggables with connector type and reach validated against your switch model. If you are comparing 400G SR versus 800G SR, ensure both have the same or better link budget margin on your actual fiber plant.

Do third-party optics work for 800G?

Sometimes, but compatibility risk is higher on newer platforms because validation windows are shorter. Use only transceiver SKUs listed as supported by the vendor for your switch model, and keep a controlled rollback plan.

What should I measure during a pilot?

Measure link stability, DOM telemetry (temperature, bias current), and error counters such as CRC and any vendor-specific FEC indicators. Also record real power draw per port during your peak traffic period.
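Error counters are easier to compare across 400G and 800G ports when converted to rates. The following Python sketch assumes you already poll cumulative counters at a fixed interval; the counter names (`crc_errors`, `fec_uncorrected`) are hypothetical, since the actual names depend on your switch OS and telemetry pipeline.

```python
def counter_rates(prev: dict[str, int], curr: dict[str, int],
                  interval_s: float) -> dict[str, float]:
    """Per-second rate for each cumulative counter between two polls."""
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    rates = {}
    for name, value in curr.items():
        delta = value - prev.get(name, 0)
        if delta < 0:
            # Counter went backwards: assume it was reset and count from zero.
            delta = value
        rates[name] = delta / interval_s
    return rates


# Two hypothetical polls taken 60 seconds apart on the same port.
previous_poll = {"crc_errors": 10, "fec_uncorrected": 0}
current_poll = {"crc_errors": 70, "fec_uncorrected": 3}

rates = counter_rates(previous_poll, current_poll, interval_s=60)
for name, rate in rates.items():
    print(f"{name}: {rate:.3f}/s")
```

Recording these rates alongside the DOM temperature and the per-port power measurement for the same interval makes it easier to correlate error bursts with thermal or load conditions.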

How do I estimate spares and downtime cost?

Estimate replacement lead times and the probability of optics failure during the initial rollout window. Multiply expected swaps by labor hours and maintenance window constraints, then compare that TCO across 400G and 800G optics strategies.
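As a rough illustration of that estimate, the Python sketch below sizes a spares pool from the expected failures during one replacement lead time and prices the expected swap labor. The function names, the safety factor, and every input value are assumptions for illustration, not vendor failure data.

```python
import math


def spares_needed(installed: int, annual_failure_rate: float,
                  lead_time_days: float, safety_factor: float = 2.0) -> int:
    """Spares to hold so expected failures during one lead time are covered."""
    expected_failures = installed * annual_failure_rate * lead_time_days / 365
    # Hold at least one spare per SKU even when the expectation rounds to zero.
    return max(1, math.ceil(expected_failures * safety_factor))


def swap_labor_cost(installed: int, annual_failure_rate: float, window_days: float,
                    hours_per_swap: float, hourly_rate: float) -> float:
    """Expected labor cost of optics swaps over the rollout window."""
    expected_swaps = installed * annual_failure_rate * window_days / 365
    return expected_swaps * hours_per_swap * hourly_rate


# Hypothetical rollout: 200 optics of one SKU, 2% annual failure rate,
# 90-day replacement lead time.
spares = spares_needed(installed=200, annual_failure_rate=0.02, lead_time_days=90)
labor = swap_labor_cost(installed=200, annual_failure_rate=0.02, window_days=365,
                        hours_per_swap=1.5, hourly_rate=100.0)

print(f"Spares to stock: {spares}")
print(f"Expected swap labor over one year: {labor:.2f}")
```

Run the calculation once per optics SKU; a strategy that mixes several SKUs pays the "at least one spare" floor for each of them, which is how a wider portfolio raises safety stock.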

Where does ROI usually land: 400G or 800G?

ROI depends on your port density needs and optics pricing at the time of procurement. Many teams start with 400G for faster standardization and predictable spares, then move to 800G when optics cost and validation maturity improve.

If you want a practical next step, map your current uplink utilization, fiber reach, and switch line-card compatibility, then