How 400G migration can break your AI workload plan

When AI workloads scale from pilot to production, the network becomes a bottleneck fast: congestion, oversubscription surprises, and optics that do not behave the way the datasheet promises. This article compares common 400G migration options so you can choose a path that fits your fabric, budget, and risk tolerance. It is written for data center engineers and field techs planning a 400G rollout for AI workloads across leaf-spine or spine-only topologies.

Performance tradeoffs: 400G optics formats and link behavior

For AI workloads, performance is not only about raw throughput. It is about stable link operation under real conditions: temperature swings, connector stress, and deterministic latency targets when using ECN, RoCEv2, or DCTCP. At 400G, you will usually choose a pluggable form factor (for example, QSFP-DD) and an optics family based on reach and cost. In practice, the “best” option is the one that maintains low BER and consistent receiver margin across the full operating range defined by the IEEE 802.3 400GBASE physical-layer requirements and the transceiver vendor's compliance guidance.

What engineers measure during a rollout

During migration, field teams typically validate link stability with optical power levels, link error counters, and temperature/DOM telemetry. A common operational goal is to keep receive power within the transceiver's recommended range and to watch the forward error correction (FEC) counters: 400G Ethernet links run with RS-FEC enabled, so a rising corrected-codeword count is an early sign of shrinking margin, and uncorrectable codewords mean frames are being lost. If you use RoCEv2 for AI workloads, also validate that ECN marking and queue behavior match your congestion control strategy, because optics-related flaps can look like congestion to the transport layer.
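A minimal Python sketch of the receive-power check, assuming you have already pulled per-lane DOM readings from the switch (collection is platform-specific and not shown). The `RxWindow` limits and the 1 dB early-warning guard are hypothetical placeholders; substitute the recommended range from your transceiver datasheet.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class RxWindow:
    """Recommended receive-power window in dBm (placeholder values; use your datasheet)."""
    min_dbm: float
    max_dbm: float
    guard_db: float = 1.0  # assumed early-warning margin inside each edge of the window


def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power in milliwatts (as some DOM tools report it) to dBm."""
    if power_mw <= 0:
        raise ValueError("optical power must be positive to express in dBm")
    return 10 * math.log10(power_mw)


def classify_lane(rx_dbm: float, window: RxWindow) -> str:
    """Return 'fail' outside the window, 'warn' within guard_db of an edge, else 'ok'."""
    if rx_dbm < window.min_dbm or rx_dbm > window.max_dbm:
        return "fail"
    if rx_dbm < window.min_dbm + window.guard_db or rx_dbm > window.max_dbm - window.guard_db:
        return "warn"
    return "ok"


def check_port(lane_rx_dbm: list[float], window: RxWindow) -> dict[int, str]:
    """Classify every lane of one port; lanes are numbered from 0."""
    return {lane: classify_lane(p, window) for lane, p in enumerate(lane_rx_dbm)}


# Hypothetical window and readings for one 4-lane port.
window = RxWindow(min_dbm=-7.0, max_dbm=4.0)
print(check_port([-2.1, -2.4, -6.5, -8.2], window))  # → {0: 'ok', 1: 'ok', 2: 'warn', 3: 'fail'}
```

Flagging "warn" lanes lets you schedule cleaning before a lane drops out entirely, which matters because one marginal lane can take the whole 400G port down.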

Reach and fiber reality: SR, LR, and DR choices for AI workloads

Reach determines how you group racks, how you route fiber trunks, and how many spares you must stage. For most AI workloads in modern pods, short-reach optics dominate because training clusters typically use top-of-rack and spine cabling within a few hundred meters. You can still hit longer reaches for aggregation or campus extensions, but that introduces higher optical cost and sometimes different connector types or fiber plant assumptions.

Side-by-side optics comparison (typical 400G options)

The table below compares representative 400G transceiver categories you will see in migration plans. Exact values vary by vendor and specific part number, so treat these as engineering starting points.

| Option | Typical wavelength | Target reach (typ.) | Fiber type | Connector (typ.) | Data rate | Operating temperature (typ.) |
|---|---|---|---|---|---|---|
| 400G SR (multimode) | 850 nm | 70 m to 100 m | OM4 / OM5 | MPO-12 or MPO-16 | 400G Ethernet | 0 °C to 70 °C (varies) |
| 400G DR (single-mode) | ~1310 nm | up to 500 m | SMF | MPO or LC (varies) | 400G Ethernet | -5 °C to 70 °C (varies) |
| 400G LR (single-mode) | ~1310 nm band | 10 km | SMF | LC | 400G Ethernet | -5 °C to 70 °C (varies) |
| 400G ER (single-mode) | ~1310 nm band | 40 km | SMF | LC | 400G Ethernet | -5 °C to 70 °C (varies) |

Reference points: IEEE 802.3 defines Ethernet physical layers and compliance expectations for optical links, while vendor datasheets define DOM behavior, optical power budgets, and temperature classes. See [IEEE 802.3](https://standards.ieee.org/standard/802_3) and consult your specific transceiver manufacturer's datasheet for DOM and power range details.

Compatibility and risk: OEM vs third-party optics for AI workloads

In AI workload deployments, compatibility failures are operationally expensive: a single flapping port can trigger traffic reroutes, training job restarts, and hours of troubleshooting. OEM optics can reduce surprises, but third-party options can cut procurement cost if they meet the relevant compliance requirements and appear on your switch's compatibility matrix. The key is to validate whether your switch firmware supports the transceiver type, whether the transceiver reports correct DOM values, and whether the platform enforces vendor-specific authentication.

Decision checklist for compatibility

- Switch and firmware compatibility: confirm the exact model and firmware release supports that transceiver vendor and optics class.

- DOM support and telemetry accuracy: verify that temperature, bias current, transmit power, and received power fields populate reliably.

- Power budget alignment: validate link margin against your measured fiber loss and patch panel insertion loss.

- Authentication or vendor-lock policies: check if your platform blocks non-OEM optics or requires a supported list.

- Connector and polarity discipline: ensure MPO polarity method matches your cabling standard and labeling practice.
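The power-budget item reduces to dB arithmetic: the available budget (minimum transmit power minus receiver sensitivity) minus channel loss and penalties must leave positive margin. The sketch below assumes a simple loss model of fiber attenuation plus a fixed loss per mated connector pair; all parameter values are hypothetical placeholders, and a measured end-to-end loss (for example from a light source and power meter) overrides the estimate when you have one.

```python
from typing import Optional


def link_margin_db(
    tx_min_dbm: float,
    rx_sensitivity_dbm: float,
    fiber_km: float,
    atten_db_per_km: float,
    mated_connectors: int,
    loss_per_connector_db: float,
    penalties_db: float = 0.0,
    measured_loss_db: Optional[float] = None,
) -> float:
    """Return remaining link margin in dB; a negative value means the link is out of budget."""
    budget_db = tx_min_dbm - rx_sensitivity_dbm
    if measured_loss_db is not None:
        channel_loss_db = measured_loss_db  # prefer a measured value over the estimate
    else:
        channel_loss_db = fiber_km * atten_db_per_km + mated_connectors * loss_per_connector_db
    return budget_db - channel_loss_db - penalties_db


# Hypothetical: 3.0 dB budget, 500 m of fiber at 0.5 dB/km, two mated connectors at 0.5 dB each.
print(link_margin_db(-3.0, -6.0, 0.5, 0.5, 2, 0.5))  # → 1.75
```

Each extra patch panel adds another mated pair, so re-running the calculation after adding jumpers shows how quickly short-reach margin disappears.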

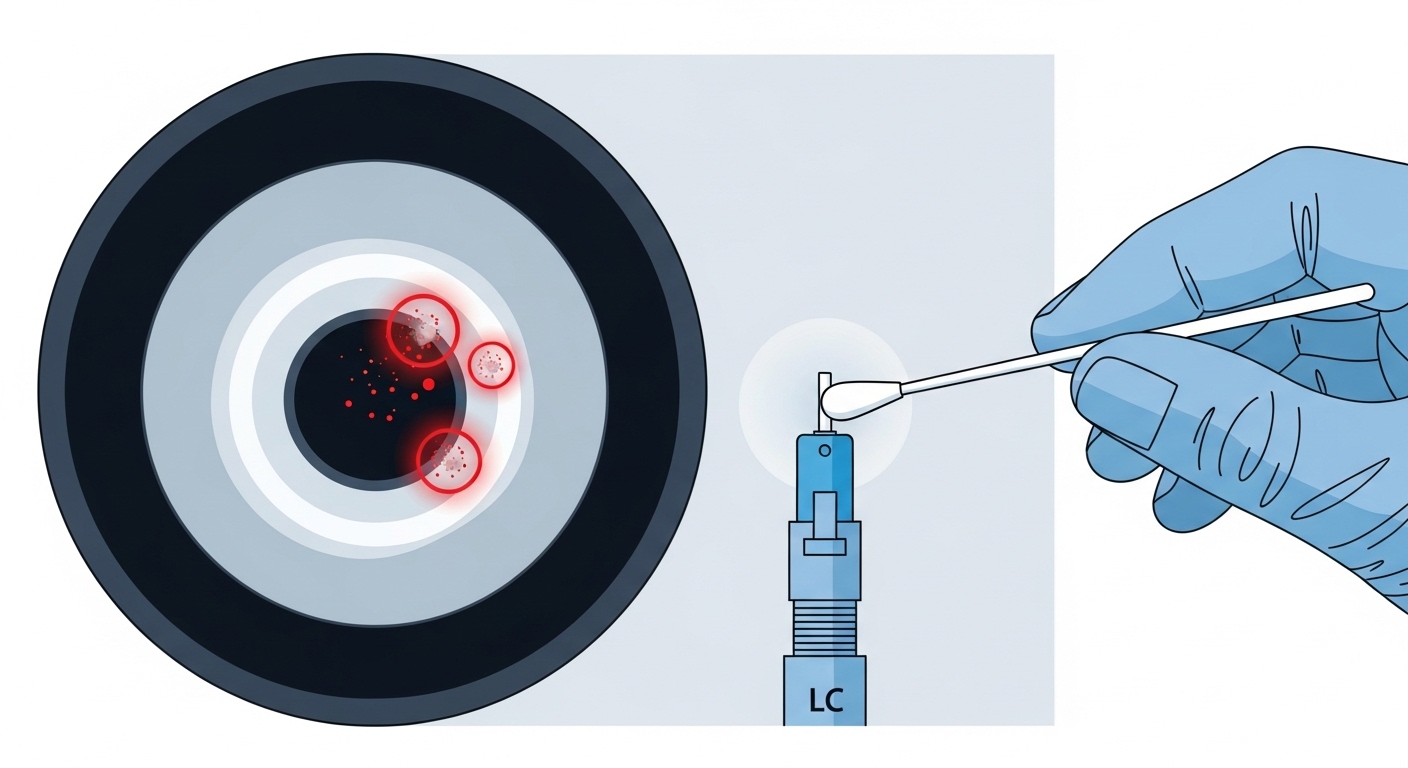

Pro Tip: In the field, the most common “optics problem” during an AI workload migration is not the transmitter. It is a polarity or dust issue on MPO connectors that may go unnoticed until heavy training traffic turns marginal-lane errors into visible packet loss.

TCO and ROI: how to budget 400G migration without surprises

ROI for AI workload network upgrades is measured in uptime, time-to-train, and reduced operational risk, not just per-transceiver sticker price. 400G optics pricing varies widely by reach and vendor strategy, but the relative cost tiers are predictable: short-reach multimode optics are often the cheapest per port, while long-reach single-mode optics cost more and may require a different fiber plant. At high utilization, even a small optics failure rate translates into repeated repair incidents that can disrupt training cycles. A realistic TCO view also includes spares, labor time for cleaning and re-termination, and the cost of firmware validation cycles.

Cost drivers you should model

- Procurement cost: OEM usually costs more but reduces validation effort.

- Power and cooling impact: higher optical complexity can slightly influence rack power, but the bigger factor is avoiding retries and link flaps.

- Downtime and labor: re-seating, cleaning, and re-testing with an optical power meter can consume hours per incident.

- Spare strategy: staging the right reach and vendor type prevents prolonged downtime.
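A minimal sketch of how these drivers combine into a multi-year comparison. The cost model (purchase price for ports plus spares, a one-off validation effort, and incident costs driven by an assumed annual failure rate) is a simplification chosen for illustration, and every input is a hypothetical placeholder to replace with your own quotes and incident history.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class OpticsScenario:
    ports: int
    spares: int
    unit_price: float
    validation_cost: float         # one-off firmware/compatibility validation effort
    annual_failure_rate: float     # assumed fraction of installed optics that fail per year
    hours_per_incident: float      # cleaning, reseating, re-testing, swapping
    labor_rate_per_hour: float
    downtime_cost_per_hour: float  # assumed cost of idle accelerators or delayed training


def tco(s: OpticsScenario, years: int) -> dict[str, float]:
    """Break total cost of ownership over `years` into its components."""
    capex = (s.ports + s.spares) * s.unit_price
    incidents_per_year = s.ports * s.annual_failure_rate
    cost_per_incident = s.hours_per_incident * (s.labor_rate_per_hour + s.downtime_cost_per_hour)
    incident_cost = years * incidents_per_year * cost_per_incident
    return {
        "capex": capex,
        "validation": s.validation_cost,
        "incidents": incident_cost,
        "total": capex + s.validation_cost + incident_cost,
    }


# Hypothetical inputs only; build one scenario per option (for example OEM vs third-party).
example = OpticsScenario(ports=100, spares=10, unit_price=1000.0, validation_cost=5000.0,
                         annual_failure_rate=0.02, hours_per_incident=2.0,
                         labor_rate_per_hour=100.0, downtime_cost_per_hour=1000.0)
print(tco(example, years=3))
```

Running two scenarios side by side makes the tradeoff concrete: a lower unit price only wins if the extra validation effort and incident cost it brings stay small.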

For compliance context, IEEE 802.3 and vendor datasheets are your authoritative sources. For operational best practices on optical connectors and fiber handling, consult vendor guidance such as [Cisco optics and compatibility documentation](https://www.cisco.com/) and the datasheets Finisar and other transceiver vendors publish on their support portals.

Common mistakes and troubleshooting tips during 400G AI workloads rollout

Even strong architectures fail during migration when teams underestimate optics handling and platform nuances. Below are concrete failure modes seen by field engineers, with root causes and fixes.

Link flaps after cleaning attempts

Root cause: MPO polarity mismatch or incorrect cable orientation after re-termination. The link may train intermittently because some lanes are marginal.

Solution: verify MPO polarity method end-to-end (connector mapping), re-check fiber labels, and use a continuity test before re-seating. Then confirm receive power lane-by-lane with DOM telemetry or transceiver diagnostics.
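Verifying polarity end-to-end means composing the fiber-position mapping of every trunk and patch cord in the path. The sketch below models the three 12-fiber MPO cable types commonly labeled Type A (straight, 1 to 1), Type B (reversed, 1 to 12), and Type C (pair-flipped, 1 to 2) in TIA-568 cabling practice. It assumes a parallel-optics link on MPO-12 whose complete path must behave like a single reversed (Type B) cable; confirm the required mapping for your transceiver and polarity method before relying on it.

```python
from typing import Callable, Sequence

FIBERS = 12  # MPO-12; positions are numbered 1..12

Mapping = Callable[[int], int]


def type_a(pos: int) -> int:
    """Straight-through: position 1 arrives at position 1."""
    return pos


def type_b(pos: int) -> int:
    """Reversed: position 1 arrives at position 12."""
    return FIBERS + 1 - pos


def type_c(pos: int) -> int:
    """Pair-wise flip: 1<->2, 3<->4, and so on."""
    return pos + 1 if pos % 2 == 1 else pos - 1


def end_to_end(path: Sequence[Mapping], pos: int) -> int:
    """Follow one fiber position through every cable segment in order."""
    for segment in path:
        pos = segment(pos)
    return pos


def parallel_polarity_ok(path: Sequence[Mapping]) -> bool:
    """True if the whole path behaves like a single reversed (Type B) cable."""
    return all(end_to_end(path, p) == FIBERS + 1 - p for p in range(1, FIBERS + 1))


print(parallel_polarity_ok([type_b]))                  # → True
print(parallel_polarity_ok([type_b, type_b]))          # → False (two flips cancel out)
print(parallel_polarity_ok([type_a, type_b, type_a]))  # → True
```

Running the path you documented during re-termination through a check like this catches the classic case where a replacement trunk or cassette silently adds or removes a flip.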

High FEC events or rising error counters

Root cause: fiber loss or patch panel insertion loss exceeds the transceiver power budget, especially after adding new jumpers or routing cables with tighter bends.

Solution: measure optical power at both ends, confirm you stay within the transceiver's recommended receive power range, and inspect for bends tighter than the cable's minimum bend radius or damaged patch cords.
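Because FEC counters are usually cumulative, trend analysis works on deltas between polls rather than on raw values. The sketch below assumes you poll a corrected-codeword counter and an uncorrectable-codeword counter per port (counter names and availability differ by platform), treats any counter decrease as a reset, and uses a hypothetical alarm threshold.

```python
from __future__ import annotations

from typing import Sequence, Tuple

# (timestamp in seconds, cumulative corrected codewords, cumulative uncorrectable codewords)
Sample = Tuple[float, int, int]


def fec_intervals(samples: Sequence[Sample]) -> list[tuple[float, int]]:
    """Return (corrected codewords per second, new uncorrectable codewords) per polling interval."""
    intervals = []
    for (t0, c0, u0), (t1, c1, u1) in zip(samples, samples[1:]):
        if c1 < c0 or u1 < u0 or t1 <= t0:
            continue  # counters cleared, device rebooted, or bad timestamp: skip this interval
        intervals.append(((c1 - c0) / (t1 - t0), u1 - u0))
    return intervals


def fec_status(samples: Sequence[Sample], max_corrected_per_s: float) -> str:
    """Classify a port's FEC history: uncorrectable, high_corrected, rising, or ok."""
    intervals = fec_intervals(samples)
    if any(uncorrectable > 0 for _, uncorrectable in intervals):
        return "uncorrectable"  # errors FEC could not repair: frames are being dropped
    rates = [rate for rate, _ in intervals]
    if any(rate > max_corrected_per_s for rate in rates):
        return "high_corrected"
    if len(rates) >= 3 and all(a < b for a, b in zip(rates[-3:], rates[-2:])):
        return "rising"  # the last three interval rates are strictly increasing
    return "ok"


# Hypothetical polls one minute apart and a hypothetical threshold of 100 corrections/s.
samples = [(0, 0, 0), (60, 600, 0), (120, 1500, 0), (180, 2700, 0)]
print(fec_status(samples, max_corrected_per_s=100.0))  # → rising
```

A "rising" result on a link that still passes traffic is the cue to measure power at both ends before the correction rate turns into uncorrectable errors.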

Non-OEM optics not recognized or only partially supported

Root cause: firmware compatibility mismatch or authentication enforcement that blocks or partially supports transceivers.

Solution: confirm the switch model and firmware release with the transceiver vendor’s compatibility notes, run a controlled validation in a staging rack, and keep an OEM spare for rapid rollback.
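A controlled staging validation can end with a simple, repeatable gate: every DOM poll must return the fields from the compatibility checklist, with plausible values, before third-party optics move to production. The field names and plausibility bounds below are hypothetical; map them to whatever your switch's transceiver diagnostics actually expose.

```python
from __future__ import annotations

from typing import Mapping, Optional, Sequence

REQUIRED_FIELDS = ("temperature_c", "bias_ma", "tx_power_dbm", "rx_power_dbm")


def dom_problems(
    samples: Sequence[Mapping[str, Optional[float]]],
    bounds: Mapping[str, tuple[float, float]],
) -> dict[str, dict[str, int]]:
    """Count missing and implausible readings per DOM field across all polls."""
    missing = {f: 0 for f in REQUIRED_FIELDS}
    implausible = {f: 0 for f in REQUIRED_FIELDS}
    for sample in samples:
        for field in REQUIRED_FIELDS:
            value = sample.get(field)
            if value is None:
                missing[field] += 1
                continue
            low, high = bounds[field]
            if not low <= value <= high:
                implausible[field] += 1
    return {"missing": missing, "implausible": implausible}


def ready_for_rollout(
    samples: Sequence[Mapping[str, Optional[float]]],
    bounds: Mapping[str, tuple[float, float]],
) -> bool:
    """Gate: at least one poll, and no missing or implausible readings at all."""
    problems = dom_problems(samples, bounds)
    return bool(samples) and not any(
        count for counts in problems.values() for count in counts.values()
    )


# Placeholder plausibility bounds and readings, for illustration only.
bounds = {"temperature_c": (-10.0, 85.0), "bias_ma": (0.0, 100.0),
          "tx_power_dbm": (-10.0, 5.0), "rx_power_dbm": (-15.0, 5.0)}
good = [{"temperature_c": 45.0, "bias_ma": 7.5, "tx_power_dbm": 0.5, "rx_power_dbm": -1.2}] * 3
print(ready_for_rollout(good, bounds))  # → True
```

Keeping the per-field counts, not just the pass/fail result, makes it easy to report exactly which DOM field a given third-party part fails to populate.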

Temperature-related performance drift

Root cause: transceivers operating near the upper temperature limit because of airflow constraints or obstructed cable guides in dense racks.

Solution: verify airflow paths and inlet temperatures, enforce cabling clearance, and monitor DOM temperature over several days during peak AI workloads windows.
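A minimal sketch for summarizing several days of DOM temperature samples against the module's rated maximum. The maximum comes from the transceiver's temperature class in its datasheet, and the 5 °C guard band is an assumption; reporting the fraction of samples near the limit helps separate a brief spike from sustained heat during peak AI workload windows.

```python
from typing import Dict, Sequence, Union


def temperature_headroom(samples_c: Sequence[float], max_operating_c: float,
                         guard_c: float = 5.0) -> Dict[str, Union[float, str]]:
    """Summarize DOM temperature samples against the module's rated maximum."""
    if not samples_c:
        raise ValueError("need at least one temperature sample")
    peak = max(samples_c)
    headroom = max_operating_c - peak
    near_limit = sum(1 for t in samples_c if t >= max_operating_c - guard_c)
    if headroom <= 0:
        status = "at_or_over_limit"
    elif headroom <= guard_c:
        status = "warn"
    else:
        status = "ok"
    return {"peak_c": peak, "headroom_c": headroom,
            "fraction_near_limit": near_limit / len(samples_c), "status": status}


# Hypothetical samples from a dense rack, assuming a 70 °C rated maximum.
print(temperature_headroom([55.0, 60.0, 66.0, 68.0], max_operating_c=70.0)["status"])  # → warn
```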

Decision matrix: pick the right 400G option for your environment

Use this matrix to align technical fit with operational risk. It is a practical way to compare optics strategies across performance, distance, and compatibility constraints.

| Reader profile | Best-fit optics approach | Why it fits | Main tradeoff |
|---|---|---|---|
| Short reach within a pod (most AI workloads) | 400G SR over OM4/OM5 | Lowest cost per port and simplest cabling for links of roughly 70 m to 100 m | Distance ceiling; requires a well-maintained multimode plant |
| Hundreds of meters inside a campus or larger rooms | 400G DR class | Extends reach without moving to 10 km-class optics | May require different connector/fiber planning |
| Cross-building or long-distance AI workloads | 400G LR/ER over SMF | Meets long-distance requirements with predictable power budgets | Higher optics cost and a more expensive fiber plant |
| Strict uptime and fast validation deadlines | OEM optics for the first wave | Minimizes compatibility and DOM telemetry surprises | Higher purchase price |
| Budget-constrained rollout with strong lab testing | Third-party optics with verified compatibility | Lower procurement cost if validated with your switch and firmware | Needs disciplined staging and a rollback plan |

Which option should you choose?

If your AI workloads run primarily within a pod using leaf-spine, start with 400G SR and ensure your OM4/OM5 plant and MPO polarity are correct. If you are bridging longer intra-campus distances, move to 400G DR and validate power budget with measured fiber loss before mass deployment. If you must span buildings or long-haul links, choose 400G LR/ER and prioritize SMF quality and end-to-end margin.
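The reach logic in the paragraph above can be captured as a small lookup, restricted to the four optics classes compared in this article. The reach ceilings (about 100 m for SR on OM4/OM5, 500 m for DR, 10 km for LR, 40 km for ER) are typical values and therefore assumptions; the real limit for a given part number and fiber grade comes from its datasheet, and a measured power budget still has the final say.

```python
def pick_400g_optic(distance_m: float, fiber: str) -> str:
    """Return a 400G optics class for a link, using assumed typical reach ceilings."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    if fiber == "MMF":
        if distance_m <= 100:
            return "400G SR"
        raise ValueError("beyond typical 400G SR reach on multimode; plan single-mode fiber")
    if fiber == "SMF":
        for ceiling_m, optic in ((500, "400G DR"), (10_000, "400G LR"), (40_000, "400G ER")):
            if distance_m <= ceiling_m:
                return optic
        raise ValueError("beyond typical 400G ER reach")
    raise ValueError(f"unknown fiber type: {fiber!r}")


print(pick_400g_optic(60, "MMF"))     # → 400G SR
print(pick_400g_optic(350, "SMF"))    # → 400G DR
print(pick_400g_optic(2_000, "SMF"))  # → 400G LR
```

Raising an error instead of silently returning the longest-reach class forces a fiber-plant decision when a link falls outside the multimode ceiling.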

For the first migration wave, my recommendation is a staged approach: use OEM optics for a small pilot to confirm platform behavior, then expand with third-party optics only after compatibility and DOM telemetry are proven. Next step: review “How to plan fiber polarity and MPO cleaning for AI data centers” to reduce the most common outage causes during 400G bring-up.

FAQ

What is the most common reason 400G links fail during AI workloads migration?

The most common causes are connector polarity mistakes (especially with MPO) and fiber dust or damage that creates lane-level margin loss. Validate with continuity checks, then confirm DOM-reported receive power and error counters after reseating.

Should I buy OEM optics or third-party for AI workloads?