In modern data centers and campus networks, optics maintenance cost often spikes when modules fail outside warranty windows or when replacements arrive late. This article helps network managers, procurement leads, and field engineers design warranty and insurance strategies that reduce downtime and make spend predictable. You will get concrete selection criteria, troubleshooting pitfalls, and an ROI view that treats transceivers as managed assets rather than expendable parts.

Top 7 levers that control optics maintenance cost

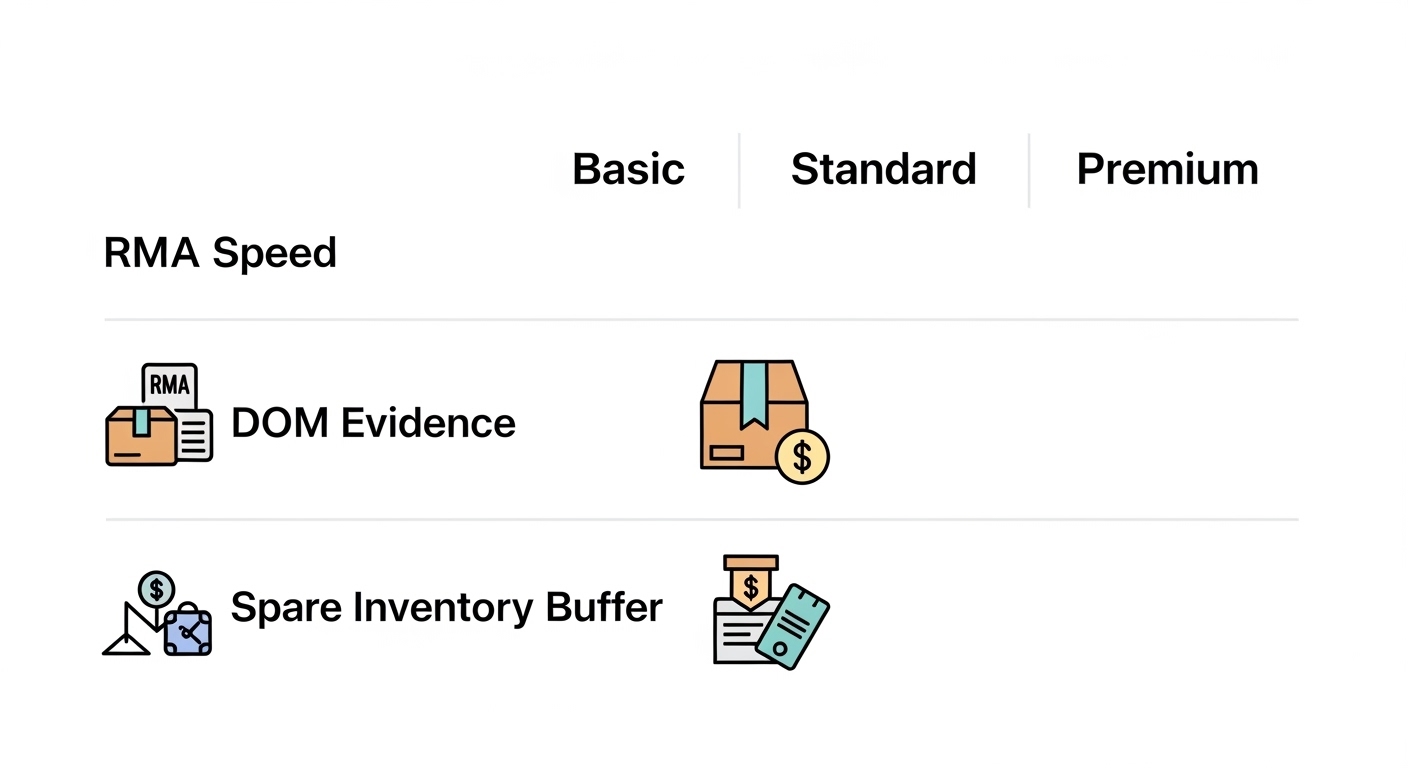

Optics maintenance cost is rarely only the replacement price of an SFP or QSFP module. It is the sum of transport, labor, downtime risk, RMAs, and the operational overhead of chasing compatibility issues. In practice, the biggest controllable levers are warranty coverage terms, proof-of-purchase traceability, spares positioning, and how you document link performance over time. The goal is to convert “surprise failures” into “planned swaps” with measured MTBF and clear escalation paths.

When you implement these levers, you also improve auditability for IT finance: you can tie each failure to a serial number, port, and optical budget history. That matters because some insurers and vendors require evidence such as DOM readings, link partner type, and fault logs. For standards context, optical Ethernet interfaces align to IEEE 802.3 for physical layer behavior; vendors then specify module compliance and recommended operating conditions in datasheets.

Real-world budgeting model (what usually drives the variance)

- Failure rate outside warranty: many organizations see a second wave of failures after 24 to 36 months due to cumulative handling and thermal cycling.

- RMA friction: shipping and downtime can turn a low module price into a high operational cost.

- Inconsistent spares strategy: “one size” spares fail when you mix LR, SR, and ER optics across different distances.

Pro Tip: Track DOM telemetry at steady intervals (for example, once per hour) and export it to a time-series store. Insurers and vendors respond faster when you can show that RX power drift or bias current trends began weeks before the hard failure, rather than only providing a “module dead” timestamp.

Top 1: Warranty terms that actually reduce your optics maintenance cost

Not all warranties protect you equally. Field teams often discover too late that “limited lifetime” may exclude certain conditions, that RMA turnaround is not guaranteed, or that coverage depends on using vendor-approved transceivers. The best approach is to treat warranty review like a procurement risk assessment: read the exclusions, confirm RMA SLAs, and verify whether the vendor requires the original host switch for diagnostics.

What to verify in vendor warranty language

- Coverage duration and start date: confirm whether it starts at ship date or install date.

- RMA process and SLA: ask for a stated turnaround time and shipping method for replacements.

- Compatibility requirements: check whether “tested on our switch lineup” is a strict requirement or a recommendation.

- Serial number traceability: confirm that the vendor can validate coverage by serial number and batch.

- Exclusions: look for damage caused by improper cleaning, ESD, or out-of-spec temperature.

For optics, operating windows are not just contractual; they are physical limits. Datasheets for common modules such as Cisco SFP-10G-SR class optics specify temperature ranges and optical performance expectations (for example, typical SR parameters around 850 nm, with the specified reach depending on OM grade).

Top 2: Insurance policies built around evidence, not guesses

Optical insurance can help when your warranty window ends or when accidental damage occurs during maintenance. However, many policies deny claims if documentation is weak or if the claim narrative conflicts with observed failure modes. The winning strategy is to align your incident response workflow with what insurers consider “substantiated loss.”

Evidence checklist that speeds claims

- DOM snapshot (vendor-agnostic): record TX bias current, laser temperature, and RX power at the time of incident and immediately after replacement.

- Port and link context: capture switch model, port number, optics type (SR/LR/ER), and fiber type (OM3/OM4/OS2).

- Fault logs: include interface flaps, CRC and discard counters, and any “link down” reason codes.

- Cleanliness verification: if using MPO or LC, keep a record of inspection results and cleaning steps.
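
The checklist above can be turned into a record that technicians fill in per incident, plus a check that flags gaps before a claim is filed. The following is a minimal sketch, not a vendor or insurer schema: the class names, field names, and the `missing_evidence` helper are all hypothetical and chosen only to mirror the checklist items.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DomSnapshot:
    """One DOM reading (hypothetical field names)."""
    tx_bias_ma: float
    laser_temp_c: float
    rx_power_dbm: float


@dataclass
class IncidentEvidence:
    """Evidence bundle for one optics incident (illustrative structure)."""
    serial_number: str
    switch_model: str
    port: str
    optics_type: str   # e.g. "SR", "LR", "ER"
    fiber_type: str    # e.g. "OM3", "OM4", "OS2"
    dom_at_incident: Optional[DomSnapshot] = None
    dom_after_replacement: Optional[DomSnapshot] = None
    fault_log_lines: List[str] = field(default_factory=list)
    inspection_notes: str = ""


def missing_evidence(incident: IncidentEvidence) -> List[str]:
    """Return the checklist items that are still empty for this incident."""
    gaps = []
    if incident.dom_at_incident is None:
        gaps.append("DOM snapshot at incident")
    if incident.dom_after_replacement is None:
        gaps.append("DOM snapshot after replacement")
    if not incident.fault_log_lines:
        gaps.append("fault logs")
    if not incident.inspection_notes.strip():
        gaps.append("connector inspection record")
    return gaps
```

An incident would only be closed once `missing_evidence` returns an empty list, which keeps the claim narrative and the recorded data in step.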

From a compliance standpoint, your documentation should reflect the underlying physical layer behaviors defined by IEEE 802.3, even if the insurer does not care about the standard number. What matters is that you can show the link was operating within spec until a measurable anomaly occurred.

Top 3: Spares positioning and burn-rate math for predictable optics maintenance cost

Even with strong warranties and insurance, you still pay for downtime risk if spares are wrong or missing at the moment of failure. Spares planning should be based on burn rate (modules consumed or swapped per month) and lead times. In practice, teams reduce optics maintenance cost by holding the right quantity of the right variants at the right locations.

A practical spares formula engineers use

- Compute monthly failure swaps: failures per month per module type.

- Apply lead time in days: include carrier time and RMA replacement time if relevant.

- Add confidence buffer: a common approach is 1.5x to 2x the expected demand during peak seasonal thermal loads.

- Segment by distance and wavelength: SR at 850 nm, LR at 1310 nm, ER at 1550 nm, plus the matching fiber standards.

Example: if a 10G SR module type shows 0.3 swaps per quarter in a region with 14-day replenishment lead time, you plan for at least one spare pool per site per module family, then scale up where thermal stress is higher. That prevents “rush shipping” and emergency labor, which are usually the hidden cost multipliers.
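
The arithmetic behind that example can be sketched as follows. This is one simple reading of the formula above, assuming a 30-day month and a floor of one spare per pool; the function name `spares_needed` and its parameters are illustrative, not an established method.

```python
import math


def spares_needed(swaps_per_month: float,
                  lead_time_days: float,
                  buffer: float = 2.0,
                  minimum: int = 1) -> int:
    """Spares to hold for one module type at one site.

    Expected demand during the replenishment lead time, scaled by a
    confidence buffer, rounded up, and never below `minimum`.
    Assumes a 30-day month (simplification).
    """
    expected_during_lead_time = swaps_per_month * (lead_time_days / 30.0)
    return max(minimum, math.ceil(expected_during_lead_time * buffer))


# The article's example: 0.3 swaps per quarter is 0.1 per month, 14-day lead time.
sr_spares = spares_needed(swaps_per_month=0.3 / 3, lead_time_days=14)
```

With those inputs the expected demand during the lead time is well below one module, so the floor of one spare per pool is what drives the result; the buffer only starts to matter for module types with higher burn rates or longer lead times.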

Top 4: Choosing OEM vs third-party optics without creating warranty gaps

OEM transceivers can be expensive, but they often come with cleaner compatibility and better documentation for warranty claims. Third-party optics may reduce purchase cost, yet they can increase optics maintenance cost if they trigger intermittent link issues, require manual vendor approvals, or complicate insurance claims. The decision should be based on compatibility evidence, not just unit price.

Common decision approach

- Use OEM for critical links: edge aggregation, core uplinks, and any link that must meet strict uptime targets.

- Use third-party for bulk, non-critical, or well-characterized segments: where you have prior validation results and stable operating conditions.

- Require DOM and compliance documentation: insist on datasheet alignment and ability to read DOM values in your switch.

Many networks rely on digital diagnostic monitoring (DOM) so you can detect drift and avoid sudden failures. That operational benefit is independent of OEM versus third-party, but the quality of calibration and the clarity of documentation can differ. When evaluating specific models, compare wavelength, reach, and optical power budgets from datasheets and match them to your link class.

Spec comparison table for planning (SR and LR examples)

| Module example | Data rate | Wavelength | Typical reach | Connector | DOM support | Operating temp range | Notes for cost planning |
|---|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR | 10G | 850 nm | ~300 m on OM3 (varies by spec) | LC | Yes (DOM) | Commercial/Industrial per datasheet | Often higher OEM unit cost but smoother warranty support |
| Finisar FTLX8571D3BCL | 10G | 850 nm | ~300 m class on OM3 (varies) | LC | Yes (DOM) | Commercial/Industrial per datasheet | Third-party pricing can lower purchase spend if compatibility is proven |
| FS.com SFP-10GSR-85 | 10G | 850 nm | ~300 m class on OM3 (varies) | LC | Yes (DOM) | Commercial/Industrial per datasheet | Useful for bulk spares; validate with switch before scaling |
| Common 10G LR SFP class | 10G | 1310 nm | ~10 km class on OS2 | LC | Yes (DOM) | Per datasheet | LR/ER modules often cost more; spares strategy matters more |

Use this table as a planning template, then confirm the exact revision and temperature class in each vendor datasheet. Optical specifications can vary by revision, and your switch may enforce vendor or hardware compatibility checks. For authoritative baselines, rely on vendor datasheets and the physical layer expectations described in IEEE 802.3.

Top 5: Operational guardrails that prevent avoidable failures

Many optics failures are preventable: contamination, high insertion cycles, improper handling, and exceeding optical budget. These failures inflate optics maintenance cost because they create both parts replacement and labor. The best mitigation is to standardize handling and to instrument links so you can detect early drift.

Guardrails that work in the field

- Fiber cleaning SOP: inspect connectors before insertion; clean with approved methods; document inspection outcomes for insurance.

- DOM-based drift thresholds: set alerts for RX power drop patterns and TX bias current increases rather than waiting for link down.

- Thermal discipline: verify airflow paths; avoid blocking vents; keep switch and patch panel temperatures within the module’s operating specification.

- ESD and seating checks: use anti-static procedures; verify full seating; avoid repeated hot swaps if your host supports only specific insertion practices.

In a data center leaf-spine topology with 48-port 10G ToR switches and aggregated uplinks to a spine, you might have 96 optics per leaf pair depending on oversubscription and redundancy. If you maintain 6 sites, you can quickly reach hundreds of modules, so a single hygiene failure mode like contaminated MPO trunks can cause a cluster event. In that scenario, teams often deploy connector inspection before patch changes, and they rotate spares only after DOM confirms stability.

Top 6: A deployment scenario showing how warranty and insurance combine

Consider a campus network supporting 12 buildings with centralized core and multiple IDF closets, using 10G LR links for backhaul and 10G SR for intra-building aggregation. Each building has two redundant uplinks, and the organization plans to standardize on a small set of optics families: 10G LR for 6 to 8 km OS2 runs and 10G SR for 100 to 300 m OM4 patching. Over a year, the team sees roughly 2 to 4 failures per quarter across all third-party optics, mostly during maintenance windows.

They implement a strategy where OEM optics are used for core uplinks, third-party optics are used for access aggregation after compatibility validation, and insurance covers accidental damage during connector cleaning and re-cabling. They require DOM export for every incident and keep a log tying the module serial number to switch port and time. Result: RMA cycles shorten because the vendor can confirm coverage with serial traceability, while insurance claims succeed because they include connector inspection records and DOM drift evidence. This reduces optics maintenance cost by limiting emergency shipping and by avoiding repeated troubleshooting that would otherwise consume engineer time.

Top 7: Common mistakes and troubleshooting tips that drive unexpected optics maintenance cost

Even well-designed warranty and insurance strategies fail when incidents are mishandled. The most expensive failures are often procedural: the team swaps the wrong module, ignores optical budget mismatch, or closes an incident without capturing evidence needed for claims. Below are common failure modes with root causes and fixes.

“It worked in the lab” compatibility mismatch

Root cause: DOM readings and link negotiation can differ by transceiver firmware revision or by host switch optics compatibility checks. A module that links up initially may still run with marginal optical power or different lane behavior. Solution: validate in a staging switch matching the production model, confirm DOM fields and alarm thresholds, and keep a compatibility matrix per switch platform.
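
A compatibility matrix can be as simple as a lookup keyed by platform, software version, and part number. The sketch below is one hypothetical way to hold it in code; the class names, the key layout, and the approval rule (link stable and DOM readable) are assumptions for illustration, not a standard format.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class ValidationResult:
    """Outcome of one staging test (illustrative fields)."""
    link_stable: bool
    dom_readable: bool
    notes: str = ""


# Key: (switch model, switch software version, transceiver part number)
MatrixKey = Tuple[str, str, str]


class CompatibilityMatrix:
    def __init__(self) -> None:
        self._entries: Dict[MatrixKey, ValidationResult] = {}

    def record(self, switch_model: str, software: str,
               part_number: str, result: ValidationResult) -> None:
        """Store or overwrite the result for one combination."""
        self._entries[(switch_model, software, part_number)] = result

    def is_approved(self, switch_model: str, software: str,
                    part_number: str) -> bool:
        """Approved only if tested, stable, and DOM fields were readable."""
        result = self._entries.get((switch_model, software, part_number))
        return (result is not None
                and result.link_stable
                and result.dom_readable)
```

Keying on the software version means an upgrade produces "not approved" by default until the combination is re-validated, which matches the idea of re-testing after platform changes.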

Ignoring connector cleanliness before blaming the optics

Root cause: fiber end-face contamination causes high insertion loss and intermittent link flaps. The optics may show RX power drops and increased error counters, but teams often replace modules repeatedly. Solution: adopt a strict inspect-clean-retry workflow; use a microscope for LC and MPO; require inspection evidence for insurance claims.

No DOM telemetry baseline, so drift goes unnoticed

Root cause: without historical DOM data, you cannot show whether the failure started as a gradual degradation. That increases troubleshooting time and can weaken warranty or insurance narratives. Solution: establish a baseline period, then alert on trends such as rising TX bias current or falling RX power over a defined window.
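
One way to alert on a trend rather than a single reading is to fit a straight line through recent samples and compare its slope with a threshold. The sketch below uses an ordinary least-squares slope over `(hours, value)` pairs; the function names and the 0.1 dB per day threshold are placeholders for illustration, and a real threshold should come from your own baseline period.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (hours since start of window, reading)


def slope_per_hour(samples: List[Sample]) -> float:
    """Least-squares slope of reading versus time, in units per hour."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    numerator = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    denominator = sum((t - mean_t) ** 2 for t, _ in samples)
    if denominator == 0:
        raise ValueError("samples must span more than one timestamp")
    return numerator / denominator


def rx_power_drifting(samples: List[Sample],
                      max_drop_db_per_day: float = 0.1) -> bool:
    """True if RX power (dBm) is falling at or beyond the allowed rate.

    The default threshold is a placeholder, not a vendor figure.
    """
    return slope_per_hour(samples) * 24.0 <= -max_drop_db_per_day
```

The same `slope_per_hour` helper can be reused for TX bias current by checking for a positive slope instead of a negative one.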

Optical budget mismatch during patch changes

Root cause: replacing a patch panel, changing fiber grade, or adding extra splices can shift the link beyond the module’s power budget. Symptoms appear as CRC errors, high BER, or link instability. Solution: document the optical budget: fiber type, connector counts, and estimated insertion loss; re-validate after any cabling change.
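
The budget check itself is simple arithmetic: estimated link loss is fiber attenuation plus connector and splice losses, and the margin is the module's power budget minus that loss. The sketch below assumes this basic model only; the default per-connector and per-splice losses and the example TX/RX figures are placeholders, and real values should come from the module datasheet and your cabling records.

```python
def link_loss_db(length_km: float,
                 fiber_db_per_km: float,
                 connectors: int,
                 splices: int,
                 connector_loss_db: float = 0.5,   # placeholder per mated pair
                 splice_loss_db: float = 0.1) -> float:  # placeholder per splice
    """Estimated end-to-end insertion loss for one fiber path."""
    return (length_km * fiber_db_per_km
            + connectors * connector_loss_db
            + splices * splice_loss_db)


def margin_db(tx_min_dbm: float,
              rx_sensitivity_dbm: float,
              loss_db: float) -> float:
    """Power budget (minimum TX minus RX sensitivity) minus estimated loss."""
    return (tx_min_dbm - rx_sensitivity_dbm) - loss_db


# Illustrative numbers only: an 8 km single-mode run with 4 connectors and 2 splices.
loss = link_loss_db(length_km=8.0, fiber_db_per_km=0.4, connectors=4, splices=2)
margin = margin_db(tx_min_dbm=-8.2, rx_sensitivity_dbm=-14.4, loss_db=loss)
```

Re-running this after a cabling change shows how much margin an extra connector pair or splice consumes; a margin near zero or negative is a reason to re-validate the link before blaming the module.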

Summary ranking table: which tactic lowers optics maintenance cost fastest

| Rank | Strategy | Primary impact | Best for | Implementation effort | Risk if skipped |
|---|---|---|---|---|---|
| 1 | Warranty review with RMA SLAs and serial traceability | Reduces downtime and replacement friction | Core and uplink links | Low to medium | Long returns, denied coverage |
| 2 | Insurance evidence workflow (DOM, logs, inspection) | Improves claim success rate | Accidental damage scenarios | Medium | Denied claims and unrecovered losses |
| 3 | Spares positioning using burn-rate and lead time | Prevents emergency shipping and labor spikes | Multi-site operations | Medium | Service impact during failures |
| 4 | Operational guardrails (cleaning SOP and DOM alerts) | Prevents avoidable failures | High-maintenance environments | Medium | Repeat failures and higher attrition |
| 5 | OEM vs third-party policy with compatibility matrix | Controls cost without increasing incident rate | Networks with mixed link criticality | Medium to high | Intermittent link instability |
| 6 | Documented optical budget and change control | Reduces “patch-change” regressions | Cabling-heavy teams | Medium | Unexpected link drops |
| 7 | Incident response that captures evidence fast | Shortens troubleshooting cycles | Teams with limited field time | Low | Long investigations and higher labor |

Cost and ROI note for optics maintenance cost planning

In many organizations, a single optics incident can cost far more than the module itself when you include engineer time, truck rolls, and expedited shipping. Typical module unit prices vary widely by class and brand, but you should plan TCO using a blended model: purchase price plus expected labor per incident plus downtime risk. OEM optics may cost 1.5x to 3x more per unit than third-party in some procurement cycles, yet they can reduce incident rate and speed warranty resolution. Third-party modules can still be cost-effective if you validate compatibility and maintain a strict evidence workflow for warranty and insurance.

For ROI, compare two scenarios: (1) higher purchase price with fewer incidents and smoother RMA, versus (2) lower unit price with more troubleshooting and potential claim friction. Many teams find the best ROI comes from a hybrid policy: OEM for critical links, third-party for bulk access, and consistent cleaning plus DOM monitoring to reduce avoidable failures.
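
The two scenarios can be compared with a small blended-cost function. This is a sketch under simplifying assumptions: every incident costs the same, and warranty or insurance recovers a fixed fraction of incident cost. The function name `annual_tco`, its parameters, and every number in the example are hypothetical inputs, not benchmarks.

```python
def annual_tco(units: int,
               unit_price: float,
               incidents_per_year: float,
               labor_hours_per_incident: float,
               labor_rate: float,
               shipping_per_incident: float,
               downtime_cost_per_incident: float,
               recovery_rate: float = 0.0) -> float:
    """Purchase spend plus unrecovered incident cost for one year.

    recovery_rate is the assumed fraction of incident cost recovered
    through warranty or insurance (0.0 to 1.0).
    """
    purchase = units * unit_price
    per_incident = (labor_hours_per_incident * labor_rate
                    + shipping_per_incident
                    + downtime_cost_per_incident)
    incident_cost = incidents_per_year * per_incident
    return purchase + incident_cost * (1.0 - recovery_rate)


# Hypothetical inputs for illustration only.
oem = annual_tco(100, 300.0, 2, 3.0, 100.0, 50.0, 500.0, recovery_rate=0.5)
third_party = annual_tco(100, 120.0, 8, 3.0, 100.0, 50.0, 500.0, recovery_rate=0.25)
```

Which scenario wins depends entirely on the inputs: with a low downtime cost the cheaper unit price dominates, while raising `downtime_cost_per_incident` for critical links quickly shifts the balance toward the option with fewer incidents. Running the function per criticality tier is one way to arrive at a hybrid policy.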

FAQ

How do I estimate optics maintenance cost for a multi-site network?

Start with your module inventory by type (SR, LR, ER), then estimate expected swaps per month using the last 12 to 24 months of incidents. Multiply by average labor hours, shipping costs, and a downtime factor for each criticality tier. Add a warranty and insurance recovery rate assumption based on your claim outcomes.

Does DOM telemetry improve warranty or insurance outcomes?

Yes, when it helps prove that the module degraded before total failure. Capture RX power, TX bias current, and temperature trends around the incident, then link the data to port and serial number. Many vendors and insurers can process claims faster with objective telemetry.

Should we buy OEM optics to reduce maintenance cost?

Not always. OEM optics often reduce compatibility friction and simplify warranty validation for critical links, but third-party optics can be cost-effective if you validate with your switch platform and document compatibility. Use OEM selectively where downtime risk is highest.

What is the most common reason claims for optics fail?

Missing evidence or an incident narrative that does not match observed failure modes. For example, claims can be denied when there is no connector inspection record after cleaning-related failures, or when the serial number trace is not provided. Maintain a repeatable incident capture workflow.

How can we reduce failures caused by cleaning and handling?

Implement a strict inspect-clean-retry SOP with connector microscopes for LC and MPO, and train technicians on anti-static handling and proper seating. Pair the SOP with DOM alerts so you can detect drift before a link becomes unstable.

What should be in a compatibility matrix for transceivers?

Include switch model, port type, transceiver part number, firmware or revision if applicable, and validation results such as link stability and DOM alarm behavior. Update it when you change patch panels, fiber grades, or switch software versions.

If you want to reduce optics maintenance cost sustainably, treat warranty, insurance, spares, and operational hygiene as one system, not separate purchases. Next, review your transceiver lifecycle management controls and align them with your incident evidence workflow.

Author bio: I have deployed and operated optical transceiver fleets in multi-site data centers, where I built warranty/RMA workflows and DOM telemetry baselines to cut repeat incidents. I also advise procurement and field teams on compatibility validation, spares math, and measurable cost reduction tied to real outage and claim outcomes.