AI training and inference clusters stress network bandwidth and reliability in ways traditional enterprise links rarely face. This article helps network architects, field engineers, and procurement teams choose optical modules for leaf-spine and high-radix fabrics, focusing on 400G/800G options, fiber plant realities, and the operational details that prevent silent throughput loss. You will see practical compatibility checks, measured deployment considerations, and a troubleshooting playbook grounded in common failure modes.

AI/ML network requirements that drive optical module selection

In AI/ML infrastructure, the network is not just a transport layer; it is part of the training system’s performance envelope. Many environments run all-reduce and gradient synchronization patterns where microbursts and congestion translate directly into iteration-time variance. That pushes engineers toward deterministic link behavior, tight optics power budgets, and consistent signal integrity across every hop. For optical modules, the key is aligning data rate, reach, lane encoding, and transceiver diagnostics with the switch ASIC and the installed fiber topology.

Where bandwidth and signal integrity collide

Modern switches often support 400G using QSFP-DD or OSFP form factors and 800G using OSFP or QSFP-DD800 variants, with internal lane aggregation and distinct electrical interfaces. If the optics do not match the optical interface the switch supports (for example, the vendor-specified connector type, modulation, and FEC mode), the link may come up but accumulate errors under load. In field verification, engineers typically validate link error counters, FEC status, and CRC behavior after traffic ramps. A module that passes low-rate tests can still degrade at full rate when dispersion or connector contamination erodes the remaining margin.

Latency sensitivity and oversubscription realities

Even when nominal latency targets are met, AI workloads can be sensitive to tail latency caused by queue buildup during synchronization phases. Optical modules influence this indirectly through link stability and power draw: unstable optics trigger link renegotiation events, which can cause congestion cascades. Additionally, higher-power optics can increase thermal load, which affects DOM readings and can accelerate aging in high-density racks. Your selection process should therefore include thermal and diagnostics validation, not only the advertised reach.

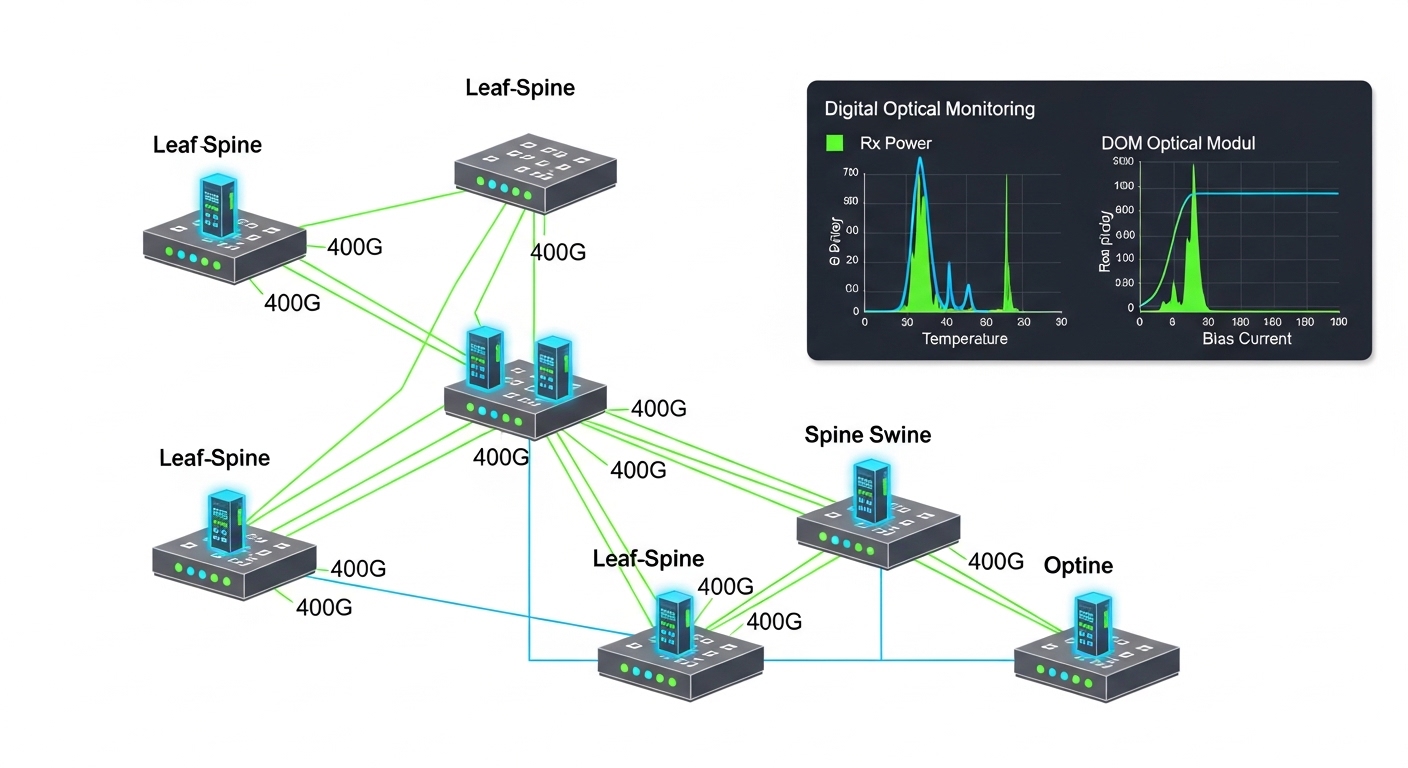

Pro Tip: In production AI racks, treat DOM alarms as an early warning system, not as “nice-to-have.” If you graph Rx power, bias current, and temperature across a 30-day burn-in window, you can catch marginal fiber cleaning or connector polish issues long before they become hard link failures.
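
As a rough illustration of the trend analysis in this tip, the sketch below fits a least-squares line to Rx power samples and flags a link whose received power is falling steadily. The function names and the 0.02 dB/day threshold are hypothetical, chosen only for the example; pick a threshold from your own baseline data.

```python
from datetime import datetime, timedelta


def rx_power_slope_db_per_day(samples):
    """Least-squares slope of Rx power (dBm) versus time, in dB per day.

    samples: list of (datetime, rx_power_dbm) tuples.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    t0 = samples[0][0]
    # Express time as days since the first sample.
    xs = [(ts - t0).total_seconds() / 86400.0 for ts, _ in samples]
    ys = [power for _, power in samples]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("samples must span more than one timestamp")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def is_drifting(samples, max_loss_db_per_day=0.02):
    """True if Rx power falls faster than the (hypothetical) threshold."""
    return rx_power_slope_db_per_day(samples) < -max_loss_db_per_day


# Synthetic 30-day window: Rx power starts at -2.0 dBm and loses 0.05 dB per day.
start = datetime(2024, 1, 1)
degrading = [(start + timedelta(days=d), -2.0 - 0.05 * d) for d in range(30)]
stable = [(start + timedelta(days=d), -2.0) for d in range(30)]
print(is_drifting(degrading), is_drifting(stable))
```

A slope check like this complements fixed alarm thresholds: a link can stay inside its alarm window for weeks while still trending toward failure.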

Standards, interfaces, and the spec fields that matter in production

Optical modules are governed by industry standards such as IEEE 802.3 for Ethernet physical layers and by transceiver interface specifications from major vendors and consortia. Your practical goal is to map the switch’s required optical interface to a module that matches wavelength, reach class, coding/FEC expectations, and connector type. Field engineers often lose days when a module is “compatible” at the vendor catalog level but not compatible with the specific switch line card revision or optics profile.

Key specification fields to verify before purchase

When evaluating optical modules for AI/ML links, focus on these fields and compare them across candidate parts: wavelength band (typically around 850 nm over multimode fiber for SR, and in the 1310 nm region over single-mode fiber for DR, FR, and LR, which differ mainly in reach class: roughly 500 m, 2 km, and 10 km respectively), reach in meters with a defined link budget, data rate and form factor, connector (LC vs MPO/MTP), and operating temperature range for the rack environment. Also verify DOM support (Digital Optical Monitoring), including whether the switch expects I2C-accessible diagnostics.

Comparison table: common AI data-center optics choices

The table below summarizes typical options you will see in AI fabrics. Actual supported ranges depend on switch implementation, fiber type, and measured link budget.

| Module type (common use) | Form factor | Wavelength | Typical reach | Connector | Power class (typ.) | Operating temp | DOM |
|---|---|---|---|---|---|---|---|
| 400G SR4 (leaf-spine within datacenter) | QSFP-DD | ~850 nm (MM) | ~100 m class on OM4/OM5; verify against datasheet | MPO/MTP | ~6-12 W | 0 to 70 °C (typ.); verify | Yes |
| 400G FR4 (campus or inter-building) | QSFP-DD | ~1310 nm band (SM) | ~2 km class on SMF | Duplex LC | ~5-10 W | -5 to 70 °C (typ.); verify | Yes |
| 800G SR8 (high-radix AI backbone within datacenter) | OSFP | ~850 nm (MM) | ~100 m class (depends on fiber) | MPO/MTP (8-lane) | ~12-20 W | 0 to 70 °C (typ.); verify | Yes |
| 800G LR8 (longer AI backbone segments) | OSFP | ~1310 nm band (SM) | ~10 km class (depends on budget) | LC (or MPO variants) | ~10-18 W | -5 to 70 °C (typ.); verify | Yes |

For standards context, see the IEEE 802.3 Ethernet physical layer definitions and the relevant optical module interface standards. In practice, you will also rely on vendor transceiver compatibility lists and datasheets. [Source: IEEE 802.3] and [Source: Cisco SFP/SFP+ and QSFP interoperability documentation] and [Source: Finisar transceiver datasheets]

Choosing optical modules for AI clusters: a field-ready checklist

Selecting optical modules for AI/ML infrastructure is less about “maximum reach” and more about matching the entire link chain: switch optics profile, transceiver electrical characteristics, fiber type, and connector cleanliness. A disciplined checklist reduces the chance of intermittent errors that only appear during sustained training runs. Start with the switch’s published compatibility list, then validate fiber plant assumptions using measured parameters, not estimates.

- Distance and reach class: Confirm the actual installed distance, including patch panel slack. Use link budget assumptions consistent with your fiber type (OM4 vs OM5 for multimode, and the fiber grade such as OS2 for single-mode).

- Switch compatibility: Verify the module form factor (QSFP-DD vs OSFP), port electrical interface, and supported wavelength band. Cross-check vendor compatibility matrices for your exact switch model and line card revision.

- Fiber plant and connector type: Ensure MPO/MTP polarity rules (for multi-fiber trunks) are followed. Confirm LC vs MPO usage and that mating connectors match in type, end-face polish (UPC vs APC), and, for MPO, pinned vs unpinned gender.

- DOM and telemetry requirements: Confirm the switch reads DOM over the expected interface and that alarms map correctly to your monitoring stack. If you use automation, validate I2C access and alarm thresholds.

- Operating temperature and thermal headroom: In dense AI racks, verify module temperature ratings and airflow patterns. If your row has hot-aisle recirculation, plan for derating and additional fans.

- Vendor lock-in risk and procurement strategy: OEM modules can be simplest for compatibility, but third-party modules may reduce cost. Mitigate risk using a pilot group, burn-in testing, and staged rollout.

- FEC and error handling expectations: Ensure both ends support the same FEC mode and have consistent configuration for your switch platform. Validate with BER/CRC counters during traffic ramps.
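
The checklist above can be partly automated before purchase. The following is a minimal sketch, assuming you transcribe the switch vendor's compatibility data and the module datasheet into simple records. The class names, field names, part number, and example values are all hypothetical, not taken from any vendor tool or datasheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortProfile:
    """What a switch port accepts (hypothetical fields, filled from vendor docs)."""
    form_factor: str
    optic_types: frozenset  # optic types on the compatibility list for this line card
    fec_mode: str
    max_module_power_w: float


@dataclass(frozen=True)
class ModuleSpec:
    """What a candidate module provides (hypothetical fields, filled from its datasheet)."""
    part_number: str
    form_factor: str
    optic_type: str
    fec_mode: str
    max_power_w: float
    reach_m: float
    has_dom: bool


def checklist_failures(port, module, link_length_m):
    """Return the checklist items the module fails; an empty list means no blocker found."""
    failures = []
    if module.form_factor != port.form_factor:
        failures.append("form factor")
    if module.optic_type not in port.optic_types:
        failures.append("optic type not on compatibility list")
    if module.fec_mode != port.fec_mode:
        failures.append("FEC mode")
    if module.max_power_w > port.max_module_power_w:
        failures.append("power class")
    if link_length_m > module.reach_m:
        failures.append("reach")
    if not module.has_dom:
        failures.append("DOM")
    return failures


# Illustrative values only.
port = PortProfile("QSFP-DD", frozenset({"400G-SR4", "400G-FR4"}), "RS544", 12.0)
good = ModuleSpec("EXAMPLE-SR4", "QSFP-DD", "400G-SR4", "RS544", 10.0, 100.0, True)
wrong_cage = ModuleSpec("EXAMPLE-SR8", "OSFP", "800G-SR8", "RS544", 16.0, 100.0, True)
print(checklist_failures(port, good, 96.0))
print(checklist_failures(port, wrong_cage, 96.0))
```

A check like this only screens out obvious mismatches. Thermal behavior, fiber hygiene, and firmware-specific handling still need the pilot and burn-in steps.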

How engineers run a fast compatibility pilot

A practical deployment pattern is to install a small number of candidate optical modules in a representative rack, then run a controlled traffic test that mimics training bursts. For example, you can use a traffic generator to create 50 to 70 percent line-rate bursts for 30 to 60 minutes while monitoring Rx power, temperature, and error counters. If you see rising corrected errors or CRC spikes, stop and re-check fiber cleaning and polarity before scaling the rollout. This approach prevents a “works in the lab” surprise during week-long training campaigns.
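
One way to turn the pilot's "rising corrected errors or CRC spikes" criterion into a repeatable check is sketched below. It assumes you already collect cumulative counters at equal intervals during the traffic test. The dictionary keys and the growth factor of 2.0 are hypothetical and would need to be mapped to whatever your switch platform exposes.

```python
def counter_deltas(values):
    """Per-interval increases of a cumulative counter."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


def evaluate_pilot(samples, growth_factor=2.0):
    """Return a list of findings for one link; an empty list means the run looked clean.

    samples: list of dicts with cumulative 'fec_corrected', 'fec_uncorrected'
    and 'crc_errors' counters, taken at equal intervals during the test.
    """
    if len(samples) < 3:
        raise ValueError("need at least three samples")
    findings = []
    if samples[-1]["fec_uncorrected"] > samples[0]["fec_uncorrected"]:
        findings.append("uncorrectable FEC codewords increased")
    if samples[-1]["crc_errors"] > samples[0]["crc_errors"]:
        findings.append("CRC errors increased")
    # Compare the average corrected-error rate in the second half of the run
    # with the first half to detect a rising trend.
    deltas = counter_deltas([s["fec_corrected"] for s in samples])
    half = len(deltas) // 2
    early = sum(deltas[:half]) / half
    late = sum(deltas[half:]) / (len(deltas) - half)
    # max(early, 1.0) avoids flagging a handful of errors on a near-zero baseline.
    if late > growth_factor * max(early, 1.0):
        findings.append("corrected FEC errors are rising")
    return findings


def make_samples(corrected, crc):
    """Build sample dicts for the demo (uncorrectable counter held at zero)."""
    return [{"fec_corrected": c, "fec_uncorrected": 0, "crc_errors": e}
            for c, e in zip(corrected, crc)]


clean_run = make_samples([0, 100, 200, 300, 400], [0, 0, 0, 0, 0])
bad_run = make_samples([0, 100, 200, 700, 1500], [0, 0, 0, 0, 3])
print(evaluate_pilot(clean_run))
print(evaluate_pilot(bad_run))
```

A steady corrected-error rate is normal on FEC-protected links, so the sketch flags growth rather than any nonzero count.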

Deployment scenario: 400G leaf-spine fabric with AI traffic bursts

Consider a two-tier data center leaf-spine topology with 48-port 400G ToR switches as leaves and 96-port 400G spine switches. Suppose the leaves connect to the spines with 16 links per leaf, each using 400G SR4 QSFP-DD over OM5 multimode fiber with MPO/MTP trunks. Patch panels add approximately 6 m of measured additional path per link, and the median physical distance is 65 m, with a worst-case near 90 m. During initial training runs, traffic patterns show short all-reduce bursts that can push links above 60 percent average utilization with microbursts near line rate.
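
To see how much length margin this scenario leaves, the short calculation below adds the patch panel path to the quoted distances and compares the total with a rated reach. The 100 m figure is an assumption for illustration, as is the reading that the quoted distances exclude the patch path. Use the reach from the specific module datasheet for your fiber type.

```python
def reach_margin_m(physical_distance_m, patch_path_m, rated_reach_m):
    """Remaining length margin after adding patch panel path to the run."""
    return rated_reach_m - (physical_distance_m + patch_path_m)


RATED_REACH_M = 100.0  # assumption: take the real value from the module datasheet
PATCH_PATH_M = 6.0     # measured additional path per link, from the scenario

for label, distance_m in (("median", 65.0), ("worst case", 90.0)):
    margin = reach_margin_m(distance_m, PATCH_PATH_M, RATED_REACH_M)
    print(f"{label}: total {distance_m + PATCH_PATH_M:.0f} m, margin {margin:.0f} m")
```

Under these assumptions the worst-case link has only a few meters of margin, which is why the longest runs are good candidates for the pilot group.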

In this environment, engineers validate optical module performance by checking DOM telemetry every 5 minutes during the first 48 hours, then hourly thereafter. They also enforce MPO polarity rules using a verified polarity map and standardized cleaning procedures (inspection scope plus lint-free wipes). If a link shows a sudden Rx power drop below the vendor-recommended threshold, the field team inspects the connector end face for contamination or damage and re-cleans before replacing hardware. This scenario illustrates why optical modules must be selected with both electrical and operational assumptions in mind, including monitoring integration and fiber hygiene.

Common mistakes and troubleshooting steps for optical module failures

Most optical module incidents in AI deployments are not “bad parts” but integration errors: connector contamination, polarity reversal, mis-matched optics profiles, or thermal stress. Below are concrete failure modes field teams encounter, with root causes and solutions.

Link comes up but errors spike under sustained traffic

Root cause: Marginal fiber alignment or connector contamination increases attenuation and produces elevated error rates that only appear under sustained traffic, once the remaining link margin is exhausted. Sometimes the issue is a partially seated MPO/MTP connector that passes initial link bring-up but degrades later.

Solution: Inspect the end faces with a microscope, clean with validated procedures, and re-seat the connector while watching DOM Rx power. Then run a controlled traffic ramp and confirm FEC status and CRC counters stabilize. If persistent, verify polarity and check patch cord batch compatibility.

Intermittent link flaps during heat soak

Root cause: Thermal headroom is insufficient due to blocked airflow, high ambient temperatures, or modules operating near the upper temperature boundary. Some third-party modules also have different thermal behavior under identical airflow.

Solution: Measure inlet and exhaust temperatures at the rack level and compare against the module’s operating range. Improve airflow with baffles, verify fan tray health, and consider moving to a module variant with a higher thermal margin. Validate by re-running traffic after a controlled heat soak.

“Incompatible optics” or silent fallback after upgrade

Root cause: Switch firmware or line card revision changes optics handling, including DOM thresholds, FEC mode selection, or supported optic profiles. A module that worked on an earlier firmware version may fail after an upgrade.

Solution: After any firmware change, conduct a link validation pass across a representative port set. Confirm the exact switch OS build and optics profile mapping. Keep a rollback plan and maintain a compatibility-tested module inventory per switch revision.

Higher-than-expected attenuation due to fiber plant assumptions

Root cause: The installed fiber may have higher loss than assumed (dirty patch panels, aged connectors, or a mix of OM4 and OM5 in the same trunk). Engineers often rely on design documents rather than field measurements.

Solution: Use certified insertion-loss test reports (and OTDR traces where you need to locate a fault) covering both ends, including patch panels. Re-terminate or replace suspect patch cords, and ensure MPO trunks are correctly assembled and labeled. Recalculate link budgets using measured insertion loss and worst-case margins.
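
The recalculation can be as simple as the sketch below, which sums fiber attenuation and measured connector losses and compares the result with the channel insertion-loss budget. All numeric values, including the 1.9 dB budget and the 0.3 dB reserve for aging and cleanliness drift, are placeholders for illustration. Take the real budget from the module datasheet or the relevant IEEE 802.3 clause.

```python
def channel_loss_db(length_m, fiber_db_per_km, connector_losses_db):
    """Total channel insertion loss: fiber attenuation plus each mated connector pair."""
    return length_m / 1000.0 * fiber_db_per_km + sum(connector_losses_db)


def loss_margin_db(measured_loss_db, budget_db, reserve_db=0.0):
    """Margin left after subtracting measured loss and a reserve from the budget."""
    return budget_db - measured_loss_db - reserve_db


# Placeholder inputs: 96 m of multimode fiber at 3.0 dB/km and three measured
# mated connector pairs along the channel.
loss = channel_loss_db(96.0, 3.0, [0.35, 0.35, 0.5])
margin = loss_margin_db(loss, budget_db=1.9, reserve_db=0.3)
print(f"channel loss {loss:.3f} dB, margin after reserve {margin:.3f} dB")
```

On short multimode links the connectors usually dominate the total, so measured per-connector values change the outcome far more than small errors in length.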

Cost and ROI: balancing OEM optics, third-party modules, and uptime risk

Optical modules pricing varies widely by data rate, reach, and vendor ecosystem. In typical market conditions, OEM QSFP-DD and OSFP transceivers can be priced at a premium, while third-party modules may reduce upfront cost but can increase operational risk if compatibility or thermal behavior differs. For AI deployments with large port counts, the ROI calculation should include not only purchase price but also labor time for troubleshooting, downtime risk, and the cost of failed training runs.

As a realistic planning approach, teams often run a pilot to compare three cost tiers: OEM modules, authorized third-party modules, and (where allowed) generic optics. Then they estimate TCO per module using a simple model: unit price + (expected replacement labor hours × labor rate) + (expected incident probability × cost per incident), evaluated over the first 12 to 24 months. In many operations, the biggest cost driver is not the module itself but the time spent diagnosing intermittent errors caused by connector and polarity issues. That is why standardizing hygiene procedures and telemetry monitoring can deliver more ROI than chasing the lowest module price.
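
For the terms of such a model to add up, labor hours and incident probability each need to be converted to currency. The sketch below does that with a labor rate and a cost per incident. The function name and every number in the example are hypothetical, so substitute figures from your own pilot.

```python
def expected_tco_per_module(unit_price, labor_hours, labor_rate,
                            incident_probability, incident_cost):
    """Expected cost of one module over the planning window.

    incident_probability is the chance of at least one incident in the window;
    incident_cost is the average cost of such an incident (downtime, lost runs).
    """
    if not 0.0 <= incident_probability <= 1.0:
        raise ValueError("incident_probability must be between 0 and 1")
    return unit_price + labor_hours * labor_rate + incident_probability * incident_cost


# Hypothetical comparison of two tiers over the same window.
oem = expected_tco_per_module(1000.0, 0.5, 100.0, 0.02, 5000.0)
third_party = expected_tco_per_module(600.0, 1.0, 100.0, 0.08, 5000.0)
print(f"OEM: {oem:.2f}  third-party: {third_party:.2f}")
```

With these made-up inputs the cheaper module also wins on expected cost, but the result flips quickly as the incident cost grows, which is the point of modeling it explicitly.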

For reference on physical-layer expectations and link behavior, consult IEEE 802.3 and vendor datasheets for the specific optics family you deploy. [Source: IEEE 802.3] and [Source: Vendor transceiver datasheets for QSFP-DD and OSFP families] and [Source: ANSI/TIA-568 connectivity standards]

FAQ: optical modules for AI and high-speed Ethernet ports

What optical module types are most common for AI data centers?

For internal datacenter distances, 400G SR4 QSFP-DD and 800G SR8 OSFP over multimode fiber are common. For longer segments over single-mode fiber, you may use 400G FR4 (2 km class) or 800G LR8 (10 km class). Always confirm the switch’s supported optics profile and connector type.

How do I verify DOM support works with my monitoring stack?

Confirm that the switch reads DOM via the expected interface and exposes the telemetry fields you need (Rx power, bias current, temperature). In a pilot, poll those values while generating traffic and verify alarm thresholds trigger as expected. If you use external monitoring, validate that your polling cadence does not overload switch management resources.
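
When validating alarm behavior in a pilot, it can help to re-classify polled values yourself and compare the result with what the switch reports. The sketch below assumes the common four-threshold layout (low alarm, low warning, high warning, high alarm). The threshold values shown are hypothetical; in practice you would read them from the module or its datasheet.

```python
def classify_reading(value, low_alarm, low_warn, high_warn, high_alarm):
    """Classify one DOM reading against alarm and warning thresholds."""
    if not low_alarm <= low_warn <= high_warn <= high_alarm:
        raise ValueError("thresholds must be ordered from low alarm to high alarm")
    if value <= low_alarm:
        return "low alarm"
    if value >= high_alarm:
        return "high alarm"
    if value <= low_warn:
        return "low warning"
    if value >= high_warn:
        return "high warning"
    return "ok"


# Hypothetical Rx power thresholds in dBm.
RX_THRESHOLDS = {"low_alarm": -10.0, "low_warn": -8.0,
                 "high_warn": 2.0, "high_alarm": 4.0}

for rx_dbm in (-3.0, -9.0, -11.0):
    print(rx_dbm, classify_reading(rx_dbm, **RX_THRESHOLDS))
```

If your own classification disagrees with the alarm state the switch exposes, that usually points to a threshold override or a DOM interpretation difference worth resolving before rollout.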

Are third-party optical modules safe for AI training racks?

They can be, but only after compatibility testing with your exact switch model, firmware version, and fiber plant. Many failures come from subtle differences in optics profiles, thermal behavior, or DOM interpretation. Use staged rollout, burn-in traffic tests, and keep a rollback path.

What is the fastest way to troubleshoot high error rates on an AI link?

Start with DOM telemetry trends and connector hygiene. Inspect and clean both ends, confirm MPO polarity and seating, then rerun a traffic ramp while watching FEC and CRC counters. If errors persist, verify fiber attenuation with certified test results.

How much reach margin should I plan for multimode links?

Plan for worst-case insertion loss and patch panel variability, not just the design distance. In practice, teams maintain conservative margins for transceiver aging and connector cleanliness drift over time. The exact margin depends on whether you use OM4 or OM5 and the specific module’s reach specification.

Do optical modules require burn-in before full production?

Many operators do a short burn-in and telemetry validation window, especially for high-density AI racks. A practical approach is 24 to 72 hours with representative traffic patterns and monitoring of Rx power and temperature stability. This catches marginal fibers and thermal issues early.

Choosing optical modules for AI/ML infrastructure demands more than matching reach; it requires alignment with switch optics profiles, fiber plant measurements, and operational monitoring. If you want to deepen your approach, follow up with optical fiber cleaning and polarity best practices to reduce the most common field failures.

Expert bio: I work with network operations teams to validate high-speed optics in production racks, combining DOM telemetry analysis with fiber plant certification results. I also support field rollouts where compatibility matrices, thermal constraints, and connector hygiene determine whether AI training runs complete on schedule.