A field team can have the right CPUs, GPUs, and streaming software, yet still miss latency targets because the network optics are mismatched. This article walks through a real edge deployment where we tuned transceiver selection to protect fast data processing workloads under tight power, temperature, and link-budget constraints. You will get the specs that mattered, the decision checklist we used, and the troubleshooting patterns we saw in production.

Problem / Challenge: edge streaming met fiber reality

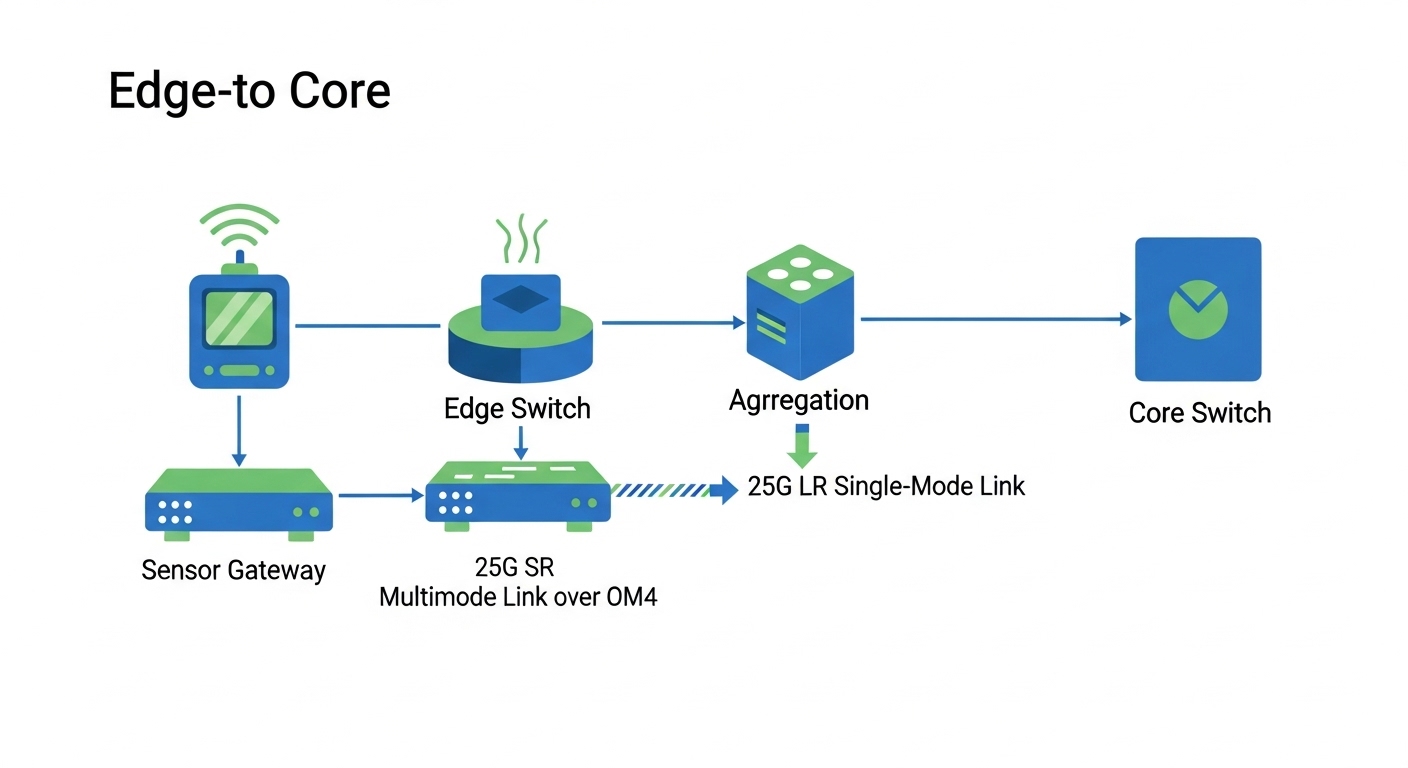

Our customer deployed an edge platform for video analytics and sensor fusion in an industrial campus with multiple micro-sites. Each site ingested feeds at up to 4 x 25G per rack, then forwarded aggregated events to a central cluster over a leaf-spine fabric. The application target was end-to-end event time under 20 ms, with packet loss kept near zero during link renegotiations and cold-starts.

The failure mode was not CPU saturation; it was network instability during swaps and temperature swings. Two issues recurred: (1) optics that passed basic link bring-up but intermittently errored under full traffic, and (2) transceivers that were electrically compatible but mechanically or firmware-incompatible with specific switch ports. In short, the team needed transceivers that would remain deterministic for fast data processing across distance and environmental stress.

Environment specs: distances, temperature, and the optical budget

We designed around IEEE 802.3 Ethernet PHY requirements for 25G and 10G optics, using SFP28 (25G), SFP+ (10G), or QSFP28 form factors depending on the switch model. The micro-sites used multimode fiber for shorter runs and single-mode fiber for longer backhaul. The edge aggregation switches sat in a ventilated but dusty cabinet with wide ambient temperature swings.

Measured conditions at commissioning: ambient temperature ranged from -5 C to 55 C, and cabinet airflow was inconsistent during night shifts. Fiber lengths were confirmed by OTDR sweeps: 120 m multimode for some ToR-to-aggregation links, 2.6 km single-mode for aggregation-to-core, and 900 m single-mode for one industrial corridor. Link budget margin had to account for connector losses, splice losses, and worst-case aging.
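
The margin arithmetic behind that budget can be sketched as follows. This is a simplified model under stated assumptions. Every numeric input in the example (TX power, RX sensitivity, 0.4 dB/km attenuation, 0.5 dB per connector, 0.1 dB per splice, 1 dB aging allowance) is a hypothetical placeholder, not a value from this deployment. The model also ignores dispersion and transmitter penalties that real 25G PMD specifications include. Substitute datasheet values and OTDR-measured event losses.

```python
def link_margin_db(
    tx_power_dbm: float,
    rx_sensitivity_dbm: float,
    length_km: float,
    atten_db_per_km: float,
    connectors: int,
    loss_per_connector_db: float,
    splices: int,
    loss_per_splice_db: float,
    aging_penalty_db: float,
) -> float:
    """Return remaining optical margin in dB (positive means headroom).

    Simplified budget: (TX power - RX sensitivity) minus fiber, connector,
    splice, and aging losses. Dispersion and TX penalties are ignored.
    """
    power_budget_db = tx_power_dbm - rx_sensitivity_dbm
    channel_loss_db = (
        length_km * atten_db_per_km
        + connectors * loss_per_connector_db
        + splices * loss_per_splice_db
    )
    return power_budget_db - channel_loss_db - aging_penalty_db


if __name__ == "__main__":
    # Hypothetical inputs for a 2.6 km single-mode backhaul link.
    margin = link_margin_db(
        tx_power_dbm=-4.0,
        rx_sensitivity_dbm=-12.0,
        length_km=2.6,
        atten_db_per_km=0.4,
        connectors=4,
        loss_per_connector_db=0.5,
        splices=2,
        loss_per_splice_db=0.1,
        aging_penalty_db=1.0,
    )
    print(f"remaining margin: {margin:.2f} dB")
```

With these placeholder numbers the link keeps about 3.8 dB of headroom. A negative result means the link is out of budget before any contamination or temperature drift is considered.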

Key transceiver candidates considered

We compared 25G SR multimode optics and 25G LR single-mode optics, plus a small set of 10G modules for legacy segments. For multimode, we prioritized OM4 fiber. IEEE 802.3 specifies 25GBASE-SR to only 100 m over OM4, so the measured 120 m runs needed SR optics with a vendor-rated extended reach. For single-mode, we prioritized LR reach and stable laser behavior for the 2.6 km links.

Technical specifications table (what we actually checked)

| Spec | 25G SR (Multimode) | 25G LR (Single-mode) | 10G SR (Legacy) |
|---|---|---|---|
| Data rate | 25.78 Gb/s (25G Ethernet class) | 25.78 Gb/s (25G Ethernet class) | 10.3125 Gb/s |
| Typical wavelength | ~850 nm | ~1310 nm | ~850 nm |
| Reach (typical) | 70 m (OM3) / 100 m (OM4) per IEEE 802.3; longer only with vendor extended-reach parts | up to 10 km | 300 m (OM3) / 400 m (OM4) |
| Connector | LC (duplex) | LC (duplex) | LC (duplex) |
| Power class | ~1.5 W class (vendor dependent) | ~1.8 W class (vendor dependent) | ~1.0 W class |
| DOM support | Required (DDM/DOM over I2C) | Required (DDM/DOM over I2C) | Required for monitoring |
| Operating temperature | -5 C to 70 C class preferred | -5 C to 70 C class preferred | -5 C to 70 C class preferred |
| Form factor | SFP28 | SFP28 | SFP+ |

We verified compatibility against vendor datasheets and the PHY behavior that IEEE 802.3 defines for these Ethernet optics. For background on optical transceiver interfaces and management, see [Source: IEEE 802.3], [Source: SFF-8431] for the SFP+ electrical and two-wire management interface, and [Source: SFF-8472] for digital diagnostic monitoring.

Chosen solution & why: deterministic monitoring over “it links”

The winning strategy was not simply selecting the right wavelength and reach; it was ensuring operational observability and cross-vendor stability under sustained traffic. For the edge aggregation switches, we selected optics that had strong DOM behavior (laser bias current and received power telemetry), and we validated that the switch port firmware accepted the module without forcing fallback modes.

We standardized on two families for the deployment to reduce variability: 25G SR for OM4 short runs and 25G LR for single-mode backhaul. For legacy segments, we kept 10G SR only where the switch and cabling could not be upgraded immediately. We also avoided mixing random third-party batches across the same link type until we had a baseline error-rate and DOM stability profile.

Concrete module examples used in the pilot

In the pilot, engineers tested Cisco-compatible optics alongside common third-party offerings. Lab examples included Cisco SFP-25G-SR class optics for 25G SR, plus third-party 10G SR modules such as the Finisar FTLX8571D3BCL and FS.com SFP-10GSR-85. Exact part numbers varied by switch vendor and port speed capabilities, but the selection criteria stayed the same: reach class, DOM support, and temperature grade. (Always confirm with your switch vendor's optics compatibility list before mass deployment.)

Pro Tip: In edge environments, a successful "link up" can hide the real risk. A transceiver can pass initial link bring-up yet drift in laser bias or receiver gain under cabinet temperature swings. The fastest way to catch this is to continuously poll DOM telemetry (TX bias current, RX power, temperature) and correlate it with interface error counters during a traffic soak test.
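
Polling DOM starts with decoding the raw diagnostic bytes. The sketch below assumes an SFP/SFP28 module that follows the SFF-8472 A2h layout with internally calibrated values. It reads temperature (signed, 1/256 C per LSB), Vcc (100 uV per LSB), TX bias (2 uA per LSB), and TX/RX power (0.1 uW per LSB) from bytes 96 to 105. How you read the raw page varies by platform (for example, `ethtool -m` on Linux hosts), and externally calibrated modules need extra calibration constants that this sketch does not apply.

```python
import math
import struct


def mw_to_dbm(mw: float) -> float:
    """Convert optical power in mW to dBm; zero power maps to -inf."""
    return 10.0 * math.log10(mw) if mw > 0 else float("-inf")


def decode_dom(a2: bytes) -> dict:
    """Decode real-time DOM values from an SFF-8472 A2h page.

    Assumes internally calibrated values (A0h byte 92, bit 5 set).
    """
    if len(a2) < 106:
        raise ValueError("need at least 106 bytes of the A2h page")
    # Bytes 96-105: temperature (signed), Vcc, TX bias, TX power, RX power.
    temp_raw, vcc_raw, bias_raw, tx_raw, rx_raw = struct.unpack_from(">hHHHH", a2, 96)
    return {
        "temperature_c": temp_raw / 256.0,       # 1/256 C per LSB
        "vcc_v": vcc_raw * 100e-6,               # 100 uV per LSB
        "tx_bias_ma": bias_raw * 0.002,          # 2 uA per LSB
        "tx_power_dbm": mw_to_dbm(tx_raw * 1e-4),  # 0.1 uW per LSB
        "rx_power_dbm": mw_to_dbm(rx_raw * 1e-4),
    }
```

Logging these fields next to the interface error counters at the same timestamps makes drift-then-error patterns easy to spot.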

Implementation steps: how we rolled out without downtime

We treated transceiver selection as a change-management project, not a one-time procurement decision. The goal was to prevent a rollout that looked healthy for five minutes and then degraded during the first heat cycle. Our rollout used a staged approach with measurable acceptance gates.

Validate optics compatibility at the port level

Before any field swap, we verified that each target switch model accepted the module and did not require vendor-specific firmware unlocks. We checked that the port speed remained at 25G and did not fall back to 10G or 1G due to EEPROM ID mismatches. If the switch offered an optics compatibility matrix, we used it; otherwise, we tested one spare module per type in a controlled port.
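
A port-level acceptance check can be sketched against the SFF-8472 A0h identity page. The sketch reads the identifier (byte 0, where 0x03 means SFP/SFP+/SFP28), the vendor name (bytes 20 to 35), the vendor part number (bytes 40 to 55), and the diagnostic monitoring type (byte 92). The site allowlist and the `accept_module` helper are hypothetical. The negotiated port speed is assumed to come from your switch or host tooling.

```python
from dataclasses import dataclass

SFP_IDENTIFIER = 0x03  # SFF-8024 identifier for SFP/SFP+/SFP28


@dataclass(frozen=True)
class ModuleId:
    identifier: int
    vendor_name: str
    vendor_pn: str
    ddm_implemented: bool
    internally_calibrated: bool


def parse_a0(a0: bytes) -> ModuleId:
    """Parse identity fields from an SFF-8472 A0h page."""
    if len(a0) < 96:
        raise ValueError("need at least 96 bytes of the A0h page")
    diag_type = a0[92]
    return ModuleId(
        identifier=a0[0],
        vendor_name=a0[20:36].decode("ascii", errors="replace").strip(),
        vendor_pn=a0[40:56].decode("ascii", errors="replace").strip(),
        ddm_implemented=bool(diag_type & 0x40),        # bit 6
        internally_calibrated=bool(diag_type & 0x20),  # bit 5
    )


def accept_module(mod: ModuleId, allowlist: set[tuple[str, str]],
                  port_speed_gbps: int, expected_speed_gbps: int) -> list[str]:
    """Return reasons to reject the module; an empty list means accept."""
    problems = []
    if mod.identifier != SFP_IDENTIFIER:
        problems.append(f"unexpected identifier 0x{mod.identifier:02x}")
    if (mod.vendor_name, mod.vendor_pn) not in allowlist:
        problems.append(f"{mod.vendor_name} {mod.vendor_pn} not on site allowlist")
    if not mod.ddm_implemented:
        problems.append("DDM not implemented")
    if port_speed_gbps != expected_speed_gbps:
        problems.append(f"port fell back to {port_speed_gbps}G")
    return problems
```

Returning a list of reasons, rather than a bare boolean, makes it easier to record why a spare module failed the controlled-port test.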

Confirm fiber and link budget, not just length

OTDR traces were used to confirm end-to-end attenuation and to identify connector events and splice anomalies. For multimode, we validated that the deployed fiber was truly OM4-class where SR optics were planned, because mislabeled multimode cables are a common reason for elevated BER. For single-mode, we confirmed fiber type and connector cleanliness, especially around field-terminated LC connectors.
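
The fiber-grade check can be sketched as a lookup of verified fiber grade and length against nominal reach. The reach values below are the ones commonly cited for the IEEE 802.3 PMDs. The function name and the optional `vendor_reach_m` override (for extended-reach parts) are illustrative assumptions, so verify every value against your datasheets.

```python
from typing import Optional

# Nominal reaches commonly cited for IEEE 802.3 PMDs, in metres.
# Confirm against vendor datasheets; extended-reach parts may exceed these.
NOMINAL_REACH_M = {
    ("25GBASE-SR", "OM3"): 70,
    ("25GBASE-SR", "OM4"): 100,
    ("10GBASE-SR", "OM3"): 300,
    ("10GBASE-SR", "OM4"): 400,
    ("25GBASE-LR", "SMF"): 10_000,
}


def check_planned_link(optic: str, verified_fiber: str, length_m: float,
                       vendor_reach_m: Optional[float] = None) -> str:
    """Check a planned optic against the *verified* fiber grade, not the label."""
    nominal = NOMINAL_REACH_M.get((optic, verified_fiber))
    if nominal is None:
        return f"{optic} is not specified for {verified_fiber}"
    reach = vendor_reach_m if vendor_reach_m is not None else nominal
    if length_m > reach:
        return f"{length_m:g} m exceeds {reach:g} m reach"
    return "ok"
```

Feeding it the verified grade catches a mislabeled OM3 segment: a 90 m run planned as OM4 fails once the fiber is confirmed as OM3.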

Traffic soak with DOM and error counters

We ran a 48-hour soak test per link type using a traffic profile that approximated peak burst patterns from the edge application. Engineers monitored interface counters (CRC errors, symbol errors, and link flaps) while sampling DOM telemetry every few seconds. Acceptance gate: no link flaps, and interface error counters should remain at baseline levels consistent with the vendor’s typical BER performance envelope.
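
The acceptance gate can be expressed as a comparison of counter snapshots taken at the start and end of the soak. This is a minimal sketch. It assumes the counters are cumulative and are not cleared during the test, that link flaps are available as a count (for example, carrier-change counts), and that `baseline_errors_per_hour` is a hypothetical per-site value you derive from a known-good link.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Counters:
    crc_errors: int
    symbol_errors: int
    link_flaps: int


def soak_gate(start: Counters, end: Counters, hours: float,
              baseline_errors_per_hour: float) -> list[str]:
    """Return gate failures; an empty list means the link passes."""
    if (end.crc_errors < start.crc_errors or end.symbol_errors < start.symbol_errors
            or end.link_flaps < start.link_flaps):
        raise ValueError("counters decreased; were they cleared mid-soak?")
    failures = []
    flaps = end.link_flaps - start.link_flaps
    if flaps > 0:
        failures.append(f"{flaps} link flap(s) during soak")
    errors = (end.crc_errors - start.crc_errors) + (end.symbol_errors - start.symbol_errors)
    allowed = baseline_errors_per_hour * hours
    if errors > allowed:
        failures.append(f"{errors} errors exceed baseline allowance of {allowed:.1f}")
    return failures
```

Any single flap fails the gate outright, matching the zero-flap criterion, while errors are compared against a baseline rather than an absolute zero.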

Install with operational hygiene

We used lint-free wipes and appropriate isopropyl alcohol for LC cleaning, then inspected connectors with a scope before insertion. We also labeled fibers consistently so that later swaps did not accidentally cross wavelengths or reverse polarity. In cabinets, we secured fiber slack to prevent micro-bends that can reduce received optical power and harm fast data processing stability under load.

Measured results: what improved after the transceiver standardization

After deploying the standardized optics set across the pilot sites, we saw measurable improvements in both performance and operational predictability. The biggest change was a reduction in error-driven retransmissions and fewer transient link events during temperature cycles. That directly improved application-level throughput for event processing and reduced tail latency.

Before vs after (pilot metrics)

- Link stability: link flaps dropped from intermittent events (roughly 2 to 5 per week during early heat cycles) to 0 during the post-change monitoring window.

- Error counters: CRC or symbol error spikes became rare; when they occurred, they correlated with a single fiber cleaning incident rather than systemic optics drift.

- Application latency: tail latency improved; the worst-case event path decreased by about 15% to 25% during peak bursts, mainly by reducing error recovery overhead.

- Operational response time: with DOM telemetry enabled, engineers identified failing optics by trending TX bias and RX power earlier, reducing mean time to repair from days to hours.
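
Trending RX power ahead of a failure can be sketched as a least-squares line through recent samples, projected forward to an alarm threshold. The threshold value and the function names are hypothetical. In practice you might use the module's own RX-power low-warning threshold instead.

```python
from typing import Optional, Sequence


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired samples")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("timestamps must not all be equal")
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    return slope, mean_y - slope * mean_x


def hours_until_threshold(hours: Sequence[float], rx_dbm: Sequence[float],
                          threshold_dbm: float) -> Optional[float]:
    """Projected hours until the RX power trend crosses the threshold.

    Returns None if RX power is flat or rising, 0.0 if already below.
    """
    slope, intercept = fit_line(hours, rx_dbm)
    if slope >= 0:
        return None
    fitted_now = intercept + slope * hours[-1]
    if fitted_now <= threshold_dbm:
        return 0.0
    return (threshold_dbm - fitted_now) / slope
```

A steady 0.1 dB/hour decline from -3.4 dBm, for instance, projects roughly 56 hours of runway before a hypothetical -9 dBm alarm, which is enough time to schedule a cleaning or swap.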

These outcomes align with the principle that fast data processing is only as deterministic as the physical layer that carries it. Even when the application stack is optimized, unstable optics introduce jitter and retransmissions that inflate effective processing time under load.

Common mistakes / troubleshooting tips from the field

Edge deployments fail in repeatable ways. Below are the mistakes we saw most often, including root causes and the fix.

Multimode optics used on the wrong fiber grade

Root cause: OM3/OM2 cables were labeled incorrectly or mixed with OM4 runs, so SR optics ran beyond their supported reach on lower-bandwidth fiber. Marginal links like this often show rising error counters under traffic and temperature stress rather than immediate link failure.

Solution: run OTDR and verify fiber type; if unsure, replace the fiber segment or switch that link to a single-mode LR optic where feasible.

“Compatible” optics that lack stable DOM behavior

Root cause: some third-party modules may bring up the link but provide incomplete or noisy DOM telemetry. That makes it hard to detect drift, and in rare cases the switch may apply conservative thresholds that increase latency variance.

Solution: confirm DOM data quality in a soak test; require consistent readings for TX bias, temperature, and RX power. If DOM is required for operations, block modules without full diagnostic support.
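
A DOM data-quality check can be sketched with two tests per field: completeness (the share of samples that actually reported a value) and noise (the largest jump between consecutive samples taken a few seconds apart). Slow thermal drift over 48 hours is expected, so the check does not use overall spread. The thresholds in `DEFAULT_MAX_STEP` and the 99% completeness floor are hypothetical starting points.

```python
from typing import Optional

REQUIRED_FIELDS = ("temperature_c", "tx_bias_ma", "rx_power_dbm")
# Hypothetical limits on sample-to-sample jumps for readings seconds apart.
DEFAULT_MAX_STEP = {"temperature_c": 1.0, "tx_bias_ma": 0.5, "rx_power_dbm": 1.0}


def dom_quality_issues(samples: list[dict], min_complete: float = 0.99,
                       max_step: Optional[dict] = None) -> list[str]:
    """Flag incomplete or noisy DOM telemetry; an empty list means usable."""
    limits = DEFAULT_MAX_STEP if max_step is None else max_step
    if not samples:
        return ["no DOM samples collected"]
    issues = []
    for field in REQUIRED_FIELDS:
        present = [s[field] for s in samples if s.get(field) is not None]
        completeness = len(present) / len(samples)
        if completeness < min_complete:
            issues.append(f"{field}: only {completeness:.0%} of samples present")
        if len(present) >= 2:
            worst = max(abs(b - a) for a, b in zip(present, present[1:]))
            if worst > limits[field]:
                issues.append(f"{field}: jump of {worst:.2f} between samples")
    return issues
```

Modules that fail this check during the soak are candidates for the block list described above.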

Connector contamination or micro-bends after field swaps

Root cause: even a small amount of contamination on LC connectors can reduce received optical power enough to raise BER under high-speed traffic, especially in dusty cabinets. Micro-bends can worsen this by altering the coupling efficiency.

Solution: clean with correct procedures, inspect with a fiber scope, and secure cable routing to avoid bend radii violations. Re-seat and re-test after cleaning.

Temperature grade mismatch with “works on the bench” modules

Root cause: modules rated for narrower temperature ranges can drift when cabinets hit the low or high ends, leading to late failures during shifts rather than during initial commissioning.

Solution: choose modules specified for the site’s operating range (for example, -5 C to 70 C class) and validate under a traffic soak that includes the expected environmental extremes.
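
The temperature-grade comparison can be sketched as a coverage check. The key assumption is that the module case runs warmer than cabinet air under load, while a cold start sees roughly ambient temperature before self-heating. `case_rise_c` is a hypothetical value you would estimate from DOM temperature readings during the soak.

```python
def temp_grade_covers(module_min_c: float, module_max_c: float,
                      site_min_c: float, site_max_c: float,
                      case_rise_c: float) -> bool:
    """True if the module's rated range covers the expected case temperatures.

    case_rise_c: assumed rise from cabinet air to module case under load.
    """
    cold_start_case_c = site_min_c          # module not yet self-heated
    hot_case_c = site_max_c + case_rise_c   # worst-case air plus self-heating
    return module_min_c <= cold_start_case_c and hot_case_c <= module_max_c
```

With a -5 C to 70 C module and a -5 C to 55 C cabinet, coverage holds for a 10 C case rise but fails at 20 C. This is why the soak should capture DOM temperature at the hottest part of the cycle.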

Selection criteria / decision checklist for edge transceivers

When choosing optics for fast data processing, engineers should use a repeatable checklist. Here is the order we used in practice.

- Distance and fiber type: verify OM4 vs OM3 vs single-mode, then match to SR or LR reach classes.

- Switch compatibility: confirm module acceptance and port speed behavior (no forced downshift); use vendor compatibility lists when available.

- DOM / DDM support: require reliable telemetry for TX bias, temperature, and RX power so you can detect drift early.

- Operating temperature grade: ensure the module’s specified range covers cabinet conditions, not just ambient lab conditions.

- Power and thermal implications: estimate total optics power for the cabinet to avoid thermal throttling and fan overrun scenarios.

- Vendor lock-in risk: evaluate how hard it is to replace optics later; standardize part families to reduce procurement friction.

- Reliability data: review vendor datasheets and, where possible, pilot failures and RMA history from your own or peer deployments.
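
The power item on this checklist is simple arithmetic. The sketch reuses the approximate per-module power classes from the specification table as illustrative inputs. The module counts and the cabinet's thermal allowance for optics are hypothetical and should come from your own cabinet design.

```python
# Approximate power classes from the spec table above (vendor dependent).
WATTS_PER_MODULE = {"25G-SR": 1.5, "25G-LR": 1.8, "10G-SR": 1.0}


def optics_power_w(counts: dict[str, int],
                   watts_per_module: dict[str, float] = WATTS_PER_MODULE) -> float:
    """Total optics power for a cabinet; raises KeyError for unknown module types."""
    return sum(n * watts_per_module[kind] for kind, n in counts.items())


def thermal_headroom_w(total_w: float, cabinet_allowance_w: float) -> float:
    """Remaining optics power allowance; negative means over budget."""
    return cabinet_allowance_w - total_w


if __name__ == "__main__":
    # Hypothetical cabinet: 16 SR, 4 LR, 4 legacy 10G SR modules.
    total = optics_power_w({"25G-SR": 16, "25G-LR": 4, "10G-SR": 4})
    print(f"optics total: {total:.1f} W, headroom: {thermal_headroom_w(total, 50.0):.1f} W")
```

Optics are usually a small share of switch power, but in a sealed or poorly ventilated cabinet the few extra watts from many modules add directly to the thermal stress that drives drift.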

Cost & ROI note: what it really costs to keep links deterministic

Pricing varies by vendor, temperature grade, and whether the optics are OEM or third-party. In typical enterprise and industrial procurement, 25G SR and 25G LR modules often land in the range of roughly $200 to $600 each, while 10G SR modules may be lower depending on volume and contract terms. Third-party optics can reduce upfront cost, but the ROI only holds if DOM quality and compatibility are validated.

TCO is driven by three factors: (1) replacement rate and RMA handling time, (2) operational labor to troubleshoot intermittent PHY issues, and (3) downtime cost when links flap during shift changes. In our pilot, the standardized and monitored approach reduced time spent on ambiguous failures, and the improved stability reduced incident escalations, yielding a practical payback within a single renewal cycle for the impacted sites.
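
A payback estimate along these lines can be sketched as the upfront premium (validated optics plus soak-test labor) divided by monthly savings (avoided troubleshooting labor plus avoided downtime). All figures in the example are hypothetical and do not come from the pilot.

```python
from typing import Optional


def monthly_savings(labor_hours_saved: float, hourly_rate: float,
                    downtime_cost_avoided: float) -> float:
    """Monthly savings from less troubleshooting labor and fewer outages."""
    return labor_hours_saved * hourly_rate + downtime_cost_avoided


def payback_months(upfront_premium: float, savings_per_month: float) -> Optional[float]:
    """Months to recover the premium; None if savings never cover it."""
    if savings_per_month <= 0:
        return None
    return upfront_premium / savings_per_month


if __name__ == "__main__":
    # Hypothetical: 20 modules at a $150 premium plus $1,000 of soak-test time.
    premium = 20 * 150 + 1_000
    savings = monthly_savings(labor_hours_saved=6, hourly_rate=80, downtime_cost_avoided=320)
    print(f"payback: {payback_months(premium, savings):.1f} months")
```

With these placeholder inputs the premium pays back in five months. The model is most sensitive to the downtime term, which is also the hardest to estimate.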

Authority references for general optical module behavior and monitoring include [Source: IEEE 802.3], [Source: SFF-8431], and [Source: SFF-8472] (the digital diagnostic monitoring interface). For connector and cabling best practices, consult [Source: ANSI/TIA-568] and vendor cabling guides.

FAQ

What matters most for fast data processing: reach or DOM?

Reach matters for link budget, but DOM matters for keeping performance deterministic over time. In edge deployments, drift and contamination show up first in telemetry trends, not always in immediate link loss.

Can I mix third-party transceivers with OEM ones on the same switch?

Sometimes yes, but you must validate port acceptance and speed behavior. Even if the link comes up, inconsistent DOM reporting or threshold behavior can create confusing error patterns during traffic soak.

How do I confirm fiber quality before buying optics?

Use OTDR for attenuation and event analysis, and verify connector cleanliness with inspection. For multimode, confirm the fiber grade (OM4 vs OM3) because SR optics are sensitive to modal bandwidth and actual cable construction.

What error counters should I watch during a transceiver validation test?

Track CRC errors, symbol errors, and link flaps, then correlate them with DOM telemetry (TX bias, temperature, RX power). A clean soak should show stable counters and no drift patterns that precede spikes.

What temperature range should I plan for in industrial edge cabinets?

Base it on measured worst-case cabinet conditions, including airflow changes and door-open events. If your environment can reach beyond typical office specs, choose optics rated for a wider operational range and test under representative traffic.

Do I need to clean connectors even for “new” cables?

Yes. Field handling, dust exposure, and shipping vibrations can contaminate LC ferrules. Clean and inspect immediately before insertion, especially when failures appear only under load.

As a next step, review your network latency budget for fast data processing to connect optics stability to end-to-end latency engineering. If you are standardizing across sites, consider building a small optics lab profile so every new transceiver family is validated with the same DOM and traffic gates.

Author bio: I am a VC/financial analyst who also works hands-on with network deployments, translating operational metrics into measurable ROI for infrastructure investments. I focus on how physical-layer choices affect reliability, cost curves, and the economics of fast data processing systems.