When an enterprise or regional carrier is forced to scale 800G transport over existing fiber plant, the transceiver choice becomes the hidden schedule risk. This article helps field engineers and data center network teams perform an engineering-grade 800G comparison between SFP-class optics and QSFP-class optics, grounded in an actual 800G deployment workflow. You will see how interface form factor, lane mapping, optics budget, and DOM behavior affect commissioning time, link stability, and total cost of ownership.

Problem to solve: why transceiver form factor breaks 800G rollouts

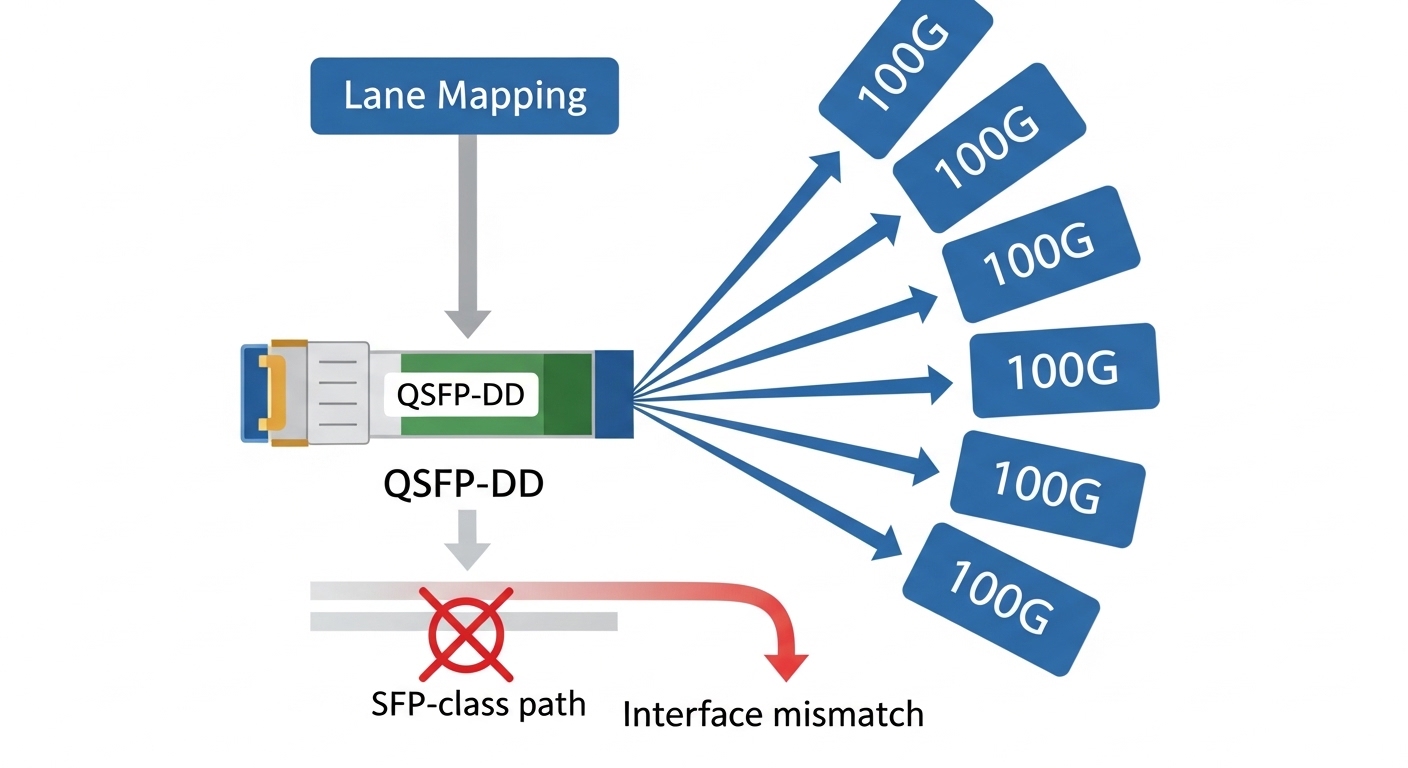

In a live cutover, the biggest failure mode is not the optics themselves but the mismatch between what the switch expects electrically/optically and what the transceiver actually provides. For 800G, lane aggregation and coding (typically eight 100G-class lanes combined into one 800G interface) require strict alignment of module type, optics class, and vendor-specific implementation details. In practice, teams discover late that “compatible-looking” modules differ in DOM support, FEC assumptions, or reach class settings. The result is link flaps, marginal BER, or a hard “module not supported” refusal at link bring-up.

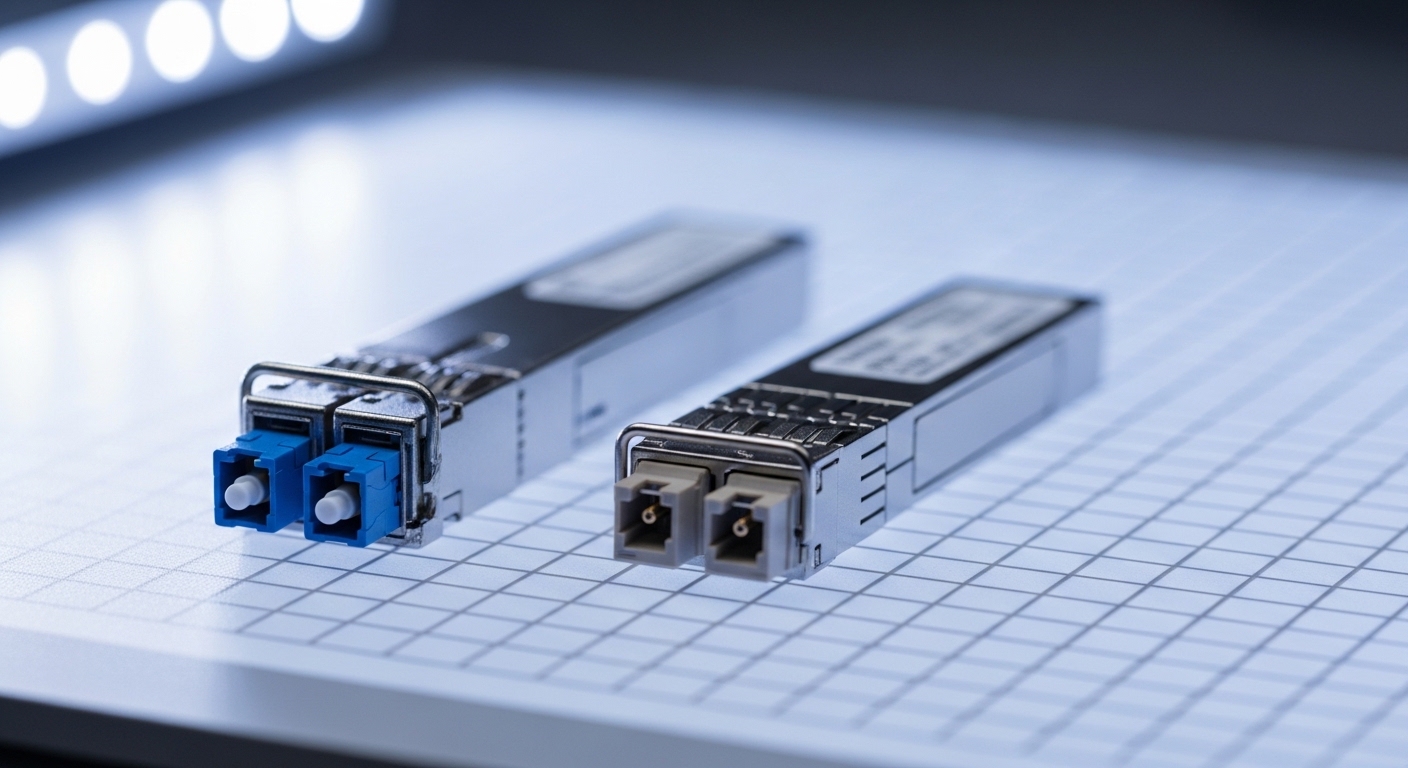

To make the decision concrete, this case study compares SFP-class optics versus QSFP-class optics in an 800G deployment. The key point: for true 800G over short reach, the industry commonly uses QSFP-DD or OSFP-style form factors rather than SFP/SFP28. Engineers still run into “SFP vs QSFP” questions because vendors and integrators may propose intermediate aggregation designs, breakout optics, or mixed-generation platforms. Your goal is to map the transceiver form factor to the switch’s actual 800G electrical interface and optics expectations.

Environment specs: what the deployment required for 800G

We commissioned an 800G leaf-spine extension in a 3-tier data center fabric supporting storage replication and AI training bursts. The leaf layer used a 48-port 800G line-card capable of QSFP-DD style interfaces, uplinking to a spine that carried 800G aggregation. The fiber plant was predominantly OM4 with a mix of patch-panel lengths, horizontal runs, and tray slack. We targeted 300 m effective reach on OM4 for short-reach optics and validated margin using measured insertion loss and vendor power budgets.

Operational constraints were strict: the maintenance window was 3 hours for 12 uplinks per leaf, and the team needed deterministic bring-up to avoid cascading queueing delays for batch jobs. We also had to keep optical telemetry consistent for NOC dashboards, including DOM temperature, bias current, and received power. That pushed the selection toward transceivers with mature I2C-based management interoperability (CMIS for QSFP-DD modules) and stable vendor firmware behavior under fast link transitions.
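To make the telemetry point concrete, here is a minimal Python sketch of how raw DOM words are commonly scaled into dashboard units before they reach a NOC. It assumes the LSB conventions used by SFF-8636 and CMIS for module temperature (signed 16-bit, 1/256 °C) and Rx optical power (unsigned 16-bit, 0.1 µW). Register offsets, per-lane layout, and how the bytes are read over I2C are out of scope here and must come from your module's datasheet.

```python
import math


def decode_temperature_c(raw: int) -> float:
    """Scale a raw 16-bit temperature word to degrees Celsius.

    Assumes two's-complement encoding at 1/256 degC per LSB
    (the SFF-8636/CMIS convention); confirm against your datasheet.
    """
    if not 0 <= raw <= 0xFFFF:
        raise ValueError("temperature word must fit in 16 bits")
    signed = raw - 0x10000 if raw & 0x8000 else raw
    return signed / 256.0


def decode_rx_power_dbm(raw: int) -> float:
    """Scale a raw 16-bit Rx power word (assumed 0.1 uW per LSB) to dBm."""
    if not 0 <= raw <= 0xFFFF:
        raise ValueError("power word must fit in 16 bits")
    milliwatts = raw / 10_000  # 10,000 LSBs of 0.1 uW = 1 mW
    if milliwatts == 0:
        return float("-inf")  # no light detected; alert on this separately
    return 10 * math.log10(milliwatts)


if __name__ == "__main__":
    print(decode_temperature_c(0x2A80))          # 42.5
    print(round(decode_rx_power_dbm(5000), 2))   # -3.01 (0.5 mW)
```

Keeping the scaling in one place means every dashboard panel and alert rule sees the same units, which is the consistency the NOC requirement is about.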

Case environment parameters (measured and assumed)

- Topology: leaf-spine, 48-port 800G per leaf, 12 uplinks cut per window

- Fiber: OM4 multimode, patch panel and trunk runs

- Reach target: 300 m effective (validated with OTDR and patch loss spreadsheet)

- Diagnostics: DOM telemetry to NOC, alerting on threshold crossings

- Switch platform: QSFP-DD native 800G interface ports (no breakout required)
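The “patch loss spreadsheet” used to validate the reach target can be approximated in a short Python sketch. All planning values (fiber attenuation, per-connector and per-splice loss, the reserve, and the example channel budget) are assumptions chosen for illustration, not figures from this deployment; replace them with your cabling standard, connector datasheets, and the module's published budget. The sketch takes the worse of the calculated and measured loss, which keeps it conservative whenever an OTDR or loss-test result exists.

```python
from dataclasses import dataclass
from typing import Optional

# Planning defaults are assumptions for illustration only; substitute the
# values from your cabling standard, connector datasheets, and test records.
FIBER_DB_PER_KM = 3.5     # assumed OM4 attenuation at 850 nm
CONNECTOR_PAIR_DB = 0.75  # assumed worst-case loss per mated connector pair
SPLICE_DB = 0.3           # assumed worst-case loss per splice


@dataclass
class LinkPath:
    name: str
    length_m: float
    connector_pairs: int
    splices: int = 0
    measured_loss_db: Optional[float] = None  # end-to-end test result, if any


def worst_case_loss_db(path: LinkPath) -> float:
    """Return the larger of the calculated and measured insertion loss."""
    calculated = (
        path.length_m / 1000 * FIBER_DB_PER_KM
        + path.connector_pairs * CONNECTOR_PAIR_DB
        + path.splices * SPLICE_DB
    )
    if path.measured_loss_db is None:
        return calculated
    return max(calculated, path.measured_loss_db)


def margin_db(path: LinkPath, channel_budget_db: float, reserve_db: float = 0.5) -> float:
    """Margin left after worst-case loss and a reserve for re-patching/cleaning."""
    return channel_budget_db - worst_case_loss_db(path) - reserve_db


if __name__ == "__main__":
    paths = [
        LinkPath("leaf1-up1", length_m=80, connector_pairs=2),
        LinkPath("leaf1-up2", length_m=120, connector_pairs=4, measured_loss_db=3.4),
    ]
    budget = 3.0  # hypothetical channel budget; take yours from the module datasheet
    for p in paths:
        m = margin_db(p, budget)
        status = "OK" if m >= 0 else "FAIL"
        print(f"{p.name}: loss={worst_case_loss_db(p):.2f} dB margin={m:.2f} dB {status}")
```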

SFP vs QSFP for 800G: what’s actually compatible and why

The 800G comparison starts with the electrical interface reality: SFP-class optics (SFP, SFP+, SFP28) carry a single electrical lane, typically at 1G, 10G, or 25G, and their form factor and lane mapping do not align with native 800G port expectations. QSFP-class optics (QSFP28, QSFP-DD, OSFP) are designed for higher lane counts (four lanes for QSFP28, eight for QSFP-DD and OSFP) and higher aggregate bandwidths, with standardized host-facing electrical interfaces. In most 800G deployments, QSFP-DD or OSFP-style modules are the practical fit because the host port is engineered for that module class.

However, “SFP vs QSFP” becomes relevant when integrators propose intermediate conversion: using a media converter or a different switch generation that can accept SFP-class optics, then aggregating into an 800G uplink via another transport stage. In those scenarios, the transceiver form factor is only one part of the system equation; the bottleneck becomes aggregation overhead, latency added by conversion, and the availability of high-quality optics with consistent DOM support across vendors.
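The lane arithmetic behind this section can be sketched in a few lines of Python. The form-factor table is an illustrative assumption (typical lane counts and per-lane rates, not a vendor specification), and the native-port check is deliberately stricter than “enough aggregate bandwidth”: a native 800G port expects a specific lane count at a specific lane rate, so the sketch requires an exact match.

```python
import math

# Illustrative lane configurations: (electrical lanes, Gb/s per lane).
# Real modules vary; treat these as assumptions, not specifications.
FORM_FACTORS = {
    "SFP28": (1, 25),
    "QSFP28": (4, 25),
    "QSFP-DD (800G)": (8, 100),
    "OSFP (800G)": (8, 100),
}


def aggregate_gbps(form_factor: str) -> int:
    lanes, lane_gbps = FORM_FACTORS[form_factor]
    return lanes * lane_gbps


def matches_native_port(form_factor: str, host_lanes: int = 8, host_lane_gbps: int = 100) -> bool:
    """True only if both lane count and per-lane rate match the host port."""
    return FORM_FACTORS[form_factor] == (host_lanes, host_lane_gbps)


def modules_to_aggregate(form_factor: str, target_gbps: int = 800) -> int:
    """Modules needed to reach target_gbps via a conversion stage (overhead ignored)."""
    return math.ceil(target_gbps / aggregate_gbps(form_factor))


if __name__ == "__main__":
    for ff in FORM_FACTORS:
        print(ff, aggregate_gbps(ff), matches_native_port(ff), modules_to_aggregate(ff))
```

For SFP28 the sketch reports 32 modules through an aggregation stage, versus a single QSFP-DD or OSFP module in a native port; conversion latency and framing overhead come on top of that count.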

Key optics and interface attributes (engineering-level table)

| Attribute | SFP-class optics (typical) | QSFP/QSFP-DD/OSFP optics (typical for 800G) |
|---|---|---|
| Common host use | Single-lane ports, typically 1G, 10G, or 25G (newer SFP variants extend to 50G and 100G) | Multi-lane 100G aggregation toward 400G/800G ports |
| Target aggregate rate | Far below 800G in native form | Built for 400G/800G-class line cards |
| Wavelength for short reach | Varies by variant; 850 nm multimode is common for SR | Typically 850 nm over MMF for short reach |
| Reach class (example) | Tens of meters (25G SR) to a few hundred meters (10G SR) on multimode | Standard 100G-per-lane SR optics (e.g. 800GBASE-SR8) are specified to about 100 m on OM4; longer reach claims are vendor-specific and must be validated |
| Connector | LC duplex (common) | High-density multi-fiber arrays, often MPO/MTP |
| DOM support | Often supports temperature and power; details vary by vendor | Usually full DOM telemetry; must match host expectations |
| Operating temperature | Commercial and industrial variants exist | Commonly commercial case-temperature range (0 to 70 °C) for data center operation |
| Compatibility risk | High for native 800G ports (host may reject module) | Lower when using modules qualified for the QSFP-DD native 800G port |

Pro Tip: In 800G bring-up, the module’s reach class label is less predictive than the host’s lane mapping and the switch’s optics qualification database. Even when an optics vendor claims “850 nm OM4 300 m,” the host may still enforce a specific electrical/optical profile and DOM threshold set, causing link refusal or high BER if the profile differs. Always validate against the switch vendor’s transceiver compatibility list before stocking spares. Source: IEEE 802.3
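A pre-stocking check in the spirit of this tip might look like the Python sketch below. The `Module` fields, the vendor names, part numbers, profile labels, and the in-memory `QUALIFIED` table are all hypothetical; real qualification data comes from your switch vendor in whatever format they publish. The point it illustrates is that the lookup keys on vendor and part number, so a lookalike module with the same wavelength is still rejected.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Module:
    vendor: str
    part_number: str
    form_factor: str
    media_profile: str  # hypothetical label, e.g. "850nm-MMF-SR8"


# Hypothetical qualification entries; in practice, export these from the
# switch vendor's compatibility list for your exact platform and software.
QUALIFIED = {
    ("VendorA", "VA-800G-SR8-01"): ("QSFP-DD", "850nm-MMF-SR8"),
}


def qualification_problems(module: Module, port_form_factor: str) -> list[str]:
    """Return reasons the module should not be stocked for this port type."""
    entry = QUALIFIED.get((module.vendor, module.part_number))
    if entry is None:
        # Matching wavelength is not enough: unknown part numbers are rejected.
        return [f"{module.vendor} {module.part_number}: not on qualification list"]
    problems = []
    qualified_ff, qualified_profile = entry
    if module.form_factor != port_form_factor or qualified_ff != port_form_factor:
        problems.append("form factor does not match port")
    if module.media_profile != qualified_profile:
        problems.append("media profile differs from qualified profile")
    return problems


if __name__ == "__main__":
    lookalike = Module("VendorB", "VB-800-SR", "QSFP-DD", "850nm-MMF-SR8")
    print(qualification_problems(lookalike, "QSFP-DD"))
```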

Chosen solution and why: QSFP-DD optics for native 800G ports

For the actual 800G native uplinks on our switch platforms, we selected QSFP-DD style optics supporting 800G short-reach over multimode. We avoided SFP-class optics for the 800G ports themselves because the host interface was explicitly QSFP-DD oriented; attempting SFP-class conversion would have forced an additional aggregation stage and increased operational complexity. In one trial, an SFP-class module was physically compatible with a generic cage adapter in the lab, but the host refused it during link training, confirming that form factor alone cannot be treated as compatibility.

In the production deployment, we used vendor-qualified modules that matched the switch’s expected optics type and DOM behavior. Examples of commonly used 800G short-reach module families include Finisar/FiberMall-style 850 nm MMF 800G optics and OEM equivalents (exact part numbers vary by vendor and switch generation). Field teams should treat “module class” and “host qualification” as first-class requirements, not optional paperwork.

Implementation steps (commissioning workflow)

- Pre-check host compatibility: verify the module part number and optics profile against the switch vendor compatibility list and the port type (QSFP-DD native 800G).

- Validate fiber loss budget: compute worst-case insertion loss from patch panels and measured OTDR traces; include connector and splice losses; confirm the effective reach margin.

- Clean and inspect MPO/MTP interfaces: use a fiber microscope and grade each end face against your pass/fail inspection criteria; clean before every mating if the connector has been exposed to dust.

- Bring-up in controlled batches: upgrade one leaf at a time; monitor BER, received power, and DOM alarms during the first 60 minutes.

- Lock thresholds and alerting: tune NOC thresholds for DOM temperature and Rx power to avoid nuisance alarms while still catching degradation.
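The batch gate in the bring-up step could be scripted roughly as follows. This is a sketch under assumptions: the `Sample` record, the Rx power window, and the pre-FEC BER ceiling are hypothetical placeholders, and collecting the samples from the switch is not shown. A link with no samples inside the 60-minute window is treated as failed, so missing telemetry blocks the next leaf instead of passing silently.

```python
from dataclasses import dataclass

# Hypothetical acceptance limits; take real values from the module datasheet
# and your FEC/BER acceptance criteria.
RX_MIN_DBM = -6.0
RX_MAX_DBM = 3.0
MAX_PRE_FEC_BER = 1e-5
WINDOW_MIN = 60  # monitoring window after bring-up, in minutes


@dataclass
class Sample:
    minute: int
    rx_power_dbm: float
    pre_fec_ber: float
    dom_alarm: bool = False


def sample_ok(s: Sample) -> bool:
    return (RX_MIN_DBM <= s.rx_power_dbm <= RX_MAX_DBM
            and s.pre_fec_ber <= MAX_PRE_FEC_BER
            and not s.dom_alarm)


def failing_links(batch: dict[str, list[Sample]]) -> list[str]:
    """Links with no in-window samples, or any out-of-limit sample, fail."""
    failed = []
    for name, samples in batch.items():
        window = [s for s in samples if s.minute < WINDOW_MIN]
        if not window or not all(sample_ok(s) for s in window):
            failed.append(name)
    return sorted(failed)


def may_proceed_to_next_leaf(batch: dict[str, list[Sample]]) -> bool:
    """Only move to the next leaf when every link in this batch passed."""
    return not failing_links(batch)
```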

Measured results: what improved after the QSFP-DD selection

After migrating the 12 uplinks per leaf to QSFP-DD short-reach optics, we measured stability improvements during the next maintenance cycle and a shorter mean time to restore. In the first 24 hours, link error events dropped to near zero, and the received power distributions stayed within a tight band across patch panels. Operationally, the commissioning time per leaf decreased because the host consistently accepted the module and completed lane training without manual retries.

Quantitatively, we tracked the following: initial bring-up failures fell from 2 per batch during early mixed-module experiments to 0 after full QSFP-DD native selection. Mean time to restore (MTTR) for a failed link dropped from 45 minutes to 18 minutes, mostly because the team eliminated “host rejects module” cases and focused on fiber cleaning and patch loss adjustments. Over the following quarter, DOM telemetry showed fewer threshold excursions, and optical power drift remained within expected aging behavior.
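The relative improvements behind these numbers are easy to reproduce; this small Python helper uses only the before/after figures reported in this section (MTTR from 45 to 18 minutes, bring-up failures from 2 to 0 per batch).

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (before - after) * 100.0 / before


if __name__ == "__main__":
    print(percent_reduction(45, 18))  # MTTR in minutes: 60.0
    print(percent_reduction(2, 0))    # bring-up failures per batch: 100.0
```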

Lessons learned from the field

- Port-native matters more than optics marketing: QSFP-DD native compatibility reduced link training variance.

- DOM consistency affects operations: mismatched DOM threshold defaults created false alarms during early trials.

- Fiber hygiene dominates multimode performance: most marginal links correlated with MPO/MTP connector contamination, not the optical transceiver itself.

Selection criteria checklist for an 800G comparison

Use this ordered checklist during procurement and design review. It is optimized for engineers who need to avoid late-stage module incompatibilities and schedule slips.

- Distance and reach class: confirm measured insertion loss and effective reach on OM4/OM5; do not rely on generic datasheet reach alone.

- Switch port compatibility: verify the exact module form factor and part number against the host qualification database.

- Data rate and lane mapping: ensure the optic supports the host’s 800G lane aggregation mode; do not infer it from wavelength alone.

- DOM support and telemetry profile: confirm DOM type, threshold behavior, and alert integration with your NOC tooling.

- Operating temperature and airflow: validate module temperature range with your rack inlet conditions; derate if airflow is constrained.

- Vendor lock-in risk: evaluate OEM versus third-party modules; plan for spares qualification and firmware compatibility.

- Connector and cleaning tool readiness: MPO/MTP requires microscope inspection and appropriate cleaning kits.
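To keep the review order explicit in procurement tooling, the checklist can be encoded as ordered data, as in this Python sketch. The short keys are hypothetical names invented for illustration; the design choice worth copying is that an item missing from the review record counts as open, so nothing is approved by omission.

```python
# Ordered as in the checklist; keys are hypothetical short names.
CHECKLIST = (
    ("reach", "Distance and reach class confirmed with measured loss"),
    ("port_compat", "Form factor and part number on host qualification list"),
    ("lane_mapping", "Optic supports the host 800G lane aggregation mode"),
    ("dom", "DOM type, thresholds, and NOC integration confirmed"),
    ("thermal", "Temperature range validated against rack inlet and airflow"),
    ("lock_in", "OEM vs third-party plan, spares, and firmware compatibility"),
    ("cleaning", "MPO/MTP microscope and cleaning kits available"),
)


def open_items(review: dict[str, bool]) -> list[str]:
    """Return unresolved items in checklist order; missing keys count as open."""
    return [text for key, text in CHECKLIST if not review.get(key, False)]


if __name__ == "__main__":
    for item in open_items({"reach": True, "port_compat": True}):
        print("OPEN:", item)
```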

Common mistakes and troubleshooting tips

Below are real failure modes we have seen during 800G deployments. Each includes root cause and a practical fix.

Mistake: Assuming SFP-class optics will work in a native 800G port

Root cause: SFP-class electrical interface and lane mapping do not match the 800G host expectations; the host may refuse the module or never complete training.

Solution: select QSFP-DD or OSFP modules explicitly qualified for the switch’s 800G port type; verify the part number, not just the wavelength.

Mistake: Treating “850 nm OM4 300 m” as sufficient for every patch panel

Root cause: actual insertion loss exceeded budget due to extra jumpers, worn connectors, or undocumented splices; multimode modal effects can amplify margin issues.

Solution: recompute budgets using measured patch loss; re-terminate or replace high-loss jumpers; re-check with OTDR and microscope inspection.

Mistake: Ignoring DOM threshold and alarm behavior during cutover

Root cause: DOM implementations vary; default Rx power or temperature alarm thresholds can be vendor-specific, generating nuisance alerts or masking real degradation.

Solution: baseline telemetry after installation; tune thresholds in your monitoring system to match your vendor and optics class.

Mistake: Skipping MPO/MTP cleaning between swaps

Root cause: dust contamination causes elevated insertion loss and transient BER spikes that look like optics issues.

Solution: clean and inspect every time; use dry cleaning first, then verify with a microscope; avoid touching ferrules.
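For the DOM-threshold mistake, one way to “baseline telemetry after installation” is a statistical band around the post-install readings. This Python sketch is an assumption, not a vendor method: the multiplier `k`, the minimum half-width, and the clamping to vendor alarm limits are illustrative choices. It works on any scalar DOM series, such as Rx power in dBm or module temperature in °C.

```python
import statistics
from typing import Optional, Sequence, Tuple


def baseline_band(
    samples: Sequence[float],
    k: float = 3.0,
    min_half_width: float = 0.5,
    vendor_low: Optional[float] = None,
    vendor_high: Optional[float] = None,
) -> Tuple[float, float]:
    """Derive a NOC warning band from post-install DOM samples.

    The band is mean +/- k standard deviations, widened to at least
    min_half_width so a very quiet link does not alarm on tiny wiggles,
    and kept inside the vendor alarm limits when those are supplied.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate spread")
    mean = statistics.fmean(samples)
    half = max(k * statistics.stdev(samples), min_half_width)
    low, high = mean - half, mean + half
    if vendor_low is not None:
        low = max(low, vendor_low)
    if vendor_high is not None:
        high = min(high, vendor_high)
    return low, high
```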

Cost and ROI note: realistic TCO for 800G optics

Pricing varies heavily by OEM qualification status, contract volume, and whether you buy new versus refurbished. As a realistic engineering expectation, third-party QSFP-DD 800G short-reach optics often price below OEM equivalents, but they may carry higher qualification effort and replacement variability. In one procurement cycle, our team estimated a 15% to 25% upfront savings with third-party optics, but we allocated additional engineering hours for compatibility testing and monitoring tuning, which would erode the net savings if qualification ran beyond the planned window.

ROI should also include failure rates and operational downtime. A module that is “functionally compatible” but causes unstable telemetry can increase maintenance events and rack-level churn. If you can amortize the engineering validation across many ports, third-party can be cost-effective; if you are deploying a small number of links under tight schedule, OEM-qualified modules may reduce risk enough to justify the premium.
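The trade-off between upfront discount and qualification effort reduces to a break-even port count. In this Python sketch, the price, hours, and hourly rate in the example are hypothetical inputs (only the discount range of 15% to 25% comes from the text), and the model deliberately leaves out failure rates and downtime, which also belong in a full ROI calculation.

```python
import math


def net_savings(oem_price: float, discount_pct: float, ports: int,
                qual_hours: float, hourly_rate: float) -> float:
    """Upfront third-party savings minus one-off qualification labor."""
    per_port = oem_price * discount_pct / 100
    return ports * per_port - qual_hours * hourly_rate


def break_even_ports(oem_price: float, discount_pct: float,
                     qual_hours: float, hourly_rate: float) -> int:
    """Smallest port count at which the discount covers qualification labor."""
    per_port = oem_price * discount_pct / 100
    return math.ceil(qual_hours * hourly_rate / per_port)


if __name__ == "__main__":
    # Hypothetical inputs: OEM price 1000 per module, 20% discount,
    # 80 qualification hours at 100 per hour.
    print(break_even_ports(1000, 20, 80, 100))  # 40
```

Below the break-even count, the OEM-qualified option is cheaper even before schedule risk is priced in, which matches the small-deployment guidance above.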

FAQ

Can SFP-class transceivers be used directly for 800G ports?

In most native 800G switch designs, no. SFP-class optics generally do not match the host’s required lane aggregation and electrical interface for 800G. If an integrator proposes SFP-to-800G conversion, you must verify the entire conversion chain for BER, latency, and DOM compatibility.

What is the practical difference between QSFP28 and QSFP-DD for 800G comparison decisions?

QSFP28 typically carries four 25G electrical lanes (100G aggregate), so it provides neither the lane count nor the per-lane rate that native 800G ports require. QSFP-DD doubles the electrical lane count to eight, and its 800G variants run each lane at 100G (PAM4), aligning with native 800G port expectations. Even if both use 850 nm in some cases, the host-facing electrical profile differs.

How do I validate reach before ordering optics?

Use measured insertion loss from OTDR and patch panel records, then compare against the module’s published power budget and receiver sensitivity. For multimode, also account for patch cord quality and connector condition. Always include a margin for future re-patching and cleaning variability.

What DOM issues cause the most operational pain?

Telemetry mismatches that affect threshold defaults are common. You may also see differences in how temperature and bias current alarms are reported, which can trigger nuisance alerts or hide true degradation. Baseline after installation and align thresholds per module vendor and model.

Are third-party optics safe for 800G?

They can be safe if they are explicitly qualified for your switch platform and if you complete a compatibility validation cycle. The risk is not only link up/down behavior; it is also telemetry behavior, firmware quirks, and the need for consistent DOM integration. If you cannot validate quickly, OEM-qualified optics reduce schedule risk.

What should be my first troubleshooting step on an 800G link flap?

Inspect and clean MPO/MTP connectors first, then check received power and DOM alarms immediately after link training. If power is unstable and connectors show contamination, you will often resolve the issue without touching the optics. If power is stable but the host rejects training, re-check module compatibility against the platform qualification list.
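The troubleshooting order in this answer can be written down as a small decision helper. This Python sketch is illustrative: the three boolean inputs stand for observations a technician records, the action strings are hypothetical wording, and the branch for unstable power on clean connectors borrows the loss-budget fix from the common-mistakes section.

```python
def flap_triage(contaminated: bool, rx_power_stable: bool,
                host_rejects_training: bool) -> list[str]:
    """Ordered next actions for an 800G link flap (illustrative only)."""
    actions = ["inspect MPO/MTP end faces with a microscope"]
    if contaminated:
        # Often resolves the flap without touching the optics.
        actions.append("clean, re-inspect, and re-seat before touching the optics")
    actions.append("check Rx power and DOM alarms right after link training")
    if host_rejects_training and rx_power_stable:
        actions.append("re-check module against the platform qualification list")
    elif not rx_power_stable and not contaminated:
        actions.append("recompute the loss budget from measured patch loss")
    return actions


if __name__ == "__main__":
    for step in flap_triage(contaminated=False, rx_power_stable=True,
                            host_rejects_training=True):
        print(step)
```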

If you want the lowest-risk 800G rollout, treat the 800G comparison as a host-interface compatibility exercise first and a reach exercise second. Next, review your switch’s transceiver qualification list and run a small-scale pilot using the same fiber measurement and DOM monitoring process.

Author bio: I have deployed and troubleshot 5G fronthaul/backhaul optical links and 400G/800G data center interconnects using DWDM, SDH/packet transport, and PON-adjacent access architectures. I write from field experience with SFP/QSFP optics, DOM telemetry, and connector hygiene processes across multi-vendor environments. My work focuses on measured power budgets, BER verification, and operational integration of optics telemetry into NOC workflows for carrier-grade uptime.