AI infrastructure teams often discover that “more bandwidth” is not the same as “better performance.” In fabric networks, transceiver choice affects port density, power, optics compatibility, and operational risk across upgrades. This guide helps network and facilities engineers compare 50G and 100G transceivers using real deployment constraints, especially for leaf-spine and GPU clusters. You will end with an implementation checklist you can run during rollout planning.

Prerequisites: what you must measure before choosing 50G or 100G

Before selecting optics, confirm the actual traffic pattern and physical layer budget. For AI infrastructure, east-west traffic can be bursty and latency-sensitive, so oversubscription and optics reach limits matter. You also need the switch platform’s optics compatibility list and the vendor’s DOM interpretation details.

Gather these inputs (minimum set)

- Switch models and transceiver cages: record exact SKU (example: Cisco Nexus 93180YC-FX, Arista 7280R, Juniper QFX10008) and optics part numbers supported by the vendor.

- Fiber plant details: count of MMF and SMF runs, connector type (LC/SC), and measured link attenuation at the planned wavelength.

- Planned topology: leaf-spine, spine-super-spine, or ToR aggregation; include oversubscription ratio and expected utilization.

- Thermal constraints: ambient temperature envelope, airflow direction, and whether you will run optics in “high-power” mode (if supported).

- Operational monitoring requirements: DOM telemetry fields you need (Rx power, Tx bias, temperature, alarm thresholds).
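The oversubscription ratio in the topology input above can be derived from port counts and speeds. The following is a minimal sketch; the function name and the example port counts are illustrative assumptions, not values from any specific platform.

```python
def oversubscription_ratio(downlink_ports, downlink_gbps, uplink_ports, uplink_gbps):
    """Return downlink capacity divided by uplink capacity for one leaf."""
    uplink_capacity = uplink_ports * uplink_gbps
    if uplink_capacity <= 0:
        raise ValueError("uplink capacity must be positive")
    return (downlink_ports * downlink_gbps) / uplink_capacity


# Hypothetical leaf: 48 x 50G server-facing ports, 8 x 100G uplinks.
ratio = oversubscription_ratio(48, 50, 8, 100)
print(f"oversubscription {ratio:.1f}:1")
```

A ratio above 1 means the leaf can receive more traffic from servers than it can forward to the spine, which matters most for bursty east-west flows.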

Authority note: Ethernet physical layer behavior is standardized by IEEE 802.3 and optics reach assumptions are reflected in vendor datasheets; always validate against the specific switch vendor compatibility list. Source: IEEE 802.3

Step-by-step implementation plan: compare 50G vs 100G optics in your fabric

This section is written as a rollout procedure you can execute. The goal is to decide whether 50G or 100G transceivers reduce risk and total cost while meeting latency and reach targets for AI infrastructure.

Map each link class to a target reach and budget

Classify links by distance and expected margin. For example, in a single row ToR-to-spine design, you may have 50 m MMF patching plus 10 m backbone fiber, while some inter-rack links may approach the limit. Use measured attenuation and connector loss rather than assuming “spec sheet reach” is safe.

Expected outcome: a per-link budget table with required receive power margin for the planned wavelength and transceiver type.
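One way to build the per-link budget table is to subtract measured losses from the transmit-to-receive power budget. This is a sketch under stated assumptions: the `LinkClass` fields, the function name, and every dBm and dB value below are placeholders, so substitute figures from your module datasheet and your own fiber measurements.

```python
from dataclasses import dataclass


@dataclass
class LinkClass:
    name: str
    fiber_loss_db: float          # measured end-to-end fiber attenuation
    connector_pairs: int          # mated connector pairs in the path
    loss_per_connector_db: float  # measured or assumed loss per mated pair


def link_margin_db(link, tx_min_dbm, rx_sens_dbm, penalties_db=0.0):
    """Remaining margin after fiber, connector, and penalty losses."""
    budget = tx_min_dbm - rx_sens_dbm
    loss = link.fiber_loss_db + link.connector_pairs * link.loss_per_connector_db
    return budget - loss - penalties_db


# Placeholder numbers only; they are not taken from any datasheet.
tor_to_spine = LinkClass("ToR-to-spine", fiber_loss_db=0.2,
                         connector_pairs=2, loss_per_connector_db=0.5)
margin = link_margin_db(tor_to_spine, tx_min_dbm=-6.5, rx_sens_dbm=-10.0)
print(f"{tor_to_spine.name}: {margin:.1f} dB margin")
```

Links whose margin falls below your chosen safety threshold are the ones to re-measure or re-route before ordering optics.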

Choose the transceiver form factor aligned to port density

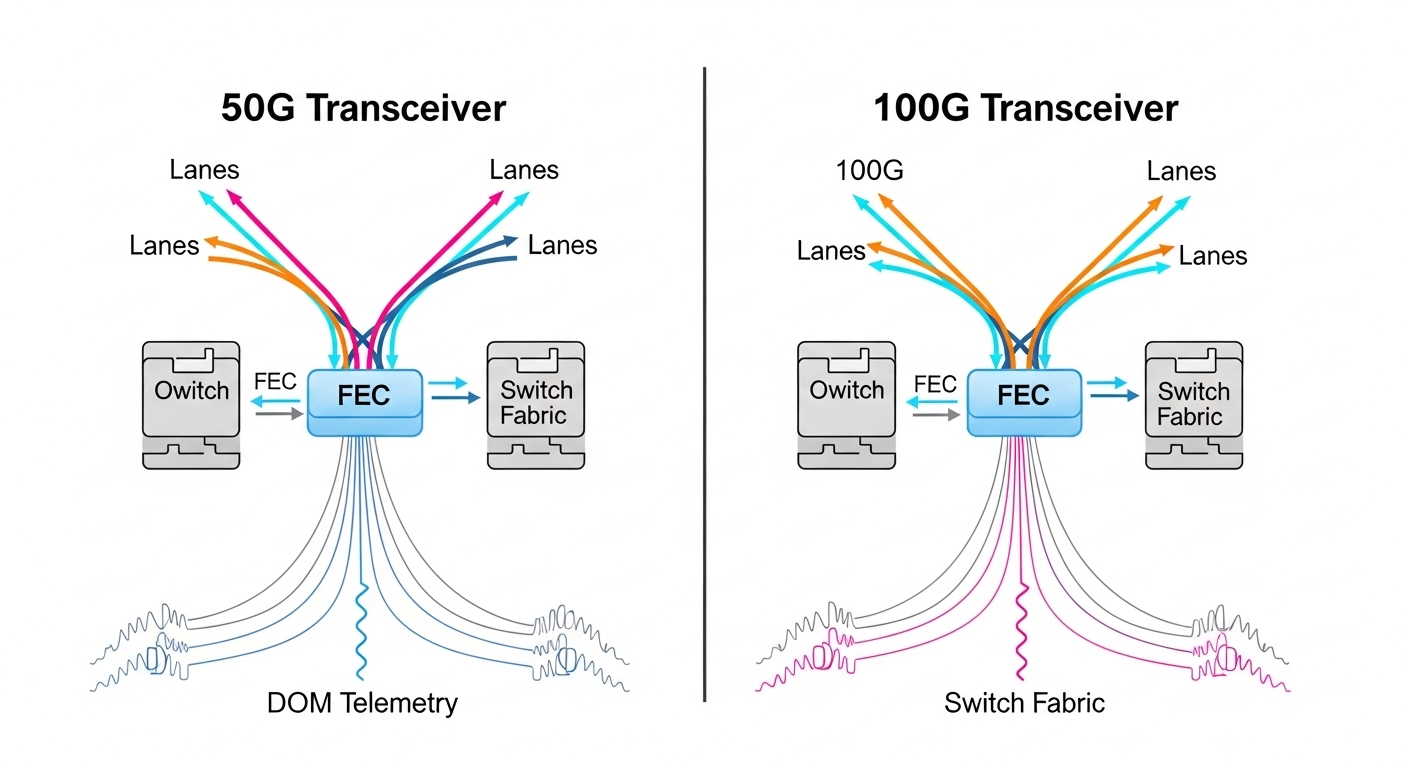

50G optics are commonly deployed in SFP56 cages using a single 50G PAM4 lane, while 100G optics are most often QSFP28 modules that run four 25G NRZ lanes (newer 100G variants use two 50G lanes or one 100G PAM4 lane). The form factor therefore drives port density: depending on the platform, 50G may let you fit more ports per chassis, while 100G may occupy a larger cage type and consume more power per port. Confirm whether your switch supports 50G and 100G in the same slot family (for example through breakout or multi-rate cages), and whether lane mapping is transparent to the operating system.

Expected outcome: a “slot-to-speed” compatibility matrix for your exact switch SKUs.
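The compatibility matrix can be kept as a small lookup that planned links are checked against. This is only a sketch: the switch names, port groups, and speed sets below are hypothetical and must be replaced with entries from your vendor's optics matrix.

```python
# (switch SKU, port group) -> speeds in Gb/s confirmed from the vendor matrix.
# All keys and values here are made-up examples.
SLOT_SPEED_MATRIX = {
    ("leaf-model-a", "ports 1-48"): {25, 50},
    ("leaf-model-a", "ports 49-54"): {40, 100},
    ("spine-model-b", "ports 1-32"): {100},
}


def unsupported_links(planned, matrix):
    """Return planned (sku, port_group, speed) entries the matrix does not list."""
    return [
        (sku, group, speed)
        for sku, group, speed in planned
        if speed not in matrix.get((sku, group), set())
    ]


planned = [
    ("leaf-model-a", "ports 1-48", 50),
    ("leaf-model-a", "ports 1-48", 100),   # speed not listed for this group
    ("spine-model-c", "ports 1-32", 100),  # SKU missing from the matrix
]
for entry in unsupported_links(planned, SLOT_SPEED_MATRIX):
    print("needs review:", entry)
```

Treating a missing SKU the same as an unsupported speed keeps unverified hardware from slipping through the plan.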

Validate link training, speed negotiation, and FEC mode

Even when both sides are “Ethernet,” optics can differ in FEC (Forward Error Correction) mode and link bring-up timing. Use the switch CLI to confirm negotiated speed and FEC settings on both ends after bring-up. 100G and 50G modes commonly default to different FEC profiles (for example, 100GBASE-SR4 uses RS(528,514) FEC, while 50G PAM4 interfaces use RS(544,514)); a mismatch between the two ends can keep the link down, cause link flaps, or leave error counters climbing.

Expected outcome: stable link state with correct negotiated speed and expected FEC, plus baseline counters (CRC errors and FEC corrected/uncorrectable codewords) for day-0 monitoring.
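The bring-up check can be expressed as a comparison of both link ends against the expected speed and FEC mode. The sketch below assumes you have already parsed each side's state from your switch CLI or API into a dictionary; the field names and FEC labels are hypothetical, not any vendor's output format.

```python
def bringup_issues(local, remote, expected_speed_gbps, expected_fec):
    """Return a list of human-readable problems; an empty list means pass."""
    issues = []
    for side, state in (("local", local), ("remote", remote)):
        if not state["link_up"]:
            issues.append(f"{side}: link is down")
        if state["speed_gbps"] != expected_speed_gbps:
            issues.append(
                f"{side}: speed {state['speed_gbps']}G, expected {expected_speed_gbps}G"
            )
        if state["fec"] != expected_fec:
            issues.append(f"{side}: FEC {state['fec']}, expected {expected_fec}")
    if local["fec"] != remote["fec"]:
        issues.append("FEC mismatch between link ends")
    return issues


# Hypothetical parsed state for one leaf-to-spine link.
leaf = {"link_up": True, "speed_gbps": 100, "fec": "rs528"}
spine = {"link_up": True, "speed_gbps": 100, "fec": "none"}
for problem in bringup_issues(leaf, spine, expected_speed_gbps=100, expected_fec="rs528"):
    print(problem)
```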

Run an acceptance test for DOM telemetry and threshold alarms

DOM (Digital Optical Monitoring) allows you to trend Rx power, Tx bias, and temperature. Configure thresholds so you get early warnings before hard failures. Many operators standardize on alerting when Rx power drops below a defined margin or when temperature approaches the vendor maximum.

Expected outcome: a telemetry dashboard and alert thresholds that match your maintenance window and escalation path.
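Threshold evaluation of this kind can be sketched as a per-field comparison that returns a warning state before the alarm state. The field names and all threshold values below are placeholders chosen for illustration; use the thresholds your module vendor publishes.

```python
def evaluate_dom(reading, thresholds):
    """Return "ok", "warning", or "alarm" for each monitored DOM field."""
    neg_inf, pos_inf = float("-inf"), float("inf")
    states = {}
    for field, limits in thresholds.items():
        value = reading[field]
        if (value <= limits.get("low_alarm", neg_inf)
                or value >= limits.get("high_alarm", pos_inf)):
            states[field] = "alarm"
        elif (value <= limits.get("low_warn", neg_inf)
                or value >= limits.get("high_warn", pos_inf)):
            states[field] = "warning"
        else:
            states[field] = "ok"
    return states


# Placeholder thresholds, not vendor values.
THRESHOLDS = {
    "rx_power_dbm": {"low_warn": -7.0, "low_alarm": -9.0},
    "temperature_c": {"high_warn": 65.0, "high_alarm": 70.0},
    "tx_bias_ma": {"high_warn": 10.0, "high_alarm": 12.0},
}
reading = {"rx_power_dbm": -7.5, "temperature_c": 48.0, "tx_bias_ma": 6.5}
print(evaluate_dom(reading, THRESHOLDS))
```

Keeping a warning band above the alarm band is what gives you time to schedule cleaning or replacement inside a maintenance window.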

Perform a controlled burn-in and error-rate verification

For AI infrastructure, you need more than “link up.” Run traffic tests that resemble your workload burst profile (for example, short all-to-all patterns plus steady background). Measure link errors over time; if you can, include a thermal soak test to capture airflow sensitivity.

Expected outcome: acceptance criteria met for error counters and stable Rx power drift within the vendor’s recommended operating range.
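Acceptance against error counters can be sketched as a before/after comparison across the soak window. The counter names and the corrected-codeword limit below are assumptions; map them to whatever counters your platform exposes, and note that this sketch does not handle counter resets or wraparound.

```python
def soak_result(before, after, duration_s, max_corrected_per_s):
    """Compare counter snapshots taken at the start and end of a soak test."""
    delta = {name: after[name] - before[name] for name in before}
    corrected_rate = delta["fec_corrected"] / duration_s
    passed = (
        delta["crc_errors"] == 0
        and delta["fec_uncorrectable"] == 0
        and corrected_rate <= max_corrected_per_s
    )
    return passed, delta, corrected_rate


# Hypothetical snapshots one hour apart.
start = {"crc_errors": 0, "fec_uncorrectable": 0, "fec_corrected": 1_000}
end = {"crc_errors": 0, "fec_uncorrectable": 0, "fec_corrected": 37_000}
passed, delta, rate = soak_result(start, end, duration_s=3600, max_corrected_per_s=50)
print("pass" if passed else "fail", delta, f"{rate:.1f} corrected codewords/s")
```

Some corrected codewords are normal on FEC-protected links, so the sketch fails only on uncorrectable codewords, CRC errors, or a corrected rate above your chosen limit.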

Calculate TCO and plan the spares strategy

50G may allow more ports per chassis and potentially reduce oversubscription, but 100G can reduce the number of optics you must manage for a given aggregate bandwidth. Include optics cost, installation labor, expected failure rate based on your historical RMA data, and the cost of downtime. In many deployments, the “spares footprint” becomes a hidden cost driver during AI infrastructure scaling.

Expected outcome: a 3-year TCO model comparing “more 50G optics” versus “fewer 100G optics,” including spares and replacement logistics.
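A simple version of the 3-year model can be sketched as purchase cost plus installation plus expected failure cost. Every input below (prices, failure rate, spares fraction, downtime cost) is a placeholder; replace them with your quotes and your historical RMA data. The sketch also assumes each failure costs one replacement module, one installation, and one downtime event.

```python
import math


def three_year_tco(module_count, unit_cost, install_cost, annual_failure_rate,
                   spares_fraction, downtime_cost_per_failure, years=3):
    """Rough optics TCO: purchase + spares + installation + expected failures."""
    spares = math.ceil(module_count * spares_fraction)
    purchase = (module_count + spares) * unit_cost
    installation = module_count * install_cost
    expected_failures = module_count * annual_failure_rate * years
    failure_cost = expected_failures * (
        unit_cost + install_cost + downtime_cost_per_failure
    )
    return purchase + installation + failure_cost


# Placeholder inputs for the same aggregate bandwidth.
tco_50g = three_year_tco(512, unit_cost=60, install_cost=10,
                         annual_failure_rate=0.01, spares_fraction=0.05,
                         downtime_cost_per_failure=200)
tco_100g = three_year_tco(256, unit_cost=100, install_cost=10,
                          annual_failure_rate=0.01, spares_fraction=0.05,
                          downtime_cost_per_failure=200)
print(f"50G: {tco_50g:,.0f} USD   100G: {tco_100g:,.0f} USD")
```

The result is sensitive to the failure rate and downtime cost, so it is worth rerunning the comparison with pessimistic values before committing to either option.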

50G vs 100G transceivers: practical comparison for AI infrastructure

In AI infrastructure networks, the decision usually comes down to port density, power, reach fit, and operational compatibility. While both 50G and 100G can serve the same physical roles, they interact differently with your switch’s lane architecture and your fiber budget. The comparison below focuses on the parameters engineers actually use during procurement and acceptance.

| Spec category | Typical 50G transceiver | Typical 100G transceiver |
|---|---|---|
| Data rate per port | 50G (exact encoding depends on module) | 100G (twice the per-port rate of 50G) |
| Common short-reach wavelength families | Often 850 nm MMF for short reach | Often 850 nm MMF for short reach |
| Connector type | Usually duplex LC | MPO-12 for parallel SR4 modules; duplex LC for BiDi, SWDM4, and most SMF modules |
| Reach (example class) | Short-reach MMF; for example, 50GBASE-SR is rated up to 70 m on OM3 and 100 m on OM4 | Short-reach MMF; for example, 100GBASE-SR4 is rated up to 70 m on OM3 and 100 m on OM4, with vendor-specific variants differing |
| Power and thermal impact | May be lower per port but more ports for same aggregate bandwidth | May be higher per port but fewer optics for same aggregate bandwidth |
| DOM support | Typically supports temperature, Tx bias, Rx power alarms | Typically supports temperature, Tx bias, Rx power alarms |
| Temperature range | Vendor-specific; often the commercial range (0 to 70 °C case temperature) | Vendor-specific; verify exact spec for your modules |

Vendor-specific example reality check: Many operators standardize on specific OEM or third-party part numbers that are listed as compatible with their switch. Examples you may see in the field for short-reach 850 nm optics include Cisco-branded and Finisar/FS-branded modules (always validate exact wavelength, reach rating, and DOM support against your platform). Use the switch vendor’s optics matrix as the source of truth for AI infrastructure deployments.

Authority note: DOM and transceiver monitoring are defined by module management specifications (for example SFF-8472 for SFP-class modules and SFF-8636 for QSFP28) and by vendor implementation guidance; treat interoperability as a testable requirement, not a guarantee. Source: ITU optics context

Pro Tip: In dense AI infrastructure racks, the “it links up” phase can mask marginal optics. Track Rx power trend over 24 to 72 hours after initial installation; if you see a steady downward drift toward vendor alarm thresholds, you likely have a connector cleanliness issue or a fiber bend/strain problem rather than a transceiver defect.
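The drift check in this tip can be sketched as a least-squares slope over the Rx power samples, followed by a rough projection to the alarm threshold. The sample values and the -8.0 dBm threshold are made up for illustration, and a linear projection is only a coarse assumption about how degradation progresses.

```python
def rx_drift_db_per_hour(samples):
    """Least-squares slope of (hours_since_install, rx_dbm) samples."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_p = sum(p for _, p in samples) / n
    spread = sum((t - mean_t) ** 2 for t, _ in samples)
    if spread == 0:
        raise ValueError("samples must span more than one timestamp")
    return sum((t - mean_t) * (p - mean_p) for t, p in samples) / spread


def hours_until_threshold(latest_dbm, threshold_dbm, slope_db_per_hour):
    """Linear projection; returns None when power is not trending down."""
    if slope_db_per_hour >= 0:
        return None
    return (threshold_dbm - latest_dbm) / slope_db_per_hour


# Hypothetical readings over the first 72 hours.
samples = [(0, -3.0), (24, -3.5), (48, -4.0), (72, -4.5)]
slope = rx_drift_db_per_hour(samples)
eta = hours_until_threshold(samples[-1][1], threshold_dbm=-8.0, slope_db_per_hour=slope)
print(f"drift {slope:.4f} dB/h, roughly {eta:.0f} h to threshold")
```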

Selection criteria checklist engineers use during procurement

Work through this checklist in order to reduce rework and avoid compatibility surprises. It is tuned for AI infrastructure where scaling cycles can be fast and downtime costs are high.

- Distance and fiber grade: confirm MMF type (OM3/OM4/OM5) or SMF type (for example OS2), measured attenuation, and connector losses.

- Switch compatibility: only buy optics explicitly supported by your switch model and software version; verify both 50G and 100G modes if you plan to mix.

- DOM and monitoring integration: ensure the transceiver exposes required telemetry and alarms; confirm your monitoring system can parse vendor DOM fields.

- Operating temperature and airflow: validate module temperature range against your inlet air measurements and worst-case seasonal conditions.

- Power and cooling budget: estimate total power for all optics (including spares if they are powered) and check whether your PSU and airflow plan can accommodate it.

- Vendor lock-in risk: OEM optics may simplify acceptance, but third-party options can reduce capex; mitigate by defining acceptance tests and a known-good spare pool.

- Upgrade path: if your roadmap moves from 50G to 100G (or vice versa), confirm whether cage types and optics profiles remain usable.

Common mistakes and troubleshooting for 50G vs 100G links

Below are three frequent failure modes seen during AI infrastructure rollouts, with root causes and fixes. These are practical patterns rather than theoretical possibilities.

Failure mode 1: Link flaps during high traffic but is stable at idle

Root cause: a marginal optical power budget that is enough to bring the link up but leaves little headroom, so errors accumulate once traffic load and module temperature rise. Connector contamination or slightly high fiber attenuation erodes the remaining margin further under thermal drift. Solution: clean connectors, verify fiber attenuation with an OTDR/OLTS workflow, and replace any suspect patch cords. Confirm negotiated FEC and check error counters immediately after traffic ramps.

Failure mode 2: “Supported” optics still fail in only one direction or only on specific ports

Root cause: port-level lane mapping differences or transceiver profile mismatches that are not obvious from generic compatibility lists. Some platforms treat certain ports as requiring specific optics profiles. Solution: test the same module in a known-good port, then compare port configuration and software version. If behavior is port-specific, follow the vendor’s optics and port mapping guidance and standardize on a single module profile per port group.

Failure mode 3: DOM alarms but link remains up for days, then hard failure occurs

Root cause: alarm thresholds are not aligned with your monitoring system, so you miss early degradation. Alternatively, the monitoring integration may be applying the wrong scaling to a DOM field. Solution: set alarms using vendor-recommended thresholds, validate DOM parsing with a known module, and implement an escalation workflow for high module temperature, rising Tx bias, or downward Rx power drift.
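To illustrate the scaling problem, the sketch below decodes raw diagnostic words using the SFF-8472 conventions for SFP-class modules: temperature as a signed 16-bit value in units of 1/256 °C, optical power as an unsigned value in units of 0.1 µW, and Tx bias in units of 2 µA. The function names are illustrative, and other module families use their own management specifications, so confirm the scaling for your module type before reusing this.

```python
import math
import struct


def decode_temperature_c(raw: bytes) -> float:
    """Signed 16-bit big-endian word, 1/256 degree C per LSB."""
    (value,) = struct.unpack(">h", raw)
    return value / 256.0


def decode_power_dbm(raw: bytes) -> float:
    """Unsigned 16-bit big-endian word, 0.1 microwatt per LSB, returned in dBm."""
    (value,) = struct.unpack(">H", raw)
    milliwatts = value / 10000.0
    return 10 * math.log10(milliwatts) if milliwatts > 0 else float("-inf")


def decode_bias_ma(raw: bytes) -> float:
    """Unsigned 16-bit big-endian word, 2 microamps per LSB, returned in mA."""
    (value,) = struct.unpack(">H", raw)
    return value * 2 / 1000.0


# Example raw words (made up): 0x2800, 0x2710, 0x0BB8.
print(decode_temperature_c(b"\x28\x00"))  # 40.0
print(decode_power_dbm(b"\x27\x10"))      # 0.0
print(decode_bias_ma(b"\x0b\xb8"))        # 6.0
```

Decoding a known-good module by hand and comparing the result with the switch CLI reading is a quick way to catch a monitoring system that reports milliwatts as dBm or applies the wrong divisor.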

Cost and ROI note: how to estimate the real difference

Pricing varies by OEM, reach class, and whether you buy directly from the switch vendor or from a third party. As a realistic planning range, short-reach 850 nm optics are often priced in the tens to low hundreds of USD per module, with OEM typically higher than third-party. For ROI, engineers should model not just module cost, but also installation labor, expected RMA rates, and the cost of a maintenance window when AI infrastructure capacity is constrained.

Practical TCO heuristic: If your design requires many more ports for 50G to match the same aggregate bandwidth, you may pay more in optics count and management overhead even if per-port module cost is lower. Conversely, 100G can reduce optics count but may increase per-module cost and can carry higher power per port, affecting cooling margins.
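The heuristic can be made concrete by counting modules for a fixed aggregate bandwidth and multiplying by per-module cost and power. This is a sketch with placeholder prices and wattages, assuming two modules per link (one at each end).

```python
import math


def modules_for_bandwidth(aggregate_gbps, port_gbps, modules_per_link=2):
    """Modules needed to carry aggregate_gbps with links of port_gbps each."""
    links = math.ceil(aggregate_gbps / port_gbps)
    return links * modules_per_link


def compare_options(aggregate_gbps, options):
    """Return module count, optics cost, and optics power per option."""
    rows = {}
    for name, opt in options.items():
        count = modules_for_bandwidth(aggregate_gbps, opt["port_gbps"])
        rows[name] = {
            "modules": count,
            "optics_cost": count * opt["unit_cost"],
            "optics_watts": count * opt["watts"],
        }
    return rows


# Placeholder cost and power figures, not vendor data.
OPTIONS = {
    "50G": {"port_gbps": 50, "unit_cost": 60, "watts": 1.5},
    "100G": {"port_gbps": 100, "unit_cost": 100, "watts": 2.5},
}
for name, row in compare_options(12_800, OPTIONS).items():
    print(name, row)
```

With these placeholder inputs the 50G option needs twice as many modules, so a lower per-module price does not automatically translate into lower total optics cost or power.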

FAQ: buying decisions for AI infrastructure transceivers

Is 50G better than 100G for AI infrastructure?

Not universally. 50G can improve port density and may fit specific switch lane architectures, while 100G reduces optics count for the same bandwidth. Choose based on your measured fiber budget, switch compatibility, and monitoring maturity rather than on raw line rate alone.

Can I mix 50G and 100G optics in the same leaf-spine fabric?

Sometimes yes, but only if your switch supports both speeds within the same port groups and your software version handles lane mapping consistently. You must validate with acceptance tests and confirm DOM telemetry and alarm thresholds for each optics type.

What fiber reach should I plan for AI infrastructure links?

Plan using measured attenuation and conservative margins. Vendor reach ratings assume specific test conditions; real patch cords, connector cleanliness, and bend radius can reduce link margin. Use OLTS/OTDR to verify the plant before final procurement.

Do I need OEM optics for 100G modules?

OEM optics can reduce compatibility risk, but third-party optics can work reliably if they are on the switch vendor’s supported list and pass your acceptance tests. The safest approach is to standardize part numbers and validate DOM alarms and error counters during a controlled pilot.

What telemetry should I alert on for early failure prevention?

Track Rx power, Tx bias, and module temperature with thresholds aligned to vendor guidance. Also monitor link error counters as rates (for example CRC errors per second and FEC uncorrectable codewords per second) so you catch marginal optics under burst traffic.

How many spares should I keep for AI infrastructure optics?

It depends on your criticality and lead times, but a common operational pattern is to keep spares per switch line card type and per optics family. If you expect rapid scaling, include spares for the exact module part numbers you plan to deploy next quarter.

In AI infrastructure, choosing between 50G and 100G transceivers is a system decision that blends fiber physics, switch compatibility, power/thermal limits, and monitoring reliability. Run the step-by-step acceptance workflow, build a fiber budget from measurements, and then compare TCO with spares and downtime costs. Next, review AI fabric optics planning to align optics selection with your leaf-spine scaling model and operational procedures.

Expert bio: I have deployed and troubleshot short-reach Ethernet optics in production data centers, including DOM telemetry integration and link-budget acceptance testing under real traffic. I write these guides from field constraints, referencing IEEE 802.3 behavior and vendor datasheets to keep decisions safe and verifiable.