When you are planning an 800G leaf-spine upgrade, the transceiver cabling decision can quietly dominate schedule, cooling load, and reliability. This article is a field-oriented deployment comparison of AOC (Active Optical Cable) versus DAC (Direct Attach Copper) for 800G links, aimed at network engineers, facility reliability teams, and QA owners writing acceptance criteria. You will get practical specs to verify, a checklist for switch compatibility, and troubleshooting patterns seen in the first 90 days of rollout.

Why 800G link choice turns into a reliability and QA issue

At 800G, the physical layer margin is unforgiving: link training, equalization, and thermal drift all matter, and a “works on the bench” cable can fail under real rack airflow. In data centers, the cabling choice also affects installation speed, serviceability, and the probability of connector damage during moves. For QA, you need acceptance tests that cover optics power, link stability, and environmental stress, not just link-up status. For reliability engineering, you also need a maintenance model: how often you will swap assemblies, and whether failures cluster by batch or by handling events.

IEEE 802.3 defines Ethernet physical-layer behavior for each speed and media class; 800 Gb/s operation was added by the IEEE 802.3df-2024 amendment, and vendor implementations typically align with its optical or copper electrical specifications depending on the transceiver type. For background on transceiver requirements and test practices, start with the vendor datasheets and the test methodologies they cite. For field test and compliance language, reliability teams often reference vendor documentation together with general optical safety and cleaning standards.

Core spec comparison: AOC vs DAC for 800G

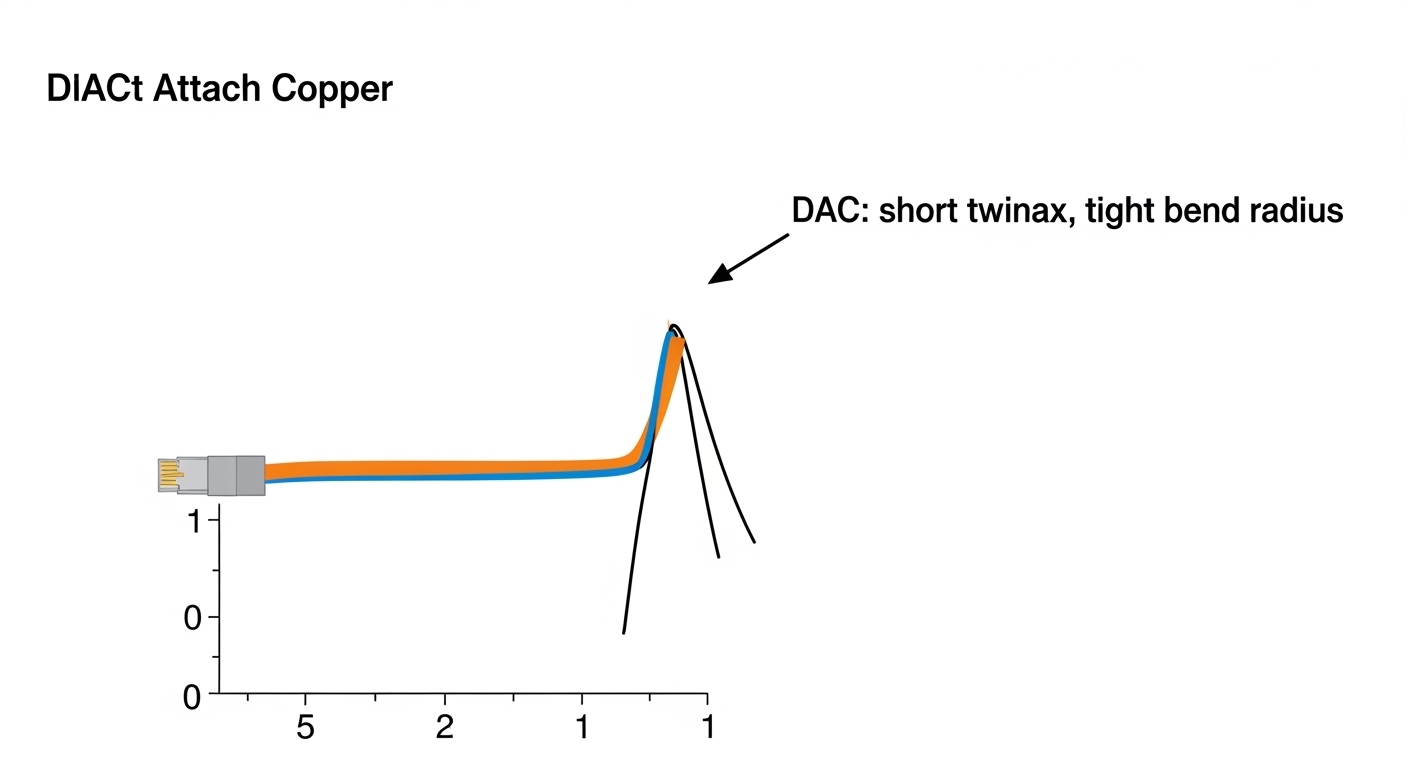

The fastest way to prevent deployment churn is to compare the constraints that actually differ between AOC and DAC: reach, power/heat, connectors, and environmental limits. For 800G, DAC is typically limited to short reaches (often up to a few meters depending on vendor and per-lane signaling rate), while AOC uses active optics to hold signal integrity over longer runs. Both can carry the same logical Ethernet framing, but the form factors, thermal budgets, and optical or copper compliance requirements differ.

Quick reference table (verify against your exact switch and part numbers)

| Spec item | 800G AOC (Active Optical Cable) | 800G DAC (Direct Attach Copper Twinax) |
|---|---|---|
| Typical reach | Commonly ~10 m class (varies by vendor and optics class) | Commonly ~1 to 3 m class (varies by vendor) |
| Connector / form factor | Optical pluggable interface (vendor-specific; often QSFP-DD or OSFP-class depending on platform) | Electrical pluggable interface (twinax; vendor-specific high-density connector) |
| Power and heat | Includes active optical components; heat mainly at module ends; airflow sensitive | No optical power generation; typically lower per-assembly heat than AOC but can still be significant at high port density |
| Environmental operating range | Typically supports data center ambient specs, but verify module temperature limits and derating curves | Verify maximum case temperature and cable bend/handling limits; copper performance degrades with stress |
| Signal integrity sensitivity | Less sensitive to copper loss; sensitive to optical cleanliness and connector handling | Highly sensitive to insertion loss, return loss, and physical placement/strain |
| Serviceability | Swap modules; cleaning may be required if optical connectors are exposed | Swap cable assemblies; inspect for bent pins, cracked latches, and connector wear |
| Best-fit deployment | Middle-of-rack to end-of-row runs; constrained routing where optics can tolerate longer paths | Top-of-rack to adjacent spine or short within-rack patching where cable management is simple |

Because platforms vary, you must validate using the specific switch vendor compatibility matrix. For example, an 800G platform might use a specific QSFP-DD or OSFP-like form factor for both AOC and DAC, but the underlying electrical lanes and firmware expectations can still differ. Use your exact transceiver part numbers and confirm DOM support, speed bin, and vendor interoperability notes from the datasheets.
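
As a rough illustration of the compatibility check described above, the sketch below looks up planned part numbers against a locally maintained copy of a vendor matrix. The switch model, firmware versions, and part numbers are invented placeholders, not real products, and the matrix layout is an assumption about how a team might record the vendor's published list.

```python
# Hypothetical, hand-maintained copy of a vendor compatibility matrix.
# Keys are (switch model, firmware version); values are validated part numbers.
# Every name below is a placeholder for illustration only.
COMPAT_MATRIX = {
    ("EXAMPLE-SW-800", "1.2.3"): {"EX-DAC-800G-2M", "EX-AOC-800G-10M"},
    ("EXAMPLE-SW-800", "1.3.0"): {"EX-AOC-800G-10M"},
}


def is_validated(switch_model, firmware, part_number, matrix=COMPAT_MATRIX):
    """True if the part is listed for this exact switch model and firmware."""
    return part_number in matrix.get((switch_model, firmware), set())


def unvalidated_parts(switch_model, firmware, planned_parts, matrix=COMPAT_MATRIX):
    """Return the planned part numbers that are NOT listed, sorted for stable output."""
    return sorted(
        part for part in set(planned_parts)
        if not is_validated(switch_model, firmware, part, matrix)
    )
```

Keying the lookup on firmware version as well as switch model means a planned firmware upgrade can be checked against the same purchase list before the change window.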

Pro Tip: In early rollouts, the most common “mystery” failures are not optics vs copper by itself; they are mismatched lane mapping expectations plus marginal connector seating. Even when the link comes up, you can see intermittent FEC or CRC bursts that only appear under sustained traffic. Add a 4 to 24 hour traffic soak with error counters before declaring the run complete.
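
One way to turn the soak recommendation into a pass/fail gate is to poll cumulative error counters and flag any interval where they grow. The sketch below assumes you already collect samples somehow (CLI scrape, SNMP, streaming telemetry); the `CounterSample` fields and the zero-new-errors default are illustrative assumptions, not vendor-defined thresholds.

```python
from dataclasses import dataclass


@dataclass
class CounterSample:
    """One poll of an interface's cumulative counters (hypothetical field names)."""
    t_seconds: float        # seconds since the soak started
    crc_errors: int         # cumulative CRC/FCS errors
    fec_uncorrectable: int  # cumulative uncorrectable FEC codewords


def find_error_bursts(samples, max_new_errors_per_interval=0):
    """Return (start, end, crc_delta, fec_delta) for each polling interval
    in which either counter grew by more than the allowed amount."""
    bursts = []
    for prev, cur in zip(samples, samples[1:]):
        crc_delta = cur.crc_errors - prev.crc_errors
        fec_delta = cur.fec_uncorrectable - prev.fec_uncorrectable
        if (crc_delta > max_new_errors_per_interval
                or fec_delta > max_new_errors_per_interval):
            bursts.append((prev.t_seconds, cur.t_seconds, crc_delta, fec_delta))
    return bursts


def soak_passes(samples, min_duration_s=4 * 3600, max_new_errors_per_interval=0):
    """Pass only if the soak ran long enough and no interval showed a burst."""
    if len(samples) < 2:
        return False
    duration = samples[-1].t_seconds - samples[0].t_seconds
    return (duration >= min_duration_s
            and not find_error_bursts(samples, max_new_errors_per_interval))
```

Working on per-interval deltas rather than end-of-test totals keeps the timestamps of each burst, which helps correlate errors with airflow changes or nearby cable handling.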

Deployment comparison: where each wins in 800G racks

This deployment comparison is best framed by your physical topology and your operational constraints. AOC tends to win when the run exceeds the reliable copper budget or when you need routing flexibility without tight bend radii. DAC tends to win when you want the simplest bill of materials, shortest install time, and minimal connector cleaning steps—provided the run length is within the vendor’s validated range.

Scenario A: leaf-spine upgrade with short patching

In a leaf-spine data center where ToR switches with 48 ports of 10G/25G access are being upgraded to 800G spine uplinks, you may have ToR-to-spine runs of 1.5 m to 2.2 m measured along the front-to-front cable path. If rack density and layout keep the cable path short, a 2 m 800G DAC (twinax) often reduces install complexity and avoids optical cleanliness risk. In a typical change window, teams can swap and label DAC assemblies faster, which reduces exposure time to accidental latch damage during maintenance. The tradeoff is that if airflow or cable handling causes additional loss or strain, copper equalization can fail to hold link stability.

Scenario B: same row but longer reach with constrained cable trays

In another deployment in the same facility, a different spine pair may require 7 m to 12 m patching due to aisle layouts and cable tray routing rules. In that case, 800G AOC is typically the practical choice because it uses active optics and supports longer reach than copper twinax. Engineers often report fewer intermittent link retrains once AOC is used within its specified bend radius and connector handling procedure. The operational tradeoff is that AOC introduces optical components that require careful handling and may require cleaning if connectors are exposed during swaps.
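
The two scenarios reduce to a simple reach decision once the measured route and slack are known. The sketch below is a minimal version of that rule; the slack default and the maximum-reach arguments are placeholders that you would replace with the validated figures from your vendor's datasheets.

```python
def pick_media(route_length_m, dac_max_m, aoc_max_m, slack_m=0.5):
    """Choose a cable type from the measured route length plus slack.

    dac_max_m and aoc_max_m are the vendor-validated maximum lengths for
    your exact parts (assumed inputs; nothing here is a standard value).
    """
    needed_m = route_length_m + slack_m
    if needed_m <= dac_max_m:
        return "DAC"
    if needed_m <= aoc_max_m:
        return "AOC"
    # Beyond both validated reaches: neither assembly type fits this run.
    return "NEITHER"
```

With assumed limits of 3 m for DAC and 10 m for AOC, `pick_media(1.8, 3.0, 10.0)` lands on DAC and `pick_media(7.0, 3.0, 10.0)` on AOC, while a 12 m route falls outside both and needs a different solution or a longer validated AOC.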

Selection criteria checklist for 800G AOC vs DAC

Use this checklist during planning so your compatibility and acceptance criteria are locked before purchase orders. It is designed to reduce rework and to support ISO 9001 style traceability: part number, serial/batch, test results, and installation records.

- Distance and reach budget: confirm the maximum validated reach for your exact 800G speed implementation and transceiver class; include patch panel and slack allowances.

- Switch compatibility matrix: verify the transceiver part number is listed for your exact switch model and firmware version; confirm lane mapping and supported breakout mode.

- DOM support and telemetry: check whether the platform reads DOM fields for AOC and DAC (optical power, temperature, bias for AOC; electrical diagnostics for DAC where available).

- Operating temperature and airflow: confirm module temperature limits and any derating guidance; plan cable placement relative to hot aisle/cold aisle airflow.

- Connector and handling constraints: for AOC, define cleaning steps and inspection procedure; for DAC, define allowable bend radius and strain relief.

- Vendor lock-in risk: evaluate whether third-party transceivers are validated; quantify the risk of firmware changes that break interoperability.

- Failure mode history: request MTBF or reliability data where available, but also plan your own acceptance testing and burn-in to detect batch-specific defects.

- Maintenance model: define how quickly you can source spares and whether you can hot-swap without disrupting routing and labeling conventions.
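
The traceability and failure-history items above pay off when failures can be grouped by batch. The sketch below assumes your installation records give a serial number and batch identifier per assembly; the record layout is an assumption for illustration.

```python
from collections import Counter


def failure_rate_by_batch(installed, failed_serials):
    """Compute the fraction of failed assemblies per batch.

    installed: iterable of (serial, batch) tuples from installation records.
    failed_serials: set of serial numbers that failed in acceptance or service.
    """
    totals = Counter()
    failures = Counter()
    for serial, batch in installed:
        totals[batch] += 1
        if serial in failed_serials:
            failures[batch] += 1
    # Counter returns 0 for batches with no recorded failures.
    return {batch: failures[batch] / totals[batch] for batch in totals}
```

A batch whose rate stands well above the others suggests a manufacturing defect, while failures spread evenly across batches point toward handling or environment.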

Common pitfalls and troubleshooting patterns

Below are concrete failure modes that frequently show up in 800G deployments. The goal is to map symptoms to root causes quickly and to prevent repeat incidents by strengthening installation and QA gates.

Pitfall 1: Link up but unstable under load (CRC/FEC bursts)

Root cause: marginal seating or slight connector misalignment; for copper, additional loss from cable strain; for AOC, possible contamination at optical interfaces. Solution: reseat the transceivers, inspect latch engagement, and run a sustained traffic test while monitoring interface error counters. If AOC is involved, follow the vendor cleaning procedure and re-test after cleaning.

Pitfall 2: Works in the lab, fails in the rack (thermal or airflow mismatch)

Root cause: module case temperature exceeds the validated operating range due to blocked airflow or recirculation near high-density ports. Solution: measure ambient and module-adjacent temperatures during traffic; adjust cable routing and airflow baffles; confirm that the installation meets the vendor’s supported airflow direction and minimum clearance.
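
A simple way to act on those measurements is to compare each module's reported temperature with the datasheet limit and flag ports with little headroom. In this sketch the port names, the 5 °C default margin, and the limit value are assumptions; use the maximum case temperature and derating guidance from your own datasheet.

```python
def ports_below_margin(readings_c, max_case_temp_c, min_margin_c=5.0):
    """Return the ports whose thermal headroom is below the required margin.

    readings_c: dict mapping port name to module temperature in deg C,
                taken while the link carries traffic.
    max_case_temp_c: datasheet maximum case temperature (assumed input).
    min_margin_c: headroom you want to keep; 5.0 is an arbitrary default.
    """
    return sorted(
        port for port, temp_c in readings_c.items()
        if max_case_temp_c - temp_c < min_margin_c
    )
```

Running the same check on the bench and again in the rack makes the "works in the lab" gap visible as a change in headroom rather than as a later link failure.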

Pitfall 3: Intermittent link retrains during cable moves

Root cause: DAC sensitivity to physical stress: bending beyond the minimum radius, twisting the twinax, or pulling against the connector latch. Solution: implement strain relief and cable management rules; train field techs to avoid disturbing cables on live links and to watch link state whenever a move is unavoidable; verify bend radius compliance on every assembly.

Pitfall 4: DOM mismatch or missing telemetry leads to false “healthy” status

Root cause: platform does not fully support DOM fields for a given third-party module, or firmware expects different diagnostic pages. Solution: confirm DOM field availability for your switch model; update your acceptance tests to include physical-layer metrics (errors, link stability) rather than relying solely on “module detected.”
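
An acceptance gate along these lines can be sketched as a function that refuses to pass a link on "module detected" alone. The field names and inputs are hypothetical; how you obtain them depends on your platform.

```python
def evaluate_link(module_detected, dom_fields, required_dom_fields,
                  new_errors, link_flaps):
    """Return (passed, reasons) for one link after a traffic soak.

    dom_fields: dict of telemetry fields the platform actually reported.
    required_dom_fields: names your acceptance plan expects for this
        module type (may be empty for a DAC that exposes no diagnostics).
    new_errors / link_flaps: counts observed during the soak.
    """
    reasons = []
    if not module_detected:
        reasons.append("module not detected")
    missing = sorted(set(required_dom_fields) - set(dom_fields))
    if missing:
        reasons.append("missing telemetry: " + ", ".join(missing))
    if new_errors > 0:
        reasons.append(f"{new_errors} new errors during soak")
    if link_flaps > 0:
        reasons.append(f"{link_flaps} link flaps during soak")
    return (len(reasons) == 0, reasons)
```

Returning the list of reasons alongside the verdict gives the installation record something concrete to store for each failed link.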

Cost and ROI note for 800G deployments

Pricing swings by vendor, certification, and lead time, but you can plan realistic ranges for budgeting. In many markets, third-party compatible 800G DAC assemblies are often cheaper than OEM, while AOC assemblies may have a smaller price gap depending on optics sourcing and validation. A typical cost planning approach is to compare not only the per-cable price but also the installed labor time and the expected swap rate.

TCO levers: installation labor (minutes per link), failure handling time, downtime risk, and spares inventory. If the run length calls for AOC, forcing DAC beyond its validated reach can increase early-life failures and extend troubleshooting windows, which erodes ROI. Conversely, using AOC where DAC would fit can increase cost and introduce optical cleaning steps, but it may improve stability if your routing is messy.
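
To compare options on more than unit price, a per-link estimate can combine the purchase price, install labor, and the expected cost of swaps. The formula below is one simple way to do that, not an established costing model; all inputs are your own estimates, and downtime cost is deliberately left out.

```python
def link_tco(unit_price, install_minutes, labor_rate_per_hour,
             expected_swaps, swap_minutes):
    """Estimate per-link cost over the planning period.

    expected_swaps: average number of replacements you expect per link
        over the period (for example 0.1 for one swap per ten links).
    Each swap is assumed to cost one replacement assembly plus labor.
    """
    install_labor = install_minutes / 60 * labor_rate_per_hour
    swap_cost = expected_swaps * (
        unit_price + swap_minutes / 60 * labor_rate_per_hour
    )
    return unit_price + install_labor + swap_cost
```

Feeding the function the same labor rate but different swap expectations for DAC and AOC shows how quickly a cheaper assembly loses its advantage when it is pushed past its validated reach.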

For reliability planning, request any available reliability evidence (vendor test reports, burn-in results, and warranty terms). Even if a vendor provides MTBF-like claims, your acceptance testing should include a short burn-in at elevated ambient and a traffic soak with error monitoring, because field conditions often dominate.

FAQ

Which is better for 800G reach: AOC or DAC?

In most deployments, AOC supports longer reach than DAC within vendor-validated limits, making it the safer choice for runs beyond a few meters. If your run is short and within the DAC spec, DAC can be efficient and simpler to manage.

Do AOC and DAC require different switch firmware or settings?

They often require the platform to recognize the transceiver type and supported speed/feature set. Always verify the transceiver part number on the vendor compatibility matrix and confirm that your current firmware supports DOM and diagnostic telemetry for that module.

What should we test during acceptance?

Beyond link-up, test sustained traffic for at least 4 to 24 hours, monitor CRC/FEC/error counters, and verify telemetry (where available). Also include a re-seat and move test for a sample link to catch connector sensitivity issues.

Are third-party AOC or DAC modules safe to deploy?

They can be safe when the exact part numbers are validated for your switch model and firmware, but interoperability risk is real. Mitigate by requiring documented compatibility, running a pilot, and tracking serial numbers to detect batch-specific defects.

How do we handle optical cleaning for AOC?

Use the vendor-recommended cleaning method and train technicians to inspect connectors before insertion. Define a “clean-before-test” rule for any AOC that was exposed during handling, then re-run traffic soak to confirm error counters return to baseline.

What is the most common reason 800G links fail after installation?

The most common causes are usually physical: connector seating, cable strain or bend radius violations, and airflow/thermal mismatch. A good QA gate catches these with telemetry plus sustained traffic rather than relying on immediate link status.

To finalize your cabling plan, start with the distance and compatibility checklist, then validate with a short pilot that includes traffic soak and error monitoring. Next, see optical_vs_copper_transceiver_selection to connect your decision to broader transceiver selection and acceptance criteria.

Author bio: Field reliability engineer focused on Ethernet physical-layer acceptance, environmental stress testing, and failure-mode analysis in data center rollouts. Writes QA test plans that align cabling decisions with maintainability, traceability, and measurable MTBF-driven risk reduction.