Hybrid cloud connectivity often breaks at the physical layer: the fiber reach, transceiver optics, and switch compatibility do not match the plan made by the application team. This article helps network engineers and data center operators compare cost-effective transceiver options for hybrid cloud strategies, so you can avoid expensive rollbacks. You will get practical selection criteria, a troubleshooting checklist, and a decision matrix tied to real deployment constraints.

Hybrid cloud connectivity: what actually changes at the transceiver layer?

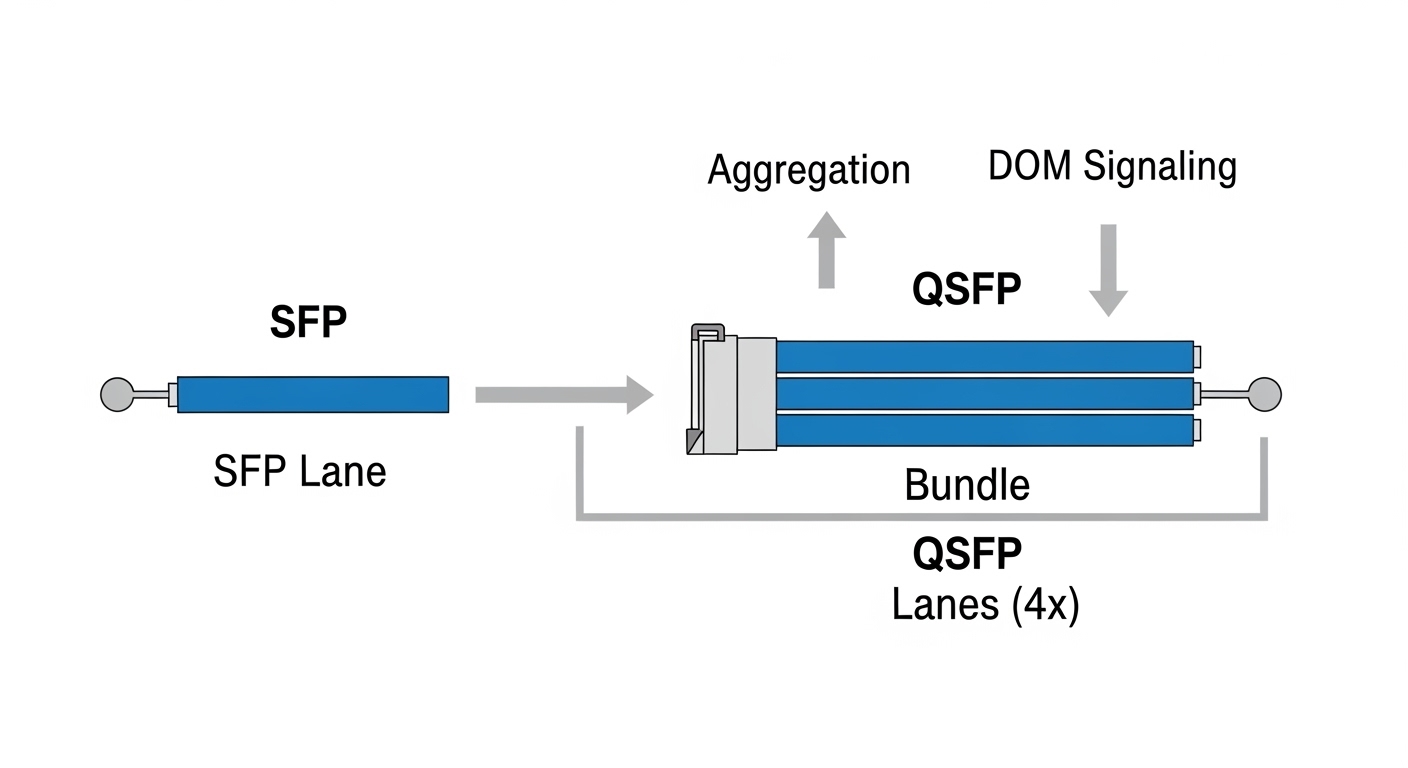

When you move workloads between on-prem and public cloud, your “north-south” paths become more dynamic, but your “east-west” and aggregation links still rely on the same optical budget math. In practice, hybrid cloud connectivity changes three things for transceivers: required reach (campus to data center vs data center to cloud interconnect), available power budgets (especially for 25G/50G optics), and operational visibility (DOM support for telemetry and alarms). If you are carrying traffic over DWDM or metro rings, dispersion and connector cleanliness also become first-order concerns rather than edge cases.

For most enterprise hybrid cloud designs, you will see Ethernet optics in three families: short-reach pluggables (SR-class modules in SFP+, SFP28, or QSFP28 form factors), longer-reach pluggables (LR and ER classes), and coherent optics when you need to cover metro distances efficiently over DWDM. The “cost-effective” path is rarely the same across these families: short-reach optics are cheap per port but can force more fiber runs; longer-reach optics reduce fiber and splicing work but cost more per transceiver and may require stricter optics matching.

Quick reference: specs that decide reach and compatibility

Engineers typically validate reach using vendor datasheets and optical link budgets, then cross-check the module's electrical and management interface against the switch or router vendor. IEEE 802.3 defines the optical interface itself (10GBASE-SR, for example), while the form factor, pinout, and diagnostics interface come from multi-source agreements (MSAs) such as SFF-8472 for SFP-class modules. Within those specifications, vendor implementations still vary in DOM format, timing, and alarm thresholds. Always confirm the module part number is supported by your exact chassis model and software release.

Cost and performance head-to-head: SR, LR, and coherent optics for cloud connectivity

This comparison focuses on what teams usually face in hybrid cloud connectivity projects: choosing between short-reach (SR) optics for inside the facility, longer-reach (LR/ER) for metro or campus extension, and coherent DWDM for higher capacity when fiber is scarce. The table below summarizes typical transceiver classes and the tradeoffs you should expect in operations.

| Option | Typical data rate | Wavelength | Connector | Typical reach (single span) | Optical power class | DOM / telemetry | Operating temperature |
|---|---|---|---|---|---|---|---|
| 10GBASE-SR (SFP+) | 10G | 850 nm (MMF) | LC duplex | Up to 300 m (OM3) / 400 m (OM4) | Low to moderate | Commonly supported | 0 to 70 °C (typical) |
| 25G/100G LR (SFP28/QSFP28) | 25G or 100G | 1310 nm band (SMF) | LC duplex | Up to ~10 km (varies by module) | Moderate | Usually supported | -5 to 70 °C (typical) |
| 40G/100G ER4 (extended reach) | 40G or 100G | 1310 nm band, four WDM lanes (SMF) | LC duplex | Up to ~40 km (varies by module) | Higher | Usually supported | -5 to 70 °C (typical) |
| Coherent DWDM (transponder/optics) | 100G to 400G+ per wavelength | 1550 nm band | Varies by platform | Metro spans with DWDM design | System-level budget | Broad telemetry | Varies by vendor |

In field deployments, SR optics are often the cheapest “per port” choice for server aggregation and ToR-to-leaf links, but they can force additional splicing and higher MMF cabling density. LR/ER optics reduce fiber complexity for campus-to-site hybrid cloud connectivity but may introduce tighter tolerances on dispersion and patch-panel loss. Coherent DWDM is the least pluggable-friendly option, but it can be the most cost-effective when you must scale bandwidth across a limited fiber count.

Representative module examples you may encounter in procurement and lab validation include Cisco SFP-10G-SR, Finisar FTLX8571D3BCL (10GBASE-SR class), and FS.com SFP-10GSR-85 (10GBASE-SR class). For LR and ER, part numbers vary widely by vendor and wavelength plan, so treat “reach class” as a starting point and validate exact optics parameters against your line budget.

Pro Tip: In hybrid cloud connectivity upgrades, the lowest-cost transceiver is often the one that matches your switch vendor’s optics support matrix, not the one with the lowest unit price. A compatible transceiver can avoid intermittent link flaps caused by marginal vendor-specific tolerance settings, saving days of on-site troubleshooting and change-control delays.

Selection criteria for hybrid cloud transceivers: a decision checklist

Engineers rarely choose optics by “reach alone.” For cloud connectivity in hybrid cloud strategies, you should score each option against operational constraints that show up during commissioning and later maintenance windows. Below is an ordered checklist I use during field validation.

- Distance and fiber type: confirm SMF vs MMF, the MMF grade (OM3/OM4), and the planned patch-cord lengths at both ends.

- Optical budget: include connector loss, splice loss, patch-panel insertion loss, and safety margin for aging. Use vendor link budget calculators when available.

- Switch compatibility: verify the exact transceiver part number is supported on your switch/router model and software version.

- Data rate and lane mapping: ensure the transceiver matches the port speed mode (for example, 25G vs 100G breakout profiles) and that both ends of the link use the same encoding and FEC mode.

- DOM support and telemetry: confirm DOM type (I2C registers, alarm thresholds) and whether your NMS reads it reliably. Mismatch can hide early warnings.

- Operating temperature and airflow: test in the actual rack airflow profile; some “industrial” optics still fail in overheated cages.

- Vendor lock-in risk: OEM optics may be priced higher, but third-party can be cheaper while still supported if you pick the right validated SKUs.

- Change-control and spares strategy: plan which optics you will stock per site and ensure consistent part numbers for faster MTTR.
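The optical budget item in the checklist above can be sketched as a small calculation. This is a minimal illustration, not a vendor tool: the class name `LinkPlan`, its fields, and every numeric value in the usage example are assumptions chosen for readability, so substitute worst-case figures from your module datasheet and measured losses from your fiber plant.

```python
from dataclasses import dataclass


@dataclass
class LinkPlan:
    """Hypothetical container for one link's budget inputs (all values are placeholders)."""
    tx_power_min_dbm: float      # worst-case transmit power from the datasheet
    rx_sensitivity_dbm: float    # worst-case receive sensitivity from the datasheet
    fiber_km: float              # span length including patch cords
    fiber_loss_db_per_km: float  # attenuation at the operating wavelength
    connectors: int              # mated connector pairs in the path
    connector_loss_db: float     # loss per mated pair
    splices: int
    splice_loss_db: float        # loss per splice
    safety_margin_db: float      # reserve for aging and future patching


def power_budget_db(plan: LinkPlan) -> float:
    """Loss the optics can tolerate: transmit power minus receive sensitivity."""
    return plan.tx_power_min_dbm - plan.rx_sensitivity_dbm


def path_loss_db(plan: LinkPlan) -> float:
    """Sum of fiber attenuation, connector loss, and splice loss."""
    return (
        plan.fiber_km * plan.fiber_loss_db_per_km
        + plan.connectors * plan.connector_loss_db
        + plan.splices * plan.splice_loss_db
    )


def remaining_margin_db(plan: LinkPlan) -> float:
    """Margin left after path loss and the safety reserve; negative means the link is at risk."""
    return power_budget_db(plan) - path_loss_db(plan) - plan.safety_margin_db


if __name__ == "__main__":
    # Illustrative numbers only, not taken from any datasheet.
    plan = LinkPlan(
        tx_power_min_dbm=-7.0, rx_sensitivity_dbm=-13.0,
        fiber_km=6.5, fiber_loss_db_per_km=0.4,
        connectors=4, connector_loss_db=0.5,
        splices=1, splice_loss_db=0.1,
        safety_margin_db=1.0,
    )
    print(f"budget={power_budget_db(plan):.1f} dB, "
          f"loss={path_loss_db(plan):.1f} dB, "
          f"margin={remaining_margin_db(plan):.1f} dB")
```

Keeping the safety reserve as an explicit input makes it harder to quietly spend that margin on an extra patch panel later.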

How to translate this into procurement requirements

In procurement, convert the checklist into acceptance criteria: required reach class, connector type (LC vs MPO), DOM presence, temperature range, and explicit compatibility with the chassis model. Ask for datasheets that include transmit power, receive sensitivity, and compliance statements. If you are using third-party optics, request a written statement of compatibility testing with your switch model and software release.
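One way to make these acceptance criteria checkable is to encode them as data and compare each quoted module against them. The sketch below is only an illustration: `Requirement`, `Offer`, `acceptance_failures`, the part numbers, and the chassis name are all hypothetical, and the fields mirror the criteria listed above rather than any vendor's datasheet schema.

```python
from dataclasses import dataclass


@dataclass
class Requirement:
    """Hypothetical acceptance criteria for one link class."""
    min_reach_km: float
    connector: str
    dom_required: bool
    temp_min_c: float
    temp_max_c: float
    chassis_model: str


@dataclass
class Offer:
    """Hypothetical summary of a quoted module, filled in from its datasheet."""
    part_number: str
    reach_km: float
    connector: str
    has_dom: bool
    temp_min_c: float
    temp_max_c: float
    validated_chassis: tuple  # chassis models the supplier states in writing


def acceptance_failures(req: Requirement, offer: Offer) -> list:
    """Return the names of the criteria the offer fails; an empty list means it passes."""
    failures = []
    if offer.reach_km < req.min_reach_km:
        failures.append("reach")
    if offer.connector.lower() != req.connector.lower():
        failures.append("connector")
    if req.dom_required and not offer.has_dom:
        failures.append("dom")
    # The module's rated range must fully cover the required range.
    if offer.temp_min_c > req.temp_min_c or offer.temp_max_c < req.temp_max_c:
        failures.append("temperature")
    if req.chassis_model not in offer.validated_chassis:
        failures.append("compatibility")
    return failures


if __name__ == "__main__":
    req = Requirement(10.0, "LC", True, 0.0, 70.0, "EXAMPLE-CHASSIS-1")
    offer = Offer("EXAMPLE-LR-1", 10.0, "LC", True, -5.0, 70.0, ("EXAMPLE-CHASSIS-1",))
    print(acceptance_failures(req, offer))
```

Returning the list of failed criteria, rather than a single pass/fail flag, gives the supplier a concrete list of what to fix.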

Real-world deployment scenario: campus to cloud connectivity with mixed optics

In one deployment I supported, a regional enterprise ran hybrid cloud connectivity with an on-prem data center and a metro edge router. The leaf-spine core used 25G SR for server aggregation inside the data hall, with each ToR-to-spine path capped at 80 m over OM4 to keep the link margin healthy after patch-panel changes. For campus-to-metro extension, we used 25G LR optics over SMF with a measured span of 6.5 km including patch cords and one mid-span splice enclosure.

We also had a bandwidth constraint on the metro fiber count, so the interconnect to the cloud provider used a DWDM solution with coherent transponders sized for the required 100G wavelengths. During commissioning, the team initially used lower-cost LR modules, but we saw intermittent CRC spikes that correlated with a specific patch-panel batch. After cleaning and replacing the affected patch cords, the links stabilized; however, the episode highlighted that “cost-effective” modules still need operational discipline: connector inspection, consistent patch cord loss, and correct DOM alarm thresholds.

Common mistakes and troubleshooting tips for cloud connectivity optics

Field issues are usually not mysterious; they are predictable interactions between optics parameters, fiber plant quality, and switch behavior. Below are common pitfalls I have seen during hybrid cloud connectivity cutovers, with root causes and fixes.

Link comes up then flaps under load

Root cause: marginal optical budget due to higher-than-expected patch-panel loss, dirty connectors, or underestimated splice loss. Third-party optics can also have slightly different power levels than the OEM baseline.

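Solution: measure end-to-end insertion loss with a light source and power meter (or another approved test method), use an OTDR to locate the lossy connector or splice, clean and inspect connectors, reseat transceivers, and validate the link with BER or CRC/FEC error counters. Replace the worst-performing patch cords and restore margin before declaring the optics incompatible.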

“Unsupported transceiver” or module not recognized

Root cause: switch firmware or hardware revision enforces an optics support matrix, and the transceiver does not match expected electrical characteristics or DOM behavior.

Solution: check the switch release notes and the vendor compatibility guide for the exact SKU, not just the optics class. If you must use third-party, select modules explicitly listed for your chassis model and port type.

DOM telemetry works but alarms are misleading

Root cause: DOM register mapping differences and threshold defaults that do not align with the switch or NMS expectations. This can hide early laser bias drift or temperature excursions.

Solution: confirm DOM type and test alarm thresholds in a controlled environment. Use the switch CLI to read raw optical diagnostics and compare against vendor recommended limits; then tune monitoring thresholds accordingly.
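As a rough sketch of that comparison, the snippet below converts a raw receive-power reading to dBm and classifies it against warning and alarm limits. It assumes the raw value is an unsigned integer in 0.1 µW steps, as in SFF-8472-style diagnostics; confirm the unit for your module type. The function names and the threshold values in the example are hypothetical, so take real limits from the module datasheet or the vendor's recommendations.

```python
import math


def raw_to_dbm(raw_tenth_microwatt: int) -> float:
    """Convert a power reading in 0.1 uW units to dBm (assumed unit; verify per module)."""
    if raw_tenth_microwatt <= 0:
        return float("-inf")  # no measurable light
    milliwatts = raw_tenth_microwatt / 10000.0  # 10000 x 0.1 uW = 1 mW
    return 10.0 * math.log10(milliwatts)


def classify(value_dbm: float, low_alarm: float, low_warn: float,
             high_warn: float, high_alarm: float) -> str:
    """Map a dBm reading onto alarm/warning bands; thresholds are caller-supplied."""
    if value_dbm <= low_alarm:
        return "low-alarm"
    if value_dbm <= low_warn:
        return "low-warning"
    if value_dbm >= high_alarm:
        return "high-alarm"
    if value_dbm >= high_warn:
        return "high-warning"
    return "ok"


if __name__ == "__main__":
    # Placeholder thresholds in dBm, for illustration only.
    limits = dict(low_alarm=-14.0, low_warn=-12.0, high_warn=1.0, high_alarm=2.0)
    for raw in (0, 500, 1000, 10000):
        dbm = raw_to_dbm(raw)
        print(raw, f"{dbm:.2f} dBm", classify(dbm, **limits))
```

Running the same classification on the values your NMS reports and on the values read directly from the switch CLI is a quick way to spot a register-mapping or unit mismatch.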

Wrong fiber type or connector ecosystem mismatch

Root cause: deploying SR optics on MMF of a lower grade than the reach plan assumed (for example, OM1 or OM2 where OM3 or OM4 was expected), or using the wrong connector polarity/fiber mapping in duplex links. In MPO-based designs, a polarity error can prevent link establishment.

Solution: verify fiber grade at the patch point, confirm polarity/cassette mapping, and label fiber ends during maintenance. Use a continuity test plan before inserting optics.

Cost and ROI note: OEM vs third-party transceivers for hybrid cloud strategies

In many procurement cycles, transceiver optics are a small line item compared to switching hardware, but they dominate operational risk because failures are disruptive. OEM optics often cost more per unit, yet they can reduce compatibility surprises and shorten MTTR due to better documentation and tighter acceptance testing. Third-party optics can be cost-effective for cloud connectivity, especially in bulk deployments, but the ROI depends on how well your switch vendor validates those specific SKUs.

Typical pricing ranges vary by speed and reach and fluctuate with market supply, but in practice you may see SR optics priced low enough to encourage higher spares stocking at the edge, while LR/ER optics often raise the cost of spares and demand stricter acceptance testing. Total cost of ownership includes cleaning supplies, spares handling, field labor, and the change-control overhead of failed validation. If lower-priced optics cause more link failures and truck rolls, the “cheaper” optics can become the more expensive option in TCO.
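The TCO argument can be made concrete with a deliberately simple model: purchase cost plus the expected cost of link incidents over the planning period. Everything here is an assumption for illustration, including the function name `total_cost`, the prices, the incident rates, and the cost per incident; replace them with figures from your own procurement and incident records.

```python
def total_cost(unit_price: float, quantity: int, spares: int,
               annual_incident_rate: float, cost_per_incident: float,
               years: float) -> float:
    """Purchase cost plus expected incident cost over the planning period.

    annual_incident_rate is the expected number of disruptive incidents
    per installed module per year (a hypothetical planning input).
    """
    purchase = unit_price * (quantity + spares)
    expected_incidents = quantity * annual_incident_rate * years
    return purchase + expected_incidents * cost_per_incident


if __name__ == "__main__":
    # Placeholder inputs: 100 installed modules, 10 spares, 3-year horizon.
    validated = total_cost(300.0, 100, 10, 0.01, 1500.0, 3)
    unvalidated = total_cost(100.0, 100, 10, 0.06, 1500.0, 3)
    print(f"validated SKU:   {validated:.0f}")
    print(f"unvalidated SKU: {unvalidated:.0f}")
```

With these placeholder inputs the lower-priced module ends up slightly more expensive overall, which is the crossover the paragraph above describes; with a lower incident rate the result flips, so the incident rate is the input worth measuring in a pilot.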

Decision matrix: which option fits your hybrid cloud connectivity goals?

Use this matrix to align the optics choice with your constraints. Score each option against your environment and budget reality.

| Requirement | SR pluggables | LR/ER pluggables | Coherent DWDM |
|---|---|---|---|
| Lowest unit cost per port | High fit | Medium fit | Low fit |
| Longer reach without extra fiber runs | Low fit | High fit | High fit |
| Compatibility simplicity with Ethernet switches | High fit | Medium fit | Low fit (system integration) |
| Telemetry and operational visibility | Medium fit | Medium fit | High fit (system-level) |
| Best for limited fiber count scaling | Low fit | Medium fit | High fit |
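To score the matrix rather than read it by eye, one option is to map the fit levels to numbers and apply weights that reflect your priorities. The sketch below copies the fit levels from the matrix above; the numeric mapping (High=3, Medium=2, Low=1), the short requirement keys, and the example weights are assumptions, not part of any standard method.

```python
FIT = {"High": 3, "Medium": 2, "Low": 1}  # assumed numeric mapping

# Fit levels from the decision matrix, in the order: SR, LR/ER, Coherent DWDM.
OPTIONS = ("SR pluggables", "LR/ER pluggables", "Coherent DWDM")
MATRIX = {
    "unit_cost":     ("High", "Medium", "Low"),
    "reach":         ("Low", "High", "High"),
    "compatibility": ("High", "Medium", "Low"),
    "telemetry":     ("Medium", "Medium", "High"),
    "fiber_scaling": ("Low", "Medium", "High"),
}


def score_options(weights: dict) -> dict:
    """Return the weighted score per option; requirements without a weight count as 0."""
    scores = {name: 0 for name in OPTIONS}
    for requirement, fits in MATRIX.items():
        weight = weights.get(requirement, 0)
        for name, fit in zip(OPTIONS, fits):
            scores[name] += weight * FIT[fit]
    return scores


if __name__ == "__main__":
    # Hypothetical weights for a fiber-constrained metro interconnect.
    weights = {"unit_cost": 1, "reach": 3, "compatibility": 1,
               "telemetry": 1, "fiber_scaling": 3}
    scores = score_options(weights)
    for name, value in sorted(scores.items(), key=lambda kv: -kv[1]):
        print(f"{name}: {value}")
```

The weights carry the real decision, so it is worth agreeing on them with the application and operations teams before looking at the scores.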

Which option should you choose?

If your hybrid cloud connectivity is mostly inside a campus or data hall with short distances, start with SR optics for simplicity and cost control, then standardize patch-panel loss and cleaning practices. If you need to extend connectivity across SMF spans with fewer fiber changes, choose LR/ER pluggables and validate exact part numbers against your switch compatibility matrix. If fiber count is the limiting factor and you need high capacity scaling over metro distances, coherent DWDM is often the most cost-effective at the system level, even though it is not the cheapest per transceiver.

Next step: confirm your link budgets and switch support matrix so you can lock the optics bill of materials before the first site cutover.

FAQ

Q: What is the most cost-effective transceiver for cloud connectivity between buildings?

A: In most cases, LR pluggables over SMF are cost-effective because they reduce the need for additional fiber runs. The best choice depends on your measured span loss and connector/splice quality, so validate with an optical budget and your switch compatibility list.

Q: Can I use third-party optics with OEM switches for hybrid cloud strategies?

A: Often yes, but only if the exact module SKU is supported by your switch model and software release. If support is unclear, plan a pilot with BER and DOM checks before scaling to production.

Q: Do I really need DOM support for cloud connectivity troubleshooting?

A: DOM helps you catch early laser bias, temperature drift, and receive power degradation, which is valuable during hybrid cloud traffic growth. However, you must ensure your NMS reads the DOM correctly; otherwise, alarms may be misleading.

Q: How do I estimate optical budget without guessing?

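A: Measure end-to-end loss with a light source and power meter or another approved link test procedure; an OTDR helps attribute that loss to individual connectors and splices. Where the plant cannot be measured yet, estimate it by summing fiber attenuation and the expected insertion losses of connectors, patch cords, and splices. Compare the result against the minimum transmit power and receive sensitivity from the module datasheet, and keep a safety margin for future patching.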

Q: What causes CRC errors that disappear after reseating a transceiver?

A: The most common causes are dirty connectors, marginal fiber seating, or patch-panel strain that changes the connection geometry. Clean and test the link, then check fiber routing and labeling to prevent repeat failures.

Q: When should we consider coherent DWDM instead of pluggable optics?

A: Consider coherent DWDM when fiber count is scarce and you need to scale capacity over metro distances efficiently. It is more complex to integrate, so run a capacity-and-cost model that includes system components and operational tooling.

Author bio: I am a telecom engineer who has deployed and troubleshot 5G fronthaul/backhaul and enterprise Ethernet optics in hybrid cloud connectivity projects, from commissioning to acceptance testing. My focus is on practical optical budgets, DWDM/SDH integration considerations, and reducing MTTR through disciplined validation.