A three-tier data center rollout can fail in subtle ways: links come up, traffic flows for days, then CRC errors and retransmits spike. This article walks through a real deployment where the team used SNR optical transceiver selection and validation to reduce optical noise sensitivity in 10G fiber links. It helps network engineers, field technicians, and procurement teams estimate market fit, operational risk, and ROI.

Problem and challenge: when “it links” still means “it hurts”

In our case study, the challenge appeared after a leaf-spine refresh. The new ToR switches used 10G SFP+ ports to connect to aggregation over OM3 and OS2 runs of 300 to 400 meters. Any OM3 run at the upper end of that range sits at or beyond the nominal 300 m reach of 10GBASE-SR, which leaves little headroom. After install, most links passed initial diagnostics, but after roughly 72 to 120 hours certain circuits showed rising interface errors (CRC/FCS counters), plus elevated retransmits at the application layer.

The team’s first instinct was to swap optics and patch cords. However, the pattern correlated with transceiver batch and specific fiber spans, not just transceiver type. Because optical receiver sensitivity margin is fundamentally tied to signal quality and noise, the team focused on SNR optical transceiver characteristics rather than only reach class. Vendor datasheets list nominal sensitivity, but field reality depends on launch power, receiver limiting behavior, and how noise interacts with the receiver’s front-end.

To keep the work grounded, they aligned the evaluation with IEEE Ethernet PHY concepts (link integrity, BER expectations) and practical optical measurement workflows described in vendor optical test guidance. For standards context, see IEEE 802.3 for the 10GBASE-LR/SR requirements and operating assumptions.

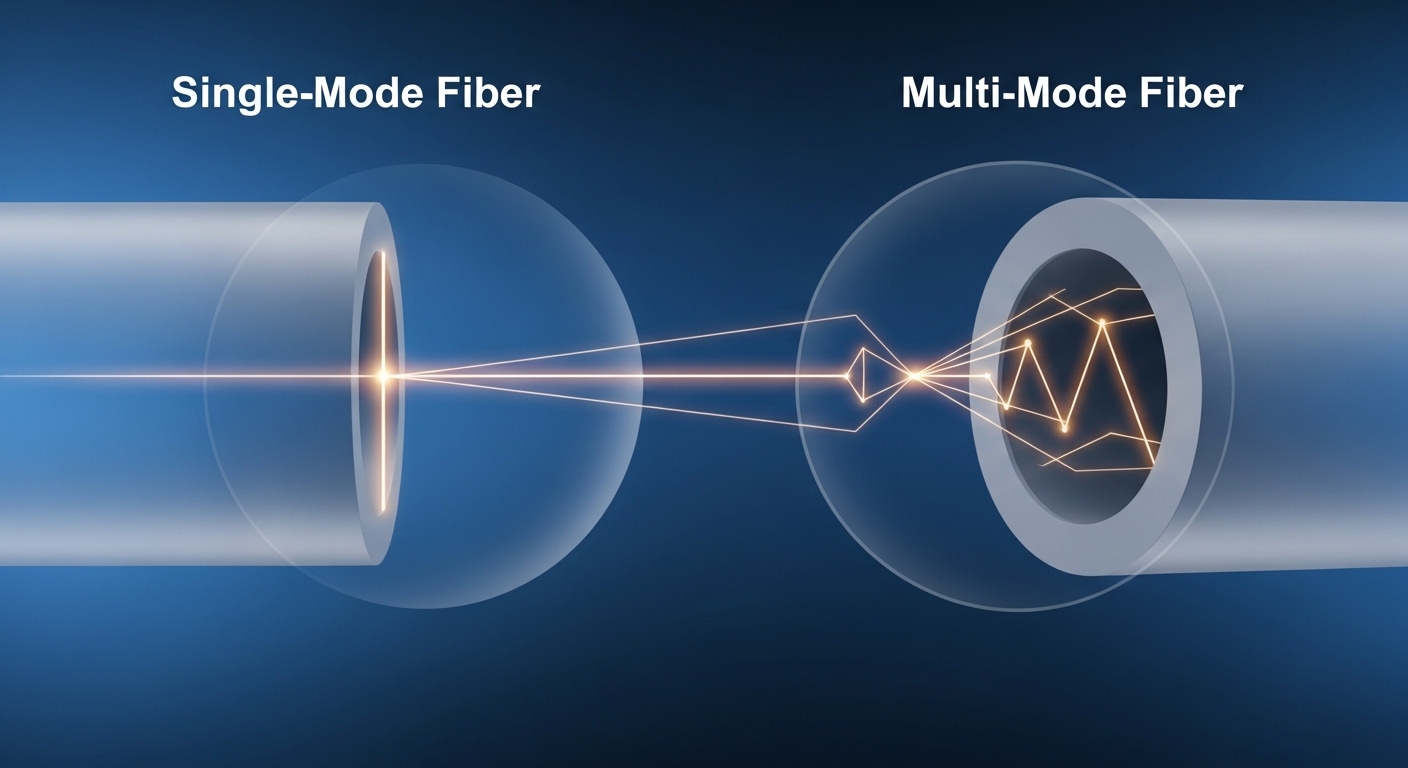

Environment specs: fiber plant, optics classes, and what “noise” meant

The environment combined short-reach multimode and longer-reach single-mode. We documented fiber type, link length, connector cleanliness, and transceiver spec class. The key was translating “SNR” into operational variables we could measure: how much optical power arrived at the receiver, how the receiver responded near sensitivity, and how much noise margin remained under real conditions.

We used two transceiver families during the remediation: 10G SR optics for OM3 and 10G LR optics for OS2. Examples of commonly deployed modules include Cisco SFP-10G-SR and Finisar FTLX8571D3BCL-class 10G SR, plus 10G LR modules such as the Finisar FTLX1471D3BCL and third-party equivalents (for example from FS.com). Actual model numbers vary by OEM, but the evaluation method stayed consistent.

| Parameter | 10G SR (OM3) | 10G LR (OS2) |
|---|---|---|
| Data rate | 10.3125 Gb/s (10GBASE-SR) | 10.3125 Gb/s (10GBASE-LR) |
| Wavelength | 850 nm VCSEL | 1310 nm DFB laser |
| Nominal reach | 300 m over OM3 (typical) | 10 km over OS2 (typical) |
| Connector | LC duplex | LC duplex |
| Typical Tx power class | Low-to-medium launch power (datasheet dependent) | Low-to-medium launch power (datasheet dependent) |
| Receiver sensitivity class | Specified in datasheet (compare across vendors) | Specified in datasheet (compare across vendors) |
| DOM support | Usually digital optical monitoring (I2C) | Usually digital optical monitoring (I2C) |
| Operating temperature | Commercial: often 0 to 70 °C | Commercial/industrial variants exist |

We also tracked DOM telemetry. For each link, engineers logged Tx bias current, Tx power, Rx power, and temperature. While DOM does not directly report SNR, it provides the inputs needed to infer whether the receiver is operating with adequate margin. In practice, the “SNR optical transceiver” decision becomes an exercise in ensuring the system stays away from the receiver’s noisy cliff under temperature drift and connector aging.
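As a minimal sketch of how logged DOM values can be turned into a margin estimate, the snippet below converts Rx power from milliwatts to dBm and subtracts a receiver sensitivity figure. The `DomSample` fields, the link ID, and the -11.1 dBm sensitivity are illustrative assumptions, not values from this deployment; take the real sensitivity from the module datasheet.

```python
import math
from dataclasses import dataclass


@dataclass
class DomSample:
    """One DOM reading for a link (field names are illustrative)."""
    link_id: str
    temperature_c: float
    tx_bias_ma: float
    tx_power_mw: float
    rx_power_mw: float


def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power from milliwatts to dBm."""
    if power_mw <= 0:
        return float("-inf")  # no light detected
    return 10.0 * math.log10(power_mw)


def rx_margin_db(sample: DomSample, sensitivity_dbm: float) -> float:
    """Margin between measured Rx power and the assumed receiver sensitivity."""
    return mw_to_dbm(sample.rx_power_mw) - sensitivity_dbm


# Hypothetical reading; -11.1 dBm is a placeholder sensitivity.
sample = DomSample("leaf12-p7", 41.5, 6.2, 0.55, 0.20)
print(round(mw_to_dbm(sample.rx_power_mw), 2))
print(round(rx_margin_db(sample, -11.1), 2))
```

A margin of only a decibel or two is the kind of reading that suggests a link is close to the cliff described above, although the acceptable threshold is a site-specific decision.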

Chosen solution and why: selecting optics with SNR margin in mind

The chosen approach was not “buy the most expensive transceiver,” but rather “use consistent optics behavior with verified receiver margin.” The team implemented a two-stage decision: first, narrow candidates by compatibility and standard class; second, validate noise sensitivity indirectly by measuring received power distribution, DOM stability over time, and error-free performance under controlled stress.

Compatibility and electrical interface checks

They confirmed that the switch vendor’s transceiver compatibility list included the target form factor and that the optics met the expected electrical interface behavior for 10GBASE-SR or LR. Most SFP+ modules support the same general baseline, but there are caveats: some switches enforce stricter thresholds on DOM reporting, and some third-party optics can misreport or omit calibration fields. Those mismatches can cause the switch to treat the link as marginal or to apply conservative thresholds.

They prioritized modules that advertise compliant I2C registers and stable DOM reads. For reference on optical monitoring behavior and SFP/SFP+ module interfaces, consult the SFF-8472 diagnostic monitoring specification (published through SNIA) along with vendor interface docs.
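To make "stable DOM reads" concrete, here is a hedged sketch that decodes the real-time diagnostic fields from a raw dump of the module's A2h page, using the byte offsets and scaling defined in SFF-8472 for internally calibrated modules. The function name `decode_dom` and the synthetic page are assumptions for illustration; how the bytes are read from the module depends on your platform, and externally calibrated modules need additional correction constants that this sketch ignores.

```python
import struct


def decode_dom(a2_page: bytes) -> dict:
    """Decode SFF-8472 real-time diagnostics (A2h bytes 96-105).

    Assumes an internally calibrated module.
    """
    if len(a2_page) < 106:
        raise ValueError("need at least 106 bytes of the A2h page")
    # Big-endian: signed temperature, then four unsigned 16-bit fields.
    temp_raw, vcc_raw, bias_raw, tx_raw, rx_raw = struct.unpack(
        ">hHHHH", a2_page[96:106]
    )
    return {
        "temperature_c": temp_raw / 256.0,  # LSB = 1/256 degree C
        "vcc_v": vcc_raw * 100e-6,          # LSB = 100 microvolts
        "tx_bias_ma": bias_raw * 2e-3,      # LSB = 2 microamps
        "tx_power_mw": tx_raw * 1e-4,       # LSB = 0.1 microwatt
        "rx_power_mw": rx_raw * 1e-4,       # LSB = 0.1 microwatt
    }


# Synthetic page for illustration only (not captured from a real module).
page = bytearray(256)
page[96:106] = struct.pack(">hHHHH", 0x2A80, 33000, 3100, 5500, 2000)
print(decode_dom(bytes(page)))
```

Decoding the raw fields yourself is one way to cross-check what the switch CLI reports when you suspect a module of misreporting calibration data.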

“SNR optical transceiver” by margin, not marketing

In the field, the team treated SNR as the effective remaining margin after accounting for: fiber attenuation, connector loss, splice loss, and receiver sensitivity. They targeted a received power window that stayed comfortably above sensitivity across temperature swings. Practically, that meant selecting optics where the measured Rx power stayed within a stable band for at least 7 days under normal rack thermal conditions.
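The margin accounting described above can be sketched as a simple loss budget. All numeric inputs below (launch power, attenuation per kilometer, per-connector loss, sensitivity) are hypothetical placeholders rather than measurements from this deployment, and the model deliberately ignores dispersion and modal penalties that also matter on long multimode runs.

```python
def expected_rx_dbm(
    tx_dbm: float,
    length_km: float,
    fiber_db_per_km: float,
    n_connectors: int,
    connector_loss_db: float,
    n_splices: int = 0,
    splice_loss_db: float = 0.1,
) -> float:
    """Estimate received power from launch power minus passive losses."""
    total_loss_db = (
        length_km * fiber_db_per_km
        + n_connectors * connector_loss_db
        + n_splices * splice_loss_db
    )
    return tx_dbm - total_loss_db


def margin_db(rx_dbm: float, sensitivity_dbm: float) -> float:
    """Remaining margin above the assumed receiver sensitivity."""
    return rx_dbm - sensitivity_dbm


# Hypothetical 300 m multimode run with four mated connectors.
rx = expected_rx_dbm(
    tx_dbm=-2.5, length_km=0.3, fiber_db_per_km=3.0,
    n_connectors=4, connector_loss_db=0.5,
)
print(round(rx, 2), round(margin_db(rx, -11.1), 2))
```

Comparing this estimate with the Rx power actually reported by DOM is a quick way to spot spans whose real loss is higher than the design assumed.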

When they compared optics batches, they observed that modules with similar nominal reach behaved differently in DOM stability. The “better SNR optical transceiver” candidates showed less Rx power volatility and smaller temperature-to-power coupling. That reduced the probability of occasional receiver overload or noisy operation near sensitivity.
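One way to quantify "Rx power volatility" and "temperature-to-power coupling" is sketched below, assuming paired DOM samples of module temperature and Rx power in dBm. The standard deviation and least-squares slope are our choice of metrics for illustration; the team's exact statistics are not documented here.

```python
from statistics import mean, pstdev


def rx_volatility_db(rx_dbm: list[float]) -> float:
    """Population standard deviation of Rx power samples, in dB."""
    return pstdev(rx_dbm)


def temp_coupling_db_per_c(temps_c: list[float], rx_dbm: list[float]) -> float:
    """Least-squares slope of Rx power (dBm) against module temperature."""
    if len(temps_c) != len(rx_dbm) or len(temps_c) < 2:
        raise ValueError("need two or more paired samples")
    t_bar, r_bar = mean(temps_c), mean(rx_dbm)
    sxx = sum((t - t_bar) ** 2 for t in temps_c)
    if sxx == 0:
        return 0.0  # temperature never changed, so no slope to estimate
    sxy = sum((t - t_bar) * (r - r_bar) for t, r in zip(temps_c, rx_dbm))
    return sxy / sxx


# Hypothetical samples: Rx power sags slightly as the module warms.
temps = [40.0, 42.0, 44.0, 46.0]
rx = [-6.0, -6.1, -6.2, -6.3]
print(round(rx_volatility_db(rx), 3), round(temp_coupling_db_per_c(temps, rx), 3))
```

Ranking candidate batches by these two numbers over the same observation window gives a like-for-like comparison that nominal reach class does not.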

Pro Tip: In troubleshooting, read Rx power, Tx bias current, and DOM temperature trends together. A rising Tx bias current with flat Tx power usually means the module is driving its laser harder to hold output, which points to laser aging or heat at the transmitting end; a falling Rx power with stable temperature points more toward fiber loss changes, connector contamination, or patch cord wear.

Implementation steps: from lab checks to production cutover

The remediation plan ran in phases to limit downtime and isolate variables. The team documented each link with a unique ID, then captured baseline telemetry before swap. They also scheduled work during a maintenance window that allowed them to observe post-change behavior for at least 48 hours before declaring victory.

Phase A: baseline measurement and fiber hygiene

Before touching optics, they cleaned LC connectors using a validated process and inspected with a fiber microscope. Connector contamination is a common root cause of noise-like symptoms: it increases back-reflection and insertion loss and changes effective coupling, which can degrade the receiver margin in ways that look like random errors. They replaced suspect patch cords where inspection showed scratches or residue.

Field measurement included optical power checks at the patch panel and end-to-end if tools allowed. They also verified that the expected fiber type matched the link class (OM3 for SR, OS2 for LR). Mixed fiber type can still “work” at first, but it often fails under temperature or traffic patterns.

Phase B: optics swap with controlled sets

They swapped in optics in batches, changing only the optics while keeping everything else fixed: same switch port configuration, same fiber span, and same patch cord set. They compared groups of candidate optics from different vendors or OEM supply chains that were known to differ in DOM stability. For SR links, they used known 850 nm SR optics such as Cisco SFP-10G-SR and FS.com-style 10G SR modules like SFP-10GSR-85 variants, depending on budget. For LR links, they used 1310 nm LR optics from the same vendor family where possible to reduce calibration drift.

During the first 24 hours after cutover, they collected interface error counters and correlated them with DOM changes. Only after error counters stabilized did they proceed to the next batch.
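The "proceed only after counters stabilize" gate can be sketched as follows, assuming CRC counters are polled at a fixed interval and stored as cumulative values. The function names and the default of six quiet intervals are hypothetical; pick an interval count that matches your polling period and risk tolerance.

```python
def counter_deltas(samples: list[int]) -> list[int]:
    """Per-interval increase of a cumulative error counter."""
    return [later - earlier for earlier, later in zip(samples, samples[1:])]


def is_stable(
    crc_samples: list[int],
    quiet_intervals: int = 6,
    max_new_errors: int = 0,
) -> bool:
    """True if each of the last `quiet_intervals` polls added few or no errors.

    A negative delta (counter reset or rollover) is treated as unknown,
    so the link is reported as not yet stable.
    """
    deltas = counter_deltas(crc_samples)
    if len(deltas) < quiet_intervals:
        return False  # not enough history to decide
    return all(0 <= d <= max_new_errors for d in deltas[-quiet_intervals:])


# Hypothetical polls: two errors right after cutover, then quiet.
print(is_stable([10, 12, 12, 12, 12, 12, 12, 12]))
```

Logging the deltas alongside DOM samples taken at the same timestamps makes it straightforward to check whether an error burst coincided with a temperature or Rx power excursion.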

Phase C: stress validation and monitoring

They applied steady traffic and monitored for BER-like symptoms using the switch’s counters. While Ethernet counters are not a direct BER meter, sustained CRC/FCS increases are strong indicators of optical margin erosion. They also monitored temperature for both the module and switch environment. In one rack, an unexpected airflow restriction created a 6 to 8 °C temperature rise at the top-of-rack ports, which directly affected DOM temperature and receiver behavior.
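As a rough illustration of why CRC counters are only a proxy, the sketch below converts a CRC error count into an approximate bit error ratio under the simplifying assumption that each errored frame contains a single bit error. That assumption makes the result a lower bound, and the frame counts and sizes are hypothetical. For context, 10 Gigabit Ethernet is specified around a BER objective of 1e-12.

```python
def approx_ber(crc_errors: int, frames_received: int, avg_frame_bytes: int) -> float:
    """Lower-bound BER estimate: one bit error assumed per errored frame.

    `frames_received` should include the errored frames.
    """
    bits = frames_received * avg_frame_bytes * 8
    if bits <= 0:
        raise ValueError("no traffic observed")
    return crc_errors / bits


TARGET_BER = 1e-12  # BER objective commonly cited for 10GBASE-R links

# Hypothetical interval: 12 CRC errors in one billion 1000-byte frames.
ber = approx_ber(12, 10**9, 1000)
print(ber, ber > TARGET_BER)
```

Because lightly loaded links carry few bits, a quiet counter on an idle port says little about margin; the estimate is only meaningful under sustained traffic like the steady load described above.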

Measured results: stability gains and operational impact

After the SNR margin-focused optics rollout, the team saw measurable improvement. For previously problematic 10G circuits, CRC counters dropped from sporadic spikes to near-zero values over a 30-day window. In the worst-affected links, the error rate decreased enough that upper-layer retransmit counts fell to baseline.

DOM telemetry also improved. The selected optics showed less Rx power drift over temperature. In practical terms, the received power stayed within a tighter band, reducing the time the receiver spent near its sensitivity boundary. That is the operational equivalent of higher “SNR optical transceiver” quality, even when the module does not expose a direct SNR metric.

From an operations perspective, the mean time to repair improved because fewer swaps were needed. Instead of random trial-and-error, the team used a consistent decision framework: compatibility first, then received power margin and DOM stability. This reduced field visits and shortened troubleshooting cycles.

Market and moat perspective: why SNR-aware selection matters commercially

From a VC and market-size viewpoint, the optics market is mature, but the differentiation increasingly lives in reliability engineering, supply chain consistency, and module-to-switch interoperability. The “moat” for better suppliers is often not the laser alone; it is yield consistency, calibration quality, DOM firmware accuracy, and thermal control. Many modules look identical externally, but their realized performance under aged connectors and temperature cycling can differ.

Monetization tends to be straightforward per-unit pricing, but long-term revenue comes from trust: enterprise buyers reorder when optics reduce outage risk. That pushes vendors to invest in stable receiver design and better test data. For buyers, the moat you can capture is process discipline: measure margin, enforce compatibility, and track DOM trends like a reliability signal.

Cost and ROI note: what you can expect to pay and where savings come from

Typical retail or distributor pricing varies by volume and OEM branding. As a practical range, 10G SR SFP+ optics often cost roughly $30 to $120 per module, while 10G LR SFP+ can range from $60 to $250 depending on vendor and DOM options. OEM-branded modules may cost more, but they reduce compatibility risk and sometimes reduce RMA rates.

TCO is dominated by failure handling: downtime costs, labor hours for swaps, and the cost of cleaning and inspection tools. In our case, reduced error-driven troubleshooting lowered technician time and avoided multiple re-cabling events. Even if higher-cost optics increased module unit price by 20 to 40 percent, the avoided field labor and fewer repeat failures often delivered ROI within 1 to 2 quarters for high-port-count deployments.
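The payback reasoning above reduces to simple arithmetic. The sketch below uses made-up inputs (400 modules, a $60 base price, a 30 percent premium, and $2,000 per month of avoided labor and rework) purely to show the calculation; substitute your own figures.

```python
import math


def payback_months(
    n_modules: int,
    base_price: float,
    premium_pct: float,
    monthly_savings: float,
) -> float:
    """Months needed for avoided costs to cover the extra spend on optics."""
    extra_spend = n_modules * base_price * premium_pct / 100.0
    if monthly_savings <= 0:
        return math.inf  # the premium never pays back
    return extra_spend / monthly_savings


# Hypothetical inputs, not figures from this deployment.
print(round(payback_months(400, 60.0, 30.0, 2000.0), 1))
```

With these placeholder numbers the premium is recovered in under four months, but the result is only as good as the estimate of monthly savings, which is the hardest input to pin down.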

Common mistakes and troubleshooting tips

Even with good optics, failures happen. Below are common pitfalls we saw during similar rollouts, with root causes and fixes.

- Mistake: Swapping optics without cleaning and inspecting connectors.

  Root cause: Contamination increases insertion loss and back-reflection, degrading receiver margin and creating intermittent CRC spikes.

  Solution: Use a fiber inspection scope on every affected LC connector; clean with validated wipes and re-inspect before concluding optics are at fault.

- Mistake: Treating reach class as the only requirement.

  Root cause: “Works on day one” can still fail when temperature drift and aging push the link toward sensitivity limits; SNR margin is the hidden variable.

  Solution: Track Rx power via DOM and require a comfortable received power window across temperature and time.

- Mistake: Mixing optics vendors across a critical path without validating DOM behavior.

  Root cause: Some modules misreport DOM calibration fields or use different thermal characteristics, confusing switch threshold logic.

  Solution: Validate in a pilot group. Confirm stable DOM reads and stable interface counters before broad deployment.

- Mistake: Ignoring airflow and temperature hotspots in the rack.

  Root cause: Module temperature affects laser bias and receiver behavior; hotspots can create periodic error bursts.

  Solution: Measure port-side temperature, fix airflow, and compare DOM temperature trend before and after remediation.

Selection criteria checklist for SNR optical transceiver purchases

Use this ordered checklist during procurement and engineering validation. It is designed for practical decision-making, not just spec-sheet comparison.

- Distance and fiber type match: SR for OM3/OM4 at 850 nm; LR for OS2 at 1310 nm. Confirm connector and patch topology.

- Budget and acceptable risk: Decide whether OEM branding is worth the premium for your outage tolerance.

- Switch compatibility: Verify the switch vendor’s supported optics list and confirm DOM register behavior.

- DOM support and monitoring plan: Ensure the module provides stable Tx power, Rx power, and temperature telemetry for trend analysis.

- Operating temperature range: Prefer modules rated for your environment; industrial variants may reduce thermal drift risk.

- Vendor lock-in risk: If you must standardize for reliability, negotiate supply continuity and RMA terms to avoid future shortages.

- Test and validation strategy: Pilot a small set, monitor error counters and DOM stability for at least several days, then scale.

FAQ

What does “SNR” mean for an optical transceiver in practice?

In many modules, SNR is not directly exposed as a numeric field. Instead, engineers infer optical quality through receiver sensitivity margin, Rx power stability, and error counters under real operating conditions. That is why an SNR optical transceiver selection should be based on measured link margin and DOM trends.

Do I need special instruments to choose a better transceiver?

You need more than just a “works/does not work” test. A basic optical power meter and a fiber inspection scope are often enough for strong pre-checks. For deeper validation, track DOM telemetry and correlate it with CRC/FCS counters over time.

Will third-party optics always be worse than OEM?

Not always. Some third-party suppliers produce modules with excellent calibration and stable DOM behavior. The risk comes from variability in yield, thermal control, and compatibility nuances with specific switch models, so you still need a pilot validation.

How do I know if noise is the real issue rather than fiber loss?

If Rx power sits comfortably above sensitivity yet errors still rise, suspect noise-like effects such as connector contamination, back-reflection, or receiver margin instability. If Rx power is low or drifting downward, fiber loss and aging are more likely. Correlate DOM Tx/Rx trends with inspection results to separate the causes.
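That triage rule can be written down as a small decision function. This is a sketch under assumed thresholds: the 3 dB minimum margin and the -0.05 dB per day drift limit are hypothetical defaults, not recommendations from a standard, and the function name is our own.

```python
def classify_link(
    margin_db: float,
    rx_trend_db_per_day: float,
    errors_rising: bool,
    min_margin_db: float = 3.0,
    drift_limit_db_per_day: float = -0.05,
) -> str:
    """Rough triage of a link from Rx margin, Rx power trend, and error trend."""
    if not errors_rising:
        return "healthy"
    if margin_db < min_margin_db or rx_trend_db_per_day <= drift_limit_db_per_day:
        return "suspect fiber loss or aging"
    return "suspect noise-like effects"


# Hypothetical link: good margin, flat Rx power, but errors climbing.
print(classify_link(margin_db=5.5, rx_trend_db_per_day=0.0, errors_rising=True))
```

The output is only a starting hypothesis; connector inspection is still needed to confirm which cause is actually present.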

What is the fastest troubleshooting path for intermittent CRC errors?

First, inspect and clean connectors on the affected circuit. Second, check DOM temperature and Rx power trend during error events. Third, swap optics with a known-good module while keeping the fiber path constant to isolate the variable.

How long should I monitor after changing transceivers?

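For reliability-focused validation, monitor at least 48 to 72 hours to capture early drift and traffic pattern effects. Keep in mind that in our case the errors only surfaced after roughly 72 to 120 hours, so a 48-hour window alone would have missed them; a full week is a safer minimum when the original fault was slow to appear. For high-risk links, extend to 2 to 4 weeks to detect slower connector or thermal aging impacts.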

In our case, treating SNR optical transceiver selection as a margin-and-stability problem reduced CRC spikes and shortened field troubleshooting cycles. Next, review optical link power budget basics to tighten your received power window and make future transceiver decisions more deterministic.

Author bio: I have deployed and operated multi-vendor 10G and 25G optical networks in production data centers, using DOM telemetry, power budgets, and fiber inspection to cut error-driven outages. I write from field measurements and procurement experience to help teams choose optics with predictable reliability.
