Technical guide for telecom optical choices under real constraints

In telecom builds, the fastest way to miss schedule is to pick optics by headline reach and ignore power budgets, vendor DOM behavior, and connector losses. This technical guide helps network engineers and field deployment teams select transceivers, wavelengths, and cabling approaches that will actually pass acceptance tests. You will get measurable selection criteria, a comparison table of common module classes, and troubleshooting patterns seen during turn-up. It also includes compatibility caveats for switch optics and optics management.

Start with the system math: wavelength, reach, and link budget

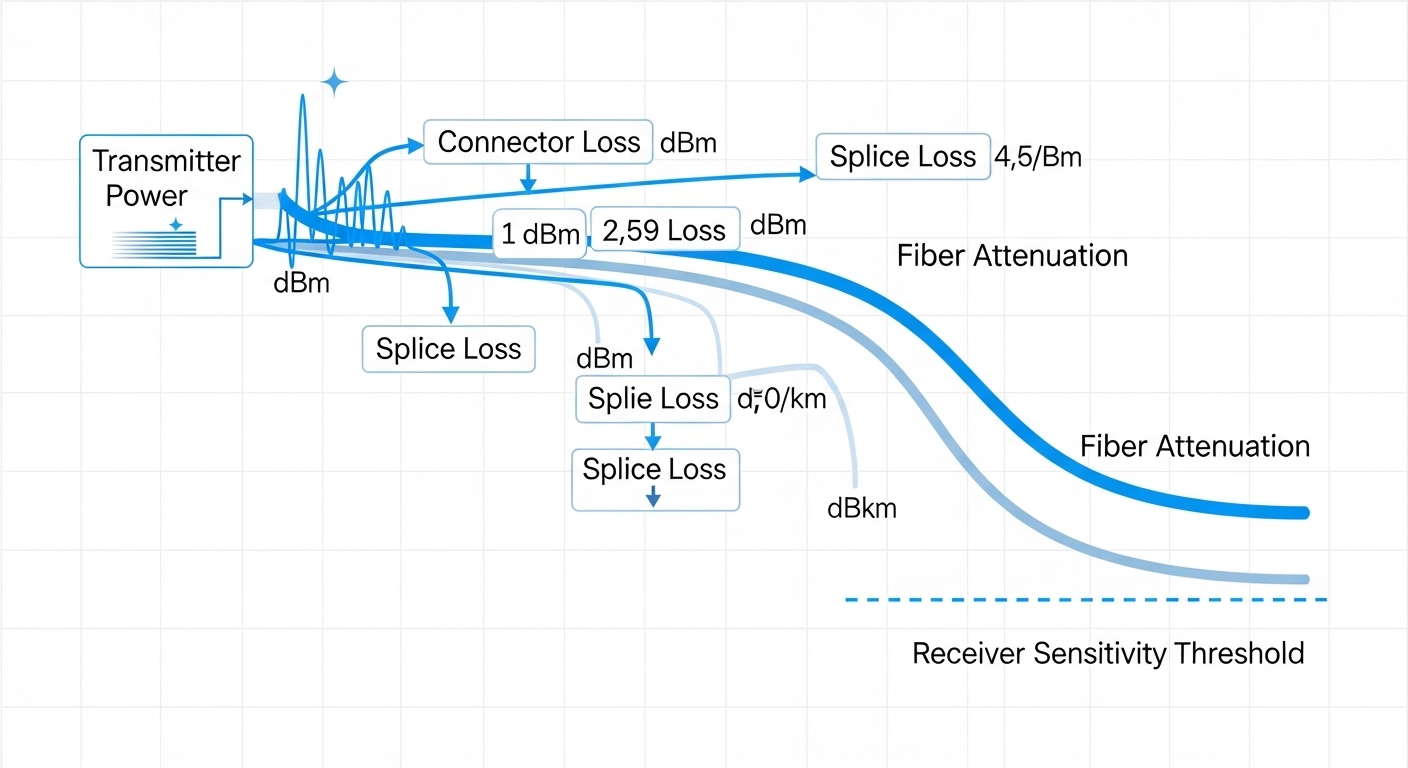

Optical selection in telecom is fundamentally a link budget problem plus mechanical compatibility. First decide the wavelength and fiber type: single-mode at 1310 nm (common for shorter metro and access reaches) or 1550 nm (lower attenuation, used for longer reaches and DWDM), or short-reach multimode for some access designs. Then compute the power budget from the transmitter launch power (datasheet minimum for design, measured values at turn-up) and the receiver sensitivity, and subtract connector/splice losses and fiber attenuation at the chosen wavelength. For standards alignment, use the IEEE 802.3 Ethernet Standard and vendor datasheet parameters when available.

What to calculate before you buy

- Transmit power (dBm) at the module output, from the transceiver datasheet.

- Receiver sensitivity (dBm) at the target BER, from the same datasheet.

- Margin for aging, temperature drift, and field cleaning variances (commonly 3 to 5 dB operational headroom).

- Connector and splice losses, based on the mated connector type and its measured cleaning condition; typical values range from 0.2 dB to 0.5 dB per mated pair when properly cleaned and polished, but field conditions can be worse.

- Fiber attenuation (dB/km) at the wavelength; single-mode values are typically around 0.35 dB/km at 1310 nm and 0.20 dB/km at 1550 nm, depending on fiber spec.
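The items above combine into a single subtraction: the module's power budget minus the expected plant loss gives the remaining margin. A minimal Python sketch, assuming every input comes from the datasheet or from your own loss assumptions; the class, function, and example numbers are hypothetical placeholders, not figures for any specific part.

```python
from dataclasses import dataclass


@dataclass
class LinkBudgetInputs:
    tx_min_dbm: float             # datasheet minimum launch power
    rx_sensitivity_dbm: float     # datasheet sensitivity at the target BER
    length_km: float
    attenuation_db_per_km: float  # at the chosen wavelength
    mated_pairs: int
    loss_per_pair_db: float
    splices: int
    loss_per_splice_db: float
    required_margin_db: float     # headroom for aging, temperature, and cleaning


def link_budget(p: LinkBudgetInputs) -> dict:
    """Compare the module's power budget with the expected plant loss."""
    available_db = p.tx_min_dbm - p.rx_sensitivity_dbm
    plant_loss_db = (p.length_km * p.attenuation_db_per_km
                     + p.mated_pairs * p.loss_per_pair_db
                     + p.splices * p.loss_per_splice_db)
    margin_db = available_db - plant_loss_db
    return {
        "available_db": available_db,
        "plant_loss_db": plant_loss_db,
        "margin_db": margin_db,
        "passes": margin_db >= p.required_margin_db,
    }


# Hypothetical 20 km single-mode link at 1310 nm.
example = LinkBudgetInputs(
    tx_min_dbm=-8.0, rx_sensitivity_dbm=-18.0,
    length_km=20.0, attenuation_db_per_km=0.35,
    mated_pairs=4, loss_per_pair_db=0.5,
    splices=2, loss_per_splice_db=0.1,
    required_margin_db=3.0,
)
result = link_budget(example)
print(result)  # margin is about 0.8 dB, so this link fails a 3 dB margin policy
```

Using the datasheet minimum launch power rather than a typical value makes the check reflect a worst-case module.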

How to interpret acceptance-test settings

In telecom turn-up, acceptance often uses BER testing, optical power verification, and, depending on the optics class, eye-diagram or OMA checks (direct-detect) or OSNR checks (DWDM and coherent). For DWDM or coherent solutions, you must also consider OSNR, dispersion, and channel spacing; for direct-detect SFP/SFP+/QSFP modules, received power and dispersion tolerance dominate. If your acceptance plan requires deterministic latency or strict alarm behavior, verify that the module supports the required management interface behavior and alarm thresholds.
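BER acceptance tests are often run as zero-error soaks, and the required duration follows from the target BER and a chosen confidence level. A minimal sketch, assuming independent bit errors (a Poisson model) and a test that passes only with zero errors; the 1e-12 target, 95% confidence, and 25 Gb/s rate are illustrative, and on FEC-protected links the threshold should come from your acceptance plan (for example, a pre-FEC BER limit).

```python
import math


def zero_error_bits(ber_target: float, confidence: float) -> float:
    """Bits that must pass error-free to claim BER <= ber_target at this confidence.

    Under a Poisson model, P(0 errors) = exp(-n * BER); solving
    exp(-n * BER) = 1 - confidence for n gives n = -ln(1 - confidence) / BER.
    """
    return -math.log(1.0 - confidence) / ber_target


def zero_error_duration_s(ber_target: float, confidence: float, rate_bps: float) -> float:
    """Soak duration needed at a given line rate."""
    return zero_error_bits(ber_target, confidence) / rate_bps


seconds = zero_error_duration_s(1e-12, 0.95, 25e9)
print(f"{seconds:.0f} s")  # prints "120 s"
```

Because the duration scales with 1/BER, each tenfold tighter target makes the soak ten times longer.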

Pro Tip: Many field failures are not “bad optics” but underestimated variance in connector cleanliness. In practice, a single contaminated LC/APC end face can add enough loss to push a marginal link below the receiver sensitivity threshold once temperature rises. Always include a cleaning verification workflow and budget an extra 1 to 2 dB of margin when commissioning new patches.

Choose the right module family: SFP, SFP+, QSFP, and coherent optics

Module selection is a three-axis constraint: electrical interface to the switch/router, optical performance to the link budget, and management/telemetry behavior to your NMS. For Ethernet and packet transport, common families include SFP, SFP+, QSFP, and QSFP28, each mapping to distinct line rates and lane counts. For long-haul transport, coherent pluggables or transponder solutions handle DWDM with advanced modulation formats and require different commissioning steps than direct-detect optics.

Module compatibility reality check

Even when a module is “optically compatible,” it can still fail if the host switch expects a specific electrical interface profile or DOM feature set. Always validate against the switch vendor’s supported optics list and confirm whether the platform checks vendor IDs or otherwise restricts third-party optics. For coherent systems, verify that the host supports the specific client interface (e.g., Ethernet, OTN) and that the transponder supports the required coding and FEC mode.
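One way to make the supported-list check repeatable is to record what your own lab has validated per host platform and firmware version, since host behavior can change across firmware releases. A minimal sketch; the platform names, firmware strings, and part numbers are hypothetical placeholders, not real products or any vendor's list.

```python
from typing import NamedTuple


class HostKey(NamedTuple):
    platform: str
    firmware: str


# Hypothetical validation matrix maintained by your own lab.
VALIDATED: dict[HostKey, set[str]] = {
    HostKey("agg-router-x", "7.2.1"): {"SFP28-LR-EXAMPLE", "QSFP28-LR4-EXAMPLE"},
    HostKey("agg-router-x", "7.3.0"): {"SFP28-LR-EXAMPLE"},
}


def is_validated(platform: str, firmware: str, part_number: str) -> bool:
    """True only if this exact part passed validation on this exact host and firmware."""
    return part_number in VALIDATED.get(HostKey(platform, firmware), set())


print(is_validated("agg-router-x", "7.2.1", "QSFP28-LR4-EXAMPLE"))  # True
print(is_validated("agg-router-x", "7.3.0", "QSFP28-LR4-EXAMPLE"))  # False
```

Keying on the firmware version means an upgrade does not silently inherit validation from the previous release.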

Key specification comparison for common direct-detect optics

Use this table as a sanity check, then replace values with the exact datasheet for the part number you plan to deploy. Telecom procurement should always capture wavelength, reach, connector type, temperature range, and power consumption because these affect thermal design and reliability.

| Module class | Typical wavelength | Reach class | Connector | Data rate | Typical power | Operating temperature | Notes |
|---|---|---|---|---|---|---|---|
| SFP | 1310/1550 nm (single-mode) | Up to ~80 km (model dependent) | LC | 1G / 2G | ~1 to 2.5 W | 0 to 70 C or -40 to 85 C | Often used in access and aggregation when port density is lower |
| SFP+ | 1310 nm (SM) or 850 nm (MM) | ~10 km (SM) or ~300 m (MM, OM3) | LC | 10G | ~1.5 to 3.5 W | 0 to 70 C or -40 to 85 C | Direct-detect; budget margin for connectors and patching |
| QSFP28 | 1310/1550 nm (SM) or 850 nm (MM) | ~10 km (SM) or up to ~100 km with specific models | LC (duplex) or MPO (parallel, e.g., SR4) | 100G (4 × 25G lanes) | ~3 to 6 W | 0 to 70 C or -40 to 85 C | Higher lane count increases thermal sensitivity in dense racks |
| Coherent pluggable or transponder | 1550 nm DWDM band | 80 km to 1000+ km (system dependent) | Depends on solution | 100G and above per channel (e.g., 100G, 200G, 400G) | ~8 to 25 W per channel (varies widely) | -5 to 70 C typical for many platforms | Requires OSNR and dispersion planning; different alarms and commissioning |

Fiber and connector choices: the hidden loss budget

In telecom deployments, the optical transceiver is rarely the weak link; the weak link is the fiber plant, connectors, and patching method. Connector insertion loss and return loss are not just theoretical numbers: they are affected by cleaning, ferrule condition, and whether you use APC vs UPC polish types in the right locations. When you standardize on connector types and enforce an inspection-based cleaning workflow, you reduce link bring-up variability and reduce rework cycles.

Single-mode vs multimode selection in telecom

Single-mode dominates long-haul and metro transport because its low attenuation and absence of modal dispersion support reaches of many kilometers, leaving chromatic dispersion as the main dispersion impairment to plan for at higher rates. Multimode can be cost-effective for short reach, but it becomes sensitive to launch conditions, modal bandwidth limits, and patching quality; this matters in high-rate systems where modal constraints tighten. If your telecom design includes a mix of legacy and new optics, confirm that the fiber plant meets the required OM grade and modal bandwidth specs and that the patch cords are consistently terminated.

Connector polish and return loss considerations

Use APC where you must control reflections and where the system design benefits from reduced back-reflection. Use UPC where the ecosystem expects it and where return loss requirements are met by the equipment. Field practice: label patch panels and enforce consistent connector types on each side of the link to avoid reflection-induced receiver errors or intermittent alarms.

Selection checklist for telecom procurement and field acceptance

When you are choosing optics and optical solutions under schedule pressure, use a decision checklist that aligns procurement, engineering, and field acceptance. This prevents the common failure pattern where a module “meets reach on paper” but fails in the installed plant because of management behavior, thermal limits, or connector loss variability.

- Distance and fiber type: confirm route length with as-built fiber counts and identify single-mode vs multimode.

- Wavelength and reach class: pick 1310 nm or 1550 nm for single-mode where appropriate; verify the exact reach for the part number.

- Switch or chassis compatibility: validate against the host vendor’s supported optics list and confirm electrical lane mapping.

- DOM and telemetry support: check whether the module provides digital optical monitoring and whether the host reads it consistently.

- Operating temperature and thermal headroom: ensure the module spec matches the rack’s ambient and airflow assumptions; QSFP28 density can raise local temperatures.

- Power consumption and PSU constraints: include optics power in thermal design; plan for worst-case conditions.

- Budget for aging margin: include connector re-cleaning variability and optical power drift over time.

- Vendor lock-in risk: evaluate third-party optics acceptance risk, including alarm threshold behavior and firmware interaction.

- Acceptance test plan: confirm what tests you will run (optical power, BER, eye metrics, OSNR for coherent) and what thresholds you will use.
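Several checklist items can be screened mechanically before a part reaches the lab. A minimal sketch covering reach, temperature range, total optics power, DOM, and supported-list status; the field names, example part, and site values are hypothetical, and a reach label is only a first filter, so the link budget must still be computed.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    part_number: str
    reach_km: float
    temp_min_c: float
    temp_max_c: float
    power_w: float
    has_dom: bool
    on_host_supported_list: bool


@dataclass
class Site:
    route_km: float
    ambient_min_c: float
    ambient_max_c: float          # worst-case air temperature at the module inlet
    ports: int
    optics_power_budget_w: float  # share of PSU/thermal budget allotted to optics


def checklist_findings(c: Candidate, s: Site) -> list[str]:
    """Return human-readable findings; an empty list means no screening issues."""
    findings = []
    if c.reach_km < s.route_km:
        findings.append(f"reach {c.reach_km} km is shorter than route {s.route_km} km")
    if c.temp_min_c > s.ambient_min_c or c.temp_max_c < s.ambient_max_c:
        findings.append("operating temperature range does not cover site ambient")
    total_w = c.power_w * s.ports
    if total_w > s.optics_power_budget_w:
        findings.append(f"optics draw {total_w:.1f} W exceeds {s.optics_power_budget_w:.1f} W budget")
    if not c.has_dom:
        findings.append("no DOM: host telemetry and alarms unavailable")
    if not c.on_host_supported_list:
        findings.append("not on host supported optics list; schedule validation")
    return findings


candidate = Candidate("SFP28-LR-EXAMPLE", reach_km=10.0, temp_min_c=0.0, temp_max_c=70.0,
                      power_w=1.2, has_dom=True, on_host_supported_list=False)
site = Site(route_km=7.2, ambient_min_c=5.0, ambient_max_c=45.0,
            ports=48, optics_power_budget_w=80.0)
findings = checklist_findings(candidate, site)
for finding in findings:
    print(finding)  # only the supported-list finding is reported here
```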

Common mistakes and troubleshooting patterns in optical turn-up

Below are concrete failure modes seen during telecom commissioning. Each includes a root cause and a corrective action that field teams can execute quickly.

Link down after installation despite correct part number

Root cause: connector contamination or a mismatched polish type, leading to excess insertion loss or back-reflection. Field inspection often reveals film, micro-scratches, or the wrong adapter type.

Solution: clean with a documented connector cleaning kit, inspect with a fiber scope, re-terminate only if ferrule damage is confirmed, and verify with measured optical power at the receiver.

High error counters that worsen with temperature

Root cause: marginal power budget with insufficient margin for aging and thermal drift, or thermal airflow issues in high-density QSFP28 deployments. The symptom appears stable at room temperature and degrades during peak load.

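Solution: re-measure optical power, compute actual margin from measured launch and receive power, improve airflow or module placement, and if needed replace the module with a variant that has a larger optical power budget (such as a longer-reach part), checking that short links do not exceed the receiver overload limit.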
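Computing actual margin from measured launch and receive power takes a couple of subtractions, plus a check of how far receive power falls during a thermal soak. A minimal sketch; the readings, sensitivity, and overload values are hypothetical, and DOM-reported powers are less accurate than a calibrated optical power meter, so confirm marginal results with a meter.

```python
def measured_margin(tx_dbm: float, rx_dbm: float,
                    rx_sensitivity_dbm: float, rx_overload_dbm: float) -> dict:
    """Plant loss and margin derived from measured launch and receive power."""
    return {
        "measured_loss_db": tx_dbm - rx_dbm,
        "margin_db": rx_dbm - rx_sensitivity_dbm,
        "overloaded": rx_dbm > rx_overload_dbm,
    }


def worst_drop_db(rx_readings_dbm: list[float]) -> float:
    """Largest fall in receive power relative to the first reading of a soak."""
    first = rx_readings_dbm[0]
    return max(first - r for r in rx_readings_dbm)


# Hypothetical receive-power readings sampled across a thermal soak.
soak = [-9.8, -10.1, -10.9, -11.2]
start = measured_margin(tx_dbm=-1.5, rx_dbm=soak[0],
                        rx_sensitivity_dbm=-14.4, rx_overload_dbm=0.5)
end_margin_db = start["margin_db"] - worst_drop_db(soak)
print(start, f"margin after worst drop: {end_margin_db:.1f} dB")
```

In this example the link starts with 4.6 dB of margin but ends the soak with about 3.2 dB, which is the figure to compare against the margin policy.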

Module not recognized or DOM alarms flooding the NMS

Root cause: incompatibility between module DOM implementation and host parsing expectations, or unsupported transceiver management behavior. Some hosts also enforce vendor ID checks.

Solution: verify transceiver compatibility against the host’s supported list, update host firmware if vendor documentation supports it, and check DOM alarm thresholds and polling intervals in the NMS.
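The SFF-8472 (SFP) and SFF-8636 (QSFP) management specifications define low/high alarm and warning thresholds for monitored values such as temperature, supply voltage, bias current, and optical power. A minimal sketch of classifying polled readings against such thresholds and ignoring single-sample spikes before raising an NMS event; the threshold values are hypothetical, and in practice they come from the module's threshold fields or your NMS policy.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    OK = 0
    WARNING = 1
    ALARM = 2


@dataclass(frozen=True)
class Thresholds:
    low_alarm: float
    low_warning: float
    high_warning: float
    high_alarm: float


def classify(value: float, t: Thresholds) -> Severity:
    """Map one reading to a severity using alarm and warning thresholds."""
    if value <= t.low_alarm or value >= t.high_alarm:
        return Severity.ALARM
    if value <= t.low_warning or value >= t.high_warning:
        return Severity.WARNING
    return Severity.OK


def debounced(samples: list[float], t: Thresholds, consecutive: int = 3) -> Severity:
    """Worst severity that persisted for `consecutive` polls in a row.

    Runs are counted per exact severity, so an isolated spike is ignored.
    """
    worst = Severity.OK
    run_severity, run_length = None, 0
    for value in samples:
        severity = classify(value, t)
        run_length = run_length + 1 if severity == run_severity else 1
        run_severity = severity
        if run_length >= consecutive:
            worst = max(worst, severity)
    return worst


# Hypothetical receive-power thresholds in dBm.
rx_thresholds = Thresholds(low_alarm=-16.0, low_warning=-14.0,
                           high_warning=0.0, high_alarm=2.0)
print(debounced([-13.0, -16.5, -13.0], rx_thresholds).name)        # OK
print(debounced([-13.5, -14.5, -14.6, -14.8], rx_thresholds).name)  # WARNING
```

Tuning `consecutive` together with the NMS polling interval sets how long a condition must persist before it generates an event.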

Coherent link shows intermittent loss of lock

Root cause: OSNR below target due to dirty optics or a poor DWDM channel plan, or unaccounted fiber impairments such as chromatic dispersion and polarization mode dispersion (PMD).

Solution: clean all patch points, validate channel mapping and power per channel, then run coherent diagnostics to confirm OSNR and impairment compensation settings.
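Checking power per channel and OSNR margin can be scripted once channel readings are exported from the platform. A minimal sketch; the channel numbers, readings, required OSNR, and tolerances are hypothetical, since required OSNR depends on modulation format, FEC, and the platform's own specification.

```python
from statistics import mean


def channel_findings(channels: dict[int, tuple[float, float]], required_osnr_db: float,
                     osnr_margin_db: float = 2.0,
                     power_tolerance_db: float = 1.5) -> list[str]:
    """Flag channels whose power strays from the mean or whose OSNR margin is short.

    `channels` maps a channel number to (power_dbm, osnr_db). The mean is taken
    over dBm values, which is adequate for a flatness check but is not total power.
    """
    avg_power = mean(power for power, _ in channels.values())
    findings = []
    for ch, (power_dbm, osnr_db) in sorted(channels.items()):
        if abs(power_dbm - avg_power) > power_tolerance_db:
            findings.append(f"ch{ch}: power {power_dbm:+.1f} dBm vs mean {avg_power:+.1f} dBm")
        if osnr_db < required_osnr_db + osnr_margin_db:
            findings.append(f"ch{ch}: OSNR {osnr_db:.1f} dB below required plus margin")
    return findings


# Hypothetical readings: channel -> (power in dBm, OSNR in dB).
readings = {21: (-2.0, 24.5), 22: (-2.3, 23.8), 23: (-4.6, 16.2), 24: (-1.9, 24.1)}
findings = channel_findings(readings, required_osnr_db=15.0)
for finding in findings:
    print(finding)  # both findings concern channel 23
```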

Deployment scenario: metro aggregation with 25G over single-mode

Consider a 3-tier metro data network with leaf-spine aggregation, where each top-of-rack switch uplinks to aggregation using 25G SFP28. The build uses single-mode fiber with as-built distances of 7.2 km, and each link includes 2 connectors per end plus 2 splices in the route. Engineers select 25G single-mode optics rated for the required reach, then enforce a margin policy of at least 3 dB beyond the computed budget. During acceptance, the team verifies receiver optical power and monitors error counters over a 30-minute thermal stabilization window to catch temperature-sensitive marginal links.
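As a rough check of this scenario, assume 0.35 dB/km at 1310 nm, 0.5 dB per mated pair (the pessimistic end of the range given earlier), four mated pairs in total (reading "2 connectors per end" as two mated pairs at each end), an assumed 0.1 dB per fusion splice, and the 3 dB margin policy:

$$
\begin{aligned}
L_{\text{plant}} &= 7.2\,\text{km} \times 0.35\,\tfrac{\text{dB}}{\text{km}} + 4 \times 0.5\,\text{dB} + 2 \times 0.1\,\text{dB} = 2.52 + 2.00 + 0.20 = 4.72\,\text{dB} \\
B_{\text{required}} &= L_{\text{plant}} + 3\,\text{dB} = 7.72\,\text{dB}
\end{aligned}
$$

Under these assumptions, the selected part's datasheet power budget (minimum launch power minus receiver sensitivity) should be at least about 7.7 dB; check the part against that number rather than relying on its reach label alone.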

In practice, the biggest schedule risk is rework caused by patch panel cleanliness and inconsistent adapter types. A disciplined inspection workflow plus a pre-commissioned fiber cleaning SOP prevents most “works in lab, fails in field” outcomes. For standards context on Ethernet physical layer expectations, align your acceptance criteria with the IEEE 802.3 Ethernet physical layer specifications, and use ITU-T recommendations for fiber and optical transport parameters.

Cost and ROI note: transceiver price is only part of total cost

Typical street prices vary by region, volume, and whether you choose OEM vs third-party. As a realistic planning range, direct-detect SFP/SFP+ modules often land in the tens to low hundreds of dollars per unit, while QSFP28 single-mode options and higher-temperature variants can be higher; coherent solutions can be several times more per channel due to optics and DSP complexity. TCO is dominated by installation labor, re-clean/rework cycles, and downtime risk from marginal links, not only the purchase price.

OEM optics reduce integration risk when switch vendors enforce strict compatibility, while third-party optics can cut capex but increase validation time and acceptance effort. If your operation has strong testing automation and a reliable DOM monitoring workflow, third-party can be cost-effective; if you need fast field turn-up with minimal rework, OEM or tightly validated third-party is usually the safer ROI. For the module management interfaces that carry DOM telemetry, see the SFF specifications maintained by SNIA (for example, SFF-8472 for SFP/SFP+ and SFF-8636 for QSFP28).
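The TCO point can be made concrete with an expected-cost model that adds validation, installation, and expected rework to purchase price. A minimal sketch; every price, probability, and labor figure below is a placeholder to be replaced with your own data, not a market price.

```python
from dataclasses import dataclass


@dataclass
class OpticsOption:
    name: str
    unit_price: float          # purchase price per module
    validation_cost: float     # one-time lab validation effort
    rework_probability: float  # expected fraction of links needing a rework visit
    rework_cost: float         # labor and travel per rework visit


def expected_cost(option: OpticsOption, links: int, install_cost_per_link: float) -> float:
    """Expected total cost, assuming one module at each end of every link."""
    modules = 2 * links
    return (option.validation_cost
            + modules * option.unit_price
            + links * install_cost_per_link
            + links * option.rework_probability * option.rework_cost)


# Placeholder inputs only.
oem = OpticsOption("oem", unit_price=400.0, validation_cost=0.0,
                   rework_probability=0.02, rework_cost=600.0)
third_party = OpticsOption("third-party", unit_price=120.0, validation_cost=15000.0,
                           rework_probability=0.05, rework_cost=600.0)
for option in (oem, third_party):
    print(option.name, expected_cost(option, links=200, install_cost_per_link=150.0))
```

Raising `rework_probability`, `rework_cost`, or `validation_cost` shows how quickly marginal links and validation effort erode a lower unit price.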

FAQ

What optical solution should I prioritize for a telecom metro link?

Start with direct-detect single-mode optics if your rate and reach fit the power budget, then standardize connector types and cleaning procedures. If you need dense DWDM capacity or very long reach, shift to coherent or transponder solutions and budget for OSNR and impairment planning.

How do DOM and telemetry affect module selection?

DOM determines whether the host can read temperature, bias, and optical power alarms consistently. If your NMS relies on those alarms for auto-isolation, verify that the module-host pair behaves correctly under firmware versions you will deploy.

Is multimode still viable for telecom access networks?

It can be viable for short reach and controlled patching environments, especially when you inherit a compliant multimode plant. For higher-rate optics, multimode becomes more sensitive to patch cord quality and launch conditions, so you must validate the installed plant, not just the fiber spec.

What temperature range matters most in field deployments?

The module operating temperature range matters, but also the rack’s local ambient and airflow. QSFP28 and higher-density optics can experience local hotspots, so validate thermal headroom using worst-case load and measured air temperatures.

What is the fastest way to troubleshoot a marginal link?

Measure optical receive power at the host, then inspect and clean both ends using a scope-based workflow. If power is adequate yet errors persist, check patching polarity, verify switch optics compatibility, and confirm that the host is not applying an unexpected FEC or signaling mode.

How do I avoid vendor lock-in risk?

Use a compatibility validation program: test the specific third-party part numbers in the specific switch/firmware versions you run. Also define acceptance criteria that include DOM alarm behavior and BER/eye metrics where available.

If you treat optics selection as an engineered system—power budget, compatibility, and connector discipline—you reduce rework and shorten acceptance cycles. Next, review optical link budgeting and fiber connector cleaning best practices to tighten your installation workflow and improve first-pass success.

Author bio: I have deployed and commissioned optical transport links in metro and data center environments, validating transceiver DOM telemetry and BER acceptance under live operating conditions. My work focuses on reproducible link budgets, connector cleanliness controls, and standards-aligned troubleshooting workflows.