Open RAN deployments fail quietly when components are “compatible on paper” but misaligned on timing, fronthaul transport, management models, or compliance profiles. This technical guide helps enterprise and service-provider architects evaluate RU, DU, CU, OAM, and transport choices with measurable validation steps. It is written for IT directors, network engineers, and integration leads who need governance controls, budget realism, and an ROI-backed approach to multi-vendor Open RAN.

Compatibility is not a checklist: define the interface contracts first

Open RAN is built on layered interfaces, and vendor claims often mix capabilities at different layers. Your first governance task is to translate marketing feature lists into testable interface contracts: functional behavior, performance envelopes, and operational semantics. In practice, compatibility breaks down in three areas: radio unit fronthaul requirements, timing and synchronization, and management and orchestration expectations. If you lock these contracts early, you reduce integration risk and avoid re-spins of transport and timing designs.

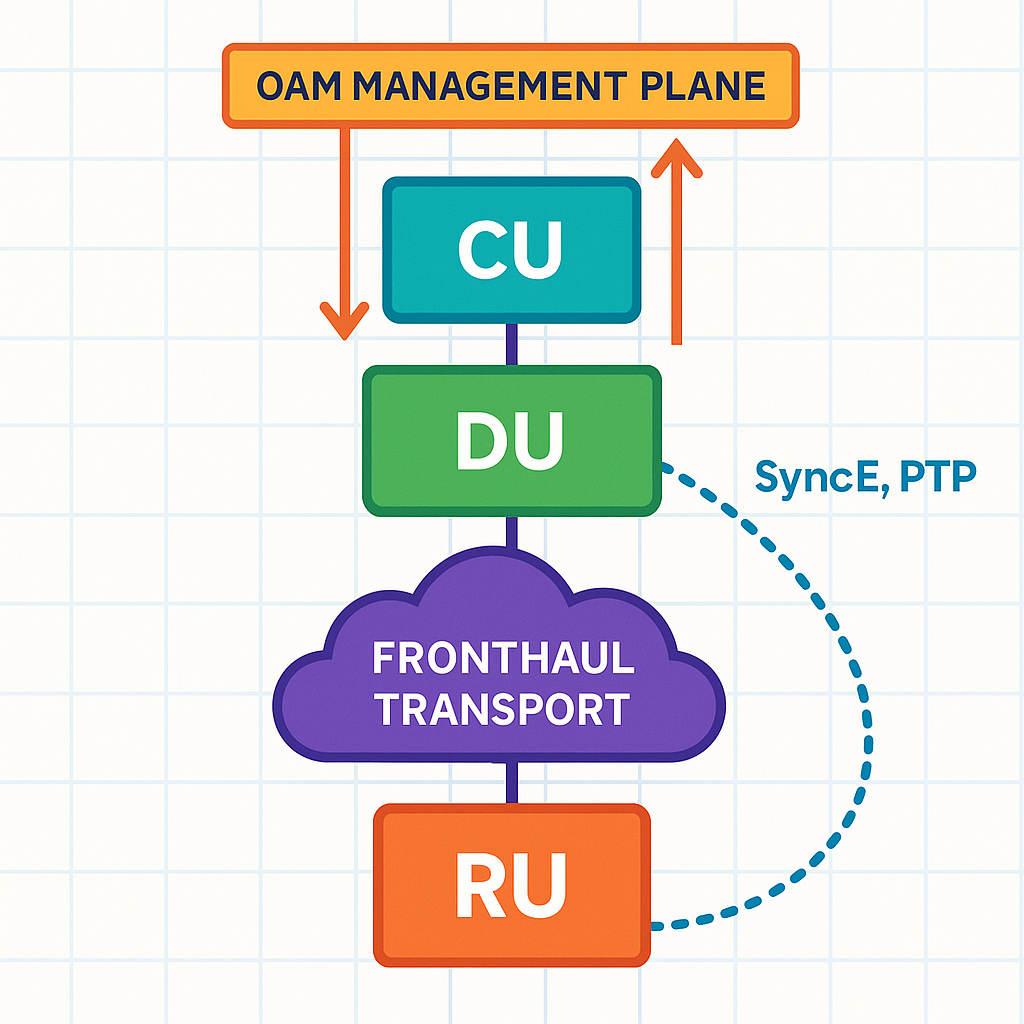

Map the stack: RU, DU, CU, transport, timing, and OAM

Start by drawing a reference architecture with explicit boundaries. For each boundary, list the interface technology, expected data rates, latency budgets, and control-plane dependencies. A typical integration scope includes: RU to DU fronthaul (the O-RAN C-Plane, U-Plane, S-Plane, and M-Plane), DU to CU split (the 3GPP F1 interface, with control-plane F1-C and user-plane F1-U), transport fabric (packetization, QoS, and redundancy), timing (SyncE and PTP), and OAM (fault, configuration, performance, and security). Then attach acceptance criteria that can be verified with packet captures, logs, and field measurements.
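One way to keep these boundaries testable is to record each one as a structured contract. The sketch below is a hypothetical Python data model: the `InterfaceContract` class, its field names, and the example values are illustrative assumptions, not taken from any O-RAN specification. It simply flags boundaries that do not yet have an acceptance criterion attached.

```python
from dataclasses import dataclass, field


@dataclass
class InterfaceContract:
    """One boundary in the reference architecture (hypothetical schema)."""
    boundary: str                 # e.g. "RU-DU fronthaul"
    technology: str               # interface technology at this boundary
    data_rate_gbps: float         # expected sustained data rate
    latency_budget_us: float      # one-way latency budget for this boundary
    control_dependencies: list[str] = field(default_factory=list)
    acceptance_criteria: list[str] = field(default_factory=list)


def missing_criteria(contracts: list[InterfaceContract]) -> list[str]:
    """Return boundaries that cannot be verified yet because no acceptance
    criterion (packet capture, log, or field measurement) is attached."""
    return [c.boundary for c in contracts if not c.acceptance_criteria]


# Placeholder values only; vendors must supply the real figures.
contracts = [
    InterfaceContract(
        boundary="RU-DU fronthaul",
        technology="packetized fronthaul over Ethernet",
        data_rate_gbps=25.0,
        latency_budget_us=100.0,
        control_dependencies=["PTP", "SyncE"],
        acceptance_criteria=["capture shows the configured compression mode"],
    ),
    InterfaceContract(
        boundary="DU-CU split",
        technology="packet transport with deterministic QoS",
        data_rate_gbps=10.0,
        latency_budget_us=1000.0,
    ),
]

print(missing_criteria(contracts))  # ['DU-CU split']
```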

Use standards as the baseline, not the end state

Standards define the “minimum viable interoperability,” but real networks add constraints: jitter tolerance, packet loss handling, and operational tooling. For example, IEEE timing specifications such as IEEE 1588 (Precision Time Protocol) matter because Open RAN relies on precise frequency and phase alignment for radio performance. Use IEEE references as baseline requirements and validate them against vendor implementation notes [Source: IEEE Standards].

Pro Tip: In multi-vendor Open RAN trials, treat timing as a first-class dependency. We have seen “link up” success while the system later failed under load because PTP boundary clock placement introduced microbursts that violated the RU fronthaul jitter tolerance. Always validate with traffic at the offered load you expect in production, not with idle links.

Technical compatibility matrix: RU to DU fronthaul and DU to CU split

The most visible integration risk is fronthaul mismatch. Even when the same functional split is selected, vendors may implement different transport mappings, compression modes, or buffer strategies. This section provides a practical compatibility matrix you can convert into a procurement requirement and an acceptance test plan.

What to verify at the RU to DU boundary

At the RU to DU boundary, confirm the supported fronthaul options, the required line rate and oversubscription assumptions, the supported encapsulation, and the exact timing inputs (SyncE and PTP profile support). Check whether the RU expects a specific PRB count and symbol rate, and whether it supports the same compression or IQ transport method in your selected mode. Also verify the RU’s behavior under packet loss and reordering; these behaviors often surface only during traffic stress tests.
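To sanity-check the required line rate, a rough U-Plane estimate multiplies resource elements per second by the IQ bit width. The sketch assumes a 100 MHz carrier at 30 kHz subcarrier spacing (273 PRBs, 14 symbols per slot, 2,000 slots per second), 9-bit block floating point mantissas, and 4 spatial layers. It ignores compression parameters, eCPRI/Ethernet headers, and C-Plane traffic, so treat the result as a per-direction lower bound to compare against vendor figures, not as a dimensioning rule.

```python
def uplane_rate_gbps(prbs: int, symbols_per_slot: int, slots_per_second: int,
                     iq_bit_width: int, layers: int) -> float:
    """Rough U-Plane payload rate in Gbit/s for one direction.

    Excludes headers, compression-parameter overhead, and C-Plane traffic.
    """
    subcarriers = prbs * 12                                   # 12 subcarriers per PRB
    res_per_second = subcarriers * symbols_per_slot * slots_per_second
    bits_per_second = res_per_second * 2 * iq_bit_width * layers  # I and Q samples
    return bits_per_second / 1e9


# Assumed example: 100 MHz at 30 kHz SCS, 9-bit BFP mantissas, 4 layers.
rate = uplane_rate_gbps(prbs=273, symbols_per_slot=14, slots_per_second=2000,
                        iq_bit_width=9, layers=4)
print(f"{rate:.2f} Gbit/s")  # 6.60 Gbit/s
```

Adding header overhead and headroom for bursts on top of this figure is what turns it into a port-speed and oversubscription decision.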

What to verify at the DU to CU boundary

At the DU to CU boundary, confirm the functional split, the supported control-plane messaging, and how the DU exposes management and telemetry. Validate whether the DU and CU releases meet the same E2 interface expectations for the near-real-time RAN Intelligent Controller (RIC), and check how alarms and performance metrics are modeled. If you plan near-real-time control loops, verify the latency and jitter tolerance between the DU and CU, and ensure your transport provides deterministic QoS.
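A minimal way to turn "verify latency and jitter tolerance" into a pass/fail result is to summarize one-way latency samples collected during a load test. The sketch assumes you already have per-packet one-way latencies in microseconds (for example, from timestamped captures). The budgets, the nearest-rank p99, and the use of peak-to-peak variation as the jitter metric are illustrative assumptions; vendors define their own thresholds and metrics.

```python
import math
import statistics


def latency_report(samples_us: list[float], budget_us: float, jitter_budget_us: float) -> dict:
    """Summarize one-way latency samples (microseconds) against latency and jitter budgets."""
    if not samples_us:
        raise ValueError("no latency samples collected")
    ordered = sorted(samples_us)
    # Nearest-rank 99th percentile of the observed latencies.
    p99 = ordered[max(0, math.ceil(0.99 * len(ordered)) - 1)]
    # Peak-to-peak delay variation, one simple (assumed) jitter metric.
    jitter = ordered[-1] - ordered[0]
    return {
        "mean_us": statistics.fmean(ordered),
        "p99_us": p99,
        "jitter_us": jitter,
        "pass": p99 <= budget_us and jitter <= jitter_budget_us,
    }


# Placeholder samples and budgets; real thresholds come from the DU/CU vendors.
report = latency_report([100, 102, 101, 99, 140], budget_us=150, jitter_budget_us=30)
print(report)  # fails: 41 us of peak-to-peak jitter exceeds the 30 us budget
```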

Key technical specifications comparison table

Use the following table as a starting template for your compatibility matrix. Values are representative of what engineers test in the field; your vendors must supply exact figures in datasheets and integration test documents.

| Interface / Component Boundary | Primary Parameters to Match | Typical Target Range for Validation | Connector / Transport Detail | Operating Temperature Range | What Breaks Compatibility |
|---|---|---|---|---|---|
| RU to DU fronthaul (user plane) | Line rate, encapsulation, IQ transport mode, compression, jitter tolerance | Validate at configured load; jitter and packet loss thresholds are vendor-specific | Packetized transport over Ethernet; QoS required | -40 °C to +55 °C (typical for outdoor RUs; confirm per model) | Different compression modes, timing intolerance, buffer strategy mismatch |
| Timing inputs (SyncE / PTP) | PTP profile support, boundary clock behavior, traceability, holdover | Measure stability under link stress; verify bounded jitter | Ethernet timing distribution | 0 °C to 50 °C (typical for timing-aware appliances) | Wrong PTP domain, clock hierarchy mismatch, poor boundary clock placement |
| DU to CU split (control and user plane) | Functional split, control-plane messaging, telemetry model | Latency budget per vendor split; validate end to end | Packet transport with deterministic QoS | 0 °C to 45 °C (typical for DU/CU servers) | Split mismatch, incompatible telemetry schema, control-plane timeouts |
| OAM and orchestration | Management APIs, software release compatibility, alarm mapping | Validate upgrade path and rollback behavior | NETCONF/YANG (O-RAN M-Plane), REST/gRPC models, plus event streaming | 0 °C to 40 °C (typical for management VMs) | Different versioned schemas, missing rollback hooks, inconsistent RBAC |

Governance controls that prevent “it works in the lab” failures

Compatibility is both technical and contractual. A mature governance program defines how you select releases, run interoperability tests, track known issues, and control change across vendors. Without these controls, an upgrade on one side can silently invalidate the compatibility assumptions of the other side.

Release and version governance

Require vendors to provide a compatibility statement by software release, not only by hardware model. In our integration work, we have seen “same hardware, different firmware” change fronthaul buffer behavior and break packet loss recovery. Establish a release matrix with: RU firmware version, DU software build, CU software build, OAM version, and transport switch firmware. Then enforce a change-control gate that blocks upgrades unless interoperability test evidence is attached.
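The release matrix and change-control gate can be expressed as data plus a single check. In this sketch the component keys, version strings, and report IDs are hypothetical; the point is that an upgrade is blocked unless the exact combination it would produce already has interoperability evidence attached.

```python
# A release matrix normalized into a hashable, order-independent key.
ReleaseMatrix = tuple[tuple[str, str], ...]


def matrix_key(releases: dict[str, str]) -> ReleaseMatrix:
    return tuple(sorted(releases.items()))


# Validated combinations mapped to the (hypothetical) test report that covers them.
VALIDATED: dict[ReleaseMatrix, str] = {
    matrix_key({"ru_fw": "2.1.0", "du_sw": "5.4", "cu_sw": "5.4",
                "oam": "3.0", "switch_fw": "10.2"}): "IOT-REPORT-017",
}


def gate_upgrade(current: dict[str, str], component: str, new_version: str) -> tuple[bool, str]:
    """Allow an upgrade only if the resulting full matrix has test evidence."""
    proposed = dict(current, **{component: new_version})
    evidence = VALIDATED.get(matrix_key(proposed))
    if evidence is None:
        return False, f"blocked: no interoperability evidence for {component}={new_version}"
    return True, f"allowed: covered by {evidence}"


current = {"ru_fw": "2.0.3", "du_sw": "5.4", "cu_sw": "5.4", "oam": "3.0", "switch_fw": "10.2"}
print(gate_upgrade(current, "ru_fw", "2.1.0"))  # allowed: the resulting matrix was tested
print(gate_upgrade(current, "du_sw", "5.5"))    # blocked: untested combination
```

Keying on the whole matrix rather than on a single component is deliberate: a DU build that passed with one RU firmware says nothing about another.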

Test plan governance: from lab proof to acceptance criteria

Define test cases that cover: link bring-up, baseline throughput, PRACH/traffic patterns that stress timing, controlled packet loss, and failover behavior. For packet loss and jitter, run impairment tests at the transport layer and confirm RU and DU recover without long-lasting degradation. For OAM, validate that alarms map correctly and that configuration changes propagate within your operational SLA.
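For transport-layer impairment, one common lab approach (an assumption here, not something vendors mandate) is a Linux host bridging the link under test with the `tc` netem queueing discipline. The sketch only builds the command line; the interface name and impairment values are placeholders, and the command needs root privileges on a lab bridge, never on production transport. Note that netem delay variation can also reorder packets, which exercises the reordering behavior mentioned above.

```python
import shlex


def netem_command(dev: str, delay_us: int, jitter_us: int, loss_pct: float) -> list[str]:
    """Build a `tc qdisc replace ... netem` command adding delay, delay variation, and random loss."""
    return [
        "tc", "qdisc", "replace", "dev", dev, "root", "netem",
        "delay", f"{delay_us}us", f"{jitter_us}us",
        "loss", f"{loss_pct}%",
    ]


# Placeholder interface and values for an impairment step in the test plan.
cmd = netem_command("eth1", delay_us=50, jitter_us=10, loss_pct=0.1)
print(shlex.join(cmd))
# In the lab: subprocess.run(cmd, check=True); clean up with `tc qdisc del dev eth1 root`.
```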

Operational telemetry as a compatibility requirement

Two vendors can interoperate while still being operationally incompatible. If telemetry events cannot be correlated across RU, DU, CU, and transport, your operations team will lose time during incidents. Require standardized metric naming, consistent timestamps, and the ability to export logs to your SIEM or monitoring platform with stable schemas. Tie this into governance by requiring “observability coverage” targets during acceptance testing.
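Correlation across RU, DU, CU, and transport depends on consistent timestamps. The sketch below shows one hypothetical approach: normalize every event to UTC, sort, and group events that fall within a fixed window of each other. The event fields, source names, and the 2-second window are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone


def correlate(events: list[dict], window: timedelta) -> list[list[dict]]:
    """Group events whose UTC timestamps fall within `window` of the previous event in the group.

    Timestamps must be ISO 8601 with a UTC offset; naive values would be read as local time.
    """
    normalized = sorted(
        (dict(e, ts=datetime.fromisoformat(e["ts"]).astimezone(timezone.utc)) for e in events),
        key=lambda e: e["ts"],
    )
    groups: list[list[dict]] = []
    for event in normalized:
        if groups and event["ts"] - groups[-1][-1]["ts"] <= window:
            groups[-1].append(event)
        else:
            groups.append([event])
    return groups


# Hypothetical events; the RU reports local time with a +02:00 offset.
events = [
    {"source": "transport", "ts": "2024-05-01T10:00:00.200+00:00", "name": "link_flap"},
    {"source": "ru", "ts": "2024-05-01T12:00:01.000+02:00", "name": "sync_lost"},
    {"source": "du", "ts": "2024-05-01T10:00:01.500+00:00", "name": "cell_down"},
    {"source": "cu", "ts": "2024-05-01T10:05:00+00:00", "name": "config_change"},
]
for group in correlate(events, window=timedelta(seconds=2)):
    print([e["source"] for e in group])
# ['transport', 'ru', 'du']
# ['cu']
```

Without offset-aware timestamps, the RU alarm would appear two hours away from the link flap that caused it.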

Selection criteria checklist for multi-vendor Open RAN programs

Procurement and architecture decisions should follow a repeatable order of operations. This ordered decision checklist is what we use to reduce integration risk while protecting budget and timeline.

- Distance and latency budgets: quantify end-to-end latency and jitter tolerance across transport, including any additional hops introduced by firewalls, aggregation layers, or routing domains.

- Timing strategy compatibility: confirm SyncE and PTP support, profile behavior, boundary clock placement, and operational holdover expectations.

- Fronthaul transport mapping: verify encapsulation, compression or IQ transport mode, QoS marking behavior, and packet loss recovery characteristics.

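- Switch and NIC compatibility: confirm that your top-of-rack and aggregation switches support the required QoS queues, ECN behavior (if used), and consistent packet scheduling under load, and that DU server NICs support the timing features your design relies on, such as hardware PTP timestamping.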

- Release matrix alignment: require vendor release compatibility statements across RU, DU, CU, OAM, and any middleware.

- DOM and diagnostics support: if you use SFP or QSFP optics in fronthaul paths, ensure the platform exposes DOM (digital optical monitoring) readings, such as temperature and bias current, for maintenance workflows.

- Operating temperature and power envelope: confirm RU outdoor temperature ranges, shock/vibration ratings where applicable, and power draw under worst-case traffic patterns.

- Vendor lock-in risk: evaluate how tightly the orchestration layer couples to specific vendor APIs and whether you can run parallel validation before committing.

- Security and RBAC model compatibility: confirm identity integration, certificate rotation processes, and audit log export format consistency.

- Upgrade and rollback economics: estimate downtime impact, test effort, and whether rollback is supported without full redeploy.
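For the distance and latency item, a worked budget helps: light in standard single-mode fiber travels at roughly 5 µs per km, and every switch, router, or firewall hop adds forwarding and queuing delay. The sketch sums those contributions; the per-hop delays and the 100 µs budget are placeholders to replace with measured switch figures and the vendor's fronthaul latency window.

```python
FIBER_US_PER_KM = 5.0  # approximate one-way propagation delay in single-mode fiber


def one_way_latency_us(fiber_km: float, hop_delays_us: list[float]) -> float:
    """Fiber propagation plus per-hop forwarding/queuing delay, in microseconds."""
    return fiber_km * FIBER_US_PER_KM + sum(hop_delays_us)


def fits_budget(fiber_km: float, hop_delays_us: list[float], budget_us: float) -> bool:
    return one_way_latency_us(fiber_km, hop_delays_us) <= budget_us


# Placeholder example: 12 km of fiber plus an aggregation switch and a firewall hop.
hops = [5.0, 20.0]
print(one_way_latency_us(12, hops), fits_budget(12, hops, budget_us=100.0))  # 85.0 True
print(fits_budget(20, hops, budget_us=100.0))  # False: 125 us exceeds the budget
```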

Cost, TCO, and ROI: where Open RAN compatibility decisions pay off

Open RAN can reduce vendor lock-in and increase deployment flexibility, but compatibility work has real cost. In a typical pilot, integration labor dominates early spend: lab time, impairment testing, and release qualification. For production, TCO depends on operational efficiency: how quickly incidents are diagnosed, how reliable upgrades are, and how much vendor support you need during outages.

Realistic cost ranges and procurement trade-offs

Pricing varies widely by region and scale, but for budgeting you can assume: RU hardware and radios represent a major capex share; DU/CU compute can be purchased as appliances or as software on standardized servers; OAM and orchestration tooling adds both capex and recurring licensing costs. In field programs, we often see that third-party components are cost-effective only when compatibility validation is thorough. If you skip validation, the “savings” can evaporate under delay penalties and extended support contracts.

From a power and operations standpoint, multi-vendor deployments can either improve ROI or erode it. The ROI improves when you select components with mature telemetry, predictable upgrades, and transport designs that avoid overprovisioning. The ROI erodes when compatibility problems require repeated engineering cycles and when transport layers become complex enough to raise maintenance cost.

Governance-driven ROI model we use: quantify integration effort in person-weeks per release, add the probability-weighted cost of rollback, and compare that against the expected savings from avoiding a single-vendor premium. This turns “compatibility” from a vague risk into a measurable business case.
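The ROI model can be written as a short calculation. Every input below (releases per year, person-weeks, loaded labor cost, rollback probability and cost, and the single-vendor premium) is a hypothetical placeholder in your budget currency; the structure mirrors the three steps described: integration effort per release, probability-weighted rollback cost, and comparison with the avoided premium.

```python
def multivendor_net_savings(
    releases_per_year: int,
    person_weeks_per_release: float,
    cost_per_person_week: float,
    rollback_probability: float,
    rollback_cost: float,
    single_vendor_premium: float,
) -> float:
    """Annual savings from avoiding a single-vendor premium, net of integration
    effort and expected rollback cost. A positive result favors multi-vendor."""
    integration_cost = releases_per_year * person_weeks_per_release * cost_per_person_week
    expected_rollback_cost = releases_per_year * rollback_probability * rollback_cost
    return single_vendor_premium - (integration_cost + expected_rollback_cost)


# Hypothetical inputs for illustration only.
net = multivendor_net_savings(
    releases_per_year=4,
    person_weeks_per_release=6,
    cost_per_person_week=4_000,
    rollback_probability=0.25,
    rollback_cost=50_000,
    single_vendor_premium=250_000,
)
print(f"Net annual savings: {net:,.0f}")  # Net annual savings: 104,000
```

Rerunning the function with pessimistic person-week and rollback estimates shows how much validation overrun the business case can absorb.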

Common mistakes and troubleshooting tips in Open RAN compatibility

Field failures usually share the same root causes: timing misalignment, fronthaul transport mismatch, or release and telemetry incompatibility. Below are concrete pitfalls that teams encounter during integration and what to do about them.

Pitfall 1: PTP domain or boundary clock placement mismatch

Root cause: The RU or DU expects a specific clock hierarchy and PTP domain configuration. A boundary clock placed incorrectly can introduce latency variation that only appears under load. Solution: verify PTP domain numbers, clock types (grandmaster, boundary, or transparent clock), and servo behavior with packet-level inspection. Then run an impairment test while generating sustained fronthaul traffic to confirm jitter stays within vendor thresholds.
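Packet-level inspection of PTP can start with the 34-byte common message header of PTPv2 (IEEE 1588): the message type sits in the low nibble of byte 0, the version in the low nibble of byte 1, and the domain number in byte 4. The sketch parses raw PTP payloads (for example, extracted from captured EtherType 0x88F7 frames) and flags domain mismatches. The expected domain of 24 is an assumption matching the default of the ITU-T G.8275.1 telecom profile; use whatever domain your timing design specifies.

```python
import struct

PTP_MESSAGE_TYPES = {
    0x0: "Sync", 0x1: "Delay_Req", 0x2: "Pdelay_Req", 0x3: "Pdelay_Resp",
    0x8: "Follow_Up", 0x9: "Delay_Resp", 0xA: "Pdelay_Resp_Follow_Up",
    0xB: "Announce", 0xC: "Signaling", 0xD: "Management",
}


def parse_ptp_header(payload: bytes) -> dict:
    """Decode the PTPv2 common-header fields needed for domain and source checks."""
    if len(payload) < 34:
        raise ValueError("payload shorter than the 34-byte PTPv2 common header")
    msg_type = payload[0] & 0x0F
    (length,) = struct.unpack_from("!H", payload, 2)
    clock_identity = payload[20:28].hex()                # sourcePortIdentity.clockIdentity
    port_number, sequence_id = struct.unpack_from("!HH", payload, 28)
    return {
        "type": PTP_MESSAGE_TYPES.get(msg_type, f"reserved(0x{msg_type:X})"),
        "version": payload[1] & 0x0F,
        "length": length,
        "domain": payload[4],
        "source": f"{clock_identity}-{port_number}",
        "sequence_id": sequence_id,
    }


def domain_mismatches(payloads: list[bytes], expected_domain: int = 24) -> list[dict]:
    """Return headers whose domainNumber differs from the expected domain."""
    headers = (parse_ptp_header(p) for p in payloads)
    return [h for h in headers if h["domain"] != expected_domain]


def make_header(msg_type: int, domain: int, seq: int) -> bytes:
    """Synthetic header-only message for illustration; real input comes from a capture."""
    header = bytearray(34)
    header[0] = msg_type
    header[1] = 2                                        # versionPTP = 2
    struct.pack_into("!H", header, 2, len(header))
    header[4] = domain
    header[20:28] = bytes.fromhex("001122fffe334455")    # hypothetical clockIdentity
    struct.pack_into("!HH", header, 28, 1, seq)
    return bytes(header)


sample = [make_header(0xB, 24, 1), make_header(0x0, 0, 2)]
for h in domain_mismatches(sample):
    print(h["type"], "on domain", h["domain"], "from", h["source"])
# Sync on domain 0 from 001122fffe334455-1
```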

Pitfall 2: Fronthaul encapsulation or compression mode not identical

Root cause: Vendors may support multiple fronthaul modes, but the deployment selects one implicitly via configuration defaults. The DU might packetize differently than the RU expects, leading to intermittent decode errors. Solution: enforce explicit configuration for encapsulation and compression modes, and confirm with captured traffic that the RU parses the expected fields. Require vendor-side validation evidence for the exact RU and DU release combination.
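Enforcing explicit configuration is easier when both sides' effective settings are compared field by field and any setting left to a default is treated as a finding. The sketch assumes you can export RU and DU fronthaul settings as key/value pairs; the key names (`compression_method`, `iq_bit_width`, and so on) and values are hypothetical and do not correspond to any specific vendor's data model.

```python
# Hypothetical settings that must be configured explicitly on both ends.
REQUIRED_KEYS = ("encapsulation", "compression_method", "iq_bit_width", "vlan_id")


def fronthaul_findings(ru: dict, du: dict, required=REQUIRED_KEYS) -> list[str]:
    """List settings that are implicit (missing on either side) or that differ."""
    findings = []
    for key in required:
        if key not in ru or key not in du:
            findings.append(f"{key}: not set explicitly on both RU and DU")
        elif ru[key] != du[key]:
            findings.append(f"{key}: RU={ru[key]!r} DU={du[key]!r}")
    return findings


ru_cfg = {"encapsulation": "ecpri", "compression_method": "bfp", "iq_bit_width": 9, "vlan_id": 100}
du_cfg = {"encapsulation": "ecpri", "compression_method": "bfp", "iq_bit_width": 14}
for finding in fronthaul_findings(ru_cfg, du_cfg):
    print(finding)
# iq_bit_width: RU=9 DU=14
# vlan_id: not set explicitly on both RU and DU
```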

Pitfall 3: QoS and scheduling behavior differ from what the RU timing budget assumes

Root cause: Even if the physical link is fine, switch queue behavior can starve fronthaul packets during bursts. This often happens when QoS policies were tuned for normal data traffic rather than time-sensitive fronthaul. Solution: implement and verify QoS mapping end-to-end, then measure queue latency and packet drops during controlled bursts. Validate redundancy behavior during link failover.
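End-to-end QoS verification can be reduced to checking that fronthaul frames keep their intended marking at every capture point and are not dropped during bursts. The sketch assumes per-hop captures have already been summarized into (hop, observed PCP, dropped count) records; the expected 802.1Q PCP of 7 and the zero-drop tolerance are illustrative assumptions, not values from the O-RAN specifications.

```python
from typing import NamedTuple


class HopSample(NamedTuple):
    hop: str        # capture point, e.g. "tor-1 egress"
    pcp: int        # 802.1Q priority code point observed on fronthaul frames
    dropped: int    # fronthaul frames dropped at this hop during the burst test


def qos_violations(samples: list[HopSample], expected_pcp: int = 7, max_drops: int = 0) -> list[str]:
    """Flag hops that remark fronthaul traffic or drop more frames than allowed."""
    problems = []
    for s in samples:
        if s.pcp != expected_pcp:
            problems.append(f"{s.hop}: remarked to PCP {s.pcp}")
        if s.dropped > max_drops:
            problems.append(f"{s.hop}: {s.dropped} frames dropped")
    return problems


# Hypothetical burst-test summary; agg-1 uses a policy tuned for ordinary data traffic.
burst_test = [
    HopSample("du-nic egress", 7, 0),
    HopSample("tor-1 egress", 7, 0),
    HopSample("agg-1 egress", 0, 12),
]
print(qos_violations(burst_test))
# ['agg-1 egress: remarked to PCP 0', 'agg-1 egress: 12 frames dropped']
```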

Pitfall 4: Telemetry schema drift across software releases

Root cause: After an upgrade, metric names and alarm codes change, breaking your monitoring rules and incident workflows. Solution: include schema regression tests in your upgrade runbook. Require vendors to provide release notes that include telemetry changes, and update your dashboards using a controlled rollout with rollback readiness.
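A schema regression test can be as simple as diffing the telemetry inventory exported before and after an upgrade. The sketch assumes each release's metric and alarm definitions can be exported as a name-to-type mapping; the metric names and types shown are hypothetical.

```python
def schema_diff(before: dict[str, str], after: dict[str, str]) -> dict[str, list[str]]:
    """Compare metric/alarm name -> type mappings exported from two releases."""
    return {
        "removed": sorted(before.keys() - after.keys()),
        "added": sorted(after.keys() - before.keys()),
        "type_changed": sorted(k for k in before.keys() & after.keys() if before[k] != after[k]),
    }


def breaks_monitoring(diff: dict[str, list[str]]) -> bool:
    """Removed or retyped names break existing rules; additions alone do not."""
    return bool(diff["removed"] or diff["type_changed"])


release_a = {"du.prb_utilization": "gauge", "ru.ptp_lock_state": "enum", "du.ul_bler": "gauge"}
release_b = {"du.prb_usage_pct": "gauge", "ru.ptp_lock_state": "string", "du.ul_bler": "gauge"}
diff = schema_diff(release_a, release_b)
print(diff, breaks_monitoring(diff))
```

Running this as a gate in the upgrade runbook turns "update your dashboards" into a concrete list of rules to rewrite before the rollout.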

Pitfall 5: Outdoor RU environmental mismatch and power budget surprises

Root cause: RU operating temperature assumptions can be wrong when mounting conditions differ from lab conditions. Additionally, power supplies in the field may sag during peak load. Solution: validate thermal behavior with realistic mounting and airflow models. Measure input power quality and confirm that your power distribution meets vendor tolerance under peak load.

FAQ: technical guide answers for Open RAN component compatibility

How do I prove compatibility before buying production equipment?

Run a release-specific interoperability lab test using the exact RU, DU, CU, OAM, and transport configurations you plan to deploy. Include impairment testing for jitter and packet loss, and validate both user-plane performance and OAM fault mapping. Require written vendor evidence tied to the specific software builds.

What is the most common reason Open RAN fails after a successful link bring-up?

The most common causes are timing and transport behavior under load. Teams often validate with idle or low traffic and only later discover jitter or queue starvation effects. Confirm PTP and QoS behavior while generating fronthaul traffic at production-like load.

Do I need to standardize on one switch vendor for Open RAN?

Not necessarily, but you must ensure that your switches meet the QoS and scheduling requirements implied by fronthaul timing budgets. Validate queue behavior, packet loss characteristics, and redundancy failover behavior across the specific switch models and firmware versions you will use.

How important is telemetry and management plane compatibility?

It is critical for operations and governance, even if the radio traffic works. If alarms and metrics do not map consistently across components, incident response times increase and upgrades become risky. Make observability coverage part of acceptance criteria and test it during integration.

Can I mix RU and DU hardware from different vendors safely?

Yes, but only when you align functional split, fronthaul transport mapping, and release compatibility. The safest approach is to require vendor interoperability statements and to run a lab test with the exact release pairings. Avoid relying on “supports the standard” claims without build-level evidence.

What should I include in my procurement requirements to reduce integration risk?

Request a compatibility matrix by software release, fronthaul mode details, timing profile support, and upgrade/rollback behavior. Also require acceptance tests for impairments, OAM mapping, and telemetry schema stability. Tie these requirements to measurable KPIs like jitter bounds, alarm correlation success rate, and upgrade downtime limits.

Open RAN compatibility is achievable, but only when you treat interfaces, timing, transport, and operational governance as enforceable contracts. Use this technical guide to build a release matrix, run impairment-based acceptance tests, and lock measurable acceptance criteria before scaling. Next step: review Open RAN OAM and orchestration governance to ensure your management plane and telemetry model stay consistent across upgrades.

Author bio: IT director focused on enterprise architecture and network governance, with hands-on experience qualifying multi-vendor radio and transport stacks in production-like labs. Former field integration lead who builds interoperability acceptance tests and ROI models for telecom-grade deployments.