A smart manufacturing plant can lose visibility fast when core bandwidth and latency budgets collapse during firmware pushes, vision bursts, or MES event storms. This article follows a real deployment of 800G connectivity in a leaf-spine network supporting PLC-to-vision workflows and historian ingestion. You will get concrete optics choices, operational checks, and the trade-offs that matter for maintainability and security.

Problem and challenge: why 100G and 400G broke in smart manufacturing

In our case, a Tier-1 automotive supplier expanded sensor counts from 18,000 to 41,000 endpoints across welding and paint stations. During production changeovers, camera-based quality checks produced sustained east-west traffic peaks of 120 to 180 Gbps per pod, while MES and historian writes added bursty north-south load. With 100G interconnects, we saw oversubscription and queue growth that pushed tail latency above 8 ms, which correlated with missed “snapshot windows” in vision pipelines. The plant also required hitless failover during maintenance windows, so we needed predictable link behavior and clean optics diagnostics.

We aligned the design with IEEE 802.3 framing and link-layer expectations for 800G-class deployments, confirming that the transceivers and switch ASICs supported the intended speed profiles. For baseline Ethernet behavior and PHY requirements, we referenced the IEEE 802.3 Ethernet Standard [Source: IEEE 802.3 Ethernet Standard].

Environment specs: network topology, distances, and optical constraints

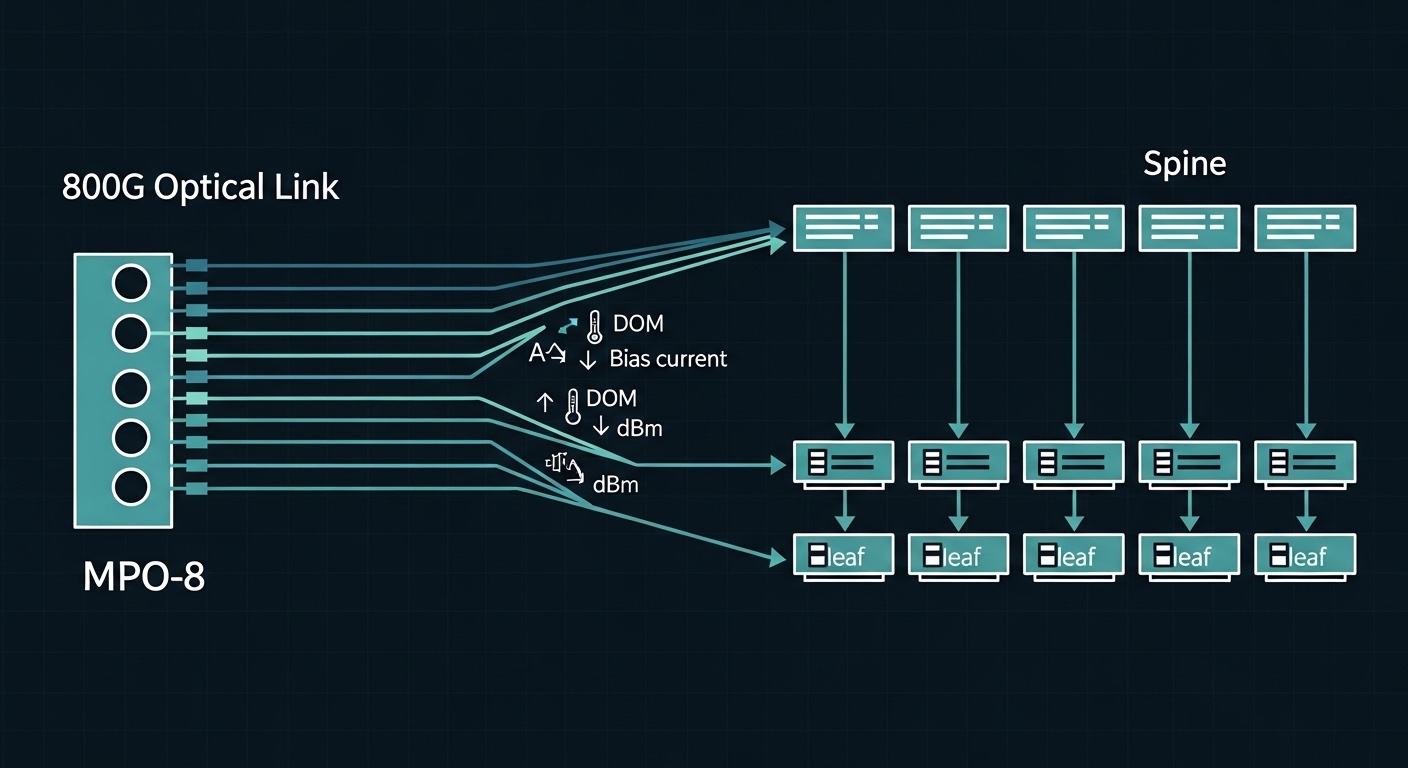

The environment used a leaf-spine topology: 8 ToR leaves, 3 spines, and 2 redundant fabrics for resilience. Each leaf aggregated machine networks via VLANs and VRFs, then forwarded to an edge compute cluster running video analytics and a historian connector. Cabling followed structured cabling runs in cable trays with typical spans of 30 to 80 m for ToR-to-spine and 5 to 20 m for intra-row patching. Temperature in the equipment rooms ranged from 18 to 32 °C, with humidity control but occasional HVAC cycling.
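As a rough sanity check on the topology, the sketch below compares a peak demand against a leaf's uplink capacity. It assumes one uplink per spine per leaf (3 uplinks in one fabric), that a pod's 180 Gbps peak lands on a single leaf, and that ECMP spreads load evenly; none of these are stated in the deployment notes, and `uplink_utilization` is a hypothetical helper.

```python
def uplink_utilization(peak_gbps: float, uplinks: int, link_gbps: float,
                       failed_uplinks: int = 0) -> float:
    """Peak demand as a fraction of the leaf's surviving uplink capacity."""
    surviving = uplinks - failed_uplinks
    if surviving <= 0 or link_gbps <= 0:
        raise ValueError("need at least one working uplink with positive speed")
    return peak_gbps / (surviving * link_gbps)


# Assumption: one uplink per spine per leaf, so 3 uplinks in a single fabric.
UPLINKS = 3
for link_gbps in (100, 800):
    for failed in (0, 1):
        load = uplink_utilization(180, UPLINKS, link_gbps, failed)
        print(f"{link_gbps}G links, {failed} spine down: {load:.0%} of capacity")
```

Under these assumptions, 100G uplinks run at about 90 percent of capacity with one spine out of service, which leaves little headroom for uneven hashing of large camera flows.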

We evaluated 800G optics options for short-reach and mid-reach constraints. The key constraint was that optical modules had to support DOM (Digital Optical Monitoring) and appear on the switch vendor’s validated optics list to reduce field risk. The deployment used MPO/MTP-style cabling for density, and we required consistent lane mapping and deterministic optics behavior across both fabrics.

| Spec category | 800G SR8 (short reach) | 800G FR8 (reach extension) | 800G DR8 (mid reach) |
|---|---|---|---|
| Target reach | Up to ~100 m class over OM4 (vendor ratings vary; confirm per SKU) | ~2 km class over SMF | ~500 m class over SMF |
| Wavelength behavior | Parallel multimode optics, 8 lanes at a nominal 850 nm | 8 wavelengths multiplexed onto SMF | Parallel single-mode optics, 8 lanes at a nominal 1310 nm |
| Connector type | MPO-16 or dual MPO-12 (16 fibers: 8 Tx, 8 Rx) | Duplex LC is typical for wavelength-multiplexed modules (platform dependent) | MPO-16 or dual MPO-12 (platform dependent) |
| Typical power class | Low-to-moderate module power; monitored via DOM | Higher module power; tighter link budgets | Moderate; depends on vendor spec |
| Operating temperature | Commercial or industrial grade depending on SKU | Vendor-specific grade (often commercial) | Vendor-specific grade (often commercial) |
| Best fit in smart manufacturing | ToR-to-spine and intra-room density upgrades | Inter-building or long tray runs | Campus segments where SMF is available |

Chosen solution: 800G SR8 for leaf-spine, with validated optics and DOM

We selected 800G SR8 modules for ToR-to-spine because our measured distances were within 80 m, and we already had OM4 backbone in many zones. In practice, we used vendor-validated SKUs aligned to the switch vendor’s optics compatibility matrix to reduce support churn. Example module families included common SR8 implementations such as Finisar and FS.com SR8 800G class parts (exact model numbers depend on the switch platform), and we validated transmit/receive power and lane health via DOM before cutover.

For fiber plant practice, we also drew on the Fiber Optic Association’s practical coverage of fiber plants and testing workflows, focusing on what field teams should verify before blaming software [Source: Fiber Optic Association].

Implementation steps: how we cut over without production downtime

Step 1: Pre-qualification and fiber validation. We tested MPO trunks using an OTDR or equivalent qualification workflow and verified end-face cleanliness. We specifically checked for connector contamination and excessive insertion loss that can masquerade as “bad optics.” Any link failing threshold loss or polarity checks was cleaned and re-terminated before module insertion.
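A minimal sketch of the loss check in Step 1, assuming a simple additive model (fiber attenuation plus loss per mated connector pair). The default attenuation, per-connector loss, margin, and the 1.8 dB budget in the example are illustrative placeholders, not figures from this deployment; substitute the values from your module datasheet and cabling standard.

```python
def estimated_channel_loss_db(length_m: float, mated_pairs: int,
                              fiber_db_per_km: float = 3.0,
                              loss_per_pair_db: float = 0.35) -> float:
    """Expected insertion loss: fiber attenuation plus connector losses."""
    return length_m / 1000.0 * fiber_db_per_km + mated_pairs * loss_per_pair_db


def link_passes(measured_loss_db: float, budget_db: float,
                margin_db: float = 0.3) -> bool:
    """Pass only if measured loss plus a safety margin fits the budget."""
    return measured_loss_db + margin_db <= budget_db


# Hypothetical 80 m ToR-to-spine run with two mated MPO pairs.
expected = estimated_channel_loss_db(length_m=80, mated_pairs=2)
budget_db = 1.8  # placeholder: take the real figure from the module datasheet
print(f"expected loss {expected:.2f} dB, passes: {link_passes(expected, budget_db)}")
```

A measured loss well above the estimate for the same run is a hint to inspect and clean connectors before inserting a module.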

Step 2: Optics and lane mapping validation. We installed modules on a staging bench first, then verified DOM baselines: temperature stabilization, bias current range, and per-lane received optical power. For polarity and lane ordering, we used the switch vendor’s documented mapping guidance because mis-mapped lanes can produce intermittent CRC errors that only show up under load.
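The per-lane baseline in Step 2 can be screened with a short script once DOM readings are exported. This sketch assumes the readings are already available as a list of received power values in dBm, one per lane; the range limits and the 2 dB spread tolerance are hypothetical and should come from the module datasheet.

```python
from statistics import median


def flag_lanes(rx_dbm: list[float], low_dbm: float, high_dbm: float,
               max_spread_db: float = 2.0) -> dict[int, str]:
    """Return {lane_index: reason} for lanes that look unhealthy."""
    mid = median(rx_dbm)
    flagged = {}
    for lane, power in enumerate(rx_dbm):
        if power < low_dbm or power > high_dbm:
            flagged[lane] = "out of range"
        elif abs(power - mid) > max_spread_db:
            # In range, but far from its sibling lanes: often a dirty fiber.
            flagged[lane] = "deviates from median"
    return flagged


# Hypothetical bench readings for one 8-lane module.
readings = [-2.1, -2.3, -2.0, -2.4, -5.1, -2.2, -2.1, -2.3]
print(flag_lanes(readings, low_dbm=-6.0, high_dbm=3.0))
```

Comparing each lane to the median of its siblings catches a single weak lane even when it is still inside the absolute limits.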

Step 3: Incremental fabric cutover. We migrated one leaf at a time while keeping the other fabric live, using link-level monitoring to confirm CRC/errored frame rates stayed within acceptable baselines. We then ran production traffic replay: camera bursts, PLC telemetry bursts, and historian ingestion load tests to confirm tail latency improvements.
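One way to express the cutover gate in Step 3 is to compare the CRC error ratio between two counter snapshots against the pre-cutover baseline. The sketch assumes counters are read as `(rx_frames, crc_errors)` tuples, that `rx_frames` counts all received frames, and that a 2x tolerance over baseline is acceptable; all three are assumptions to adapt to your platform.

```python
Snapshot = tuple[int, int]  # (rx_frames, crc_errors)


def crc_error_ratio(before: Snapshot, after: Snapshot) -> float:
    """CRC errors per received frame between two counter snapshots."""
    d_frames = after[0] - before[0]
    d_errors = after[1] - before[1]
    if d_frames < 0 or d_errors < 0:
        raise ValueError("counter reset or wrap between snapshots")
    return d_errors / d_frames if d_frames else 0.0


def cutover_gate(ratio: float, baseline_ratio: float, tolerance: float = 2.0) -> bool:
    """Allow the next leaf only if errors stay within tolerance x baseline."""
    return ratio <= baseline_ratio * tolerance


# Hypothetical snapshots taken before and after a traffic replay.
ratio = crc_error_ratio((1_000_000, 2), (3_000_000, 4))
print(ratio, cutover_gate(ratio, baseline_ratio=1e-6))
```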

Step 4: Operational hardening. We enabled SNMP or telemetry collection for DOM thresholds and set alerting for drift in received power over time. This matters in smart manufacturing because dust exposure and HVAC cycling can shift optics performance slowly; we want early warning before a lane drops out.
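Slow drift of the kind described in Step 4 can be estimated with a least-squares slope over periodic received-power samples. This is a sketch under the assumption that drift is roughly linear; the sample data and the -5.0 dBm floor are hypothetical.

```python
def drift_db_per_day(samples: list[tuple[float, float]]) -> float:
    """Least-squares slope of (day, rx_dbm) samples, in dB per day."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_day = sum(day for day, _ in samples) / n
    mean_pwr = sum(pwr for _, pwr in samples) / n
    sxx = sum((day - mean_day) ** 2 for day, _ in samples)
    if sxx == 0:
        raise ValueError("samples must span more than one point in time")
    sxy = sum((day - mean_day) * (pwr - mean_pwr) for day, pwr in samples)
    return sxy / sxx


def days_until_floor(samples: list[tuple[float, float]], floor_dbm: float):
    """Days from the latest sample until the trend reaches floor_dbm, or None."""
    slope = drift_db_per_day(samples)
    if slope >= 0:
        return None  # power is flat or improving; no projected crossing
    return (floor_dbm - samples[-1][1]) / slope


# Hypothetical weekly readings for one lane.
weekly = [(0, -2.0), (7, -2.1), (14, -2.2), (21, -2.3)]
print(drift_db_per_day(weekly), days_until_floor(weekly, floor_dbm=-5.0))
```

A projected crossing date gives maintenance planners a window for cleaning or replacement instead of a surprise lane drop.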

Pro Tip: In 800G SR8 links, the most time-consuming “mystery” incidents often trace back to MPO end-face contamination or lane polarity mismatch, not the transceiver itself. Treat cleaning and polarity verification as part of your change management checklist, and you will cut mean time to repair dramatically.

Measured results: bandwidth, latency, and uptime impact

After upgrading, we observed a 2.5x reduction in queue depth during vision bursts because the leaf-spine uplinks no longer bottlenecked east-west traffic. Tail latency dropped from above 8 ms to under 3 ms during peak camera windows, and CRC-errored frames, along with the upper-layer retransmits they trigger, decreased enough to stabilize image capture success rates. During planned maintenance, hitless failover behaved as expected: recovery completed within the application’s sub-second tolerance, with no historian ingestion gaps.
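For readers who want to reproduce a tail-latency figure from their own captures, a nearest-rank percentile is one simple definition. The article does not state which percentile or method was used, so treating "tail latency" as p99 here is an assumption, and the sample values are made up.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical per-frame latencies in milliseconds from a camera burst.
latencies_ms = [0.8, 0.9, 1.1, 1.0, 2.7, 0.9, 1.2, 1.0, 0.8, 1.3]
print("p99:", percentile(latencies_ms, 99), "ms")
```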

We also tracked operational signals: DOM telemetry showed stable received power across lanes within vendor tolerances, and alert noise remained low after we tuned thresholds. This reduced engineer time spent on “is it the optics?” investigations and improved confidence for rapid scaling to new lines.

Lessons learned: governance, security, and tech debt control

From a CTO perspective, the main governance lesson was to treat optics as part of the supply chain risk model. We locked to vendor-validated optics for the first rollout, then planned a controlled evaluation program for third-party modules to reduce cost without increasing incident rates. We also documented every change to link budgets and cabling conventions to avoid tech debt that later makes troubleshooting slower.

On security, we ensured telemetry endpoints for DOM and switch logs were routed through the same monitoring plane protections as other OT-adjacent systems. This reduces the risk of attackers using misconfigured management access to tamper with thresholds or hide optical degradation signals.

Selection criteria checklist for smart manufacturing 800G optics

- Distance and fiber type: choose SR8 for OM4/OM5 short runs; use FR8/DR8 only when SMF reach is required.

- Switch compatibility: confirm optics part numbers are validated for your specific switch model and firmware.

- DOM support and telemetry: require per-lane monitoring for received power, bias current, and temperature.

- Operating temperature and grade: verify module temperature range fits your equipment room profile.

- Power and link budget: validate vendor link budget assumptions against measured insertion loss.

- Vendor lock-in risk: plan a phased evaluation of alternate suppliers with identical DOM behavior.

- Connector and polarity strategy: standardize MPO polarity conventions and labeling for technicians.

Common mistakes and troubleshooting tips

Mistake 1: Installing optics before fiber end-face cleaning. Root cause: contamination increases insertion loss and can trigger intermittent lane failures under load. Solution: enforce cleaning with lint-free wipes and appropriate cleaning tools; re-test before escalating to RMA.

Mistake 2: Ignoring lane polarity and mapping rules. Root cause: MPO lane ordering mismatch leads to rising CRC errors and link instability that appears random. Solution: follow the switch vendor lane mapping documentation and confirm polarity using a structured polarity test workflow.
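To make polarity checks concrete, the sketch below generates the expected fiber position mapping for the three common trunk polarity methods (A: straight-through, B: reversed, C: pair-flipped) and compares it with a measured map. It is a generic illustration, not the switch vendor's lane mapping, which still needs to be taken from their documentation.

```python
def mpo_position_map(method: str, fibers: int = 12) -> dict[int, int]:
    """Expected near-end to far-end fiber positions for a trunk polarity method."""
    positions = range(1, fibers + 1)
    if method == "A":  # straight-through: 1 -> 1
        return {p: p for p in positions}
    if method == "B":  # reversed: 1 -> N
        return {p: fibers + 1 - p for p in positions}
    if method == "C":  # adjacent pairs flipped: 1 -> 2, 2 -> 1
        if fibers % 2:
            raise ValueError("method C needs an even fiber count")
        return {p: p + 1 if p % 2 else p - 1 for p in positions}
    raise ValueError(f"unknown polarity method: {method}")


def mismatches(measured: dict[int, int], method: str, fibers: int = 12) -> list[int]:
    """Near-end positions whose measured far-end position is not as expected."""
    expected = mpo_position_map(method, fibers)
    return [p for p in expected if measured.get(p) != expected[p]]


# Hypothetical tester output for a 16-fiber trunk labeled as method B.
measured = mpo_position_map("A", 16)
print(mismatches(measured, "B", 16))
```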

Mistake 3: Over-aggressive alert thresholds. Root cause: thresholds tuned too tightly cause alert storms during normal thermal drift, masking real failures. Solution: baseline DOM for at least a few days under normal production loads, then set thresholds with hysteresis and rate-of-change logic.
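Hysteresis and rate-of-change logic can be sketched in a few lines. The class and function below are hypothetical, as are the trip and clear levels; the point is that the alarm trips at one level and clears only after recovery past a higher one, so readings hovering near the threshold do not flap.

```python
class HysteresisAlarm:
    """Low-power alarm that trips below trip_dbm and clears above clear_dbm."""

    def __init__(self, trip_dbm: float, clear_dbm: float):
        if clear_dbm <= trip_dbm:
            raise ValueError("clear_dbm must be above trip_dbm")
        self.trip_dbm = trip_dbm
        self.clear_dbm = clear_dbm
        self.active = False

    def update(self, rx_dbm: float) -> bool:
        """Feed one reading; return whether the alarm is active afterwards."""
        if not self.active and rx_dbm < self.trip_dbm:
            self.active = True
        elif self.active and rx_dbm > self.clear_dbm:
            self.active = False
        return self.active


def rate_exceeded(prev_dbm: float, cur_dbm: float, hours: float,
                  max_db_per_hour: float) -> bool:
    """True if received power fell faster than the allowed rate."""
    if hours <= 0:
        raise ValueError("hours must be positive")
    return (prev_dbm - cur_dbm) / hours > max_db_per_hour


alarm = HysteresisAlarm(trip_dbm=-5.0, clear_dbm=-4.0)
print([alarm.update(x) for x in (-3.0, -5.2, -4.5, -4.9, -3.8)])
```

In the sample sequence, the readings of -4.5 and -4.9 dBm keep the alarm active because they have not yet recovered past the clear level.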

Mistake 4: Treating all link errors as optics faults. Root cause: traffic engineering, ECMP hashing, or queue scheduling can amplify visible symptoms. Solution: correlate DOM lane metrics with switch interface counters (CRC, errored frames) and application-level capture errors.
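The correlation step can start as a coarse triage rule before anyone pulls a module. The function below is a hypothetical rule set, not the team's actual runbook: it maps three boolean signals (DOM power in range, CRC errors rising, queue drops rising) to a first place to look.

```python
def triage(rx_power_in_range: bool, crc_rising: bool, drops_rising: bool) -> str:
    """Map three coarse signals to a first place to look (illustrative rules)."""
    if crc_rising and not rx_power_in_range:
        return "optical path: inspect and clean connectors, then suspect the module"
    if crc_rising:
        return "physical layer with healthy power: check lane mapping and seating"
    if drops_rising:
        return "congestion: review ECMP hashing and queue scheduling"
    if not rx_power_in_range:
        return "early optical degradation: schedule inspection before errors start"
    return "no link-level fault indicated: look at the application"


# Hypothetical case: healthy optics, clean CRC counters, rising queue drops.
print(triage(rx_power_in_range=True, crc_rising=False, drops_rising=True))
```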

Cost and ROI note: what to budget for and why it pays back

800G SR8 optics pricing varies by vendor and supply conditions, but realistic street ranges often fall in the hundreds to low-thousands USD per module depending on brand, grade, and volume. Total cost of ownership includes optics, spares, cleaning consumables, and additional test time; however, the ROI came from avoiding production disruption and reducing engineer troubleshooting hours. In our rollout, the payback was driven by fewer missed quality windows and less time spent on link instability, not just raw bandwidth.

Third-party optics can reduce unit cost, but the operational risk is higher if DOM behavior or compatibility edges differ. A controlled evaluation reduces that risk: run side-by-side tests in a staging rack, validate DOM baselines, and monitor for drift over weeks before broad rollout.

FAQ

What makes 800G especially relevant to smart manufacturing traffic patterns?

Smart manufacturing combines steady telemetry with bursty vision and batch transfers. 800G reduces congestion at the leaf-spine boundary, lowering queueing delay and tail latency that can break time-windowed camera workflows.

Is 800G SR8 always the right choice?

No. SR8 is ideal for short-reach within data halls and structured cabling runs, typically using OM4/OM5. If you must cross buildings or long tray distances, you may need DR8 or FR8 with SMF and tighter link budget control.

How do we verify optics health before go-live?

Use DOM baselines per lane (temperature, bias current, received power) and run a controlled traffic load that mimics production bursts. Also validate fiber loss and cleanliness; in practice, many “bad optics” are actually contaminated or mis-mapped MPO connections.

What should we monitor day to day after deployment?

Track received power drift, lane-specific error counters, and interface CRC or errored frame rates. Tie alerts to rate-of-change and include escalation thresholds so normal thermal drift does not cause noise.

How do we reduce vendor lock-in without increasing outages?

Start with vendor-validated optics for the first rollout, then run a staging evaluation of alternate suppliers. Require identical DOM monitoring behavior and validate stability over multiple production-like cycles before expanding usage.

Where can I confirm standard Ethernet behavior for these links?

Use IEEE 802.3 references to understand Ethernet PHY and link expectations. This helps you interpret counters and ensure your transceiver and switch configuration align with the standard’s assumptions [Source: IEEE 802.3 Ethernet Standard].

If you are planning smart manufacturing upgrades, treat 800G optics as a system change: fiber validation, DOM telemetry, compatibility governance, and staged cutover.