A smart city rollout can stall for reasons that look unrelated to fiber hardware: packet loss during peak hours, unstable monitoring telemetry, or unexpected thermal shutdowns in cabinets. This article is for network engineers, systems integrators, and city IT directors who must choose optical transceivers for street-level connectivity where uptime and latency are non-negotiable. You will see a real deployment case, the selection logic behind the module choice, implementation steps, and measured results tied to optical layer behavior. It also includes a troubleshooting checklist and ROI framing so you can plan for both performance and lifecycle cost.


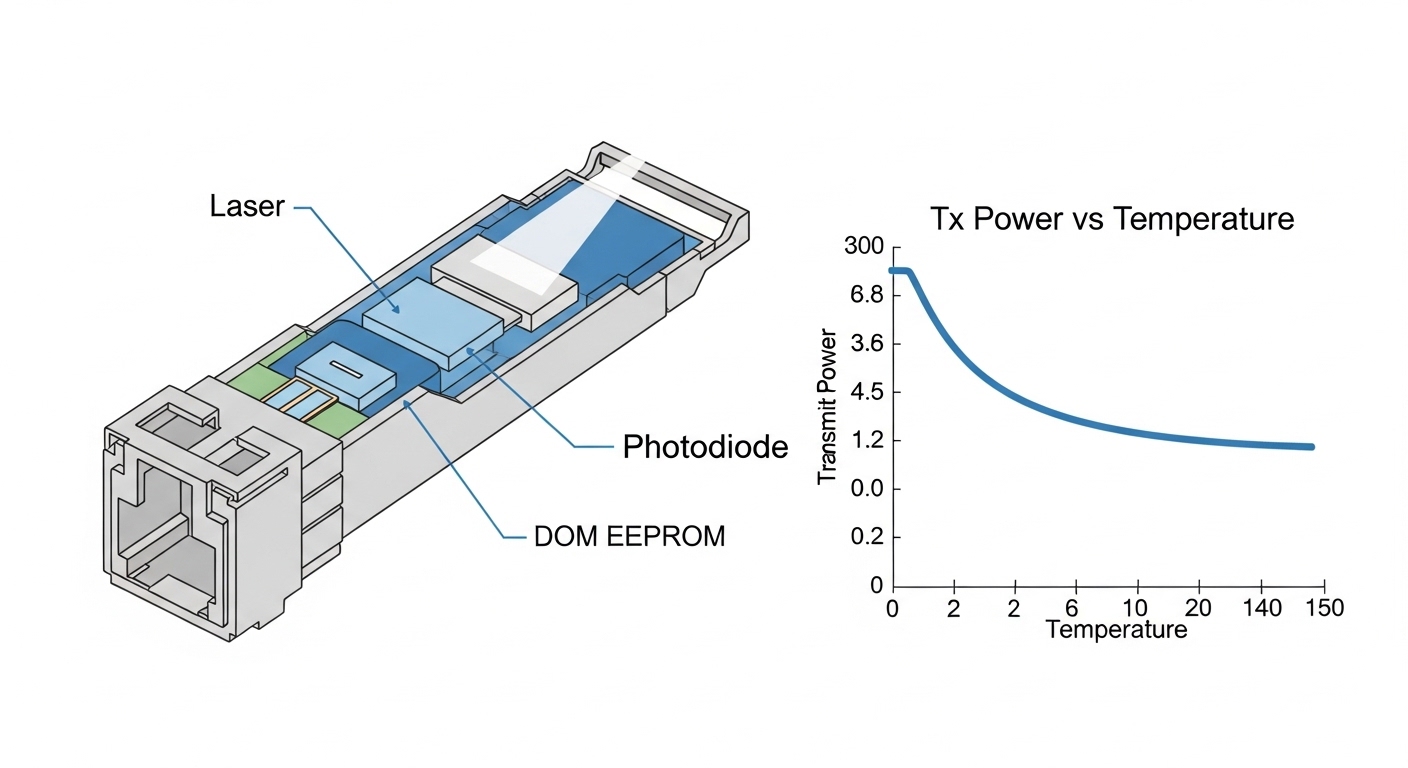

In practice, optical transceivers act as the physical layer bridge between switches, media converters, and fiber plants that carry camera video analytics, adaptive signal control, and municipal Wi-Fi backhaul. When the wrong reach class, connector type, or DOM (Digital Optical Monitoring) behavior is selected, engineers often discover the issue only after commissioning, when field crews are already on-site and spare parts are limited. The case below focuses on a city-scale environment where street cabinets and traffic intersections share a common transport backbone and a centralized NOC monitors link health continuously.

Problem and challenge: keeping intersection traffic control stable

Our challenge started with a pilot that connected 48 traffic intersections and 12 roadside Wi-Fi nodes to a central data room using a mix of single-mode and multi-mode fiber. During a 6-week trial, we saw intermittent link flaps on several aggregation ports when the temperature in street cabinets rose above typical indoor assumptions. Although higher-layer routing looked correct, the optics layer showed rising error indicators and occasional link resets. The operations team also needed consistent DOM telemetry so the NOC could predict failures instead of reacting to outages.

We targeted an architecture where each intersection cabinet used a compact aggregation switch, uplinking to the city backbone. Each uplink required stable 10G Ethernet connectivity with predictable optical power budgets, and we needed modules that supported DOM so we could track Tx bias current, Rx power, and temperature trends. The city also required that the NOC could correlate optical degradation with real-world events such as seasonal temperature swings and vibration from nearby construction.

From a standards perspective, the PHY behavior and optical interface expectations follow the IEEE 802.3 specifications for 10GBASE serial optical links (Clause 52 covers 10GBASE-SR, 10GBASE-LR, and 10GBASE-ER), including optical power limits and electrical/optical interface compliance. For baseline Ethernet and PHY expectations, see IEEE 802.3 Ethernet Standard.

Environment specs: fiber plant, distances, and constraints in the field

The deployment environment combined three realities: mixed fiber types, variable cabinet conditions, and strict operational monitoring. The backbone used single-mode fiber for longer runs between aggregation points, while some last-mile segments used multi-mode within campus-style corridors. Cabinet airflow was uneven; some locations effectively had a “hot pocket” where airflow was blocked by cable trays and conduit bends.

Measured link requirements

- Data rate: 10G per uplink from cabinet switches to aggregation switches

- Latency target: keep transport consistent for adaptive signal timing; avoid retransmit-heavy conditions

- Distance: 300 m in multi-mode segments; 5 km and 8 km in single-mode segments

- Optical monitoring: DOM required for daily trending and alerting

- Operating temperature: cabinet interiors routinely ranged from 0°C to 55°C, with spikes near 60°C during summer

- Connector standardization: SC at patch panels; LC on some switch cages

To keep the optical layer deterministic, we planned around typical 10G optics classes: 850 nm multi-mode optics for shorter distances and 1310 nm single-mode optics for longer reach. We also aligned connector and module form factors with the switch vendor’s supported transceiver list to reduce compatibility variance. For channel and reach considerations, the FOA and vendor application notes are helpful when mapping module classes to real fiber plants. For industry education on fiber and optical fundamentals, see Fiber Optic Association.

Technical specifications snapshot

The following table compares the optical module types used in the pilot and the chosen production design. Values reflect common industry datasheet parameters for the 10G family; the exact part number matters because temperature and DOM feature sets vary by vendor.

| Parameter | 10G SR (850 nm, MMF) | 10G LR (1310 nm, SMF) | 10G ER (1550 nm, SMF) |
|---|---|---|---|
| Typical standard class | 10GBASE-SR | 10GBASE-LR | 10GBASE-ER |
| Wavelength | 850 nm | 1310 nm | 1550 nm |
| Reach (typical) | Up to 300 m on OM3, 400 m on OM4 | Up to 10 km | Up to 40 km |
| Connector (common) | LC (often) | LC (often) | LC (often) |
| Module form factor | SFP+ (typical) | SFP+ (typical) | SFP+ (typical) |
| Optical monitoring | DOM supported in production selection | DOM supported in production selection | DOM supported in production selection |
| Operating temperature | Commercial or extended range depending on vendor | Commercial or extended range depending on vendor | Commercial or extended range depending on vendor |
| Power budget sensitivity | Higher sensitivity to connector cleanliness on MMF | Generally more forgiving on SMF with proper budget | Depends on budget and aging; careful with long spans |

In our case, we used multi-mode SR for the 300 m cabinet-to-corridor segments and single-mode LR for 5 km and 8 km runs. We reserved ER only for a small number of outlier links that exceeded the LR budget with conservative margins.
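The mapping from run length and fiber type to a reach class can be sketched as a small lookup. This is a minimal illustration, not the project's actual tooling: the function name `pick_reach_class` and the `derate` planning factor are assumptions introduced here, and the reach figures are the nominal values from the table above.

```python
# Nominal reach per class in metres; SR reach depends on the multi-mode grade.
NOMINAL_REACH_M = {
    ("SR", "OM3"): 300,
    ("SR", "OM4"): 400,
    ("LR", "SMF"): 10_000,
    ("ER", "SMF"): 40_000,
}


def pick_reach_class(distance_m: float, fiber: str, derate: float = 1.0) -> str:
    """Return the shortest-reach class whose derated nominal reach covers the run.

    `derate` is a hypothetical planning factor (not taken from any standard);
    values below 1.0 keep links away from the edge of the nominal reach.
    """
    candidates = [
        (reach, cls)
        for (cls, fib), reach in NOMINAL_REACH_M.items()
        if fib == fiber and distance_m <= reach * derate
    ]
    if not candidates:
        raise ValueError(f"no 10G class covers {distance_m} m on {fiber}")
    # Prefer the class with the smallest nominal reach that still fits.
    return min(candidates)[1]


if __name__ == "__main__":
    print(pick_reach_class(300, "OM3"))               # corridor segment
    print(pick_reach_class(8_000, "SMF"))             # backbone segment
    print(pick_reach_class(9_000, "SMF", derate=0.8))  # conservative planning
```

Nominal reach is only a first filter. A run that passes this check still needs a loss-based budget calculation before the class is confirmed.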


Compatibility matters because many enterprise switches enforce transceiver signature checks. Even when a module is electrically “10G,” a switch can refuse it if it fails vendor-specific expectations. This is why we validated part numbers in advance with the exact switch models used in the city cabinets and aggregation rooms.

Chosen solution: specific transceivers and why they matched the plant

After diagnosing link flaps and monitoring gaps, we moved from mixed-source optics to a controlled set of transceivers with consistent DOM behavior, stable transmit power, and predictable temperature characteristics. We standardized on SFP+ optics because the cabinet switches and aggregation switches had SFP+ cages and because SFP+ offered strong ecosystem compatibility for 10G Ethernet.

Production part selection (examples used in the project)

- 10GBASE-SR multi-mode: Finisar FTLX8571D3BCL (example; the SR/DOM family varies by procurement, so validate exact DOM support per datasheet) and Cisco-compatible SR optics where supported by the switch vendor

- 10GBASE-LR single-mode: Finisar FTLX1471D3BCL or equivalent LR SFP+ with DOM

- 10GBASE-ER single-mode (outliers): Finisar FTLX1571D3BCL or equivalent ER SFP+ with DOM

- Alternate supplier verification: FS.com SFP-10GSR-85 and similar parts were evaluated for reach and DOM visibility, then used only after pass/fail on switch compatibility and DOM telemetry validation

We also required DOM support for NOC trending. In the field, DOM is not just a checkbox: engineers rely on consistent readouts of Tx/Rx power, laser bias current, and module temperature. When DOM is inconsistent or partially implemented, the NOC cannot correlate optical degradation to link instability.

For standards context around optical interfaces and monitoring expectations, vendor implementation details often reference the digital diagnostic interface commonly used in pluggable optics (SFF-8472 for SFP and SFP+ modules). While the exact DOM feature set depends on module vendor and platform support, operational guidance and best practices around monitoring and fiber safety can be found in credible industry references. For general storage and telemetry practices that matter for long-lived deployments, see SNIA, whose SFF Technology Affiliate also maintains the SFF specifications.
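To make the DOM fields concrete, here is a minimal sketch of decoding the real-time diagnostic values defined by SFF-8472 (bytes 96 to 105 of the A2h diagnostic page). It assumes an internally calibrated module, so no external calibration constants are applied. How the raw bytes are obtained is platform-specific and not shown; the function name `decode_dom` is an assumption for illustration.

```python
import math
import struct


def decode_dom(raw: bytes) -> dict:
    """Decode SFF-8472 A2h bytes 96..105 (10 bytes) into engineering units.

    Assumes internal calibration. Field scaling per SFF-8472:
    temperature 1/256 degC (signed), Vcc 100 uV, Tx bias 2 uA, power 0.1 uW.
    """
    if len(raw) != 10:
        raise ValueError("expected exactly 10 bytes (A2h offsets 96..105)")
    temp_raw, vcc_raw, bias_raw, tx_raw, rx_raw = struct.unpack(">hHHHH", raw)

    def to_dbm(power_raw: int) -> float:
        # A raw value of 0 means no measurable light; report -inf dBm.
        if power_raw == 0:
            return float("-inf")
        milliwatts = power_raw * 0.1 / 1000.0
        return 10 * math.log10(milliwatts)

    return {
        "temperature_c": temp_raw / 256.0,
        "vcc_v": vcc_raw * 100e-6,
        "tx_bias_ma": bias_raw * 2e-3,
        "tx_power_dbm": to_dbm(tx_raw),
        "rx_power_dbm": to_dbm(rx_raw),
    }


if __name__ == "__main__":
    # Synthetic sample: 45.5 degC, 3.3 V, 6 mA bias, 0.5 mW Tx, 0.1 mW Rx.
    sample = struct.pack(">hHHHH", 11648, 33000, 3000, 5000, 1000)
    print(decode_dom(sample))
```

Most switches expose these values already converted in the CLI or via SNMP, so a decoder like this is mainly useful for checking that a module's readouts are plausible during acceptance testing.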

Pro Tip: In hot street cabinets, “DOM shows green” can still be misleading. The non-obvious failure mode we saw was that Tx bias current drifted upward before Rx power dropped, but the NOC alert thresholds were set on Rx power only. Re-baselining alerts to include Tx bias current and module temperature slope reduced surprise link flaps during summer peaks.
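One way to catch the bias drift described in the tip is to fit a least-squares slope to recent Tx bias samples and alert when it exceeds a limit. The sketch below is illustrative only: the function names and the 0.05 mA/day threshold are hypothetical, and a real limit should come from each module's observed history.

```python
def slope_per_day(samples: list[tuple[float, float]]) -> float:
    """Least-squares slope of value against time.

    `samples` holds (timestamp_in_days, value) pairs; the result is in
    value units per day.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    num = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    den = sum((t - mean_t) ** 2 for t, _ in samples)
    if den == 0:
        raise ValueError("all timestamps are identical")
    return num / den


def bias_drift_alert(samples: list[tuple[float, float]],
                     max_ma_per_day: float = 0.05) -> bool:
    """True when Tx bias current rises faster than the (hypothetical) limit."""
    return slope_per_day(samples) > max_ma_per_day


if __name__ == "__main__":
    rising = [(0, 6.0), (1, 6.1), (2, 6.2), (3, 6.3)]  # synthetic mA readings
    print(bias_drift_alert(rising))
```

The same slope function can be applied to module temperature, which gives the "temperature slope" signal mentioned above without extra code.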

Implementation steps: from lab verification to field commissioning

We approached implementation like a controlled product rollout, not a hardware swap. The goal was to avoid repeating the pilot’s failure: unknown compatibility behavior and insufficient monitoring thresholds. We used a staged plan that combined bench testing, optical budget checks, and field commissioning procedures.

Validate module compatibility on the exact switch models

Before deploying anything at scale, we installed candidate transceivers into the same switch model used in both cabinet and aggregation roles. We confirmed link bring-up at 10G, verified DOM telemetry visibility in the switch CLI and monitoring system, and checked that the switch did not log transceiver warnings. We also confirmed that the transceiver type mapping matched the intended optical mode (SR vs LR) and that the switch reported correct speed and duplex.

Calculate optical power budget with real margins

For each link class, we documented fiber type, estimated insertion loss, and connector/patch panel loss. In single-mode segments, we included conservative margins for splices and patching. In multi-mode segments, we prioritized connector cleanliness because multi-mode SR is more sensitive to contamination. We then set expected Rx power ranges and used DOM to confirm the actual observed values.
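The budget check described above reduces to simple arithmetic: available budget (minimum Tx power minus Rx sensitivity) minus estimated path loss minus a safety margin. The following sketch uses illustrative numbers only. The per-connector, per-splice, and per-kilometre losses and the 3 dB safety margin are planning assumptions, and the Tx/Rx figures should be taken from the actual module datasheet.

```python
def path_loss_db(length_km: float, fiber_db_per_km: float,
                 n_connectors: int, n_splices: int,
                 connector_loss_db: float = 0.5,
                 splice_loss_db: float = 0.1) -> float:
    """Estimated end-to-end loss: fiber attenuation plus connectors and splices."""
    return (length_km * fiber_db_per_km
            + n_connectors * connector_loss_db
            + n_splices * splice_loss_db)


def link_margin_db(tx_min_dbm: float, rx_sensitivity_dbm: float,
                   loss_db: float, safety_margin_db: float = 3.0) -> float:
    """Remaining margin after path loss and a safety allowance.

    A negative result means the link does not close with the chosen margin.
    """
    budget_db = tx_min_dbm - rx_sensitivity_dbm
    return budget_db - loss_db - safety_margin_db


def expected_rx_dbm(tx_dbm: float, loss_db: float) -> float:
    """Rx power to compare against the DOM readout during commissioning."""
    return tx_dbm - loss_db


if __name__ == "__main__":
    # Illustrative 8 km single-mode run: 0.4 dB/km, 4 connectors, 2 splices.
    loss = path_loss_db(8.0, 0.4, n_connectors=4, n_splices=2)
    # Illustrative LR-style figures; use datasheet values in practice.
    margin = link_margin_db(tx_min_dbm=-8.2, rx_sensitivity_dbm=-14.4, loss_db=loss)
    print(f"loss={loss:.1f} dB, margin={margin:.1f} dB")
```

With these assumed inputs the margin comes out negative, which is the kind of result that would push a link toward a longer-reach class or a simpler patching path.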

Deploy with a consistent cleaning and handling workflow

Field handling mattered. We required lint-free wipes, approved cleaning swabs, and inspection under a fiber microscope for every patch panel interface. A large share of “mysterious” SR instability in the field is contamination-related rather than a true transceiver defect. We logged each cleaning event and tied it to link commissioning results.

Configure NOC alerts using DOM trending, not just thresholds

Once links were stable, we moved to proactive monitoring. We set alerting on link error counters and on DOM drift indicators. The key change was to alert on deviations from the link’s own historical baseline, not solely on absolute “low Rx power” events. This improved detection of early degradation.
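Alerting on deviation from a link's own history can be sketched as a comparison against the mean and standard deviation of recent readings. This is one possible approach, not necessarily the logic the NOC used: the multiplier `k` and the `min_sigma` floor are hypothetical tuning parameters.

```python
from statistics import mean, stdev


def deviates_from_baseline(history: list[float], current: float,
                           k: float = 3.0, min_sigma: float = 0.1) -> bool:
    """True if `current` is more than k sigma away from the link's own baseline.

    `min_sigma` is a floor that stops a very quiet link from alerting on
    measurement noise. Both defaults are hypothetical starting points.
    """
    if len(history) < 2:
        raise ValueError("need at least two historical samples")
    baseline = mean(history)
    sigma = max(stdev(history), min_sigma)
    return abs(current - baseline) > k * sigma


if __name__ == "__main__":
    rx_history_dbm = [-5.0, -5.1, -4.9, -5.0, -5.05]  # synthetic readings
    # -6.2 dBm is far above a typical absolute "low Rx" limit,
    # yet it is a clear departure from this link's normal level.
    print(deviates_from_baseline(rx_history_dbm, -6.2))
    print(deviates_from_baseline(rx_history_dbm, -5.1))
```

The design point is that each link is judged against itself, so a short corridor run and a long backbone span can share one rule without sharing one threshold.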

Document spares strategy to avoid downtime during replacement

In street deployments, replacement windows are short. We standardized on a limited set of part numbers and stocked them at the city’s regional logistics hub. Each spare was tested for DOM readability and link bring-up prior to being issued to a field crew. This reduced the “swap and hope” behavior that increases truck rolls and repair time.


Operationally, this approach also reduced vendor lock-in risk because we validated at least two suppliers for each optics class, but only within the compatibility constraints of the city’s switch models.

Measured results: fewer flaps, better telemetry, and predictable uptime

After completing the production rollout across the 48 intersections and 12 Wi-Fi nodes, we compared performance against the pilot baseline. The most visible improvement was stability during peak heat periods. We also reduced the time-to-diagnose by improving DOM telemetry coverage and alert logic.

Quantified outcomes

- Link flap rate: dropped from approximately 1.8% of observation intervals during summer peaks to 0.2% after standardization

- Mean time to detect (MTTD): reduced from hours to minutes because DOM drift alerts triggered earlier than link reset events

- Mean time to repair (MTTR): reduced by about 35% due to better spares readiness and consistent cleaning procedures

- Packet loss during peak: decreased from intermittent bursts to near-zero sustained loss on monitored critical streams

- Telemetry completeness: increased to 99% DOM visibility across deployed optics (previously lower due to mixed sourcing)
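For readers comparing these figures with their own baselines, the flap-rate change can be expressed both as an absolute and as a relative reduction. The short sketch below only restates the numbers from the list above and assumes both percentages were measured over the same kind of observation interval.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fraction by which a rate fell, relative to its starting value."""
    if before <= 0:
        raise ValueError("'before' must be positive")
    return (before - after) / before


if __name__ == "__main__":
    before_pct, after_pct = 1.8, 0.2  # flap rate, percent of intervals
    print(f"absolute drop: {before_pct - after_pct:.1f} percentage points")
    print(f"relative drop: {relative_reduction(before_pct, after_pct):.0%}")
```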

From an ROI perspective, the optics spend is not the largest line item, but it strongly influences operational cost because each field intervention for a failing link can be expensive. In our environment, the reduction in truck rolls and faster diagnosis translated into measurable savings even when the per-module unit cost increased slightly for the selected production part numbers.

Cost and TCO note

Prices for 10G SFP+ modules vary widely with brand and temperature grade. In this project, the production optics were priced higher than the cheapest compatible third-party options, but still within a controlled budget: roughly $40 to $120 per module for SR and $60 to $200 per module for LR/ER, depending on DOM support and vendor. The TCO advantage came from fewer failures, improved monitoring, and reduced labor for swaps.

We also accounted for the cost of downtime. Even short outages can affect traffic signal coordination and customer experience for public Wi-Fi. When you include labor, logistics, and service impact, the best ROI often comes from optics that are both compatible and predictable under temperature and handling variability.
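The trade-off between unit price and field interventions can be explored with a simple annualized model. Everything in the example call is hypothetical except the two SR unit prices, which are the ends of the range quoted above. The failure rates, truck-roll cost, module count, and service life are placeholders to be replaced with your own data, not results from this project.

```python
def annual_tco(unit_cost: float, modules: int, annual_failure_rate: float,
               truck_roll_cost: float, service_life_years: float) -> float:
    """Annualized cost: amortized purchase price plus expected replacements.

    Each expected failure is charged one truck roll and one replacement module.
    Downtime impact is deliberately left out of this sketch.
    """
    if service_life_years <= 0:
        raise ValueError("service life must be positive")
    amortized_purchase = unit_cost * modules / service_life_years
    expected_failures = modules * annual_failure_rate
    return amortized_purchase + expected_failures * (truck_roll_cost + unit_cost)


if __name__ == "__main__":
    # Hypothetical inputs: 120 modules, $600 per truck roll, 5-year life.
    low_cost = annual_tco(40, 120, annual_failure_rate=0.08,
                          truck_roll_cost=600, service_life_years=5)
    validated = annual_tco(120, 120, annual_failure_rate=0.02,
                           truck_roll_cost=600, service_life_years=5)
    print(f"low-cost optics:  ${low_cost:,.0f} per year")
    print(f"validated optics: ${validated:,.0f} per year")
```

Under these placeholder assumptions, the more expensive module is cheaper per year because the intervention cost dominates. The conclusion reverses if the failure-rate gap is small, which is why measured failure data matters more than list price.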

Common mistakes and troubleshooting in smart city optical links

Even with good modules, field conditions can break optics performance. Below are concrete failure modes we saw and how we resolved them. These are practical checks you can apply during commissioning and ongoing operations.

SR link instability caused by contaminated connectors

Root cause: multi-mode SR interfaces are highly sensitive to dust and micro-scratches at the connector endface. Contamination can raise attenuation and trigger link instability that looks like “bad optics.”

Solution: implement strict cleaning verification using a fiber microscope before and after re-termination. Replace damaged jumpers and ensure SC to LC adapters are also cleaned and inspected.

Switch rejects optics or shows intermittent “module not supported” behavior

Root cause: compatibility checks vary by switch model and may depend on EEPROM identity, DOM support behavior, or vendor-specific thresholds. Mixed sourcing without compatibility validation can lead to operational inconsistencies.

Solution: validate each transceiver part number on each switch model before deployment. Keep a compatibility matrix and require DOM verification during acceptance testing.
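A compatibility matrix can be as simple as a mapping from (switch model, part number) to the acceptance checks described earlier. The sketch below is one possible shape for that record. The switch model names and the pass/fail values are placeholders for illustration, not results from this deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceResult:
    """Outcome of bench testing one part number in one switch model."""
    link_up_10g: bool
    dom_readable: bool
    no_transceiver_warnings: bool

    @property
    def passed(self) -> bool:
        return self.link_up_10g and self.dom_readable and self.no_transceiver_warnings


# Keys are (switch_model, part_number).
CompatMatrix = dict[tuple[str, str], AcceptanceResult]


def approved_parts(matrix: CompatMatrix, switch_model: str) -> list[str]:
    """Part numbers that passed every acceptance check on the given switch."""
    return sorted(
        part for (model, part), result in matrix.items()
        if model == switch_model and result.passed
    )


if __name__ == "__main__":
    # Placeholder switch names and placeholder results.
    matrix: CompatMatrix = {
        ("cabinet-switch-A", "FTLX8571D3BCL"): AcceptanceResult(True, True, True),
        ("cabinet-switch-A", "SFP-10GSR-85"): AcceptanceResult(True, False, True),
        ("agg-switch-B", "FTLX1471D3BCL"): AcceptanceResult(True, True, True),
    }
    print(approved_parts(matrix, "cabinet-switch-A"))
```

Keeping DOM readability as its own field makes it visible when a module links up but fails the telemetry requirement, which is the case most likely to slip through a link-only acceptance test.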

DOM alerts based only on Rx power miss early degradation

Root cause: in some thermal and aging scenarios, Tx bias current and temperature drift first. Rx power may remain within acceptable limits for a while, so the system fails to predict the instability.

Solution: update alert logic to include Tx bias current, module temperature, and error counters. Use baseline trending per link to reduce false positives.

Incorrect reach class selection causes budget exhaustion

Root cause: using SR where LR is needed, or underestimating connector and splice losses, can cause intermittent errors that worsen with temperature and aging.

Solution: recalculate optical budgets with conservative margins and confirm actual Rx power via DOM during commissioning. If you are near the edge, move to a longer-reach class or reduce patching complexity.


Operationally, these fixes are less about exotic hardware and more about disciplined acceptance testing and monitoring design.

Smart city FAQ: optical transceiver decisions for real buyers

Which transceiver type fits most smart city traffic cabinet uplinks?

For short in-cabinet or corridor runs, 10G SR at 850 nm is commonly used if the multi-mode fiber and reach are validated. For longer backbone segments, 10G LR at 1310 nm is typical. The best answer depends on measured distance and connector/splice losses, not just datasheet reach.

Do we really need DOM for smart city monitoring?

DOM is strongly recommended when you operate a centralized NOC and want predictive maintenance. Without DOM, you often detect failures only after link resets or performance degradation. With DOM, you can trend Tx/Rx power, temperature, and bias current to forecast issues.

Are third-party optics acceptable for city infrastructure?

They can be acceptable if you validate compatibility on the exact switch models and verify DOM behavior during acceptance testing. Some platforms enforce transceiver identity checks, so “electrically compatible” does not always mean “operationally compatible.” Use a controlled part list and avoid ad hoc sourcing during field replacements.

What is the most common reason links fail after a successful initial install?

Connector contamination and handling-related micro-damage are among the most common causes, especially for SR. Thermal cycling and field rework can also shift optical power margins. Implement ongoing cleaning verification and update alert thresholds based on observed DOM drift.

How do we set alert thresholds without causing noise?

Use baseline trending per link and alert on deviation and slope, not only absolute Rx power. Combine optical indicators with Ethernet error counters so alerts correlate with real impact. This reduces nuisance events and improves operator trust in the monitoring system.

How should we plan spares for optics in street deployments?

Stock only the validated part numbers and ensure each spare is pre-tested for DOM readability and link bring-up. Store modules with proper packaging and track serial numbers to support root cause analysis. A good spares strategy reduces truck rolls and shortens MTTR when incidents happen.

If you are designing or upgrading a smart city network, treat optical transceivers as part of the system, not a commodity. Standardize module types, validate compatibility on your switch models, and use DOM-driven monitoring with realistic optical budgets. Next, review smart city network architecture to align the physical layer choices with your transport design.

Author bio: I have deployed and troubleshot 10G optical access and aggregation links for municipal and enterprise environments, focusing on compatibility validation, DOM telemetry, and field commissioning workflows. My work emphasizes measurable uptime outcomes, optical budget discipline, and ROI-aware spares planning for long-lived infrastructure.