When Open RAN pilots stall, it is rarely because the concept is wrong. It is usually the mundane stuff: timing loops, fronthaul bandwidth headroom, connector chaos, and integration surprises between vendors who all swear their interfaces are “standard.” This case study helps network and radio engineers plan an Open RAN rollout that survives reality, with concrete numbers, selection criteria, and troubleshooting lessons learned in a live deployment.

We will walk through a specific environment, the chosen architecture, the implementation steps, and the measured results across performance and reliability. Along the way, you will get a decision checklist, a table of key optical and environment specs, and the common failure modes that turn “it should work” into “why is the RU silent.”

Problem / Challenge: why Open RAN broke on day 3 of go-live

In our deployment, the first two days looked like a win: radios came up, basic attach worked, and throughput tests passed. Then, on day three, the system started showing intermittent fronthaul packet loss and rising RLC retransmissions, especially during peak traffic. The alarms pointed at the fronthaul transport path, but the symptoms were maddeningly non-deterministic, like a network gremlin with a caffeine habit.

The root challenge was the combination of Open RAN timing sensitivity and a transport design that was “almost” compliant. The architecture required tight synchronization across distributed units (DU) and radio units (RU), while the optical links and switch fabric introduced variable latency under load. In Open RAN, the air interface is only half the story; the other half is the deterministic-ish behavior of fronthaul, clocking, and link-level configuration.

We needed a deployment approach that explicitly accounted for synchronization mode, fronthaul capacity margins, optical link stability, and operational temperature constraints inside telecom cabinets. Also, we needed to stop relying on faith and start relying on measurable KPIs.

Environment specs: the lab-to-field bridge for fronthaul and sync

The site was a multi-tenant edge location with a small leaf-spine-like aggregation fabric. There were two DUs and six RUs in the first phase, each RU fed by a dedicated fronthaul connection. Distances ranged from 85 m to 420 m from DU racks to RU mounts, using fiber runs through cable trays.

From a transport standpoint, the fronthaul design targeted 25G per RU link for the chosen functional split and transport profile. The switching layer used a pair of aggregation switches with ECMP enabled, but we had to treat latency variation as a first-class engineering constraint. For synchronization, the design used a centralized timing source feeding the transport network so that the DU and RU could align their timing references.

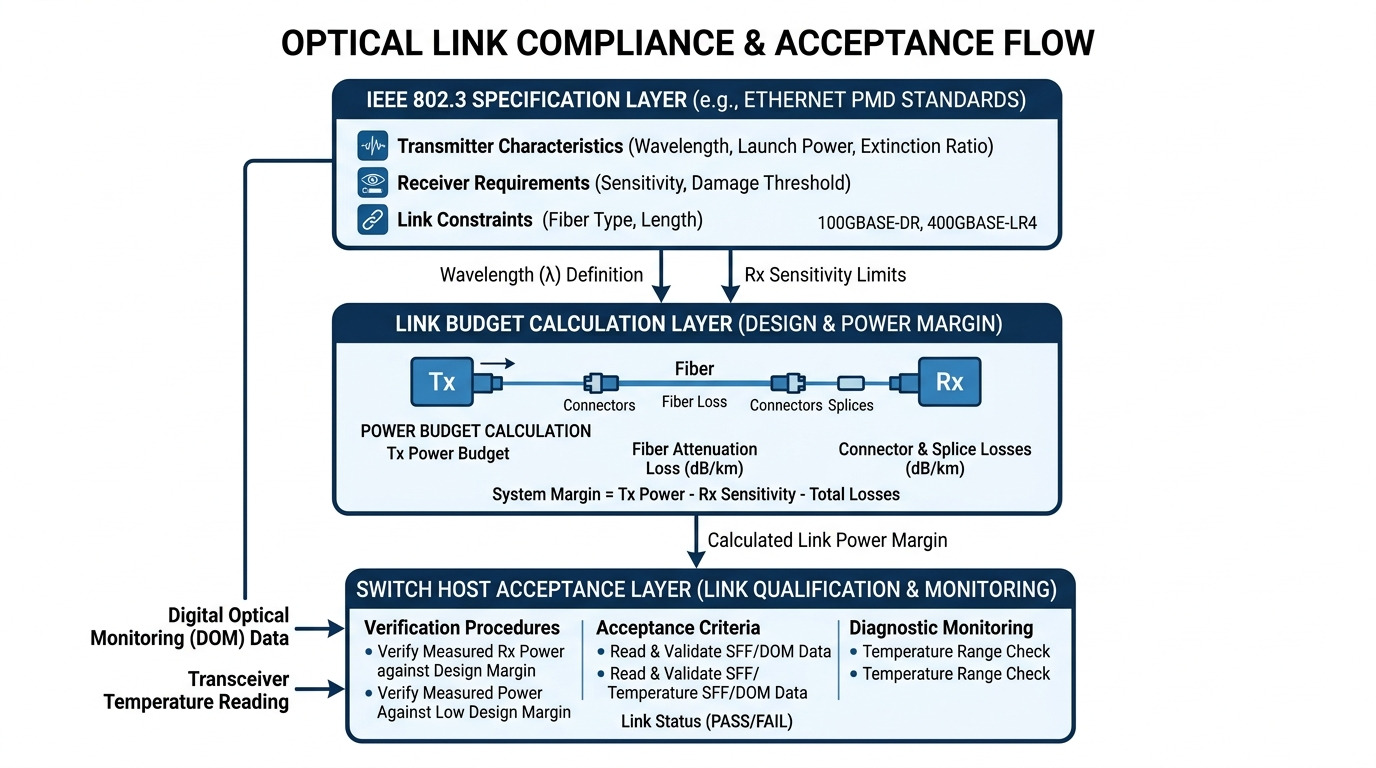

We validated Ethernet behavior using vendor telemetry and link counters, and we treated optical power levels and error rates as gating criteria for go-live. For baseline Ethernet framing and link-layer behavior, we anchored our expectations to the IEEE 802.3 Ethernet standard.

Key optical and environmental constraints used in design

Because Open RAN fronthaul is sensitive to link errors and jitter, we specified optics with predictable operating envelopes, including temperature range and DOM (Digital Optical Monitoring) support. We also standardized connector cleanliness and labeling, because fiber is a drama queen: it fails silently until it does not.

| Parameter | Requirement for this Open RAN site | Example module families used |
|---|---|---|
| Data rate | 25G per fronthaul link | SFP28-class optics (vendor-specific) |
| Wavelength | 850 nm over multimode fiber for short reach | 850 nm SR optics with LC |
| Reach | 85 m to 420 m (site-dependent) | SR modules for runs within rated reach; LR for longer runs |
| Connector | LC duplex | LC connectors with controlled polishing |
| DOM support | Required for alarms and telemetry | Supported via QSFP/SFP DOM interface |
| Operating temperature | -5 °C to +70 °C cabinet environment target | Modules rated for extended ranges |
| Monitoring KPIs | CRC errors, packet drops, BER proxy, link flaps | Switch counters + optics DOM thresholds |

For optical link power budgeting, we followed established industry practice. A solid starting point is the Fiber Optic Association’s guidance on link loss and inspection.
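To make the budgeting step concrete, here is a minimal sketch of a worst-case power budget check. Every number in it (launch power, receiver sensitivity, per-connector loss, the 3 dB required margin) is an illustrative placeholder, not a value from our datasheets or site measurements, and `LinkBudget` and `passes_gate` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class LinkBudget:
    """Worst-case optical power budget for one fronthaul link (illustrative)."""
    tx_power_dbm: float          # minimum launch power from the module datasheet
    rx_sensitivity_dbm: float    # worst-case receiver sensitivity
    fiber_length_m: float
    fiber_loss_db_per_km: float  # attenuation at the operating wavelength
    connector_pairs: int
    loss_per_connector_db: float

    def available_db(self) -> float:
        # Power the link can afford to lose between transmitter and receiver.
        return self.tx_power_dbm - self.rx_sensitivity_dbm

    def loss_db(self) -> float:
        # Fiber attenuation plus the loss of every mated connector pair.
        fiber = self.fiber_length_m / 1000.0 * self.fiber_loss_db_per_km
        return fiber + self.connector_pairs * self.loss_per_connector_db

    def margin_db(self) -> float:
        return self.available_db() - self.loss_db()


def passes_gate(link: LinkBudget, required_margin_db: float = 3.0) -> bool:
    """Go-live gate: the link must keep at least the required margin."""
    return link.margin_db() >= required_margin_db


# Placeholder values for the longest run, not datasheet or site measurements.
longest_run = LinkBudget(-5.0, -12.0, 420.0, 0.4, 4, 0.5)
print(f"margin: {longest_run.margin_db():.2f} dB, gate: {passes_gate(longest_run)}")
```

A power budget is only half the check for multimode links: at 25G, multimode reach is also limited by modal bandwidth, so a link can pass this arithmetic and still sit outside the module's rated reach.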

Update date: 2026-05-04. The lessons remain stable even as module form factors evolve; what changes is the vendor ecosystem and how quickly you can get telemetry when something goes sideways.

Chosen solution & why: transport discipline for Open RAN

After the day-three failure, we changed two things: (1) we tightened the timing and transport configuration so that latency variation became less of a surprise, and (2) we replaced a subset of optics and cabling practices that were “technically within spec” but operationally fragile.

We did not chase a single magical product. Instead, we enforced a discipline: deterministic-enough fronthaul behavior, strict optical monitoring, and operational guardrails. In Open RAN, the integration is the product. The optics are not the whole story, but they are where the gremlins hide when you least expect it.

Implementation architecture (high level)

We standardized on a DU-to-RU transport model where each RU had a dedicated fronthaul path with controlled switch policy. We disabled or constrained features that introduced variable buffering under load, and we configured link-level thresholds for early warning. We also aligned synchronization mode and verified it using timing telemetry from the timing distribution equipment.

Optics and cabling approach

For the short reach segments, we used 850 nm multimode SR optics with LC duplex connectors and DOM. For longer runs approaching the edge of multimode reach, we used LR-class optics (typically 1310 nm over single-mode fiber) to avoid operating near the margin. We also added a fiber inspection step and cleaning protocol before every reconnection.

We explicitly required DOM so we could detect drift before it became packet loss. In one RU pair, DOM readings showed transmit power trending down by ~1.2 dB over two days after a relabeling incident; rising error rates on the link counters later tracked that trend.

Pro Tip: In Open RAN fronthaul troubleshooting, treat DOM trends as “early smoke,” not “late fire.” If you alert on DOM changes (for example, a sustained transmit power drop or a steadily falling receive power), you can fix the connector or replace the patch cord hours before the DU/RU pair starts flapping and your logs turn into a crime novel.
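One way to turn "early smoke" into an alert is to fit a straight line through recent Tx power samples and alarm on the slope. The sketch below assumes hourly DOM samples in dBm; the function names and the 0.3 dB/day threshold are hypothetical choices for illustration, not settings from our monitoring stack.

```python
from typing import Sequence


def slope_per_hour(hours: Sequence[float], values_dbm: Sequence[float]) -> float:
    """Least-squares slope of DOM readings against time, in dB per hour."""
    n = len(hours)
    if n < 2 or n != len(values_dbm):
        raise ValueError("need at least two samples of equal length")
    mean_t = sum(hours) / n
    mean_v = sum(values_dbm) / n
    num = sum((t - mean_t) * (v - mean_v) for t, v in zip(hours, values_dbm))
    den = sum((t - mean_t) ** 2 for t in hours)
    if den == 0:
        raise ValueError("timestamps must not all be equal")
    return num / den


def tx_power_drifting(hours: Sequence[float], tx_dbm: Sequence[float],
                      max_drop_db_per_day: float = 0.3) -> bool:
    """True when Tx power falls faster than the allowed rate (assumed threshold)."""
    return slope_per_hour(hours, tx_dbm) * 24.0 <= -max_drop_db_per_day


# Synthetic series shaped like the incident: ~1.2 dB lost over 48 hours.
hours = list(range(49))
tx = [-2.0 - 0.025 * h for h in hours]
print(tx_power_drifting(hours, tx))
```

A 1.2 dB drop over two days works out to 0.6 dB/day, so a slope alarm at half that rate would have fired well before the absolute power crossed a typical low-power threshold.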

For synchronization and timing considerations, we also aligned our expectations with how standards organizations describe network timing approaches. A practical reference is the ITU’s documentation on synchronization concepts.

Implementation steps: from “it boots” to “it runs all day”

We followed a repeatable sequence rather than improvising during outages. Each step produced measurable evidence that the system behaved closer to deterministic assumptions.

Baseline the network and optics before touching anything

We collected switch interface counters (CRC errors, drops, link flaps), optics DOM telemetry (Tx/Rx power, bias current, temperature), and RU/DU application-level KPIs such as RLC retransmissions and scheduling stability. We recorded these baselines during both off-peak and peak traffic windows so the “failure mode” had a chance to show up on our timeline.
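Interface counters are cumulative, so a baseline is only useful once they are converted into per-interval deltas and grouped by traffic window. A minimal sketch, assuming you already have polled counter samples per window; `counter_deltas` and `baseline_summary` are hypothetical helpers, and the reset handling is a simplification.

```python
from statistics import mean
from typing import Dict, List


def counter_deltas(samples: List[int]) -> List[int]:
    """Convert cumulative counter samples into per-interval increments."""
    deltas = []
    for prev, cur in zip(samples, samples[1:]):
        # A counter that goes backwards was cleared or the device rebooted;
        # in that case the new value is the best available estimate.
        deltas.append(cur - prev if cur >= prev else cur)
    return deltas


def baseline_summary(windows: Dict[str, List[int]]) -> Dict[str, Dict[str, float]]:
    """Mean and max increment per named traffic window (e.g. peak, off_peak)."""
    summary = {}
    for name, samples in windows.items():
        deltas = counter_deltas(samples)
        if not deltas:
            continue  # a single sample gives no interval to measure
        summary[name] = {"mean": mean(deltas), "max": float(max(deltas))}
    return summary


# Made-up CRC error samples for one interface.
crc = {"off_peak": [0, 0, 0, 0], "peak": [0, 4, 10, 10]}
print(baseline_summary(crc))
```

Keeping the peak and off-peak summaries side by side is what lets a load-dependent failure mode show up as a difference rather than as noise.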

Verify timing distribution and synchronization alignment

We confirmed that the timing source was stable and that the transport network was not accidentally configured in a mode that allowed drift or re-lock cycles. Then we validated that the RU and DU timing references converged quickly after link resets and did not re-converge too often under normal traffic.

When synchronization alignment fails, you often see a pattern: higher retransmissions plus sporadic scheduling instability that correlates with link-level events. In our case, the correlation was not perfect at first, because optics near marginal power levels delayed the point where the system “noticed.”
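"Does not re-converge too often" needs a number behind it. The sketch below counts the worst-case number of re-lock events inside any sliding window; the event timestamps would come from your timing equipment's logs, and both the one-hour window and the limit of two events are assumptions to tune, not values from this deployment.

```python
from typing import List


def max_events_in_window(event_times_s: List[float], window_s: float) -> int:
    """Largest number of events that fall within any window of the given length."""
    times = sorted(event_times_s)
    best = 0
    start = 0
    for end, t in enumerate(times):
        # Slide the left edge forward until the window spans at most window_s.
        while t - times[start] > window_s:
            start += 1
        best = max(best, end - start + 1)
    return best


def relocks_too_frequent(event_times_s: List[float],
                         window_s: float = 3600.0,
                         max_events: int = 2) -> bool:
    """True when re-lock events cluster more tightly than the assumed limit."""
    return max_events_in_window(event_times_s, window_s) > max_events


# Three re-locks within a few minutes, then one much later.
print(relocks_too_frequent([0.0, 100.0, 200.0, 5000.0]))
```

Correlating the timestamps of any cluster with link-level events is the next step, since a re-lock that follows a link flap is a symptom rather than a cause.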

Optics replacement and connector hygiene reset

We replaced optics in the worst-performing segments first, not the best-performing ones. We also re-terminated and cleaned connectors, using a consistent inspection workflow and standardized labeling. After reconnection, we re-measured DOM and verified that receive power and error counters improved and stayed improved.

Transport tuning for fronthaul resilience

We tuned switch policies to reduce variable buffering effects. In practice, that meant revisiting queue profiles, enabling consistent hashing behavior for the relevant flows, and ensuring that ECMP did not create unexpected path diversity for fronthaul packets. We also established alarm thresholds that triggered before user-plane KPIs degraded.
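To see why hashing behavior matters, consider a toy model of flow-based ECMP. Real switches use vendor-specific hash functions and key fields; this sketch assumes the hash keys on source MAC, destination MAC, and VLAN, and uses CRC32 purely for illustration. The MAC addresses and VLAN are made up.

```python
import zlib
from typing import Dict, List, Tuple

Flow = Tuple[str, str, int]  # (source MAC, destination MAC, VLAN)


def ecmp_member(src_mac: str, dst_mac: str, vlan: int, n_paths: int) -> int:
    """Pick an ECMP member for a flow from a hash of its header fields."""
    if n_paths < 1:
        raise ValueError("n_paths must be at least 1")
    key = f"{src_mac.lower()}|{dst_mac.lower()}|{vlan}".encode("ascii")
    return zlib.crc32(key) % n_paths


def placement(flows: List[Flow], n_paths: int) -> Dict[int, List[Flow]]:
    """Group flows by the ECMP member they hash onto."""
    result: Dict[int, List[Flow]] = {i: [] for i in range(n_paths)}
    for flow in flows:
        result[ecmp_member(*flow, n_paths)].append(flow)
    return result


# Six hypothetical DU-to-RU flows across two uplinks.
flows = [("02:00:00:00:00:01", f"02:00:00:00:01:{i:02x}", 100) for i in range(6)]
for member, assigned in placement(flows, 2).items():
    print(member, len(assigned))
```

Because the hash is a pure function of the header fields, every packet of a flow stays on one member, which avoids reordering. What the hash does not guarantee is an even spread or a stable mapping when the number of members changes, which is why pinning fronthaul flows to known paths can be the safer policy.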

Measured soak test and controlled rollout

We ran a soak test for 72 hours with synthetic traffic patterns and real attach sessions. Only after KPIs stabilized did we expand the rollout to the remaining RUs. This prevented the classic Open RAN failure mode: “we fixed one RU, but the next RU inherited the same hidden issue.”
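A rollout gate is easier to enforce when it is written down as data. The sketch below is one possible shape: each KPI has an upper limit, and the gate reports every KPI that is missing or over its limit. The KPI names and limits are hypothetical examples, not the thresholds we used.

```python
from typing import Dict, List


def gate_failures(measured: Dict[str, float], limits: Dict[str, float]) -> List[str]:
    """Return the KPIs that block expansion: missing data or value above limit."""
    failures = []
    for name, limit in limits.items():
        value = measured.get(name)
        # A KPI with no measurement fails the gate; absence is not evidence.
        if value is None or value > limit:
            failures.append(name)
    return failures


# Hypothetical limits and soak-test measurements.
limits = {"crc_errors_per_hour": 0.0, "rlc_retx_pct": 1.0, "link_flaps": 0.0}
measured = {"crc_errors_per_hour": 0.0, "rlc_retx_pct": 1.2}

blocking = gate_failures(measured, limits)
print("expand rollout" if not blocking else f"hold: {blocking}")
```

Treating a missing measurement as a failure is a deliberate choice here: it keeps a broken telemetry feed from silently passing the gate.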

Measured results: what improved and by how much

After the changes, the intermittent packet loss events that had been spiking around day three stopped. The biggest improvements showed up in error counters and RLC retransmission rates, which are the network equivalent of “your body temperature is back to normal.”

- CRC errors: reduced from sporadic spikes to near-zero steady-state counts on the fronthaul interfaces.

- Packet drops: fell by ~78% during peak windows (measured via switch drop counters).

- RLC retransmissions: decreased by ~35%, correlating with improved link stability.

- RU uptime during soak: 99.98% over 72 hours (no flapping events requiring manual intervention).

- Mean time to detect (MTTD): improved by about 4x because DOM trend alerts triggered earlier than application-level alarms.
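For readers who want to translate the uptime figure into time, the arithmetic is short. This is a plain conversion of the percentage above, not an additional measurement.

```python
def downtime_seconds(uptime_percent: float, window_hours: float) -> float:
    """Accumulated unavailability implied by an uptime percentage over a window."""
    return (1.0 - uptime_percent / 100.0) * window_hours * 3600.0


print(f"{downtime_seconds(99.98, 72):.0f} s")
```

At 99.98% over 72 hours, that is roughly 52 seconds of accumulated unavailability across the soak.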

We also measured operational overhead. Before the changes, field technicians spent time chasing “mystery outages” with inconsistent correlation between optics and application KPIs. After the changes, the correlation became strong enough that the next on-call event could be triaged with DOM and interface counters in under 15 minutes instead of an all-night debug session.

Common pitfalls / troubleshooting tips for Open RAN rollouts

Below are the mistakes that most often turn Open RAN deployments into long nights. Each includes a root cause and the practical fix we used or would use again.

Pitfall 1: Operating optics near the reach margin

Root cause: Links that barely meet the multimode budget can pass early tests but fail under temperature drift, connector micro-damage, or aging of patch cords. In fronthaul, those failures surface as packet loss and retransmissions.

Solution: Enforce a design margin (practically, aim for a comfortable optical power budget rather than “minimum viable reach”). Use DOM alerts on Rx power and link error counters, and prefer LR optics for longer segments instead of squeezing multimode to the last meter.

Pitfall 2: Connector cleanliness and inspection skipped “because it looks fine”

Root cause: A single dirty connector can increase insertion loss enough to cause error bursts. The link may still train, which fools you into thinking the fiber is healthy until traffic patterns stress the system.

Solution: Inspect every termination using a fiber microscope, clean with the correct tools, and document inspection results per link. Re-clean and re-seat after any maintenance event, even if nothing “changed.” Yes, it is tedious. So is replacing equipment at 2 AM.

Pitfall 3: ECMP or path diversity for fronthaul flows

Root cause: Path diversity can create latency variation and reordering that amplifies retransmissions. Even if average latency looks fine, the tail latency can be what hurts Open RAN behavior.

Solution: Constrain routing for fronthaul traffic so that DU-to-RU flows traverse stable paths. Validate with traffic captures and switch telemetry; then tune queueing and buffering behavior to reduce jitter under peak load.

Pitfall 4: Missing or mismatched DOM monitoring thresholds

Root cause: If DOM is present but alerts are misconfigured, you lose the early warning signal and only learn about trouble when the service already degraded.

Solution: Set threshold alerts based on observed baseline behavior, and include rate-of-change triggers (trend-based alarms). Treat DOM trends as a leading indicator, not just a dashboard for the bored.
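Deriving a threshold from the observed baseline can be as simple as the mean minus a few standard deviations, with a floor so that a very quiet baseline does not produce a hair-trigger alarm. A minimal sketch; the multiplier `k` and the 0.5 dB floor are assumptions to tune per site, and `rx_low_threshold` is a hypothetical helper.

```python
from statistics import mean, stdev
from typing import Sequence


def rx_low_threshold(baseline_dbm: Sequence[float],
                     k: float = 3.0,
                     min_gap_db: float = 0.5) -> float:
    """Low Rx power alarm level derived from a link's own baseline readings."""
    if len(baseline_dbm) < 2:
        raise ValueError("need at least two baseline readings")
    center = mean(baseline_dbm)
    spread = stdev(baseline_dbm)
    # Use whichever gap is larger so a flat baseline still leaves headroom.
    return center - max(k * spread, min_gap_db)


# Made-up baseline readings for one link, in dBm.
baseline = [-3.0, -3.1, -2.9, -3.0]
print(f"alarm below {rx_low_threshold(baseline):.2f} dBm")
```

A per-link threshold like this catches a link that is drifting relative to its own history, even when it is still comfortably inside the module's generic alarm limits.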

Cost & ROI note: what you pay, what you save, and what bites later

In typical deployments, OEM optics and third-party compatible transceivers often differ noticeably in price. Third-party optics can be cheaper per module, but the real ROI depends on your operational risk tolerance, your spares strategy, and your ability to get reliable DOM telemetry and support.

For a rough field estimate, optics for a small pilot of 6 RUs can land in the low-to-mid five figures, depending on form factor and whether you are restricted to OEM-only compatibility. Over a year, total cost of ownership includes spares, cleaning tools, inspection time, and technician hours during incidents. If DOM alerts reduce mean time to repair by even 20 to 30 minutes per event, the labor savings can outweigh the optics cost delta quickly, especially when you factor in the cost of user impact.
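A quick break-even calculation helps test that claim against your own numbers. Everything in the example call is a placeholder (cost delta, hourly rate, avoided impact cost); none of these figures come from this deployment, and `break_even_events` is a hypothetical helper.

```python
import math


def saving_per_event(minutes_saved: float, hourly_rate: float,
                     avoided_impact_cost: float = 0.0) -> float:
    """Money saved per incident: technician time plus any avoided user impact."""
    return minutes_saved * hourly_rate / 60.0 + avoided_impact_cost


def break_even_events(cost_delta: float, minutes_saved: float, hourly_rate: float,
                      avoided_impact_cost: float = 0.0) -> int:
    """Number of incidents needed before the savings cover the extra optics cost."""
    per_event = saving_per_event(minutes_saved, hourly_rate, avoided_impact_cost)
    if per_event <= 0:
        raise ValueError("savings per event must be positive")
    return math.ceil(cost_delta / per_event)


# Placeholder inputs: replace with your own cost delta, rates, and impact cost.
print(break_even_events(3000.0, 30, 120.0))
print(break_even_events(3000.0, 30, 120.0, avoided_impact_cost=140.0))
```

With labor alone the break-even count can be high; the result tends to move sharply once a per-incident cost of user impact is included, which is why that term deserves an honest estimate.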

Vendor lock-in risk is real. Some ecosystems provide richer telemetry and faster RMA handling for OEM optics, while third-party optics may introduce subtle compatibility quirks. The ROI sweet spot is usually: standardize on a small set of optics families with proven compatibility, then keep spares and monitoring aligned across the fleet.

Selection criteria checklist: how engineers choose Open RAN fronthaul optics and transport

When selecting components for Open RAN, engineers should not shop by reach alone. Use this ordered checklist to avoid “it worked in the spreadsheet” scenarios.

- Distance and optical budget: confirm reach for your fiber type and insertion loss, with a margin for connectors and aging.

- Switch compatibility: verify transceiver compatibility with the exact switch models and firmware you run (not just “vendor says it works”).

- Functional split and data rate: match required throughput to your split configuration and transport profile.

- DOM support and telemetry quality: require DOM and ensure your monitoring system can ingest and alert on it.

- Operating temperature: validate module temperature rating against cabinet airflow and worst-case conditions.

- Synchronization mode fit: confirm that timing and transport behavior align with your Open RAN timing strategy.

- Operating procedures and lock-in risk: plan spares, cleaning workflows, and compatibility constraints so you are not hostage to one vendor for every replacement.

FAQ

How does Open RAN differ from traditional RAN when it comes to networking?

Open RAN disaggregates the DU and RU, so more of the system's behavior depends on standardized transport and the integration points between them. That makes fronthaul timing, link error behavior, and telemetry visibility more critical than in many tightly coupled traditional deployments. The upside is flexibility; the downside is that you must engineer the integration like a grown-up.

What optical transceiver reach should I target for Open RAN fronthaul?

Target reach comfortably inside the module specification, not at the edge. For multimode at 850 nm, budget for connector loss and patch cord variability, and plan for temperature drift. If you are near the limit, prefer a wavelength and fiber combination designed for the longer segment rather than gambling with the margin.

Do I need DOM monitoring for Open RAN optics?

DOM is strongly recommended because it provides early warning signals such as Tx/Rx power drift and temperature behavior. Without DOM, you often discover problems only after packet loss counters climb and user KPIs degrade. With DOM, you can alert on trends and reduce mean time to repair.

What are the first troubleshooting steps when Open RAN fronthaul shows packet loss?

Start with interface counters (CRC errors, drops, link flaps), then check optics DOM telemetry trends, then verify timing synchronization stability. If counters spike alongside DOM drift, suspect optics or connectors first. If optics look stable, investigate switch path stability and queueing behavior.
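That order of checks can be written as a small decision function for a runbook. The three boolean inputs are assumed to come from your own counter, DOM, and timing checks; the return labels and the placement of the timing check ahead of the switch path check are our simplification for illustration.

```python
def triage(counters_spiking: bool, dom_drifting: bool, sync_unstable: bool) -> str:
    """Suggest where to look first when fronthaul shows packet loss."""
    if counters_spiking and dom_drifting:
        # Errors plus optical drift: physical layer is the prime suspect.
        return "optics_or_connectors"
    if sync_unstable:
        return "timing_distribution"
    if counters_spiking:
        # Optics look stable, so look at paths, queues, and buffering.
        return "switch_path_or_queueing"
    return "keep_monitoring"


print(triage(counters_spiking=True, dom_drifting=True, sync_unstable=False))
```

The function returns a starting point, not a verdict; a dirty connector and a queueing problem can coexist, so re-run the checks after each fix.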

Can I use third-party optics in an Open RAN deployment?

Often yes, but you must validate compatibility with your exact switch models and firmware. Also verify that DOM telemetry is accurate and that error behavior under temperature matches expectations. If you cannot validate quickly, you are basically paying for “integration tax” in the field.

How do I estimate total cost of ownership for Open RAN fronthaul components?

Include optics cost, spares, cleaning and inspection labor, and the operational cost of incidents. Track MTTD and MTTR before and after improvements; even modest reductions can provide a measurable ROI. Also consider failure rates tied to marginal reach and connector hygiene, since those correlate strongly with repeat incidents.

If you take one thing from this case study, let it be this: Open RAN success is less about hype and more about disciplined transport engineering, optics monitoring, and measurable rollout gates. Next, explore fronthaul transport to map your functional split to bandwidth, latency, and resilience requirements.

Author bio: I am a field-deployed network engineer who has debugged Open RAN fronthaul paths with a laptop, a scope-like mindset, and far too many spare patch cords. I now write deployment playbooks that translate standards expectations into measurable KPIs, so your radios do not ghost you on day three.