In AI clusters, the interconnect between GPUs and leaf switches can make or break training throughput. This article helps platform and network engineers compare DAC (direct-attach copper) versus AOC (active optical cable) for common AI workload patterns like all-reduce and distributed inference. You will get practical selection criteria, real troubleshooting pitfalls, and a cost and TCO lens for mixed topologies.

Why DAC and AOC behave differently under AI traffic

Both DAC and AOC carry Ethernet traffic at short to mid distances, but their physical layers differ enough to affect system behavior. DAC carries the electrical signal over twinax copper between two pluggable module ends (passive, or active with signal conditioning), while AOC converts electrical signals to optical and back using active electronics at both ends. In AI workloads, that translates into different tradeoffs for latency consistency, power draw, thermal stress, and link budget margin when you scale port counts.

For many GPU clusters, the dominant pattern is frequent small-to-medium bursts driven by collective operations (for example, all-reduce) plus periodic synchronization. That means engineers care about link stability and error recovery as much as raw bandwidth. Ethernet links at these rates commonly use forward error correction (FEC), but standard Ethernet does not retransmit at the link layer: frames that still fail their CRC check are dropped, and recovery is left to higher layers. The probability of transient bit errors can rise with marginal power, temperature, or cabling geometry. [Source: IEEE 802.3 Ethernet Standard]
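
To make the bit-error reasoning concrete, here is a minimal Python sketch that turns a raw bit error ratio (BER) into an expected error rate at a given line rate. The BER values are illustrative assumptions, not vendor specifications, and the function names are hypothetical. It models raw errors only; FEC will correct many of them before they ever show up as CRC errors.

```python
def raw_bit_errors_per_second(line_rate_gbps: float, ber: float) -> float:
    """Expected raw bit errors per second: line rate in bits/s times BER."""
    return line_rate_gbps * 1e9 * ber


def mean_seconds_between_errors(line_rate_gbps: float, ber: float) -> float:
    """Average spacing between raw bit errors; infinite if BER is zero."""
    rate = raw_bit_errors_per_second(line_rate_gbps, ber)
    return float("inf") if rate == 0 else 1.0 / rate


if __name__ == "__main__":
    # BER values below are illustrative assumptions, not vendor specs.
    for ber in (1e-12, 1e-9, 1e-6):
        spacing = mean_seconds_between_errors(100.0, ber)
        print(f"BER {ber:.0e}: one raw bit error every {spacing:.3g} s on average")
```

The point of the arithmetic is scale: at 100 Gb/s, a change of a few orders of magnitude in BER moves a link from an error every several seconds to many errors per second, which is why marginal links matter during long training runs.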

Operationally, DAC often wins on simplicity: fewer optical components, fewer conversion stages, and typically lower per-link power at short reach. AOC can win when you need higher density in constrained cable routing, longer effective reach than copper, or better resilience to EMI in noisy environments like power-dense racks. Still, AOC introduces additional optics, which can be sensitive to connector cleanliness and can show different aging behavior over temperature cycles.

Performance comparison: latency, power, reach, and environmental limits

Below is a practical comparison using typical 25G and 100G-class optics/cables found in data centers. Exact numbers depend on vendor firmware, module generation, and transceiver class, so treat this as a planning baseline rather than a guarantee. For AI racks, the most important takeaway is that reach and thermal headroom often determine whether you stay in a stable operating region during peak training.

| Parameter | DAC (Direct-Attach Copper) | AOC (Active Optical Cable) |
|---|---|---|
| Typical data rates | 25G, 40G, 100G (and higher in newer generations) | 25G, 50G, 100G (often multi-rate capable) |
| Wavelength / medium | Electrical copper (no wavelength) | 850 nm multimode common for short reach |
| Reach (planning range) | ~1 to 7 m for many 25G/100G DAC SKUs | ~10 to 100 m+ depending on generation and OM grade |
| Power per link (typical) | Lower at short reach (passive DAC typically draws well under 1 W) | Higher than DAC in many cases (active optics electronics) |
| Connectorization | No fiber connectors; module ends seat into cage ports | Uses optical conversion ends; still has connector/cleanliness considerations |
| Temperature range | Often data-center grade; check vendor spec for 0 to 70 °C class | Also typically data-center grade; validate operating temperature and derating |
| EMI / shielding | Susceptible to high electromagnetic noise in some layouts | Optical path reduces conducted EMI across the span |
| Common failure mode | Insertion stress, poor seating, or cable geometry degradation | Connector contamination, optics aging, or improper handling during install |

When you evaluate AI performance, focus on link stability metrics you can observe: interface counters for CRC errors, symbol errors, and link flaps. In the field, we often see that a “fast” link that intermittently retrains can reduce effective throughput during heavy all-reduce because workloads synchronize and stall on collective completion. Vendor datasheets for specific modules also matter. For example, a part such as Cisco SFP-10G-SR is a fiber-based pluggable transceiver, not a DAC or AOC, while DAC and AOC products are typically sold as QSFP and QSFP-DD cable assemblies. Always confirm compatibility with your switch transceiver profile and optics support matrix.

For external standards context around optical link performance and media considerations, the Fiber Optic Association provides practical guidance on fiber classes and connector handling. While AOC is a cable assembly, the same physical realities (optical cleanliness and link budget) still apply because AOC depends on optical transmit/receive performance and stable coupling into the connectorized ends.

Decision checklist: how engineers pick DAC or AOC in real AI clusters

Start by mapping your physical topology and workload profile, then validate against switch support and expected operating conditions. The checklist below reflects how teams avoid late surprises during burn-in and production cutovers.

- Distance and routing constraints: Measure the actual end-to-end path from switch to GPU server NIC. If you need more than typical DAC reach (often a few meters), AOC becomes the safer option.

- Switch compatibility and optics profile support: Confirm the switch firmware supports the transceiver class and rate (including any “compatible DAC” policy). Some platforms enforce vendor-specific EEPROM parsing and may flag unknown modules.

- DOM and telemetry requirements: For managed operations, prefer modules that expose Digital Optical Monitoring equivalents (or at least consistent diagnostic pages). Even DAC can provide temperature and voltage telemetry; AOC may provide optical power indicators.

- Operating temperature and airflow: In AI racks, local hot spots near fan walls and PSU exhaust can push module temps beyond comfort. Validate the module operating range and whether derating occurs above a threshold.

- Budget and power envelope: DAC often reduces power per link in short spans, which matters when you have thousands of ports. AOC may cost more per link but can reduce overall cabling complexity.

- Vendor lock-in and spares strategy: If you standardize on one vendor’s AOC/DAC families, plan spares and replacement lead times for RMA events.

- EMI and environmental risk: If racks sit near high-current busbars, motor drives, or heavy grounding noise, AOC’s optical isolation can improve stability.
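
The distance and EMI items in the checklist can be sketched as a small decision helper. This is a simplified illustration under stated assumptions, not a complete selection policy: the function name, the 0.5 m slack default, and the 80% reach margin are hypothetical, and `max_supported_dac_m` must come from your switch vendor's support matrix. Compatibility, telemetry, thermal, and budget checks still need to happen separately.

```python
def choose_cable(
    path_m: float,
    max_supported_dac_m: float,
    slack_m: float = 0.5,        # assumed allowance for cable management
    reach_margin: float = 0.8,   # assumed: plan to 80% of supported DAC reach
    high_emi: bool = False,
) -> str:
    """Return "DAC" or "AOC" from measured path length and EMI exposure."""
    if path_m <= 0 or max_supported_dac_m <= 0:
        raise ValueError("lengths must be positive")
    required_m = path_m + slack_m
    if high_emi:
        # Optical isolation is preferred when the run passes noisy equipment.
        return "AOC"
    if required_m <= max_supported_dac_m * reach_margin:
        return "DAC"
    return "AOC"


if __name__ == "__main__":
    print(choose_cable(path_m=2.5, max_supported_dac_m=5.0))
    print(choose_cable(path_m=20.0, max_supported_dac_m=5.0))
    print(choose_cable(path_m=2.0, max_supported_dac_m=5.0, high_emi=True))
```

Planning to a margin below the rated maximum reflects the advice to avoid pushing copper to its limit; tune both defaults to your own burn-in data.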

If you maintain a large fleet, also consider operational tooling: do your monitoring dashboards normalize interface error counters and transceiver diagnostics across module types? Teams that lack that normalization can waste hours during incident response when they need to correlate failures to specific link segments.

Pro Tip: During AI training, don’t trust only link “up/down” state. In practice, you want to baseline CRC error rate and link flaps per port over a representative workload run; a marginal DAC that stays “up” can still trigger intermittent retransmits that quietly reduce all-reduce efficiency.
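
One way to baseline ports over a workload run is to snapshot counters before and after, then flag any port whose CRC or flap count grew. The sketch below assumes you have already collected the counters into simple records. The `PortCounters` type, field names, and zero-tolerance defaults are hypothetical; how you collect the counters (SNMP, gNMI, CLI scraping) depends on your platform.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class PortCounters:
    crc_errors: int
    link_flaps: int


def flag_marginal_ports(
    before: Dict[str, PortCounters],
    after: Dict[str, PortCounters],
    max_crc_delta: int = 0,
    max_flap_delta: int = 0,
) -> Dict[str, str]:
    """Compare two counter snapshots and describe ports that degraded."""
    flagged: Dict[str, str] = {}
    for port, end in after.items():
        start = before.get(port)
        if start is None:
            continue  # port was not present in the baseline snapshot
        crc_delta = end.crc_errors - start.crc_errors
        flap_delta = end.link_flaps - start.link_flaps
        if crc_delta < 0 or flap_delta < 0:
            # Counters went backwards: cleared or device reloaded mid-run.
            flagged[port] = "counters reset during run"
        elif crc_delta > max_crc_delta or flap_delta > max_flap_delta:
            flagged[port] = f"crc +{crc_delta}, flaps +{flap_delta}"
    return flagged


if __name__ == "__main__":
    before = {"Eth1/1": PortCounters(0, 0), "Eth1/2": PortCounters(10, 1)}
    after = {"Eth1/1": PortCounters(0, 0), "Eth1/2": PortCounters(250, 1)}
    print(flag_marginal_ports(before, after))
```

Comparing deltas rather than absolute values keeps old, already-investigated errors from masking a port that only degrades under collective traffic.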

Deployment scenario: DAC on ToR, AOC between frames in a GPU pod

Consider a 3-tier AI data center leaf-spine topology with 48-port 100G Top-of-Rack switches and 8-GPU servers using 2x100G NICs. In each rack, the switch-to-server distance is about 1.5 to 2.5 m, and cable routing is straightforward. The team deploys 100G DAC between ToR and servers because it reduces power and avoids optical connector handling. Over 10 weeks of training runs, they track port errors and find stable operation with negligible CRC counts.

However, for inter-rack links between two frames where the physical gap is about 15 to 25 m, the team cannot use DAC within the supported reach. They install 100G AOC for those links, routing the cables through overhead trays where EMI from adjacent power distribution is noticeable. With AOC, they also simplify maintenance: a single cable assembly replaces a complex fiber patching workflow, and technicians can swap it without needing to clean fiber connectors at the rack.

The operational difference shows up during hot-aisle temperature spikes. The team uses airflow telemetry and validates that module temperatures remain within the vendor’s stated operating range under a worst-case fan-failure simulation. Once stabilized, they see fewer link retrains compared to an earlier mixed approach that tried to stretch DAC beyond its planning reach.

Common pitfalls and troubleshooting for DAC vs AOC

Even experienced teams can get tripped up by physical layer realities. Here are failure modes we commonly see, with root cause and what to do next.

Link instability that looks intermittent but never fully drops

Root cause: A DAC that is slightly beyond its recommended reach, or bent tighter than its minimum bend radius, can cause rising BER and occasional retrains. The interface may remain “up,” but CRC/symbol error counters climb during bursts.

Solution: Replace with a shorter DAC SKU or a higher-quality cable assembly rated for your exact distance. Verify seating and inspect for mechanical stress at the module latch. Then rerun a controlled all-reduce workload while monitoring error counters per port.

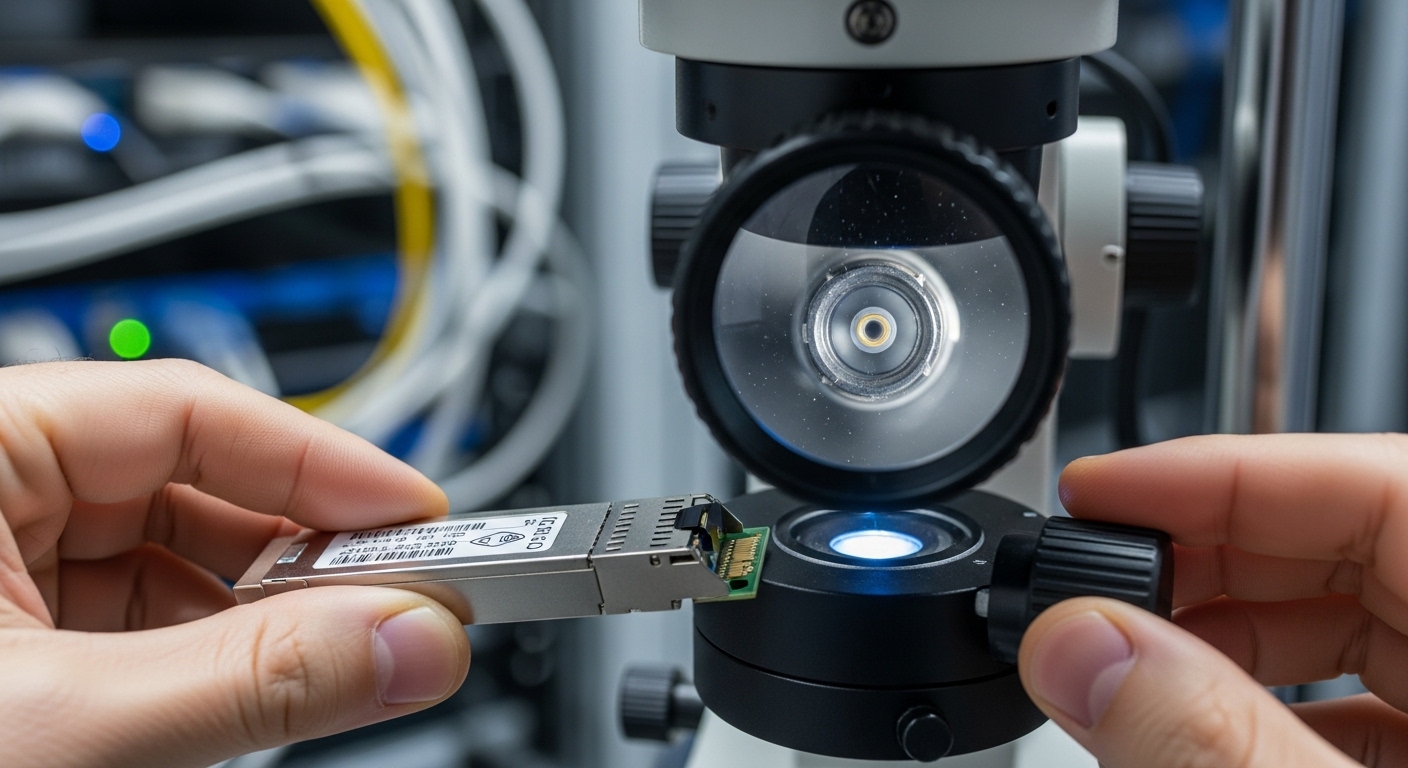

AOC errors after maintenance or “it worked yesterday” incidents

Root cause: Connector contamination, dust, or improper handling during swap can degrade optical receive power. Even if the cable assembly is “plug and play,” optical surfaces can collect particulates in a dusty data hall.

Solution: Use approved cleaning procedures and inspect connectors with a scope if your SOP allows. Confirm that transceiver diagnostics show reasonable optical receive power and that no alarms indicate low signal. Establish a verification step in change management: check counters immediately after install.
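
Where the AOC exposes receive power in its diagnostics, a post-install check can be as simple as converting the reading to dBm and comparing it with the module's low-power warning threshold. The sketch below assumes the reading is already in milliwatts and that you supply the threshold from the module's own diagnostic data or datasheet; the function names and the example threshold are hypothetical.

```python
import math


def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power in milliwatts to dBm (10 * log10 of mW)."""
    if power_mw <= 0:
        return float("-inf")  # no measurable light
    return 10.0 * math.log10(power_mw)


def rx_power_ok(power_mw: float, low_warning_dbm: float) -> bool:
    """True if receive power is at or above the low-power warning threshold."""
    return mw_to_dbm(power_mw) >= low_warning_dbm


if __name__ == "__main__":
    # -9.0 dBm is an illustrative threshold, not a value from any datasheet.
    for reading_mw in (0.5, 0.05, 0.0):
        print(reading_mw, round(mw_to_dbm(reading_mw), 2), rx_power_ok(reading_mw, -9.0))
```

Recording the dBm value at install time also gives you a baseline to compare against after later maintenance.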

Thermal derating in AI racks causing gradual performance degradation

Root cause: Modules near exhaust paths or obstructed airflow run hotter. DAC can be sensitive to board-level thermal effects; AOC can be sensitive to optical/electrical conversion electronics under high temperature cycles.

Solution: Measure actual module temperatures via platform telemetry and compare to the vendor operating spec. Improve airflow (fan direction, baffles, cable management) and, if needed, reposition links using shorter runs that reduce module heat soak.
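
As a rough illustration of the comparison against the vendor spec, the following sketch ranks ports by remaining thermal headroom. The 70 °C ceiling matches the commercial-grade class mentioned earlier but must be replaced with the value from your module's datasheet; the 10 °C minimum headroom and the function name are assumptions for illustration.

```python
from typing import Dict, List, Tuple


def ports_low_on_headroom(
    module_temps_c: Dict[str, float],
    max_operating_c: float = 70.0,   # assumed; use the vendor's stated limit
    min_headroom_c: float = 10.0,    # assumed safety margin
) -> List[Tuple[str, float]]:
    """Return (port, headroom) pairs below the margin, worst first."""
    low = [
        (port, max_operating_c - temp)
        for port, temp in module_temps_c.items()
        if max_operating_c - temp < min_headroom_c
    ]
    return sorted(low, key=lambda item: item[1])


if __name__ == "__main__":
    temps = {"Eth1/1": 45.0, "Eth1/2": 63.5, "Eth1/3": 72.0}
    for port, headroom in ports_low_on_headroom(temps):
        print(f"{port}: {headroom:+.1f} C headroom")
```

Negative headroom means the module is already above its stated limit, so those ports sort to the top of the list.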

Compatibility mismatches due to transceiver authentication or EEPROM parsing

Root cause: Some switch models enforce strict compatibility checks for transceiver identifiers. A third-party DAC/AOC might link but run at an unexpected mode, or it may trigger frequent link resets under load.

Solution: Validate with your switch vendor’s compatibility list and confirm negotiated speed and FEC settings. If you must use third-party modules, test in a staging rack with the same switch firmware and run a soak test.
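
A staging check for negotiated speed and FEC can be reduced to comparing intended settings with what the switch reports. The sketch below assumes both are available as plain dictionaries keyed by port. The keys (`speed_gbps`, `fec`) and values are hypothetical placeholders; map them to whatever your platform's API or CLI output actually provides.

```python
from typing import Any, Dict, List


def find_link_mismatches(
    expected: Dict[str, Dict[str, Any]],
    observed: Dict[str, Dict[str, Any]],
) -> Dict[str, List[str]]:
    """List per-port differences between intended and reported link settings."""
    mismatches: Dict[str, List[str]] = {}
    for port, want in expected.items():
        got = observed.get(port)
        if got is None:
            mismatches[port] = ["port missing from observed state"]
            continue
        diffs = [
            f"{key}: expected {value!r}, observed {got.get(key)!r}"
            for key, value in want.items()
            if got.get(key) != value
        ]
        if diffs:
            mismatches[port] = diffs
    return mismatches


if __name__ == "__main__":
    expected = {"Eth1/1": {"speed_gbps": 100, "fec": "rs-fec"}}
    observed = {"Eth1/1": {"speed_gbps": 100, "fec": "none"}}
    print(find_link_mismatches(expected, observed))
```

Running this before and after the soak test helps catch modules that link up in an unexpected mode or renegotiate under load.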

Cost and ROI: what you should budget for and how to justify the choice

Pricing varies widely by vendor, rate, and length. As a planning assumption, DAC assemblies are usually priced below AOC when comparing like-for-like speed classes at very short reach. AOC typically costs more per link, especially at 100G, but it can reduce labor time during installation and can prevent expensive downtime when DAC would be forced beyond reliable reach.

TCO is not only the module cost. Include operational costs: technician time, spares inventory, and the probability of RMA. In practice, if you have thousands of ports, a small power difference matters. For example, if DAC saves even 1 to 2 W per link at scale, that can translate into measurable facility power and cooling impact. Conversely, if AOC reduces cabling complexity and avoids connector handling errors, the labor savings and reduced incident frequency can outweigh higher per-unit cost.
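
The power argument is simple arithmetic, sketched below. The port count, PUE, and electricity price in the example are illustrative assumptions chosen only to show the calculation; the 1.5 W figure sits inside the 1 to 2 W range mentioned above. The function name is hypothetical.

```python
def annual_power_cost(
    ports: int,
    watts_saved_per_link: float,
    pue: float,
    price_per_kwh: float,
) -> float:
    """Yearly cost of a per-link power difference, including facility overhead.

    IT energy (kWh) = ports * watts * hours_per_year / 1000, then scaled by
    PUE to account for cooling and power distribution.
    """
    hours_per_year = 24 * 365
    it_kwh = ports * watts_saved_per_link * hours_per_year / 1000.0
    return it_kwh * pue * price_per_kwh


if __name__ == "__main__":
    # All inputs are assumptions for illustration, not measured values.
    cost = annual_power_cost(ports=4000, watts_saved_per_link=1.5, pue=1.4, price_per_kwh=0.10)
    print(f"Estimated annual difference: {cost:,.2f} per year")
```

Set this figure against the per-link price gap, expected labor time, and incident cost to see which side of the tradeoff dominates for your fleet.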

FAQ

Is DAC always lower latency than AOC for AI?

Not always. DAC often has fewer conversion stages, which can reduce the fixed per-link latency, but the system-level impact depends on the switch pipeline, NIC settings, and whether either link drops frames that then have to be retransmitted. The best indicator is to measure end-to-end performance for your workload and validate link error counters under load.

What reach should I assume for DAC in AI racks?

Many 25G and 100G DAC SKUs are commonly planned for roughly 1 to 7 m, but vendor specs vary by generation and cable construction. Measure your actual path, include slack for cable management, and avoid pushing to the maximum rating.

When does AOC become the safer choice?

Choose AOC when you need longer reach than copper supports, when cable routing is tightly constrained, or when EMI is a concern. AOC is also useful when you want to reduce fiber patching complexity while still moving beyond typical DAC distance limits.

How do I verify performance beyond “link is up”?

Track interface error counters such as CRC errors and link flaps, and correlate them with training events. Also check module telemetry where available, including temperature and optical diagnostics for AOC.

Can I mix DAC and AOC in the same fabric?

Yes, but you must validate switch compatibility and ensure consistent speed negotiation and error recovery behavior. Plan a staged rollout and run a soak test that reflects your AI traffic pattern, not just a simple ping.

What should I standardize for spares and RMA?

Standardize on a small set of module SKUs that your switch firmware fully supports. Keep spares for the most failure-prone lengths used in your topology and document the exact vendor part numbers and firmware compatibility.

If you want the fastest path to a stable AI fabric, start with the physical distance and switch compatibility checklist, then validate with workload-based error counter baselines. Next, review DAC vs fiber transceivers and AOC compatibility and diagnostics to align module selection with your monitoring and spares strategy.

Author bio: I have deployed and debugged high-density Ethernet links in GPU pods, including DAC and AOC rollouts with telemetry-driven incident response. I focus on measurable link stability, power/thermal effects, and practical compatibility testing across switch firmware versions.