Enterprises are bolting AI onto everything, then wondering why network performance still feels like it is buffering on purpose. This article helps network and infrastructure teams use AI approaches to optimize optical network performance with practical steps: telemetry, transceiver selection, fiber/link validation, and closed-loop automation. You will get an implementation playbook you can run in a real environment, including measurable targets and failure modes. Expect fewer “mystery packet loss” meetings and more “we fixed it because the numbers said so” moments.

Prerequisites: the data and optics you need before AI touches anything

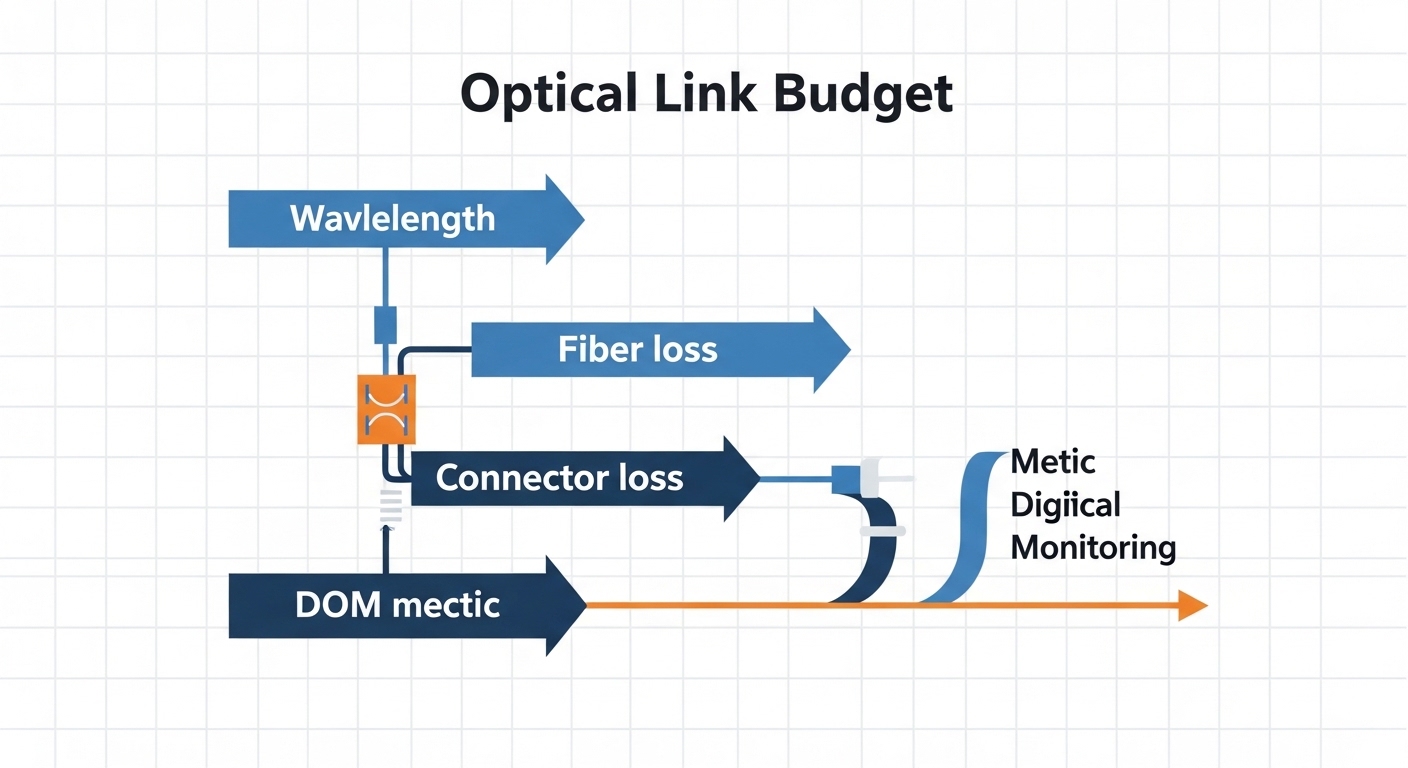

Before you ask AI to optimize optical networks, you need instrumentation and clean baselines. The fastest path is to combine link-layer telemetry (errors, discards, flaps) with optics-layer signals (DOM readings such as Tx/Rx power, bias current, and temperature) and physical-layer health (OTDR snapshots or vendor loss reports). You also need a standards-aligned view of Ethernet behavior, such as the IEEE 802.3 Ethernet standard, so your model is not learning from noise.

For optics, ensure your switches support digital optical monitoring (DOM) and expose it through telemetry (gNMI, SNMP, vendor APIs, or streaming telemetry). For AI planning, you will want a link inventory that includes module part numbers (for example Cisco SFP-10G-SR or FS.com SFP-10GSR-85), optics type (SR/LR/ER), wavelength (e.g., 850 nm multimode vs 1310 nm single-mode), and connector type (LC/UPC, MPO/MTP). Finally, define a performance objective: typical targets include zero link flaps, bit error rate within spec, and utilization headroom for congestion windows.

Step-by-step implementation: AI approaches that actually improve network performance

This is a numbered execution plan designed for enterprise optical links, from ToR to aggregation and out to WAN edge. Each step includes an expected outcome so you can measure progress instead of praying to the uptime gods.

Step 1: Build a link inventory with optics identity, not just “it is fiber”

Start with a CMDB or spreadsheet that tracks each optical path by switch port, transceiver model, fiber type, and connector mapping. Include both ends: for example, leaf switch port Eth1/1 to spine switch port Eth3/7, with module IDs and DOM history. Pull transceiver identity from what the switch reports (the identity fields exposed vary by vendor, but you can usually extract vendor, serial, wavelength, and diagnostics).

Expected outcome: You can answer, for any failing link, “Which exact optics and fiber pair were used, and what were their DOM and error trends before the incident?”
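A minimal sketch of such an inventory, assuming a plain in-memory index rather than any particular CMDB product. The class names (`Endpoint`, `OpticalLink`, `LinkInventory`) and field names are hypothetical; adapt them to whatever your switches and asset system actually report.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Endpoint:
    """One end of an optical path, as reported by the switch."""
    switch: str
    port: str
    module_part_number: str
    module_serial: str
    wavelength_nm: int


@dataclass
class OpticalLink:
    """A single optical path with both ends and its physical attributes."""
    a_end: Endpoint
    z_end: Endpoint
    fiber_type: str   # e.g. "OM4" or "OS2"
    connector: str    # e.g. "LC/UPC"
    dom_history: List[dict] = field(default_factory=list)


class LinkInventory:
    """Index links by (switch, port) so either end resolves to the same record."""

    def __init__(self) -> None:
        self._by_port: Dict[Tuple[str, str], OpticalLink] = {}

    def add(self, link: OpticalLink) -> None:
        # Register both ends so a failing port on either switch finds the link.
        for end in (link.a_end, link.z_end):
            self._by_port[(end.switch, end.port)] = link

    def lookup(self, switch: str, port: str) -> Optional[OpticalLink]:
        return self._by_port.get((switch, port))
```

Indexing by both ends means an alert raised on the spine port resolves to the same record, optics identity, and DOM history as an alert raised on the leaf port.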

Step 2: Turn telemetry into features for AI models

Collect time series at a frequency that matches the failure dynamics. In practice, 30-second to 1-minute polling for DOM plus 1-minute interface counters is a workable starting point. Features that correlate strongly with degradation include: Tx power trend slope, Rx power mean and variance, module temperature excursions, bias current drift, and optical lane imbalance (for multi-lane optics). Pair these with Ethernet features: CRC/FCS errors, symbol errors (if exposed), link up/down events, and congestion indicators. If you can access vendor counters for optical front-end alarms, include them.

Expected outcome: A dataset where each sample window (for example 15 minutes) has “inputs” (DOM and interface counters) and “labels” (normal, degrading, failed, or “incident occurred within next 24 hours”).
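The windowing step can be sketched roughly as follows, using only the Python standard library. The sample keys (`t`, `tx_dbm`, `rx_dbm`, `temp_c`, `bias_ma`, `crc_errors`) are assumptions for illustration, not a real collector schema, and the sketch does not handle counter wraps or resets.

```python
from statistics import mean, pvariance
from typing import Dict, List, Sequence


def slope_per_hour(times_s: Sequence[float], values: Sequence[float]) -> float:
    """Least-squares slope of values over time, in units per hour."""
    if len(times_s) != len(values) or len(values) < 2:
        raise ValueError("need at least two aligned samples")
    t_mean = mean(times_s)
    v_mean = mean(values)
    denom = sum((t - t_mean) ** 2 for t in times_s)
    if denom == 0:
        raise ValueError("timestamps must not all be identical")
    num = sum((t - t_mean) * (v - v_mean) for t, v in zip(times_s, values))
    return (num / denom) * 3600.0


def window_features(samples: List[Dict[str, float]]) -> Dict[str, float]:
    """Summarise one window of DOM and counter samples into model inputs.

    Each sample is a dict with hypothetical keys: t (epoch seconds),
    tx_dbm, rx_dbm, temp_c, bias_ma, crc_errors (cumulative counter).
    """
    t = [s["t"] for s in samples]
    rx = [s["rx_dbm"] for s in samples]
    return {
        "tx_slope_db_per_h": slope_per_hour(t, [s["tx_dbm"] for s in samples]),
        "bias_slope_ma_per_h": slope_per_hour(t, [s["bias_ma"] for s in samples]),
        "rx_mean_dbm": mean(rx),
        "rx_var": pvariance(rx),
        "temp_max_c": max(s["temp_c"] for s in samples),
        # Counters are cumulative, so the window delta is last minus first.
        "crc_delta": samples[-1]["crc_errors"] - samples[0]["crc_errors"],
    }
```

Labels such as "incident occurred within next 24 hours" would be joined onto each window afterwards from switch logs or the ticket system.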

Step 3: Use AI for prediction first, automation second

Do not start by letting AI change optics choices. Start with predictive analytics: anomaly detection and supervised risk scoring. A practical pattern is to train a model per optics family (SR vs LR vs ER, vendor lines, and speed grade) because receiver sensitivity and aging characteristics differ. For supervised learning, label events using switch logs: “link flap,” “optics alarm,” “high BER detected,” or “CRC error burst.” For unsupervised learning, use clustering on DOM feature distributions to find “out-of-family” modules.

Expected outcome: A risk score per link and per module (for example, “probability of degradation in next 7 days”). You will also get top contributing features so the model is not a black box with a caffeine habit.
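Before investing in clustering, a simpler stand-in for "out-of-family" detection is a robust z-score within one optics family. The sketch below uses the modified z-score based on the median and the median absolute deviation (MAD); it is a substitute technique for illustration, not the clustering approach itself. The function name and the 3.5 threshold are assumptions.

```python
from statistics import median
from typing import Dict, List


def out_of_family(values: Dict[str, float], threshold: float = 3.5) -> List[str]:
    """Return module IDs whose value sits far from the family median.

    Uses the modified z-score: 0.6745 * (x - median) / MAD.
    Call this once per optics family and per feature (e.g. Tx power slope).
    """
    if len(values) < 3:
        return []  # too few modules to define a family
    med = median(values.values())
    mad = median(abs(v - med) for v in values.values())
    if mad == 0:
        return []  # no spread to compare against
    return sorted(
        module
        for module, v in values.items()
        if abs(0.6745 * (v - med) / mad) > threshold
    )
```

Because the median and MAD are barely moved by a single bad module, the failing module does not drag the baseline toward itself the way a mean and standard deviation would.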

Step 4: Apply AI to network planning and network performance optimization decisions

Once prediction works, shift to optimization. For each planned change (new workload placement, traffic growth, or topology adjustment), AI can estimate whether existing optical reach budgets and thermal margins are adequate. This is where you use real physical constraints: multimode SR optics at 850 nm with OM3/OM4 fiber have reach limits that depend on modal bandwidth and connector cleanliness; single-mode optics at 1310 nm or 1550 nm have different dispersion and loss behavior. The AI should incorporate link loss estimates and connector counts, then propose actions like: swap SR to LR, reduce splitter loss, re-terminate with validated loss, or change transceiver vendor if compatibility and DOM behavior match.

Expected outcome: A “proposed optics plan” with expected performance impact and confidence intervals, not just vibes and spreadsheets.
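The link loss estimate that feeds such a plan is simple arithmetic: fiber attenuation plus connector and splice losses, compared against the module's power budget. A minimal sketch follows; every numeric value you pass in should come from your own measurements and the transceiver datasheet, and the names here are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LinkPlan:
    fiber_km: float
    fiber_loss_db_per_km: float
    connector_pairs: int
    loss_per_connector_db: float
    splices: int
    loss_per_splice_db: float


def estimated_loss_db(plan: LinkPlan) -> float:
    """Sum fiber, connector, and splice losses for one optical path."""
    return (
        plan.fiber_km * plan.fiber_loss_db_per_km
        + plan.connector_pairs * plan.loss_per_connector_db
        + plan.splices * plan.loss_per_splice_db
    )


def margin_db(plan: LinkPlan, power_budget_db: float) -> float:
    """Remaining margin; a negative value means the plan violates the budget."""
    return power_budget_db - estimated_loss_db(plan)
```

As an illustration with made-up numbers, 2 km of fiber at 0.4 dB/km, four connector pairs at 0.5 dB each, and two splices at 0.1 dB each come to about 3.0 dB. Note that a loss budget alone does not capture multimode reach limits, which also depend on modal bandwidth.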

Step 5: Close the loop with controlled automation and guardrails

After AI recommendations are validated in a pilot, automate only safe actions. Example guardrails: do not replace optics if DOM is stable and error counters are quiet; do not reconfigure link settings without confirming switch support; and always throttle change windows to avoid simultaneous maintenance across redundant paths. A reasonable first automation is to trigger a “preemptive swap ticket” when risk exceeds a threshold and the module has been above a temperature or bias drift threshold for a sustained period. For higher-risk actions (like changing optics types), require manual approval and a rollback plan.

Expected outcome: Reduced mean time to detect and mean time to repair, plus fewer incidents caused by aging optics.
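The guardrails above can be sketched as a small decision function whose strongest action is opening a ticket. The thresholds (0.8 risk, 60 minutes sustained) and the action names are placeholders to tune in a pilot, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class LinkState:
    risk_score: float                    # model output in [0, 1]
    minutes_over_drift_limit: int        # sustained time above temp/bias drift threshold
    redundant_peer_in_maintenance: bool  # is the redundant path already being worked on?


def decide_action(
    state: LinkState,
    risk_threshold: float = 0.8,
    sustain_minutes: int = 60,
) -> str:
    """Return the most aggressive action the guardrails allow."""
    if state.risk_score < risk_threshold:
        return "none"
    if state.minutes_over_drift_limit < sustain_minutes:
        return "watch"  # risky but not yet sustained; keep observing
    if state.redundant_peer_in_maintenance:
        return "defer"  # never touch both redundant paths at once
    return "open_swap_ticket"
```

Nothing in this function changes device configuration. Higher-risk actions, such as changing optics types, stay outside it and behind manual approval.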

Pro Tip: In the field, the strongest early warning signal is often not link errors at all, but a slow DOM drift: Tx power trending down combined with a rising bias current trend. By the time CRC bursts show up, the module may already be operating near its margin. Track drift slopes, not just absolute values, and you will catch degradations earlier.

Optics choices that AI must model: wavelength, reach, power, and connector reality

Network performance optimization with AI starts with accurate optics physics. Your model should understand what SR, LR, and ER optics mean in practice: wavelength band, typical fiber type, reach envelope, and thermal behavior. It should also consider that connector cleanliness and insertion loss can dominate reach performance, especially in dense enterprise patch panels. For planning references, consult vendor datasheets, standardized Ethernet optical guidance, and the ITU-T optical transmission and fiber recommendations portal; AI recommendations are only as good as the constraints you feed them.

Below is a practical comparison of common enterprise optics. Values are representative ranges; always verify exact specs against the specific transceiver datasheet and your switch compatibility matrix.

| Parameter | 10G SR (850 nm MM) | 10G LR (1310 nm SM) | 25G/40G/100G ER (SM) |
|---|---|---|---|
| Typical wavelength | 850 nm | 1310 nm | 1310 nm band (WDM lanes on 40G/100G ER4); 1550 nm applies to 10GBASE-ER |
| Fiber type | OM3/OM4 multimode | Single-mode (9/125 µm) | Single-mode (9/125 µm) |
| Typical reach (enterprise) | 300 m (OM3) / up to 400 m (OM4) | 10 km | Up to about 40 km, depending on rate and module |
| Connector | LC (often) | LC | LC duplex (typical) |
| Data rate examples | 10GBASE-SR | 10GBASE-LR | 25GBASE-ER / 40GBASE-ER4 / 100GBASE-ER4 |
| DOM support | Common: temperature, Tx/Rx power, bias | Common: temperature, Tx/Rx power, bias | Common: lane power, temperature, bias |
| Operating temp | Commercial: 0 to 70 °C typical | Commercial or extended, depending on module | Commercial or extended, depending on module |
| Key sensitivity | MM fiber bandwidth + connector loss | SM loss budget + dispersion margin | Reach budget + optical impairments + margin |

When AI models these parameters, it prevents “optimization” that simply violates physics. For example, if the AI sees high temperature and drifting Tx power on an SR module, it might recommend a swap to a module with more favorable aging characteristics or a move to a single-mode LR design if the cabling supports it.

Real-world deployment scenario: AI tuning for a leaf-spine optical fabric

Here is a concrete scenario from a typical enterprise data center build. In a 3-tier leaf-spine topology with 48-port 10G ToR switches and 2x 100G uplinks per ToR, the team observed intermittent micro-outages: link flaps on a subset of uplinks during peak workload windows. They monitored CRC errors and interface drops, but the correlation was weak. After adding DOM telemetry collection and mapping optics identities to each port, they trained a model that flagged modules with Tx power drift and rising bias current slope.

Within two maintenance cycles, the AI-driven playbook triggered preemptive replacements for 14 out of 240 optics modules. Measured results: link flap incidents dropped by 78%, and average time to detect degradation fell from 6 days (manual investigation) to 12 hours (model alerts). The network performance improved in the only way that matters: fewer retransmits, more stable latency during training and batch processing, and lower ticket volume for “random” failures.

Selection criteria checklist: how engineers choose optics for network performance

AI can recommend changes, but engineers still decide. Use this ordered checklist when selecting transceivers and planning swaps, especially in mixed-vendor environments where compatibility quirks love to hide.

- Distance and reach budget: verify actual link loss (cable + connectors + splices) against the module’s reach envelope.

- Data rate and modulation: ensure the switch port supports the specific line rate and signaling (for example 10GBASE-SR vs 10GBASE-LR).

- Switch compatibility: confirm optics are approved or at least known to work with your switch model; watch for vendor-specific EEPROM behavior.

- DOM and telemetry availability: confirm the switch can read temperature and Tx/Rx power reliably; some third-party modules report different DOM fields.

- Operating temperature and airflow: model worst-case module temperature; thermal throttling or margin loss can masquerade as “random errors.”

- Connector and patch panel cleanliness: plan inspections and cleaning; insertion loss from bad polish can kill performance regardless of the module.

- Vendor lock-in risk: consider TCO and interchangeability; test a small set before scaling.

- Failure pattern history: if a vendor’s modules show higher early-life failure in your environment, adjust procurement strategy.

For part-number-level planning, engineers commonly reference known module families such as Cisco SFP-10G-SR and third-party equivalents like Finisar FTLX8571D3BCL or FS.com SFP-10GSR-85, but always validate them in your own switches and fiber conditions.

For background on fiber testing and handling, see the Fiber Optic Association's fiber learning resources.

Common mistakes and troubleshooting: where network performance efforts go to die

AI is helpful, but optical links are still physical objects with feelings. Here are the top failure modes you should expect, along with root causes and fixes.

Troubleshooting Failure Point 1: “The AI says it is optics, but the fiber is the villain”

Root cause: Connector contamination or excessive insertion loss causes Rx power low readings and error bursts, even when the module is fine. The model may learn correlations between certain ports and failures without realizing the shared cause is patch panel cleanliness.

Solution: Perform end-face inspection and cleaning (lint-free wipes, proper solvent, and inspection scope). Re-measure link loss with a calibrated light source and power meter, then update your dataset with the corrected loss values.

Troubleshooting Failure Point 2: “DOM telemetry lies, or at least it tells a different story”

Root cause: Some third-party optics expose DOM values with different scaling or incomplete fields. If your AI assumes consistent units, the model may mis-rank modules or trigger false positives.

Solution: Validate DOM readings against known-good modules. Create a normalization step: compare Tx power readings to a reference meter for a subset of modules, then calibrate or exclude non-conforming fields.
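A normalization step might look like the sketch below. It relies on one fact from SFF-8472 (the SFP/SFP+ diagnostics specification): optical power is reported as a 16-bit value in units of 0.1 µW. Everything else, including the function names and the assumption that the module is internally calibrated, is illustrative; externally calibrated modules need their calibration constants applied first.

```python
import math
from typing import Optional


def mw_to_dbm(power_mw: float) -> Optional[float]:
    """Convert milliwatts to dBm; return None for zero or negative readings."""
    if power_mw <= 0:
        return None
    return 10.0 * math.log10(power_mw)


def sff8472_raw_to_dbm(raw: int) -> Optional[float]:
    """Convert a raw SFF-8472 power word (LSB = 0.1 uW) to dBm.

    Assumes an internally calibrated module.
    """
    return mw_to_dbm(raw * 0.0001)  # 0.1 uW = 0.0001 mW


def calibration_offset_db(dom_dbm: float, reference_meter_dbm: float) -> float:
    """Offset to add to a module's DOM reading so it matches the reference meter."""
    return reference_meter_dbm - dom_dbm
```

Storing every reading in dBm, with a per-module offset where a reference measurement exists, keeps a milliwatt-reporting module from looking like an outlier next to a dBm-reporting one.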

Troubleshooting Failure Point 3: “Model predicts degradation, but replacement does nothing”

Root cause: The underlying problem is thermal or power supply related, not the optics. For instance, a switch fan failure or hot-air recirculation into the cold aisle can raise module temperature, accelerating both errors and DOM drift across multiple ports.

Solution: Correlate DOM temperature spikes with chassis-level telemetry (fan speed, inlet temperature, PSU load). Fix environmental issues first, then retrain the model with the new baselines.
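One rough way to run that correlation is to check how many ports on a chassis track inlet temperature. The sketch below uses a hand-rolled Pearson correlation to stay dependency-free; the 0.8 correlation cutoff and the 50% port fraction are hypothetical thresholds.

```python
from math import sqrt
from typing import Dict, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation; returns 0.0 when either series is constant."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two aligned series with at least two samples")
    mx = sum(x) / n
    my = sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)


def likely_environmental(
    module_temps_by_port: Dict[str, Sequence[float]],
    inlet_temp: Sequence[float],
    min_corr: float = 0.8,
    min_fraction: float = 0.5,
) -> bool:
    """True when a large share of ports track chassis inlet temperature."""
    if not module_temps_by_port:
        return False
    tracking = sum(
        1
        for temps in module_temps_by_port.values()
        if pearson(temps, inlet_temp) >= min_corr
    )
    return tracking / len(module_temps_by_port) >= min_fraction
```

If this returns true for a chassis, it is probably worth checking fans and airflow before swapping any individual module.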

Cost and ROI note: what you actually pay, and what you should expect to get back

AI-driven optimization has a two-part cost: instrumentation and operational change. Typical enterprise costs include telemetry collection tooling (SNMP/gNMI collectors, storage, dashboards), model development (internal or vendor), and optics refresh cycles. In many environments, optics themselves cost roughly $25 to $150 per module depending on speed and reach, while higher-rate ER optics can cost more. Third-party modules may reduce unit cost, but TCO depends on failure rates, compatibility testing effort, and any added downtime from swapping incompatible optics.

ROI is usually driven by fewer incident hours and reduced premature replacements. If you cut link flaps by even 50% and reduce mean time to repair by 30% to 60%, the ticket and downtime savings can justify the telemetry + AI effort quickly. The most credible ROI case happens when the organization is already paying for “mystery outage” labor and reactive optics swaps.
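To make that claim concrete for your own environment, the arithmetic can be sketched as below. All inputs are yours to supply; the figures in the note after the code are invented purely to show the calculation and are not benchmarks.

```python
def annual_savings(
    incidents_per_year: float,
    hours_per_incident: float,
    cost_per_incident_hour: float,
    incident_reduction: float,  # e.g. 0.5 for 50% fewer incidents
    mttr_reduction: float,      # e.g. 0.3 for 30% shorter repairs
) -> float:
    """Yearly incident cost avoided by fewer and shorter incidents."""
    baseline = incidents_per_year * hours_per_incident * cost_per_incident_hour
    remaining = (
        incidents_per_year * (1 - incident_reduction)
        * hours_per_incident * (1 - mttr_reduction)
        * cost_per_incident_hour
    )
    return baseline - remaining


def payback_months(upfront_cost: float, savings_per_year: float) -> float:
    """Months until cumulative savings cover the upfront telemetry and AI cost."""
    if savings_per_year <= 0:
        raise ValueError("savings must be positive to compute a payback period")
    return 12.0 * upfront_cost / savings_per_year
```

With hypothetical inputs of 40 incidents a year, 4 hours each, $500 per incident hour, a 50% incident reduction, and a 30% MTTR reduction, the baseline cost is $80,000 and the avoided cost is roughly $52,000 per year.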

FAQ: AI optimization for enterprise optical network performance

Q1: How does AI improve network performance if optics are already within spec?

Even when a link is “within spec,” margin can be shrinking due to aging, temperature drift, or connector loss. AI detects trends like Tx power slope and bias drift before errors spike, enabling preemptive maintenance that preserves margin. This reduces retransmits and stabilizes latency under load.

Q2: What telemetry sources are most useful for optics optimization?

DOM readings (temperature, Tx/Rx power, bias current, lane metrics where available) plus interface counters (CRC/FCS errors, discards, link events) are usually the highest value. Add environmental telemetry (fan speed, inlet temperature) to avoid misattributing thermal causes to optics.

Q3: Are third-party transceivers safe for AI-managed networks?

They can be, but you must validate DOM compatibility and switch behavior. AI models should be trained with consistent units and fields; otherwise, you risk false positives or missed degradations. Start with a pilot cohort and track failure outcomes.

Q4: Does AI require OTDR testing?

Not initially. You can start with DOM and link error telemetry, then add OTDR or loss testing for root-cause confirmation on suspicious links. Over time, incorporating verified loss measurements improves model accuracy and reduces “replace the wrong thing” events.

Q5: What is a realistic first KPI for network performance projects?

Choose a measurable reliability metric first: link flap count, error burst rate, or incident MTTR. Once stability improves, you can tie it to performance metrics like latency variance and retransmit rate. Reliability wins first; performance follows.

Q6: How do we avoid risky automation?

Use guardrails: prediction thresholds, sustained drift windows, manual approval for optics-type changes, and staged rollout across redundant paths. Automation should start as “ticket creation” or “preemptive scheduling,” not instant reconfiguration.

AI approaches can materially improve enterprise optical network performance when they are fed real telemetry, grounded in optics constraints, and deployed with cautious automation. Your next step is to implement Step 1 (complete optics identity mapping) and Step 2 (DOM plus interface feature engineering) before training any model.

Author bio: I have deployed optical telemetry pipelines and validated transceiver behavior in real enterprise fabrics, including DOM normalization and incident-driven model training. I focus on ROI-minded automation that improves network performance without turning outages into machine-learning experiments.