A field team recently had to bring up a new edge-computing stack across three remote locations, each with different fiber distances, switch models, and temperature swings. This article explains practical use cases for optical modules in those deployments, including what actually worked, what failed, and how to choose the right transceiver. It is written for network and field engineers who need measurable link stability, not just datasheet claims.

Trouble at the edge: why optical module selection became the bottleneck

In the first week of rollout, two sites reported intermittent packet loss during peak data capture windows, despite the same application workload and nearly identical topology. The root cause was not the servers or the orchestration layer but the optics: mismatched module types, weak power budgets, and one transceiver running outside its intended thermal margin. In edge computing, every higher layer depends on the link, so small optical mismatches show up as application-level timeouts.

The challenge was typical: edge sites were connected back to a central aggregation switch using single-mode fiber, but the “last mile” length varied from 6 km to 18 km. Each location used different vendor switch line cards and optics cages, and the team also had to manage power draw in cabinets with limited cooling. The deployment forced the team to treat optical modules as a field-replaceable system component with strict verification steps, not a commodity part.

Environment specs from the three sites

Here are the actual constraints the team designed around, based on on-site measurements and equipment specs:

- Fiber types: SMF-28 class single-mode; patch loss verified during install.

- Distances: Site A: 6 km; Site B: 12 km; Site C: 18 km.

- Switch platform: 10G SFP+ cages on aggregation switches; later retrofits required SFP+ compatibility checks.

- Temperature: Cabinets reached 55 °C ambient during summer days; one site had poor airflow.

- Operational need: Continuous video analytics uplink at 9.0 Gbps average, with bursts to full line rate.

Chosen solution: mapping real use cases to SFP+ optics and link budgets

The team standardized on SFP+ optical modules for 10G uplinks and used explicit link-budget math before ordering spares. For each use case, the selection targeted wavelength, reach, connector style, and temperature rating, while also considering DOM support for monitoring. The key was to align module type with the fiber plant and to validate that the receiving switch accepted each vendor’s module electrically.

Technical specifications comparison (what the team actually used)

The chosen mix covered short-reach links within a site and longer single-mode backhaul. Below is a representative comparison aligned to the most common 10G edge optics categories used in real deployments.

| Optical module type | Wavelength | Typical reach | Connector | Line rate | Power class | Operating temp | DOM support |
|---|---|---|---|---|---|---|---|
| 10G SR (MMF) SFP+ | 850 nm | 300 m (OM3) | LC | 10.3125 Gbps | Low power | -10 to 70 °C (typical) | Often yes (vendor-dependent) |
| 10G LR (SMF) SFP+ | 1310 nm | 10 km | LC | 10.3125 Gbps | Low power | -5 to 70 °C (typical) | Often yes (vendor-dependent) |
| 10G ER (SMF) SFP+ | 1550 nm | 40 km | LC | 10.3125 Gbps | Higher power | -5 to 70 °C (typical) | Commonly yes (vendor-dependent) |

As the authority on electrical and optical behavior at 10G, the team referenced IEEE 802.3ae, which defines the 10GBASE-R family (SR, LR, and ER), along with vendor datasheets for each module’s optical power, receiver sensitivity, and DOM implementation details. [Source: IEEE 802.3ae] [Source: vendor SFP+ transceiver datasheets]

Use cases by edge function

The deployment separated optics into three functional use cases, each with a different risk profile:

- Uplink backhaul: 10GBASE-LR or -ER SFP+ over SMF from edge aggregation to central site. This use case demanded headroom for connector aging and splice loss growth.

- Intra-edge switching: short hops between access switches and compute racks, often using SR over OM3/OM4 where available to save cost and avoid long-distance optics.

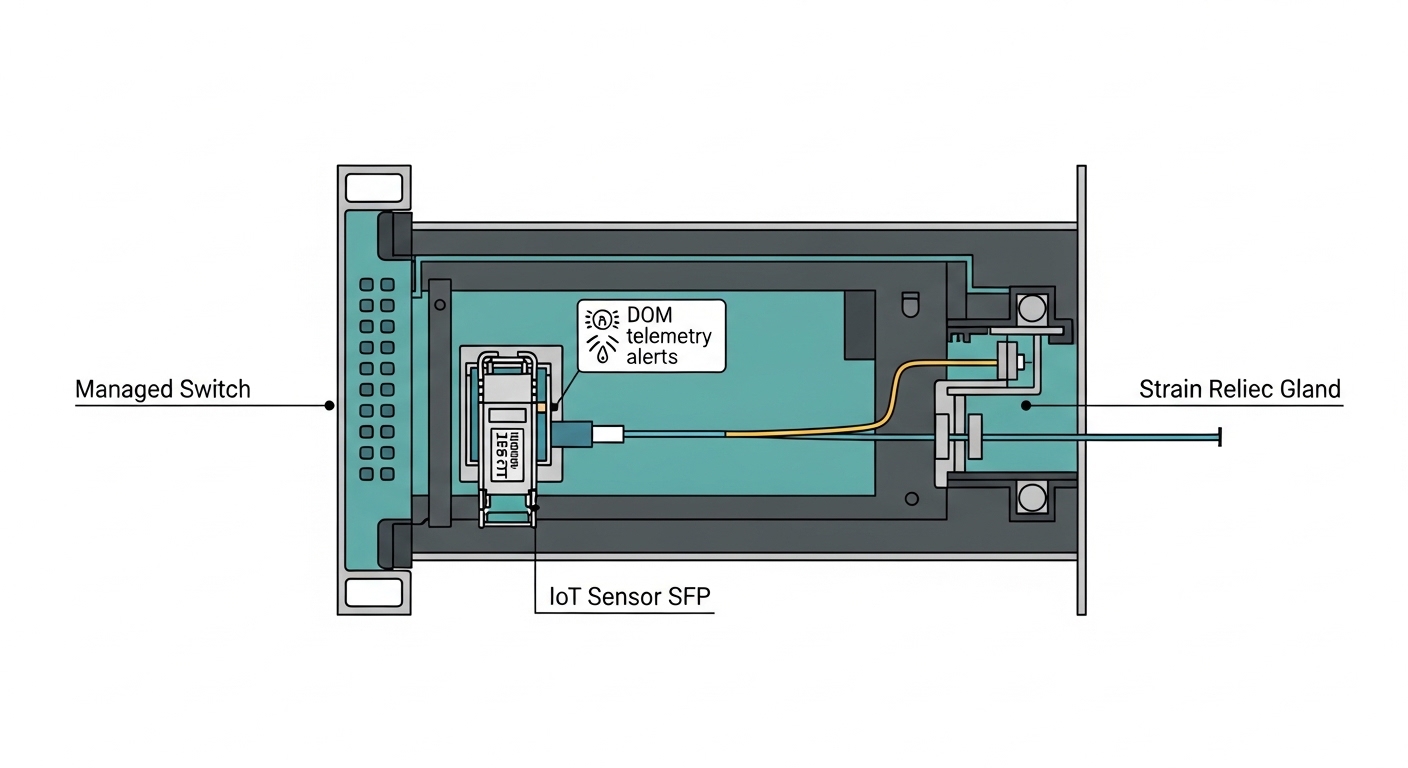

- Field service and hot swap: modules with DOM so technicians could verify Tx/Rx power and temperature immediately after replacement, reducing mean time to repair.

Why ER mattered for the long site

At 18 km, Site C was already beyond the nominal 10 km LR reach, and measured plant losses were higher than the pre-install estimate because of extra patching and one aging splice tray. After switching Site C from LR to ER-class optics, the team observed stable link operation even when the cabinet temperature peaked.

Implementation steps: how to deploy optical modules without surprises

Effective use cases depend on process. The team used a repeatable checklist that field techs could follow at 2 a.m. during maintenance windows, with measured values captured before and after changes.

Validate switch cage and transceiver compatibility

Even when a switch claims “SFP+ supported,” there are real-world caveats: some platforms enforce specific vendor IDs or require DOM parsing support for monitoring features. The team confirmed cage behavior by running a controlled swap in the lab and then verifying link negotiation and error counters on the real platform.
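
The error-counter part of that check can be sketched in a few lines of Python. This is a minimal illustration, not the team's tooling: the counter names (`crc_errors`, `symbol_errors`) are hypothetical placeholders, and how you collect the two snapshots (CLI scrape, SNMP, or an API) depends on your platform. The idea is simply to snapshot counters before and after a soak period under load and fail the module if the watched counters grew.

```python
def counter_deltas(before: dict, after: dict) -> dict:
    """Return how much each counter grew between two snapshots."""
    return {name: after[name] - before.get(name, 0) for name in after}


def soak_passed(before: dict, after: dict,
                watched=("crc_errors", "symbol_errors"),
                allowed: int = 0) -> bool:
    """True if no watched counter grew by more than `allowed` during the soak."""
    deltas = counter_deltas(before, after)
    return all(deltas.get(name, 0) <= allowed for name in watched)


# Hypothetical snapshots taken before and after a soak test under load.
snapshot_before = {"crc_errors": 10, "symbol_errors": 2, "rx_packets": 1_000}
snapshot_after = {"crc_errors": 12, "symbol_errors": 2, "rx_packets": 9_000_000}

print(counter_deltas(snapshot_before, snapshot_after))
print("soak passed:", soak_passed(snapshot_before, snapshot_after))
```

The sketch does not handle counter resets or wraparound; if the interface was cleared or the switch rebooted during the soak, take fresh snapshots instead of trusting a negative delta.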

Compute link budget with real loss numbers

Instead of relying on nominal fiber specs, the team used measured attenuation per span and counted every connector and splice. For each candidate module, they checked that received optical power stayed above the receiver sensitivity across temperature and aging margin. This is where ER modules frequently become the safer option when the edge plant is “messy.”
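
The arithmetic behind that check is simple enough to script. The following is a minimal sketch, assuming per-connector and per-splice loss defaults and a 3 dB reserve that are illustrative choices rather than values from this deployment; the transmit power and sensitivity figures in the example are likewise placeholders that should be replaced with the worst-case numbers from the module datasheet.

```python
from dataclasses import dataclass


@dataclass
class SpanLoss:
    """Loss contributions for one fiber span (all values in dB)."""
    fiber_db: float             # measured fiber attenuation, excluding connectors and splices
    connectors: int             # number of mated connector pairs in the path
    splices: int                # number of splices in the path
    connector_db: float = 0.5   # assumed loss per connector pair
    splice_db: float = 0.1      # assumed loss per splice

    def total_db(self) -> float:
        return (self.fiber_db
                + self.connectors * self.connector_db
                + self.splices * self.splice_db)


def link_margin_db(tx_min_dbm: float, rx_sens_dbm: float,
                   span: SpanLoss, reserve_db: float = 3.0) -> float:
    """Margin left after plant loss and a reserve for aging and temperature."""
    power_budget_db = tx_min_dbm - rx_sens_dbm
    return power_budget_db - span.total_db() - reserve_db


# Illustrative inputs only; use measured loss and datasheet worst-case values.
span = SpanLoss(fiber_db=6.3, connectors=4, splices=3)
margin = link_margin_db(tx_min_dbm=-8.2, rx_sens_dbm=-14.4, span=span)
print(f"plant loss {span.total_db():.1f} dB, margin {margin:.1f} dB")
```

With these illustrative inputs the margin comes out negative, which is the signal to move to a module class with a larger power budget or to reduce plant loss before deploying.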

Set monitoring thresholds using DOM

DOM fields were polled to track Tx bias current, Tx power, and Rx power. The team established thresholds for “warning” versus “action” so they could intervene before BER degradation became visible at the application layer. This was critical at Site B, where optics were fine at install but drifted as the cabinet airflow worsened.
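
One way to express a two-level threshold is as margin above receiver sensitivity. The sketch below assumes warning and action margins of 3 dB and 1.5 dB, which are illustrative values and not the thresholds the team used; the function and enum names are hypothetical.

```python
from enum import Enum


class Status(Enum):
    OK = "ok"
    WARNING = "warning"
    ACTION = "action"


def classify_rx_power(rx_dbm: float, rx_sens_dbm: float,
                      warn_margin_db: float = 3.0,
                      action_margin_db: float = 1.5) -> Status:
    """Classify a DOM Rx power reading by its margin above receiver sensitivity."""
    if action_margin_db >= warn_margin_db:
        raise ValueError("action margin must be smaller than warning margin")
    margin_db = rx_dbm - rx_sens_dbm
    if margin_db <= action_margin_db:
        return Status.ACTION
    if margin_db <= warn_margin_db:
        return Status.WARNING
    return Status.OK


# Illustrative readings against a placeholder sensitivity of -14.4 dBm.
for reading in (-9.0, -12.0, -13.5):
    print(reading, classify_rx_power(reading, rx_sens_dbm=-14.4).value)
```

The same pattern can be applied to Tx power and bias current, each with its own limits taken from the module datasheet.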

Plan spares as part of the use-case design

Edge deployments fail operationally when spares do not match the original optics class. The team stocked modules by use case: one set for intra-edge SR-style links and another set for SMF uplink ER/LR-class links, including the same DOM capability level.

Pro Tip: Many teams check “link up” and stop there. In practice, you should trend DOM Rx power over time after installation; if Rx power is already near the sensitivity edge on a warm day, the module can pass diagnostics yet still fail during high burst traffic due to margin collapse.
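
Trending can be as simple as fitting a straight line to periodic Rx power samples. The sketch below is one possible approach, assuming samples are collected as (day, dBm) pairs and that a linear fit is a reasonable first approximation; the function names and the sample data are hypothetical.

```python
def rx_power_slope(samples):
    """Least-squares slope in dB per day for a list of (day, rx_dbm) samples."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_p = sum(p for _, p in samples) / n
    num = sum((t - mean_t) * (p - mean_p) for t, p in samples)
    den = sum((t - mean_t) ** 2 for t, _ in samples)
    if den == 0:
        raise ValueError("samples must span more than one point in time")
    return num / den


def days_until_floor(samples, floor_dbm):
    """Days until the latest reading reaches floor_dbm at the fitted rate.

    Returns None when the trend is flat or improving.
    """
    slope = rx_power_slope(samples)
    if slope >= 0:
        return None
    latest_dbm = samples[-1][1]
    return max(0.0, (latest_dbm - floor_dbm) / -slope)


# Hypothetical readings: Rx power drifting down by about 0.05 dB per day.
history = [(0, -10.0), (10, -10.5), (20, -11.0)]
print("slope dB/day:", rx_power_slope(history))
print("days to action threshold:", days_until_floor(history, floor_dbm=-13.0))
```

Real drift is rarely linear, and temperature swings add daily noise, so a projection like this is best treated as an early-warning hint and not as a prediction.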

Measured results after rollout: stability, error rates, and repair time

After standardizing module types and deploying a monitoring-first process, the team saw measurable improvements across all sites. The most important metric was not throughput; it was resilience under real traffic patterns and thermal stress.

Operational outcomes

- Packet loss: reduced from intermittent bursts to effectively zero during peak windows.

- Interface errors: CRC and symbol errors dropped to baseline levels on all uplinks after module swaps.

- Link stability: Site C stabilized after moving from LR to ER-class optics, eliminating daily link renegotiations.

- Mean time to repair: dropped from roughly 4 hours to under 90 minutes because technicians could validate optical power and temperature via DOM before swapping again.

In one notable incident, a replacement module was installed but the Rx power reading immediately flagged abnormal attenuation, pointing to a fiber polarity or connector contamination issue. Cleaning and re-termination resolved the problem without another module swap, saving both downtime and spare inventory.

Common mistakes and troubleshooting tips in edge optics use cases

Optical module failures in edge environments usually come from predictable root causes. Below are the most frequent mistakes the team encountered, with practical fixes.

Overshooting LR reach with “estimated” losses

Root cause: LR modules were selected using pre-install fiber estimates, but actual patching and splice losses were higher. Receiver margin was too thin, especially under higher cabinet temperatures.

Solution: redo link budget using measured span loss and connector counts; use ER-class optics when the plant is variable or aging is expected. Validate with Rx power trending.

Assuming all SFP+ optics are electrically interchangeable

Root cause: Some switch platforms are strict about module parameters and DOM behavior; a “link up” state does not guarantee correct monitoring or stable high-speed behavior under load.

Solution: test the exact module model in a maintenance window; confirm error counters and DOM polling. Reference vendor interoperability notes when available.

Neglecting connector cleanliness and fiber polarity

Root cause: Field handling often leaves a thin film of contamination on LC connectors, raising insertion loss and causing low received power and intermittent link behavior. In some cases, polarity is reversed (Tx connected to Tx), which leaves the receiver with little or no light.

Solution: inspect with a microscope if possible; use proper cleaning steps before blaming the optics. After cleaning, re-seat the connectors and re-check Rx power.

Ignoring temperature and airflow constraints

Root cause: Cabinets reached high ambient temperatures (up to 55 °C); modules stayed “within absolute max” on paper, but the optical budget margin effectively shrank during warm periods.

Solution: select transceivers with operating temperature ratings that cover the real cabinet conditions, and improve airflow where feasible. Use DOM temperature data to confirm thermal behavior.
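
A quick headroom check against logged DOM temperatures might look like the following sketch. The 5 °C minimum headroom is an assumed policy value, and the readings are hypothetical. Note that DOM reports the module's internally measured temperature, which generally runs above cabinet ambient, so compare it against the rating as the vendor defines it.

```python
def thermal_headroom(dom_temps_c, rated_max_c, min_headroom_c=5.0):
    """Return (peak, headroom, ok) for a series of DOM temperature readings.

    `ok` is True when the peak reading stays at least `min_headroom_c`
    below the module's rated maximum operating temperature.
    """
    if not dom_temps_c:
        raise ValueError("no temperature readings supplied")
    peak_c = max(dom_temps_c)
    headroom_c = rated_max_c - peak_c
    return peak_c, headroom_c, headroom_c >= min_headroom_c


# Hypothetical readings from a warm afternoon, module rated to 70 °C.
peak, headroom, ok = thermal_headroom([58.0, 63.5, 66.0], rated_max_c=70.0)
print(f"peak {peak} C, headroom {headroom} C, ok={ok}")
```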

Cost and ROI note: what to budget for optics in edge use cases

In typical procurement, third-party SFP+ modules can be 20% to 50% cheaper than OEM equivalents, but the total cost depends on failure rates, monitoring maturity, and warranty terms. For edge sites, the ROI usually comes from reduced truck rolls and faster troubleshooting, not just the unit price.

In this case study, the team allocated a higher upfront budget for ER-class modules and for DOM-capable optics, because the operational cost of downtime was high: each site had a production-critical workload with limited maintenance windows. Over a quarter, the reduced mean time to repair and fewer rework trips outweighed the incremental optics cost, improving TCO even when unit pricing was higher.
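
To make that trade-off concrete, a simple cost model can compare two purchasing plans over the same period. Every figure below is a hypothetical placeholder chosen for illustration, not data from this deployment; the class and field names are assumptions as well.

```python
from dataclasses import dataclass


@dataclass
class OpticsPlan:
    """Cost inputs for one purchasing plan over a fixed period."""
    unit_price: float              # price per module
    units: int                     # modules purchased, including spares
    truck_rolls: int               # site visits expected in the period
    cost_per_truck_roll: float
    downtime_hours: float          # expected outage hours in the period
    cost_per_downtime_hour: float

    def total_cost(self) -> float:
        return (self.unit_price * self.units
                + self.truck_rolls * self.cost_per_truck_roll
                + self.downtime_hours * self.cost_per_downtime_hour)


# Hypothetical comparison: cheaper optics with more rework vs. pricier optics with less.
low_price = OpticsPlan(unit_price=100, units=6, truck_rolls=5,
                       cost_per_truck_roll=800, downtime_hours=20,
                       cost_per_downtime_hour=500)
high_margin = OpticsPlan(unit_price=300, units=6, truck_rolls=2,
                         cost_per_truck_roll=800, downtime_hours=6,
                         cost_per_downtime_hour=500)

print("low-price plan:", low_price.total_cost())
print("high-margin plan:", high_margin.total_cost())
```

In this made-up example the unit price is a small share of the total, which mirrors the article's point that truck rolls and downtime tend to dominate at remote sites. Your own inputs may shift the balance the other way.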

Selection criteria checklist for optical modules in edge computing

When engineers evaluate use cases for optics in edge deployments, they typically weigh the following factors in order:

- Distance and fiber plant loss: measured attenuation, connector count, splice loss, and expected aging margin.

- Wavelength class: 850 nm for short MMF hops; 1310 nm LR for moderate SMF; 1550 nm ER when margin is tight.

- Switch compatibility: confirm that the module’s form factor matches the cage and that the platform supports DOM parsing and alarm behavior.

- DOM support and monitoring needs: Tx/Rx power visibility for fast field diagnostics and predictive maintenance.

- Operating temperature: compare module rating to measured cabinet ambient, not just room specs.

- Vendor lock-in risk: decide whether OEM-only is required for optics alarms, or whether third-party with tested compatibility is acceptable.

- Spare strategy: stock modules by use case (SR vs LR vs ER) and include the same DOM capability level.
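
Several of these criteria can be applied mechanically to narrow a candidate list. The sketch below filters on power budget, temperature rating, and DOM support only; switch compatibility, vendor policy, and spares still need human judgment. The module names and figures are hypothetical, and the 3 dB reserve is an assumed policy value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Module:
    name: str
    power_budget_db: float   # worst-case Tx power minus Rx sensitivity, from the datasheet
    max_temp_c: float        # rated maximum operating temperature
    has_dom: bool


def shortlist(modules, plant_loss_db, peak_temp_c,
              reserve_db=3.0, require_dom=True):
    """Return (name, margin_db) for modules that pass all filters, best margin first."""
    keep = []
    for m in modules:
        margin_db = m.power_budget_db - plant_loss_db - reserve_db
        if margin_db < 0:
            continue  # not enough optical margin for the measured plant
        if m.max_temp_c < peak_temp_c:
            continue  # rating does not cover the measured peak temperature
        if require_dom and not m.has_dom:
            continue  # no DOM means no field diagnostics
        keep.append((m.name, round(margin_db, 2)))
    return sorted(keep, key=lambda item: item[1], reverse=True)


# Hypothetical candidates; real values come from vendor datasheets.
candidates = [
    Module("lr-example", power_budget_db=6.2, max_temp_c=70, has_dom=True),
    Module("er-example", power_budget_db=15.0, max_temp_c=70, has_dom=True),
    Module("er-no-dom", power_budget_db=15.0, max_temp_c=70, has_dom=False),
]
print(shortlist(candidates, plant_loss_db=8.6, peak_temp_c=55))
```

A module with a very large budget on a short, clean span can overload the receiver, so a fuller version would also check the maximum receive power on the datasheet and add an attenuator where needed.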

FAQ: use cases buyers ask before ordering optics

Which optical module use cases fit edge computing best?

Common use cases are 10G uplink backhaul over SMF (LR or ER) and short intra-edge switching over MMF (SR) when OM3/OM4 is present. For edge sites with variable fiber plants, ER-class optics often reduce risk because they preserve optical margin.

Do I need DOM in edge deployments?

If you have limited on-site troubleshooting time, DOM is strongly beneficial because it exposes Tx and Rx power drift and thermal behavior. Without DOM, you may end up swapping modules blindly instead of diagnosing optical budget issues.

How do I verify compatibility with my switch model?

Verify by testing in a maintenance window and checking link stability plus error counters under realistic traffic. Also confirm that the switch reads DOM alarms and that the module meets the expected 10GBASE-R behavior described in IEEE 802.3ae.

What is the main cause of intermittent links at the edge?

Most intermittent behavior comes from optical margin collapse: higher-than-expected insertion loss, connector contamination, or thermal drift. In practice, trending DOM Rx power over warm and peak traffic periods identifies the issue faster than waiting for a full outage.

Should I buy OEM optics or third-party modules?

OEM can reduce compatibility uncertainty, but third-party modules can be cost-effective if you test them against your exact switch platform and your measured link budgets. The decision should include warranty terms and how quickly you can RMA a failed transceiver in remote locations.

What troubleshooting steps should come first on a failed uplink?

Start with DOM readings (Rx power and temperature), then clean and inspect connectors, then confirm fiber polarity and seating. Only after those checks should you suspect the module itself, because contamination and polarity errors can mimic “bad optics.”

In these edge use cases, optical module success came down to measured link budgets, temperature-aware selection, and DOM-enabled field diagnostics. If you want the next step, review your current topology and fiber plant, then build a module shortlist using the checklist above.

Author bio: I have deployed and troubleshot SFP+ and QSFP optics in multi-site edge networks, validating link budgets with DOM telemetry and field measurements. I focus on practical compatibility, operational monitoring, and failure-mode analysis derived from real maintenance events.