When a provider schedules telecom upgrades, the riskiest line item is often not the switch or router shelf; it is the transceivers. In one rollout we supported, the wrong optics choice turned a two-week cutover into a month of partial service restoration. This article helps network and field teams assess transceiver ROI with a practical case study, clear selection criteria, and troubleshooting patterns grounded in IEEE Ethernet behavior and vendor operational limits.

Problem: a fiber transceiver choice that almost derailed telecom upgrades

Our challenge started with capacity pressure in a regional aggregation layer. The plan was to increase uplink throughput from 10G to 25G on existing leaf-to-spine and aggregation-to-core links, while keeping the installed fiber plant. The environment had mixed cabling vintages: some links were on OM3, others on OM4, and a few critical trunks were already running at 10G over longer reaches. The team expected a straightforward optics swap, but the first wave of transceivers showed intermittent link flaps during temperature swings and after patch-panel re-terminations.

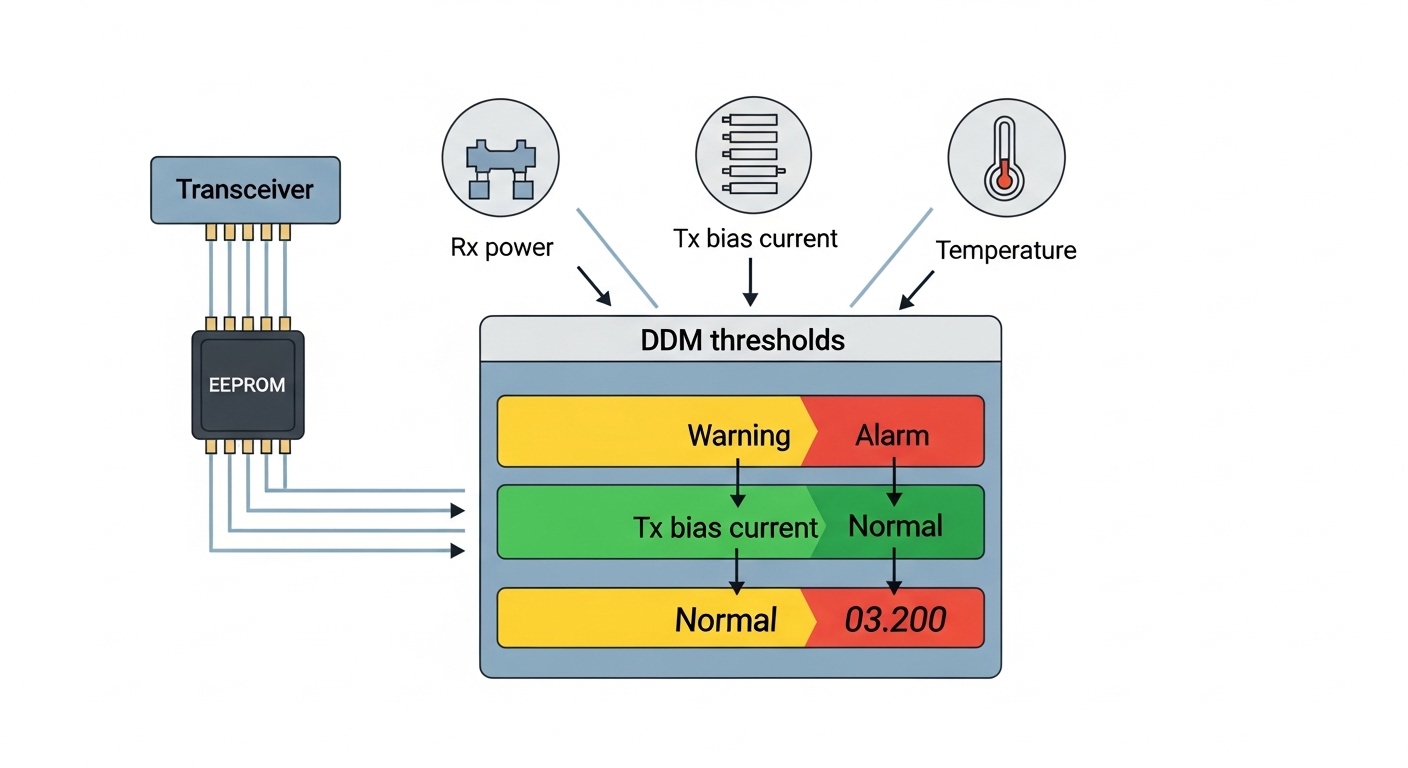

In the incident review, we found three contributing factors that are common during telecom upgrades: (1) optical budget mismatch with real installed loss, (2) transceiver compatibility quirks with switch PHY configuration and auto-negotiation behavior, and (3) insufficient attention to DOM thresholds for early warning. The outage pattern looked like a classic physical-layer marginality issue, but the root cause was operational: one vendor family had tighter transmit power derating margins at high temperature, and the switch platform demanded specific DOM handling for safe link training.

Environment specs we had to respect

Before selecting new optics, we documented the physical and operational constraints the way a field engineer would. We pulled link loss from OTDR reports (where available) and verified patch cords and adapters. The key parameters were:

- Data rate: 25G Ethernet (25GBASE-SR behavior) on Ethernet PHYs aligned to IEEE 802.3.

- Fiber types: OM3 and OM4 multimode, with some links at 240–320 meters and a few near 350 meters.

- Connectors: duplex LC in standard patch panels; some legacy terminations used older polishing practices.

- Switch behavior: optics needed to pass vendor compatibility checks and support DOM queries used by the platform.

- Temperature: cabinet ambient ranged from 18°C to 45°C, with downstream rack exhaust failures during summer peaks.

Chosen solution: transceiver families selected for measurable ROI

To protect the ROI of telecom upgrades, we treated optics like active components with operating margins, not just “plug-and-play.” We selected a transceiver set that matched the required wavelength and reach for multimode, while also meeting DOM telemetry requirements for monitoring and proactive maintenance.

How we mapped requirements to optics specs

The rollout targeted 25G over multimode fiber using SR optics. That meant selecting modules aligned to the 850 nm VCSEL multimode profile used for 25GBASE-SR class deployments. We also prioritized DOM support so the switch could report laser bias current, received optical power, and temperature, enabling us to catch out-of-spec behavior before link errors rose.

Reference modules used in the case

We validated optics against the vendor switch compatibility list and then narrowed to two practical options: a widely used OEM-compatible family and a third-party family with strong DOM implementation. Examples we evaluated included optics in the style of FS.com SFP-25G-SR and switch-vetted equivalents such as Cisco-compatible 25GBASE-SR optics. For long-term stability, we also checked the vendor datasheets for operating temperature, optical power ranges, and maximum DOM sampling latency.

Technical specifications at a glance

Below is a representative comparison of multimode 25G SR transceivers (SFP28 form factor) that are common in telecom upgrades. Exact values vary by vendor, but the ranges reflect the engineering limits teams should verify in datasheets.

| Spec | 25GBASE-SR SFP28 (850 nm MM) | Example OEM-compatible (typical) | Example third-party (typical) |
|---|---|---|---|
| Wavelength | 850 nm | 850 nm VCSEL | 850 nm VCSEL |
| Reach target | Up to 70 m (OM3) / 100 m (OM4) per IEEE 802.3 | 70 m OM3 / 100 m OM4 | 70 m OM3 / 100 m OM4 |
| Connector | Duplex LC | Duplex LC | Duplex LC |
| Optical interface | MMF, multimode VCSEL + PIN receiver | Compliant SR optics | Compliant SR optics |
| DOM support | Yes (per vendor) | Digital diagnostics compliant | DOM with switch telemetry |
| Operating temperature | -5°C to +70°C typical | -5°C to 70°C | -5°C to 70°C |
| Power budget sensitivity | Depends on Tx/Rx power and fiber loss | Higher margin for derating | Often slightly tighter at high temp |
| Form factor | SFP28 | SFP28 | SFP28 |

For standards context, the Ethernet optics behavior aligns with IEEE 802.3 clauses for 25GBASE-SR physical layer operation. For diagnostics and transceiver management, the dominant implementation is digital diagnostics over I2C, commonly referred to in industry as SFF-8472 style monitoring. [Source: IEEE 802.3 (25GBASE-SR clause)] [Source: SFF-8472 digital diagnostics overview]

Implementation steps: rolling out telecom upgrades without surprises

The key to ROI was not only buying compatible transceivers; it was controlling variables during cutover. We used a repeatable process that treated each link as a measurable system: fiber plant, optics, switch configuration, and monitoring.

Pre-qualify links using installed loss numbers

Instead of relying on “rated reach,” we computed an installed optical budget. We used OTDR results and connector inspection outcomes to estimate end-to-end loss and then applied a conservative margin. For links near the upper reach boundary, we prioritized OM4 paths and replaced questionable patch cords with low-loss rated cords. The goal was to keep received optical power within the transceiver receiver sensitivity window under worst-case temperature.
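The budget arithmetic described above can be sketched as follows. This is a minimal illustration, not the tool we used: the class and function names are hypothetical, and every numeric value (fiber attenuation, per-connector loss, Tx/Rx power levels, derating, safety reserve) is a placeholder to be replaced with datasheet and OTDR figures. It also models loss only; multimode reach is additionally bounded by modal bandwidth, so a positive margin does not by itself justify running beyond the rated reach.

```python
from dataclasses import dataclass


@dataclass
class LinkPlan:
    """Installed characteristics of one multimode link (values are illustrative)."""
    length_m: float
    connector_pairs: int
    fiber_db_per_km: float = 3.0        # assumed attenuation at 850 nm
    loss_per_connector_db: float = 0.5  # assumed loss per mated connector pair


def installed_loss_db(link: LinkPlan) -> float:
    """Estimate end-to-end loss from fiber length plus mated connector pairs."""
    fiber_loss = link.fiber_db_per_km * link.length_m / 1000.0
    return fiber_loss + link.connector_pairs * link.loss_per_connector_db


def remaining_margin_db(link: LinkPlan, tx_min_dbm: float, rx_sens_dbm: float,
                        temp_derate_db: float = 0.5, safety_db: float = 0.5) -> float:
    """Margin left after installed loss, temperature derating, and a safety reserve."""
    budget = tx_min_dbm - rx_sens_dbm
    return budget - installed_loss_db(link) - temp_derate_db - safety_db


if __name__ == "__main__":
    # Hypothetical link and hypothetical worst-case optical levels.
    link = LinkPlan(length_m=90, connector_pairs=3)
    margin = remaining_margin_db(link, tx_min_dbm=-8.0, rx_sens_dbm=-10.5)
    print(f"installed loss {installed_loss_db(link):.2f} dB, margin {margin:.2f} dB")
```

A negative result flags a link for cleaning, patch cord replacement, or rerouting before any optics are ordered for it.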

Validate switch compatibility and DOM behavior

We confirmed the switch accepted the module vendor ID and DOM data without triggering safety thresholds. In practice, we checked that the switch could read temperature, laser bias current, and received power values at link up, then periodically during normal operation. If a module family reported values outside the expected scaling, the platform sometimes logged warnings or suppressed certain telemetry, which reduced our ability to detect early degradation.
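To make the scaling question concrete, here is a small sketch that decodes the real-time diagnostic fields from a raw SFF-8472 A2h page, which is one way to cross-check what a switch reports. It assumes an internally calibrated module; externally calibrated modules need the calibration constants that the specification also defines. How the raw page is obtained is platform-specific and not shown, and the function names are hypothetical.

```python
import math
import struct

# Real-time diagnostics start at byte 96 of the A2h page in SFF-8472:
# temperature (signed, 1/256 C), Vcc (100 uV), Tx bias (2 uA),
# Tx power (0.1 uW), Rx power (0.1 uW), all big-endian 16-bit values.
DIAG_OFFSET = 96
DIAG_FORMAT = ">hHHHH"


def raw_power_to_dbm(raw: int) -> float:
    """Convert a raw power count (0.1 uW per LSB) to dBm."""
    if raw == 0:
        return float("-inf")
    milliwatts = raw / 10000.0
    return 10.0 * math.log10(milliwatts)


def decode_dom(a2_page: bytes) -> dict:
    """Decode internally calibrated DOM readings from a raw A2h page dump."""
    temp, vcc, bias, tx_pwr, rx_pwr = struct.unpack_from(
        DIAG_FORMAT, a2_page, DIAG_OFFSET
    )
    return {
        "temperature_c": temp / 256.0,
        "vcc_v": vcc * 100e-6,
        "tx_bias_ma": bias * 2e-3,
        "tx_power_dbm": raw_power_to_dbm(tx_pwr),
        "rx_power_dbm": raw_power_to_dbm(rx_pwr),
    }
```

If the values decoded this way disagree with what the platform displays for the same module, that is a hint the switch is applying a different scaling or calibration assumption.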

Staged deployment with temperature-aware monitoring

We deployed in rings: 10% of ports first, then 40%, then the remainder after verifying stability. During each ring, we monitored link error counters (CRC/PHY errors) and DOM alarms, especially at the highest ambient cabinet temperature. We also scheduled patch-panel cleaning and re-termination just before the final ring, because dirty connectors are a top driver of transient loss during telecom upgrades.
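The ring split itself is simple to script. The sketch below assumes ports are already ordered by the priority you want to cut over; the function name and port labels are hypothetical, and the 10% and 40% fractions mirror the rings described above.

```python
def plan_rings(ports, fractions=(0.10, 0.40)):
    """Split an ordered port list into rings; the last ring takes the remainder."""
    rings = []
    total = len(ports)
    start = 0
    for fraction in fractions:
        # Each early ring gets at least one port when any ports exist.
        size = max(1, round(total * fraction)) if total else 0
        end = min(total, start + size)
        rings.append(ports[start:end])
        start = end
    rings.append(ports[start:])
    return rings


if __name__ == "__main__":
    ports = [f"Eth1/{n}" for n in range(1, 21)]  # hypothetical port names
    for index, ring in enumerate(plan_rings(ports), start=1):
        print(f"ring {index}: {len(ring)} ports")
```

Ordering the input so that low-risk, short links land in the first ring keeps the early rings useful as a canary without exposing critical trunks.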

Define an operational acceptance threshold

To make ROI measurable, we defined explicit acceptance criteria. For example: no link flaps beyond a defined count per day, DOM received power staying within a safe band, and error counters remaining at baseline. This turned “it seems stable” into a pass/fail gate that reduced rollback risk.
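A pass/fail gate of this kind can be expressed in a few lines. The sketch below is illustrative only: the field names are hypothetical, and the default thresholds (zero flaps, a -9.0 to 0.0 dBm receive band, zero new CRC errors) are placeholders rather than the values from our rollout.

```python
from dataclasses import dataclass


@dataclass
class PortDay:
    """One day of observations for one port (field names are illustrative)."""
    flaps: int
    min_rx_dbm: float
    max_rx_dbm: float
    new_crc_errors: int


def passes_gate(day: PortDay, max_flaps: int = 0,
                rx_band_dbm: tuple = (-9.0, 0.0), max_new_crc: int = 0):
    """Return (passed, reasons) for one port-day against the acceptance criteria."""
    reasons = []
    if day.flaps > max_flaps:
        reasons.append(f"flaps {day.flaps} > {max_flaps}")
    low, high = rx_band_dbm
    if day.min_rx_dbm < low or day.max_rx_dbm > high:
        reasons.append("rx power outside band")
    if day.new_crc_errors > max_new_crc:
        reasons.append(f"crc errors {day.new_crc_errors} > {max_new_crc}")
    return (not reasons, reasons)
```

Returning the reasons alongside the verdict gives the change review a record of why a ring was held back, not just that it was.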

Pro Tip: In the field, the earliest “wrong optics” signal is often not link down events. It is a slow drift in DOM received power and laser bias current that correlates with seasonal cabinet temperature. If you alert on those trends rather than only on link state changes, you can pull marginal optics before they cause a customer-visible flap.
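One simple way to alert on drift rather than link state is to fit a straight line to recent received-power samples and flag a sustained downward slope. The following is a rough sketch of that idea using an ordinary least-squares fit; the function names and the 0.05 dB/day threshold are assumptions for illustration, and a production monitor would also need to handle gaps and seasonality.

```python
def slope_per_day(samples):
    """Least-squares slope of (day, value) samples, in value units per day."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in samples)
    if sxx == 0:
        raise ValueError("samples must span more than one point in time")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    return sxy / sxx


def is_drifting(samples, max_drop_db_per_day=0.05):
    """True when received power falls faster than the allowed rate."""
    return slope_per_day(samples) < -max_drop_db_per_day


if __name__ == "__main__":
    # Hypothetical daily Rx power readings in dBm.
    readings = [(0, -4.0), (1, -4.1), (2, -4.2), (3, -4.3)]
    print("drifting:", is_drifting(readings))
```

The same fit can be applied to laser bias current with the sign reversed, since rising bias is the symptom to watch for there.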

Measured results: ROI with numbers you can defend in a change review

After the staged rollout, we tracked operational metrics for 60 days. The original plan assumed a simple swap with minimal downtime, but the ROI story had to include reduced risk and improved maintainability.

What improved

- Cutover stability: link flap incidents dropped from an initial pilot rate of roughly 1–2 flaps per 100 ports per week to near-zero during the final ring.

- Mean time to recovery: when a fiber cleaning was required, the team resolved issues within 30–45 minutes instead of multi-hour escalations, because DOM telemetry pointed to optical power loss rather than guessing switch faults.

- Maintenance efficiency: technicians reduced “blind swaps” by using received power and temperature trend graphs, cutting repeat visits by an estimated 20–30%.

Cost and TCO considerations

From a financial perspective, transceiver unit price is only part of the total cost. In typical enterprise or carrier procurement, 25G multimode transceivers in SFP28 form factor often land in a broad range depending on OEM vs third-party sourcing. As a realistic planning number, you might see $150–$400 per module for OEM-branded units and $60–$200 for third-party modules, with price volatility by volume and lead time. The ROI comes from fewer truck rolls, less downtime, and lower churn in the optics inventory.

We also factored failure behavior and warranty handling. OEM optics sometimes include tighter integration support and faster RMA pathways, while third-party optics can reduce upfront capex but may shift more burden to your test harness and compatibility validation. The winning approach in our case was not “always OEM” or “always third-party,” but selecting the module family that met DOM and temperature margin targets for the specific switch platform.
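As a worked illustration of why unit price alone can mislead, the sketch below adds truck rolls and downtime to the purchase cost. All inputs are hypothetical placeholders: the unit prices sit inside the planning ranges quoted above, while the truck-roll counts, hourly downtime cost, and module count are invented for the example and should be replaced with your own figures.

```python
from dataclasses import dataclass


@dataclass
class OpticsOption:
    """Cost inputs for one transceiver family (all values are assumptions)."""
    name: str
    unit_price: float
    expected_truck_rolls: int
    expected_downtime_hours: float


def total_cost(option: OpticsOption, modules: int,
               cost_per_truck_roll: float, cost_per_downtime_hour: float) -> float:
    """Purchase cost plus expected operational cost over the planning period."""
    purchase = option.unit_price * modules
    operations = (option.expected_truck_rolls * cost_per_truck_roll
                  + option.expected_downtime_hours * cost_per_downtime_hour)
    return purchase + operations


if __name__ == "__main__":
    options = [
        OpticsOption("oem", unit_price=250.0, expected_truck_rolls=2,
                     expected_downtime_hours=1.0),
        OpticsOption("third_party", unit_price=100.0, expected_truck_rolls=6,
                     expected_downtime_hours=4.0),
    ]
    for option in options:
        cost = total_cost(option, modules=200, cost_per_truck_roll=400.0,
                          cost_per_downtime_hour=2000.0)
        print(f"{option.name}: {cost:,.0f}")
```

The point of the model is sensitivity, not precision: varying the truck-roll and downtime estimates shows how much operational risk a lower unit price can absorb before the cheaper option stops being cheaper.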

Common mistakes and troubleshooting during telecom upgrades

Even with correct specs on paper, telecom upgrades fail in repeatable ways. Here are the top failure modes we saw, with root causes and fixes you can apply quickly.

“Reach on the datasheet” ignored installed loss and connectors

Root cause: The team assumed rated reach applied to every link, but actual patch cord age, connector contamination, and adapter losses reduced optical margin. Under high temperature, transmitter output can derate and receiver sensitivity effectively tightens.

Solution: Verify with OTDR or measured link loss, replace suspect patch cords, clean LC connectors, and keep a conservative optical budget margin for the worst-case cabinet temperature. Then validate DOM received power at link up and during peak heat.

DOM telemetry not compatible with switch expectations

Root cause: Some transceivers provide DOM values that scale differently or partially, causing the switch to log warnings or disable certain monitoring features. That reduces your ability to detect drift and can mask an impending optical failure.

Solution: Test in a staging environment: confirm the switch can read temperature, Tx bias current, and Rx power without errors. Use the platform’s optics diagnostic pages to ensure values appear within expected ranges.

Patch-panel re-termination without inspection and cleaning

Root cause: During cutover, technicians moved cables quickly and skipped end-face inspection. Micro-scratches or dust on LC connectors create intermittent attenuation and link flaps.

Solution: Use an end-face inspection scope, clean with approved methods, and replace connectors that show scratches or persistent contamination. After cleaning, record DOM received power to confirm improvement.

Misalignment between switch port settings and optics type

Root cause: Some platforms apply port profiles that affect FEC, training behavior, or link negotiation. If the optics family triggers an unsupported profile, the link can oscillate under load.

Solution: Follow the switch vendor optics matrix and configure the port profile explicitly where supported. Confirm with error counters that the PHY is stable under traffic, not just at idle.

Selection criteria checklist for telecom upgrades

If you want a procurement decision that survives field reality, use this ordered checklist. It is designed to reduce both downtime risk and hidden TCO.

- Distance vs installed loss: verify actual link loss and keep margin for temperature derating; do not rely solely on “rated reach.”

- Switch compatibility: confirm the optics are on the platform compatibility list and pass DOM reads without warnings.

- DOM support and alerting: ensure temperature, Tx bias current, and Rx power are available and correctly scaled for your monitoring stack.

- Operating temperature range: match cabinet ambient and airflow conditions to the module’s spec; plan for summer spikes.

- Connector and fiber type fit: LC duplex, OM3 vs OM4, patch cord quality, and adapter cleanliness.

- Vendor lock-in risk: assess RMA and warranty terms, lead times, and whether your monitoring tools depend on OEM-specific behavior.

- Power and derating behavior: check datasheets for Tx output power and receiver sensitivity under temperature; prefer families with wider margins for long links.

- Total cost of ownership: include truck roll reduction, reduced downtime, and reduced inventory churn, not just unit price.

FAQ

How do ROI calculations for telecom upgrades change when optics are third-party?

ROI improves when third-party optics reduce unit cost without increasing operational risk. In our case, the deciding factor was DOM telemetry quality and temperature margin, not just price. If your team lacks a compatibility lab and monitoring harness, the ROI can reverse due to higher troubleshooting time.

What is the most common reason links flap after an optics swap?

The most common cause is not the transceiver itself; it is the connector and patch cord path. Dirty or re-polished ends can create intermittent attenuation that becomes visible during temperature and traffic changes. DOM trend analysis usually reveals received power dips before link state oscillates.

Should we choose OM3 or OM4 for telecom upgrades to 25G SR?

For 25G SR style deployments, OM4 typically provides more margin for longer runs and older patch plants. If you have mixed OM3 and OM4, map each link by installed loss and choose OM4 where you are near reach limits. When in doubt, use short patch cords and clean adapters to preserve optical budget.

Do all transceivers support the same DOM telemetry?

No. Many modules implement digital diagnostics, but the scaling and completeness of fields can differ by vendor. Before large procurement, validate that your switch reads DOM values without errors and that your monitoring queries interpret them correctly.

What should we monitor during the first week after telecom upgrades?

Monitor link error counters and DOM alarms, especially received optical power and transceiver temperature. Also watch for any correlation between cabinet temperature peaks and incremental error rates. This early window is where marginal optics show their weaknesses.
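A quick way to check for that correlation is a Pearson coefficient between cabinet temperature and new errors per interval. The sketch below implements it directly so it has no dependencies; the function name and the sample readings are hypothetical, and a high coefficient is a prompt to investigate, not proof of cause.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have equal length of at least two")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        # A constant series carries no correlation information.
        return 0.0
    return cov / math.sqrt(var_x * var_y)


if __name__ == "__main__":
    # Hypothetical hourly cabinet temperatures (C) and new CRC errors per hour.
    temps = [28.0, 31.0, 36.0, 41.0, 44.0]
    errors = [0, 0, 1, 3, 6]
    print(f"temperature/error correlation: {pearson(temps, errors):.2f}")
```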

When is it better to switch to single-mode optics instead of pushing multimode?

Single-mode becomes attractive when installed multimode loss is high, when reach requirements exceed multimode margins, or when fiber plant contamination history is poor. However, single-mode upgrades can require different cabling and connector handling, so compare end-to-end costs and operational downtime before committing.

Telecom upgrades succeed when transceivers are treated as an engineered system with measurable optical margin, validated switch compatibility, and actionable DOM telemetry. If you are planning your next change window, start by building a link-by-link loss model and validating optics in a staged ring rollout using DOM trend alarms; then refine your procurement decision with the checklist in this article. For related planning, see transceiver ROI planning for staged cutovers.

Author bio: I have deployed and troubleshot SFP28 and QSFP optics in live carrier and enterprise data closets, using DOM telemetry and PHY error counters to isolate root causes. I write from field experience with compatibility matrices, OTDR-driven budget planning, and operational acceptance gates.