AI traffic is no longer “bursty background load”; it is a sustained, latency-sensitive stream that stresses optics, power, and automation. This reference explains the technology trends affecting optical network design and helps network engineers select transceivers, fiber paths, and operational checks that hold up in production. It targets data center and campus teams planning upgrades for 25G/100G and beyond, where wrong choices can silently raise error rates or power draw.

How AI workloads change optical design constraints

Traditional capacity planning often assumed relatively stable flows and predictable utilization. AI training and inference introduce hot flows that move across leaf-spine fabrics, with microbursts that increase queueing and link utilization variance. That shifts design priorities toward lower-latency optics, tighter jitter tolerance, and faster change control when you add capacity.

On the physical layer, the largest operational change is how often you re-balance links and re-route traffic as models scale. Engineers increasingly deploy automation that selects routes based on measured path telemetry, which raises the importance of optical diagnostics such as DOM (Digital Optical Monitoring), consistent vendor calibration, and predictable transceiver behavior across temperature swings. For standards alignment, the underlying Ethernet PHY behavior follows IEEE 802.3 for optical interfaces, while vendor-specific transceiver compliance details are documented in datasheets (see the IEEE 802.3 overview).

Technology trends in transceiver selection for AI fabrics

AI fabrics commonly choose short-reach optics because the dominant cost is switch port density and cabling complexity, not long-haul fiber. In practice, you will see SR (multimode) and LR (single-mode) variants depending on rack distance and upgrade cadence. For 25G/50G/100G, the module form factor (SFP28, SFP56, QSFP28, QSFP56) and the optics type dictate reach, dispersion tolerance, and power budget.

Engineers also plan for migration paths: many networks start with 10G/25G and later consolidate to 100G as GPU clusters expand. That creates compatibility risk when mixing vendor optics across the same port group, especially if firmware expects consistent DOM behavior and thresholds.

| Spec | Typical AI Data Center Choice | Example modules or notes |
|---|---|---|
| Data rate | 25G to 100G per port; 10G on legacy tiers | 10G SR examples: Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, FS.com SFP-10GSR-85 |
| Wavelength | 850 nm for SR multimode; 1310 nm for LR single-mode | FTLX8571D3BCL (850 nm class SR), QSFP28 SR4 variants (850 nm) |
| Reach (typical) | Short reach: tens of meters to ~300 m depending on optics and OM grade | SR4/SR10 classes; verify with vendor reach tables |
| Connector | LC duplex for SFP-class modules; MPO/MTP for parallel optics such as SR4 | FS.com and Finisar SR SFP transceivers typically use LC |
| Power budget | Lower module power draw is better for dense racks; for the optical budget, verify TX power range and receiver sensitivity | Check datasheets for mW and dBm class limits |
| DOM support | Required for automation and alarms | Most modern QSFP28/QSFP56 provide monitoring tables |
| Operating temp | 0 to 70 °C common; extended industrial variants may be available | Validate against your rack ambient |

Pro Tip: In AI fabrics, treat DOM thresholds as part of your “performance SLO.” If you only validate optics once at install time, you can miss drift from dust contamination, microbends, or aging lasers. DOM alarms combined with consistent threshold tuning can often detect these issues days before BER spikes.

Designing fiber plants for AI growth without rework

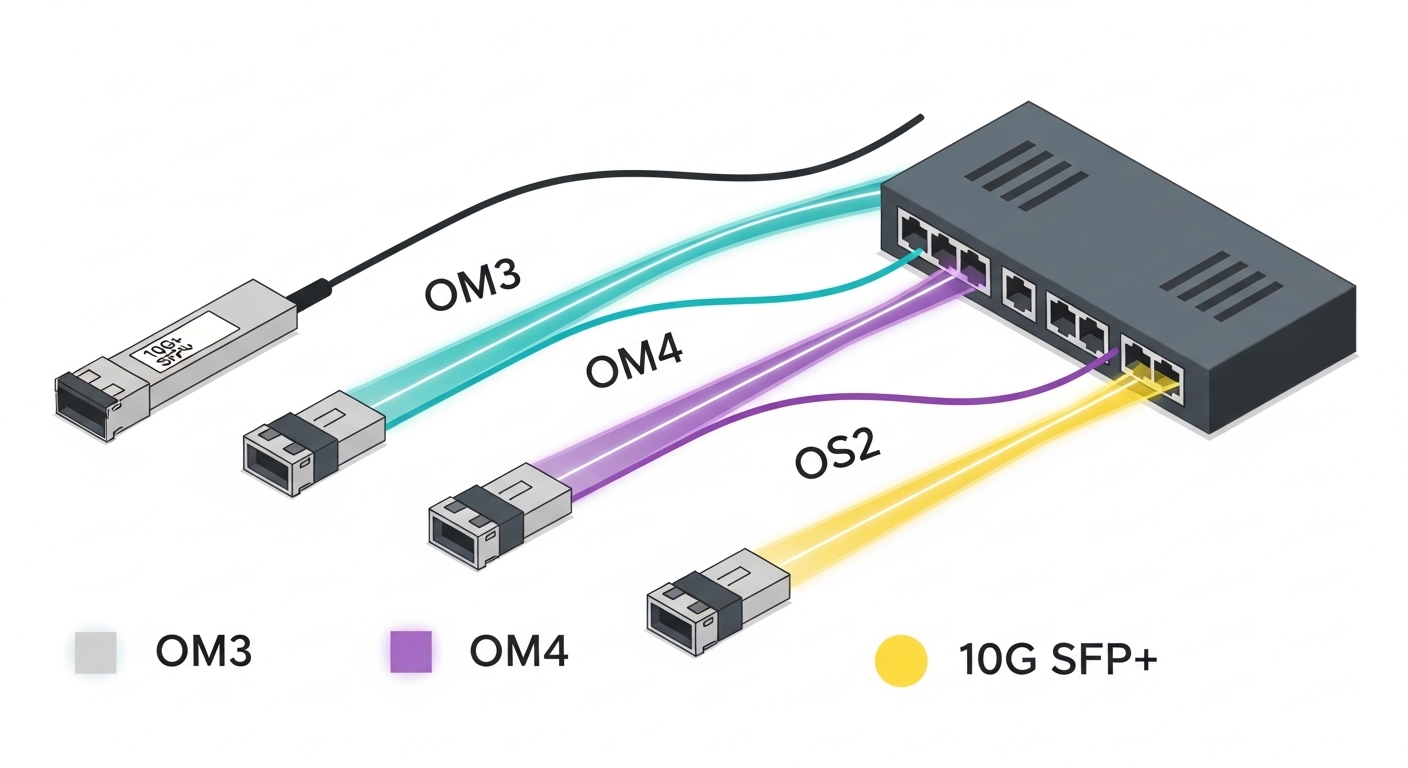

AI deployments expand quickly, so fiber plants must support both current reach and future reroutes. The biggest operational win is to standardize patch panel labeling, maintain slack, and enforce bend-radius rules during install. For multimode SR, ensure the installed fiber grade and patch cord type match the optics assumptions; mixing OM3 and OM4 or using non-compliant patch cords can reduce effective bandwidth and increase error correction load.

When planning reach, use the vendor link budget guidance and include realistic losses: connectors, splices, patch cords, and a margin for connector wear or contamination that accumulates over repeated mating and cleaning cycles. For single-mode LR, verify that you are not exceeding your dispersion and reflectance tolerance, especially if you have older patch panels or unknown connector history. Always validate with an optical power meter and, when available, an OTDR trace for critical trunks.
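The loss accounting described above can be sketched as a small calculation. This is a minimal illustration, not a vendor method: the `LinkPlan` fields and the numbers in the example are hypothetical placeholders, and the model only sums attenuation. It does not cover dispersion or reflectance penalties, so vendor reach tables still take precedence.

```python
from dataclasses import dataclass


@dataclass
class LinkPlan:
    """Inputs for one fiber path. All values must come from datasheets or measurement."""
    length_m: float
    fiber_loss_db_per_km: float   # from the cable datasheet
    connector_pairs: int
    loss_per_connector_db: float  # from the connector spec or a measurement
    splices: int
    loss_per_splice_db: float
    tx_min_dbm: float             # worst-case launch power of the module
    rx_sensitivity_dbm: float     # worst-case receiver sensitivity
    reserved_margin_db: float = 0.0  # allowance for wear, contamination, repairs


def path_loss_db(plan: LinkPlan) -> float:
    """Sum fiber, connector, and splice attenuation for the path."""
    fiber = plan.fiber_loss_db_per_km * plan.length_m / 1000.0
    connectors = plan.connector_pairs * plan.loss_per_connector_db
    splices = plan.splices * plan.loss_per_splice_db
    return fiber + connectors + splices


def remaining_margin_db(plan: LinkPlan) -> float:
    """Power budget minus path loss and reserved margin.

    A negative result means the link does not close on paper.
    """
    budget = plan.tx_min_dbm - plan.rx_sensitivity_dbm
    return budget - path_loss_db(plan) - plan.reserved_margin_db


if __name__ == "__main__":
    # Placeholder numbers for illustration only; substitute your own.
    example = LinkPlan(
        length_m=100, fiber_loss_db_per_km=3.0,
        connector_pairs=2, loss_per_connector_db=0.5,
        splices=0, loss_per_splice_db=0.1,
        tx_min_dbm=-8.0, rx_sensitivity_dbm=-10.0,
        reserved_margin_db=0.5,
    )
    print(f"path loss: {path_loss_db(example):.2f} dB")
    print(f"margin:    {remaining_margin_db(example):.2f} dB")
```

Using worst-case transmit power and receiver sensitivity keeps the estimate conservative; the measured power-meter reading after install is what confirms it.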

Selection checklist engineers use under AI-driven change pressure

Use this ordered checklist when selecting optics and planning the upgrade. It is optimized for teams that must avoid outages while scaling GPU clusters and automation.

- Distance and loss budget: confirm reach for your exact fiber grade and patch cord types; include connector and splice losses.

- Switch compatibility: verify the transceiver is on the switch vendor compatibility list; confirm the port type supports the module form factor.

- Data rate and lane mapping: ensure the module matches the intended PHY mode (e.g., 100G SR4 vs 25G breakout scenarios).

- DOM and telemetry: confirm availability of temperature, bias, optical power, and alarm flags; validate thresholds with your monitoring stack.

- Operating temperature: check max case temperature versus measured rack ambient and airflow constraints.

- Vendor lock-in risk: evaluate OEM vs third-party optics; test interoperability across at least one full maintenance window.

- Failure modes and spares strategy: stock spares by lot or vendor family when possible; define RMA lead times and acceptance criteria.
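Several of the checklist items above are mechanical enough to encode as a pre-purchase screen. The sketch below is one hypothetical way to do that: the `Candidate` and `Requirement` types, their field names, and the default temperature headroom are assumptions made for illustration, and items that need human judgment (lock-in risk, spares strategy) are deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A module under evaluation (hypothetical fields)."""
    form_factor: str        # e.g. "QSFP28"
    rated_reach_m: float    # vendor reach for your exact fiber grade
    on_compat_list: bool    # present on the switch vendor compatibility list
    has_dom: bool
    max_case_temp_c: float


@dataclass
class Requirement:
    """What the target port and path demand (hypothetical fields)."""
    port_form_factor: str
    path_length_m: float
    measured_ambient_c: float
    temp_headroom_c: float = 10.0  # placeholder safety margin, tune per site


def failed_checks(c: Candidate, r: Requirement) -> list[str]:
    """Return the checklist items the candidate fails, in checklist order."""
    failures = []
    if c.rated_reach_m < r.path_length_m:
        failures.append("distance and loss budget")
    if not c.on_compat_list or c.form_factor != r.port_form_factor:
        failures.append("switch compatibility")
    if not c.has_dom:
        failures.append("DOM and telemetry")
    if c.max_case_temp_c < r.measured_ambient_c + r.temp_headroom_c:
        failures.append("operating temperature")
    return failures
```

An empty list only means the module passed the paper screen; it still needs the staged interoperability test before production use.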

Common mistakes and troubleshooting in AI optical rollouts

Mistake 1: Assuming multimode reach from marketing numbers. Root cause is mismatch between fiber grade, patch cord specs, and connector quality. Solution: validate with OTDR and measured optical power at install; require end-to-end link verification after any re-cabling.

Mistake 2: Ignoring DOM alarms until links fully fail. Root cause is monitoring thresholds that do not match your vendor’s calibration behavior. Solution: baseline DOM readings on day 0 and after thermal stabilization; tune alert thresholds to catch drift without alert storms.
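A baseline-and-drift check of the kind described in Mistake 2 might look like the following. This is a sketch under stated assumptions: the function names, the 1.0 dB drift limit, and the three-sample rule are illustrative choices rather than vendor recommendations, and readings are assumed to be Rx power in dBm already collected by your monitoring stack.

```python
from statistics import mean


def baseline_dbm(samples: list[float]) -> float:
    """Average Rx power (dBm) from readings taken after thermal stabilization.

    Averaging in dB is an approximation that is adequate when the
    samples vary by only a few tenths of a dB.
    """
    if not samples:
        raise ValueError("need at least one baseline sample")
    return mean(samples)


def drift_alert(baseline: float, recent: list[float],
                drift_db: float = 1.0, min_consecutive: int = 3) -> bool:
    """Return True when the last `min_consecutive` readings all sit more
    than `drift_db` below the baseline.

    Requiring consecutive samples suppresses one-off dips, which helps
    catch sustained drift without generating alert storms.
    """
    if len(recent) < min_consecutive:
        return False
    tail = recent[-min_consecutive:]
    return all(baseline - reading > drift_db for reading in tail)
```

Comparing against a per-link baseline rather than a fixed absolute threshold sidesteps calibration differences between vendors, because each module is judged against its own day 0 behavior.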

Mistake 3: Mixing optics vendors without a compatibility test. Root cause is subtle differences in digital diagnostics, reset behavior, and PHY handshake timing. Solution: run a controlled interoperability test in a lab or staging fabric, including link flap tolerance and error-rate observation.

Mistake 4: Overlooking patch connector cleaning and microbends. Root cause is dust on LC endfaces and routing that violates minimum bend radius during cable management. Solution: enforce cleaning SOPs, inspect endfaces, and re-route with proper strain relief and bend radius tooling.

Cost and ROI note for optics under technology trends

In many data centers, transceivers represent a meaningful but not dominant slice of total upgrade spend; the bigger cost is labor, downtime risk, and rework from incompatibility. OEM optics often cost more, but they reduce time-to-troubleshoot during incidents because vendor support aligns with known compatibility profiles. Third-party optics can cut unit cost, but you should budget additional engineering time for staged validation and potentially higher RMA frequency depending on supply chain quality.

As a realistic planning range, short-reach 100G optics frequently land in the low hundreds of currency units each in volume, while higher-density single-mode or newer form factors can be higher. TCO improves when you reduce failures and avoid truck rolls: automation-friendly DOM plus consistent monitoring can prevent extended outages and reduce mean time to repair.
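The OEM versus third-party trade-off can be compared with simple arithmetic once you have your own figures. The sketch below is a hypothetical cost model: the `OpticsOption` fields are assumptions about which inputs matter, and it contains no price data, so every value has to come from your quotes and incident history.

```python
from dataclasses import dataclass


@dataclass
class OpticsOption:
    """Cost inputs for one sourcing option (all supplied by the caller)."""
    unit_price: float
    units: int
    validation_hours: float   # staged interoperability testing effort
    hourly_rate: float
    expected_failures: float  # expected incidents over the planning period
    cost_per_failure: float   # labor, downtime, and RMA handling per incident


def total_cost(option: OpticsOption) -> float:
    """Purchase cost plus validation labor plus expected failure cost."""
    purchase = option.unit_price * option.units
    validation = option.validation_hours * option.hourly_rate
    failures = option.expected_failures * option.cost_per_failure
    return purchase + validation + failures


def cheaper_option(a: OpticsOption, b: OpticsOption) -> str:
    """Return 'a', 'b', or 'tie' depending on which total cost is lower."""
    cost_a, cost_b = total_cost(a), total_cost(b)
    if cost_a < cost_b:
        return "a"
    if cost_b < cost_a:
        return "b"
    return "tie"
```

The result is most sensitive to the failure inputs, which are also the hardest to estimate, so it is worth running the comparison with a pessimistic and an optimistic failure count.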

FAQ

Q1: Which technology trends matter most for optical design in AI?

The most impactful trends are automation-driven telemetry (DOM), tighter latency sensitivity from AI traffic patterns, and faster scaling that increases the chance of fiber rework. Engineers respond by standardizing compatibility testing, monitoring thresholds, and link verification procedures.

Q2: Should I standardize on SR or mix SR and LR?

Use SR when rack-to-rack distances fit and the multimode plant is correctly provisioned. Mix SR and LR when you have longer spans or when you must simplify future expansions with single-mode trunks, but validate dispersion and connector reflectance constraints.

Q3: Do third-party transceivers work reliably in production?

They can, but reliability depends on switch compatibility, DOM behavior, and optical quality consistency. Plan a staging test that includes thermal cycling and link stability checks across your maintenance window.

Q4: What measurements should I collect during acceptance testing?

Collect optical power levels, DOM telemetry baselines, and error counters under representative traffic. After any cabling changes, re-check optical power and confirm error-rate stays within your operational thresholds.
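When an acceptance run shows zero errors, the useful question is how long the test must run before that result means anything. The sketch below uses the standard zero-error confidence bound; the function names are hypothetical, and it assumes independent bit errors and a link carrying bits at line rate for the whole window.

```python
import math


def ber_upper_bound_zero_errors(bits: float, confidence: float = 0.95) -> float:
    """Upper bound on BER after observing zero errors in `bits` bits.

    With error probability p per bit, the chance of seeing no errors is
    (1 - p)^bits. Requiring that chance to be at most (1 - confidence)
    gives p <= -ln(1 - confidence) / bits.
    """
    if bits <= 0:
        raise ValueError("bits must be positive")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return -math.log(1.0 - confidence) / bits


def seconds_for_target_ber(target_ber: float, line_rate_bps: float,
                           confidence: float = 0.95) -> float:
    """Error-free test duration needed to claim BER below `target_ber`."""
    if target_ber <= 0 or line_rate_bps <= 0:
        raise ValueError("target_ber and line_rate_bps must be positive")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be between 0 and 1")
    bits_needed = -math.log(1.0 - confidence) / target_ber
    return bits_needed / line_rate_bps
```

At 95% confidence the numerator is roughly 3, which is why this is often quoted as the "rule of three": an error-free run of n bits supports a BER claim of about 3/n.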

Q5: How do I troubleshoot intermittent AI link flaps?

Start with DOM alarms, then inspect connector cleanliness and verify bend radius compliance. If flaps persist, isolate by swapping optics, then swap the patch cord and finally test the fiber path with OTDR.

Q6: How does AI change monitoring requirements?

AI increases the value of early warnings: small optical drifts can correlate with rising retransmissions and latency. Ensure your monitoring stack ingests DOM fields and triggers alerts tied to your SLOs rather than only link-down events.

AI-driven technology trends are forcing optical network design to become more measurable, more automated, and more compatibility-aware. As a next step, map your current cabling inventory and switch port capabilities as the starting point for fiber plant planning for higher density.

Author bio: I am a field-focused network engineer who validates optics using measured link budgets, DOM baselines, and staged interoperability tests. I document deployment lessons from real racks, patch panels, and maintenance windows to help teams scale safely.