If your AI cluster is missing packets, saturating queues, or randomly dropping sessions, the culprit is often the optical layer, not the model code. This article walks engineers and platform leads through a practical technical evaluation of fiber optic transceivers and optics settings for AI/ML infrastructure. You will get a top-8 checklist, a real deployment scenario with numbers, and troubleshooting steps tied to common failure modes. It helps teams planning leaf-spine fabrics, high-density ToR switches, and high-throughput training networks.

Start with a link-budget reality check (not a datasheet)

For a technical evaluation, the first win is confirming that your optical budget supports both reach and margin under worst-case conditions. Vendors quote typical budgets, but your actual margin depends on fiber type, patch panel losses, MPO/MTP polarity handling, splice quality, and connector cleanliness. IEEE 802.3 defines electrical and optical requirements per PHY, but field performance hinges on how the link is built and maintained. In AI/ML fabrics, small link-margin mistakes show up as retransmits, rising FEC correction counts, or link flaps under load.

Use a budget model that includes: transmitter launch power, receiver sensitivity (or minimum received power), fiber attenuation (dB/km at the wavelength), connector loss, splice loss, and any additional loss from patch cords. Then add an operational margin for aging and temperature swings, especially if your transceivers run near the upper end of their temperature spec. For 10G/25G/40G/100G multimode, modal effects and differential mode delay matter; for single-mode, macrobend loss and connector end-face condition dominate.
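The budget model described above can be sketched in a few lines of Python. This is a minimal illustration, not a vendor tool: the class name `LinkBudget`, its field names, and the example numbers are all assumptions made for this sketch, and real inputs should come from the exact datasheet and your measured plant losses.

```python
from dataclasses import dataclass


@dataclass
class LinkBudget:
    """Worst-case optical budget for one link (all inputs are illustrative)."""
    tx_power_min_dbm: float        # minimum launch power from the datasheet
    rx_sensitivity_dbm: float      # minimum received power the receiver tolerates
    fiber_km: float                # installed fiber length
    fiber_loss_db_per_km: float    # attenuation at the operating wavelength
    connectors: int                # mated connector pairs in the path
    connector_loss_db: float       # worst-case loss per mated pair
    splices: int = 0
    splice_loss_db: float = 0.0
    patch_cord_loss_db: float = 0.0     # any additional patch cord loss
    operational_margin_db: float = 0.0  # reserve for aging and temperature

    def total_loss_db(self) -> float:
        """Sum of all passive losses between transmitter and receiver."""
        return (self.fiber_km * self.fiber_loss_db_per_km
                + self.connectors * self.connector_loss_db
                + self.splices * self.splice_loss_db
                + self.patch_cord_loss_db)

    def remaining_margin_db(self) -> float:
        """Margin left after losses and the operational reserve are subtracted."""
        available = self.tx_power_min_dbm - self.rx_sensitivity_dbm
        return available - self.total_loss_db() - self.operational_margin_db


# Hypothetical short multimode link with four mated connector pairs.
example = LinkBudget(
    tx_power_min_dbm=-6.0,
    rx_sensitivity_dbm=-10.0,
    fiber_km=0.1,
    fiber_loss_db_per_km=3.0,
    connectors=4,
    connector_loss_db=0.5,
    operational_margin_db=1.0,
)
print(f"remaining margin: {example.remaining_margin_db():.2f} dB")
```

With these made-up inputs the link keeps roughly 0.7 dB after the 1 dB reserve; a negative result means the link is over budget before any contamination is considered.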

- Best-fit scenario: You are validating new builds where cabling is fresh but patch panels and breakout adapters vary widely.

- Pros: Catches non-obvious budget failures early; reduces RMA churn.

- Cons: Requires disciplined inventory of losses and correct polarity assumptions.

Match wavelength and fiber type to the PHY expectation

AI/ML switches often support multiple optics options, but the PHY mode still expects the right wavelength and fiber type. Common choices are SR (short reach) multimode using 850 nm, LR (long reach) single-mode around 1310 nm, and ER (extended reach) single-mode around 1550 nm. If you mix optics and fibers incorrectly, you may get a link that “comes up” but degrades with temperature swings or after a few re-pulls. A technical evaluation should verify both the transceiver label and the fiber plant classification (OM3, OM4, OS2), not just “multimode vs single-mode” at a high level.

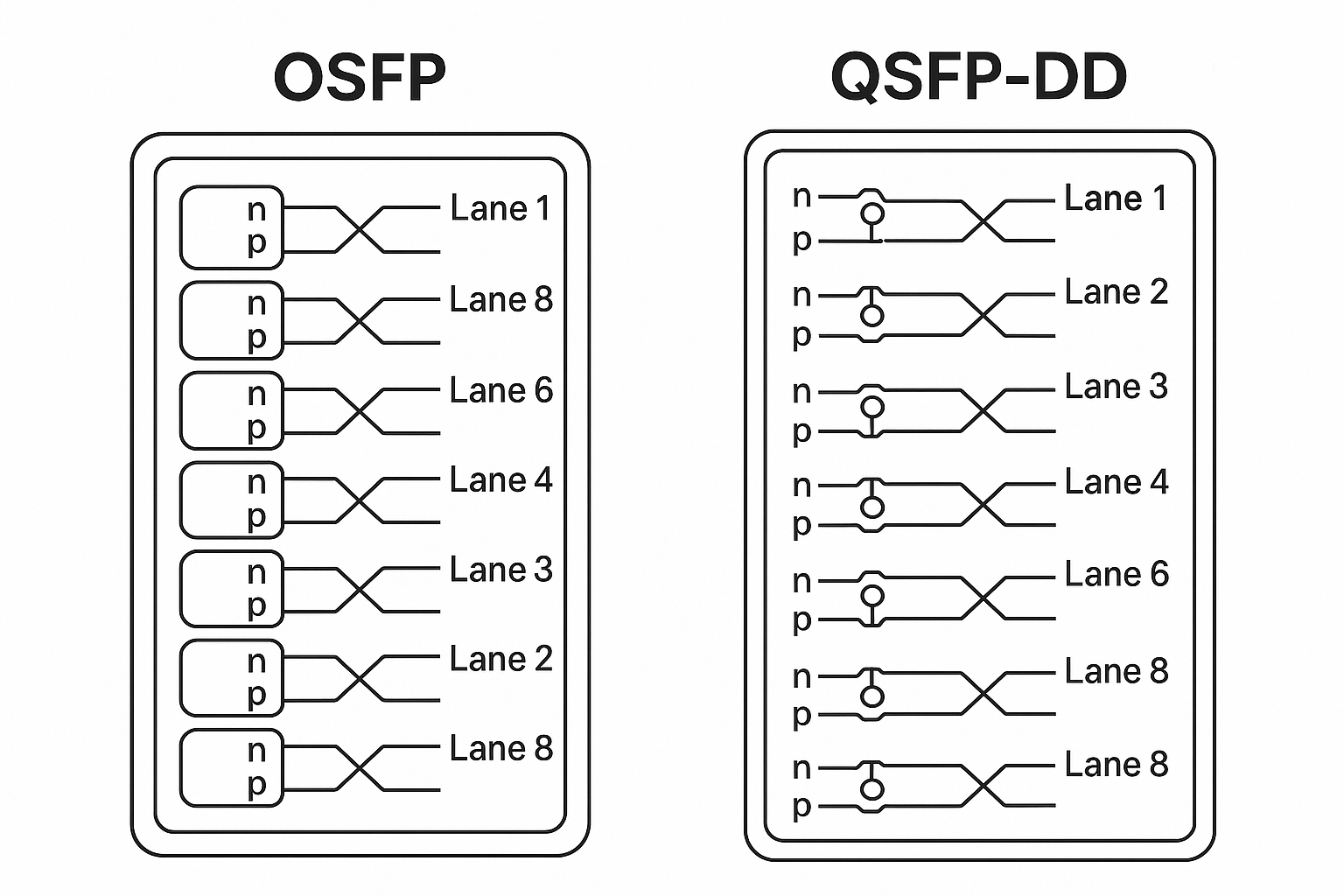

Also check whether the switch is expecting MPO polarity conventions for 100G/200G optics. For example, many 100G SR4 optics require a specific polarity mapping (often termed “Type A” or “Type B” in practice, depending on patch design). Getting this wrong can create symptoms like intermittent link loss or permanent failures after maintenance.
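A polarity check of this kind can be sketched as a small position-mapping exercise. The sketch below is a simplification under stated assumptions: it models only 12-fiber Type A (straight-through) and Type B (reversed) segments, ignores adapter key orientation and Type C cabling, and assumes the SR4 module transmits on positions 1 to 4 and receives on positions 9 to 12 at both ends. The function names are hypothetical; confirm the actual lane layout in your module datasheet.

```python
from typing import Sequence

FIBERS = 12  # positions in a 12-fiber MPO connector


def map_position(position: int, segment_type: str) -> int:
    """Return the far-end position of one fiber through a single segment."""
    if segment_type == "A":      # straight-through: 1->1 ... 12->12
        return position
    if segment_type == "B":      # reversed: 1->12 ... 12->1
        return FIBERS + 1 - position
    raise ValueError(f"unsupported segment type: {segment_type!r}")


def far_end_position(position: int, segments: Sequence[str]) -> int:
    """Follow one fiber position through every segment in the channel."""
    for segment_type in segments:
        position = map_position(position, segment_type)
    return position


def sr4_link_ok(segments: Sequence[str]) -> bool:
    """True if every assumed Tx position (1-4) lands on an Rx position (9-12)."""
    rx_positions = set(range(9, 13))
    return all(far_end_position(tx, segments) in rx_positions
               for tx in range(1, 5))


print(sr4_link_ok(["B"]))            # single reversed trunk
print(sr4_link_ok(["A", "B", "A"]))  # one reversal across three segments
print(sr4_link_ok(["B", "B"]))       # two reversals cancel out
```

Under this model a channel works only when it contains an odd number of reversals, which is why replacing one jumper with a different type during maintenance can break a previously healthy link.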

- Best-fit scenario: You are standardizing optics across multiple switch models and vendors.

- Pros: Prevents silent performance degradation from wrong fiber class.

- Cons: Requires cabling documentation and careful polarity labeling.

Compare power, sensitivity, and optical parameters across candidate optics

Once the link budget is plausible, do a side-by-side technical evaluation of the optical parameters that affect real behavior: transmitter power, receiver sensitivity, and the supported optical power ranges. Different transceivers can have different output power control loops and receiver AGC behavior, which changes how they tolerate marginal links. Field teams often discover that two “SR” modules with the same nominal reach behave differently on the same patch panel.

Below is a practical comparison table for commonly evaluated modules. Values vary by revision and vendor, so treat these as representative ranges and always verify with the exact datasheet for the part number you plan to deploy.

| Module type (examples) | Wavelength | Typical reach | Connector | Data rate | Operating temp | Power / sensitivity focus for evaluation |
|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR, FS.com SFP-10GSR-85, Finisar FTLX8571D3BCL (10G SR class) | 850 nm (MM) | Up to 300 m (OM3) / 400 m (OM4) for 10G | LC | 10G (1x10G) | 0 to 70 °C (typical; confirm) | Receiver sensitivity and Tx launch power range; margin vs patch losses |
| Cisco QSFP-40G-SR4 (40G SR4 class) | 850 nm (MM) | Up to ~100 m (OM3) / ~150 m (OM4) typical for 40G SR4 | MPO/MTP (12-fiber; 4 Tx + 4 Rx lanes) | 40G (4x10G) | 0 to 70 °C (typical; confirm) | Lane balance and equalization behavior; check lane-level optical power |
| Cisco QSFP-100G-SR4, FS.com QSFP100GSR4 | 850 nm (MM) | Up to ~70 m (OM3) / ~100 m (OM4) typical for 100G SR4; varies by spec | MPO/MTP (12-fiber; 4 Tx + 4 Rx lanes) | 100G (4x25G) | 0 to 70 °C (typical; confirm) | Receiver sensitivity per lane; ensure adequate budget across all lanes |
| Single-mode transceivers like Cisco SFP-10G-LR, Finisar FTLX1471D3BCL (10G LR class) | 1310 nm (SM) | Up to ~10 km typical for 10G LR | LC | 10G (1x10G) | -5 to 70 °C (typical; confirm) | Tx power and Rx sensitivity at 1310 nm; connector and splice loss impact |

- Best-fit scenario: You are picking between OEM and third-party optics for the same switch port count and cabling plant.

- Pros: Makes tradeoffs explicit; helps justify spend with measurable risk reduction.

- Cons: Datasheet ranges can be optimistic; you still need field validation.

Validate DOM and telemetry so you can detect drift early

Modern optical modules expose Digital Optical Monitoring (DOM) via I2C, typically providing Tx bias current, Tx power, Rx received power, temperature, and supply voltage. In a technical evaluation, DOM is not just for dashboards; it is your early warning system for aging optics and contamination. If you cannot read DOM reliably or the values are inconsistent across module vendors, you lose the ability to correlate failures with optical health.

Check what your switch actually reports and how it treats alarms. Some platforms enforce thresholds, and others only log events. Also confirm whether the vendor implements the same calibration and scaling for DOM metrics; two modules can show “normal” values but have different absolute calibration accuracy. For AI clusters that run 24/7, even small drifts can push marginal links over the edge after months of thermal cycling.

Pro Tip: In many environments, the earliest “optical problem” signal is a slow drift in DOM Rx power variance between lanes, not a sudden link flap. Scheduling a monthly lane-level Rx power check and correlating it with patch panel cleaning history catches problems before they become training-impacting incidents.
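The lane-level check in the tip above can be approximated with a short script. This is a sketch under assumptions: it presumes you have already exported per-lane Rx power readings from your platform (some report milliwatts, others dBm), and the function names are hypothetical rather than part of any switch API.

```python
import math
from typing import Sequence


def mw_to_dbm(milliwatts: float) -> float:
    """Convert optical power from milliwatts to dBm."""
    if milliwatts <= 0:
        raise ValueError("power must be positive to express in dBm")
    return 10 * math.log10(milliwatts)


def lane_spread_db(rx_dbm: Sequence[float]) -> float:
    """Difference between the strongest and weakest lane, in dB."""
    return max(rx_dbm) - min(rx_dbm)


def spread_drift_db(baseline_dbm: Sequence[float],
                    current_dbm: Sequence[float]) -> float:
    """How much the lane-to-lane spread has grown since the baseline."""
    return lane_spread_db(current_dbm) - lane_spread_db(baseline_dbm)


# Hypothetical readings for one four-lane module, one month apart.
baseline = [-2.0, -2.1, -2.3, -2.2]
current = [-2.0, -2.2, -3.4, -2.3]
print(f"spread grew by {spread_drift_db(baseline, current):.1f} dB")
```

A growing spread with a stable average usually points at one fiber or one connector position rather than the whole module, which narrows down where to inspect and clean.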

- Best-fit scenario: You run long-lived training clusters where link flaps cause expensive downtime.

- Pros: Enables proactive maintenance and cleaner root-cause analysis.

- Cons: Requires consistent telemetry access and alert tuning.

Stress-test thermals and airflow at the port level

In dense AI/ML top-of-rack or spine switches, thermal behavior can be the hidden variable in optical performance. Transceivers have specified operating temperature ranges, but the switch cage airflow pattern can create localized hotspots, especially near exhaust vents or cable bundles. A technical evaluation should include a thermal scan plan: record transceiver temperatures under steady load and after typical maintenance activities like adding patch cords.

If the optics run near the upper temperature bound, laser output control may reduce power, and receiver sensitivity can degrade. That can create a situation where links work during off-peak but fail during full training runs. Use the switch’s DOM temperature readings and chassis telemetry to identify correlation between temperature spikes and link errors.
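One simple way to look for that correlation is a Pearson coefficient over paired samples of module temperature and error-counter growth. The sketch below assumes you have collected both series at the same polling interval; the function name and the sample data are illustrative only, and a high coefficient is a hint to investigate, not proof of cause.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length with at least 2 samples")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat series carries no correlation information
    return covariance / math.sqrt(var_x * var_y)


# Hypothetical samples: DOM temperature (deg C) and new FEC corrections per interval.
temperature_c = [48.0, 52.0, 57.0, 61.0, 66.0, 68.0]
fec_corrections = [10, 14, 35, 80, 210, 340]
print(f"temperature vs FEC corrections: r = {pearson(temperature_c, fec_corrections):.2f}")
```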

- Best-fit scenario: You are deploying in enclosed cabinets or side-by-side racks with constrained airflow.

- Pros: Prevents “works in lab, fails in production” outcomes.

- Cons: Requires time for measurement and may force airflow rework.

Confirm switch compatibility: vendor checks, speed modes, and optics policies

Even when a module is electrically compatible, the switch may apply optics policies: allow-listing, vendor ID checks, speed negotiation constraints, or DOM alarm handling. A technical evaluation should include verifying that the exact module part numbers are supported on the exact switch firmware version you run. This is especially important when you use third-party optics to control costs.

Plan for firmware updates too. Some vendors change optics compatibility behavior between releases. If you run multi-vendor fabrics, validate optics compatibility across every target switch model and port profile, not just one reference device.
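A compatibility matrix of this kind can be kept as plain data and checked before every firmware rollout. The sketch below is illustrative: the switch models, firmware versions, and the `"pass"` status string are hypothetical placeholders, not real product names or a vendor format.

```python
from typing import Dict, List, Sequence, Tuple

# (switch_model, firmware_version, module_part_number) -> qualification status
Matrix = Dict[Tuple[str, str, str], str]


def unqualified(matrix: Matrix,
                fleet: Sequence[Tuple[str, str]],
                parts: Sequence[str]) -> List[Tuple[str, str, str]]:
    """List every (model, firmware, part) combination without a recorded pass."""
    missing = []
    for model, firmware in fleet:
        for part in parts:
            if matrix.get((model, firmware, part)) != "pass":
                missing.append((model, firmware, part))
    return missing


# Hypothetical fleet and qualification records.
matrix: Matrix = {
    ("leaf-model-x", "fw-1.2", "PART-SR4-A"): "pass",
    ("leaf-model-x", "fw-1.3", "PART-SR4-A"): "fail",
    ("spine-model-y", "fw-2.0", "PART-SR4-A"): "pass",
}
fleet = [("leaf-model-x", "fw-1.2"), ("leaf-model-x", "fw-1.3"), ("spine-model-y", "fw-2.0")]
for combo in unqualified(matrix, fleet, ["PART-SR4-A", "PART-SR4-B"]):
    print("needs qualification:", combo)
```

Treating a missing entry the same as a failure keeps untested firmware and part combinations from slipping into production by default.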

- Best-fit scenario: You are standardizing across a fleet with mixed switch generations.

- Pros: Avoids surprise “module not supported” events.

- Cons: Adds qualification workload and documentation overhead.

Measure with real traffic: BER, FEC counters, and link flaps

Optical specs alone do not prove performance under AI workloads. During a technical evaluation, validate with real traffic patterns that match your training and storage flows: sustained east-west traffic, bursty checkpoint writes, and microbursts during job scheduling. Monitor interface counters for CRC errors, FEC corrected/uncorrected events (where supported), and link up/down events. For 100G-class optics, lane-level effects can show up as increased FEC corrections long before you see user-visible failures.

In practice, you want to reproduce the worst-case conditions: maximum throughput for at least 30 to 60 minutes, then run a longer soak test if you can. If you see rising error counters correlated with temperature or specific patch panels, your root cause is likely contamination, a marginal connector, or a budget edge.
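During a soak test, the useful signal is the rate of change of the counters rather than their absolute values. The following sketch computes rates from two counter snapshots and a rough pre-FEC bit error ratio. It rests on assumptions: the `FecSample` structure is hypothetical, and platforms differ in whether they count corrected bits, symbols, or codewords, so the BER helper only applies if your platform exposes a corrected-bit count.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class FecSample:
    """One snapshot of cumulative FEC counters for an interface."""
    timestamp_s: float
    corrected: int
    uncorrected: int


def fec_rates(prev: FecSample, curr: FecSample) -> Tuple[float, float]:
    """Return (corrected per second, uncorrected per second) between snapshots."""
    elapsed = curr.timestamp_s - prev.timestamp_s
    if elapsed <= 0:
        raise ValueError("snapshots must be in increasing time order")
    if curr.corrected < prev.corrected or curr.uncorrected < prev.uncorrected:
        raise ValueError("counter went backwards; it was probably cleared or wrapped")
    return ((curr.corrected - prev.corrected) / elapsed,
            (curr.uncorrected - prev.uncorrected) / elapsed)


def approx_pre_fec_ber(corrected_bits: int, line_rate_bps: float, seconds: float) -> float:
    """Rough pre-FEC BER, assuming the counter really counts corrected bits."""
    if line_rate_bps <= 0 or seconds <= 0:
        raise ValueError("line rate and duration must be positive")
    return corrected_bits / (line_rate_bps * seconds)


start = FecSample(timestamp_s=0.0, corrected=100, uncorrected=0)
end = FecSample(timestamp_s=60.0, corrected=700, uncorrected=0)
print(fec_rates(start, end))
```

Any non-zero uncorrected rate during a soak is worth investigating immediately, while the corrected rate is best compared against the same link's baseline rather than an absolute number.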

- Best-fit scenario: You are validating a new optics batch before scaling to dozens of racks.

- Pros: Converts optics selection into measurable operational confidence.

- Cons: Consumes lab time and requires careful test instrumentation.

Choose economics wisely: OEM vs third-party optics and TCO math

Cost is real, but so is downtime risk. A technical evaluation should include total cost of ownership (TCO): module unit price, expected failure rate, expected labor for swaps, shipping and lead times, and the cost of training downtime. In many data centers, third-party optics can reduce purchase price, but the savings can disappear if you need more qualification cycles or if compatibility causes operational friction.

Typical real-world price ranges (very approximate, varies by volume and region): 10G SR optics often land in the lower tens of dollars each; 25G and 40G SR optics are higher; 100G SR4 modules are meaningfully more expensive. OEM modules usually cost more, but they often come with tighter validation and predictable telemetry behavior. The best ROI strategy is to run a qualification pilot on your exact switch firmware, cabling plant, and thermal environment before ordering at scale.
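The TCO comparison can be made concrete with a small per-module cost model. This is a simplified sketch, not a procurement formula: the function name, its parameters, and every number in the example are assumptions, it treats each failure as requiring a replacement unit, and it leaves out shipping and lead-time costs.

```python
def tco_per_module(unit_price: float,
                   annual_failure_rate: float,
                   years: float,
                   swap_labor_cost: float,
                   downtime_cost_per_failure: float,
                   qualification_cost: float,
                   modules: int) -> float:
    """Expected cost of owning one module over the planning period."""
    if modules <= 0:
        raise ValueError("modules must be positive")
    expected_failures = annual_failure_rate * years
    # Each failure is assumed to cost a replacement unit, labor, and downtime.
    cost_per_failure = unit_price + swap_labor_cost + downtime_cost_per_failure
    # Qualification is a one-time cost spread across the whole order.
    return (unit_price
            + expected_failures * cost_per_failure
            + qualification_cost / modules)


# Entirely hypothetical inputs for two candidate sources of the same module type.
oem = tco_per_module(300.0, 0.01, 5, 50.0, 1000.0, 2000.0, 1000)
third_party = tco_per_module(100.0, 0.02, 5, 50.0, 1000.0, 5000.0, 1000)
print(f"OEM: {oem:.2f}  third-party: {third_party:.2f}")
```

Because the downtime term is multiplied by the failure rate, the comparison is most sensitive to those two inputs, which is the reason to measure them in a pilot rather than guess.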

- Best-fit scenario: You are standardizing optics procurement across multiple training clusters.

- Pros: Prevents budget surprises; reduces operational risk.

- Cons: Needs a disciplined acceptance test process.

Common Mistakes / Troubleshooting

1) Link comes up but errors climb after traffic ramps

Root cause: marginal optical budget or dirty connectors causing receiver to operate near sensitivity limits. Solution: inspect and clean connectors (including MPO/MTP ends), re-check patch panel loss, and compare DOM Rx power before/after cleaning.

2) Intermittent link flaps after maintenance

Root cause: polarity mismatch or damaged MPO keying during re-cabling. Solution: verify MPO polarity mapping against your patch design, label both ends, and replace any suspect jumpers; then run a sustained traffic soak.

3) “Works in one switch, fails in another”

Root cause: optics compatibility policy differences, firmware behavior changes, or port profile constraints. Solution: validate exact part numbers on exact firmware; keep a compatibility matrix and freeze optics policy during major training windows.

4) DOM alarms but no visible link errors

Root cause: DOM threshold mismatch or calibration differences between vendors. Solution: correlate DOM trends with interface counters (CRC/FEC/link events) and tune alert thresholds based on your baseline.
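For the threshold tuning in item 4, one common approach is to derive an alert band from each module type's own baseline instead of vendor defaults. The sketch below uses mean plus or minus k standard deviations; the choice of k = 3 and the function names are assumptions for illustration, and the band should be rebuilt after any cleaning or re-cabling.

```python
import statistics
from typing import Sequence, Tuple


def baseline_band(samples: Sequence[float], k: float = 3.0) -> Tuple[float, float]:
    """Return (low, high) alert limits as mean +/- k sample standard deviations."""
    if len(samples) < 2:
        raise ValueError("need at least two baseline samples")
    mean = statistics.mean(samples)
    spread = statistics.stdev(samples)
    return mean - k * spread, mean + k * spread


def out_of_band(value: float, band: Tuple[float, float]) -> bool:
    """True if a new reading falls outside the baseline band."""
    low, high = band
    return value < low or value > high


# Hypothetical baseline Rx power readings (dBm) for one module type on one switch model.
rx_baseline_dbm = [-2.0, -2.2, -1.8, -2.1, -1.9]
band = baseline_band(rx_baseline_dbm)
print(band, out_of_band(-3.0, band))
```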

FAQ

Q: What does “technical evaluation” mean for optics in AI/ML networks?

It means you validate optical performance with a link-budget model, then confirm it with switch telemetry (DOM) and real traffic counters like CRC and FEC events. The goal is to prove reliability under your thermal and cabling conditions, not just meet nominal reach.

Q: Are 850 nm multimode optics enough for leaf-spine AI fabrics?

Often yes for short reach inside a data hall, especially with OM4 cabling. But you must account for connector/patch losses and ensure lane-level power margin for 40G/100G SR4 optics. If your cabling distances are close to the limit, single-mode may reduce operational risk even if initial cost is higher.

Q: How do I handle DOM and alarms when mixing OEM and third-party modules?

Start by building a baseline: record Rx power, temperature, and error counters for each module type on each switch model. Then set alerts based on observed variance, not just vendor defaults. If a platform rejects modules or logs frequent DOM-related warnings, requalify before scaling.

Q: What troubleshooting steps should I do first during a suspected optical failure?

First, inspect and clean connectors, then verify MPO polarity and jumper integrity. Next, check DOM Rx power trends and interface error counters during traffic ramp. Finally, compare the behavior against the link budget and identify whether the issue correlates with a specific patch panel or rack thermal pattern.

Q: Should I trust module “max reach” claims?

No, treat max reach as an upper bound under ideal conditions. In production, patch panels, splices, and connector cleanliness reduce margin, and temperature can shift laser output control. A technical evaluation should always include margin and a qualification pilot.

Q: Where can I find authoritative standards and reference behavior?

Start with IEEE 802.3 for PHY requirements and vendor datasheets for optical parameters and DOM behavior. For practical guidance, also reference ANSI/TIA cabling standards for connector and fiber handling best practices. The IEEE 802.3 standards portal and the TIA standards portal are good starting points.

Sources: IEEE 802.3 and vendor transceiver datasheets underpin the PHY and optical parameter expectations, while field results depend heavily on cabling quality and telemetry discipline. For deeper cabling practices, see the ANSI/TIA cabling standards.

Summary: a strong technical evaluation combines budget math, correct fiber and polarity, DOM-driven drift detection, thermal validation, and real traffic counter verification. If you want the next step, review AI network monitoring for a practical alerting strategy that ties optical telemetry to training-impacting incidents.

| Rank | Checklist item | Why it matters most | Quick pass/fail signal |
|---|---|---|---|
| 1 | Link-budget reality check | Prevents marginal links from passing “by luck” | Budget margin meets your operational target under worst-case losses |
| 2 | DOM and telemetry validation | Detects drift and aging before downtime | Rx power and lane variance stable; alarms correlate with counters |
| 3 | Thermal and airflow stress | Temperature shifts can push marginal optics over the edge | Temp stays within spec with no rising FEC/CRC events |
| 4 | Wavelength and fiber type match | Wrong fiber class causes reliability surprises | Correct OM/OS mapping and polarity validated end-to-end |
| 5 | Switch compatibility and firmware policies | Avoids hard failures and unstable negotiation | All ports accept optics and remain stable across firmware |
| 6 | Traffic measurement with BER/FEC indicators | Proves reliability under actual AI load patterns | No sustained CRC growth; FEC stays within expected correction rate |
| 7 | Power/sensitivity comparison across candidates | Reveals differences hidden
