Smart City use case: Optical network choices for multi-app traffic

Smart city teams usually start with a simple question: “Will our optical network carry cameras, sensors, and backhaul reliably?” This article walks through a real deployment use case where fiber capacity, transceiver choice, and network reach had to serve multiple applications at once. It helps IT leaders, field engineers, and procurement teams align optics decisions with IEEE 802.3 link requirements and vendor interoperability realities.

Problem / challenge: multi-application traffic strains the optics layer

In a mid-sized smart city rollout, the city planned four concurrent services over the same fiber plant: traffic video analytics, environmental sensing, public Wi-Fi aggregation, and emergency communications trunking. The challenge was not just distance; it was different latency sensitivity, different bandwidth profiles, and uneven installation timelines. Early phases used short-reach links for curbside cameras, but later phases required longer reach between district hubs. The city also had strict constraints on power draw in remote cabinets and limited spares for field swaps.

Environment specs the team had to work with

The optical plant used existing duct routes connecting 18 municipal locations (traffic intersections, parking structures, and utility buildings). Distances ranged from 0.3 km to 22 km, with typical links of 2 to 8 km. Fiber types varied: some routes had OM3/OM4 multimode, while others were single-mode OS2. Hardware included aggregation switches at district hubs (10G and 25G uplinks) and edge switches in cabinets (10G). The team needed a design that could scale without replacing the entire transceiver and patching ecosystem every phase.

From a standards perspective, the optics had to comply with the relevant Ethernet PHY specifications in IEEE 802.3 (10GBASE-SR, 25GBASE-SR, and long-reach variants such as 10GBASE-LR and 10GBASE-ER). In the field, the most practical checklist was link budget plus optics interoperability: transceivers needed to work in the specific switch platform's SFP+, SFP28, or QSFP28 ports and match the expected optical wavelength ranges.

Environment-to-optics mapping: choosing wavelength, reach, and connector types

For this smart city use case, the team used a simple mapping approach: classify each link by distance and fiber type, then select transceivers that minimize operational friction. Short-reach sections used multimode SR optics; longer spans used single-mode LR or ER optics depending on the actual span length and margin. Where the city had to reduce the number of fibers used, they evaluated CWDM/DWDM as an overlay, but only after confirming optical budgets and passive component insertion loss.
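The mapping approach above can be sketched in a few lines of Python. This is only an illustration, not the team's actual tooling: the `Link` type, the `classify_10g` function, and the `derate` safety factor are hypothetical names, and the reach figures are nominal datasheet-style values that should be confirmed against the modules you actually buy.

```python
from dataclasses import dataclass

# Nominal 10G reach figures in metres (assumptions; confirm against datasheets).
MULTIMODE_SR_REACH_M = {"OM3": 300, "OM4": 400}
SINGLE_MODE_REACH_M = [("LR", 10_000), ("ER", 40_000)]


@dataclass(frozen=True)
class Link:
    name: str
    fiber: str        # "OM3", "OM4", or "OS2"
    length_m: float   # span length from as-built records or OTDR


def classify_10g(link: Link, derate: float = 0.8) -> str:
    """Return "SR", "LR", "ER", or "REVIEW" for a 10G link.

    derate is a hypothetical safety factor: a link is only assigned an
    optic class if it fits within derate * nominal reach.
    """
    fiber = link.fiber.upper()
    if fiber in MULTIMODE_SR_REACH_M:
        if link.length_m <= MULTIMODE_SR_REACH_M[fiber] * derate:
            return "SR"
        return "REVIEW"  # too long for SR on this multimode fiber
    if fiber == "OS2":
        for optic, reach_m in SINGLE_MODE_REACH_M:
            if link.length_m <= reach_m * derate:
                return optic
        return "REVIEW"  # beyond ER even before checking the budget
    raise ValueError(f"unknown fiber type: {link.fiber}")
```

A link that comes back as "REVIEW" is a prompt for a proper link budget calculation or a fiber change, not an automatic rejection.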

Technical specifications table (what mattered in selection)

The team maintained a “link profile” spreadsheet with wavelength, reach, typical power budget assumptions, and temperature range. Below is a representative comparison of transceiver classes used in the project (exact performance depends on switch vendor optics compatibility and the specific fiber plant losses).

| Transceiver class (examples) | Data rate | Wavelength | Typical reach | Fiber type | Connector | Supply / power note | Operating temperature |
|---|---|---|---|---|---|---|---|
| SFP-10G-SR (e.g., Cisco SFP-10G-SR, Finisar FTLX8571D3BCL) | 10G | 850 nm | Up to 300 m (OM3) / up to 400 m (OM4) | OM3/OM4 multimode | LC | Low power typical, suited for cabinets | Commercial and industrial variants exist |
| SFP+-10G-LR (e.g., Cisco SFP-10G-LR; third-party part numbers vary by SKU) | 10G | 1310 nm | Up to 10 km | OS2 single-mode | LC | Typically higher power draw than SR; still cabinet-friendly | Industrial options commonly available |
| SFP28-25G-SR (25G-SR) | 25G | 850 nm | Up to 70 m (OM3) / 100 m (OM4) per IEEE 802.3; some vendors quote more | OM3/OM4 multimode | LC | More throughput, tighter multimode reach | Vendor-dependent |
| QSFP28-100G-SR4 (if used in hub uplinks) | 100G | 850 nm (4 lanes) | Typically up to ~100 m (OM4) | OM4 multimode | MPO/MTP | Higher power; requires careful thermal planning | Industrial variants recommended |

How the team handled connector and port constraints

Edge cabinets often had limited rack depth and constrained airflow. The team standardized on LC patching for 10G and 25G where possible to keep field swaps straightforward. For higher-density hub uplinks, they used MPO/MTP only when the cabinet already had trained staff and a clean MPO handling process. This reduced “mystery failures” caused by misseated MPO ferrules, bent pins, or incorrect polarity.

Pro Tip: In real smart city cabinets, the biggest cause of intermittent link drops is often not the transceiver itself but fiber cleanliness and patch polarity during maintenance windows. Build a routine that includes connector inspection with a scope and a fixed polarity labeling scheme before you declare a “bad module.”

Chosen solution and why: a layered optics strategy for one plant

To support the multi-application use case, the team avoided a single “one size fits all” transceiver choice. Instead, they implemented a layered strategy: SR for short links inside districts, LR for mid-span links between cabinets and district hubs, and ER where single-mode spans approached or exceeded LR's nominal 10 km reach. For services requiring higher throughput, they selected 25G SR or 100G SR4 only on segments that were short enough for those optics and had verified OM4 fiber quality.

Specific deployment choices made

1) Traffic video backhaul used 10G or 25G where the edge distances and fiber type allowed predictable latency. Cameras were sensitive to jitter, so the network prioritized stable PHY links over maximizing reach at the expense of margin.

2) Environmental sensors often used 10G uplinks but with oversubscription at aggregation, since sensor bursts were small. This reduced the number of expensive long-reach optics at the edge.

3) Public Wi-Fi aggregation used higher-capacity links at district hubs, and the team reserved 100G optics for the hub-to-core segments where multimode reach was known to be adequate.
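The oversubscription mentioned in item 2 is simple arithmetic: total edge-facing capacity divided by uplink capacity. A minimal sketch follows; the function name and the example port counts are hypothetical and do not come from the project's records.

```python
def oversubscription_ratio(downlink_gbps: list[float], uplink_gbps: float) -> float:
    """Ratio of summed downlink capacity to uplink capacity at an aggregation point."""
    if uplink_gbps <= 0:
        raise ValueError("uplink capacity must be positive")
    return sum(downlink_gbps) / uplink_gbps


# Hypothetical example: eight 10G sensor cabinets behind one 25G hub uplink.
ratio = oversubscription_ratio([10.0] * 8, 25.0)
print(f"oversubscription {ratio:.1f}:1")
```

A ratio well above 1:1 can be acceptable for bursty, low-volume sensor traffic, while video backhaul usually warrants a lower ratio.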

Where the city planned future expansions, the team selected transceivers that supported Digital Optical Monitoring (DOM) so the NOC could trend RX power and laser bias current. This was crucial for predictive maintenance: when a connector degraded, the RX power signature changed before total link failure. The team also enforced a policy: any third-party optics had to come with DOM support and be tested against the exact switch model before bulk rollout.

Implementation steps: from pilot to citywide cutover without surprises

The project ran as a staged rollout so the optics choices could be validated under real field conditions. The team did not just “install and hope”; they measured optical levels, verified link training behavior, and logged alarm thresholds.

Pilot phase (two districts, 6 intersections, 3 cabinets)

- Inventory and labeling: they mapped every span length and fiber type (OM3, OM4, OS2) using as-built records plus OTDR spot checks.

- Connector inspection: before any transceiver insertion, technicians inspected LC end faces and cleaned with approved lint-free methods.

- Baseline measurements: they recorded RX optical power and error counters immediately after installation.

- Compatibility validation: each transceiver SKU was tested in the actual switch port; they documented which vendor modules worked reliably with the platform.

Citywide phase (14 additional sites)

- Standardize spares: they stocked both SR and LR module types sized to the link map, plus cleaning kits and dust caps.

- Margin rules: they treated low optical margin as a maintenance risk, not a “works today” condition. Links with tight budgets were scheduled for cleaning and re-termination first.

- Operational monitoring: NOC dashboards tracked DOM alarms and interface counters. If thresholds started trending, they performed a controlled maintenance window rather than waiting for a full outage.

Measured results: what improved after the optics decisions

After the rollout, the team compared performance to the earlier pilot where optics choices were less standardized. Across the city network, they observed a reduction in “link flap” events and a clearer maintenance signal from DOM telemetry. In the first three months, the standardized SR/LR selection reduced unexpected PHY interruptions by about 60% on edge segments. For long-span links, the team saw fewer hard failures because they enforced margin checks and connector inspection as part of acceptance.

Operationally, the NOC could detect degradation earlier. RX power trending allowed the team to schedule cleaning or patch replacement before user impact. For example, on one district hub, the system flagged a consistent RX power decline pattern; maintenance replaced a compromised patch cord and restored stable link operation without replacing the transceiver.
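One way to flag a "consistent RX power decline" is to fit a straight line to recent DOM samples and alert when the slope is steeper than a chosen limit. The sketch below assumes daily samples of `(day_index, rx_dbm)` pairs collected by the NOC; the function names and the 0.05 dB/day limit are illustrative assumptions, not values from the deployment.

```python
def rx_slope_db_per_day(samples: list[tuple[float, float]]) -> float:
    """Least-squares slope of RX power (dBm) against time (days)."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = sum(day for day, _ in samples) / n
    mean_y = sum(rx for _, rx in samples) / n
    sxx = sum((day - mean_x) ** 2 for day, _ in samples)
    if sxx == 0:
        raise ValueError("samples must span more than one point in time")
    sxy = sum((day - mean_x) * (rx - mean_y) for day, rx in samples)
    return sxy / sxx


def is_degrading(samples: list[tuple[float, float]],
                 max_drop_db_per_day: float = 0.05) -> bool:
    """True when RX power falls faster than the allowed rate (assumed limit)."""
    return rx_slope_db_per_day(samples) < -max_drop_db_per_day
```

A slope-based check is less sensitive to a single noisy reading than a fixed threshold, which helps when cabinet temperature swings move RX power by small amounts.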

Finally, the power and thermal story was manageable. Cabinet power draw remained within plan because SR modules were used on short links, limiting long-reach module utilization at the edge. This mattered because many cabinets used constrained UPS sizing and needed predictable runtime during storms and scheduled outages.

Selection criteria / decision checklist for a smart city use case

When engineers choose optics for a smart city use case, the decision should be systematic. Use this ordered checklist to reduce compatibility and maintenance risk:

- Distance and fiber type: confirm OM3/OM4 versus OS2, then validate span length with OTDR or verified records.

- Data rate requirements: map camera streams and backhaul needs to 10G versus 25G versus 100G uplinks.

- Switch port compatibility: test the exact transceiver SKU in the exact switch model; don’t assume cross-vendor support.

- DOM support: prefer modules with DOM so you can monitor RX power and anticipate failures.

- Operating temperature: select industrial-grade modules for cabinets that can exceed commercial limits in summer sun.

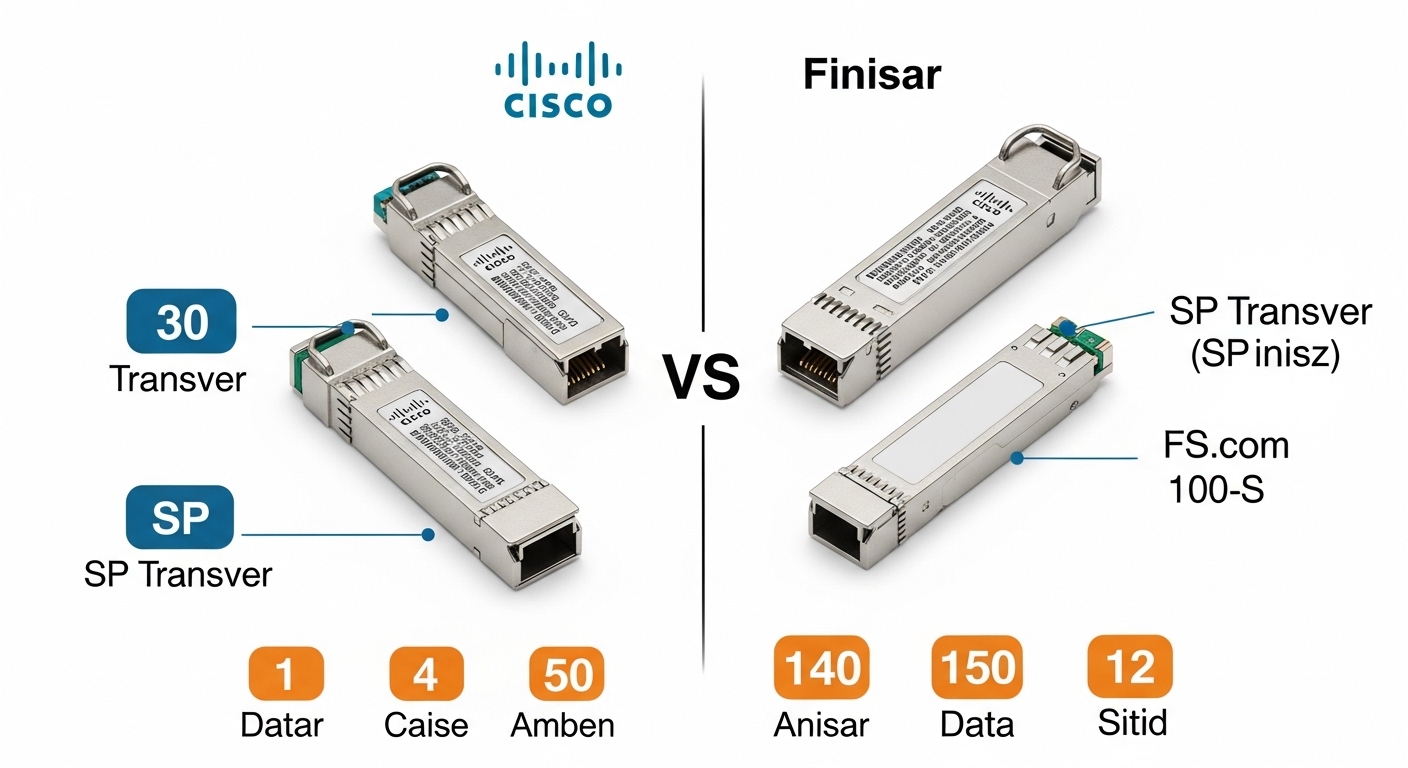

- Vendor lock-in risk: balance OEM optics pricing versus third-party reliability; require a qualification test plan.

- Connector and polarity standardization: enforce LC or MPO handling rules and label polarity to prevent swapped receive/transmit.

- Optical budget margin: treat low margin as a future failure likelihood; plan cleaning and re-termination.

Common pitfalls / troubleshooting tips (field-tested failures)

Even experienced teams hit failure modes when optics are treated as interchangeable. Here are practical pitfalls observed during deployments like this smart city use case, with root causes and fixes.

Link comes up then flaps during camera motion or maintenance

Root cause: dirty connectors or micro-scratches on LC end faces, sometimes triggered by patch movement during cabinet access.

Solution: inspect with a fiber scope, clean both ends, and replace any damaged jumpers. Record RX power before and after cleaning to confirm improvement.

“Works on one port, fails on another” after switch model changes

Root cause: transceiver compatibility differences, including vendor-specific implementation of signal detection thresholds and vendor EEPROM parameters.

Solution: qualify optics against the exact switch and firmware version. Maintain a compatibility matrix for procurement and future swaps.
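A compatibility matrix can be as simple as a lookup keyed by switch model, firmware version, and transceiver SKU. The sketch below is one possible shape for it; the class, its methods, and the placeholder identifiers in the usage note are hypothetical.

```python
from typing import NamedTuple


class PortKey(NamedTuple):
    switch_model: str
    firmware: str
    transceiver_sku: str


class CompatibilityMatrix:
    """Records qualification results per (switch, firmware, SKU) combination."""

    def __init__(self) -> None:
        self._results: dict[PortKey, bool] = {}

    def record(self, switch_model: str, firmware: str,
               transceiver_sku: str, passed: bool) -> None:
        self._results[PortKey(switch_model, firmware, transceiver_sku)] = passed

    def is_qualified(self, switch_model: str, firmware: str,
                     transceiver_sku: str) -> bool:
        # Untested combinations count as not qualified.
        key = PortKey(switch_model, firmware, transceiver_sku)
        return self._results.get(key, False)
```

For example, after `matrix.record("SWITCH-A", "1.0", "SKU-X", True)`, a lookup for the same SKU on firmware "2.0" still returns `False` until that combination is tested, which mirrors the rule of qualifying against the exact switch and firmware version.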

Reduced reach: you selected SR but the span exceeded OM4 reality

Root cause: fiber aging, higher-than-expected attenuation, or patch cord quality differences that erode the link budget.

Solution: verify attenuation with OTDR, then reclassify the link to LR if needed. Increase margin by shortening patch lengths or replacing low-quality jumpers.

MPO polarity mistakes on hub uplinks

Root cause: incorrect polarity mapping in MPO/MTP trunks, causing receive lanes to face transmit lanes.

Solution: follow documented MPO polarity standards used in your rack design, label polarity, and validate with an optical tester or known-good patch map.

Cost and ROI note: realistic pricing and total cost of ownership

In practice, OEM optics typically cost more upfront than third-party modules, but they may reduce downtime risk and compatibility testing time. In many markets, 10G SR modules often fall into a mid-range price band per unit, while long-reach LR modules cost more due to optics complexity and higher-grade lasers. Third-party modules can be cost-effective, but the ROI depends on qualification discipline: if you spend weeks troubleshooting incompatibilities, your labor cost erases the price advantage.

For a smart city use case, TCO also includes spares strategy, cleaning supplies, and truck-roll reduction through predictive monitoring. DOM-equipped optics can reduce reactive replacements by enabling earlier maintenance actions, which is especially valuable when cabinets are in hot outdoor locations or when city permits delay site access.

FAQ

What use case should drive the choice between 10G SR and 10G LR?

Choose SR when your distances are within OM3/OM4 reach and you want simpler, lower-cost deployment at the edge. Choose LR when spans exceed multimode reach or when the plant is OS2 single-mode, especially for district-to-hub links.

How do I validate DOM alarms across different optics vendors?

Use a short qualification window: insert the module into the target switch model and confirm DOM fields populate correctly, then verify RX power readings correlate with known optical attenuation changes. Keep a record of alarm thresholds and behavior per vendor SKU.
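The correlation check can be made concrete by inserting a known loss (for example a fixed attenuator) and comparing the change in reported RX power with the loss you added. This is a minimal sketch; the function name and the 1.0 dB tolerance are assumptions, and DOM accuracy varies by module, so set the tolerance from the module datasheet.

```python
def dom_tracks_attenuation(rx_before_dbm: float, rx_after_dbm: float,
                           inserted_loss_db: float,
                           tolerance_db: float = 1.0) -> bool:
    """True when the DOM-reported RX drop matches the inserted loss within tolerance."""
    observed_drop_db = rx_before_dbm - rx_after_dbm
    return abs(observed_drop_db - inserted_loss_db) <= tolerance_db
```

A module whose reading barely moves after a 5 dB attenuator is inserted is not reporting usable diagnostics on that switch, even if the link itself stays up.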

Can I mix OEM and third-party transceivers in the same switch?

Sometimes yes, but only after compatibility testing. Different vendors can implement EEPROM and diagnostics details differently, which can affect link training and monitoring visibility.

What temperature range matters for smart city cabinets?

Cabinet temperatures can exceed typical indoor commercial optics limits, especially when exposed to sun and poor ventilation. Use industrial-grade optics when the installation environment can approach high ambient levels, and verify the module’s datasheet operating range.

How much optical margin should I plan for?

Plan margin conservatively by accounting for connector insertion loss, patch cord aging, and worst-case cleaning quality. If your design is near the manufacturer maximum reach, treat it as a maintenance risk and plan for re-termination or a move to long-reach optics.
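As a worked illustration, margin is the optical power budget (minimum transmit power minus receiver sensitivity) minus the total path loss. The sketch below uses hypothetical parameter names and placeholder numbers; take real transmit, sensitivity, and loss figures from the module datasheet and your own OTDR results.

```python
def link_margin_db(tx_min_dbm: float, rx_sensitivity_dbm: float,
                   length_km: float, fiber_loss_db_per_km: float,
                   connectors: int, connector_loss_db: float,
                   splices: int = 0, splice_loss_db: float = 0.1) -> float:
    """Remaining margin in dB after fiber, connector, and splice losses."""
    budget_db = tx_min_dbm - rx_sensitivity_dbm
    path_loss_db = (length_km * fiber_loss_db_per_km
                    + connectors * connector_loss_db
                    + splices * splice_loss_db)
    return budget_db - path_loss_db


# Placeholder numbers for illustration only (not from the deployment):
# 6.0 dB budget, 8 km at 0.4 dB/km, four connectors at 0.5 dB each.
margin = link_margin_db(-8.0, -14.0, 8.0, 0.4, connectors=4, connector_loss_db=0.5)
print(f"margin: {margin:.1f} dB")
```

With these placeholder inputs the margin is under 1 dB, which is the kind of link the article recommends treating as a maintenance risk even though it would come up today.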

Where do optical network overlay technologies fit in?

CWDM/DWDM overlays can reduce fiber strand count, but they add insertion loss and complexity. In most city rollouts, start with correct reach and compatibility, then consider wavelength overlays once the physical plant and optics monitoring are stable.

Smart city optics decisions succeed when they follow a repeatable process: measure the plant, qualify transceivers on the exact switch, and monitor DOM for early warning. If you are planning the next phase, review your fiber cleaning and connector inspection workflow to reduce preventable link failures.

Author bio: I have deployed and maintained Ethernet optical links in field cabinets and data center edge environments, focusing on transceiver compatibility, optical budgets, and operational monitoring. My work centers on practical acceptance testing and troubleshooting workflows that reduce downtime in real networks.