Edge computing networks live and die by latency, throughput consistency, and operational simplicity. This article helps engineers and network owners evaluate performance gains when using Direct Attach Copper (DAC) solutions versus fiber optics, focusing on realistic edge deployments, measurable link behaviors, and compatibility constraints. You will get a practical selection checklist, troubleshooting patterns, and a cost and ROI view grounded in vendor datasheets and IEEE guidance. Updated 2026-05-03.

Performance reality check: what DAC changes at the edge

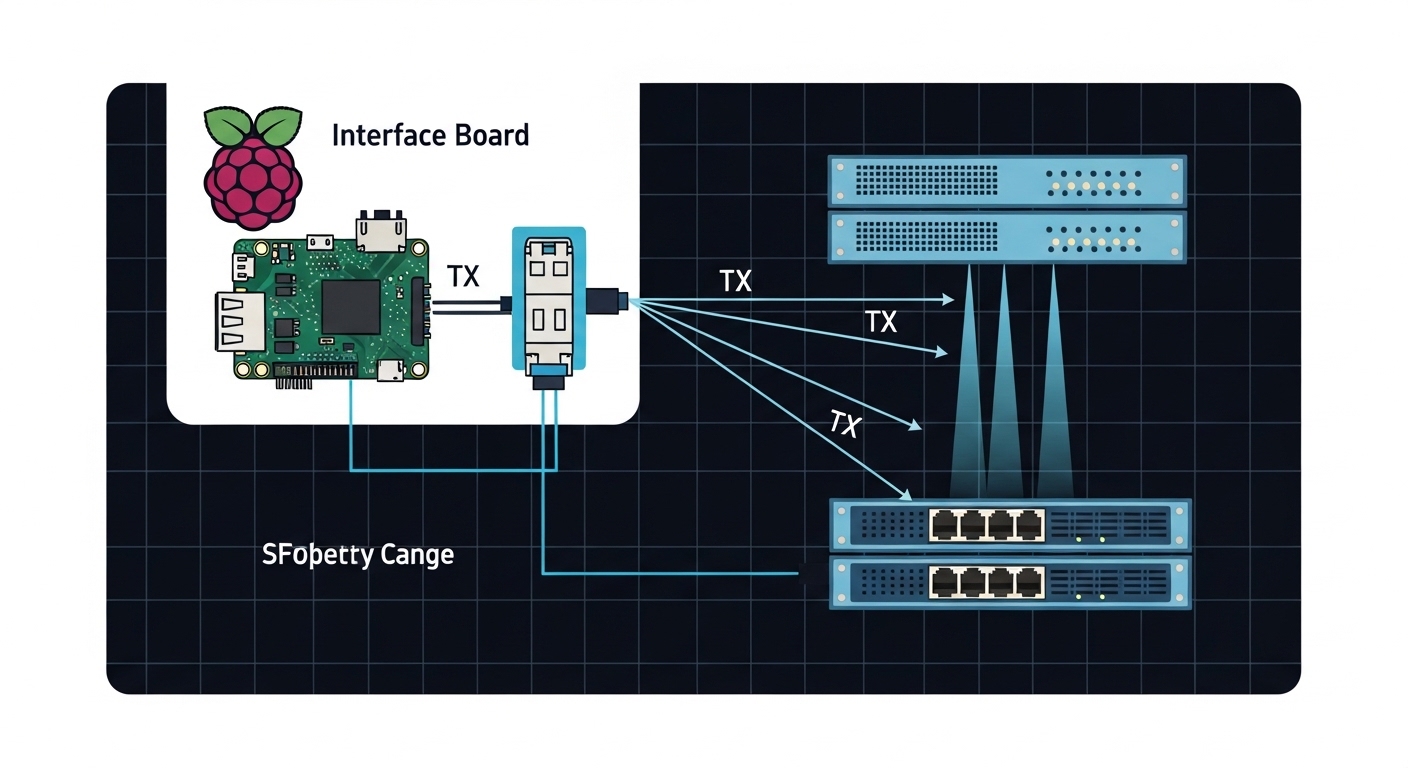

DAC assemblies are twinax copper cables with fixed, module-style connector ends, designed for short-reach interconnects inside racks or between closely spaced edge devices. The performance advantage usually comes from skipping electrical-to-optical conversion and removing fiber handling from the install, which can cut operational time and link bring-up variability. In practice, the biggest wins show up as consistent link initialization, stable signal integrity within the specified reach, and fewer fiber-related failure modes. However, DAC is still bounded by electrical attenuation and EMI susceptibility, so the “performance ceiling” depends heavily on length, port type, and cable quality.

How performance is affected: latency, jitter, and signal integrity

For edge workloads using 25G/50G/100G Ethernet, the key contributors to perceived performance are consistent frame delivery and low retransmission rates rather than raw serialization delay alone. DAC links can maintain a predictable bit error rate (BER) when the installation stays within the rated reach and bend radius, because the transmitter pre-emphasis and receiver equalization are tuned for that electrical channel. IEEE Ethernet PHY behavior is standardized, but the implementation details vary by vendor, which affects sensitivity to marginal cabling. For protocol-level expectations, refer to IEEE 802.3 for Ethernet PHY operation and link behavior.
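
To put the serialization-delay point in numbers, here is a minimal sketch that computes the time needed to clock one frame onto the wire at each rate. The function name is illustrative, and the calculation ignores preamble, inter-frame gap, and FEC overhead.

```python
def serialization_delay_ns(frame_bytes: int, rate_gbps: float) -> float:
    """Time to clock one frame onto the wire, in nanoseconds.

    bits / (Gbit/s) gives nanoseconds directly. Preamble, inter-frame
    gap, and FEC overhead are ignored in this simplified estimate.
    """
    return frame_bytes * 8 / rate_gbps


if __name__ == "__main__":
    for rate in (25, 50, 100):
        delay = serialization_delay_ns(1500, rate)
        print(f"{rate}G: {delay:.0f} ns per 1500-byte frame")
```

The results are all well under a microsecond per frame, which is why error-driven retransmissions, not serialization time, tend to decide how a link feels in practice.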

Pro Tip: Field teams often see “mystery packet loss” that is not a traffic problem. It is frequently a marginal DAC length or an over-tight cable bend that erodes equalization margin, raising error counts until the link renegotiates. Optical power readings only apply to optics; for DAC, focus on link counters (FEC/PCS errors if available) and validate the physical install against the vendor's minimum bend radius guidance.
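
The counter-based approach can be sketched as a comparison of two snapshots taken some time apart. This is only an illustration: the field names (`fec_corrected`, `fec_uncorrected`, `link_flaps`) are hypothetical, real counter names differ by platform, and how you collect them (CLI, SNMP, streaming telemetry) is left out. The "marginal" rule is an assumed starting point, not a vendor threshold.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class CounterSample:
    """One snapshot of per-port counters (hypothetical field names)."""
    timestamp_s: float
    fec_corrected: int
    fec_uncorrected: int
    link_flaps: int


def error_rates(prev: CounterSample, curr: CounterSample) -> Dict[str, float]:
    """Convert two cumulative snapshots into per-second rates and a flap delta."""
    dt = curr.timestamp_s - prev.timestamp_s
    if dt <= 0:
        raise ValueError("samples must be in increasing time order")
    return {
        "fec_corrected_per_s": (curr.fec_corrected - prev.fec_corrected) / dt,
        "fec_uncorrected_per_s": (curr.fec_uncorrected - prev.fec_uncorrected) / dt,
        "link_flaps": float(curr.link_flaps - prev.link_flaps),
    }


def looks_marginal(rates: Dict[str, float]) -> bool:
    """Assumed rule: any uncorrected FEC errors or any flap deserves a physical check."""
    return rates["fec_uncorrected_per_s"] > 0 or rates["link_flaps"] > 0


if __name__ == "__main__":
    before = CounterSample(0.0, 100, 0, 0)
    after = CounterSample(60.0, 700, 3, 1)
    rates = error_rates(before, after)
    print(rates, "marginal:", looks_marginal(rates))
```

A steady corrected-error rate with zero uncorrected errors is often normal on FEC-protected links; the trend over time matters more than a single reading.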

DAC vs optics: head-to-head performance tradeoffs by reach and environment

When engineers debate DAC versus fiber at the edge, the decision usually starts with reach, but the real differentiator is the interaction between the channel and the environment. DAC is best when the physical distance is short and controlled, such as ToR-to-server, ToR-to-aggregation within the same rack row, or a compact edge pod. Optics become more attractive when you need longer reach, higher EMI resilience, or when the edge cabinet layout forces cables to pass near power electronics. The performance goal is the same—stable high-rate Ethernet delivery—but the failure modes and maintenance burden differ.

Technical comparison table: key specs that drive performance outcomes

The table below compares common DAC options with representative short-reach optics. Always confirm that your switch platform supports the exact transceiver part number and that the reach matches your channel budget.

| Parameter | 25G DAC (SFP28) | 100G DAC (QSFP28) | 10G SR Optics (SFP+) | 25G SR Optics (SFP28) |
|---|---|---|---|---|
| Typical data rate | 25G Ethernet | 100G Ethernet | 10G Ethernet | 25G Ethernet |
| Wavelength | N/A (electrical) | N/A (electrical) | 850 nm nominal | 850 nm nominal |
| Reach (typical) | 1 m to 7 m (model dependent) | 1 m to 3 m (model dependent) | Up to 300 m over OM3 | Up to 70 m over OM3, 100 m over OM4 |
| Connector | SFP28 copper plug (twinax integrated) | QSFP28 copper plug (twinax integrated) | LC duplex | LC duplex |
| Power (typical) | Lower than optics; passive cables are typically well under 1 W per end | Varies by vendor; passive cables draw less than active ones | ~0.8 W to 1.5 W | ~1 W to 2 W |
| Operating temperature | Often 0 °C to 70 °C for standard; some -20 °C to 85 °C options | Often 0 °C to 70 °C for standard; some extended options | 0 °C to 70 °C typical; extended variants exist | 0 °C to 70 °C typical; extended variants exist |
| Main performance constraint | Electrical attenuation + installation quality | Channel loss at high symbol rates | Fiber cleanliness and link power budget | Fiber OM grade, cleaning, and power budget |

What to expect in the field

In a properly installed DAC link, the performance is often “set and forget” because there are no optical power budgets to drift and no connector cleanliness issues. In contrast, fiber performance at the edge can be excellent but is sensitive to dust, microbends, and connector handling during maintenance. If your edge site has frequent technician visits or limited cleaning tools, DAC can reduce maintenance variability. If your edge site has high EMI and cable runs near inverters, fiber can be more robust even if the initial setup takes longer.

Selection criteria checklist: choosing DAC for maximum performance

Use the following ordered checklist to avoid performance surprises. It is designed for edge deployments where you may have limited time for rework and must keep service interruptions low.

- Distance and channel class: Measure the actual run length including slack. If your planned run is 4 m but the cable must route around a door hinge, treat it as 5 m for safety. Stay within the vendor-rated reach for the exact part number.

- Switch and port compatibility: Confirm the switch supports DAC in that specific port mode (for example, some platforms have mixed PHY capabilities). Validate with the vendor’s transceiver compatibility list or a supported SFP/QSFP program.

- DOM and management needs: DAC variants may include Digital Optical Monitoring equivalents or may behave differently by platform. If you rely on telemetry for RMA workflows, confirm that the switch exposes the needed counters and identifiers.

- Operating temperature and airflow: Edge cabinets often see hot spots. Verify the transceiver temperature spec and confirm the cabinet cooling meets the manufacturer’s guidance.

- EMI and grounding strategy: If the DAC run passes near high-current equipment, evaluate shielding and cable routing. Fiber can outperform DAC in noisy environments.

- Vendor lock-in risk: Third-party DAC and optics can work, but compatibility is not guaranteed across firmware versions. Budget for qualification testing during a planned maintenance window.

- Serviceability: Ensure you stock the correct length SKU. Mixing lengths or reusing cables across different port speeds can degrade performance or complicate troubleshooting.
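
Parts of the checklist above can be turned into a pre-install check. The sketch below is a simplified illustration: every field name is hypothetical, the inputs come from your own measurements and the vendor datasheet, and only the items that reduce to a clear comparison are covered.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DacPlan:
    """Inputs for one planned DAC link (all field names are illustrative)."""
    measured_path_m: float        # physical path length, including slack
    routing_allowance_m: float    # extra length for detours such as door hinges
    rated_reach_m: float          # vendor-rated reach for the exact part number
    on_compat_list: bool          # part number validated for switch and firmware
    cabinet_max_temp_c: float     # worst-case temperature observed at the port
    module_max_temp_c: float      # upper operating limit from the datasheet
    near_high_current_gear: bool  # route passes close to inverters, drives, busbars


def checklist_findings(plan: DacPlan) -> List[str]:
    """Return a human-readable finding for each checklist item that fails."""
    findings = []
    effective_m = plan.measured_path_m + plan.routing_allowance_m
    if effective_m > plan.rated_reach_m:
        findings.append(
            f"reach: effective run {effective_m:.1f} m exceeds rated {plan.rated_reach_m:.1f} m"
        )
    if not plan.on_compat_list:
        findings.append("compatibility: part number not validated for this switch/firmware")
    if plan.cabinet_max_temp_c >= plan.module_max_temp_c:
        findings.append("thermal: cabinet peak meets or exceeds module operating limit")
    if plan.near_high_current_gear:
        findings.append("emi: route passes near high-current equipment; consider fiber")
    return findings


if __name__ == "__main__":
    plan = DacPlan(4.0, 1.0, 5.0, True, 55.0, 70.0, False)
    print(checklist_findings(plan) or "no findings")
```

An empty result does not replace qualification testing; it only confirms that the plan does not fail the checks that can be expressed as numbers.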

Cost and ROI: when DAC beats optics (and when it does not)

DAC often wins on total cost when your distances fit the rated reach and your team values lower install and maintenance overhead. Typical street prices vary widely by speed, vendor, and whether you buy OEM versus third-party, but engineers commonly see DAC modules in the range of tens to a couple hundred USD per link depending on 25G versus 100G and length. Optics and fiber usually cost more at the module level, and you must add the fiber plant and cleaning/handling process. That said, if you need longer reach or your edge cabinet layout forces long runs, optics can reduce the need for awkward cable routing and replacement loops, which improves ROI through fewer truck rolls.

TCO model engineers actually use

For a realistic TCO comparison, include: module cost, expected failure rate, labor hours for installs, maintenance time, and downtime risk. In edge deployments with limited technical staff, labor hours dominate. For example, if cleaning fiber connectors costs 20 to 40 minutes per incident and a site sees multiple connector issues per year, the recurring labor can outweigh any difference in module price. If your site is stable and you can keep cable routes short and clean, DAC can reduce incident frequency and speed up RMA replacements.
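
A minimal sketch of that TCO model follows, assuming a simple additive cost structure. The parameter names are illustrative, and the figures in the usage example are placeholders rather than measured prices or failure rates; substitute your own.

```python
def link_tco(
    *,
    module_cost: float,
    install_hours: float,
    labor_rate: float,
    years: float,
    annual_failure_rate: float,
    incidents_per_year: float,
    hours_per_incident: float,
    downtime_cost_per_incident: float,
) -> float:
    """Estimated total cost of one link over its service life.

    one-time  : module cost + install labor
    recurring : expected replacements + incident labor + downtime cost
    """
    one_time = module_cost + install_hours * labor_rate
    per_year = (
        annual_failure_rate * module_cost
        + incidents_per_year * (hours_per_incident * labor_rate + downtime_cost_per_incident)
    )
    return one_time + years * per_year


if __name__ == "__main__":
    # Placeholder inputs only; these are not real prices or rates.
    example = link_tco(
        module_cost=100.0,
        install_hours=0.25,
        labor_rate=80.0,
        years=5,
        annual_failure_rate=0.0,
        incidents_per_year=0.5,
        hours_per_incident=0.5,
        downtime_cost_per_incident=0.0,
    )
    print(f"Estimated 5-year cost per link: {example:.2f}")
```

Running the same function for a DAC link and a fiber link with site-specific inputs makes it easy to see whether module price or recurring labor drives the difference.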

Pro Tip: Treat “performance” as an operational metric. If your edge site has a history of connector cleanliness issues or frequent maintenance, DAC can improve performance consistency by removing the fiber hygiene variable, even if fiber has a higher theoretical reach.

Common mistakes and troubleshooting tips that protect performance

Below are common failure modes that directly affect performance. Each includes the root cause and a field-tested remediation approach.

Over-length or “almost within spec” DAC installs

Root cause: Electrical attenuation and channel loss exceed the transceiver equalization margin, especially at higher symbol rates (for example, 100G). Symptoms: Interface flaps, rising error counters, intermittent packet loss, or link renegotiation. Solution: Replace with the next shorter rated DAC length, re-route to reduce effective length, and verify bend radius and cable seating. If the switch provides BER/PCS/FEC counters, correlate errors with temperature and time.

Incompatible transceiver mode or firmware gating

Root cause: Some switch platforms apply per-port PHY capability checks that reject unsupported transceivers or limit features, leading to degraded or non-functional links. Symptoms: “Unsupported optics” alarms, link stays down, or runs at a lower negotiated rate. Solution: Confirm the exact module part number is validated for your switch model and firmware. Update firmware only within a controlled window and retest link stability afterward.

EMI and grounding issues along the DAC run

Root cause: Twinax is not immune to strong electromagnetic interference, particularly when routed near high-current power supplies, inverter drives, or unshielded busbars. Symptoms: Errors increase during specific equipment cycles; performance degrades when motors start. Solution: Reroute cables away from power electronics, improve shielding and grounding, and if needed switch to fiber for that segment. Validate with controlled load tests while monitoring interface counters.

Thermal hotspots inside edge cabinets

Root cause: DAC links can be sensitive to high local temperatures, which shift receiver sensitivity and increase error rates. Symptoms: Performance is stable at install but degrades after hours; alarms correlate with cabinet temperature spikes. Solution: Improve airflow, add blanking panels to block hot-air recirculation, and verify the module temperature spec for your deployment profile. If available, log temperatures and error counters over time.
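
One way to check whether logged errors track temperature is a plain Pearson correlation over paired samples. The sketch below assumes you already have two equal-length series (temperature readings and error counts per interval); the sample values are made up for illustration, and correlation alone does not prove a thermal cause.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two paired series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / math.sqrt(var_x * var_y)


if __name__ == "__main__":
    # Made-up samples: cabinet temperature (deg C) and errors per interval.
    temps_c = [30.0, 35.0, 40.0, 45.0]
    errors = [0.0, 10.0, 20.0, 30.0]
    print(f"correlation: {pearson(temps_c, errors):.2f}")
```

A value near 1 suggests errors rise with temperature and points toward airflow; a value near 0 suggests looking at cable length, seating, or EMI instead.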

Which option should you choose? (DAC or optics) for edge performance

Use this decision matrix to pick the right interconnect strategy based on your constraints and risk tolerance. If you want maximum speed with minimal operational overhead and your distances are short, DAC is usually the pragmatic performance choice. If you need longer reach, higher EMI resilience, or simpler routing across cabinets, optics can deliver more reliable long-term performance.

| Your priority | Best default | Why it wins for performance | Key caveat |
|---|---|---|---|
| Shortest runs inside a rack | DAC | Predictable electrical channel when within rated reach | Install quality and bend radius matter |
| Fast deployment with fewer maintenance steps | DAC | Eliminates fiber cleanliness as a variable | May require re-cabling if layout changes |
| Longer reach between cabinets | Optics | Fiber power budgets support extended distances | Cleaning and handling processes are mandatory |
| High EMI environment | Optics | Optical links avoid electrical coupling noise | Connector hygiene and microbends still impact reliability |
| Strict vendor compatibility requirements | OEM optics or validated DAC | Highest chance of stable link behavior across firmware | Higher unit cost |
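
The matrix can also be written as a small rule function, which is handy when planning many links from a spreadsheet export. This is a deliberately simplified sketch: the function and parameter names are illustrative, the rule order is an assumption, and it leaves out the compatibility and maintenance rows, which need human judgment.

```python
from typing import Tuple


def recommend_interconnect(
    run_m: float,
    dac_rated_reach_m: float,
    high_emi: bool,
    crosses_cabinets: bool,
) -> Tuple[str, str]:
    """Return (choice, reason) for one link, checking hard constraints first."""
    if run_m > dac_rated_reach_m:
        return "optics", "run exceeds the DAC rated reach"
    if high_emi:
        return "optics", "optical links avoid electrical coupling noise"
    if crosses_cabinets:
        return "optics", "inter-cabinet runs favor fiber reach and routing"
    return "dac", "short, controlled run within rated reach"


if __name__ == "__main__":
    for args in [(2.0, 3.0, False, False), (10.0, 3.0, False, False), (2.0, 3.0, True, False)]:
        print(args, "->", recommend_interconnect(*args))
```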

Clear recommendations by reader type

- Edge operators optimizing for operational simplicity: Choose DAC for in-rack and short row distances, and standardize cable routing rules to protect performance.

- Telecom and industrial integrators facing EMI and variable layouts: Prefer optics for any run that crosses near power electronics, and treat fiber cleaning as a formal procedure.

- Budget-focused teams with stable cabinets: DAC can reduce labor and speed up swaps, but qualify the exact part numbers on your switch firmware.

- Teams with high maintenance turnover: DAC can improve performance consistency by reducing connector handling errors, provided your installation workmanship is controlled.

FAQ

Does DAC always deliver better performance than fiber at the edge?

No. DAC can deliver excellent performance for short, controlled runs, but optics can outperform DAC when distance, EMI, or installation constraints exceed DAC’s electrical channel margin. Choose based on reach, environment, and install quality, not only on speed.

What length DAC should I buy for edge deployments?

Buy the length that matches your measured run plus a small margin, but stay within the vendor’s rated reach for that exact SKU. If your route involves detours around doors or cable trays, measure the physical path rather than trusting straight-line distance.
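
As a rough illustration of that rule, the sketch below picks the shortest stocked length that covers the measured path plus margin without exceeding the rated reach. The function name, the stocked lengths, and the margin are all illustrative assumptions.

```python
from typing import Iterable, Optional


def pick_dac_length(
    path_m: float,
    margin_m: float,
    stocked_lengths_m: Iterable[float],
    rated_reach_m: float,
) -> Optional[float]:
    """Shortest stocked length covering path + margin, or None if none fits."""
    needed_m = path_m + margin_m
    candidates = sorted(
        length for length in stocked_lengths_m if needed_m <= length <= rated_reach_m
    )
    return candidates[0] if candidates else None


if __name__ == "__main__":
    stocked = [1.0, 2.0, 3.0, 5.0]  # hypothetical SKU lengths in metres
    print(pick_dac_length(2.2, 0.3, stocked, rated_reach_m=5.0))
    print(pick_dac_length(4.8, 0.5, stocked, rated_reach_m=5.0))
```

A `None` result means no stocked DAC fits within the rated reach, which is the point at which rerouting or optics should be considered.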

Will third-party DAC modules work in enterprise switches?

Often yes, but compatibility depends on switch platform checks and firmware behavior. Validate with a qualification test: bring up the link, monitor error counters for at least a full thermal cycle, and confirm consistent negotiation speed.

How do I troubleshoot DAC performance issues quickly?

Start with physical inspection: seating, cable bends, and routing away from EMI sources. Then check interface counters and events for flaps or FEC/PCS errors, and compare against temperature and load timing. Replace with a known-good shorter DAC to isolate channel margin problems.

Do DAC and optics both support telemetry for monitoring?

Optics commonly support standardized monitoring via transceiver interfaces, while DAC support varies by vendor and platform. If you rely on DOM-like telemetry for proactive maintenance, confirm your switch exposes the needed identifiers and error statistics for the DAC model you plan to deploy.

When should I switch from DAC to optics in the same edge site?

Switch to optics when you must cross cabinet boundaries, exceed DAC reach, or route near high-current power equipment. Also switch if you observe recurring performance degradation tied to environmental changes that suggest EMI or thermal instability.

If you want consistent edge performance, treat DAC as a high-efficiency short-reach tool: it can be excellent within rated length and clean installation practices, and it often reduces operational variability. For the next step, review your switch transceiver requirements and test one pilot rack end-to-end using the same checklist—then scale confidently with measured performance.

For related guidance on interconnect choices, see fiber optic transceiver reach planning.

Author bio: I have deployed 25G and 100G edge fabrics using DAC and short-reach optics across constrained cabinets, with hands-on monitoring of link error counters and thermal impacts. I write field-oriented guidance grounded in IEEE Ethernet behavior and vendor transceiver documentation.