A year ago, a regional mobile operator faced a familiar squeeze: rising traffic, expensive vendor upgrades, and long lead times for capacity expansions. This article helps network and operations leaders evaluate industry trends by comparing Open RAN with traditional telecom solutions using a real deployment case. You will see concrete environment numbers, chosen hardware models, implementation steps, measured results, and the troubleshooting lessons field teams actually learn.

Problem and environment specs: why the operator changed direction

The operator ran a three-sector-per-site LTE/5G network across a mixed-vendor footprint. By Q3, they had 1.8 Tbps aggregate backhaul throughput across the metro, with roughly 220 sites requiring periodic software refreshes and capacity adds. Their challenge was less about radio coverage and more about operational friction: each vendor-specific upgrade window forced coordinated downtime across transport, baseband, and management stacks.

From an engineering standpoint, they needed a rollout path that preserved performance while lowering time-to-deploy. The target architecture included a centralized baseband pool with distributed units at the cell sites, plus a transport plan that could support eCPRI-like flows over deterministic Ethernet. For reference, Open RAN is built around standardized, interoperable interfaces between RAN components, while traditional solutions typically bundle tightly integrated baseband, radio, and proprietary management.

They also had a hard constraint: field teams could not tolerate more than 2 hours per site for acceptance testing and cutover. That requirement strongly influenced which interfaces, test automation, and vendor support processes were acceptable.

Chosen solutions: Open RAN vs traditional telecom, mapped to interfaces

In the evaluation, the operator compared two approaches: (1) Open RAN-style disaggregation, where RU, DU, and centralized components are built to interoperable interfaces; and (2) traditional telecom solutions, where a single vendor provides an integrated radio and baseband stack with proprietary management and tight coupling.

What “Open RAN” meant in this case

Open RAN was treated as an interoperability program, not a marketing label. The team validated that the DU and RU could interoperate through standardized functional splits and supported management workflows. They focused on operational realities: software lifecycle, alarms, telemetry export, and how quickly they could roll back after a failed update.

They used a centralized DU pool approach, with site-level RUs connected via a deterministic transport design. For transport, they selected 25G and 100G fiber links depending on the site cluster size, and they standardized optics so the optics layer would not become a hidden dependency.

Traditional telecom in the same environment

Traditional solutions were proven in the field and reduced early integration risk. However, they came with vendor-specific constraints: upgrade timing was dictated by the vendor release train, and adding capacity often required a full bundle replacement rather than a targeted scaling action. The operator also reported higher internal effort to reconcile alarms and metrics across vendor-specific monitoring systems.

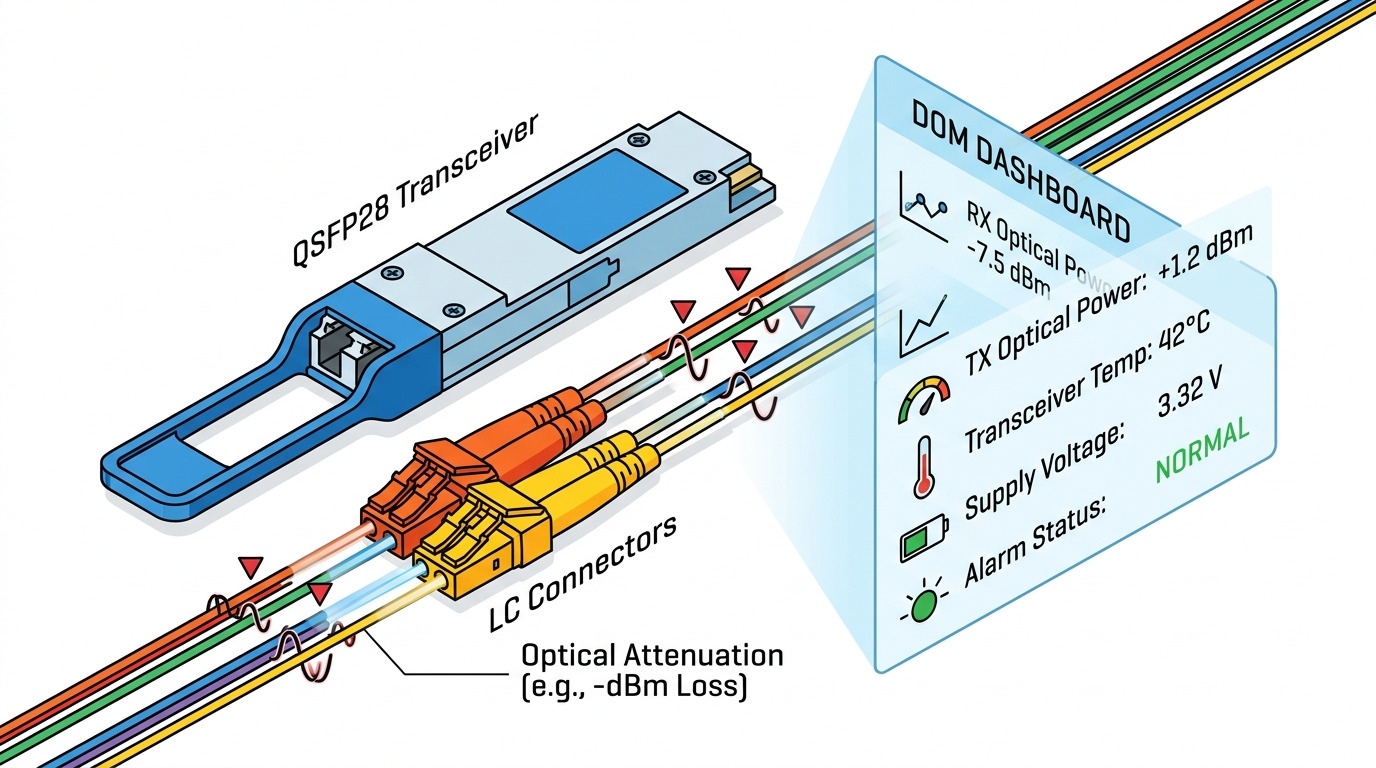

Key specs used to prevent optics and transport from blocking RAN progress

Because interface interoperability depends on stable transport, the operator standardized optics for DU transport and fronthaul/backhaul segments. They used common pluggable modules that were validated in lab and then hardened in the field.

| Category | Example module | Data rate | Wavelength | Reach | Connector | DOM / Monitoring | Operating temp |
|---|---|---|---|---|---|---|---|
| 10G SR (short reach) | Cisco SFP-10G-SR | 10G | 850 nm | Up to 300 m (OM3) | LC | Yes (DOM) | 0 to 70 °C (commercial) |
| 10G SR (cost-optimized) | FS.com SFP-10GSR-85 | 10G | 850 nm | Up to 300 m (OM3) | LC | Yes (DOM) | Industrial variants available |
| 25G SR (higher aggregation) | Finisar FTLF8536P4BCL (SFP28) | 25G | 850 nm | Up to 70 m (OM3), 100 m (OM4) | LC | Yes (DOM) | 0 to 70 °C class typical |

The practical takeaway: RAN architecture decisions can stall if transport optics are incompatible with switch DOM expectations or if temperature margins are too tight for enclosure airflow. The operator avoided that by validating DOM behavior and confirming link budgets against their fiber plant.
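A link-budget check is simple arithmetic, and writing it down makes the "confirm link budgets against the fiber plant" step repeatable per route. The sketch below is illustrative: the field names, the default connector and splice losses, and the example values are assumptions, not figures from the operator. It only covers optical power; for multimode SR links, the reach limits in the table above (set by modal bandwidth) still apply even when the power margin looks healthy.

```python
from dataclasses import dataclass


@dataclass
class OpticalLink:
    """Worst-case inputs for a simple optical power-budget check (illustrative)."""
    tx_power_min_dbm: float       # minimum transmitter launch power
    rx_sensitivity_dbm: float     # receiver sensitivity at the target bit error rate
    fiber_length_km: float
    fiber_loss_db_per_km: float   # attenuation at the operating wavelength
    connector_pairs: int
    loss_per_connector_db: float = 0.5   # assumed loss per mated connector pair
    splices: int = 0
    loss_per_splice_db: float = 0.1      # assumed loss per fusion splice


def link_margin_db(link: OpticalLink) -> float:
    """Return the remaining power margin in dB; negative means out of budget."""
    budget = link.tx_power_min_dbm - link.rx_sensitivity_dbm
    loss = (link.fiber_length_km * link.fiber_loss_db_per_km
            + link.connector_pairs * link.loss_per_connector_db
            + link.splices * link.loss_per_splice_db)
    return budget - loss


# Hypothetical 300 m multimode run with two connector pairs.
link = OpticalLink(tx_power_min_dbm=-5.0, rx_sensitivity_dbm=-11.0,
                   fiber_length_km=0.3, fiber_loss_db_per_km=3.5, connector_pairs=2)
print(f"margin: {link_margin_db(link):.2f} dB")
```

Teams typically also require a minimum positive margin (for aging and dirty connectors) rather than accepting anything above zero.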

Implementation steps: how the rollout was executed without downtime surprises

The operator ran the program like a field engineering project: controlled pilots, strict acceptance criteria, and measured cutover windows. They did not “big bang” the network. They started with a small cluster where fiber routes and transport types were consistent.

Build a compatibility matrix before hardware arrived

They created a matrix covering DU/RU interoperability, transport switch models, optic DOM support, and management integration points. For each interface, they documented expected alarm mappings and telemetry fields. This reduced the number of “unknown unknowns” during pilot testing.
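One way to keep such a matrix honest is to enumerate every planned combination and flag the ones with no lab result yet. The sketch below assumes a flat DU/RU/switch/optic product; the component identifiers are hypothetical placeholders (only the two optic SKUs come from the table above).

```python
from itertools import product

# Hypothetical component identifiers; substitute the SKUs and firmware versions
# from your own bill of materials.
DUS = ["du-vendor-a-fw2.1"]
RUS = ["ru-vendor-b-fw3.0", "ru-vendor-c-fw1.4"]
SWITCHES = ["switch-x-fw9.3"]
OPTICS = ["SFP-10G-SR", "SFP-10GSR-85"]

# Lab results: (DU, RU, switch, optic) -> notes on alarm mapping and telemetry fields.
validated = {
    ("du-vendor-a-fw2.1", "ru-vendor-b-fw3.0", "switch-x-fw9.3", "SFP-10G-SR"):
        "alarms mapped; DOM temperature/bias exported",
}


def untested_combinations(validated_map):
    """Yield every planned DU/RU/switch/optic combination without a lab result."""
    for combo in product(DUS, RUS, SWITCHES, OPTICS):
        if combo not in validated_map:
            yield combo


for combo in untested_combinations(validated):
    print("needs lab validation:", combo)
```

A full Cartesian product grows quickly; in practice a team would prune it to the combinations actually planned for each site cluster.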

Validate deterministic transport behavior end-to-end

They tested link stability under load, including jitter sensitivity and queue behavior. In practice, that meant verifying that congestion control did not introduce unacceptable latency variance. They also ensured that the optics used in the lab matched the production bill of materials so no DOM mismatch occurred at cutover.
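"Unacceptable latency variance" needs a number to test against. A minimal sketch, assuming latency samples in microseconds from a load test and using the p99 minus p50 spread as the jitter metric; both the metric choice and any threshold are assumptions, since the real tolerance depends on the functional split and the DU/RU vendors' requirements.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty sample list (0 < pct <= 100)."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def latency_variance_ok(samples_us, max_spread_us):
    """Compare the p99 - p50 latency spread against a jitter budget.
    Returns (within_budget, spread_us)."""
    spread = percentile(samples_us, 99) - percentile(samples_us, 50)
    return spread <= max_spread_us, spread


# Hypothetical one-way latency samples (microseconds) captured under load.
samples = [52, 54, 53, 55, 51, 80, 53, 54, 52, 56]
print(latency_variance_ok(samples, max_spread_us=20))
```

Using a tail spread rather than the mean catches the bursty queueing delay that averages hide.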

Automate provisioning and rollback playbooks

Open RAN deployments can be faster when automation is mature. The team prepared scripts and runbooks to standardize configuration pushes and to perform rollback within the 2-hour acceptance window. They also verified that monitoring dashboards could correlate RAN alarms to transport events.
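The core of a rollback playbook is "apply in order, undo in reverse." The sketch below is hypothetical: the step actions stand in for whatever automation the team actually calls, and the 30-minute rollback reserve is an assumed value chosen so the rollback itself still fits inside the 2-hour window.

```python
import time
from typing import Callable, List, Tuple

# (step name, apply action, rollback action); actions are placeholders for real
# configuration pushes made through your own automation tooling.
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_change(steps: List[Step], health_check: Callable[[], bool],
               window_s: float = 2 * 3600, rollback_reserve_s: float = 30 * 60,
               clock: Callable[[], float] = time.monotonic) -> bool:
    """Apply steps in order. If a step raises, the health check fails, or the
    deadline (window minus rollback reserve) passes, undo the completed steps
    in reverse order. Returns True only if every step applied and passed."""
    deadline = clock() + window_s - rollback_reserve_s
    completed = []
    try:
        for name, apply, rollback in steps:
            apply()
            completed.append(rollback)
            if not health_check():
                raise RuntimeError(f"health check failed after {name}")
            if clock() > deadline:
                raise TimeoutError(f"cutover deadline passed after {name}")
        return True
    except Exception:
        # A step whose apply() raised is assumed to have made no change.
        for rollback in reversed(completed):
            rollback()
        return False
```

Injecting the clock keeps the function testable and lets a dry run simulate a slow cutover without waiting two hours.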

Run pilots with measurable acceptance thresholds

They defined acceptance thresholds that included throughput, handover success rates, and alarm-free uptime during a staged traffic ramp. Traditional deployments were evaluated too, but the acceptance strategy emphasized operational metrics: time to recover, time to identify root cause, and how often manual intervention was required.
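Encoding the acceptance thresholds as data keeps every pilot site judged by the same rules. The threshold values and metric names below are illustrative assumptions; the article does not publish the operator's actual numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceThresholds:
    # Illustrative values only; set these from your own acceptance plan.
    min_dl_throughput_mbps: float = 300.0
    min_handover_success_pct: float = 98.0
    min_alarm_free_minutes: int = 60


def evaluate_pilot(metrics: dict, t: AcceptanceThresholds) -> list:
    """Return the names of failed criteria; an empty list means the site passes."""
    failures = []
    if metrics["dl_throughput_mbps"] < t.min_dl_throughput_mbps:
        failures.append("throughput")
    if metrics["handover_success_pct"] < t.min_handover_success_pct:
        failures.append("handover success rate")
    if metrics["alarm_free_minutes"] < t.min_alarm_free_minutes:
        failures.append("alarm-free uptime")
    return failures


site_metrics = {"dl_throughput_mbps": 350, "handover_success_pct": 97.5,
                "alarm_free_minutes": 90}
print(evaluate_pilot(site_metrics, AcceptanceThresholds()))
```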

Pro Tip: In mixed-vendor networks, the fastest way to derail an Open RAN rollout is not radio incompatibility, but optics and switch DOM behavior. Before any RAN trial, validate that the exact transceiver SKUs report temperature and laser bias current within expected ranges and that the switch does not disable ports due to DOM policy differences.
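The tip above translates into a per-SKU range check on collected DOM readings. In practice the ranges would come from each module's own warning thresholds (exposed through SFF-8472 digital diagnostics on SFP/SFP+ modules) or its datasheet; the numbers and field names below are placeholders, not datasheet figures. Missing fields are flagged too, because a module that does not report a value is itself a DOM compatibility problem.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    low: float
    high: float

    def contains(self, value: float) -> bool:
        return self.low <= value <= self.high


# Placeholder ranges per SKU; replace with each module's DOM warning thresholds.
EXPECTED = {
    "SFP-10G-SR": {
        "temperature_c": Range(0.0, 70.0),
        "tx_bias_ma": Range(2.0, 12.0),
        "rx_power_dbm": Range(-10.0, -1.0),
    },
}


def check_dom(sku: str, readings: dict) -> list:
    """Return (field, value) pairs that are out of range; value is None when
    the module did not report the field at all."""
    problems = []
    for field, rng in EXPECTED[sku].items():
        value = readings.get(field)
        if value is None:
            problems.append((field, None))
        elif not rng.contains(value):
            problems.append((field, value))
    return problems


print(check_dom("SFP-10G-SR", {"temperature_c": 45.0, "tx_bias_ma": 13.5}))
```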

Measured results: what changed after switching architecture

After the pilot expanded to a larger portion of the metro cluster, the operator tracked operational and performance outcomes for both approaches. The results were mixed but clear enough to justify the migration path.

Time-to-deploy and upgrade cadence

With the disaggregated approach, the operator reduced planned maintenance effort. They reported a 35% reduction in time spent on coordinated upgrade activities because components could be staged and validated independently. Rollback frequency also improved: fewer “full-stack” failures meant less blast radius when something went wrong.

Capacity scaling behavior

Traditional bundles were reliable, but scaling often meant replacing larger units rather than adding capacity in a targeted way. In the Open RAN-style approach, the operator scaled the DU pool capacity more incrementally. Over six months, they supported 1.4 times as many sites per unit of operational effort as during the earlier vendor refresh cycle.

Operational visibility and troubleshooting

By standardizing transport and telemetry collection, the team cut mean time to identify root cause by 28%, because alarms and counters were normalized across the environment. That mattered during peak congestion events, when multiple layers can fail at once.

Limitations and trade-offs

The operator also found real constraints. First, integration took more engineering labor at the start: interoperability testing required patience and disciplined configuration management. Second, vendor support structures differed; when issues appeared, resolving them sometimes required joint escalation across RU, DU, and transport vendors.

Selection criteria checklist: what engineers should weigh next

When evaluating industry trends toward Open RAN, use a decision checklist that matches how teams actually operate. Below is the prioritized order the operator used.

- Distance and fiber plant constraints: confirm reach budgets for optics and verify that the fiber type matches module expectations (OM3 vs OM4) and connector cleanliness practices.

- Functional split and interface maturity: verify which split is supported and how it affects latency, jitter tolerance, and troubleshooting workflows.

- Switch and optics compatibility: check DOM behavior, port power modes, and whether the switch enforces transceiver vendor policies.

- Switching, routing, and QoS design: ensure deterministic behavior where required and confirm queue thresholds under peak loads.

- DOM and telemetry support: confirm that the monitoring stack can ingest transceiver metrics and correlate them with RAN alarms.

- Operating temperature and enclosure airflow: validate transceiver and server cooling margins at site ambient temperatures.

- Vendor lock-in risk: quantify how much of the management plane is proprietary and what your exit strategy looks like.

- Support model and escalation path: confirm joint troubleshooting responsibilities and required logs for fast root-cause analysis.
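Because the checklist is ordered by priority, one way to use it is as a gate: report the highest-priority criterion that is still unresolved, so the team works on blockers in the same order. This is a hypothetical usage pattern, not something the operator is described as running; the pass/fail inputs are whatever the evaluation team records.

```python
# Criteria in the priority order listed above.
CRITERIA = [
    "Distance and fiber plant constraints",
    "Functional split and interface maturity",
    "Switch and optics compatibility",
    "Switching, routing, and QoS design",
    "DOM and telemetry support",
    "Operating temperature and enclosure airflow",
    "Vendor lock-in risk",
    "Support model and escalation path",
]


def first_blocker(results: dict):
    """Return the highest-priority criterion not yet marked satisfied, or None.
    Criteria missing from results are treated as unresolved."""
    for criterion in CRITERIA:
        if not results.get(criterion, False):
            return criterion
    return None


status = {c: True for c in CRITERIA}
status["DOM and telemetry support"] = False
status["Vendor lock-in risk"] = False
print(first_blocker(status))
```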

Common mistakes and troubleshooting tips from the field

Even strong architectures fail when basic assumptions break. Here are the most common pitfalls the operator encountered, with root causes and solutions.

Port flaps after transceiver swap

Root cause: Switch port policies or DOM interpretation differences cause the port to disable or renegotiate repeatedly. This often appears after field spares are introduced.

Solution: Validate the exact transceiver SKUs in a pre-production environment with the target switch firmware. Record DOM thresholds and confirm that the optical power levels remain within vendor guidance.
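Spotting flaps early is easier with a sliding-window count over link-down events. The sketch assumes the events have already been parsed from switch logs into (timestamp, port, state) tuples; the 300-second window, the threshold of three, and the port names are assumed values for illustration.

```python
from collections import defaultdict


def flapping_ports(events, window_s=300.0, max_downs=3):
    """events: (timestamp_s, port, state) tuples with state 'up' or 'down'.
    Return ports that went down more than max_downs times within any window_s span."""
    downs = defaultdict(list)
    for ts, port, state in sorted(events):
        if state == "down":
            downs[port].append(ts)
    flagged = set()
    for port, times in downs.items():
        start = 0
        for end, t in enumerate(times):
            # Shrink the window from the left until it spans at most window_s seconds.
            while t - times[start] > window_s:
                start += 1
            if end - start + 1 > max_downs:
                flagged.add(port)
                break
    return flagged


events = []
for t in (0, 60, 120, 180):          # four drops in three minutes
    events += [(t, "Eth1/1", "down"), (t + 5, "Eth1/1", "up")]
for t in (0, 400, 800, 1200):        # occasional drops, spread out
    events += [(t, "Eth1/2", "down"), (t + 5, "Eth1/2", "up")]
print(flapping_ports(events))
```

Running this right after a spare is installed gives a quick signal on whether the new module is being rejected by port policy.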

Latency variance causes handover instability

Root cause: Congestion control and queue configurations introduce jitter beyond what the RAN control plane tolerates under burst traffic.

Solution: Re-tune QoS, verify buffer sizes, and run traffic replay tests that match real busy-hour patterns. Confirm that monitoring captures latency and packet loss simultaneously.

Alarm confusion across layers

Root cause: Different vendors emit different alarm semantics, and the operations team cannot correlate optical, transport, and RAN events quickly.

Solution: Build an alarm mapping guide and enforce consistent telemetry collection. During pilots, run “fault injection” drills (link down, transceiver degraded, DU process restart) to validate the runbooks.
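An alarm mapping guide can live as a lookup table that translates each vendor's native codes into a shared layer and severity, followed by simple time-window grouping per site. Everything here is illustrative: the vendor labels, alarm codes, and the 30-second window are hypothetical, and unmapped codes are surfaced explicitly so gaps in the guide are visible during fault-injection drills.

```python
from dataclasses import dataclass

# Hypothetical mapping: (vendor, native alarm code) -> (common layer, severity).
ALARM_MAP = {
    ("switch-vendor", "LINK_DOWN"): ("transport", "major"),
    ("switch-vendor", "DOM_RX_LOW"): ("optical", "minor"),
    ("du-vendor", "FH_LOS"): ("ran", "critical"),
}


@dataclass(frozen=True)
class Alarm:
    ts: float      # epoch seconds
    vendor: str
    code: str
    site: str


def normalize(alarm: Alarm) -> dict:
    layer, severity = ALARM_MAP.get((alarm.vendor, alarm.code), ("unknown", "unmapped"))
    return {"ts": alarm.ts, "site": alarm.site, "layer": layer,
            "severity": severity, "native": f"{alarm.vendor}:{alarm.code}"}


def correlate(alarms, window_s=30.0):
    """Group normalized alarms per site; an alarm joins the site's current group
    if it arrives within window_s of that group's latest alarm."""
    groups, current = [], {}
    for a in sorted((normalize(x) for x in alarms), key=lambda n: n["ts"]):
        group = current.get(a["site"])
        if group and a["ts"] - group[-1]["ts"] <= window_s:
            group.append(a)
        else:
            group = [a]
            groups.append(group)
            current[a["site"]] = group
    return groups
```

A group that reads optical, then transport, then RAN points the on-call engineer at the optic first rather than at the DU.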

Underestimated airflow limits in remote enclosures

Root cause: Site enclosures run hotter than lab conditions, pushing optics and NIC/servers toward the edge of their operating temperature range.

Solution: Measure ambient temperature during peak sun hours and confirm cooling performance. Use industrial temperature transceivers where appropriate and avoid mixing modules with different thermal specs.
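Choosing between commercial and industrial transceivers can be framed as a margin check against the measured enclosure extremes. The 0 to 70 °C and -40 to 85 °C case-temperature classes are common, but confirm them per SKU; the assumed case-temperature rise over enclosure air and the safety margin below are placeholders to replace with site measurements.

```python
# Common transceiver case-temperature classes (confirm on each SKU's datasheet).
GRADES = {"commercial": (0.0, 70.0), "industrial": (-40.0, 85.0)}


def pick_grade(min_enclosure_c, peak_enclosure_c, case_rise_c=15.0, margin_c=5.0):
    """Return the first grade whose case-temperature range covers the expected
    extremes plus a safety margin, or None if no listed grade fits.
    case_rise_c is an assumed rise of module case temperature over enclosure air."""
    hottest_case = peak_enclosure_c + case_rise_c + margin_c
    coldest_case = min_enclosure_c - margin_c
    for grade, (low, high) in GRADES.items():
        if low <= coldest_case and hottest_case <= high:
            return grade
    return None


print(pick_grade(min_enclosure_c=10, peak_enclosure_c=60))
```

A `None` result means the enclosure needs better cooling, not just a different optic.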

Cost and ROI note: realistic TCO expectations

Open RAN hardware costs can be lower per component, but the total cost depends heavily on integration effort, testing, and support. In the operator’s case, third-party optics and some compute components reduced upfront spend, but they increased the need for qualification testing and spares management. Optics pricing varies widely by vendor and volume; in general, OEM optics carry a premium, while third-party modules can be meaningfully cheaper but require stronger validation.

Over a 24-month horizon, the operator estimated ROI from four buckets: reduced upgrade labor, faster incremental capacity scaling, improved troubleshooting efficiency, and fewer extended downtime events. They did not assume “instant savings.” Instead, they treated the program as an operations transformation where payback arrived after the pilot matured.
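The "payback after the pilot matured" idea can be modeled as savings that ramp up before reaching a steady state. This is a hypothetical sketch: the linear ramp, the six-month ramp length, the bucket amounts, and the upfront cost are illustrative inputs, not the operator's figures.

```python
from itertools import accumulate


def ramped_savings(steady_state_per_month, ramp_months, horizon_months=24):
    """Hypothetical linear ramp: monthly savings grow toward the steady-state
    value over ramp_months, then stay flat for the rest of the horizon."""
    return [steady_state_per_month * min(m / ramp_months, 1.0)
            for m in range(1, horizon_months + 1)]


def payback_month(upfront_cost, monthly_savings):
    """Return the first month (1-based) in which cumulative savings cover the
    upfront cost, or None if payback does not happen within the series."""
    for month, cumulative in enumerate(accumulate(monthly_savings), start=1):
        if cumulative >= upfront_cost:
            return month
    return None


# Illustrative monthly values for the four ROI buckets (not the operator's data).
buckets = {"upgrade_labor": 20_000, "capacity_scaling": 10_000,
           "troubleshooting": 8_000, "fewer_downtime_events": 12_000}
savings = ramped_savings(sum(buckets.values()), ramp_months=6)
print(payback_month(400_000, savings))
```

Stretching `ramp_months` shows how quickly a slow integration phase pushes payback out of a 24-month window.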

For standards context, Open RAN interface specifications are published by the O-RAN ALLIANCE, while the underlying Ethernet and transport behavior is governed by widely used networking standards. For baseline Ethernet behavior, see IEEE 802.3. For systems-level RAN considerations, follow vendor and O-RAN ALLIANCE documentation; general explainers such as Techopedia are useful mainly for RAN terminology.

FAQ

How do industry trends affect the Open RAN vs traditional decision?

Industry trends show buyers shifting from fully bundled vendor stacks toward modular architectures to reduce operational friction. In practice, the decision hinges on whether your team can manage interoperability, automation, and support escalation. If you cannot, traditional solutions may deliver faster time-to-stability.

Will Open RAN always outperform traditional telecom on performance?

Not automatically. Performance depends on transport quality, latency variance, and how well the RU, DU, and management plane are integrated. In the case study, the operator matched performance targets but only after deterministic transport tuning and tight acceptance thresholds.

What optics and transceivers matter most for RAN deployments?

Optics matter because RAN architectures rely on stable transport links. Teams should validate DOM support, switch port policies, and fiber reach budgets early, then keep the transceiver bill of materials consistent across lab and field. This prevents “works in lab, fails in production” incidents.

How risky is vendor interoperability during rollout?

Interoperability risk is real during the early stages. The operator reduced it through a compatibility matrix, staged pilots, and rollback playbooks, but it still required cross-vendor escalation when faults appeared. The key is to treat interoperability testing as a first-class deliverable, not a side task.

What should we measure to prove ROI?

Measure time-to-deploy, time-to-upgrade, mean time to diagnose, and the duration of downtime events. Also track change failure rate and rollback frequency. ROI becomes credible when these operational metrics improve consistently across multiple sites.
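These metrics are straightforward to compute once each change is logged consistently. A minimal sketch, assuming a hypothetical per-change record with the fields shown; running it per site or per month makes the "consistently across multiple sites" comparison explicit.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class ChangeRecord:
    site: str
    failed: bool                                 # caused an incident or failed acceptance
    rolled_back: bool
    minutes_to_diagnose: Optional[float] = None  # recorded for failed changes
    downtime_minutes: float = 0.0


def change_metrics(records):
    """Summarize operational ROI metrics for a non-empty list of changes."""
    total = len(records)
    failed = [r for r in records if r.failed]
    diagnose = [r.minutes_to_diagnose for r in failed
                if r.minutes_to_diagnose is not None]
    return {
        "change_failure_rate": len(failed) / total,
        "rollback_frequency": sum(r.rolled_back for r in records) / total,
        "mean_time_to_diagnose_min": mean(diagnose) if diagnose else None,
        "total_downtime_min": sum(r.downtime_minutes for r in records),
    }


records = [ChangeRecord("site-01", False, False),
           ChangeRecord("site-02", True, True, 40.0, 25.0),
           ChangeRecord("site-03", True, False, 20.0, 5.0),
           ChangeRecord("site-04", False, False)]
print(change_metrics(records))
```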

Where can we find authoritative guidance for Ethernet and transport behavior?

Start with IEEE Ethernet standards and vendor datasheets for optics and switch features. For operational interpretation, reputable engineering explainers can help, but always verify with your own lab testing and vendor support documentation.

Author bio: I have deployed and troubleshot multi-vendor telecom and data center transport systems in live environments, focusing on optics qualification, deterministic Ethernet behavior, and migration runbooks. I write with a field-first mindset so your team can move from evaluation to measurable, safe operations.

Next step: if you are planning a modular migration, review fiber optic transceiver selection to ensure transport readiness before the radio layer becomes your bottleneck.