Industry applications of 400G transceivers in smart cities: how to choose

Smart city operators are pushing bandwidth into places where fiber ends at the curb, in traffic cabinets, and inside municipal data halls. This article explains how industry applications of 400G transceivers map to those environments, then gives engineers a selection checklist that accounts for optical reach, power budgets, and host compatibility. You will also get troubleshooting patterns for field failures and a pragmatic cost and ROI view for municipal rollouts.

Where 400G optics fit in smart city traffic, surveillance, and IoT backhaul

In a typical smart city design, edge devices generate continuous streams: video analytics from intersections, license plate capture, environmental sensors, and utility telemetry. Those feeds converge through aggregation switches and then into a regional core or cloud interconnect. When traffic growth forces uplinks beyond 100G, 400G transceivers become attractive because they reduce the number of parallel optics needed per cabinet and can lower switch port counts at the aggregation layer.

IEEE 802.3 defines Ethernet PHY classes that drive how transceivers behave electrically and optically; for 400G, the market commonly aligns to coherent or high-speed direct-detect implementations depending on reach. In short-distance deployments (campus, municipal ring, and metro access), operators often prefer direct-detect 400G using multi-lane optics; for longer metro distances, coherent 400G can become the practical option. For standards context, see [Source: IEEE 802.3] and vendor application notes from major optics suppliers.

Concrete smart city link patterns that drive 400G demand

- Traffic video aggregation: 4K or multi-stream camera arrays can saturate 25G/100G uplinks quickly, especially when analytics requires frequent frame sampling and retention buffering.

- Distributed edge compute: When edge servers process inference locally and forward embeddings or clips, the egress bandwidth can still exceed what 100G can carry during peak events.

- Metro ring backhaul: Municipal networks often use ring topologies for resilience; 400G reduces the number of wavelengths or parallel fibers needed to keep failover headroom.
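As a rough sizing sketch for the link patterns above, the Python below estimates aggregate camera load against an uplink. The camera count, streams per camera, per-stream bitrate, and the 70% utilization ceiling are illustrative assumptions, not figures from any specific deployment.

```python
def aggregate_gbps(cameras: int, streams_per_camera: int, mbps_per_stream: float) -> float:
    """Sum of all camera streams in Gbps (1 Gbps = 1000 Mbps)."""
    return cameras * streams_per_camera * mbps_per_stream / 1000.0


def fits_uplink(load_gbps: float, uplink_gbps: float, max_utilization: float = 0.7) -> bool:
    """True if the load stays at or under the planned utilization ceiling."""
    return load_gbps <= uplink_gbps * max_utilization


# Hypothetical zone: 2,000 cameras, 3 streams each, 20 Mbps per stream.
load = aggregate_gbps(cameras=2000, streams_per_camera=3, mbps_per_stream=20.0)
print(f"{load:.0f} Gbps offered load")
print("fits 100G:", fits_uplink(load, 100.0))
print("fits 400G:", fits_uplink(load, 400.0))
```

Swapping in measured per-stream bitrates and your own utilization ceiling turns this into a quick check of when a zone outgrows its current uplink.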

400G transceiver types, link budgets, and compatibility constraints

Engineers choose 400G transceivers based on three constraints: distance, fiber plant characteristics, and switch/host compatibility. The “distance” piece is not just nominal reach; it is the link budget that accounts for fiber attenuation, connector loss, splices, and any patch panel slack. The “compatibility” piece is equally important: many switch vendors require specific optics part numbers or certified transceivers to ensure deterministic behavior with their optics management and diagnostics.
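The link budget arithmetic described above can be sketched in a few lines of Python. All numeric values here (attenuation per kilometer, loss per mated connector pair, and the allowed channel loss) are placeholders for illustration; take real values from OTDR measurements and the specific SKU datasheet.

```python
def link_loss_db(length_km: float, fiber_db_per_km: float,
                 connector_pairs: int, db_per_connector: float,
                 splices: int, db_per_splice: float) -> float:
    """Total passive loss: fiber attenuation plus connector and splice losses."""
    return (length_km * fiber_db_per_km
            + connector_pairs * db_per_connector
            + splices * db_per_splice)


def margin_db(allowed_loss_db: float, loss_db: float) -> float:
    """Remaining margin in dB; a negative value means the link exceeds the budget."""
    return allowed_loss_db - loss_db


# Illustrative values only (assumptions, not datasheet figures).
loss = link_loss_db(length_km=0.1, fiber_db_per_km=3.0,
                    connector_pairs=2, db_per_connector=0.5,
                    splices=0, db_per_splice=0.1)
margin = margin_db(allowed_loss_db=1.9, loss_db=loss)
print(f"loss={loss:.2f} dB, margin={margin:.2f} dB")
```

Note how quickly two mated connector pairs consume a short-reach budget in this example; on multimode links, connectors often dominate over fiber attenuation.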

Most smart city deployments use one of two optical families. Direct-detect 400G typically targets short to mid distances using parallel fibers and high lane counts, while coherent 400G targets longer metro distances over fewer fibers by using local oscillator technology and digital signal processing. Because coherent and direct-detect optics behave differently at the physical layer, you must align the transceiver type with the switch’s supported 400G PHY mode.

Key specifications to compare before you buy

The table below compares example 400G options engineers encounter in municipal networks. Always validate exact supported modes and optics interfaces against the host switch datasheet and the transceiver manufacturer’s compatibility list.

| Transceiver example | Typical data rate | Center wavelength | Reach (typical) | Form factor / interface | Connector | Power class (typical) | Operating temperature |
|---|---|---|---|---|---|---|---|
| Cisco QSFP-DD 400G SR8 (example family) | 400G | 850 nm | ~100 m over OM4 (varies by vendor) | QSFP-DD / OSFP-class (platform dependent) | MPO-16 | Low to moderate (verify host) | Commercial or extended (verify) |
| FS.com 400G QSFP-DD SR8 / DR4-style offerings (example) | 400G | 850 nm (SR8, multimode); 1310 nm (DR4, single-mode) | ~70 m over OM3 or ~100 m over OM4 (SR8); ~500 m over single-mode (DR4) | QSFP-DD | MPO-16 (SR8); MPO-12 (DR4) | Low to moderate (verify) | -5 °C to 70 °C (typical for some SKUs) |
| Finisar / Finisar-like coherent 400G pluggables (example family) | 400G | ~1550 nm band (coherent) | ~80 km to 200+ km (mode dependent) | Coherent 400G pluggable | LC (typical; verify per SKU) | Higher than direct-detect (verify thermal) | Industrial-grade variants exist |

For authoritative reach and power details, rely on the specific SKU datasheets rather than generic marketing figures. Common reference sources include [Source: IEEE 802.3], plus datasheets from the switch OEM (for example, Cisco) and from optics suppliers such as Finisar and FS.com.

Host switch compatibility: the hidden “spec” that dominates field outcomes

Municipal rollouts fail when optics are technically “400G-capable” but not electrically recognized by the host. In practice, switch firmware validates DOM fields and sometimes enforces vendor-specific calibration or lane mapping rules. Before procurement, do a lab compatibility check using the exact switch model and firmware revision you plan to deploy, then run a soak test that exercises link bring-up and traffic patterns for at least several hours.

Pro Tip: In smart city cabinets, the biggest cause of “random” link flaps is not always optics quality; it is often marginal connector geometry after repeated field service. If you standardize on a single connector ecosystem (for example, LC APC vs UPC choices) and log every field re-seat event against DOM serial numbers, you can correlate flaps to physical handling rather than to transceiver silicon.

Industry applications decision checklist for 400G optics in smart city networks

Engineers typically evaluate optics with a structured checklist because the cost of a wrong choice is high: re-pulling fiber, replacing transceivers in sealed cabinets, and reconfiguring switch ports can exceed the initial optics savings. Use the list below as a procurement gate for industry applications in municipal environments.

- Distance and link budget: Calculate attenuation using measured fiber OTDR results when available; include patch cords, connectors, and splices. Confirm reach against the transceiver’s specified link budget, not only nominal reach.

- Fiber type and polarity: Verify OM3/OM4/OM5 for short-reach direct-detect; confirm MPO polarity handling for multi-fiber interfaces and ensure the patching scheme matches the transceiver requirements.

- Switch port mode support: Confirm the host switch supports the exact 400G PHY mode for the chosen transceiver type (direct-detect vs coherent).

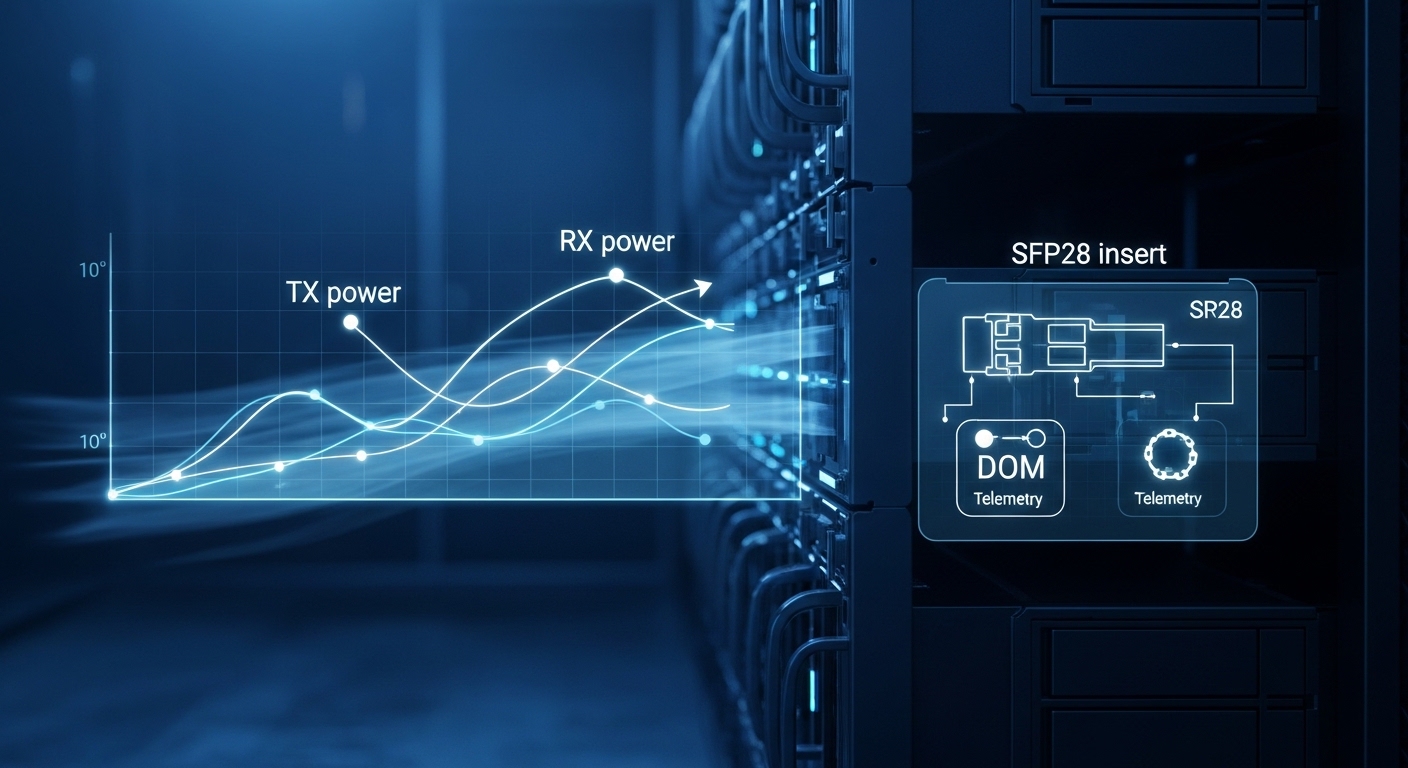

- DOM support and monitoring: Require Digital Optical Monitoring (DOM) support compatible with your NMS tooling. Confirm the fields you need (temperature, bias/current, received power) are exposed reliably.

- Operating temperature and thermal design: Smart city cabinets can swing from sub-freezing mornings to hot afternoons. Choose transceivers with an appropriate temperature range and ensure the switch airflow design maintains transceiver cooling margins.

- DOM alarms and thresholds: Validate alarm thresholds in the host software; misaligned thresholds can hide degradation until failure.

- Vendor lock-in risk: For OEM optics, validate pricing and lead times. For third-party optics, validate certification, compatibility lists, and DOM behavior on your exact switch/firmware.

- Spare strategy: Plan spares by SKU and batch, and ensure you can rapidly swap modules without changing optics settings.
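One way to make the checklist above enforceable is to encode it as a simple gate that reports which items a candidate optic fails. This is a minimal sketch: the `Candidate` fields and the 1.0 dB minimum margin are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    """Hypothetical record of lab and survey results for one optic on one link."""
    link_margin_db: float
    fiber_and_polarity_ok: bool
    phy_mode_supported: bool
    dom_fields_exposed: bool
    temp_range_covers_site: bool
    spares_planned: bool


def failed_gates(c: Candidate, min_margin_db: float = 1.0) -> List[str]:
    """Return the names of checklist items that the candidate does not pass."""
    checks = [
        ("link budget margin", c.link_margin_db >= min_margin_db),
        ("fiber type and polarity", c.fiber_and_polarity_ok),
        ("switch port mode support", c.phy_mode_supported),
        ("DOM support", c.dom_fields_exposed),
        ("operating temperature", c.temp_range_covers_site),
        ("spare strategy", c.spares_planned),
    ]
    return [name for name, ok in checks if not ok]


candidate = Candidate(0.6, True, True, True, False, True)
print(failed_gates(candidate))
```

An empty list means the candidate clears every gate; anything else is a concrete item to resolve before purchase.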

Selection sub-cases by smart city location

- Inside municipal data halls: Prefer direct-detect 400G when fiber distances are short and fiber grade supports the reach with margin.

- Fiber-fed traffic corridors: If links span longer metro distances or require fewer fibers, evaluate coherent 400G pluggables with the right dispersion and performance assumptions.

- Harsh cabinet deployments: Prioritize industrial temperature variants and ensure connector access and dust control procedures are enforced.

Real-world deployment scenario: 400G uplinks in a municipal ring with 48-port ToR aggregation

Consider a municipal network in a mid-sized European city using a leaf-spine-like structure with multiple traffic zones. At each zone, a 48-port top-of-rack aggregation switch uplinks to a regional ring; each uplink originally ran at 100G to meet camera and sensor backhaul. During a modernization program, the operator adds new intersection cameras and increases retention windows, pushing peak traffic to approximately 1.6 Tbps per zone during event hours.

The operator replaces four 100G uplinks with two 400G uplinks per zone to reduce port consumption and improve headroom for failover. Intra-zone fiber runs use OM4 in a campus-like cabinet layout and range from 70 to 120 meters, so direct-detect 400G SR8-class optics are evaluated first; because SR8 is specified for roughly 100 meters over OM4, the longest runs are checked against the measured link budget rather than assumed to work. In parallel, the team validates coherent 400G for the longest ring segments, where the fiber plant is older and splice counts are higher; those segments are tested with a conservative margin because municipal fiber attenuation can drift after repairs.

Operationally, the team standardizes on a single transceiver family per distance class, monitors DOM alarms in the NMS, and runs a traffic generator at line rate during cutover windows. After deployment, they expect a measurable reduction in switch port usage and a corresponding reduction in optics sprawl, but they also plan for higher per-module power draw and stricter thermal verification inside sealed cabinets.

Common mistakes and troubleshooting patterns for 400G optics

Field failures usually follow a small set of root causes. Below are concrete pitfalls engineers repeatedly encounter when rolling out industry applications of 400G transceivers in smart city environments.

“Works in the lab, flaps in the cabinet” due to connector handling and contamination

Root cause: Dust or micro-scratches on fiber endfaces after field re-seating, combined with high lane counts in direct-detect optics, can create intermittent received power drops. Temperature swings also exacerbate alignment sensitivity.

Solution: Enforce endface inspection with a microscope, standardize cleaning procedures, and log every re-seat by transceiver DOM serial number. After cleaning, verify received optical power values stay within the vendor’s recommended operating window.
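To illustrate how a re-seat log can be correlated with link flaps, the sketch below counts flaps that occur within a time window after a re-seat of the same module. The serial numbers, timestamps, and the 72-hour window are made-up assumptions; real data would come from your maintenance log and NMS.

```python
from collections import Counter
from datetime import datetime, timedelta


def flaps_after_reseat(reseats, flaps, window=timedelta(hours=72)):
    """Count flaps per serial that fall within `window` after a re-seat of that serial."""
    counts = Counter()
    for serial, flap_time in flaps:
        if any(r_serial == serial and timedelta(0) <= flap_time - r_time <= window
               for r_serial, r_time in reseats):
            counts[serial] += 1
    return counts


# Hypothetical log entries: (DOM serial number, timestamp).
reseats = [("SN001", datetime(2024, 5, 1, 9, 0)),
           ("SN002", datetime(2024, 5, 3, 14, 0))]
flaps = [("SN001", datetime(2024, 5, 1, 18, 30)),
         ("SN001", datetime(2024, 5, 2, 7, 15)),
         ("SN002", datetime(2024, 5, 1, 8, 0)),   # before the re-seat, not counted
         ("SN003", datetime(2024, 5, 4, 12, 0))]  # never re-seated, not counted

print(flaps_after_reseat(reseats, flaps))
```

Modules that accumulate flaps shortly after handling are candidates for endface inspection before the transceiver itself is suspected.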

Link comes up but traffic errors spike because of polarity or MPO mapping

Root cause: Multi-fiber interfaces for 400G direct-detect often require correct transmit/receive polarity and patching direction. Inconsistent MPO patching between contractors can lead to partial lane swaps that degrade BER.

Solution: Validate polarity with a deterministic test plan: confirm patch cord orientation, run BER or error counters under sustained load, and compare results to vendor guidelines. If needed, repatch using a documented polarity scheme and lock it into the build checklist.
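A per-lane view of error counters helps separate a partial lane swap from uniform degradation. The sketch below converts errored-bit counts into a bit error ratio per lane and flags outliers. The lane rate, test duration, counter values, and the BER limit are illustrative assumptions; use the limit your vendor specifies, and note that how per-lane counters are exposed depends on the host platform.

```python
def lane_ber(errored_bits: int, lane_gbps: float, seconds: float) -> float:
    """Bit error ratio: errored bits divided by total bits sent on the lane."""
    return errored_bits / (lane_gbps * 1e9 * seconds)


def suspect_lanes(errors_by_lane, lane_gbps=50.0, seconds=60.0, ber_limit=1e-5):
    """Return lane numbers whose BER exceeds the (assumed) limit, in ascending order."""
    return [lane for lane, errs in sorted(errors_by_lane.items())
            if lane_ber(errs, lane_gbps, seconds) > ber_limit]


# Hypothetical 60-second sample on an 8-lane module: lanes 2 and 3 stand out.
errors = {0: 1000, 1: 1200, 2: 500_000_000, 3: 480_000_000,
          4: 900, 5: 1100, 6: 950, 7: 1050}
print(suspect_lanes(errors))
```

A pair of adjacent bad lanes, as in this made-up sample, is the kind of pattern that points toward patching or polarity rather than a failing module.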

Switch refuses optics or shows “unsupported” PHY because of firmware and DOM field mismatches

Root cause: Some hosts enforce strict DOM recognition, and certain transceiver SKUs require firmware support for the specific module type. Even when the physical connector matches, the host might not accept the lane mapping or calibration profile.

Solution: Perform a pre-deployment compatibility test using the exact switch model and firmware revision. Keep a matrix of supported optics part numbers and update it when firmware changes.
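The compatibility matrix can be as simple as a lookup keyed by switch model and firmware revision. The sketch below shows one possible shape; the model name, firmware strings, and part numbers are invented placeholders, not real products.

```python
# Hypothetical matrix: (switch model, firmware revision) -> validated optic part numbers.
VALIDATED = {
    ("example-switch-48p", "10.2.1"): {"EX-400G-SR8", "EX-400G-DR4"},
    ("example-switch-48p", "10.3.0"): {"EX-400G-SR8"},
}


def is_validated(model: str, firmware: str, part_number: str) -> bool:
    """True only if this exact model/firmware/part combination passed lab testing."""
    return part_number in VALIDATED.get((model, firmware), set())


print(is_validated("example-switch-48p", "10.2.1", "EX-400G-DR4"))  # validated pair
print(is_validated("example-switch-48p", "10.3.0", "EX-400G-DR4"))  # needs re-test
print(is_validated("example-switch-48p", "11.0.0", "EX-400G-SR8"))  # unknown firmware
```

Treating an unknown firmware revision as "not validated" by default forces a re-test whenever firmware changes, which is the behavior the matrix is meant to enforce.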

Thermal throttling or performance degradation due to poor airflow and high module power

Root cause: 400G modules can draw more power and generate more heat than older 10G/25G optics. In cabinets with restricted airflow, transceiver temperature can exceed thresholds, causing derating or intermittent link loss.

Solution: Measure cabinet ambient temperature and verify airflow paths. Use vendor thermal guidance to confirm the transceiver temperature stays within the specified range during peak environmental conditions.
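A first-pass thermal check can be expressed as simple arithmetic: peak cabinet ambient plus the measured temperature rise at the module, compared with the module's rated maximum. The temperatures below are illustrative assumptions; the rise in particular should come from your own measurement under load.

```python
def thermal_margin_c(max_case_c: float, peak_ambient_c: float, rise_c: float) -> float:
    """Margin between the rated maximum case temperature and the estimated peak case temperature."""
    return max_case_c - (peak_ambient_c + rise_c)


# Hypothetical cabinet: 55 C peak ambient, 20 C rise at the module under load.
commercial = thermal_margin_c(max_case_c=70.0, peak_ambient_c=55.0, rise_c=20.0)
industrial = thermal_margin_c(max_case_c=85.0, peak_ambient_c=55.0, rise_c=20.0)
print(f"commercial-range module margin: {commercial:+.1f} C")
print(f"industrial-range module margin: {industrial:+.1f} C")
```

A negative margin, as in the commercial-range case here, indicates the module would exceed its rating at peak conditions unless airflow improves or a wider-range variant is used.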

Cost and ROI note: balancing OEM reliability with third-party optics economics

For smart city rollouts, the optics line item is only part of total cost. TCO includes installation labor, truck rolls for failures, downtime risk during maintenance windows, and the engineering time spent on compatibility testing. OEM optics often cost more per module, but they can reduce integration risk with the host switch and may improve lead time predictability for critical spares.

Third-party optics can reduce upfront spend, but the ROI depends on your acceptance testing discipline. In practice, successful third-party deployments require strict compatibility validation, consistent DOM behavior, and a clear return or replacement policy. Procurement budgets often treat transceivers as paired with the host platform, so savings may shrink if you must buy additional spares to cover compatibility gaps or early-life failures.

As a rough planning reference, many enterprises see direct-detect 400G optics priced in the hundreds of dollars per module, while coherent 400G optics can cost substantially more due to DSP and laser complexity. Your exact pricing will vary by vendor, reach class, and volume commitments; the key is to compare TCO under your maintenance model, not only unit price.
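To compare options on TCO rather than unit price, a simple model can add hardware, expected field-failure cost, and validation effort. Every number below (prices, spare counts, failure rates, truck roll cost, validation cost) is a hypothetical placeholder; the point is the structure of the comparison, not the result.

```python
def tco(unit_price: float, modules: int, spares: int,
        annual_failure_rate: float, truck_roll_cost: float,
        years: int, validation_cost: float) -> float:
    """Hardware (installed plus spares) + expected truck rolls + one-time validation cost."""
    hardware = unit_price * (modules + spares)
    expected_failures = modules * annual_failure_rate * years
    return hardware + expected_failures * truck_roll_cost + validation_cost


# Hypothetical inputs for 40 installed modules over 5 years.
oem = tco(unit_price=1200, modules=40, spares=4, annual_failure_rate=0.01,
          truck_roll_cost=800, years=5, validation_cost=2000)
third_party = tco(unit_price=500, modules=40, spares=8, annual_failure_rate=0.03,
                  truck_roll_cost=800, years=5, validation_cost=10000)
print(f"OEM: {oem:,.0f}  third-party: {third_party:,.0f}")
```

With different failure rates or truck roll costs the ranking can flip, which is why the inputs should reflect your own maintenance model.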

FAQ: industry applications of 400G transceivers in smart city projects

What fiber reach should we target for 400G direct-detect in smart city cabinets?

Target reach with margin based on measured attenuation and connector/splice loss, not only vendor nominal figures. For OM4-based direct-detect, many deployments aim for 100 m class links, but your actual budget depends on patch cord length and the number of mated connectors in the path; polarity must also be correct for the link to come up cleanly.

How do we confirm compatibility between a 400G transceiver and our switch?

Use a compatibility matrix from the switch vendor or optics supplier, then validate in a lab with the exact switch model and firmware revision. Run link bring-up and sustained traffic tests while monitoring DOM power and error counters.

Are coherent 400G optics worth it for metro ring segments?

Coherent 400G becomes attractive when distances exceed direct-detect feasibility or when fiber counts and splice complexity make parallel-fiber direct-detect costly. The decision should include dispersion assumptions, required optical performance, and the operational maturity of your coherent support process.

What monitoring should we enable for 400G transceivers in production?

Enable DOM telemetry for temperature, transmit bias/current, and received optical power, and tie alarms to your NMS. Also track interface error counters and link flaps so you can distinguish physical-layer degradation from configuration issues.
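A minimal sketch of a DOM threshold check is shown below. The field names and limit values are assumptions for illustration; real thresholds come from the module's own alarm and warning fields or the vendor datasheet, and the way readings are collected depends on your NMS.

```python
def out_of_range(reading: dict, limits: dict) -> list:
    """Return a message for each monitored field that is missing or outside its limits."""
    alarms = []
    for field, (low, high) in limits.items():
        value = reading.get(field)
        if value is None:
            alarms.append(f"{field}: missing")
        elif not low <= value <= high:
            alarms.append(f"{field}: {value} outside [{low}, {high}]")
    return alarms


# Hypothetical limits and one polled reading with no received-power value.
limits = {"temperature_c": (0.0, 70.0),
          "tx_bias_ma": (2.0, 12.0),
          "rx_power_dbm": (-8.0, 3.0)}
reading = {"temperature_c": 72.5, "tx_bias_ma": 7.1}

for alarm in out_of_range(reading, limits):
    print(alarm)
```

Reporting a missing field as an alarm, rather than silently skipping it, catches modules that do not expose the telemetry you depend on.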

What are the fastest troubleshooting steps during a field incident?

First inspect and clean the fiber endfaces, then confirm patch polarity and connector type. Next verify DOM readings are within vendor thresholds and check switch logs for PHY/unsupported optics messages.

Should we standardize on OEM optics or allow third-party modules?

Standardization reduces operational complexity and accelerates troubleshooting. If third-party modules are permitted, require compatibility validation, consistent DOM behavior, and a clear replacement workflow to protect uptime during municipal maintenance windows.

For engineers planning industry applications of 400G transceivers in smart cities, the safest path is to align distance class, switch PHY mode support, and thermal and monitoring requirements before procurement. Next, map your fiber plant measurements to the transceiver link budget and build a compatibility test plan with your exact switch firmware.

Author bio: I have deployed and validated high-speed optics in fielded Ethernet networks, including metro rings and cabinet-based aggregation, with hands-on link budget and DOM telemetry troubleshooting. I focus on measurable acceptance tests, compatibility risk control, and operational TCO rather than vendor claims.