If your optical network is approaching the point where every upgrade means forklift work, downtime windows, and surprise budget overruns, this guide is for you. It walks you through a practical, field-ready plan to standardize transceivers, validate link budgets, and plan fiber capacity so 2026 upgrades feel routine rather than risky. You will learn what to measure, which module families to standardize on, and how to troubleshoot the top failure patterns engineers see in production.

-

Prerequisites: what you need before you touch optics

Before selecting parts, gather inventory and constraints. Pull the current switch models, transceiver part numbers, and port speeds, then confirm the physical plant: fiber type (OM3, OM4, OS2), connector style (LC, SC, or MPO/MTP for parallel optics), and splice locations. For compliance and interoperability, also capture whether your platform expects Digital Optical Monitoring (DOM) and whether it enforces vendor-specific EEPROM behavior.

Finally, set baselines for performance and reliability: collect historical link flaps, BER estimates if available, and any alarms from your optics monitoring system. If you have a coherent or DWDM layer, record channel plan and dispersion compensation settings; if you are Ethernet-only, record typical oversubscription ratios and traffic growth targets.

Expected outcome: a single source-of-truth spreadsheet mapping every optical port to fiber span, transceiver type, and operational telemetry.
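
As a starting point for that spreadsheet, the sketch below shows one possible record layout and a CSV export. The field names, the `PortRecord` class, and the sample values (switch name, span label, flap count) are illustrative assumptions, not a required schema; adapt them to whatever your inventory tooling already tracks.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields


@dataclass
class PortRecord:
    """One row per optical port (hypothetical schema)."""
    switch: str
    port: str
    speed_gbps: int
    transceiver_pn: str
    fiber_type: str       # "OM3", "OM4", or "OS2"
    connector: str        # "LC", "SC", "MPO"
    span_id: str          # label of the fiber span or patch path
    dom_supported: bool
    link_flaps_90d: int   # historical flaps from monitoring, if known


def to_csv(records):
    """Serialize records to CSV text with a header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(PortRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buf.getvalue()


# Sample row with made-up values.
inventory = [
    PortRecord("agg-sw1", "Eth1/1", 10, "SFP-10G-SR", "OM3", "LC", "span-017", True, 2),
]
csv_text = to_csv(inventory)
print(csv_text)
```

Keeping one row per port, rather than one row per switch, makes it easier to join the inventory against telemetry later.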

-

Step 1: Choose an upgrade path that matches your 2026 traffic profile

Future-proofing starts with a realistic demand forecast, not a “buy everything” mentality. In many enterprise and campus networks, the most common 2026 pattern is moving from 10G to 25G at the access layer, and from 40G to 100G at aggregation, driven by virtualization density and AI-adjacent workloads.

In a data center leaf-spine environment, you may see a shift toward higher lane-count optics (for example, 100G using four lanes of 25G) and higher-density switch blades. Your selection should reflect the switch line card capabilities and the optics wiring model (direct attach versus fiber).

Expected outcome: a prioritized roadmap: which links stay stable, which move to 25G/100G, and which require fiber re-termination or new strands.
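
One way to turn a demand forecast into a roadmap priority is to estimate when each link crosses a utilization ceiling under compound growth. The sketch below assumes a constant annual growth rate and a 70% planning threshold; both are placeholder assumptions, and the function name is hypothetical.

```python
import math


def years_until_threshold(current_gbps, capacity_gbps, annual_growth, threshold=0.7):
    """Years until peak traffic reaches threshold * capacity under compound growth.

    annual_growth is a fraction (0.3 means 30% per year).
    Returns 0.0 if the link is already over the threshold and
    math.inf if traffic is not growing.
    """
    limit = capacity_gbps * threshold
    if current_gbps >= limit:
        return 0.0
    if annual_growth <= 0:
        return math.inf
    return math.log(limit / current_gbps) / math.log(1 + annual_growth)


# Example: 4 Gb/s peak on a 10G link, growing 30% per year.
years_left = years_until_threshold(4.0, 10.0, 0.30)
print(f"{years_left:.1f} years until the 70% threshold")
```

Links with the smallest result move to the top of the upgrade list; links that return several years can stay as they are.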

-

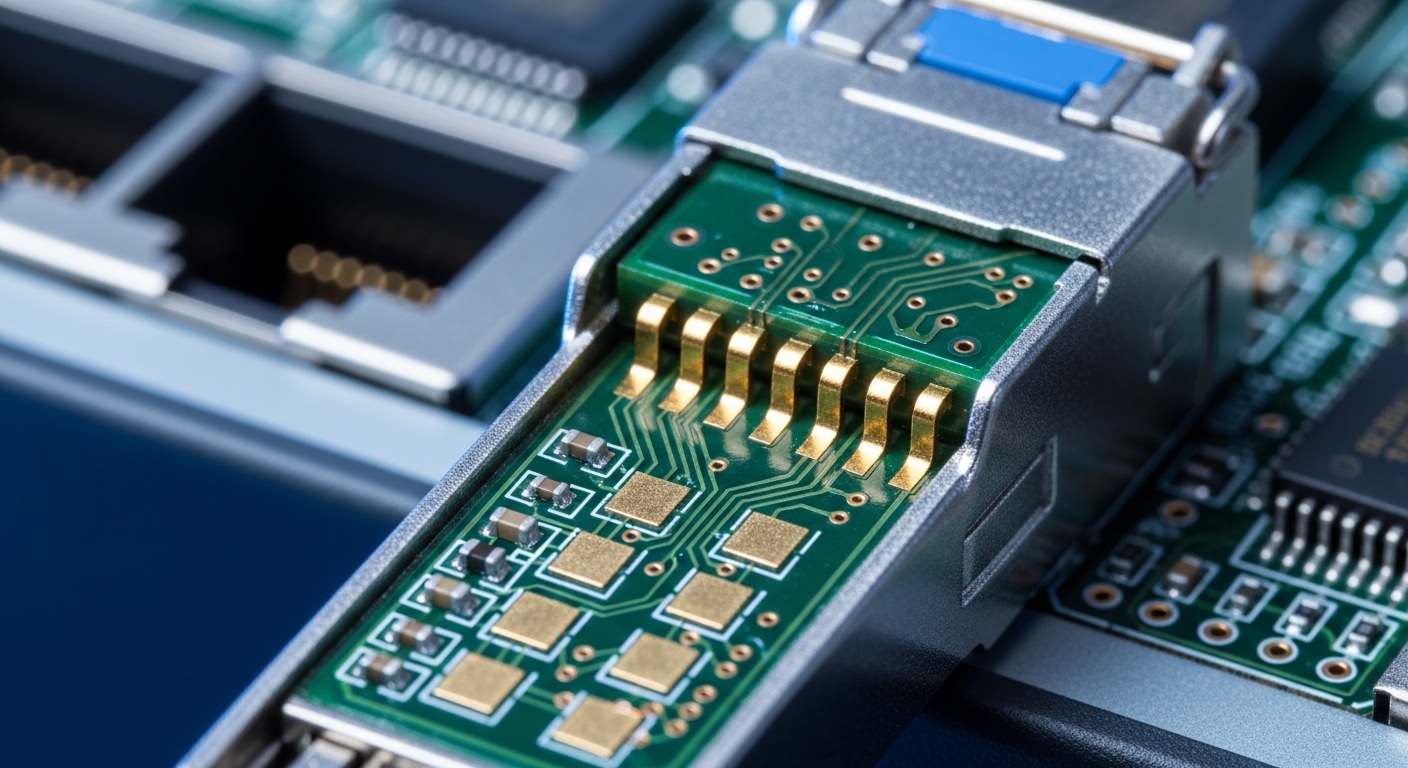

Step 2: Standardize transceiver families and data rates to reduce operational risk

Operational simplicity is a major lever in optical network readiness. Standardize across vendors and SKUs where possible, but only within the constraints of your switch vendor’s compatibility guidance. If your hardware supports SFP/SFP+/SFP28/QSFP+/QSFP28/QSFP56, pick a small set of families that cover your distance classes.

For short-reach enterprise and data center links, 100G SR4 and 25G SR typically dominate because multi-mode fiber simplifies short-run deployment. Note that 25G SR terminates on duplex LC, whereas 100G SR4 is a parallel optic that terminates on an MPO/MTP connector. For longer reach, plan for 10G/25G LR on OS2, and for ER where spans exceed LR reach. For coherent DWDM, standardize channel spacing and transceiver vendor families to avoid unexpected optical budget or monitoring differences.

When referencing specific modules, verify wavelength and reach against your fiber type. Examples engineers often deploy include Cisco SFP-10G-SR, Finisar FTLX8571D3BCL (10G SR variants), and FS.com SFP-10GSR-85 (850 nm multi-mode). Always confirm DOM support and the supported temperature grade in the vendor datasheet.

Expected outcome: a BOM shortlist of optics families tied to distance classes, with fewer part numbers to stock and fewer compatibility edge cases.

-

Step 3: Build a measurable link budget and margin plan (do not guess)

Engineers future-proof optical network designs by treating link budgets like design constraints, not “after the fact” checks. For Ethernet over optics, follow the IEEE 802.3 physical layer requirements and validate your transceiver parameters against your fiber attenuation, connector losses, and splice losses. Include worst-case temperature and aging margin.

At 850 nm over multi-mode, modal effects and launch conditions matter; at 1310/1550 nm over single-mode, chromatic dispersion and receiver sensitivity dominate. If you are using OM3 or OM4, confirm the modal bandwidth assumptions in your fiber spec and ensure the transceiver is designed for that fiber grade.

Expected outcome: a link budget worksheet that proves your reach with margin for connector endface cleanliness, aging, and temperature—not just the nominal datasheet reach.
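
The worksheet arithmetic can be sketched as follows: the power budget is worst-case transmit power minus worst-case receiver sensitivity, and the margin is what remains after fiber, connector, and splice losses plus a reserve for aging and temperature. The class name and every numeric value below are illustrative assumptions; take the real figures from your module datasheet and measured plant losses.

```python
from dataclasses import dataclass


@dataclass
class LinkBudget:
    tx_min_dbm: float            # worst-case transmit power (datasheet)
    rx_sensitivity_dbm: float    # worst-case receiver sensitivity (datasheet)
    fiber_km: float
    atten_db_per_km: float
    connectors: int
    loss_per_connector_db: float
    splices: int
    loss_per_splice_db: float
    reserve_db: float            # allowance for aging, temperature, repairs

    def power_budget_db(self) -> float:
        return self.tx_min_dbm - self.rx_sensitivity_dbm

    def total_loss_db(self) -> float:
        return (self.fiber_km * self.atten_db_per_km
                + self.connectors * self.loss_per_connector_db
                + self.splices * self.loss_per_splice_db)

    def margin_db(self) -> float:
        return self.power_budget_db() - self.total_loss_db() - self.reserve_db


# Placeholder values for a single-mode span; not taken from any specific datasheet.
link = LinkBudget(
    tx_min_dbm=-8.2, rx_sensitivity_dbm=-14.4,
    fiber_km=5.0, atten_db_per_km=0.4,
    connectors=4, loss_per_connector_db=0.5,
    splices=2, loss_per_splice_db=0.1,
    reserve_db=1.0,
)
print(f"budget {link.power_budget_db():.1f} dB, "
      f"loss {link.total_loss_db():.1f} dB, "
      f"margin {link.margin_db():.1f} dB")
```

A negative or near-zero margin means the design relies on every connector being perfect, which is the situation this step is meant to rule out.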

-

Step 4: Use DOM and monitoring telemetry as part of your verification gate

Digital Optical Monitoring is not just a convenience; it is your early warning system. When supported, DOM provides real-time transmit power, receive power, and laser bias current, along with module temperature and supply voltage, enabling proactive thresholding before a link degrades into intermittent failures.

In production, implement monitoring policies: alert when transmit or receive power drifts outside vendor-recommended ranges, and correlate optical power changes with environmental factors. Many teams deploy a simple workflow: optics telemetry into a time-series database, alarms into incident management, and a monthly review of “links trending down.”

Expected outcome: automated dashboards that surface failing optics patterns early, reducing mean time to repair.
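
A "links trending down" review can be approximated by fitting a straight line to receive power over time and projecting when it would reach the low-warning threshold. The sketch below is a minimal least-squares version; the function names, sample readings, and the -9.0 dBm threshold are assumptions for illustration, and real thresholds should come from the module's own DOM alarm and warning values.

```python
def slope_per_day(samples):
    """Least-squares slope of (day, rx_dbm) samples, in dB per day.

    Needs at least two samples taken on different days.
    """
    n = len(samples)
    mean_x = sum(day for day, _ in samples) / n
    mean_y = sum(power for _, power in samples) / n
    sxx = sum((day - mean_x) ** 2 for day, _ in samples)
    sxy = sum((day - mean_x) * (power - mean_y) for day, power in samples)
    return sxy / sxx


def days_to_threshold(samples, low_warn_dbm):
    """Projected days until rx power hits the threshold, or None if not declining."""
    latest = samples[-1][1]
    if latest <= low_warn_dbm:
        return 0.0
    slope = slope_per_day(samples)
    if slope >= 0:
        return None
    return (low_warn_dbm - latest) / slope


# Made-up readings: (day number, receive power in dBm).
readings = [(0, -5.0), (10, -5.5), (20, -6.0), (30, -6.5)]
trend = slope_per_day(readings)
remaining = days_to_threshold(readings, low_warn_dbm=-9.0)
print(f"trend {trend:.3f} dB/day, about {remaining:.0f} days to threshold")
```

A linear fit is a deliberate simplification; a sudden step change after a patching event is better caught by comparing consecutive readings than by a trend line.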

-

Step 5: Plan fiber capacity for 2026 upgrades without rework

Fiber planning is where future-proofing becomes tangible. Count strands, not ports. A duplex optic uses one fiber per direction, whereas a 100G SR4 link uses four fibers per direction (eight active fibers on an MPO trunk), and future upgrades may require different lane mapping. Verify your current patching scheme and document which fibers are dark, spliced, or reserved.

For multi-mode, consider moving toward OM4 if you are still on OM3 and you have space to re-terminate or re-cable. For single-mode, ensure you have spare fibers in the same sheath and that your splice closures and slack management support future reroutes.

Also plan connector hygiene. Budget time and consumables for cleaning: lint-free wipes, isopropyl alcohol where permitted, and fiber inspection with a scope. Clean optics are often the difference between stable links and weeks of “mystery” flaps.

Expected outcome: a fiber inventory plan with spare strands, documented patch mapping, and a connector hygiene workflow.
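
Counting strands rather than ports can be reduced to a small calculation. The sketch below assumes two fibers per duplex link and eight active fibers per SR4 link; the link counts, installed strand count, and function names are made-up examples.

```python
# Active fibers consumed per link, by optic wiring model.
FIBERS_PER_LINK = {"duplex": 2, "sr4": 8}


def strands_required(link_counts):
    """Total active strands for a plan such as {"duplex": 12, "sr4": 4}."""
    return sum(FIBERS_PER_LINK[kind] * count for kind, count in link_counts.items())


def spare_after(installed_strands, link_counts, unusable_strands=0):
    """Strands left over once planned links and known-bad strands are subtracted."""
    return installed_strands - unusable_strands - strands_required(link_counts)


planned = {"duplex": 12, "sr4": 4}
needed = strands_required(planned)
spare = spare_after(72, planned, unusable_strands=4)
print(f"{needed} strands needed, {spare} spare")
```

A negative result from `spare_after` is the early signal that an upgrade wave needs new cable, not just new optics.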

-

Step 6: Validate operational temperature and power budgets early

Most optical failures are not “mysterious”; they are environmental or power-related. Confirm the module temperature range in the datasheet and compare it to the real airflow profile in your racks. If your data center has hot aisle/cold aisle patterns, verify that the switch intake temperatures align with expected module operation.

For high-density deployments, optics can run near their thermal limits. When possible, measure air temperature at the switch inlet and near the optics cage area. If you are deploying in industrial environments, verify the extended temperature grade and derating requirements.

Expected outcome: thermal compliance evidence and a reduced risk of intermittent receiver sensitivity loss.
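
Thermal evidence can be as simple as comparing the hottest DOM temperature reading against the module's rated upper limit. In the sketch below, the grade limits are typical published ranges used as assumptions (confirm the exact case-temperature range in each datasheet), and the 10 °C guard band is an arbitrary planning choice.

```python
# Typical operating ranges in degrees C; confirm per datasheet.
GRADE_LIMITS_C = {
    "commercial": (0.0, 70.0),
    "industrial": (-40.0, 85.0),
}


def thermal_headroom_c(grade, dom_temps_c):
    """Degrees of headroom between the hottest reading and the grade's upper limit."""
    _, upper = GRADE_LIMITS_C[grade]
    return upper - max(dom_temps_c)


def needs_attention(grade, dom_temps_c, guard_band_c=10.0):
    """True when the hottest reading is within the guard band of the upper limit."""
    return thermal_headroom_c(grade, dom_temps_c) < guard_band_c


# Made-up DOM temperature samples taken under sustained traffic.
samples_c = [52.0, 61.5, 58.0]
headroom = thermal_headroom_c("commercial", samples_c)
print(f"headroom {headroom:.1f} C, attention needed: {needs_attention('commercial', samples_c)}")
```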

-

Step 7: Compare common optical options for 2026 readiness

Use the table below to align distance, fiber type, and connector expectations. This is a practical comparison for planning your optical network modernization around 2026.

| Optic / Use Case | Wavelength | Typical Reach | Fiber Type | Connector | Data Rate | Operating Temperature | Notes / Limits |
|---|---|---|---|---|---|---|---|
| SR (short reach, multi-mode) | 850 nm | Up to ~300 m at 10G on OM3 (~400 m on OM4); ~70 m to ~100 m at 25G | OM3 / OM4 | LC | 10G / 25G / 100G (variant) | Vendor specific; standard or industrial grade | Insensitive to long-distance dispersion; sensitive to cleanliness and launch conditions |
| LR (long reach, single-mode) | 1310 nm | Up to ~10 km | OS2 | LC | 10G / 25G | Standard or extended grade | Requires single-mode fiber quality; budget for connector/splice loss |
| ER (extra reach) | 1550 nm (confirm wavelength for 25G variants) | Up to ~40 km class | OS2 | LC | 10G / 25G (variant) | Vendor specific | Higher margin for longer spans; validate dispersion and optical budget |
| SR4 / 100G multi-lane (data center) | 850 nm | ~100 m on OM4; up to ~150 m class for vendor extended-reach variants | OM4 | MPO/MTP | 100G | Vendor specific | Consumes four fibers per direction; ensure lane mapping and patch plan |

Expected outcome: a distance-and-fiber aligned optics plan that prevents “wrong reach” purchases and reduces field returns.
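
The comparison can also be encoded as a lookup that rejects optics whose reach does not cover a span. The sketch below uses nominal reach figures as assumptions (verify each against the module datasheet and your fiber grade) and applies a hypothetical 80% derating so that no link is planned at the edge of its rated reach.

```python
# Nominal reach in metres, keyed by (rate_gbps, optic_class, fiber_type).
# Illustrative planning values only; confirm against datasheets.
NOMINAL_REACH_M = {
    (10, "SR", "OM3"): 300,
    (10, "SR", "OM4"): 400,
    (25, "SR", "OM3"): 70,
    (25, "SR", "OM4"): 100,
    (100, "SR4", "OM4"): 100,
    (10, "LR", "OS2"): 10_000,
    (25, "LR", "OS2"): 10_000,
    (10, "ER", "OS2"): 40_000,
}


def candidate_optics(rate_gbps, fiber_type, span_m, derate=0.8):
    """Optic classes whose derated reach covers the span, shortest reach first."""
    matches = [
        (reach, optic)
        for (rate, optic, fiber), reach in NOMINAL_REACH_M.items()
        if rate == rate_gbps and fiber == fiber_type and reach * derate >= span_m
    ]
    return [optic for _, optic in sorted(matches)]


print(candidate_optics(10, "OS2", 6_000))   # LR first, then ER
print(candidate_optics(25, "OM3", 90))      # empty: span too long for 25G SR on OM3
```

An empty result is the useful case: it flags spans that need a different fiber type or optic class before anything is ordered.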

-

Step 8: Design compatibility and vendor lock-in risk controls

Optical network upgrades often fail not because the physics is wrong, but because the platform rejects the optics. Your compatibility checklist should include: switch vendor supported transceiver list, DOM behavior, and EEPROM layout constraints. Some platforms are strict about vendor IDs; others are more tolerant but still require correct DOM calibration.

To minimize lock-in risk, pilot third-party optics only after you confirm DOM telemetry accuracy and stability. Keep a small “golden set” of known-good optics for each distance class. This gives you a fast rollback path during deployment.

Expected outcome: a controlled compatibility strategy with safe rollback and reduced vendor dependence.

-

Step 9: Implement a rollout process with verification gates

Future-proofing is a process, not a purchase. Plan staged rollouts: lab validation with representative fiber lengths, then a pilot in a low-risk rack or non-critical corridor. During activation, verify link up/down events, optical power levels, and any CRC/packet error counters.

For verification, capture before/after telemetry for at least 24 to 72 hours under normal traffic. Engineers often correlate optics changes with interface error spikes; if you see bursts, pause and inspect connector cleanliness and check transceiver seating.

Expected outcome: measurable confidence before scaling, plus a repeatable deployment playbook.
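
A verification gate can be expressed as a comparison between a baseline window and a post-change window. The sketch below is one possible form; the `WindowStats` fields, the 24-hour minimum, and the 1.0 dB receive-power tolerance are assumptions to tune for your environment.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    hours: float        # length of the observation window (must be > 0)
    crc_errors: int     # counter increase during the window
    link_flaps: int     # up/down events during the window
    mean_rx_dbm: float  # average DOM receive power


def gate_failures(before, after, min_hours=24.0, max_rx_drop_db=1.0):
    """Return a list of reasons the rollout should pause; empty means pass."""
    problems = []
    if after.hours < min_hours:
        problems.append("observation window too short")
    if after.crc_errors / after.hours > before.crc_errors / before.hours:
        problems.append("CRC error rate increased")
    if after.link_flaps / after.hours > before.link_flaps / before.hours:
        problems.append("link flap rate increased")
    if before.mean_rx_dbm - after.mean_rx_dbm > max_rx_drop_db:
        problems.append("receive power dropped beyond tolerance")
    return problems


# Made-up telemetry for one pilot link.
baseline = WindowStats(hours=48, crc_errors=0, link_flaps=0, mean_rx_dbm=-4.0)
pilot = WindowStats(hours=48, crc_errors=12, link_flaps=0, mean_rx_dbm=-5.5)
failures = gate_failures(baseline, pilot)
print(failures or "gate passed")
```

Returning the reasons, not just a boolean, gives technicians a direct pointer to what to inspect first.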

-

Step 10: Train your team with field-ready troubleshooting workflows

To keep the optical network stable through 2026 and beyond, your operations team needs a consistent playbook. Standardize steps for optics replacement, fiber cleaning, and DOM inspection. Document “what good looks like” for transmit/receive power and bias current, and train technicians to confirm fiber polarity and patch mapping.

Expected outcome: faster MTTR and fewer repeat incidents caused by inconsistent procedures.

Prerequisites checklist: what engineers verify before buying optics

Use this ordered decision checklist as you plan your optical network modernization for 2026. It is designed to reflect the exact questions field teams ask under tight deployment timelines.

- Distance and fiber type: confirm OS2 versus OM3/OM4 and measure actual patch-to-patch loss.

- Data rate and lane mapping: ensure the switch supports the exact optics form factor and lane configuration (for example, SR4 versus SR single-lane).

- Switch compatibility: validate against the switch vendor’s supported optics list and confirm DOM support requirements.

- DOM and telemetry behavior: check that transmit power, receive power, and alarms populate correctly in your monitoring system.

- Operating temperature and thermal airflow: compare datasheet temperature grade with measured rack inlet temps.

- Connector and cleaning plan: confirm LC polarity expectations and ensure inspection tooling is available.

- Vendor lock-in risk: pilot third-party optics, maintain golden spares, and avoid surprise EEPROM incompatibilities.

How to verify optical network readiness: link margin, DOM thresholds, and standards

Verification should be grounded in standards and measurable parameters. IEEE 802.3 defines the Ethernet physical layer requirements that govern receiver sensitivity and transmitter behavior; use it as the baseline for how the optics should perform. For module-specific limits, rely on the manufacturers' datasheets and transceiver application notes, and for fiber installation practices, review the ANSI/TIA cabling standards.

Pro Tip: In many production environments, engineers discover that “reach failures” are actually “receive power margin” failures caused by dirty connectors and slight patching changes. A single re-termination or swapped patch lead can shift receive power enough to trigger link instability long before you hit the nominal datasheet reach.

Common mistakes and troubleshooting: the top failure modes in the field

Even well-designed optical network projects stumble in predictable ways. Below are the most common mistakes, their root causes, and the fastest solutions.

Link flaps after optics swap, but BER counters look inconsistent

Root cause: connector contamination or damaged fiber endfaces, often exacerbated by repeated insertions.

Solution: inspect both ends with a fiber scope, clean with lint-free wipes and approved cleaning method, then re-seat optics. If you have DOM, compare receive power trend immediately after cleaning versus the pre-clean baseline.

“Module not supported” or DOM alarms never populate

Root cause: EEPROM compatibility mismatch, unsupported DOM format, or switch platform restrictions on vendor IDs.

Solution: use the switch vendor’s supported optics list for the exact switch model and software version. If third-party optics are required, run a pilot and verify DOM telemetry fields in the management plane before scaling.

Distance is shorter than expected, even with the correct fiber type

Root cause: link budget miscalculation: underestimated connector/splice loss, wrong patch cord grade, or temperature differences not included.

Solution: measure actual optical power with a light source and power meter, then recalculate margin including worst-case. Update your design worksheet and add a conservative margin target for 2026 upgrades.
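
The recalculation from a light source and power meter test can be sketched as follows: insertion loss is the reference reading minus the reading through the link, and the measured margin is the power budget minus that loss and a reserve. All numbers and function names here are illustrative assumptions.

```python
def insertion_loss_db(reference_dbm, measured_dbm):
    """Loss of the span: reference reading minus the reading through the link."""
    return reference_dbm - measured_dbm


def measured_margin_db(power_budget_db, reference_dbm, measured_dbm, reserve_db=1.0):
    """Margin left after measured loss and a reserve for aging and temperature."""
    loss = insertion_loss_db(reference_dbm, measured_dbm)
    return power_budget_db - loss - reserve_db


# Made-up readings: -7.0 dBm at the reference jumper, -11.5 dBm through the link.
loss = insertion_loss_db(-7.0, -11.5)
margin = measured_margin_db(power_budget_db=6.2, reference_dbm=-7.0, measured_dbm=-11.5)
print(f"measured loss {loss:.1f} dB, margin {margin:.1f} dB")
```

If the measured loss is well above the worksheet estimate, inspect connectors and patch cords before concluding that the span is too long.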

Intermittent errors at high temperature only

Root cause: thermal airflow short-circuiting, blocked vents, or optics running near their thermal limits.

Solution: check rack airflow paths, verify switch inlet temperatures, and ensure optics cages are not obstructed. If needed, adjust fan profiles or add cooling capacity and re-test with sustained traffic.

Cost and ROI note: how to budget optics without surprises

Pricing varies by vendor, data rate, and distance, but realistic ranges help you plan. Common third-party transceivers often cost 20% to 40% less than OEM equivalents, but total cost depends on returns, compatibility testing, and spare stocking.

When calculating TCO for your optical network, include: labor for cleaning and inspections, downtime risk during pilots, and the cost of maintaining a golden spare set. OEM optics may cost more (sometimes 30% to 60% higher), yet they can reduce deployment friction and compatibility issues. A pragmatic strategy is to standardize within one or two module families and require DOM/telemetry validation to avoid expensive field rework.
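
A rough TCO comparison can be sketched with the cost components listed above. Every figure below is a hypothetical placeholder (relative unit prices, validation hours, return rates, handling cost per return); the point is the structure of the calculation, not the result.

```python
def fleet_cost(unit_price, quantity, golden_spares, validation_hours,
               hourly_rate, return_rate, handling_cost_per_return):
    """Purchase cost plus validation labor plus expected cost of handling returns."""
    purchase = unit_price * (quantity + golden_spares)
    validation = validation_hours * hourly_rate
    returns = quantity * return_rate * handling_cost_per_return
    return purchase + validation + returns


# Placeholder inputs: third-party priced 30% below OEM, but with extra
# compatibility testing and a higher assumed return rate.
oem_total = fleet_cost(100.0, 200, 10, validation_hours=0, hourly_rate=100.0,
                       return_rate=0.01, handling_cost_per_return=150.0)
third_party_total = fleet_cost(70.0, 200, 10, validation_hours=40, hourly_rate=100.0,
                               return_rate=0.03, handling_cost_per_return=150.0)
print(f"OEM {oem_total:.0f}, third-party {third_party_total:.0f}")
```

With these made-up inputs the unit-price discount still outweighs the added testing and handling, but a small fleet or a long validation cycle can reverse that outcome.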

FAQ

Q1: What does future-proofing an optical network actually include?

It includes selecting optics families aligned to your 2026 traffic, verifying link budgets with measured losses, and planning spare fiber capacity so upgrades do not require re-cabling. It also means using DOM telemetry and setting monitoring thresholds to catch degradation early.

Q2: Should we standardize on one transceiver vendor?

Not necessarily, but you should standardize on a small set of compatible families and validate DOM behavior on your exact switch models. Use pilots and golden spares to reduce vendor lock-in risk without introducing deployment chaos.

Q3: How much link margin should we plan for?

A practical approach is to include connector and splice losses conservatively, plus temperature and aging margin beyond nominal datasheet reach. Many teams target sufficient budget so that receive power stays comfortably within spec even as optics drift over time.

Q4: What is the biggest cause of “works today, fails tomorrow” optical issues?

Connector cleanliness and patching changes are frequent culprits, especially after maintenance. The fastest fix is fiber inspection and cleaning, followed by receive power trend review using DOM.

Q5: Can we use third-party optics for our optical network?

Yes, if your platforms support them and you validate compatibility, DOM telemetry, and stability under load. Always pilot in a controlled segment before broad rollout and keep OEM spares for rollback.

Q6: Where do IEEE standards fit into day-to-day optics work?

They provide the physical layer baseline for how Ethernet transceivers should meet link requirements. Practically, you still rely on vendor datasheets and measured link budgets, but IEEE context helps you interpret receiver sensitivity and operational constraints.

If you implement the steps above, your optical network will be positioned for 2026 upgrades with fewer surprises and faster troubleshooting cycles. Next, align your physical plant and monitoring strategy with operational goals by reviewing fiber optic link budget planning.

Author bio: I design and validate optical network deployments from the rack to the monitoring dashboard, focusing on measurable link budgets and operational reliability. I have supported field rollouts where DOM telemetry and connector hygiene reduced MTTR and prevented repeat outages.