Open RAN rollouts often stall not because radio planning fails, but because the optical transport bill keeps changing. This article helps network architects and procurement teams run a practical cost analysis for implementing Open RAN using pluggable optical modules across fronthaul and midhaul. You will learn how to model transceiver unit cost, installation labor, power, spares, and compatibility risk so the budget survives field realities.

What drives Open RAN optical module cost in real networks

In Open RAN, optical modules are not a single line item; they are a system constraint that touches switch BOMs, fiber plant, power budgets, and maintenance workflows. A typical deployment uses ToR or aggregation switches with SFP/SFP+ or QSFP/QSFP-DD optics, plus transport links between the O-RU, O-DU, and O-CU, where fronthaul in particular carries strict latency and jitter requirements. Even if your radio equipment is fixed, your optical choice can change the number of ports, the reach class, and the optics vendor mix. For cost analysis, the goal is to convert those choices into measurable impacts: initial capex, recurring opex, and downtime exposure.

Capex components you should not ignore

For optics, capex usually includes transceivers, switch line cards or port capacity upgrades, and cabling work. In a mid-size greenfield site, it is common to see optics procurement split across multiple SKUs: short-reach multimode for indoor runs, and long-reach single-mode for campus or rooftop cross-connects. Labor can dominate when you are re-terminating fiber or re-labeling patch panels to meet operational standards. In practice, field teams often find that the “cheapest” optics fail the compatibility test with a specific switch platform, forcing a re-buy and schedule slip.

Opex components: power, spares, and maintenance

Optics draw power even when traffic is idle, so the number of populated ports matters more than you might expect. A cost analysis should include average watts per transceiver, the expected utilization profile, and the energy price at your site. It should also include a spares strategy: if you keep 10% spares for each optics type, that inventory is capital sitting on a shelf, and its carrying cost behaves like a recurring expense. Finally, maintenance opex includes truck rolls and swap time; optics with better diagnostics (DOM) shorten troubleshooting, which is a real cost saving.

Pro Tip: In field audits, the biggest “hidden” cost is not the optics price; it is the mismatch between optics capability and the switch’s optics qualification matrix. If you validate DOM and optical power class during the acceptance window, you can avoid a full rework cycle that otherwise burns weeks.

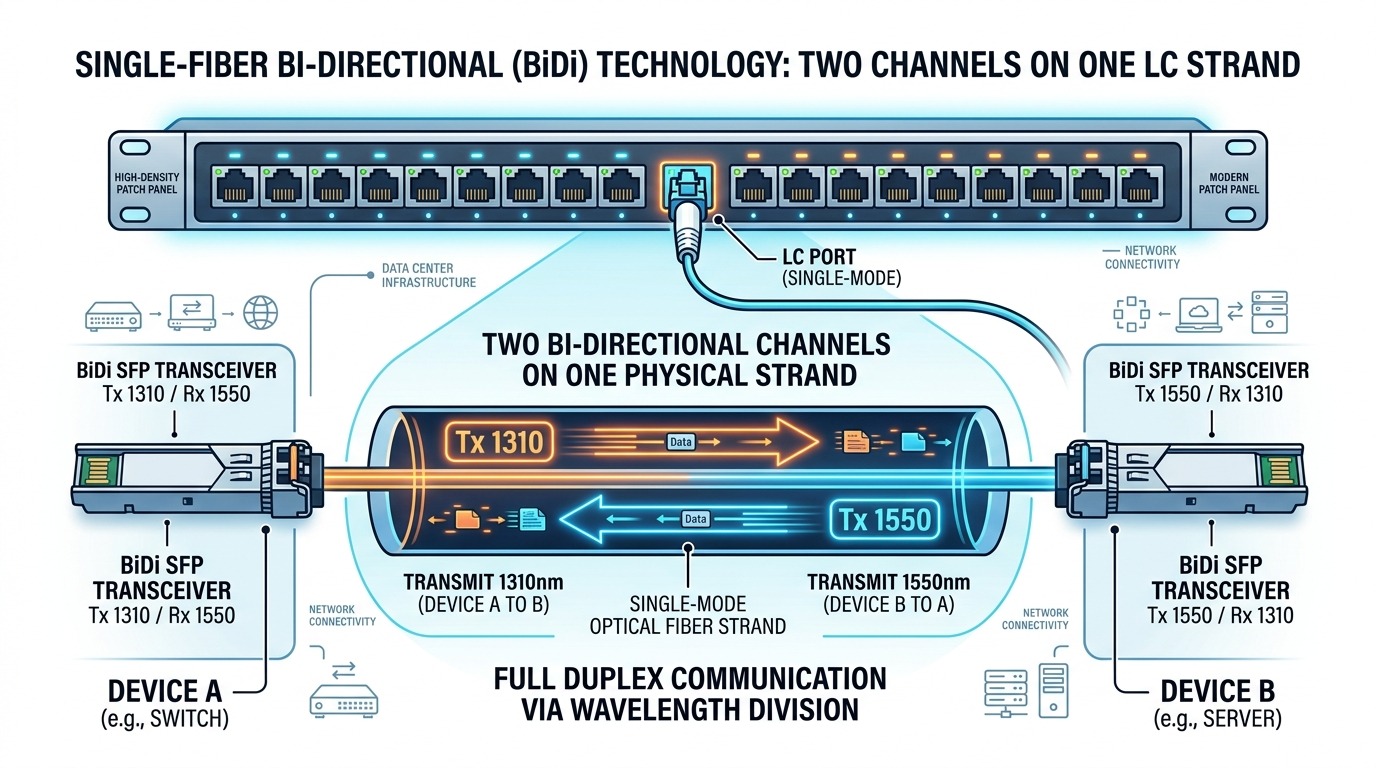

Optical reach, wavelengths, and module types: the cost equation

Open RAN transport is commonly mapped to Ethernet in fronthaul and midhaul, which means optics must match the physical layer requirements of IEEE 802.3 variants and vendor implementation details. For cost analysis, you should group links by reach and fiber type rather than by “site count.” A site with 4 O-RU units might need 8 to 16 high-speed lanes depending on functional split, redundancy, and aggregation design. Once you know lane counts, the module type choice (SFP/SFP+, QSFP, or higher-density variants) determines how many ports and how much switch capacity you must provision.

Key specifications to model

When evaluating transceivers, model wavelength, nominal reach, connector type, supported data rate, and temperature range. Also capture whether the module is designed for multimode (MMF) or single-mode (SMF), and whether it supports Digital Optical Monitoring (DOM) for operational visibility. Many procurement delays happen when teams select an optics family that is “compatible in theory” but not supported by the switch firmware for DOM thresholds.

| Optics family | Example parts | Wavelength | Reach class | Fiber / connector | Typical data rate | Power (typ.) | Temp range |
|---|---|---|---|---|---|---|---|
| SFP+ SR | Cisco SFP-10G-SR, Finisar FTLX8571D3BCL | 850 nm | ~300 m (OM3), ~400 m (OM4) | OM3/OM4 MMF, LC | 10G | ~0.7 to 1.0 W | 0 to 70 C (typ.) |
| SFP+ LR | Common 10G LR SMF modules | 1310 nm | ~10 km (SMF) | Single-mode, LC | 10G | ~0.8 to 1.2 W | 0 to 70 C (typ.) |
| QSFP+ SR4 | Common 40GBASE-SR4 modules | 850 nm | ~100 m (OM3), ~150 m (OM4) | OM3/OM4 MMF, MPO | 40G (4x10G) | up to ~1.5 W | 0 to 70 C (typ.) |
| QSFP28 SR4 | Common 100GBASE-SR4 modules | 850 nm | ~70 m (OM3), ~100 m (OM4) | OM3/OM4 MMF, MPO | 100G (4x25G) | up to ~3.5 W | 0 to 70 C (typ.) |

Notes for the cost model: power numbers vary by vendor and revision, so you should pull exact values from datasheets for your chosen SKUs. Also, “reach” is not a guarantee; it depends on link budget, fiber quality, and connector losses. For standards grounding, Ethernet PHY behavior and optical channel requirements are aligned with the IEEE 802.3 family, while transceiver electrical and optical characteristics are defined in vendor datasheets and multi-source agreement documents. [Source: IEEE 802.3] [[EXT:https://standards.ieee.org/standard/802_3]]
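
Because reach depends on link budget, a quick margin check per link is often worth scripting. The sketch below is a minimal illustration, not a vendor method: the function name, the default splice loss, and every numeric value in the example are assumptions, and real transmit power and receiver sensitivity must come from the datasheet of the chosen SKU. On multimode fiber, modal bandwidth can limit reach before attenuation does, so treat this as a loss check only.

```python
def link_margin_db(tx_power_dbm, rx_sensitivity_dbm, fiber_km,
                   fiber_loss_db_per_km, connectors, loss_per_connector_db,
                   splices=0, loss_per_splice_db=0.1):
    """Return the margin in dB left after subtracting estimated path loss."""
    power_budget = tx_power_dbm - rx_sensitivity_dbm
    path_loss = (fiber_km * fiber_loss_db_per_km
                 + connectors * loss_per_connector_db
                 + splices * loss_per_splice_db)
    return power_budget - path_loss


# Illustrative numbers only (assumptions, not datasheet values).
margin = link_margin_db(
    tx_power_dbm=-5.0,
    rx_sensitivity_dbm=-12.0,
    fiber_km=2.0,
    fiber_loss_db_per_km=0.4,
    connectors=4,
    loss_per_connector_db=0.5,
)
print(f"margin: {margin:.1f} dB")
```

A link whose margin is small or negative is a candidate for connector cleaning, re-termination, or a longer-reach optic before it reaches the budget spreadsheet.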

Deployment scenario: modeling a campus Open RAN transport rollout

Consider a campus with 12 cell sites, each with 3 O-RU units, aggregated to two redundant midhaul paths. Assume the functional split requires 25G of Ethernet transport per O-RU uplink, mapped to 100G aggregation per site, with oversubscription managed at the aggregation layer. If you design for redundancy, you may need two independent optics per uplink direction, which drives optics counts up quickly.

A concrete budgeting example

Let’s assume each site needs 6 uplinks (3 O-RUs times two redundant paths). If the aggregation uses 100G QSFP28 optics carrying 4x25G lanes, you might allocate 2 ports per site for the uplinks plus additional ports for O-CU connectivity. For a simplified cost analysis, suppose you deploy 24 installed transceivers per site across fronthaul and midhaul, a figure that includes the additional cross-connect optics at aggregation. Across 12 sites, that is 288 transceivers. Adding a 10% spare pool (29 units, rounded up) brings the total to about 317 units.
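
The arithmetic in this example is easy to keep in a small script so that changing one assumption updates the total. This sketch uses only the figures from the scenario above; the variable names are illustrative.

```python
import math

SITES = 12
TRANSCEIVERS_PER_SITE = 24   # installed optics per site, from the scenario
SPARE_PERCENT = 10           # spare pool as a percentage of installed optics

installed = SITES * TRANSCEIVERS_PER_SITE
# Round spares up: you cannot stock a fraction of a module.
spares = math.ceil(installed * SPARE_PERCENT / 100)
total = installed + spares

print(installed, spares, total)  # 288 29 317
```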

How reach choices change total cost

If most runs are under 100 m indoor and use OM4, SR optics may be cost-effective and reduce cabling complexity. If rooftop-to-basement spans exceed that, you may switch to LR optics on SMF, which can be higher unit cost but avoids fiber redesign. In a cost analysis, you should compare “optics price delta” against “cabling rework cost delta.” For example, replacing a planned SMF run with MMF may require new patch panels, different fiber plant certification, and re-termination labor, which can erase the optics savings.
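
The comparison of optics price delta against cabling rework delta can be sketched as a two-option total. All prices, link counts, and the rework figure below are hypothetical placeholders, and the helper name is an assumption made for illustration.

```python
def option_cost(links, optics_per_link, unit_price, cabling_rework_cost):
    """Total cost of one design option: optics for all links plus cabling rework."""
    return links * optics_per_link * unit_price + cabling_rework_cost


# Hypothetical inputs: 40 links, one optic at each end.
mmf_option = option_cost(links=40, optics_per_link=2, unit_price=60.0,
                         cabling_rework_cost=9000.0)   # cheaper SR optics, new MMF plant
smf_option = option_cost(links=40, optics_per_link=2, unit_price=150.0,
                         cabling_rework_cost=0.0)      # pricier LR optics, existing SMF

optics_delta = 40 * 2 * (150.0 - 60.0)   # what SR optics save on price
cabling_delta = 9000.0 - 0.0             # what the MMF redesign adds

print(mmf_option, smf_option)
print("keep SMF" if cabling_delta > optics_delta else "switch to MMF")
```

With these placeholder numbers the rework cost exceeds the optics savings, so the existing SMF plant wins despite the higher unit price.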

Operationally, you also need to budget for acceptance testing: optical power verification with an optical power meter, link loss checks, and DOM interrogation. These steps reduce future truck rolls. In many carrier-style environments, the acceptance window is short; delays from incompatible optics can cost more than the optics themselves.

Selection criteria checklist for optics under Open RAN constraints

Below is a decision checklist engineers use to keep cost analysis honest. Order matters because early choices cascade into later compatibility and operational costs.

- Distance and fiber type: classify each link by MMF or SMF, then map to SR or LR reach classes.

- Data rate and interface mapping: confirm the switch port speed (10G/25G/40G/100G) and how it aggregates lanes.

- Switch compatibility matrix: verify the transceiver SKU is supported by the exact switch model and firmware revision; do not rely on generic “works with” claims.

- DOM and diagnostics support: ensure DOM is enabled and that thresholds for optical power and temperature are supported by your monitoring stack.

- Operating temperature and airflow: validate temperature range and expected module heating in the actual rack airflow pattern.

- Vendor lock-in risk: evaluate OEM optics versus third-party. OEM may reduce compatibility risk; third-party may reduce unit price but increase validation time.

- Spare strategy: decide sparing level (commonly 5% to 15%) based on MTBF expectations and lead-time realities.

- Lead time and logistics: include expedited shipping costs during the rollout window for any optics that fail acceptance.
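
The first checklist step, classifying each link by fiber type and mapping it to a reach class, is simple to automate once link lengths are known. The sketch below is one possible approach: the `Link` type, the link names, and the planning limits are assumptions for illustration, and the limits should be replaced with values that match your optics and fiber grade.

```python
from collections import Counter
from dataclasses import dataclass

# Assumed planning limits in metres; not taken from any specific datasheet.
REACH_LIMIT_M = {"MMF": ("SR", 100), "SMF": ("LR", 10_000)}


@dataclass(frozen=True)
class Link:
    name: str
    fiber: str       # "MMF" or "SMF"
    length_m: float


def reach_class(link: Link) -> str:
    """Map a link to SR or LR, or flag it when it exceeds the planning limit."""
    if link.fiber not in REACH_LIMIT_M:
        raise ValueError(f"unknown fiber type: {link.fiber}")
    optic, limit_m = REACH_LIMIT_M[link.fiber]
    return optic if link.length_m <= limit_m else "REVIEW"


links = [
    Link("ru1-to-agg", "MMF", 60),
    Link("ru2-to-agg", "MMF", 140),
    Link("roof-to-basement", "SMF", 850),
]
counts = Counter(reach_class(link) for link in links)
print(dict(counts))
```

Links flagged `REVIEW` are the ones where the optics-versus-cabling tradeoff needs a closer look before SKUs are ordered.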

DOM and monitoring: why it impacts cost

DOM support affects how quickly operators detect degrading optics before a link fails. If your NMS can read DOM and correlate optical power with link errors, you can schedule proactive swaps instead of reacting to outages. That is a direct opex reduction, especially in multi-tenant or managed environments where each incident has a measurable cost.

Common pitfalls and troubleshooting tips during optics rollout

Most Open RAN optics issues are preventable, but they show up at predictable points: during acceptance, during first traffic load, or after environmental changes like dust and airflow modifications.

Pitfall 1: “Compatible” optics that fail switch acceptance

Root cause: The transceiver is not in the switch’s supported optics list for the given firmware, so the port may disable or run in an error state. Solution: validate using the exact switch firmware revision, and run a controlled bring-up test that checks link negotiation and DOM reads before scaling deployment.

Pitfall 2: Reach assumptions that ignore fiber certification results

Root cause: “300 m SR” is not a guarantee; actual performance depends on fiber bandwidth, connector endface quality, and patch panel losses. Solution: review the fiber certification results for each link (OTDR traces or insertion-loss test records), and clean and inspect connectors before swapping optics. Re-terminate when the measured link loss exceeds the budgeted threshold.

Pitfall 3: DOM monitoring mismatch causing false alarms or blind spots

Root cause: Your monitoring system expects DOM fields in a certain format or thresholds; third-party optics may report values differently. Solution: map DOM fields during pilot testing and adjust monitoring thresholds based on measured baseline values from a representative set of modules.
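
One way to derive thresholds from measured baselines is to take receive-power readings from the pilot modules and set warning bounds a few standard deviations around the mean. This is an assumed statistical approach, not something prescribed by a standard or vendor; the sample readings and the `k` multiplier are illustrative, and the datasheet alarm limits should remain the hard outer bounds.

```python
import statistics


def baseline_thresholds(rx_power_dbm_samples, k=3.0):
    """Return (low, high) warning bounds as mean -/+ k sample standard deviations."""
    mean = statistics.mean(rx_power_dbm_samples)
    stdev = statistics.stdev(rx_power_dbm_samples)
    return mean - k * stdev, mean + k * stdev


# Hypothetical pilot readings of received optical power, in dBm.
pilot_rx_dbm = [-2.1, -2.4, -1.9, -2.2, -2.0, -2.3]
low, high = baseline_thresholds(pilot_rx_dbm)


def is_out_of_band(reading_dbm):
    """True when a reading falls outside the pilot-derived warning band."""
    return not (low <= reading_dbm <= high)


print(f"warn below {low:.2f} dBm or above {high:.2f} dBm")
print(is_out_of_band(-6.0))
```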

Pitfall 4: Temperature and airflow hotspots in dense racks

Root cause: High port density can raise module temperature beyond spec, especially if fans degrade or racks are partially blocked. Solution: verify airflow paths, measure inlet temperatures, and ensure modules operate within specified temperature ranges from the datasheet.

Cost analysis: pricing, power, spares, and TCO tradeoffs

A realistic cost analysis should separate three cost buckets: unit optics cost, system integration cost, and lifecycle cost. OEM modules typically cost more per unit but reduce acceptance failures and may shorten the validation window. Third-party optics can be cheaper, but you must budget engineering time for compatibility testing and monitoring calibration.

Typical price ranges to plan around

While market prices fluctuate, many teams see broad ranges like: SFP+ SR 10G in the tens of dollars, QSFP+ or QSFP28 40G/100G in the higher hundreds to low thousands per module depending on reach and speed, and LR SMF generally higher than SR for the same data rate. For OEM optics, add a price premium; for third-party optics, add the cost of validation and support. In a cost analysis spreadsheet, it is common to model a “risk-adjusted unit cost” that includes expected validation failures during pilot.
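
A “risk-adjusted unit cost” has no single standard definition, so the sketch below shows one possible formulation: sticker price, plus the expected cost of acceptance failures, plus a per-unit share of validation effort. The formula, the function name, and every input value are assumptions chosen for illustration.

```python
def risk_adjusted_unit_cost(unit_price, fail_rate, rebuy_price,
                            rework_cost_per_failure,
                            validation_cost_total, units):
    """Sticker price plus expected failure cost plus amortized validation cost."""
    expected_failure_cost = fail_rate * (rebuy_price + rework_cost_per_failure)
    validation_share = validation_cost_total / units
    return unit_price + expected_failure_cost + validation_share


# Hypothetical inputs for a 317-unit purchase.
oem = risk_adjusted_unit_cost(unit_price=300.0, fail_rate=0.01,
                              rebuy_price=300.0, rework_cost_per_failure=200.0,
                              validation_cost_total=2000.0, units=317)
third_party = risk_adjusted_unit_cost(unit_price=120.0, fail_rate=0.08,
                                      rebuy_price=300.0, rework_cost_per_failure=200.0,
                                      validation_cost_total=15000.0, units=317)

print(f"OEM: {oem:.2f}  third-party: {third_party:.2f}")
```

In this made-up case the third-party option still comes out cheaper, but the gap narrows considerably compared with the sticker prices, which is the point of the adjustment.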

Power and spares: where TCO changes the decision

At scale, power becomes significant. If you deploy hundreds of modules and each consumes roughly 1 W to 6 W depending on speed and density, annual energy can move from “small” to “meaningful,” especially in high electricity price regions. Spares increase upfront capex, but they reduce downtime cost. If your operational model values uptime and fast restoration, the ROI of spares can be positive even if the spare purchase looks expensive.
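
To see when module power becomes meaningful, a rough annual energy estimate helps. The sketch below assumes modules are powered continuously and applies an optional facility overhead multiplier; the wattage, the electricity price, and the overhead factor are hypothetical inputs, not measured values.

```python
HOURS_PER_YEAR = 8760


def annual_energy_cost(modules, watts_per_module, price_per_kwh, overhead=1.0):
    """Annual energy cost for always-on modules, with an optional cooling/overhead factor."""
    kwh = modules * watts_per_module * HOURS_PER_YEAR / 1000 * overhead
    return kwh * price_per_kwh


# Hypothetical: 288 installed modules at 3.5 W each, 0.20 per kWh.
base = annual_energy_cost(modules=288, watts_per_module=3.5, price_per_kwh=0.20)
with_cooling = annual_energy_cost(modules=288, watts_per_module=3.5,
                                  price_per_kwh=0.20, overhead=1.5)

print(f"{base:.2f} per year, {with_cooling:.2f} with overhead")
```

Spares on the shelf are excluded here because they draw no power until installed.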

Failure rates also matter. You should use vendor reliability statements from datasheets, and if available, internal warranty and RMA data from prior deployments. The cost analysis should include the cost of swap labor and the expected time to repair, not just the module replacement price.
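
The point about swap labor and time to repair can be folded into an expected annual failure cost. This is a simple expected-value sketch; the failure rate, labor cost, repair time, and downtime cost below are hypothetical and should be replaced with datasheet reliability figures and your own RMA history.

```python
def expected_annual_failure_cost(modules, annual_failure_rate, module_price,
                                 swap_labor_cost, mttr_hours,
                                 downtime_cost_per_hour):
    """Expected yearly cost of failures: replacement, labor, and downtime."""
    expected_failures = modules * annual_failure_rate
    cost_per_failure = (module_price + swap_labor_cost
                        + mttr_hours * downtime_cost_per_hour)
    return expected_failures * cost_per_failure


# Hypothetical inputs for 288 installed modules.
yearly = expected_annual_failure_cost(
    modules=288,
    annual_failure_rate=0.02,
    module_price=300.0,
    swap_labor_cost=250.0,
    mttr_hours=4.0,
    downtime_cost_per_hour=500.0,
)
print(f"{yearly:.2f}")
```

In this example the downtime term dominates the module price, which is why faster restoration often matters more than a cheaper replacement part.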

For standards and compliance context, optical interface behavior and Ethernet PHY requirements are described across the IEEE 802.3 family, while transceiver electrical and optical specs are vendor-provided and sometimes aligned with multi-source agreements. [Source: IEEE 802.3] [[EXT:https://standards.ieee.org/standard/802_3]]

FAQ

How do I start a cost analysis for Open RAN optics without overfitting assumptions?

Start by counting physical ports and redundancy paths, then group links by reach class and fiber type. Build a base case with conservative power and include a small “risk buffer” for acceptance failures. Validate with a pilot site so your unit economics match real switch behavior.

Is third-party optics always cheaper for Open RAN deployments?

Often the unit price is lower, but the total cost can rise if acceptance testing and monitoring calibration take longer than planned. If you have strict maintenance SLAs, OEM modules can reduce downtime risk enough to justify the premium. Use a risk-adjusted unit cost rather than comparing sticker prices alone.

What should I verify during acceptance testing for optical modules?

Verify link up, error counters stability under load, DOM readout, and optical power levels within the allowed range. Also confirm that alarms in your NMS behave correctly and that thresholds match the module’s reporting behavior. Finally, confirm fiber link loss with certification records.

Do I need to buy spares, and how much should I stock?

Many teams stock 5% to 15% depending on lead time, criticality, and historical failure rates. If modules are long lead-time items, spares become more valuable. The best approach is to combine lead-time risk with your uptime penalty model.
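
One way to connect lead time to a spare count is to model failures during the resupply window as a Poisson process and stock enough units to cover them with a chosen confidence. The Poisson model, the function name, and the inputs are assumptions for illustration; the result is a sanity check against the percentage rule, not a replacement for it.

```python
import math


def spares_for_lead_time(modules, annual_failure_rate, lead_time_weeks,
                         confidence=0.90):
    """Smallest spare count s with P(failures during lead time <= s) >= confidence."""
    lam = modules * annual_failure_rate * lead_time_weeks / 52
    term = math.exp(-lam)      # P(X = 0)
    cumulative = term
    spares = 0
    while cumulative < confidence:
        spares += 1
        term *= lam / spares   # P(X = spares), built from the previous term
        cumulative += term
    return spares


# Hypothetical: 288 modules, 2% annual failure rate, 12-week lead time.
needed = spares_for_lead_time(modules=288, annual_failure_rate=0.02,
                              lead_time_weeks=12)
print(needed)
```

If the count this produces is far below your percentage-based pool, the extra stock is buying protection against something else, such as batch defects or acceptance failures, and that should be stated in the budget.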

How does DOM support affect operational cost?

DOM enables earlier detection of degrading optics, which reduces reactive troubleshooting and link downtime. In cost analysis terms, that often translates into fewer truck rolls and faster MTTR. It also improves root-cause clarity during incident reviews.

Which standards should I reference when validating optics for Ethernet transport?

Use IEEE 802.3 as the baseline for Ethernet PHY expectations and vendor datasheets for transceiver electrical and optical characteristics. For practical acceptance, align with your switch vendor’s optics qualification guidance and firmware release notes. [Source: IEEE 802.3] [[EXT:https://standards.ieee.org/standard/802_3]]

If you want the next step, run the same cost-analysis method for your fiber plant and patching strategy. That combination is where many Open RAN budgets either stabilize or drift.

Author bio: I design high-availability transport and access architectures for carrier and enterprise networks, and I build deployment models that field teams can execute under real constraints. I have supported optics acceptance, monitoring integration, and failure triage across multi-vendor switch and transceiver ecosystems.

References & Further Reading: IEEE 802.3 Ethernet Standard | Fiber Optic Association – Fiber Basics | SNIA Technical Standards