When traffic grows faster than your budget, the decision between 400G and 800G stops being theoretical. This article helps network and infrastructure teams choose based on business needs like upgrade timing, optics inventory, and rack power limits. You will get a practical, field-engineer checklist, common failure modes, and a final ranked comparison to reduce risk before purchasing.

Top 1: Map your business needs to real bandwidth and failure tolerance

Start with what the business actually demands: sustained throughput, burst tolerance, and how quickly you can absorb a link failure without triggering congestion. In practice, teams measure oversubscription at the leaf-spine layer and correlate it with application latency SLOs. If your traffic profile is spiky (backup windows, batch analytics), you may prefer 400G aggregation that tolerates incremental upgrades while keeping risk contained.

Best-fit scenario: A 3-tier data center network where ToR switches uplink at 3:1 oversubscription, and you need a stable improvement within two quarters. Use 400G to expand uplink capacity while monitoring congestion, then plan 800G when traffic maturity is proven.

- Pros: Lower optics complexity; easier staged rollouts.

- Cons: May require more ports or later rework if you outgrow the footprint.
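The oversubscription and failure-tolerance reasoning above can be sketched as simple arithmetic. This is a minimal illustration, not a sizing tool: the port counts and speeds are hypothetical, and the function names are invented for this example.

```python
def oversubscription_ratio(downlink_gbps: float, uplink_gbps: float) -> float:
    """Ratio of total server-facing capacity to total uplink capacity."""
    if uplink_gbps <= 0:
        raise ValueError("uplink capacity must be positive")
    return downlink_gbps / uplink_gbps


def ratio_after_failure(downlink_gbps: float, uplink_count: int,
                        uplink_speed_gbps: float, failed: int = 1) -> float:
    """Oversubscription once `failed` uplinks are out of service."""
    remaining = (uplink_count - failed) * uplink_speed_gbps
    return oversubscription_ratio(downlink_gbps, remaining)


# Hypothetical ToR switch: 48 x 100G server ports (assumed values).
downlink = 48 * 100

print(oversubscription_ratio(downlink, 4 * 400))  # 3.0 with 4 x 400G uplinks
print(ratio_after_failure(downlink, 4, 400))      # 4.0 after losing one 400G uplink
print(ratio_after_failure(downlink, 2, 800))      # 6.0 after losing one of 2 x 800G
```

In this hypothetical case both designs start at 3:1, but a single failure costs a larger share of capacity when the same bandwidth rides on fewer, faster links. That is worth weighing against your latency SLOs.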

Top 2: Understand the transport reality: ports, optics, and interoperability

400G and 800G are not just “faster pipes”; they alter port planning, optics density, and compatibility boundaries with your switch silicon. IEEE 802.3 defines Ethernet PHY behavior, but vendors implement specific electrical/optical interfaces and coding choices that affect transceiver selection. Always validate with the exact switch model optics matrix and ensure your optics meet the expected form factor and lane mapping.

Key spec themes: 400G modules commonly use 8x50G PAM4 electrical lanes (newer designs use 4x100G), while 800G modules typically use 8x100G lanes, with exact lane groupings depending on the vendor and module type. The higher per-lane rate changes thermal load, optical budget headroom, and how you handle diagnostics such as DOM support.

Best-fit scenario: A mixed fleet where you have already standardized on specific optics families, from legacy parts such as Cisco SFP-10G-SR up to QSFP-DD modules. If your switch vendor offers a stable, tested 800G optics ecosystem and you can tolerate an inventory refresh, 800G becomes viable; otherwise, 400G reduces interoperability risk.

- Pros: 800G can cut port count and improve cabling density.

- Cons: Higher likelihood of optics interoperability surprises during rollouts.
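To make the port-count trade-off concrete, here is a rough sketch that compares how many ports a target uplink capacity needs at each speed. The 6.4 Tbps target is an assumed figure and `ports_needed` is a hypothetical helper, not part of any vendor tool.

```python
import math


def ports_needed(target_gbps: float, port_speed_gbps: int) -> int:
    """Smallest number of ports whose combined rate covers the target."""
    if port_speed_gbps <= 0:
        raise ValueError("port speed must be positive")
    return math.ceil(target_gbps / port_speed_gbps)


# Assumed target: 6.4 Tbps of spine-facing capacity per leaf pair.
target = 6400

for speed in (400, 800):
    print(f"{speed}G: {ports_needed(target, speed)} ports")
# 400G: 16 ports
# 800G: 8 ports
```

Halving the port count also halves the number of modules whose compatibility you must validate, but each remaining module then carries twice the traffic.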

Top 3: Compare technical specifications that affect uptime and power

In the field, the “real” comparison is not only reach; it is power per port, thermal tolerance, connector stress, and optical budget margin under aging. Vendor datasheets for transceivers and switch power models should guide your rack-level calculations. For reference, consult IEEE 802.3 Ethernet PHY standards and vendor optics documentation.

| Parameter | 400G (typical) | 800G (typical) |
|---|---|---|
| Data rate | 400 Gbps | 800 Gbps |
| Common optics form factor | QSFP-DD or OSFP class (vendor-specific) | OSFP or QSFP-DD high-rate variants (vendor-specific) |
| Reach (example classes) | SR4/FR4-style short to medium reach depending on lane count | SR8-style short reach; longer reach depends on optics generation |
| Typical wavelength bands | 850 nm (SR classes) or vendor-specific bands | 850 nm (SR classes) or vendor-specific bands |
| Optical diagnostics | DOM over I2C/SFF with vendor-defined fields | DOM with higher lane granularity and vendor-defined thresholds |
| Operating temperature | Often commercial or industrial ranges per module grade | Same concept, but validate airflow and switch-imposed limits |

Best-fit scenario: If your data hall has constrained airflow and you already see elevated switch inlet temperatures, 400G may be safer because heat is spread across more, typically lower-power modules instead of being concentrated in a few hot ones. If your design targets high-density spine uplinks with strict port-count limits, 800G can reduce the number of active ports and cabling volume.

- Pros: Better margin planning with quantified power and cooling constraints.

- Cons: Reach and power are module-specific; do not generalize across vendors.
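Optical budget margin is one of the few items here that reduces to a formula. The sketch below assumes a simple loss model (fiber attenuation plus per-connector loss plus an aging allowance). Every number in the example is a placeholder; take real transmit power, receiver sensitivity, and loss figures from your module datasheet and cable plant measurements.

```python
def link_margin_db(tx_power_dbm: float, rx_sensitivity_dbm: float,
                   fiber_km: float, fiber_loss_db_per_km: float,
                   connectors: int, loss_per_connector_db: float,
                   aging_allowance_db: float = 0.0) -> float:
    """Remaining margin in dB after subtracting estimated losses from the power budget."""
    budget = tx_power_dbm - rx_sensitivity_dbm
    loss = (fiber_km * fiber_loss_db_per_km
            + connectors * loss_per_connector_db
            + aging_allowance_db)
    return budget - loss


# Placeholder values only (not taken from any datasheet).
margin = link_margin_db(
    tx_power_dbm=-1.0, rx_sensitivity_dbm=-6.0,
    fiber_km=0.1, fiber_loss_db_per_km=3.0,
    connectors=4, loss_per_connector_db=0.5,
    aging_allowance_db=1.0,
)
print(round(margin, 2))  # 1.7
```

With these assumed inputs, adding two more patch-panel connectors would reduce the margin to roughly 0.7 dB, which is why re-checking the budget after cabling changes matters.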

Top 4: Evaluate cost and ROI with total lifecycle thinking

Transceiver pricing varies heavily by OEM vs third-party and by whether you buy from the switch vendor’s “approved optics” list. In many deployments, the apparent per-port cost is offset by reduced switch licensing complexity, lower failure rates from matched optics ecosystems, and fewer active ports needed for the same throughput. Still, 800G can raise upfront optics cost and may accelerate refresh cycles if your vendor roadmap changes.

Practical budgeting note: Teams often see third-party optics priced materially lower than OEM, but the savings can evaporate if you lose time to RMA cycles and compatibility testing, or if you hit vendor support restrictions. Factor in power as well: even a few watts per port changes your monthly utility and cooling costs at scale.

- Pros: 800G can reduce port count and potentially lower cabling labor.

- Cons: Higher transceiver cost and potential procurement lead-time risk.
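A lifecycle comparison can be roughed out as optics purchase cost plus energy cost over the planning horizon. The following is a minimal sketch under stated assumptions: prices, wattages, the electricity rate, and the PUE multiplier are illustrative placeholders rather than market data, and the model ignores switch hardware, labor, and spares.

```python
HOURS_PER_YEAR = 24 * 365


def lifecycle_cost_usd(ports: int, optic_price_usd: float,
                       watts_per_port: float, years: float,
                       usd_per_kwh: float, pue: float = 1.5) -> float:
    """Optics capex plus energy opex, with PUE as a crude cooling multiplier."""
    capex = ports * optic_price_usd
    energy_kwh = ports * watts_per_port * HOURS_PER_YEAR * years / 1000
    opex = energy_kwh * usd_per_kwh * pue
    return capex + opex


# Placeholder inputs for the same aggregate bandwidth (assumed, not quoted prices).
cost_400g = lifecycle_cost_usd(ports=16, optic_price_usd=800,
                               watts_per_port=10, years=3, usd_per_kwh=0.10)
cost_800g = lifecycle_cost_usd(ports=8, optic_price_usd=2000,
                               watts_per_port=16, years=3, usd_per_kwh=0.10)

print(f"400G: ${cost_400g:,.2f}")
print(f"800G: ${cost_800g:,.2f}")
```

Swap in your quoted prices and measured wattages; the ranking can flip depending on how the 800G price premium compares with the port-count and energy savings.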

Top 5: Use a decision checklist that field teams can execute quickly

Before ordering, run this ordered checklist to align with business needs and reduce integration risk.

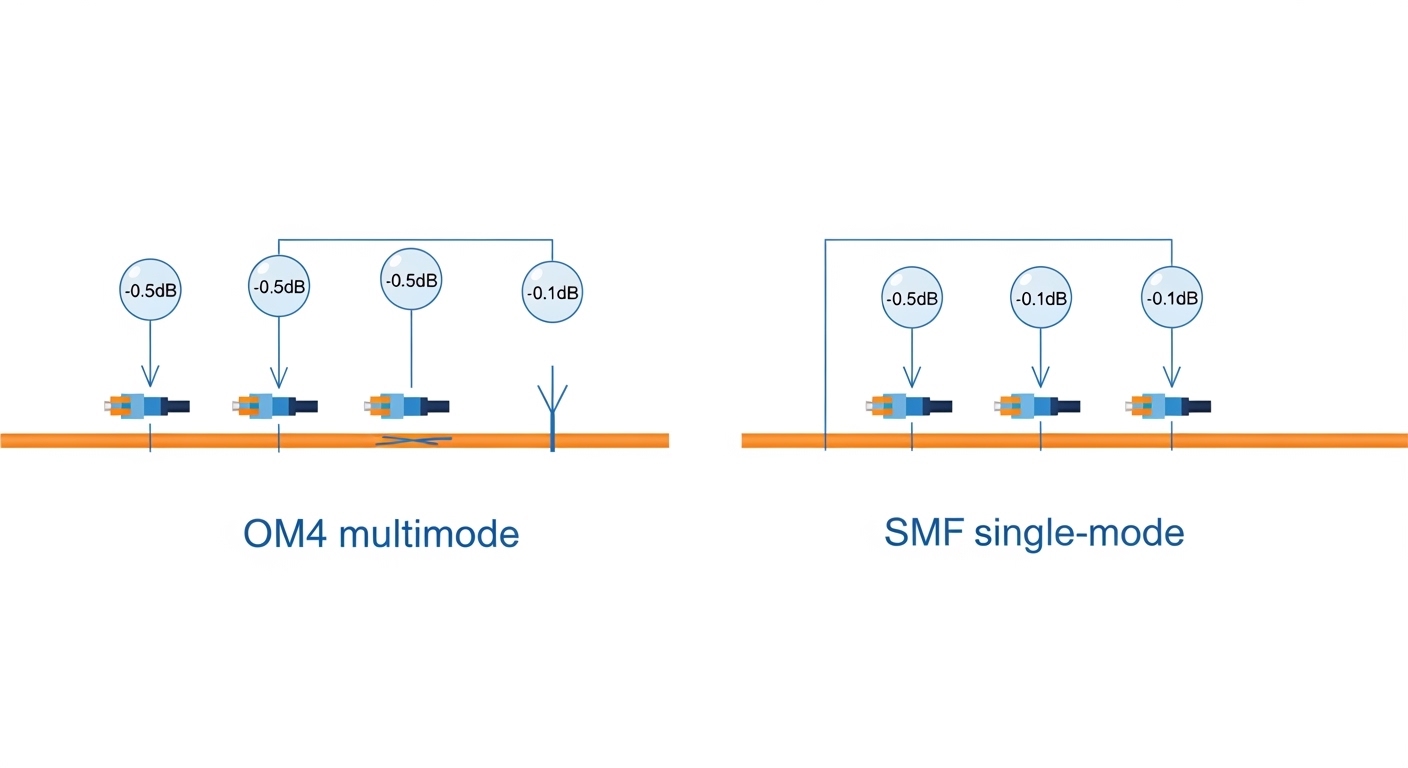

- Distance and reach class: Confirm fiber type (OM3/OM4), connector cleanliness plan, and target optical budget margin.

- Switch compatibility: Verify the exact switch model optics matrix for 400G and 800G.

- DOM and monitoring: Confirm DOM fields you rely on (lane errors, temperature, bias current) and your NMS ingestion.

- Operating temperature and airflow: Match module grade to your switch intake specs and measured inlet temperatures.

- Vendor lock-in risk: Decide whether you can tolerate OEM-only support or need approved third-party options.

- Upgrade timing: Choose the rate that supports your staged rollout schedule without re-cabling.

Pro Tip: In high-density halls, the first failures during a “new speed” rollout are often not optics defects but connector contamination and uneven MPO/MTP polishing. Add a mandatory inspection-and-cleaning step before you declare the module incompatible; it is the fastest path to separating human-factor faults from genuine PHY margin issues.
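One way to make the checklist executable is to record each item as a pass/fail entry and stop at the first blocker, so teams fix issues in checklist order. This is an illustrative sketch; the `Check` structure and the sample results are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Check:
    name: str
    passed: bool
    note: str = ""


def first_blocker(checks: List[Check]) -> Optional[Check]:
    """Return the first failed check in checklist order, or None if all pass."""
    for check in checks:
        if not check.passed:
            return check
    return None


# Hypothetical pre-order review results, in the same order as the checklist.
review = [
    Check("Distance and reach class", True),
    Check("Switch compatibility", True),
    Check("DOM and monitoring", False, "NMS does not ingest per-lane fields yet"),
    Check("Operating temperature and airflow", True),
    Check("Vendor lock-in risk", True),
    Check("Upgrade timing", False, "re-cabling required in row 4"),
]

blocker = first_blocker(review)
print(blocker.name if blocker else "ready to order")  # DOM and monitoring
```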

Top 6: Common mistakes and troubleshooting that waste weeks

Even careful teams stumble. Here are frequent failure modes with root causes and corrective actions.

- Mistake: Swapping 400G optics into an 800G slot “because the connector matches.”

  Root cause: Lane mapping and expected electrical/optical behavior differ by platform.

  Fix: Use the vendor-approved 800G part number list and confirm form factor and DOM compatibility.

- Mistake: Ignoring optical budget margin during planned moves.

  Root cause: Aging, patch panel rework, and additional connectors reduce margin; 800G is less forgiving.

  Fix: Re-verify link parameters after every cabling change, and keep a hygiene SOP for cleaning and inspection.

- Mistake: Underestimating thermal impact at scale.

  Root cause: Switch inlet temperature rises during peak loads; transceiver thresholds trigger link flaps.

  Fix: Measure inlet and module temperatures, then adjust airflow or placement and retest during peak conditions.

- Mistake: Misreading diagnostics and chasing the wrong component.

  Root cause: DOM interpretation differs across vendors; alarms may point to a single lane group.

  Fix: Train on vendor-specific DOM fields and isolate by swapping only one known-good module at a time.
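When an alarm may point at a single lane group, comparing each lane against the module's median reading is a quick first pass before swapping hardware. The sketch below assumes you have already exported per-lane receive power in dBm from your monitoring system. The readings and the 2 dB threshold are made up for illustration and do not reflect any vendor's alarm limits.

```python
from statistics import median
from typing import List


def suspect_lanes(rx_power_dbm: List[float], max_delta_db: float = 2.0) -> List[int]:
    """Indices of lanes whose Rx power sits more than max_delta_db below the median."""
    mid = median(rx_power_dbm)
    return [i for i, power in enumerate(rx_power_dbm) if mid - power > max_delta_db]


# Hypothetical 8-lane readings; lane 3 is noticeably weaker than its neighbors.
readings = [-2.1, -2.3, -2.0, -5.9, -2.2, -2.4, -2.1, -2.2]
print(suspect_lanes(readings))  # [3]
```

A single weak lane on an otherwise healthy module often suggests a dirty or damaged fiber in the MPO/MTP connector, so inspect and clean before replacing the transceiver.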

Top 7: Final ranking by business needs scenarios

Use this table as a practical starting point, then validate with your switch optics matrix and measured conditions.