Optical outages in telecom networks rarely announce themselves; they show up as CRC bursts, rising BER, and sudden link flaps long after the last maintenance window. This field-ready guide helps network engineers and operations teams apply best practices for optical resilience across design, installation, protection switching, and day-2 monitoring. You will get implementation steps, realistic verification targets, and troubleshooting patterns tied to common root causes.

Prerequisites and resilience targets before you touch hardware

Before selecting optics or changing protection paths, align on measurable goals: availability, mean time to repair, and acceptable optical power margins. For example, set operational thresholds for receive power (Rx) and error-rate indicators so you can detect degradation before a hard failure. In practice, teams often base optical budgets and monitoring rules on the IEEE 802.3 specification for the link type and on vendor optics datasheets. For optical protection, ensure your switching design matches the transport layer's capabilities and timing assumptions.

Implementation checklist: what to verify first

- Optical reach and budget: confirm link loss, connector/splice loss, and margin for aging and temperature swings.

- Transceiver compatibility: validate vendor and firmware support for your switch/ROADM platform (DOM parsing, alarms, and wavelength mapping).

- Protection model: define whether you use path protection (transport) or link protection (optical/PHY) and how failover is detected.

- Monitoring plan: ensure you can collect DOM metrics, optical alarms, and interface error counters with timestamps.

Expected outcome: a documented baseline that ties each link to a budget, optics part numbers, and monitoring thresholds so you can act before service impact.

Step-by-step best practices for optical resilience design

Resilience starts at the physical layer, but it must be enforced by operational controls. The best practices below combine conservative optical engineering with pragmatic monitoring and protection behavior. Use them to reduce the probability of a hard outage and to shorten restoration time when faults occur.

Engineer optical budgets with margin, not just reach

Use manufacturer link budget formulas and actual component losses: fiber attenuation at the operating wavelength, splice loss, connector loss, and any patch-cord penalties. Then keep a margin for aging and reconnection events; teams commonly aim for a 3 to 6 dB operational headroom depending on deployment volatility. Ensure the transceiver’s specified Rx sensitivity and maximum input power envelope align with your budget.

Expected outcome: fewer “mystery degradations” where the link survives but BER rises due to tight margins.
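The budget arithmetic described above can be sketched as a small calculation. This is a minimal illustration, not a vendor formula: the class name `LinkBudget`, its field names, and the example values are assumptions chosen for the sketch, and real inputs should come from the transceiver datasheet and measured plant losses.

```python
from dataclasses import dataclass


@dataclass
class LinkBudget:
    """Illustrative link budget; every value here is a placeholder."""
    tx_power_dbm: float          # minimum launch power from the datasheet
    rx_sensitivity_dbm: float    # worst-case receiver sensitivity
    fiber_km: float
    fiber_loss_db_per_km: float  # attenuation at the operating wavelength
    connectors: int
    connector_loss_db: float
    splices: int
    splice_loss_db: float
    penalties_db: float = 0.0    # patch-cord or other penalties

    def power_budget_db(self) -> float:
        # Total loss the link can tolerate before Rx falls below sensitivity.
        return self.tx_power_dbm - self.rx_sensitivity_dbm

    def link_loss_db(self) -> float:
        # Sum of fiber, connector, splice, and penalty losses.
        return (self.fiber_km * self.fiber_loss_db_per_km
                + self.connectors * self.connector_loss_db
                + self.splices * self.splice_loss_db
                + self.penalties_db)

    def margin_db(self) -> float:
        # Headroom left after all expected losses.
        return self.power_budget_db() - self.link_loss_db()


def meets_headroom(budget: LinkBudget, required_db: float = 3.0) -> bool:
    """True when the remaining margin covers the required headroom."""
    return budget.margin_db() >= required_db


# Example with made-up numbers: 9.0 dB budget, 5.7 dB loss.
example = LinkBudget(
    tx_power_dbm=-5.0, rx_sensitivity_dbm=-14.0,
    fiber_km=10.0, fiber_loss_db_per_km=0.35,
    connectors=4, connector_loss_db=0.5,
    splices=2, splice_loss_db=0.1,
)
print(f"margin: {example.margin_db():.1f} dB")
```

With these placeholder values the link clears a 3 dB headroom target but not a 6 dB one, which is the kind of result worth recording in the per-link baseline. The sketch does not check the receiver's maximum input power; short links may need that check as well.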

Choose optics with proven DOM and alarm semantics

Optical resilience is not only about light; it is also about visibility. Select SFP/SFP+/QSFP modules that expose DOM fields your platform can interpret, including Rx power, temperature, bias current, and alarm flags. In field deployments, engineers often standardize on modules with consistent alarm thresholds so automated workflows can quarantine bad optics quickly. Examples include OEM modules such as Cisco SFP-10G-SR and Finisar FTLX8571D3BCL, or third-party equivalents like FS.com SFP-10GSR-85; always verify platform compatibility before deployment.

Expected outcome: early detection of laser aging, connector contamination, and fiber damage before a full outage.
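As a rough illustration of why consistent alarm thresholds matter for automation, the sketch below classifies DOM readings against a single threshold table. The field names, the threshold values in `EXAMPLE_THRESHOLDS`, and the quarantine rule are all hypothetical; real thresholds come from the module itself or from your platform's policy.

```python
from typing import Dict, Tuple

# (low_alarm, low_warn, high_warn, high_alarm); values are illustrative only.
Thresholds = Tuple[float, float, float, float]

EXAMPLE_THRESHOLDS: Dict[str, Thresholds] = {
    "rx_power_dbm": (-12.0, -10.0, 0.0, 1.0),
    "temperature_c": (-5.0, 0.0, 70.0, 75.0),
    "bias_ma": (2.0, 3.0, 10.0, 12.0),
}


def classify(value: float, limits: Thresholds) -> str:
    """Map one reading to 'ok', 'warning', or 'alarm'."""
    low_alarm, low_warn, high_warn, high_alarm = limits
    if value <= low_alarm or value >= high_alarm:
        return "alarm"
    if value <= low_warn or value >= high_warn:
        return "warning"
    return "ok"


def evaluate_dom(reading: Dict[str, float],
                 thresholds: Dict[str, Thresholds]) -> Dict[str, str]:
    """Classify every field present in both the reading and the table."""
    return {field: classify(reading[field], limits)
            for field, limits in thresholds.items() if field in reading}


def should_quarantine(states: Dict[str, str]) -> bool:
    """Hypothetical policy: quarantine the optic on any alarm state."""
    return "alarm" in states.values()


reading = {"rx_power_dbm": -10.5, "temperature_c": 45.0, "bias_ma": 6.0}
states = evaluate_dom(reading, EXAMPLE_THRESHOLDS)
print(states, should_quarantine(states))
```

Because every module is judged against the same table, a warning on one optic means the same thing as a warning on another, which is what lets a workflow act without per-vendor special cases.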

Implement protection that matches failure modes

Design protection to cover the most likely optical failure modes: fiber cut, patch panel misconnections, and transceiver faults. If your transport layer supports it, use path protection; otherwise, isolate critical links so one fiber segment failure does not cascade across services. Ensure failover detection is tied to interface down/up and error counter surges so you can distinguish transient impairments from persistent faults.

Expected outcome: predictable restoration behavior with fewer unnecessary switchovers.
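One way to separate transient impairments from persistent faults is a hold-off period: an impairment only counts as persistent once it has lasted longer than a set time. The sketch below shows that idea for monitoring-side classification only. `FaultDetector`, its defaults, and the state names are assumptions for illustration; actual protection switching is handled by the platform, not by a polling script.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FaultDetector:
    """Classifies link health using a hold-off timer (illustrative)."""
    hold_off_s: float = 0.5        # hypothetical hold-off before acting
    error_delta_limit: int = 100   # errors per poll treated as a surge
    _bad_since: Optional[float] = None

    def update(self, now_s: float, link_up: bool, error_delta: int) -> str:
        """Return 'healthy', 'transient', or 'persistent' for this poll."""
        impaired = (not link_up) or error_delta > self.error_delta_limit
        if not impaired:
            # Any clean poll resets the timer.
            self._bad_since = None
            return "healthy"
        if self._bad_since is None:
            self._bad_since = now_s
        if now_s - self._bad_since >= self.hold_off_s:
            return "persistent"
        return "transient"


detector = FaultDetector(hold_off_s=1.0, error_delta_limit=100)
timeline = [
    (0.0, True, 0),     # clean
    (1.0, False, 0),    # brief link drop
    (1.5, True, 0),     # recovered before the hold-off expired
    (2.0, True, 500),   # error surge begins
    (3.0, True, 500),   # surge still present after the hold-off
]
results = [detector.update(t, up, errs) for t, up, errs in timeline]
print(results)
```

Only the sustained error surge is classified as persistent; the brief link drop clears before the hold-off expires, so it would not justify a switchover under this policy.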

Standardize fiber handling and patching discipline

Contamination and mechanical stress are top optical failure causes in real operations. Use lint-free cleaning protocols and inspect connector end faces before every mating; enforce bend radius rules during routing and rack installation. Avoid frequent patch churn on high-speed optics; when changes are required, schedule them during controlled windows and re-check Rx power and error counters after each move.

Expected outcome: reduced connector-related intermittents and fewer “passes in testing, fails in production” cases.

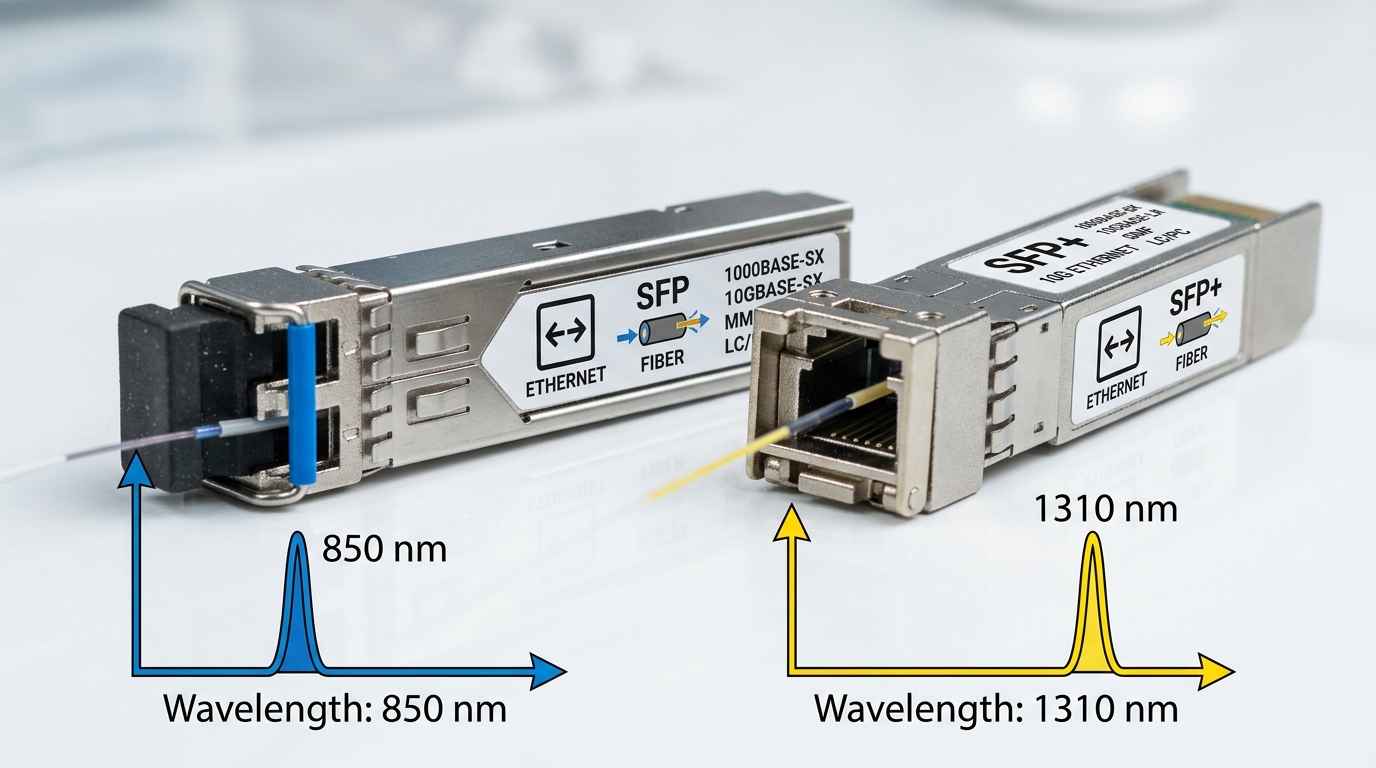

Optics comparison for resilient link engineering

Engineers often optimize for reach, but resilience depends on wavelength, connector type, monitoring capability, and operating temperature. Use the table below as a starting point for selecting common module families for resilient deployments. Confirm exact specs against current datasheets and your switch vendor transceiver matrix.

| Module / Use Case | Wavelength | Typical Reach | Connector | Data Rate | DOM / Monitoring | Operating Temperature |
|---|---|---|---|---|---|---|
| 10G SR (example: SFP-10G-SR class) | 850 nm | up to 300 m (OM3) / 400 m (OM4) | LC | 10 Gb/s | DOM with Rx power and alarms (model dependent) | 0 to 70 °C (typical) |
| 10G SR (85 °C variant class) | 850 nm | similar to SR specs by fiber type | LC | 10 Gb/s | DOM with vendor-specific alarm mapping | -5 to 85 °C (model dependent) |
| BiDi or longer-reach options | Varies (often 1310/1550 nm) | depends on module and fiber grade | LC | 10 Gb/s or higher (model dependent) | DOM typically available | varies by grade |

Expected outcome: a consistent optics selection that reduces surprise incompatibilities and preserves monitoring fidelity.

Real-world deployment scenario: leaf-spine with optical protection discipline

In a data center leaf-spine topology with 48-port 10G ToR switches feeding 100G spine uplinks over OM4, the team targeted optical resilience by standardizing on DOM-aware optics and strict patch-panel hygiene. They mapped each uplink to a budget with 4 dB operational headroom, then set alerts on Rx power drift exceeding 1.5 dB within 24 hours and on rising interface error counters. When a single rack experienced intermittent flaps, the root cause was traced to a connector that passed a straight-on visual inspection but showed contamination under angled inspection; after cleaning and re-seating, BER normalized and alarms stopped. This approach shortened restoration time because monitoring pointed to degradation before a full link drop.
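The drift alert from this scenario (more than 1.5 dB within 24 hours) could be expressed roughly as follows. The sample format, function names, and the choice to measure drift as the max-minus-min swing inside the window are assumptions made for the sketch; the scenario does not say how the team computed drift.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (unix_seconds, rx_power_dbm)

DAY_S = 24 * 3600.0


def drift_db(samples: List[Sample], window_s: float = DAY_S) -> float:
    """Rx power swing (max minus min) over the window ending at the newest sample."""
    if not samples:
        return 0.0
    newest = max(t for t, _ in samples)
    recent = [p for t, p in samples if newest - t <= window_s]
    return max(recent) - min(recent)


def drift_alert(samples: List[Sample], limit_db: float = 1.5,
                window_s: float = DAY_S) -> bool:
    """True when the swing inside the window exceeds the limit."""
    return drift_db(samples, window_s) > limit_db


HOUR = 3600.0
history = [
    (0 * HOUR, -3.0),   # older than 24 h relative to the newest sample
    (30 * HOUR, -3.1),
    (40 * HOUR, -4.0),
    (50 * HOUR, -4.8),
]
print(f"{drift_db(history):.1f} dB", drift_alert(history))
```

Here the last three samples fall inside the 24-hour window and swing by about 1.7 dB, so the alert fires even though every individual reading might still sit above a static low-power threshold.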

Selection criteria and best practices decision checklist

Use this checklist, in the order given, during procurement and engineering reviews to enforce best practices across vendors and sites.

- Distance and fiber type: choose reach based on OM grade, expected loss, and margin, not only “max reach.”

- Switch compatibility: confirm module support on your exact switch or ROADM line card; validate DOM field interpretation.

- DOM support and alarm behavior: ensure you can read Rx power, temperature, bias, and alarm flags reliably.

- Operating temperature: match optics grade to ambient conditions; high-density racks can run hotter than planned.

- Vendor lock-in risk: assess whether third-party optics work with consistent alarm semantics and whether spares are interchangeable.

- Serviceability and lead time: verify spare availability and whether you can swap optics without recalibration steps.

Expected outcome: fewer field surprises and smoother maintenance operations with predictable alarm behavior.

Common pitfalls and troubleshooting tips for optical resilience

Even well-designed systems fail; the goal is to fail safely and diagnose fast. Below are three frequent failure modes with root causes and solutions that field teams see repeatedly.

Failure mode 1: link flaps after a patch change

Root cause: connector contamination, incorrect fiber polarity, or insufficient cleaning before re-mating. Solution: clean with approved procedures, verify polarity (especially for duplex LC), re-seat connectors, then re-check Rx power and interface error counters.

Failure mode 2: rising BER with stable link state

Root cause: optical margin erosion from aging optics, micro-bends, or loose patch cords. Solution: graph Rx power and temperature over time; if Rx power drifts by more than your threshold, replace optics and inspect routing for bend-radius violations.

Failure mode 3: alarms show “present” but monitoring is unusable

Root cause: DOM field mapping mismatch between optics and platform, or unsupported alarm interpretation by the telemetry pipeline. Solution: validate DOM ingestion in a lab or staging environment; update parser logic and confirm that your monitoring system correlates DOM alarms with interface counters.
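When validating DOM ingestion in staging, it helps to have a reference decode to compare against what the platform or telemetry pipeline reports. The sketch below assumes an internally calibrated module that follows the SFF-8472 diagnostic layout (page A2h, bytes 96 to 105); externally calibrated modules need extra calibration constants and are not handled here. The function names and the sample page contents are illustrative.

```python
import math
import struct


def tenths_uw_to_dbm(raw: int) -> float:
    """Convert a power reading in units of 0.1 uW to dBm."""
    if raw == 0:
        return float("-inf")  # no light detected
    milliwatts = raw / 10000.0
    return 10.0 * math.log10(milliwatts)


def decode_dom(a2h_page: bytes) -> dict:
    """Decode real-time diagnostics from an A2h page (internal calibration assumed)."""
    # Bytes 96-105: temperature (signed), Vcc, Tx bias, Tx power, Rx power,
    # each a big-endian 16-bit value.
    temp, vcc, bias, tx, rx = struct.unpack_from(">hHHHH", a2h_page, 96)
    return {
        "temperature_c": temp / 256.0,   # 1/256 degC per LSB
        "vcc_v": vcc * 100e-6,           # 100 uV per LSB
        "bias_ma": bias * 2e-3,          # 2 uA per LSB
        "tx_power_dbm": tenths_uw_to_dbm(tx),
        "rx_power_dbm": tenths_uw_to_dbm(rx),
    }


# Build a synthetic page for testing: 40 degC, 3.3 V, 6 mA, 0.5 mW Tx, 0.1 mW Rx.
page = bytearray(256)
struct.pack_into(">hHHHH", page, 96, 0x2800, 33000, 3000, 5000, 1000)
decoded = decode_dom(bytes(page))
print(decoded)
```

If the values your monitoring system shows differ from a reference decode like this by a fixed factor or offset, that usually points at a unit or field-mapping mismatch in the parser rather than at the optic.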

Pro Tip: In many networks, the earliest signal of an impending optical fault is not link down events but a consistent trend in Rx power combined with a slow rise in error counters. Alerting on rate-of-change (for example, dB drift over hours) can beat static thresholds and reduce mean time to repair.
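A rate-of-change alert of the kind described in the tip might look like the sketch below, which fits a least-squares slope to Rx power and requires error counters to be rising at the same time. The function names, the slope limit of -0.05 dB per hour, and the simple first-versus-last counter comparison are assumptions for illustration, not recommended values.

```python
from typing import List, Sequence, Tuple

Sample = Tuple[float, float]  # (unix_seconds, rx_power_dbm)


def slope_db_per_hour(samples: List[Sample]) -> float:
    """Least-squares slope of Rx power over time, in dB per hour."""
    n = len(samples)
    if n < 2:
        return 0.0
    hours = [t / 3600.0 for t, _ in samples]
    powers = [p for _, p in samples]
    mean_h = sum(hours) / n
    mean_p = sum(powers) / n
    denom = sum((h - mean_h) ** 2 for h in hours)
    if denom == 0:
        return 0.0  # all samples share one timestamp
    numer = sum((h - mean_h) * (p - mean_p) for h, p in zip(hours, powers))
    return numer / denom


def trending_toward_fault(samples: List[Sample],
                          error_counts: Sequence[int],
                          slope_limit: float = -0.05) -> bool:
    """True when Rx power is falling at least as fast as the limit AND errors are rising."""
    errors_rising = len(error_counts) >= 2 and error_counts[-1] > error_counts[0]
    return slope_db_per_hour(samples) <= slope_limit and errors_rising


falling = [(0.0, -3.0), (3600.0, -3.1), (7200.0, -3.2), (10800.0, -3.3)]
errors = [10, 12, 20, 35]  # cumulative error counter at each poll
print(f"{slope_db_per_hour(falling):.2f} dB/h",
      trending_toward_fault(falling, errors))
```

Requiring both signals keeps the alert quiet when Rx power moves slightly with temperature but the link stays error-free.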

Cost and ROI note: what to budget for resilience

Optics pricing varies by vendor and grade, but third-party 10G SR modules commonly fall in the tens of dollars per unit, while OEM modules can cost more depending on licensing and warranty terms. The total cost of ownership often favors optics that integrate cleanly with monitoring and reduce truck rolls; if a resilient monitoring plan prevents even one major incident, it can outweigh the price delta. Also factor in power and cooling: higher-temperature optics grades can reduce derating risk in dense racks, which can lower failure rates and spares consumption over time.

FAQ

What are the most important best practices for optical resilience?

Prioritize optical budget margin, DOM-based monitoring, strict fiber handling, and protection design aligned to realistic failure modes. Then enforce these with measurable thresholds so degradation triggers action before a hard outage.

How do I choose between OEM and third-party optics without increasing risk?

Validate compatibility on the exact platform model, including DOM alarm mapping and telemetry ingestion. Keep a controlled pilot group and compare Rx power stability and error-counter behavior before scaling deployment.

What monitoring signals best predict an optical fault?

Track Rx power drift, temperature/bias trends, and