Best practices for AI fiber utilization without wasting ports

AI and ML clusters fail in expensive ways when fiber planning is sloppy: unexpected port shortages, mismatched optics, and repeated truck rolls. This article helps data center and network engineers apply best practices to maximize fiber utilization while keeping transceivers interoperable. You will get practical workflows for selecting optics, mapping fibers to traffic, and validating link behavior against IEEE 802.3 and vendor datasheets. Update date: 2026-05-03.

Why fiber utilization breaks first in AI/ML racks

In many AI deployments, traffic grows faster than the physical plant. A typical training setup may use 8 to 16 links per node at 25G, 50G, or 100G, then later add evaluation and inference services that consume the same uplink or spine capacity. If you over-provision fiber with “just in case” patching, you end up with orphaned fibers and cross-connect records that drift from what is actually patched, which is hard to debug.

The core issue is that optics and cabling decisions couple tightly to port density, reach class, and connector type. For example, an SR option and an LR option might look equivalent on paper, but the fiber type (multimode versus singlemode), wavelength, link budget, DOM behavior, and in some cases the connector differ. As a result, “spare” fibers often cannot be reused without re-terminating or replacing transceivers.

To stay ahead, treat fiber like compute: design it with a measurable utilization target and an operational validation loop. The most effective best practices blend standards awareness (IEEE 802.3) with field-tested cabling governance and optics compatibility checks.
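
One way to make a utilization target measurable is to compute, per trunk, the share of terminated fibers that carry a live link. The following is a minimal sketch under stated assumptions: the `Trunk` record, the trunk labels, and the 70% target are hypothetical and do not come from any standard or vendor tool.

```python
from dataclasses import dataclass


@dataclass
class Trunk:
    name: str        # hypothetical trunk label
    terminated: int  # fibers terminated at both ends
    in_service: int  # fibers currently carrying a live link


def utilization(trunk: Trunk) -> float:
    """Fraction of terminated fibers that carry a live link."""
    if trunk.terminated == 0:
        return 0.0
    return trunk.in_service / trunk.terminated


def below_target(trunks, target=0.70):
    """Names of trunks whose utilization is under the (assumed) target."""
    return [t.name for t in trunks if utilization(t) < target]


trunks = [Trunk("R01-SPINE-A", 24, 20), Trunk("R02-SPINE-A", 24, 8)]
print(below_target(trunks))  # ['R02-SPINE-A']
```

Trunks that show up in this list are candidates for reuse before any new path is pulled.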

Specs that actually matter: SR vs LR and connector economics

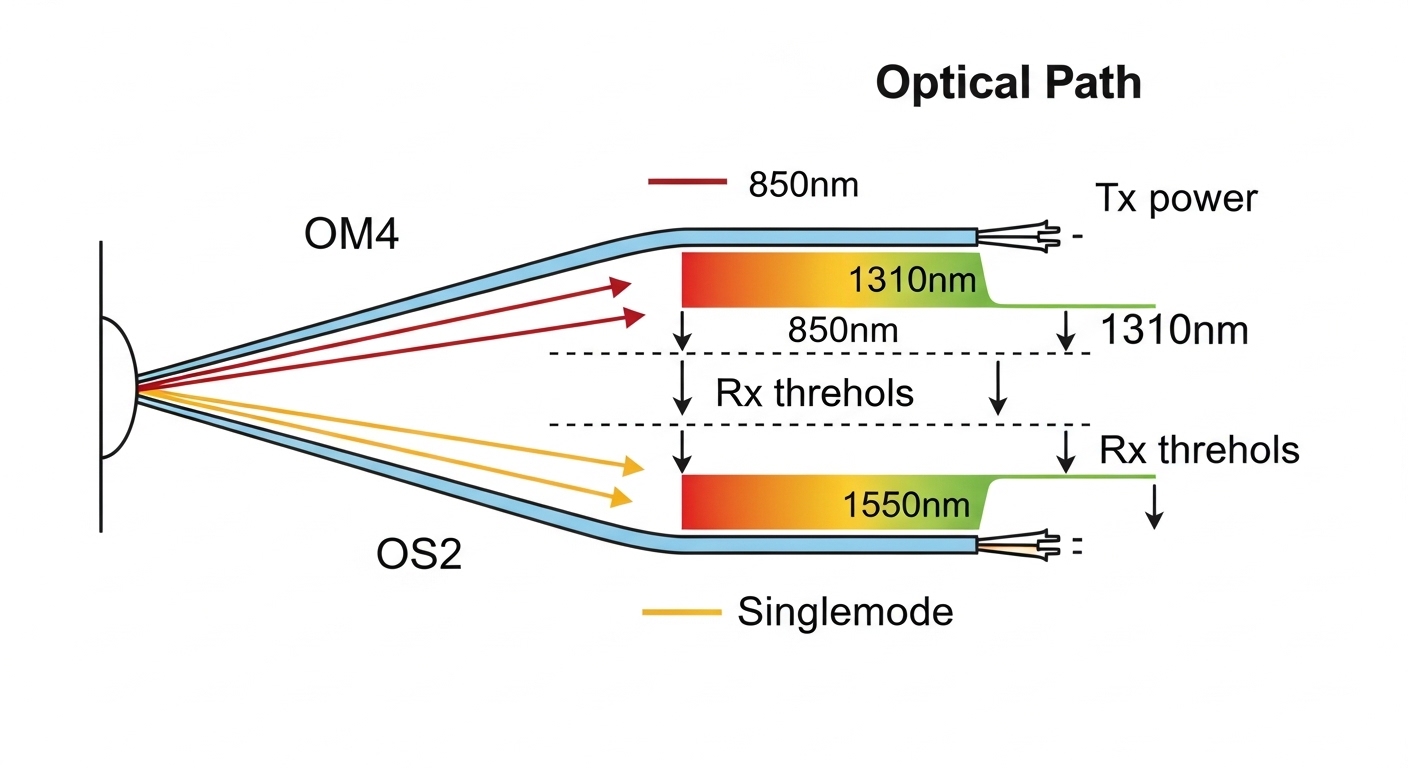

Engineers often compare only reach, but fiber utilization depends on wavelength class, connector type, and monitoring features. Short-reach (SR) modules typically use 850 nm over OM3, OM4, or OM5 multimode fiber, while long-reach (LR) modules use 1310 nm over singlemode fiber. Connector choice (LC vs MPO/MTP) affects patch panel density and rework time during moves.

| Optics example | Data rate | Wavelength | Typical reach | Fiber type | Connector | DOM / monitoring | Operating temp (typ.) |
|---|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR | 10G | 850 nm | 300 m (MMF class-dependent) | OM3/OM4 | LC | Yes (per vendor) | 0 to 70 °C |
| Finisar FTLX8571D3BCL | 10G | 850 nm | 300 m class (MMF) | OM3/OM4 | LC | Yes (per datasheet) | -5 to 70 °C |
| FS.com SFP-10GSR-85 | 10G | 850 nm | 300 m class | OM3/OM4 | LC | Varies by SKU | -5 to 70 °C |
| 100G QSFP28 SR4 (typical) | 100G | 850 nm | 100 m class (MMF) | OM4/OM5 | MPO/MTP | Yes (per vendor) | 0 to 70 °C |

For AI clusters, you usually win fiber utilization by standardizing on SR where distances allow, because MMF patching with OM4/OM5 supports high port density. When you must cross rows or buildings, move to LR over singlemode, but do it intentionally with a documented fiber map so you do not strand “wrong-reach” fibers.

Pro Tip: Before you label fibers, validate the switch optics compatibility list and confirm DOM behavior for the exact transceiver SKU. In the field, we have seen links come up at low speed or flap under temperature swings when DOM or vendor-specific diagnostics differ, which then triggers unnecessary re-cabling and wastes the very fibers you planned to reuse.

Workflow: map traffic to fibers, then design for reuse

Start with a traffic model and translate it into link counts per rack. In a leaf-spine fabric, many teams reserve spine uplinks early, then later discover that GPU-to-GPU traffic patterns changed after the scheduler configuration. Your best practices workflow should therefore include a “fiber map revision” step after each major training stack change.
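
Translating link counts into fiber counts is simple arithmetic, sketched here under stated assumptions. A duplex LC link consumes two fibers and an SR4-style parallel link consumes eight (four transmit, four receive). The function name, the dictionary keys, and the 10% spare share are hypothetical planning inputs.

```python
import math

# Fibers consumed per link, by cabling style.
FIBERS_PER_LINK = {"duplex_lc": 2, "parallel_sr4": 8}


def fibers_for_rack(nodes: int, links_per_node: int, style: str,
                    spare_percent: int = 10) -> int:
    """Fibers to provision for one rack, including a planned spare share."""
    links = nodes * links_per_node
    active = links * FIBERS_PER_LINK[style]
    # Integer numerator keeps exact results exact before rounding up.
    return math.ceil(active * (100 + spare_percent) / 100)


# Hypothetical rack: 4 nodes with 8 duplex links each.
print(fibers_for_rack(nodes=4, links_per_node=8, style="duplex_lc"))  # 71
```

Re-running the calculation after each fiber map revision shows whether the existing trunks still cover the plan or whether new ones are genuinely needed.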

Step-by-step planning checklist

- Distance and reach class: Measure actual patch-to-patch fiber length, including slack. Apply conservative margins for connector loss and aging.

- Link budget: Choose an optics reach class that meets your BER targets across the operating temperature range. Validate link budgets against vendor specs for your exact fiber type.

- Switch compatibility: Use the switch vendor’s transceiver compatibility matrix for SR/LR and for the exact form factor (SFP+, SFP28, QSFP28, QSFP56).

- DOM and monitoring: Confirm that your network OS supports DOM fields and that alarms are mapped consistently. This reduces “silent failure” during maintenance.

- Operating temperature: Verify transceiver temperature ranges and ensure airflow meets datasheet guidance, especially in GPU racks with high thermal density.

- Vendor lock-in risk: If you plan to mix OEM and third-party optics, standardize on SKUs with matching DOM expectations and documented compliance.

- Connector strategy: Prefer LC for flexible short runs and MPO/MTP for high-density 100G/400G trunks, but document polarity rules and labeling conventions.

Once you have the plan, enforce it with a fiber administration standard: consistent rack numbering, cross-connect naming, and a single source of truth for mapping. When you later add nodes, you can reuse existing trunks by selecting the next available lanes in MPO/MTP bundles rather than cutting new paths.
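
Reusing a trunk by taking the next available positions can be sketched as a lookup against the single source of truth. This is a minimal illustration, assuming the fiber map is held as a dictionary from position to link ID. The function name and the link IDs are hypothetical.

```python
def allocate_fibers(trunk_map: dict, count: int, link_id: str) -> list:
    """Assign the lowest-numbered free positions in a trunk to link_id.

    trunk_map maps fiber position (1-based) to a link ID, or None when free.
    Raises ValueError instead of allocating only part of the request.
    """
    free = sorted(pos for pos, owner in trunk_map.items() if owner is None)
    if len(free) < count:
        raise ValueError(f"only {len(free)} free positions, need {count}")
    chosen = free[:count]
    for pos in chosen:
        trunk_map[pos] = link_id
    return chosen


# Hypothetical 12-fiber trunk with positions 1 to 4 already in use.
trunk = {pos: None for pos in range(1, 13)}
trunk[1] = trunk[2] = "gpu01-leaf1"
trunk[3] = trunk[4] = "gpu02-leaf1"
print(allocate_fibers(trunk, 2, "gpu03-leaf1"))  # [5, 6]
```

Failing loudly when the trunk is full is a deliberate choice here: a partial allocation would leave the map and the patch field out of step.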

Comparison: how to choose optics for fiber reuse

Fiber utilization is not only about cabling; it is about reducing the number of “stranded” fibers created by mismatched optics types. SR optics over OM4/OM5 can reuse existing multimode infrastructure, while LR over singlemode requires different transceiver hardware and different fiber termination practices.

Decision logic that field teams use

- If your measured distance plus margin fits SR reach, standardize on SR to preserve fiber reuse.

- If you need to cross long distances, design a singlemode “trunk layer” early and document where multimode ends.

- When upgrading from 10G to 25G or 100G, check whether your switch supports breakout modes that align with your existing connector and lane mapping.
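
The first two rules above can be sketched as a small check. It assumes the SR reach figure comes from the datasheet for your exact fiber type. The function name and the 15% default margin are hypothetical planning values, not recommendations.

```python
def choose_reach_class(measured_m: float, sr_reach_m: float,
                       margin_fraction: float = 0.15) -> str:
    """Return "SR" when measured length plus margin fits SR reach, else "LR"."""
    required_m = measured_m * (1 + margin_fraction)
    return "SR" if required_m <= sr_reach_m else "LR"


# Hypothetical runs against a 100 m class SR optic.
print(choose_reach_class(70, sr_reach_m=100))  # SR
print(choose_reach_class(95, sr_reach_m=100))  # LR
```

A run that returns "LR" belongs on the singlemode trunk layer and should be recorded there in the fiber map.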

Also consider operational constraints: MPO/MTP polarity errors are a leading cause of “it should work but it does not” during commissioning. A disciplined polarity labeling convention and a test plan using an optical power meter and light source can prevent a week of rework.

Common mistakes and troubleshooting tips

Even with good planning, AI networks are sensitive to small errors because link counts are high. The following troubleshooting points are based on recurring issues seen during rollouts of 25G and 100G fabrics.

Polarity mistakes in MPO/MTP trunks

Root cause: Lane mapping or polarity mismatch, typically introduced when one end of an MPO trunk is reversed. Symptoms: No link, or link flaps during traffic bursts. Solution: Verify the polarity method end to end (for example, TIA-568 Method A, B, or C, with matching trunks and harnesses) and re-test with a light source to confirm that each transmit lane lands on the intended receive lane.
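
To reason about where a fiber should land before testing with a light source, it can help to write the position mapping down. The sketch below assumes the three trunk cable types commonly associated with TIA-568 polarity methods (Type A straight-through, Type B reversed, Type C pair-flipped) on a 12-fiber trunk. The function name is hypothetical, and your cassettes and harnesses may add further flips that this does not model.

```python
def far_end_position(near: int, trunk_type: str, fibers: int = 12) -> int:
    """Far-end position reached by the fiber at near-end position `near`."""
    if not 1 <= near <= fibers:
        raise ValueError("position out of range")
    if trunk_type == "A":   # straight-through: 1->1, 2->2, ...
        return near
    if trunk_type == "B":   # reversed: 1->12, 2->11, ...
        return fibers + 1 - near
    if trunk_type == "C":   # pair-flipped: 1->2, 2->1, 3->4, ...
        return near + 1 if near % 2 == 1 else near - 1
    raise ValueError(f"unknown trunk type {trunk_type!r}")


print([far_end_position(p, "B") for p in (1, 2, 11, 12)])  # [12, 11, 2, 1]
```

Comparing this expected mapping with what the light source test shows makes a reversed or mixed-type trunk easy to spot.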

Wrong reach class for planned reuse

Root cause: Planning with nominal reach but ignoring connector loss, patch panel quality, and temperature. Symptoms: Link comes up intermittently or shows rising error counters under load. Solution: Measure end-to-end fiber loss and re-assign optics or re-terminate to meet the vendor link budget.
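
A rough loss estimate can flag risky links before a measured test confirms them. The sketch below uses illustrative values only: the attenuation, per-connector loss, and 1.9 dB budget are assumptions for the example, and real figures should come from your measurements and the vendor datasheet. The function names are hypothetical.

```python
def estimated_loss_db(length_m: float, fiber_db_per_km: float,
                      connectors: int, db_per_connector: float) -> float:
    """Estimated end-to-end loss: fiber attenuation plus connector losses."""
    return length_m / 1000 * fiber_db_per_km + connectors * db_per_connector


def margin_db(budget_db: float, loss_db: float) -> float:
    """Remaining margin; a negative value means the link exceeds its budget."""
    return budget_db - loss_db


# Hypothetical 80 m multimode run with two mated connector pairs.
loss = estimated_loss_db(length_m=80, fiber_db_per_km=3.0,
                         connectors=2, db_per_connector=0.5)
print(round(loss, 2), round(margin_db(1.9, loss), 2))  # 1.24 0.66
```

With four connector pairs instead of two, the same run would exceed the assumed budget, which is the kind of case where re-assigning optics or re-terminating is warranted.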

Transceiver compatibility and DOM handling gaps

Root cause: Using a third-party module that is “electrically compatible” but not fully compatible with the switch’s DOM diagnostics and threshold expectations. Symptoms: High interface errors, warnings in logs, or inconsistent link behavior after warm restarts. Solution: Confirm the exact transceiver SKU against the compatibility list and test in a maintenance window with monitoring enabled.

Thermal oversubscription in GPU racks

Root cause: Insufficient airflow across optics cages during peak load, pushing temperature beyond datasheet limits. Symptoms: DOM temperature alarms and gradual degradation before failure. Solution: Validate airflow paths, clean intake filters, and adjust fan profiles to maintain stable thermal conditions.
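
Catching gradual degradation means comparing DOM temperature readings against the datasheet limit before the alarm threshold is crossed. This is a minimal sketch, assuming readings have already been collected into a dictionary by whatever telemetry your network OS provides. The port names, the 70 °C limit, and the 5 °C warning margin are hypothetical.

```python
def temperature_alerts(readings: dict, high_limit_c: float,
                       warn_margin_c: float = 5.0) -> dict:
    """Classify per-port DOM temperatures against a datasheet high limit."""
    alerts = {}
    for port, temp_c in readings.items():
        if temp_c >= high_limit_c:
            alerts[port] = "alarm"
        elif temp_c >= high_limit_c - warn_margin_c:
            alerts[port] = "warning"
    return alerts


readings = {"Eth1/1": 48.5, "Eth1/2": 66.0, "Eth1/3": 71.2}
print(temperature_alerts(readings, high_limit_c=70.0))
# {'Eth1/2': 'warning', 'Eth1/3': 'alarm'}
```

Ports in the warning band are the ones to check for blocked airflow or fan profile changes during the next maintenance window.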

Cost and ROI note: what fiber optimization really saves

Cost depends on whether you buy OEM optics, third-party optics, or both. In real deployments, OEM transceivers can cost roughly 20% to 60% more than third-party equivalents, but OEM often comes with smoother qualification and lower operational risk. For budgeting, also include TCO items: truck rolls, downtime, testing labor, and the chance of re-termination due to polarity or reach miscalculations.

Optimizing fiber utilization typically reduces the number of new trunks you install during phased AI rollouts. Even a single avoided rework event can offset the incremental cost of better labeling, testing equipment usage, and a stricter optics qualification process.
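
The break-even point can be written as simple arithmetic. The sketch below uses made-up cost figures purely for illustration, and the function name is hypothetical.

```python
import math


def breakeven_events(process_cost: float, cost_per_rework_event: float) -> int:
    """Avoided rework events needed to cover the added process cost."""
    if cost_per_rework_event <= 0:
        raise ValueError("cost per rework event must be positive")
    return math.ceil(process_cost / cost_per_rework_event)


# Hypothetical: 6000 spent on labeling and testing, 8000 per rework event.
print(breakeven_events(6000, 8000))  # 1
```

Substituting your own labor, downtime, and testing costs gives a number you can track against actual avoided rework.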

FAQ

What are the best practices for planning fiber in an AI training cluster?

Model link counts per rack, measure actual patch-to-patch distances, and standardize on SR optics where reach allows. Then enforce a fiber map with consistent labeling and a revision process after scheduler or topology changes. This prevents orphaned fibers when you scale GPUs.

Should we standardize on SR optics or mix SR and LR?

If your measured distances fit SR reach with margin, standardize on SR to maximize reuse of OM4/OM5. Mix in LR only for defined long-distance segments, and document the boundary so you do not strand multimode infrastructure.

How do we reduce optics compatibility problems?

Use the switch vendor compatibility matrix for the exact transceiver SKU and form factor. Confirm DOM monitoring support and test stability under realistic load before you scale out. This is one of the most effective ways to avoid intermittent link failures.

What is the most common cause of “no link” during MPO/MTP installation?

MPO/MTP polarity or lane mapping errors at one end of the trunk. Validate polarity using a known-good harness or a light source test, then re-terminate or re-patch as needed.

Do third-party transceivers increase operational risk?

They can, especially if DOM thresholds, compliance reporting, or vendor-specific diagnostics differ. If you use third-party optics, standardize SKUs, run acceptance tests, and monitor DOM alarms so you can detect drift early.

How can we quantify ROI for better fiber utilization?

Track avoided cabling installs, avoided re-termination events, and reduced downtime during expansions. Include labor and testing time in TCO, and compare it against the cost difference between OEM and qualified third-party optics.

If you want to expand this approach beyond optics, apply the same governance to patch panels, cross-connect records, and change control.

Author bio: I have deployed and troubleshot high-density optical fabrics in enterprise and AI data centers, focusing on repeatable acceptance tests and operational documentation. My work emphasizes standards alignment, DOM observability, and field-ready cabling workflows that reduce rework during scaling.