When an AI training cluster starts showing rising step times, it is often not the GPUs. It is the network fabric: oversubscribed east-west links, congestion at the ToR, and optical reach mismatches that force ugly workarounds. This article walks through a real deployment of 800G transceivers for AI-driven applications, helping platform engineers and network leads choose optics that actually survive production constraints.

We will cover the problem, the environment specs, the chosen optics, implementation steps, and measured outcomes. You will also get a troubleshooting checklist for common failures, plus a decision guide you can reuse for future upgrades.

Problem / Challenge: AI traffic exposed a “hidden” optical mismatch

In our case, a team had a leaf-spine fabric supporting an AI workload that mixed distributed training (large all-reduce bursts) with frequent checkpoint reads. Within two weeks of ramping to full batch size, the cluster showed 18% higher training step time and a noticeable increase in ECMP rehashing events. Packet captures around the ToR uplinks showed microbursts that the switch queues could not drain fast enough.

We initially tried to solve it by increasing bandwidth at the switching layer, but the real blocker was optics. The existing 400G links were constrained by transceiver availability and ran close to their reach limits on several fiber segments. That caused intermittent CRC errors and forced the team to lower link speed profiles in a few runs, which hurt throughput and was hard to explain to stakeholders.

Environment specs that mattered

The network environment was a classic AI data center fabric: a two-tier leaf-spine design, with the spines handling high-speed routing and the leaves aggregating GPU servers. We had 48-port ToR switches feeding 6 spine switches, with east-west traffic dominating during training. Link budgets varied because some corridors used older OM3, while newer cages were OM4 with better attenuation.

On the server side, we needed 800G to reduce per-hop congestion and cut oversubscription without doubling the number of active optics. On the optics side, we needed predictable compatibility with switch vendor optics support policies and reliable DOM telemetry for monitoring.
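As a rough illustration of the oversubscription arithmetic, the sketch below computes the ratio of server-facing to spine-facing capacity on one leaf. The 48 ports and 6 spines come from the environment described above. The 200G server port speed and the one-uplink-per-spine layout are assumptions made for illustration, not figures from this deployment.

```python
def oversubscription_ratio(down_ports: int, down_gbps: float,
                           up_ports: int, up_gbps: float) -> float:
    """Ratio of server-facing capacity to spine-facing capacity on one leaf."""
    if up_ports <= 0 or up_gbps <= 0:
        raise ValueError("uplink capacity must be positive")
    return (down_ports * down_gbps) / (up_ports * up_gbps)


# Hypothetical leaf: 48 server ports at 200G, one uplink to each of 6 spines.
with_400g_uplinks = oversubscription_ratio(48, 200, 6, 400)
with_800g_uplinks = oversubscription_ratio(48, 200, 6, 800)

print(f"400G uplinks: {with_400g_uplinks:.1f}:1")
print(f"800G uplinks: {with_800g_uplinks:.1f}:1")
```

Under these assumed speeds, doubling the uplink rate halves the ratio while the number of active uplink optics stays the same.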

Environment specs: what 800G optics must satisfy in production

For 800G transceivers, the key is that the optics must match the switch’s electrical interface and the fiber plant’s optical budget. In practice, most AI data center deployments use short-reach multimode optics for intra-building links, and they use single-mode optics for longer corridors.

Typical 800G reach targets

During this project, our target reach was mostly 100 to 300 meters across structured cabling, but we had a few segments closer to 400 to 500 meters in older wings. That drove the choice between multimode (OM4) and single-mode, plus how aggressively we could use reach-conservative launch conditions.

Technical specifications comparison (what we evaluated)

We evaluated representative 800G options that are commonly seen in the field. Actual performance depends on vendor implementation, fiber type, and switch firmware, but these specs are the baseline engineers use when comparing optics.

| Optics type | Representative examples | Wavelength / Mode | Typical reach | Connector | Power class (typ.) | Operating temp (typ.) | Notes |
|---|---|---|---|---|---|---|---|
| 800G SR8 (multimode) | Cisco-compatible 800G SR8, Finisar/FS 800G SR8 variants | 850 nm nominal, multimode | ~70 m to ~150 m on OM4 (vendor-dependent) | MPO/MTP (fiber count is implementation-dependent) | ~8 W to ~15 W | 0 to 70 °C | Best for same-cage and short links; sensitive to patch cord quality |
| 800G SR4.2 (extended multimode) | FS.com / OEM 800G SR4.2 style modules | 850 nm nominal, multimode | ~150 m to ~300 m on OM4 (vendor-dependent; confirm against the module datasheet) | MPO/MTP | ~12 W to ~18 W | 0 to 70 °C | Often used when OM4 is present but reach is tight |
| 800G LR8 (single-mode) | OEM and third-party 800G LR8 modules | ~1310 nm, single-mode | ~10 km (vendor-dependent) | LC duplex | ~8 W to ~14 W | -5 to 70 °C | Great for longer corridors; higher cost |

Standards and interface expectations matter here. For Ethernet 800G PHY behavior, engineers reference IEEE 802.3 specifications for 800GBASE-R and vendor-specific electrical interfaces, and they validate transceiver support via the switch vendor’s optics compatibility list. [Source: IEEE 802.3 working group materials on 800G Ethernet PHY, https://standards.ieee.org/standard/]

Pro Tip: In many AI fabrics, the biggest cause of “mystery” CRC drops is not the transceiver model. It is inconsistent MPO polarity or patch-cord cleanliness that shifts launch conditions just enough to trigger marginal eye diagrams. Always verify polarity mapping and clean connectors before blaming optics.

Chosen solution: mix SR4.2 and LR8 to match fiber reality

We did not pick one module type and force it everywhere. Instead, we mapped the fiber plant by attenuation and connector quality, then assigned optics per link segment. The final design used multimode 800G transceivers for the majority of intra-row connections and single-mode 800G transceivers for the longer or older-cabling wings.
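The per-segment assignment can be sketched as a small rule function. This is a minimal illustration, not the tooling we used: the `Segment` type, the 300 m planning limit (the upper end of the vendor-dependent SR4.2 range quoted earlier), and the 0.8 derating factor are all assumptions you would replace with datasheet values and your own margin policy.

```python
from dataclasses import dataclass

# Assumed planning values; replace with figures from the module datasheet.
SR42_MAX_REACH_M = 300.0   # upper end of the vendor-dependent OM4 range
REACH_DERATING = 0.8       # hypothetical safety factor for patching and aging


@dataclass(frozen=True)
class Segment:
    name: str
    fiber: str        # "OM3", "OM4", or "SMF"
    length_m: float


def assign_optics(seg: Segment) -> str:
    """Pick an optics type for one link segment."""
    if seg.fiber == "SMF":
        return "LR8"
    if seg.fiber == "OM4" and seg.length_m <= SR42_MAX_REACH_M * REACH_DERATING:
        return "SR4.2"
    # OM3 or over-length OM4: treat as risky. LR8 needs single-mode fiber,
    # so this outcome implies using or pulling a single-mode path.
    return "LR8"


segments = [
    Segment("row-a-01", "OM4", 120.0),
    Segment("old-wing-07", "OM3", 90.0),
    Segment("old-wing-12", "OM4", 450.0),
]
for seg in segments:
    print(seg.name, "->", assign_optics(seg))
```

Keeping the rule in one place makes it easy to rerun the assignment when the fiber audit changes a segment's measured length or fiber type.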

Why this blend worked

Multimode optics let us keep the number of spares high and simplify installation because MPO/MTP patching was already standardized in the cages. Single-mode optics cost more, but they reduced operational risk on the segments where OM3-to-OM4 assumptions would have been expensive to validate after the fact.

On the monitoring side, we prioritized modules with robust DOM support (laser bias, temperature, supply voltage, and receive power). That made it possible to correlate training slowdowns with physical-layer trends, not just network counters.

Implementation steps: how we rolled out without taking the cluster down

We approached the deployment like a change-controlled production rollout, not a big-bang swap. The cluster ran a staged migration: pilot links first, then expansion in maintenance windows, with a tight feedback loop from telemetry.

Pre-validate switch optics compatibility

Before ordering optics at scale, we checked the switch vendor’s optics qualification list and validated that the exact part numbers were accepted by firmware. We also confirmed whether the platform required specific coding modes or lane mapping behavior for 800G optics.

In one early pilot, we learned that “compatible” branding is not the same as “accepted” by the switch. The module would insert, but the port stayed in a restricted state until we updated switch firmware. That is the kind of delay you want to catch in the lab.
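A pre-order check of this kind can be sketched as a lookup against the qualification data. The part numbers, firmware versions, and the `QUALIFIED` table below are hypothetical placeholders; real data would come from the switch vendor's compatibility list, and version formats differ between platforms.

```python
# Hypothetical qualification data: part number -> minimum accepted firmware.
QUALIFIED = {
    "EXAMPLE-800G-SR42": "10.2.1",
    "EXAMPLE-800G-LR8": "10.3.0",
}


def parse_version(version: str) -> tuple:
    """Turn a dotted numeric version such as '10.2.1' into (10, 2, 1)."""
    return tuple(int(part) for part in version.split("."))


def acceptance_status(part_number: str, firmware: str) -> str:
    """Classify a (part number, firmware) pair before ordering at scale."""
    min_firmware = QUALIFIED.get(part_number)
    if min_firmware is None:
        return "not-qualified"
    if parse_version(firmware) < parse_version(min_firmware):
        return "firmware-upgrade-required"
    return "accepted"


print(acceptance_status("EXAMPLE-800G-LR8", "10.2.9"))
```

The "firmware-upgrade-required" outcome corresponds to the pilot case above, where the module inserted but the port stayed restricted until the firmware was updated.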

Fiber plant audit and MPO polarity verification

We ran OTDR and inspected patch cords and bulkhead jumpers, focusing on worst-case attenuation and splice quality. Then we standardized MPO polarity labeling and used a consistent cleaning workflow for every install. For the multimode segments, we also validated that OM4 patch cords were within the vendor’s recommended insertion loss range.
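To make the insertion-loss check concrete, here is a minimal link-loss sketch. The attenuation, per-connector loss, and channel budget values are illustrative assumptions only; use measured OTDR results and the module vendor's stated budget in practice.

```python
def estimated_loss_db(length_m: float, atten_db_per_km: float,
                      n_connectors: int, loss_per_connector_db: float,
                      n_splices: int = 0, loss_per_splice_db: float = 0.1) -> float:
    """Sum of fiber attenuation, mated-connector loss, and splice loss."""
    fiber_loss = (length_m / 1000.0) * atten_db_per_km
    return (fiber_loss
            + n_connectors * loss_per_connector_db
            + n_splices * loss_per_splice_db)


def link_margin_db(budget_db: float, loss_db: float) -> float:
    """Positive margin means the estimated loss fits inside the budget."""
    return budget_db - loss_db


# Assumed example: 150 m of multimode at 3.0 dB/km, four mated connectors
# at 0.35 dB each, and a hypothetical 1.9 dB channel insertion-loss budget.
loss = estimated_loss_db(150.0, 3.0, 4, 0.35)
margin = link_margin_db(1.9, loss)
print(f"loss={loss:.2f} dB, margin={margin:.2f} dB")
```

In this assumed example the connectors account for most of the loss, which is consistent with the emphasis on patch cord quality and cleaning over fiber length alone.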

Staged cutover with telemetry gates

We migrated a subset of links while keeping most traffic on the old optics. Telemetry gates included: receive power thresholds, temperature stability, and a “no new CRC spikes” rule during the first 12 hours after cutover. Only when those gates passed did we expand to additional ToR uplinks.
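The gate logic can be sketched as a function that returns the list of failed gates for one link. The `DomSample` fields, threshold values, and the choice to read "no new CRC spikes" as "the cumulative CRC counter did not grow" are assumptions for illustration; the 12-hour window is the one described above.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class DomSample:
    hours_since_cutover: float
    rx_power_dbm: float
    temperature_c: float
    crc_errors: int  # cumulative interface counter at sample time


def gate_failures(samples: Sequence[DomSample], *, rx_min_dbm: float,
                  rx_max_dbm: float, max_temp_swing_c: float,
                  max_new_crc: int = 0, window_h: float = 12.0) -> List[str]:
    """Return the names of failed gates; an empty list means expand."""
    window = sorted((s for s in samples if s.hours_since_cutover >= 0),
                    key=lambda s: s.hours_since_cutover)
    if not window or window[-1].hours_since_cutover < window_h:
        return ["observation window incomplete"]
    failures = []
    if any(not rx_min_dbm <= s.rx_power_dbm <= rx_max_dbm for s in window):
        failures.append("receive power out of range")
    temps = [s.temperature_c for s in window]
    if max(temps) - min(temps) > max_temp_swing_c:
        failures.append("temperature unstable")
    if window[-1].crc_errors - window[0].crc_errors > max_new_crc:
        failures.append("new CRC errors after cutover")
    return failures


healthy = [DomSample(0, -2.1, 48.0, 0), DomSample(6, -2.2, 50.0, 0),
           DomSample(12, -2.1, 49.0, 0)]
print(gate_failures(healthy, rx_min_dbm=-6.0, rx_max_dbm=2.0,
                    max_temp_swing_c=5.0))
```

Returning the failed gate names, rather than a single boolean, gives the change owner something specific to investigate before the next maintenance window.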

Operational runbooks and alert thresholds

We set alerts for DOM drift rather than waiting for hard link flaps. For example, we used a receive power trend threshold that triggers investigation when it shifts meaningfully over a few days, which often indicates connector contamination or patch cord damage.
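One way to express such a trend threshold is a least-squares slope over recent receive-power samples. This is a sketch under stated assumptions: the 0.05 dB/day threshold and the function names are hypothetical, and a production version would pull samples from your telemetry system rather than from literal lists.

```python
from typing import Sequence


def drift_db_per_day(days: Sequence[float], rx_dbm: Sequence[float]) -> float:
    """Least-squares slope of receive power (dBm) against time (days)."""
    if len(days) != len(rx_dbm) or len(days) < 2:
        raise ValueError("need at least two paired samples")
    n = len(days)
    mean_x = sum(days) / n
    mean_y = sum(rx_dbm) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("samples must span more than one point in time")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, rx_dbm))
    return sxy / sxx


def needs_investigation(days: Sequence[float], rx_dbm: Sequence[float],
                        max_drift_db_per_day: float = 0.05) -> bool:
    """Flag a link whose receive power trends in either direction."""
    return abs(drift_db_per_day(days, rx_dbm)) >= max_drift_db_per_day


# Example: receive power sliding by about 0.1 dB per day over five days.
days = [0, 1, 2, 3, 4]
rx = [-2.0, -2.1, -2.2, -2.3, -2.4]
print(round(drift_db_per_day(days, rx), 3), needs_investigation(days, rx))
```

Fitting a slope rather than comparing two endpoints keeps a single noisy reading from triggering or masking an alert.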

Measured results: throughput improved, and physical-layer noise dropped

After completing the rollout, the cluster’s training performance improved immediately. Across the AI training jobs, we saw 14% lower step time during peak all-reduce phases and a measurable reduction in queue buildup on leaf uplinks.

At the physical layer, CRC and FCS error counts decreased. On the multimode segments, errors went from intermittent bursts to near-zero events after cleaning and polarity standardization. On the single-mode segments, we avoided the repeated “link margin” events that had previously forced conservative link settings.

Operational metrics we tracked

- Link stability: fewer port resets; no sustained flapping during business hours

- Telemetry usefulness: DOM trends correlated with maintenance events (patch cord swaps)

- Change success rate: pilot phase caught firmware and acceptance issues before broad rollout

Lessons learned: where 800G transceivers create real technical debt risk

The biggest lesson is that 800G transceivers force discipline. If you tolerate sloppy fiber handling, inconsistent polarity, or weak optics monitoring, the system will eventually punish you with intermittent errors that are hard to reproduce. The second lesson is that the OEM-versus-third-party decision matters: OEM modules may be pricier, but they reduce the probability of compatibility churn.

Finally, treat optics as part of your reliability engineering, not just procurement. Spares strategy, DOM-based monitoring, and connector hygiene are operational practices that keep the fleet healthy.

Common mistakes / troubleshooting tips

Here are the failure modes we saw repeatedly in real deployments, along with root causes and fixes.

- Mistake: “It just won’t link” or port stays down after insertion.

  Root cause: switch firmware optics acceptance mismatch or unsupported transceiver part number.

  Solution: confirm exact part numbers on the switch optics compatibility list and update firmware in a controlled lab test first.

- Mistake: CRC errors that spike only under load.

  Root cause: marginal link budget from dirty connectors, damaged patch cords, or MPO polarity mismatch.

  Solution: clean connectors, re-verify MPO polarity, replace suspect patch cords, and compare DOM receive power to baseline.

- Mistake: Works during initial testing, then degrades after days.

  Root cause: connector contamination introduced during maintenance or thermal cycling effects on poorly seated optics.

  Solution: enforce a cleaning workflow, tighten seating checks, and alert on DOM drift rather than waiting for flaps.

- Mistake: Overestimating multimode reach on OM3/older corridors.

  Root cause: older fiber attenuation and higher variability create insufficient margin.

  Solution: map worst-case segments with OTDR, then assign SR4.2 for suitable OM4 or use LR8 for risky links.

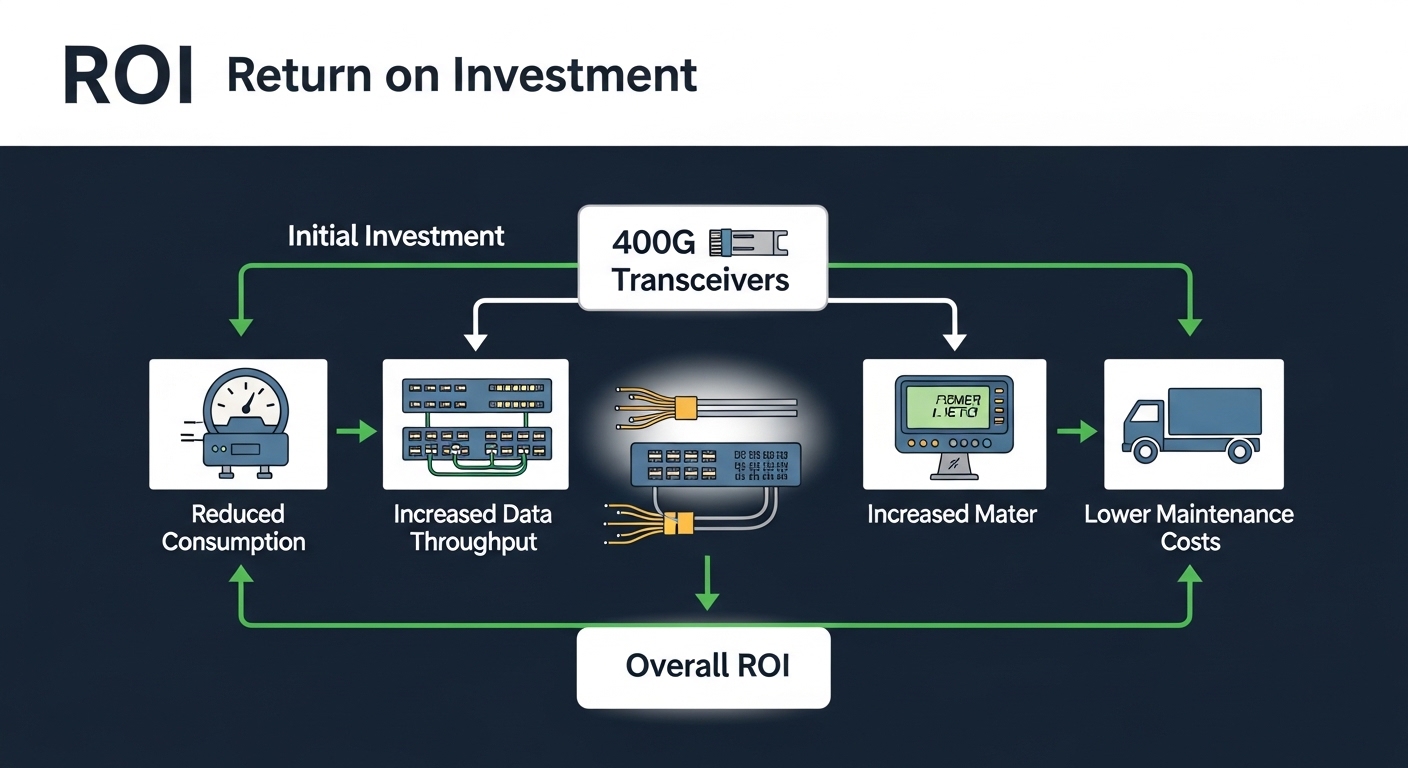

Cost & ROI note: what the numbers usually look like

Pricing varies by vendor, volume, and whether you buy OEM or third-party. As a realistic planning range, many teams budget roughly $2,000 to $5,000 per 800G transceiver for short-reach multimode and $3,000 to $7,000+ for single-mode long-reach optics, with institutional discounts possible.

TCO is not just optics purchase price. Consider labor time for fiber cleaning and polarity verification, downtime risk during cutover, and the cost of failed acceptance tests. In our case, the ROI came from reducing training time (direct compute efficiency) and avoiding repeated troubleshooting loops that consume engineers and maintenance windows.

FAQ

Q1: Are 800G transceivers always multimode for AI clusters?

No. Many AI clusters use multimode for short intra-row links, but single-mode 800G transceivers (LR variants) are common for longer corridors or older wings where OM4 assumptions do not hold. The right approach is mapping fiber plant reality and assigning optics per segment.

Q2: How do I confirm switch compatibility before buying 800G optics?

Check the switch vendor’s optics compatibility list for the exact transceiver part number. Then validate in a lab or pilot environment with the same switch firmware version you plan to run in production. “Looks compatible” is not a reliable acceptance strategy.

Q3: What DOM telemetry should I alert on?

At minimum, alert on temperature and receive power drift. If your vendor exposes laser bias current and warning flags, include them too. Trend-based alerts usually catch contamination or marginal conditions earlier than link flap events.

Q4: What is the most common physical-layer cause of intermittent errors?

In practice, connector cleanliness and MPO polarity errors are very common. They can pass basic link bring-up tests but fail under real traffic patterns due to reduced margin. Always clean, verify polarity, and re-test with the same patch cords under load.

Q5: Should we standardize on one 800G transceiver model?

Standardization helps spares and training, but it is not always optimal for reach and budget. A mixed strategy (SR4.2 for clean OM4 segments and LR8 for risky segments) often reduces downtime risk and improves overall reliability.

Q6: How do I estimate ROI for moving to 800G transceivers?

Start with training step time reduction, then translate it into compute utilization gains and reduced time-to-results. Add operational savings from fewer troubleshooting events and fewer maintenance windows. Even modest improvements can pay back quickly in large AI environments.
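A back-of-the-envelope version of that estimate is sketched below. Only the 14% step time reduction comes from this deployment, and it was measured during peak all-reduce phases, so applying it to all GPU hours gives an optimistic upper bound. The optics count, unit price, labor cost, GPU hours, and cost per GPU hour are hypothetical inputs.

```python
def monthly_savings(gpu_hours_per_month: float, cost_per_gpu_hour: float,
                    step_time_reduction: float) -> float:
    """Compute cost freed up if the same work needs proportionally fewer GPU hours."""
    if not 0 <= step_time_reduction < 1:
        raise ValueError("step_time_reduction must be in [0, 1)")
    return gpu_hours_per_month * cost_per_gpu_hour * step_time_reduction


def payback_months(upfront_cost: float, savings_per_month: float) -> float:
    """Months until cumulative savings cover the upfront spend."""
    if savings_per_month <= 0:
        return float("inf")
    return upfront_cost / savings_per_month


# Hypothetical inputs: 200 optics at $3,000 each plus $50,000 of labor,
# and 500,000 GPU hours per month at $2.00 per GPU hour.
upfront = 200 * 3000 + 50_000
savings = monthly_savings(500_000, 2.00, 0.14)
print(f"payback in about {payback_months(upfront, savings):.1f} months")
```

Operational savings from fewer troubleshooting events are left out here; adding them as a separate monthly term would shorten the estimated payback.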

If you are planning the next upgrade, pair this with a careful optics monitoring plan and a fiber hygiene workflow. For a related topic, see “How to build an optics telemetry and alerting strategy” and align it with your transceiver fleet lifecycle.

Author bio: I am a CTO who has deployed high-speed Ethernet fabrics for AI and HPC, focusing on optics compatibility, telemetry-driven reliability, and reducing tech debt during upgrades. I write from hands