In data centers, the move from 400G to 800G is less about buying faster optics and more about surviving the optics, firmware, and lane-mapping realities of modern switches. This article helps network and facilities teams plan the transition with actionable selection criteria, measurable link checks, and failure-mode troubleshooting. If you are refreshing leaf-spine fabric, upgrading ToR uplinks, or rebuilding a spine with new silicon, you will find a step-by-step implementation path you can execute this quarter.

Step-by-step prerequisites before touching 800G optics

Before racking new transceivers, confirm the switch platform, optics cage wiring, and software train that governs lane mapping and FEC behavior. In practice, I have seen “it should work” optics fail because the switch expects a specific breakout mode or FEC capability advertisement.

Prerequisites checklist

- Switch platform and OS: record the exact model number and running software version of every switch that will carry 800G ports.

- Transceiver form factor: confirm QSFP-DD 800G or OSFP 800G expectations based on your chassis.

- Fiber plant inventory: map MPO/MTP trunks by ribbon group, connector type, and measured attenuation per span.

- Power and thermal constraints: verify airflow targets and ensure the optics bay is not operating near upper temperature limits.

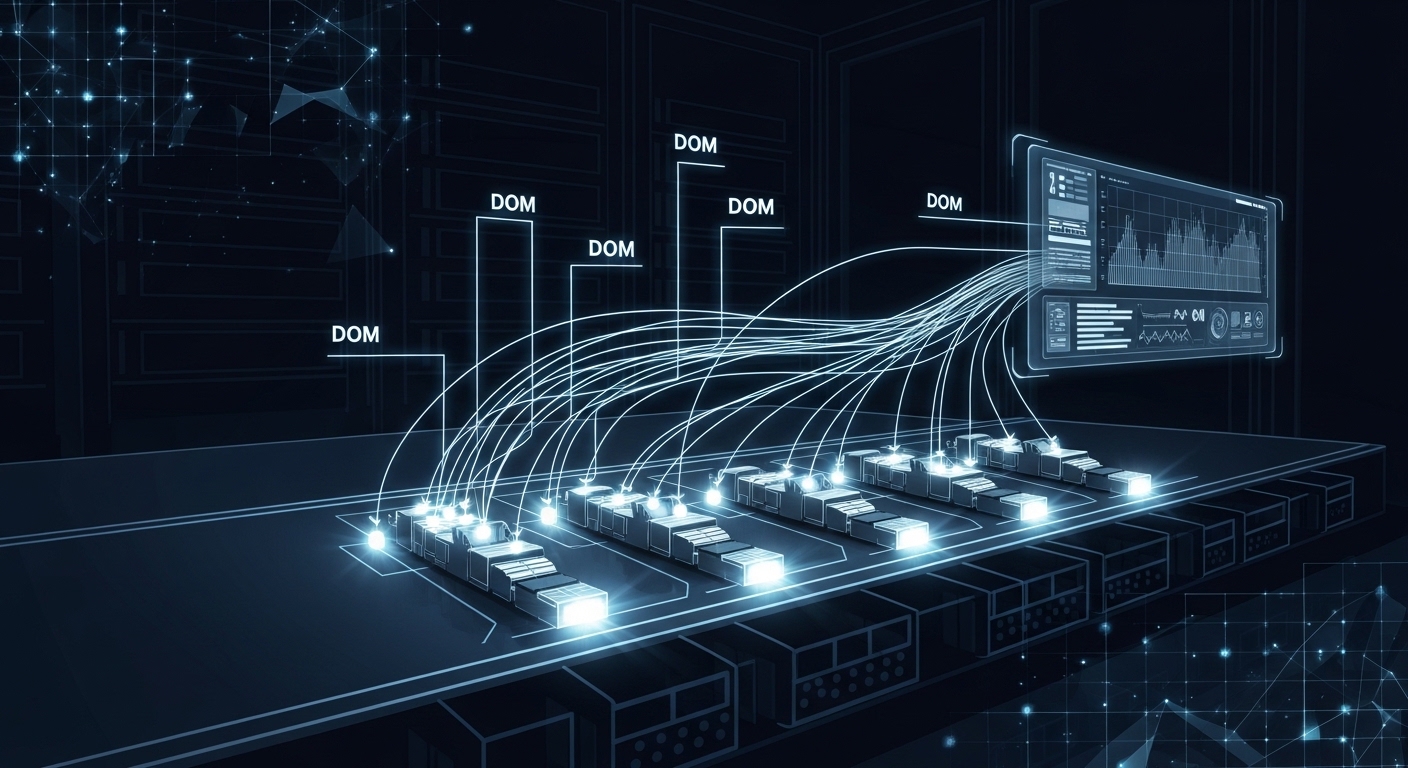

- DOM/EEPROM support policy: ensure the operations team accepts vendor DOM formats and telemetry tooling.

Expected outcome: You can identify which optics families are electrically compatible and which fiber bundles are safe to light without violating link budgets.

Choose the 800G optics type that matches your fiber reality

800G deployments typically use either short-reach multimode optics or 2 km class single-mode optics, depending on whether your fabric stays within a pod or spans buildings. Distance is not the only factor; connector style and the optical budget that your measured fiber loss can support matter just as much.

Key specification comparison (representative classes)

Use this table as a planning baseline; always confirm exact vendor datasheets for your transceiver part number.

| Optics class | Typical wavelength | Target reach | Connector | Data rate | DOM | Operating temp |
|---|---|---|---|---|---|---|
| 800G SR (multimode) | 850 nm nominal | 100 m to 150 m class (MMF) | MPO-16 or 2x MPO-12 (typical) | 800G | Supported (vendor-specific) | 0 °C to 70 °C typical |
| 800G LR (single-mode) | 1310 nm nominal | 2 km class (SMF) | LC duplex (typical) | 800G | Supported (vendor-specific) | -5 °C to 70 °C typical |

Expected outcome: You select a module family aligned to your measured attenuation and connector standards, reducing “mystery link” failures later.
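
To make "aligned to your measured attenuation" concrete, here is a minimal sketch of the link budget arithmetic: sum the fiber, connector, and splice loss, then subtract the total from the module's channel budget. The `Span` type, its field names, and every number in the example are assumptions for illustration; take the real budget from the datasheet for your part number.

```python
from dataclasses import dataclass


@dataclass
class Span:
    """One fiber path between two transceivers (values measured or assumed)."""
    length_km: float               # physical length of the span
    fiber_loss_db_per_km: float    # measured or specified attenuation
    connector_pairs: int           # mated connector pairs in the path
    loss_per_connector_db: float   # loss per mated pair
    splices: int = 0
    loss_per_splice_db: float = 0.0


def total_loss_db(span: Span) -> float:
    """Sum fiber, connector, and splice loss for the span."""
    return (
        span.length_km * span.fiber_loss_db_per_km
        + span.connector_pairs * span.loss_per_connector_db
        + span.splices * span.loss_per_splice_db
    )


def margin_db(span: Span, channel_budget_db: float) -> float:
    """Remaining headroom; a negative value means the span exceeds the budget."""
    return channel_budget_db - total_loss_db(span)


# Hypothetical values only: replace with your measurements and datasheet budget.
example = Span(length_km=0.1, fiber_loss_db_per_km=3.0,
               connector_pairs=2, loss_per_connector_db=0.5)
print(f"loss={total_loss_db(example):.2f} dB, "
      f"margin={margin_db(example, channel_budget_db=1.9):.2f} dB")
```

Running this per trunk before procurement gives you a margin figure to compare across candidate module classes, rather than relying on rated reach alone.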

Pro Tip: In many deployments, the optics work on the bench yet fail in production because MPO polarity and ribbon ordering differ between contractors. Before power-up, validate polarity end-to-end with a continuity test and a documented fiber map, not just “connector looks right.”
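
A documented fiber map can be checked mechanically before anyone lights the trunk. The sketch below assumes the map is recorded as near-end position to far-end position and compares it with the three common trunk polarity types (Type A straight-through, Type B reversed, Type C pair-flipped). The function names and the dictionary format are hypothetical, and the check complements a physical continuity test rather than replacing it.

```python
def expected_map(method: str, positions: int = 12) -> dict[int, int]:
    """Return the near-end -> far-end position map for a trunk polarity type."""
    if positions < 2 or positions % 2:
        raise ValueError("positions must be a positive even number")
    span = range(1, positions + 1)
    if method == "A":   # straight-through: 1 -> 1, 2 -> 2, ...
        return {p: p for p in span}
    if method == "B":   # reversed: 1 -> N, 2 -> N-1, ...
        return {p: positions + 1 - p for p in span}
    if method == "C":   # adjacent pairs flipped: 1 -> 2, 2 -> 1, ...
        return {p: p + 1 if p % 2 else p - 1 for p in span}
    raise ValueError(f"unknown polarity method: {method!r}")


def polarity_mismatches(documented: dict[int, int], method: str) -> list[int]:
    """List near-end positions whose documented far end differs from the expected one."""
    expected = expected_map(method, positions=len(documented))
    return [p for p in sorted(documented) if documented[p] != expected.get(p)]


# Hypothetical example: a Type B trunk where two fibers were swapped by mistake.
documented = expected_map("B")
documented[1], documented[2] = documented[2], documented[1]
print(polarity_mismatches(documented, "B"))
```

An empty list means the documented map matches the stated method; any listed position is a fiber to trace before power-up.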

Validate switch compatibility, lane mapping, and FEC expectations

800G implementations often rely on internal lane grouping and encoding that must match the transceiver’s capabilities. Even when both sides are “800G,” a mismatch in advertised capabilities can manifest as link flaps, high BER counters, or a port that never leaves the operationally down state.

Implementation actions

- Check optics compatibility list: verify the exact module part number is supported by your switch vendor’s optics matrix.

- Confirm FEC mode: review whether the platform expects RS-FEC or another FEC profile for the chosen reach.

- Verify breakout and lane mapping: ensure the port mode is set to the expected 800G lane configuration (no accidental 4x200G or 2x400G mapping).

- DOM telemetry sanity: confirm DOM reads succeed and that laser bias, temperature, and received power are within expected ranges.

Expected outcome: The port transitions to “link up” with stable error counters and coherent telemetry.
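
The four implementation actions above can be expressed as one pre-flight function that returns a list of problems. This is a sketch under stated assumptions: `PortState`, its field names, the part number, and the DOM ranges are all hypothetical, and collecting the values from your switch CLI or API is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class PortState:
    """Snapshot of one port; every field name here is hypothetical."""
    part_number: str
    fec_mode: str        # e.g. "rs-fec"
    port_mode: str       # e.g. "1x800G"
    dom: dict[str, float] = field(default_factory=dict)


# Placeholder ranges for illustration; use the limits from your module datasheet.
EXAMPLE_DOM_RANGES = {
    "temperature_c": (0.0, 70.0),
    "rx_power_dbm": (-6.0, 4.0),
}


def preflight(port, supported_parts, expected_fec, expected_mode, dom_ranges):
    """Return human-readable problems; an empty list means every check passed."""
    problems = []
    if port.part_number not in supported_parts:
        problems.append(f"part {port.part_number} not on supported optics list")
    if port.fec_mode != expected_fec:
        problems.append(f"FEC is {port.fec_mode}, expected {expected_fec}")
    if port.port_mode != expected_mode:
        problems.append(f"port mode is {port.port_mode}, expected {expected_mode}")
    for name, (low, high) in dom_ranges.items():
        value = port.dom.get(name)
        if value is None:
            problems.append(f"DOM read missing: {name}")
        elif not low <= value <= high:
            problems.append(f"DOM {name}={value} outside {low}..{high}")
    return problems


port = PortState("EXAMPLE-800G-SR8", "rs-fec", "2x400G",
                 {"temperature_c": 45.0, "rx_power_dbm": -2.0})
for problem in preflight(port, {"EXAMPLE-800G-SR8"}, "rs-fec", "1x800G",
                         EXAMPLE_DOM_RANGES):
    print(problem)
```

Returning every problem at once, instead of stopping at the first, saves a round trip during a change window when a port has both a mode and a FEC mismatch.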

Deploy in a real data center scenario without breaking the fabric

Consider a 3-tier data center fabric with a leaf-spine topology: 48-port 10G ToR switches feed 25G or 100G aggregation, and the spine uses 800G uplinks to reduce oversubscription. In one upgrade I supported, we moved from 400G to 800G on two spine rows, each carrying 32 uplinks, across a measured 120 m multimode trunk budget. We scheduled the change window for 02:00 local time, warmed up the optics in the aisle for 30 minutes to stabilize temperature, and validated DOM telemetry before enabling traffic profiles.

Operational sequence (what you actually do)

- Prestage transceivers and label both ends of each MPO trunk.

- Power-cycle the target ports only after fiber polarity verification passes.

- Enable the port, then monitor link stability for at least 15 minutes.

- Run a controlled traffic test (iperf-like throughput or vendor test tool) and confirm error counters remain near baseline.

Expected outcome: You complete the first row upgrade with stable links and measurable throughput, then replicate the pattern across remaining ports.
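
The 15-minute stability check is easy to automate once link state is polled into a list. The sketch below assumes time-ordered `(timestamp_seconds, link_up)` samples; how you poll them is platform-specific and not shown, and the function names are hypothetical.

```python
def flap_count(samples):
    """Count up -> down transitions in time-ordered (timestamp_s, link_up) samples."""
    return sum(
        1 for (_, prev), (_, cur) in zip(samples, samples[1:]) if prev and not cur
    )


def continuous_up_seconds(samples):
    """Seconds of unbroken link-up ending at the newest sample."""
    if not samples or not samples[-1][1]:
        return 0.0
    newest = samples[-1][0]
    start = newest
    for timestamp, up in reversed(samples):
        if not up:
            break
        start = timestamp
    return newest - start


def is_stable(samples, min_seconds=15 * 60):
    """True once the link has stayed up for at least min_seconds."""
    return continuous_up_seconds(samples) >= min_seconds


# Hypothetical poll results: one flap early on, then 15 minutes of clean uptime.
samples = [(0.0, True), (60.0, False), (120.0, True), (1020.0, True)]
print(flap_count(samples), continuous_up_seconds(samples), is_stable(samples))
```

Measuring uptime from the most recent down event, not from the first sample, means a flap in the middle of the window restarts the clock, which matches the intent of the monitoring step.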

Monitor telemetry and enforce a link budget discipline

Once the port is up, treat optics telemetry as an operational contract. Track received optical power (or equivalent metrics), laser bias drift, and temperature. Received power that sits consistently near the threshold often predicts future failures as connectors age or dust accumulates.

Telemetry thresholds to watch

- Optics temperature: ensure it stays within vendor bounds during peak airflow conditions.

- Laser bias and output power: watch for slow drift over weeks.

- Receiver power: compare against the expected range for your module class.

- BER/errored frames: confirm the platform reports stable post-FEC performance.

Expected outcome: You catch marginal links early, before they become intermittent outages.
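
Two simple computations cover most of this list: headroom against a warning threshold, and slow drift measured as a least-squares slope. The sketch below assumes daily samples of a DOM value; the threshold, the 1 dB guard band, and the sample data are hypothetical placeholders, not vendor limits.

```python
def slope_per_day(days, values):
    """Least-squares slope of values against days (units of value per day)."""
    n = len(days)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired samples")
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("all samples share the same timestamp")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    return sxy / sxx


def is_marginal(rx_dbm, low_warn_dbm, guard_db=1.0):
    """True when received power sits within guard_db of the low-warning threshold."""
    return rx_dbm - low_warn_dbm < guard_db


# Hypothetical receive power readings (dBm) taken once per day.
days = [0, 1, 2, 3]
rx_dbm = [-2.0, -2.1, -2.2, -2.3]
print(f"drift: {slope_per_day(days, rx_dbm):.3f} dB/day")
print("marginal:", is_marginal(rx_dbm[-1], low_warn_dbm=-6.0))
```

The same `slope_per_day` helper works for laser bias current or temperature; only the units and the alerting threshold change.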

Selection criteria and decision checklist for 800G optics

Use this ordered checklist during procurement and engineering sign-off. It is designed for the reality that optics choices cascade into fiber handling and operational tooling.

- Distance and reach class: measured fiber attenuation and connector loss, not just “rated reach.”

- Switch compatibility: confirm the exact part number appears on the supported optics list.

- Connector and polarity constraints: MPO/MTP polarity method and patch panel conventions.

- DOM support and telemetry tooling: ensure your monitoring stack can parse the vendor’s DOM values.

- Operating temperature and airflow: verify airflow design targets and module thermal limits.

- Vendor lock-in risk: compare OEM vs third-party availability and warranty terms.

- Planned growth: choose modules that do not paint you into a corner for future lane upgrades.

Expected outcome: A defensible selection that survives audits, spares planning, and future troubleshooting.

Common mistakes and troubleshooting tips during 800G transitions

When 800G links fail, the root cause is usually procedural: polarity, mode mismatch, or unsupported optics. Below are the top failure modes I have seen repeatedly in production.

Port stays down after optics insertion

Root cause: the port mode or breakout configuration does not match the transceiver’s expected lane grouping. Some platforms require explicit 800G mode selection per interface. Solution: set the port to the correct 800G profile, then verify the FEC mode and confirm the module is on the supported list.

Link flaps or shows high post-FEC errors

Root cause: MPO polarity reversed or ribbon ordering swapped during cabling. This can produce a receiver that “sees something” but cannot sustain stable decoding. Solution: perform an end-to-end polarity verification and swap the MPO polarity using the correct patching method; re-check received power and BER counters.

Works at first, then degrades after weeks

Root cause: connector contamination and inadequate cleaning cadence. Dust can be tolerable at initial power but becomes problematic as lasers age or temperatures drift. Solution: implement a cleaning SOP with microscope inspection, replace suspect jumpers, and track telemetry trends to flag marginal optics.
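
The three failure modes above can be folded into a first-pass triage function for runbooks or ticket templates. This is a sketch only: the parameter names are hypothetical, and the one-week cutoff that separates "degrades after weeks" from a turn-up problem is an assumption, not a value from any vendor.

```python
from typing import Optional


def triage(ever_linked: bool,
           flapping_or_post_fec_errors: bool,
           weeks_healthy_before_errors: Optional[float] = None) -> str:
    """Map observed symptoms to the first thing worth checking."""
    if not ever_linked:
        # Port stays down after optics insertion.
        return "check port mode/breakout, FEC mode, and supported optics list"
    if weeks_healthy_before_errors is not None and weeks_healthy_before_errors >= 1:
        # Worked at first, then degraded: contamination is the usual suspect.
        return "inspect and clean connectors; review telemetry trends"
    if flapping_or_post_fec_errors:
        # Link came up but cannot sustain stable decoding.
        return "verify MPO polarity and ribbon order end-to-end"
    return "no matching failure mode; collect more data"


print(triage(ever_linked=False, flapping_or_post_fec_errors=False))
print(triage(ever_linked=True, flapping_or_post_fec_errors=True))
print(triage(True, True, weeks_healthy_before_errors=6))
```

The slow-degradation check runs before the polarity check because a link that was healthy for weeks was cabled correctly at turn-up, so contamination is the more likely cause of new errors.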

Cost and ROI note for data center teams

Pricing varies widely by reach class and vendor, but in typical procurement cycles, 800G SR optics often cost several hundred to over a thousand USD per module, while 800G LR can be higher depending on single-mode reach and packaging. OEM optics may carry higher unit cost but often reduce compatibility risk and shorten incident time-to-resolution. For third-party optics, the TCO can look favorable until you factor in failed insertions, longer RMA cycles, and potential support gaps during a live traffic cutover.

Expected outcome: You budget for both optics and the operational overhead required to keep them healthy: cleaning supplies, microscopes, spares, and monitoring hooks.
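
To compare OEM and third-party options on more than unit price, you can fold incident risk into an expected cost per module. The sketch below is deliberately simple and every number in it is hypothetical; substitute your own quotes, observed failure rates, and incident cost estimates.

```python
def expected_cost_per_module(unit_cost, incident_probability, incident_cost):
    """Unit price plus the probability-weighted cost of one incident."""
    if not 0.0 <= incident_probability <= 1.0:
        raise ValueError("incident_probability must be between 0 and 1")
    return unit_cost + incident_probability * incident_cost


# Hypothetical inputs for illustration only (USD).
oem = expected_cost_per_module(unit_cost=1200.0, incident_probability=0.01,
                               incident_cost=5000.0)
third_party = expected_cost_per_module(unit_cost=800.0, incident_probability=0.05,
                                       incident_cost=5000.0)
print(f"OEM: {oem:.0f} USD, third-party: {third_party:.0f} USD")
```

The comparison is only as good as the incident inputs, so it helps to record failed insertions and RMA turnaround per part number from the first deployment onward.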

FAQ

Which fiber type matters most for data centers moving to 800G?

Distance dominates, but connector and polarity handling often determine success. If you are using multimode for short reach, verify MPO/MTP conventions and measured attenuation across the exact trunk you will light. For longer spans, choose a single-mode reach class that covers your measured loss, including splices.

Can we mix OEM and third-party optics in the same data center?

In principle, yes, but in practice it depends on your switch vendor’s optics matrix and DOM handling. Mixing can complicate incident response because telemetry formats and supported capabilities may differ. If you do mix, standardize on a small set of validated part numbers.

What should we monitor first after enabling an 800G port?

Start with link state stability and then validate DOM telemetry: temperature, laser bias/output power, and received power. Finally, confirm post-FEC error counters remain stable under real traffic patterns, not only during idle tests.