Moving from 400G to 800G can break in subtle ways: optics reach mismatches, DOM/telemetry gaps, and switch retimer behavior that only shows up under load. This article follows a hands-on case study from a production leaf-spine fabric, showing the decision path, the chosen transceivers, and the measured outcomes. It is written for network engineers, platform teams, and field ops who must keep latency stable while increasing port density.

Problem / challenge: when 400G works but 800G stings

In the original 400G design, the team used non-coherent pluggable optics over multimode and single-mode fiber with predictable link budgets. The 800G transition changed two things at once: (1) optics moved to higher lane counts and tighter electrical margins, and (2) switch ASICs became more sensitive to module behavior during link training. The result was a rollout window full of “link up, traffic drops later” cases, even when the optics appeared compatible.

The challenge was not just throughput. The upgrade had to protect p99 latency, maintain packet loss below 0.01% during microbursts, and avoid introducing silent CRC growth. The team also had to reduce operational risk: a half-day optics swap is manageable, but a week-long incident is not.

Environment specs that shaped the decision

The fabric was a 3-tier leaf-spine topology with 48-port ToR switches and 64-port spine switches. Each leaf-to-spine uplink used 400G at first, then moved to 800G for a subset of racks to accelerate a capacity refresh. Cabling mix: 850 nm OM4 for intra-row runs, 1310 nm single-mode for longer spans, and a few legacy OM3 segments that were kept only where link distance proved safe.

Operating constraints were concrete: target link budget margin of at least 2 dB for optics aging tolerance, switch port temperature stability within ±3 °C of baseline, and a maintenance window that allowed only rolling reboots per pair of spines.

Optical fundamentals for the 400G to 800G transition

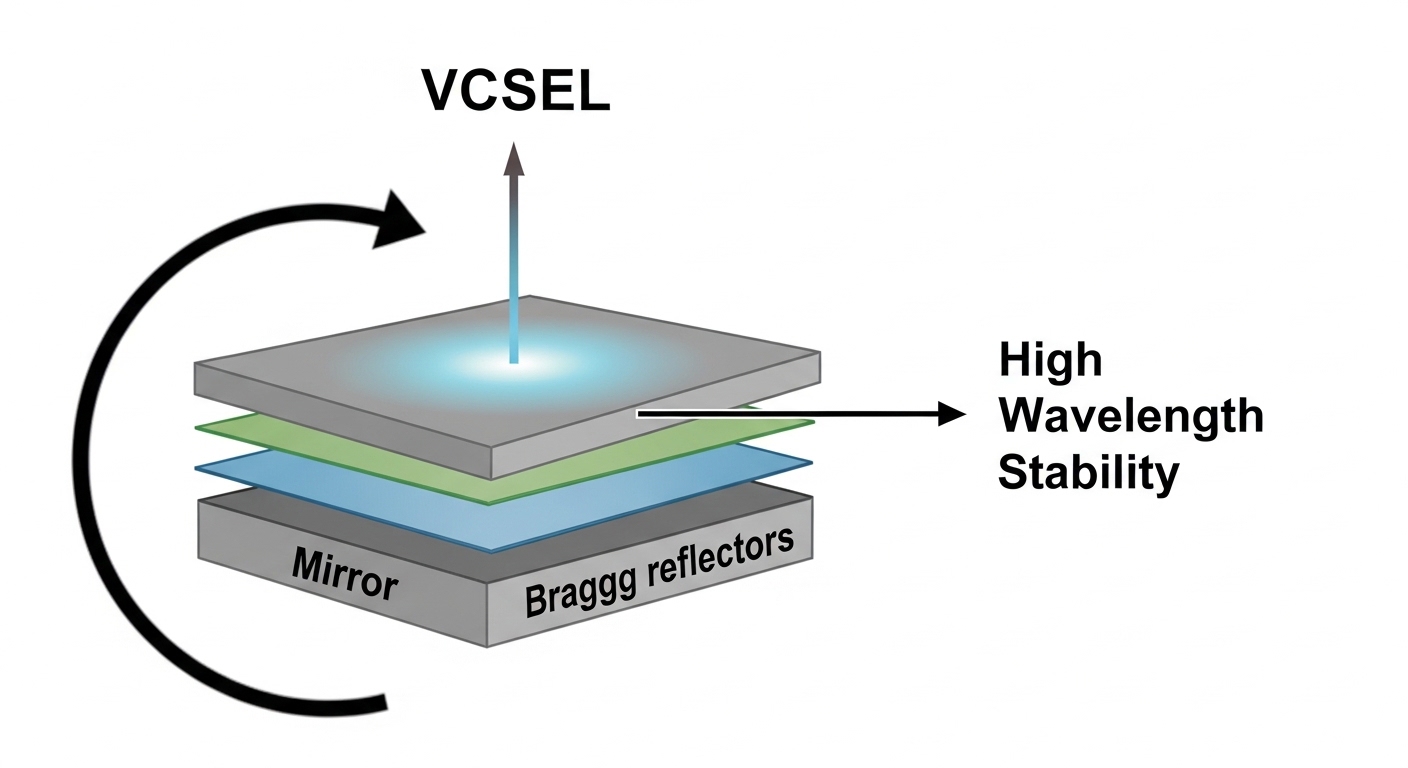

The key optical shift is that 800G doubles the aggregate data rate while preserving the same broad physical-layer concepts: lane encoding, SerDes equalization, and link training. Whether the implementation uses 2x400G electrical aggregation or a true 800G optical interface, the module must meet the host’s electrical interface timing as well as the optical transmit power and receiver sensitivity requirements.

What “reach” really means in practice

Engineers often treat reach as a single number, but in real deployments it’s a budget: transmit power minus losses from fiber attenuation and connectors, minus additional margin for bends, patch panels, and aging. For multimode at 850 nm, modal bandwidth and differential mode delay can dominate; for single-mode at 1310/1550 nm, chromatic dispersion is manageable but connector cleanliness and end-face reflectance still matter.
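The budget arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a vendor formula: the function name, parameters, and every number in the example call are assumptions chosen for readability, and real values should come from the module datasheet and your own measurements.

```python
def link_margin_db(tx_power_dbm, rx_sensitivity_dbm, fiber_km,
                   atten_db_per_km, connector_losses_db, penalties_db=0.0):
    """Return the margin (dB) left after subtracting all losses.

    power budget = transmit power - receiver sensitivity
    channel loss = fiber attenuation + sum of connector/patch losses
    penalties_db = extra allowance for bends, aging, and similar effects
    """
    power_budget_db = tx_power_dbm - rx_sensitivity_dbm
    channel_loss_db = fiber_km * atten_db_per_km + sum(connector_losses_db)
    return power_budget_db - channel_loss_db - penalties_db


# Hypothetical values for illustration only (not taken from any datasheet).
margin = link_margin_db(
    tx_power_dbm=-2.0,
    rx_sensitivity_dbm=-8.0,
    fiber_km=0.08,                         # 80 m run
    atten_db_per_km=3.0,                   # assumed multimode attenuation
    connector_losses_db=[0.5, 0.5, 0.75],  # two connectors and one patch panel
    penalties_db=1.0,
)
print(f"remaining margin: {margin:.2f} dB")
```

Keeping connector losses as a list makes it easy to see how each extra patch panel eats into the margin.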

In an 800G transition, the tolerances tighten. Even if the nominal reach is “within spec,” the host switch’s receiver linearity under high-speed signaling can reduce margin. That is why field teams validate with measured BER and error counters, not just a datasheet checkbox.

Standards and vendor guardrails

Base electrical and optical characteristics are defined across IEEE and industry interoperability efforts. For Ethernet framing and operation, IEEE 802.3 remains the foundation for 400G and 800G Ethernet behaviors. For optics interoperability and performance expectations, vendors publish module datasheets that align to the relevant ecosystem specs; you should also review switch vendor optics compatibility lists before procurement. Authority references used in the case study include IEEE 802.3 and vendor documentation such as Cisco QSFP-DD/SFP-DD optics guidance and Finisar/FS optics datasheets.

| Parameter | 400G (typical) | 800G (typical) | Why it matters in the 800G transition |
|---|---|---|---|
| Aggregate data rate | 400 Gb/s | 800 Gb/s | More lanes and tighter equalization tolerance |
| Wavelength options (common) | 850 nm MMF, 1310 nm SMF | 850 nm MMF, 1310 nm/1550 nm SMF | Reach and modal bandwidth constraints shift with optics type |
| Reach (examples) | Up to ~100 m on OM4 at 850 nm (varies) | Up to ~100 m on OM4 at 850 nm (varies by module) | Budget must include patch loss and aging margin |
| Connector types | LC duplex | LC duplex (common) | Connector cleanliness and insertion loss become more visible |
| DOM / telemetry | Usually supported | DOM strongly expected | Switch may refuse or warn on missing telemetry |
| Operating temperature | Commercial/Industrial options | Commercial/Industrial options | High-speed optics can drift with thermal stress |
| Power and cooling | Lower module draw | Higher module power density | Thermal headroom affects link stability |

Update note: This article reflects field observations and common module behaviors current as of 2026-05. Always re-check the latest switch firmware and optics compatibility matrix before rollout.

Chosen solution & why: selecting optics that survive link training

The team used a two-tier selection strategy: first, align optics form factor and interface type with the switch hardware; second, verify the operational behavior under real traffic patterns. For the 800G transition, the primary optics family selected for short reach was 850 nm multimode, using LC duplex connectors and DOM support. For longer links and spine-to-core segments, 1310 nm single-mode optics were selected with the same module management requirements.

Examples of module families used in the case study

For multimode 850 nm, the team evaluated both OEM modules and third-party compatible modules. Reference models that engineers commonly test in similar environments include Cisco-branded modules and third-party equivalents from Finisar and FS.com. Part naming differs by generation: a name such as FS.com SFP-10GSR-85 denotes a 10G SFP+ part, whereas 800G optics use QSFP-DD or OSFP form factors and naming patterns. The exact model number must match the switch’s port type and supported wavelength. For single-mode, the team targeted 1310 nm optics with verified receiver sensitivity specs and DOM compliance.

In practice, the “right” optics were less about brand and more about three measurable properties: transmit power at end-of-life, receiver sensitivity, and deterministic DOM behavior during link bring-up. The switch firmware used in the case study enforced these checks more strictly than the previous generation firmware.

Pro Tip: During an 800G transition, treat “DOM detected” as necessary but not sufficient. Field teams learned to monitor host-side error counters and temperature telemetry for the first 24 hours after cutover; optics can pass link training yet drift into higher BER during thermal cycling, especially in dense cages with marginal airflow.

Implementation steps: a rollout that avoided silent failures

The rollout was staged per spine pair. The team used a maintenance plan that minimized blast radius and made failures observable before full traffic shifts. Every step included an explicit verification metric so the team could decide whether to proceed, roll back, or isolate a single link.

Pre-qualification in a lab-like staging area

Before touching production, the team validated optics in a controlled rack with representative fiber lengths and patch panel types. They confirmed that the switch accepted the module, that DOM telemetry populated expected fields, and that link training completed without “fallback” modes.

Fiber verification and loss budgeting

For OM4 runs, they inspected patch panel cleanliness and measured insertion loss with an optical test set. The target was to keep total loss comfortably under the module’s specified maximum, leaving the 2 dB aging margin. For single-mode, they verified connector reflectance and cleaned LC ferrules, because even small contamination can raise error rates under higher-speed signaling.
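One way to turn the measured insertion loss into a go/no-go signal is to sort each link into pass, marginal, or fail. The sketch below assumes a per-module maximum channel loss taken from the datasheet; the function name, link names, and loss values are hypothetical, and only the 2 dB margin comes from the case study.

```python
AGING_MARGIN_DB = 2.0  # aging margin target used in the case study


def classify_link(measured_loss_db, module_max_loss_db,
                  margin_db=AGING_MARGIN_DB):
    """Classify a link from its measured end-to-end insertion loss.

    "fail"     : measured loss exceeds the module's specified maximum
    "marginal" : within the maximum, but the aging margin is not preserved
    "pass"     : at or below the maximum minus the aging margin
    """
    if measured_loss_db > module_max_loss_db:
        return "fail"
    if measured_loss_db > module_max_loss_db - margin_db:
        return "marginal"
    return "pass"


# Hypothetical measurements (dB) against an assumed 4.0 dB module maximum.
measurements = {"leaf01-spine01": 1.2, "leaf01-spine02": 2.6, "leaf02-spine01": 4.3}
for link, loss_db in measurements.items():
    print(link, classify_link(loss_db, module_max_loss_db=4.0))
```

Links in the "marginal" bucket are the ones worth cleaning or re-patching before cutover, since they satisfy the datasheet yet leave no room for aging.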

Controlled cutover with traffic shaping

The team performed rolling cutovers: leaf uplinks were migrated one group at a time. They used traffic profiles that included both steady-state throughput and microbursts to reveal buffer and retimer sensitivity. After each link came up, they monitored CRC counters, FEC-related indicators (if applicable), and link flap events for at least 30 minutes before expanding scope.
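The 30-minute soak check can be expressed as a small function over periodic counter snapshots. This is a sketch under stated assumptions: the `Sample` fields, the zero-tolerance thresholds, and the way samples are collected (SNMP, gNMI, CLI scraping) are all hypothetical and not taken from the case study tooling.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    t_s: float       # seconds since the link came up
    crc_errors: int  # cumulative CRC error counter
    flaps: int       # cumulative link flap counter


def soak_ok(samples, min_window_s=30 * 60, max_crc_delta=0, max_flaps=0):
    """Return True only if the soak window is long enough and clean."""
    if len(samples) < 2:
        return False
    first, last = samples[0], samples[-1]
    if last.t_s - first.t_s < min_window_s:
        return False  # not enough observation time yet
    # A counter that moves backwards suggests a reset; treat as inconclusive.
    for prev, cur in zip(samples, samples[1:]):
        if cur.crc_errors < prev.crc_errors or cur.flaps < prev.flaps:
            return False
    crc_delta = last.crc_errors - first.crc_errors
    flap_delta = last.flaps - first.flaps
    return crc_delta <= max_crc_delta and flap_delta <= max_flaps


# Hypothetical snapshots taken every 10 minutes after link-up.
clean = [Sample(0, 12, 0), Sample(600, 12, 0), Sample(1200, 12, 0), Sample(1800, 12, 0)]
print(soak_ok(clean))
```

Comparing deltas rather than absolute values matters because counters often carry history from before the cutover.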

Firmware alignment and compatibility checks

Switch firmware matters because it defines how the host equalizes incoming signals and interprets module diagnostics. The team ensured the switch image matched the optics generation expectations and applied any vendor advisories related to high-speed link stability. This step reduced the number of “works at idle, fails under load” links.

Measured results: what improved and what didn’t

After completing the 800G transition for the targeted uplinks, the fabric met capacity goals without major latency regressions. The measured p99 latency remained within +3% of the pre-upgrade baseline under normal workload. Packet loss stayed below 0.01% for the monitored windows, and CRC error counters did not show sustained growth.
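A latency guardrail like the +3% p99 bound can be checked with the Python standard library. The sketch below is illustrative: the function names and the synthetic samples are assumptions, and a real check would use latency measurements exported from your own monitoring.

```python
import statistics


def p99(values):
    """99th percentile using linear interpolation over the sample."""
    # quantiles(n=100) returns 99 cut points; index 98 is the 99th percentile.
    return statistics.quantiles(values, n=100, method="inclusive")[98]


def within_latency_budget(baseline, current, max_regression=0.03):
    """True if current p99 is no more than max_regression above baseline p99."""
    return p99(current) <= p99(baseline) * (1 + max_regression)


# Synthetic samples (microseconds), for illustration only.
baseline = [float(v) for v in range(1, 1001)]
after_upgrade = [v * 1.02 for v in baseline]  # uniform 2% slowdown
print(within_latency_budget(baseline, after_upgrade))
```

Comparing percentiles from the same workload window before and after the change keeps the comparison fair; mixing peak and off-peak samples would distort the p99.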

However, the team saw early failures concentrated in a small set of links associated with legacy patch panels. Root causes were connector contamination and marginal insertion loss. Once the team cleaned and re-terminated affected patch cords, error counters stabilized and the links behaved consistently.

Quantified operational impact

- Cutover time per spine pair: reduced from ~4 hours (initial trial) to ~2 hours after the team standardized verification steps.

- Rollback events: 3 during the first day, down to 0 after optics selection and fiber cleaning were tightened.

- Mean time to repair: improved by ~35% due to better pre-checklists and telemetry monitoring.

Limitations and compatibility caveats

Not every optics module is interchangeable across switch models, even when the wavelength and nominal reach match. Some platforms enforce strict DOM field presence or require specific optical interface characteristics that third-party optics must implement precisely. Also, operating temperature and airflow constraints can affect stability; dense cages with reduced fan curves can cause drift that only appears after hours of traffic.

Common mistakes / troubleshooting during the 800G transition

The failure modes during an 800G transition are often deterministic once you know where to look. Below are concrete pitfalls observed in the case study, with root causes and fixes.

Link up, then CRC growth under microbursts

Root cause: marginal link budget due to patch panel loss or aging, amplified by tighter equalization requirements at higher aggregate rate. In at least two links, insertion loss was within datasheet “max” but had insufficient margin for real-world conditions.

Solution: re-measure end-to-end loss, clean connectors, and replace patch cords with lower-loss assemblies. Re-test with a traffic profile that includes bursts, not only link idle state.

Module accepted at boot, but telemetry fields are missing or inconsistent

Root cause: DOM implementation differences. Some optics expose partial telemetry, which can cause the switch to apply conservative settings or log warnings that correlate with later instability.

Solution: confirm DOM telemetry completeness (power levels, temperature, bias, optical diagnostics) and align optics to the vendor compatibility list for the exact switch model and firmware revision.
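A DOM completeness check can be as simple as comparing a parsed telemetry record against a list of required fields. In this sketch the field names and the dictionary shape are hypothetical; they stand in for whatever your collector produces from the switch, and the required set mirrors the items named above (power levels, temperature, bias).

```python
# Hypothetical field names; map them to whatever your collector emits.
REQUIRED_DOM_FIELDS = ("temperature_c", "tx_power_dbm", "rx_power_dbm", "bias_ma")


def missing_dom_fields(dom, required=REQUIRED_DOM_FIELDS):
    """Return the required fields that are absent or empty in a DOM record."""
    return [name for name in required if dom.get(name) is None]


# Example record with partial telemetry (values are illustrative).
record = {"temperature_c": 47.5, "tx_power_dbm": -1.8, "rx_power_dbm": None}
gaps = missing_dom_fields(record)
if gaps:
    print("incomplete DOM telemetry:", ", ".join(gaps))
```

Running this during staging, and again after a firmware change, helps catch modules that expose only partial telemetry before they reach production.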

Intermittent link flaps tied to thermal cycling

Root cause: insufficient airflow or fan curve changes that increase module temperature beyond the stable range. High-speed optics can drift as the laser bias and receiver threshold characteristics change.

Solution: validate module temperature under real load, ensure airflow paths are unobstructed, and keep switch chassis within the vendor’s thermal operating envelope. If available, log temperature and optical power telemetry continuously during the first 24 hours post-change.
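To test whether errors track temperature, one simple approach is a Pearson correlation between module temperature and per-interval error counts. This is a rough sketch, not the team's tooling: the sample data is invented, and a strong correlation is a hint to inspect airflow rather than proof of cause.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length >= 2")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat series carries no correlation information
    return cov / sqrt(var_x * var_y)


# Invented samples: module temperature (°C) and new CRC errors per interval.
temps_c = [48.0, 50.5, 53.0, 57.5, 61.0, 58.0, 52.0]
crc_per_interval = [0, 0, 1, 6, 14, 8, 1]
print(f"temperature vs CRC correlation: {pearson(temps_c, crc_per_interval):.2f}")
```

Using per-interval error deltas instead of the cumulative counter avoids a misleading correlation with anything that simply rises over time.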

Mis-matched fiber type or polarity not detected until traffic

Root cause: OM3/OM4 confusion or polarity reversal on duplex LC connectors. Some systems may still train briefly, but BER will rise once traffic increases.

Solution: verify fiber type labeling, run continuity checks, and confirm polarity. Then validate with BER/CRC counters after applying real load.

Cost & ROI note: OEM certainty vs third-party savings

Pricing varies by vendor, region, and form factor, but teams should expect meaningful cost differences between OEM and third-party optics. In many deployments, OEM 800G optics can cost roughly 1.5x to 2.5x the price of compatible third-party modules, with total installed cost influenced by warranty terms and expected failure rates.

ROI comes from uptime and labor. If third-party modules reduce purchase cost but increase troubleshooting time, the savings can vanish quickly. The best approach is a hybrid: buy a small batch for qualification, validate in your exact fiber and switch firmware environment, then scale only if error counters and thermal telemetry remain stable across a full maintenance cycle.
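The trade-off between purchase savings and extra labor can be made explicit with a quick calculation. Every figure below is hypothetical and chosen only to show the arithmetic; the function name and parameters are likewise assumptions, and the model ignores warranty terms and failure rates.

```python
def third_party_net_savings(units, oem_price, third_party_price,
                            extra_troubleshooting_hours, hourly_cost):
    """Purchase savings minus the cost of additional troubleshooting labor."""
    purchase_savings = units * (oem_price - third_party_price)
    labor_cost = extra_troubleshooting_hours * hourly_cost
    return purchase_savings - labor_cost


# Hypothetical inputs: OEM modules priced at 2x the third-party equivalent.
net = third_party_net_savings(
    units=100,
    oem_price=2000.0,
    third_party_price=1000.0,
    extra_troubleshooting_hours=40,
    hourly_cost=150.0,
)
print(f"net savings: {net:.0f}")
```

A negative result means the extra troubleshooting time has consumed the purchase savings, which is the case the qualification batch is meant to expose early.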

Selection criteria / decision checklist for the 800G transition

Use this ordered checklist to avoid late-stage surprises. It mirrors what engineers actually weigh during procurement and engineering sign-off.

- Distance & loss budget: confirm measured end-to-end insertion loss and verify at least 2 dB margin after patching and connectors.

- Switch compatibility: match the optics form factor and the switch’s optics compatibility matrix for the exact model and firmware.

- Wavelength and fiber type: confirm OM4 vs OM3 vs single-mode expectations; do not rely on “looks similar” cabling labels.

- DOM support & telemetry behavior: ensure DOM fields needed by the host are present and consistent.

- Operating temperature range: validate thermal conditions inside your racks, including airflow restrictions and high-density optics cages.

- Vendor lock-in risk: evaluate warranty terms, support channels, and whether the switch enforces strict optics identity checks.

- Operational validation plan: define pass/fail metrics: CRC growth rate, link flap count, and temperature/power telemetry stability.

FAQ: 800G transition questions engineers ask during planning

What does the 800G transition change at the physical layer?

It increases the aggregate data rate and usually tightens electrical and optical tolerances. That means optics reach and telemetry behavior must be validated against the switch’s actual link training behavior, not just nominal reach.

Can we reuse the same fiber as the 400G design?

Often yes, but only after measured loss validation and connector inspection. In the case study, the majority of OM4 links remained usable, while a small set of legacy patch panels had insufficient margin once 800G signaling stressed the link.

How do we know if an optics module is truly compatible?

Start with the switch vendor’s compatibility list, then verify DOM telemetry completeness and host-side error counters under realistic traffic. A module can pass link-up yet still generate elevated CRC or BER under microbursts.

Is third-party optics safe for an 800G transition?

It can be safe if you qualify the exact module model against your switch firmware and fiber plant. The team reduced risk by buying a small batch first, monitoring telemetry and errors for at least a full day, then scaling only when stability criteria were met.

What are the fastest troubleshooting checks when links misbehave?

Check connector cleanliness, confirm polarity, re-measure insertion loss, and review DOM telemetry for optical power and temperature drift. Then correlate errors with time series: if failures align with thermal changes, airflow is the likely culprit.

Do we need to change firmware for the 800G transition?

Usually yes, because host equalization and module interpretation can change between firmware releases. Validate the vendor’s advisories and test in staging before touching production cutover paths.

In this case study, the 800G transition succeeded when optics selection was tied to measured loss budgets, DOM telemetry validation, and traffic-aware verification. Next step: map your own fiber plant and switch compatibility constraints against an optical transceiver compatibility checklist so you can plan a rollout that avoids link-training surprises.

Author bio: I lead platform network programs from lab validation to production cutovers, focusing on optical link stability, firmware compatibility, and operational risk reduction. I have deployed multi-generation Ethernet upgrades across leaf-spine fabrics, using telemetry-driven troubleshooting and measurable SLO guardrails.