Teams planning 400G upgrades usually discover the same bottleneck: compatibility and power budgets, not just raw bandwidth. This article connects optical trends to practical decisions for 400G transceivers—what is changing, what is stable, and how to avoid costly mis-picks during rollout. It helps network engineers, architects, and field technicians evaluate module formats, fiber types, and DOM support against switch optics and rack-level constraints.

How optical trends are reshaping 400G module choices

400G arrived as an inflection point because the industry had to balance higher symbol rates, tighter SerDes power budgets, and optics cost per delivered bit. The dominant architectural shift is away from “more lanes at slower rates” and toward fewer, faster lanes (PAM4 signaling at 50G or 100G per lane) with higher integration, which preserves reach while keeping thermal load in check. In parallel, optical trends are pushing toward better digital diagnostics (DOM) and more deterministic optics behavior for automation and fault isolation.

From a standards perspective, most 400G Ethernet optics align with IEEE 802.3 pathways for 400GBASE interfaces and vendor implementations that follow the relevant electrical/optical lane mapping. For physical optics, the important practical question is not only wavelength band (for example, SR in multimode vs. LR4/ER4 in single-mode), but also how many fibers and what lane speeds the switch expects. Vendors also increasingly publish compliance matrices for each switch line card, because “works in lab” does not guarantee “works with your exact optics and firmware.”

What changed versus 100G in the field

Compared with 100G, 400G transceivers tend to use more (or faster) optical channels and are more sensitive to link margin. In deployments with congested patch panels, loss budget and connector cleanliness become more visible. In practice, teams that do not re-verify MPO/MTP polarity and insertion loss after re-cabling often see intermittent link flaps as the link repeatedly retrains.

Power is the other major shift. Even when per-bit power improves, the absolute optics power and heat per port can stress top-of-rack airflow and adjacent modules. That is why optical trends increasingly focus on thermal design, airflow lanes, and transceiver temperature reporting via DOM—especially in dense leaf-spine racks.

Pro Tip: Before ordering 400G optics, pull the vendor’s optics compatibility table and confirm not only “module type,” but also the exact DOM and firmware expectations for that switch generation. Field experience shows that “same part number from a different manufacturing lot” can still behave differently when the switch is running stricter optics health thresholds.

400G transceiver evolution: formats, wavelengths, and reach

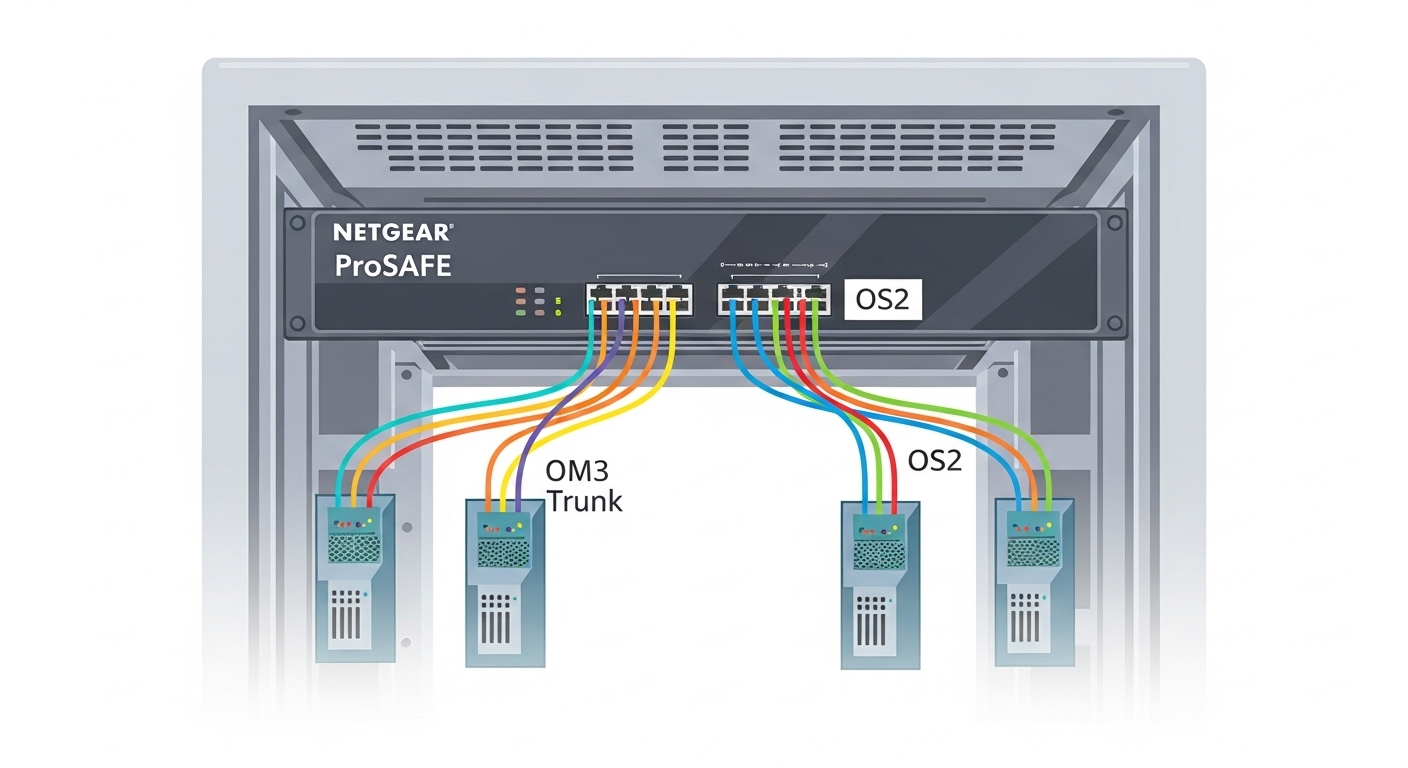

In day-to-day selection, 400G optics map to a few common fiber categories and lane strategies. The most frequent enterprise and data center starting points are short-reach multimode (often “SR” variants) for leaf-to-spine and pod interconnects, plus longer-reach single-mode variants (often “LR4” or “ER4”-style naming) for aggregation and regional extensions.

Below is a practical comparison of widely deployed 400G optics classes. Actual product availability and exact parameters vary by vendor and switch support; always validate with your switch optics matrix and the transceiver datasheet.

| Class (common naming) | Typical wavelength/technology | Target reach | Fiber type / connector | Data rate / lanes (typical) | DOM / diagnostics | Operating temperature (typical) |
|---|---|---|---|---|---|---|
| 400G SR (multimode) | 850 nm class (VCSEL array) | ~100 m class (exact depends on spec) | OM4/OM5, MPO/MTP | 400G aggregate, multi-lane parallel optics | Digital diagnostics supported (per vendor) | 0 to 70 °C (commercial) or wider (industrial) |
| 400G LR4 | ~1310 nm class | ~10 km class | Single-mode, LC duplex | 400G aggregate, four-channel lane mapping | DOM supported | 0 to 70 °C (commercial) or wider (industrial) |
| 400G ER4 | ~1310 nm class (O-band) | ~40 km class (spec and vendor dependent) | Single-mode, LC duplex | 400G aggregate, four-channel lane mapping | DOM supported (often more extensive) | -5 to 70 °C (typical; vendor ranges vary) |
| 400G “FR4” class | ~1310 nm class (CWDM) | ~2 km class (naming varies) | Single-mode, LC duplex | 400G aggregate, four-channel lane mapping | DOM supported | Vendor dependent |

For concrete examples that teams commonly benchmark, you may see 400G SR optics sold under vendor-specific SKUs and form factors, alongside third-party compatible modules such as those distributed by FS.com (formerly Fiberstore; for example, 400G SR4 families) or Finisar lines where available. Always cross-check the exact speed and reach, because “400G SR” naming can mask differences in optical power levels, center wavelength tolerances, and lane mapping (for example, SR8 versus SR4.2 variants).

Multimode reality: OM4 vs OM5 and migration pressure

Optical trends in data centers increasingly favor OM5 for future-proofing because it extends the specified effective modal bandwidth beyond 850 nm (to roughly 950 nm), which benefits multi-wavelength short-reach optics. For single-wavelength 850 nm optics, OM5 generally offers no reach advantage over OM4. Many installed bases are OM4, and the migration path is often driven by how patch panels are structured rather than by a pure “spec sheet” decision. If you are moving to higher density, you must also revisit polarity rules and cleaning procedures for MPO/MTP links.

Single-mode reality: insertion loss and margin at 400G

For LR4/ER4-style optics, the margin story shifts toward fiber quality, splice loss, and connector cleanliness. Field troubleshooting frequently traces flaps to uneven patch-panel insertion loss or aging connectors. With 400G, the link training may be more sensitive to marginal received power, so you may see intermittent errors that disappear when a cleaner cable is inserted.
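
The margin arithmetic behind this can be sketched in a few lines. This is a minimal illustration, not a datasheet calculation: the per-kilometer, per-connector, and per-splice loss figures, the transmit power, the receiver sensitivity, and the penalty allowance are all placeholder assumptions that you should replace with values from your transceiver datasheet and your own cable test results.

```python
from dataclasses import dataclass


@dataclass
class LinkPath:
    fiber_km: float   # installed fiber length, including patch cords
    connectors: int   # mated connector pairs in the path
    splices: int      # fusion splices in the path


def path_loss_db(path, fiber_db_per_km=0.4, connector_db=0.5, splice_db=0.1):
    """Sum of fiber, connector, and splice loss (all defaults are assumptions)."""
    return (path.fiber_km * fiber_db_per_km
            + path.connectors * connector_db
            + path.splices * splice_db)


def link_margin_db(tx_min_dbm, rx_sensitivity_dbm, path, penalty_db=1.0):
    """Power budget minus path loss minus an allowance for other penalties."""
    budget = tx_min_dbm - rx_sensitivity_dbm
    return budget - path_loss_db(path) - penalty_db


# Hypothetical worst-case path: 8 km, four connector pairs, two splices.
path = LinkPath(fiber_km=8.0, connectors=4, splices=2)
margin = link_margin_db(tx_min_dbm=-2.0, rx_sensitivity_dbm=-9.0, path=path)
print(f"loss={path_loss_db(path):.2f} dB, margin={margin:.2f} dB")
```

With these placeholder numbers the path still closes, but with well under 1 dB to spare, which is the kind of link that tends to show up later as an intermittent fault after one more patch or a dirty connector.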

Selection checklist for 400G optics aligned with optical trends

Use this ordered checklist to minimize returns, avoid downtime, and hit predictable link margins. This is written for engineers who need repeatable decisions across racks, sites, and vendors.

- Distance and link budget: confirm the actual installed reach including patch cords, patch panel loss, splices, and connector counts. Don’t ignore worst-case paths.

- Switch compatibility: use the switch vendor optics matrix for that exact line card model and firmware. Confirm the expected form factor (QSFP-DD, OSFP, or vendor-specific) and lane mapping.

- Fiber type and connector ecosystem: verify OM4 vs OM5, MPO/MTP polarity plan, and LC duplex usage for single-mode. Align with your structured cabling standards.

- DOM support and threshold behavior: ensure the module supports DOM and that the switch reads it cleanly. Check if the switch enforces stricter thresholds for temperature, bias current, or optical power.

- Operating temperature and airflow: confirm the transceiver temperature range and your rack airflow. Dense 400G can raise local module temperature even if the room is within spec.

- Vendor lock-in risk: evaluate OEM vs third-party. Prefer suppliers that provide detailed datasheets, traceable part numbers, and clear compatibility statements.

- Power and thermal constraints: compare typical and maximum power draw per module and plan airflow. Validate that adjacent modules do not exceed thermal limits.

- Spare strategy: stock based on failure rates and lead times, not only on “planned ports.” Keep a small buffer of known-good optics for troubleshooting.
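
For the spare strategy item, one way to turn failure rates and lead times into a number is a simple Poisson estimate: how many failures could plausibly occur while a replacement order is in transit. The sketch below is an assumption-heavy illustration; the annual failure rate, lead time, and service level are hypothetical inputs, and the model ignores correlated failures such as a bad manufacturing lot.

```python
import math


def spares_needed(installed, annual_failure_rate, lead_time_days, service_level=0.95):
    """Smallest spare count k such that P(failures during lead time <= k) >= service_level."""
    if not 0.0 < service_level < 1.0:
        raise ValueError("service_level must be between 0 and 1 (exclusive)")
    # Expected failures across the installed base during one resupply lead time.
    lam = installed * annual_failure_rate * lead_time_days / 365.0
    k = 0
    term = math.exp(-lam)      # Poisson P(X = 0)
    cumulative = term
    while cumulative < service_level:
        k += 1
        term *= lam / k        # Poisson P(X = k) from P(X = k - 1)
        cumulative += term
    return k


# Hypothetical inputs: 200 installed modules, 2% annual failure rate, 90-day lead time.
print(spares_needed(installed=200, annual_failure_rate=0.02, lead_time_days=90))
```

Treat the result as a floor for failure coverage, then add the small buffer of known-good reference optics for troubleshooting on top of it.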

If you are standardizing across sites, track performance telemetry (DOM readings, error counters) after install and define acceptance thresholds. This turns optical trends into measurable operational outcomes rather than procurement guesswork.
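
A DOM-based acceptance check can be as simple as comparing each reading against a window you define per optics class. The sketch below assumes the readings have already been collected into a dictionary by whatever tooling your platform offers; the field names and the limits are hypothetical and are not taken from any vendor's thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    low: float
    high: float


# Hypothetical acceptance window; set real values from the module datasheet.
ACCEPTANCE = {
    "temperature_c": Limits(0.0, 65.0),
    "rx_power_dbm": Limits(-8.0, 2.0),
    "tx_power_dbm": Limits(-6.0, 3.0),
    "bias_ma": Limits(2.0, 10.0),
}


def check_dom(reading, limits=ACCEPTANCE):
    """Return a list of violations; an empty list means the module passes."""
    violations = []
    for field, lim in limits.items():
        if field not in reading:
            violations.append(f"{field}: missing")
            continue
        value = reading[field]
        # Multi-lane optics report per-lane values; treat scalars as one lane.
        lanes = value if isinstance(value, (list, tuple)) else [value]
        for lane, v in enumerate(lanes):
            if not lim.low <= v <= lim.high:
                violations.append(
                    f"{field}[lane {lane}]={v} outside [{lim.low}, {lim.high}]")
    return violations


# Hypothetical reading with one weak receive lane.
reading = {
    "temperature_c": 48.5,
    "tx_power_dbm": [0.8, 1.1, 0.9, 1.0],
    "rx_power_dbm": [-3.1, -3.4, -9.2, -3.0],
    "bias_ma": [6.9, 7.1, 7.0, 7.2],
}
for problem in check_dom(reading):
    print(problem)
```

Checking per lane matters here: an averaged receive power would hide the single weak lane that this example flags.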

Common pitfalls and troubleshooting for 400G optics rollouts

Below are frequent failure modes seen during 400G migrations. Each includes the root cause and a practical fix.

Link flaps caused by MPO/MTP polarity mistakes

Root cause: MPO/MTP polarity mismatch, reversed ribbon orientation, or incorrect use of polarity adapters. At 400G lane counts, the effect is more noticeable because multiple channels fail together.

Solution: verify polarity end-to-end with a documented polarity map; re-terminate or use certified polarity adapters; clean the endfaces and re-test with a light source and power meter.
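
A documented polarity map can also be checked on paper before anyone touches a connector. The sketch below models each cable segment as a position-to-position mapping (Type A straight-through, Type B reversed, Type C pair-flipped) and composes them end to end. It assumes that the parallel optic needs an overall reversed mapping, so that transmit positions land on receive positions, and it does not model adapter keying. Confirm both assumptions against your module datasheet and cabling standard.

```python
def segment_map(kind, n=12):
    """Fiber position mapping for one MPO/MTP cable segment with n positions."""
    if kind == "A":   # straight-through: position p stays at p
        return {p: p for p in range(1, n + 1)}
    if kind == "B":   # reversed: position p arrives at n + 1 - p
        return {p: n + 1 - p for p in range(1, n + 1)}
    if kind == "C":   # pair-flipped: 1<->2, 3<->4, ...
        return {p: p + 1 if p % 2 else p - 1 for p in range(1, n + 1)}
    raise ValueError(f"unknown segment type: {kind!r}")


def end_to_end(segments, n=12):
    """Compose segment mappings in order, from near end to far end."""
    mapping = {p: p for p in range(1, n + 1)}
    for kind in segments:
        seg = segment_map(kind, n)
        mapping = {start: seg[pos] for start, pos in mapping.items()}
    return mapping


def polarity_ok(segments, n=12):
    """True when the whole channel behaves like a single reversed (Type B) link."""
    return end_to_end(segments, n) == segment_map("B", n)


print(polarity_ok(["A", "A", "B"]))   # patch cord, trunk, patch cord
print(polarity_ok(["B", "B"]))        # two reversals cancel out
```

The second case is the classic mistake after re-cabling: an extra Type B jumper is added, the two reversals cancel, and every lane fails at once.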

“Works on one switch, fails on another” compatibility gaps

Root cause: module electrical/optical parameters and DOM interpretation differ by switch platform generation. Some platforms enforce stricter thresholds or expect a specific lane mapping behavior.

Solution: validate using the vendor optics compatibility table for each switch line card; update switch firmware if recommended by the optics vendor; keep a known-good reference module for A/B testing.

Intermittent errors due to connector contamination

Root cause: dirty connectors or connector damage after repeated moves. Even minor contamination can reduce received power to marginal levels and drive up error rates.

Solution: inspect with a fiber microscope; clean with appropriate lint-free tools and approved cleaning consumables; re-check optical power levels and error counters after cleaning.

Thermal overshoot in high-density racks

Root cause: airflow obstruction, missing airflow baffles, or too-tight spacing between modules. DOM may show temperature spikes while the link remains “up” until conditions worsen.

Solution: confirm airflow paths, reseat modules, verify fan tray operation, and monitor DOM temperature and bias currents during peak load.
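
Because the link can stay up while the module heats, it helps to flag thermal risk from the DOM temperature trend rather than waiting for an alarm. The sketch below takes temperature samples collected at a fixed interval and raises two kinds of flags; the warning threshold, headroom, and step limit are hypothetical values, not vendor thresholds.

```python
def thermal_flags(samples_c, warn_c=75.0, headroom_c=5.0, max_step_c=3.0):
    """Flag modules running close to the warning threshold or heating quickly.

    samples_c: DOM temperatures in chronological order at a fixed interval.
    """
    flags = []
    if not samples_c:
        return flags
    peak = max(samples_c)
    if peak >= warn_c - headroom_c:
        flags.append(
            f"peak {peak:.1f} C is within {headroom_c:.1f} C of warning {warn_c:.1f} C")
    # Change between consecutive samples; a large positive step suggests an airflow problem.
    steps = [later - earlier for earlier, later in zip(samples_c, samples_c[1:])]
    if steps and max(steps) > max_step_c:
        flags.append(f"temperature rose {max(steps):.1f} C between consecutive samples")
    return flags


# Hypothetical samples taken during a load ramp.
samples = [52.0, 53.5, 58.0, 66.0, 71.5]
for flag in thermal_flags(samples):
    print(flag)
```

Running the same check on adjacent ports is a quick way to tell a single hot module from a blocked airflow path.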

Cost and ROI note: balancing OEM, third-party optics, and TCO

Pricing varies widely by reach, form factor, and vendor ecosystem. In many enterprise and data center contexts, 400G SR optics (multimode) are typically less expensive than long-reach single-mode options, but the total cost depends heavily on cabling rework, spares, and downtime risk.

As a realistic planning range, teams often see OEM 400G optics priced meaningfully higher than third-party options, sometimes by a factor of 1.2x to 2.0x depending on reach and availability. The ROI impact comes from reduced capital spend, but also from fewer operational incidents if you choose a supplier with strong compatibility documentation and consistent DOM behavior.

TCO should include: optics and labor, structured cabling repairs (especially MPO/MTP polarity and connector cleaning), test equipment usage, and the cost of extended troubleshooting. In practice, the highest ROI usually comes from aligning optical trends with a repeatable install process: validated optics compatibility, standardized polarity, and DOM-based acceptance checks that prevent “silent marginal links.”
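
To make the comparison concrete, the TCO components listed above can be summed per option. Every number in this sketch is a hypothetical planning input, including the incident rates; the point is the structure of the comparison, not the figures.

```python
from dataclasses import dataclass


@dataclass
class OpticsOption:
    name: str
    unit_price: float                   # price per module
    install_labor_per_module: float     # labor cost to install and test one module
    expected_incidents_per_year: float  # troubleshooting events attributed to optics
    cost_per_incident: float            # labor, test equipment, and downtime per event


def tco(option, modules, spares, years, cabling_rework=0.0):
    """Total cost of ownership over the planning horizon (simple, undiscounted)."""
    capex = option.unit_price * (modules + spares)
    labor = option.install_labor_per_module * modules
    incidents = option.expected_incidents_per_year * option.cost_per_incident * years
    return capex + labor + cabling_rework + incidents


# Hypothetical inputs: OEM priced about 1.67x the third-party module.
oem = OpticsOption("OEM", 1500.0, 50.0, 1.0, 2000.0)
third_party = OpticsOption("third-party", 900.0, 50.0, 2.0, 2000.0)

for option in (oem, third_party):
    total = tco(option, modules=64, spares=4, years=3, cabling_rework=5000.0)
    print(f"{option.name}: {total:,.0f}")
```

The incident terms are where the comparison is most sensitive: a supplier with weak compatibility documentation can erase the capital savings if troubleshooting events are frequent or expensive.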

FAQ

What optical trends matter most for 400G planning right now?

The biggest practical trends are tighter integration (higher lane counts and more sensitive link margins), improved DOM/telemetry for automation, and increased emphasis on thermal behavior in dense racks. These directly affect compatibility risk, troubleshooting time, and rack airflow planning.

Should I standardize on OM5 or stay with OM4 for 400G SR?

If you have the option to upgrade structured cabling during a refresh, OM5 can reduce future constraints. If you are constrained by installed OM4, you can still deploy 400G SR, but you must validate reach and insertion loss for your exact patch-panel and connector counts.

How do I confirm switch compatibility before buying 400G optics?

Use the switch vendor’s optics compatibility matrix for your exact line card model and firmware version, then verify DOM support expectations. For risk control, order one or two modules first and run acceptance tests (link up stability, error counters, DOM temperature/power baselines).

What is the fastest troubleshooting path when a 400G link will not come up?

Start with physical layer checks: verify polarity and connector cleanliness, then confirm fiber type and reach against the optics class. Next, compare DOM readings from a known-good reference module if available, and validate the optics against the switch compatibility list.

Are third-party 400G optics safe for production?

They can be, but only when the vendor provides detailed datasheets and compatibility statements for your switch platform. The ROI improves when you have a controlled acceptance process and you monitor DOM plus error counters after install.

What testing should I automate during a 400G rollout?

Automate: DOM capture (optical power, bias current, temperature), link stability checks, and error counter baselines per interface. Then enforce an acceptance threshold so marginal links are caught before they become intermittent outages.
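
The error counter baseline is the easiest part to script. The sketch below compares two counter snapshots taken at the start and end of a soak window and reports interfaces whose counters grew more than allowed. The interface names, counter names, and allowed increases are hypothetical, and the sketch does not handle counter resets or wraparound.

```python
def counter_deltas(before, after):
    """Per-interface counter increase between two snapshots."""
    deltas = {}
    for iface, counters in after.items():
        base = before.get(iface, {})
        deltas[iface] = {name: value - base.get(name, 0)
                         for name, value in counters.items()}
    return deltas


def failing_interfaces(before, after, allowed):
    """Interfaces whose counter growth exceeds the allowed increase per window."""
    failures = {}
    for iface, delta in counter_deltas(before, after).items():
        over = {name: d for name, d in delta.items() if d > allowed.get(name, 0)}
        if over:
            failures[iface] = over
    return failures


# Hypothetical snapshots taken before and after a soak test.
before = {
    "eth1/1": {"fec_uncorrected": 0, "link_flaps": 1},
    "eth1/2": {"fec_uncorrected": 2, "link_flaps": 0},
}
after = {
    "eth1/1": {"fec_uncorrected": 0, "link_flaps": 1},
    "eth1/2": {"fec_uncorrected": 17, "link_flaps": 2},
}
allowed = {"fec_uncorrected": 0, "link_flaps": 0}

print(failing_interfaces(before, after, allowed))
```

Comparing deltas rather than absolute values keeps historical counts, such as a flap during installation, from failing an otherwise healthy link.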

Optical trends for 400G are less about chasing novelty and more about managing compatibility, margin, and thermals with repeatable field processes. Next step: use your switch vendor's 400G optics compatibility data to build a procurement workflow that matches your switch matrices, cabling plan, and acceptance tests.

Expert author bio: I have deployed 10G to 400G optical networks in leaf-spine data centers and enterprise campuses, focusing on optics compatibility, DOM telemetry, and link margin verification. I write implementation guidance grounded in switch vendor matrices and on-site fiber validation practices.