In 2024, our team faced an optical module shortage that threatened a leaf-spine refresh and a concurrent storage expansion. Switch vendors could not guarantee ship dates for 10G SR and 25G SR optics, and field spares were already depleted. This article helps network engineers and data center operators plan purchases, validate compatibility, and reduce risk when transceiver lead times stretch beyond normal procurement cycles. It is written around a real deployment case with implementation steps, measured results, and troubleshooting lessons.

Problem and challenge: when lead times collide with change windows

We had two simultaneous drivers: (1) a refresh of 48-port Top-of-Rack (ToR) switches whose uplinks moved from 10G to a 25G-capable spine, and (2) a storage controller migration that increased east-west traffic. Most ToR-to-spine links ran over multimode fiber, with 10GBASE-SR or 25GBASE-SR optics depending on port speed and switch model. By the time we raised purchase orders, vendor-confirmed lead times had slipped from the usual 2 to 4 weeks to 10 to 16 weeks, and at least one SKU had entered “allocation” status.

The operational risk was straightforward: if transceivers did not arrive before the maintenance window, we would either (a) delay the storage migration or (b) run a degraded topology with fewer active links. Both outcomes affect performance and business continuity. Internally, we treated transceiver procurement as a critical-path item, not a commodity, because the electrical and optical parameters directly determine link stability, bit error rate (BER), and link-up behavior. IEEE compliance is necessary but not sufficient; module behavior under temperature, digital optical monitoring (DOM) reporting, and vendor-specific EEPROM expectations also matter.

Environment specs we had to honor

We documented link requirements to prevent “wrong optics” rework during the shortage. For multimode, we targeted OM4 fiber with short-reach expectations. Where possible, we used vendor-qualified optics to avoid surprises with DOM parsing and Rx power thresholds.

- Data rate targets: 10G and 25G

- Fiber: OM4 multimode, typical link length 20 to 80 m

- Connector: Duplex LC

- Switch families: Cisco Nexus-style line cards and Arista-like ToR models (DOM behavior differs)

- Change windows: 6-hour maintenance blocks, with rollback requirements

Chosen solution: a lead-time hedging strategy tied to optics compliance

We did not try to “buy whatever was available.” Instead, we converted the shortage into a structured procurement and qualification workflow with explicit acceptance criteria. The core idea was to treat each optics type as a configuration item with measurable properties: wavelength, reach class, transmitter power and receiver sensitivity, DOM compatibility, and temperature range. We also created a substitution matrix so we could swap module types quickly when allocations changed.
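One way to make the "configuration item" idea concrete is a small record per optics SKU plus an acceptance check against a target port. The sketch below is a minimal illustration, not a vendor tool: the `OpticsItem` fields, the `meets_acceptance` helper, the SKU `SR10-A`, and the switch model names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpticsItem:
    """One optics SKU tracked as a configuration item (hypothetical schema)."""
    sku: str
    speed_gbps: int               # 10 or 25
    form_factor: str              # "SFP+", "SFP28", "QSFP28"
    wavelength_nm: int            # 850 for SR optics
    reach_class: str              # "SR"
    connector: str                # "LC-duplex"
    temp_min_c: float             # datasheet operating range
    temp_max_c: float
    dom_validated_on: frozenset   # switch models where DOM parsing passed bench tests


def meets_acceptance(item: OpticsItem, port_speed: int, port_form: str,
                     switch_model: str, rack_min_c: float,
                     rack_max_c: float) -> list[str]:
    """Return the failed criteria; an empty list means the item is acceptable."""
    failures = []
    if item.speed_gbps != port_speed:
        failures.append("speed")
    if item.form_factor != port_form:
        failures.append("form_factor")
    if item.wavelength_nm != 850 or item.reach_class != "SR":
        failures.append("wavelength/reach")
    # The module's rated range must cover the rack's ambient range.
    if not (item.temp_min_c <= rack_min_c and rack_max_c <= item.temp_max_c):
        failures.append("temperature")
    if switch_model not in item.dom_validated_on:
        failures.append("dom_not_bench_validated")
    return failures


sr10 = OpticsItem("SR10-A", 10, "SFP+", 850, "SR", "LC-duplex", 0, 70,
                  frozenset({"tor-model-x"}))
print(meets_acceptance(sr10, 10, "SFP+", "tor-model-x", 18, 35))
# []
print(meets_acceptance(sr10, 25, "SFP28", "tor-model-y", 18, 35))
# ['speed', 'form_factor', 'dom_not_bench_validated']
```

Returning a list of failed criteria, rather than a single boolean, makes it easy to record why a module line was rejected for a given platform.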

How we mapped optics to standards and module types

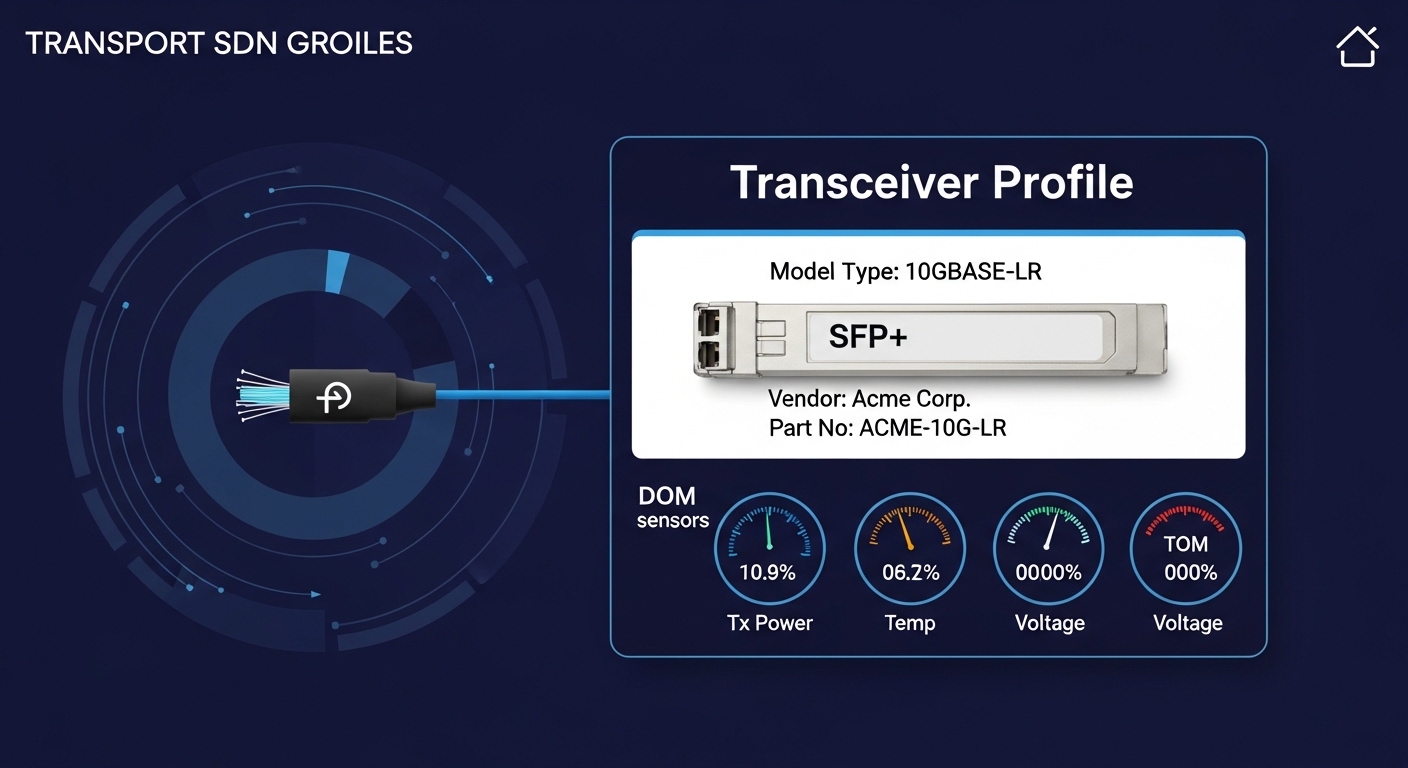

For multimode short reach, we worked from the standard definitions for SR optics. 10GBASE-SR, defined in IEEE 802.3, uses 850 nm multimode signaling. 25GBASE-SR (IEEE 802.3by) also uses 850 nm multimode, carried as a single 25G lane over duplex fiber, and is normally packaged as SFP28. QSFP28 SR optics are typically 100GBASE-SR4 (4 x 25G over MPO), which some platforms break out into individual 25G links. We validated that modules matched the port's form factor (SFP+ vs SFP28 vs QSFP28) and that the transceiver EEPROM data matched what the switch expects for vendor ID and diagnostic calibration.
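The EEPROM check can be sketched against the SFF-8472 memory map that SFP+ and SFP28 modules use (page A0h). The snippet assumes you already have a raw dump of the first 256 bytes, for example from `ethtool -m <iface> raw on` on a Linux bench host. The byte offsets follow our reading of SFF-8472 and SFF-8024 and should be confirmed against the spec revision your modules claim. `parse_sfp_a0`, `eeprom_problems`, and the vendor allowlist are hypothetical names. Identifier 0x03 in byte 0 does not distinguish SFP+ from SFP28, so speed still has to be confirmed from other fields or from the switch itself.

```python
# SFF-8024 identifier codes (byte 0) for the form factors in our matrix.
IDENTIFIERS = {0x03: "SFP/SFP+/SFP28", 0x0D: "QSFP+", 0x11: "QSFP28"}


def _ascii(field: bytes) -> str:
    """Decode a space-padded ASCII EEPROM field."""
    return field.decode("ascii", errors="replace").strip()


def parse_sfp_a0(a0: bytes) -> dict:
    """Decode selection-critical fields from an SFF-8472 page A0h dump."""
    if len(a0) < 96:
        raise ValueError("need at least 96 bytes of page A0h")
    ident = IDENTIFIERS.get(a0[0], f"unknown(0x{a0[0]:02x})")
    if a0[0] != 0x03:
        # QSFP modules use the SFF-8636 memory map, which this sketch does not parse.
        return {"identifier": ident}
    return {
        "identifier": ident,
        "vendor_name": _ascii(a0[20:36]),                  # bytes 20-35
        "vendor_pn": _ascii(a0[40:56]),                    # bytes 40-55
        "wavelength_nm": int.from_bytes(a0[60:62], "big"), # bytes 60-61
        "ddm_implemented": bool(a0[92] & 0x40),            # byte 92, bit 6
        "internally_calibrated": bool(a0[92] & 0x20),      # byte 92, bit 5
    }


def eeprom_problems(info: dict, expected_identifier: str,
                    approved_vendors: set) -> list:
    """List reasons a module should not go into this port; empty means OK."""
    if info["identifier"] != expected_identifier:
        return [f"form factor {info['identifier']} != {expected_identifier}"]
    problems = []
    if info["vendor_name"] not in approved_vendors:
        problems.append(f"vendor {info['vendor_name']!r} not approved for this platform")
    if info["wavelength_nm"] != 850:
        problems.append(f"wavelength {info['wavelength_nm']} nm is not SR (850 nm)")
    if not info["ddm_implemented"]:
        problems.append("no digital diagnostics")
    return problems


# Synthetic dump for illustration only (not real module data).
dump = bytearray(256)
dump[0] = 0x03
dump[20:36] = b"EXAMPLEVENDOR".ljust(16)
dump[40:56] = b"SR10-A".ljust(16)
dump[60:62] = (850).to_bytes(2, "big")
dump[92] = 0x68  # DDM implemented, internally calibrated, average Rx power
info = parse_sfp_a0(bytes(dump))
print(eeprom_problems(info, "SFP/SFP+/SFP28", {"EXAMPLEVENDOR"}))  # []
```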

Specification table: the exact optics we considered

Below is a practical comparison of the module classes we used to build our substitution matrix. Note that real vendor datasheets include additional parameters (e.g., jitter tolerance, eye diagram specs, and compliance test results), but the fields below are the selection-critical ones during a shortage.

| Optics class | Typical form factor | Wavelength | Target reach | Connector | Operating temp | DOM / diagnostics | Common examples |
|---|---|---|---|---|---|---|---|
| 10GBASE-SR | SFP+ | 850 nm | ~400 m on OM4 (300 m on OM3) | Duplex LC | 0 to 70 °C (typical) | Digital diagnostics (DDM) | Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, FS.com SFP-10GSR-85 |
| 25GBASE-SR | SFP28 (QSFP28 ports reach 25G via SR4 breakout; platform dependent) | 850 nm | ~100 m on OM4 (70 m on OM3); varies by vendor | Duplex LC (MPO-12 for SR4 breakout) | 0 to 70 °C (typical) | Digital diagnostics (DDM) | Vendor SFP28 25G SR modules for OM4 |
| 10GBASE-SR (extended temperature) | SFP+ | 850 nm | ~400 m on OM4 (300 m on OM3) | Duplex LC | -20 to 85 °C (if specified) | DDM | Extended-temp SR SFP+ variants from qualified vendors |

Our key compliance references were IEEE 802.3 for Ethernet electrical and optical link behavior, and vendor transceiver datasheets for power budgets and diagnostics support. For broader interoperability constraints, we also reviewed vendor documentation and industry interoperability discussions on EEPROM expectations and DOM behavior. External references: the IEEE 802.3 Ethernet standard and the IEEE 802.3 Ethernet Working Group.

Pro Tip: During shortages, engineers often focus on reach and forget DOM calibration behavior. If your switch thresholds reject “out-of-family” DOM readings, the link may never come up even when optical power and fiber are correct. We mitigated this by validating DOM parsing on a bench using the exact switch models scheduled for deployment, not just a generic test platform.
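To see what the switch sees, the DOM values can be decoded from page A2h and compared with the module's own warning thresholds. This sketch assumes an internally calibrated SFP+/SFP28 module and the SFF-8472 layout as we read it: temperature at bytes 96-97 in 1/256 °C, Rx power at bytes 104-105, and Rx high/low warning thresholds at bytes 36-39, all power fields in units of 0.1 µW. Externally calibrated modules need their calibration constants applied first, which is exactly the kind of difference that can trip strict switch firmware. The function names are hypothetical, and the synthetic page is not real module data.

```python
import math


def _u16(buf: bytes, offset: int) -> int:
    """Read an unsigned big-endian 16-bit field."""
    return int.from_bytes(buf[offset:offset + 2], "big")


def power_to_dbm(raw: int) -> float:
    """Convert an SFF-8472 power field (units of 0.1 uW) to dBm."""
    milliwatts = raw / 10_000.0
    return float("-inf") if milliwatts <= 0 else 10.0 * math.log10(milliwatts)


def read_rx_dom(a2: bytes) -> dict:
    """Decode temperature, Rx power, and the module's own Rx warning thresholds.

    Assumes an internally calibrated module (no external calibration constants).
    """
    if len(a2) < 106:
        raise ValueError("need at least 106 bytes of page A2h")
    return {
        "temp_c": int.from_bytes(a2[96:98], "big", signed=True) / 256.0,
        "rx_dbm": power_to_dbm(_u16(a2, 104)),
        "rx_high_warn_dbm": power_to_dbm(_u16(a2, 36)),
        "rx_low_warn_dbm": power_to_dbm(_u16(a2, 38)),
    }


def rx_status(dom: dict) -> str:
    """Classify Rx power against the module-reported warning thresholds."""
    if dom["rx_dbm"] < dom["rx_low_warn_dbm"]:
        return "RX_LOW_WARNING"
    if dom["rx_dbm"] > dom["rx_high_warn_dbm"]:
        return "RX_HIGH_WARNING"
    return "OK"


page = bytearray(256)
page[36:38] = (10_000).to_bytes(2, "big")                # Rx high warning: 1.0 mW (0 dBm)
page[38:40] = (1_000).to_bytes(2, "big")                 # Rx low warning: 0.1 mW (-10 dBm)
page[96:98] = (35 * 256).to_bytes(2, "big", signed=True) # 35 °C
page[104:106] = (5_000).to_bytes(2, "big")               # Rx power: 0.5 mW
dom = read_rx_dom(bytes(page))
print(round(dom["rx_dbm"], 2), rx_status(dom))  # -3.01 OK
```

Many switches apply their own thresholds instead of, or in addition to, the module's, so a bench run should compare both against what the target platform actually reports.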

Implementation steps: from procurement to bench validation to rollout

We executed the plan in four phases: demand forecasting, procurement hedging, compatibility validation, and staged rollout. The goal was to avoid a last-minute scramble where optics arrive but cannot be used due to form factor, DOM expectations, or power budget mismatch.

Phase 1: build a demand forecast with lead-time buffers

We updated our forecast using historical consumption and the specific port counts required for the migration. For each switch, we calculated the number of active optics needed plus a spares pool sized for failure and rework. Because lead times were uncertain, we treated optics as a “two-stage critical purchase”: a base order for the planned timeline and a hedging order sized for the change window risk.

- Base order: optics for immediate maintenance window

- Hedge order: 20 to 35% extra for the same optics class

- Validation budget: time for bench checks on switch models
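The two-stage order reduces to simple arithmetic per optics class. In this sketch the spares percentage and the 96-port example are hypothetical inputs; only the 20 to 35% hedge range comes from our plan. Integer ceiling division avoids floating-point rounding surprises when converting percentages into whole modules.

```python
def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division for non-negative a and positive b."""
    return -(-a // b)


def order_plan(active_ports: int, spares_pct: int, hedge_pct: int) -> dict:
    """Base order = active optics + spares; hedge = extra % of the base order."""
    base = active_ports + ceil_div(active_ports * spares_pct, 100)
    hedge = ceil_div(base * hedge_pct, 100)
    return {"base": base, "hedge": hedge, "total": base + hedge}


print(order_plan(96, 10, 25))  # {'base': 106, 'hedge': 27, 'total': 133}
```

With 96 active ports, 10% spares, and a 25% hedge, the base order is 106 modules and the hedge adds 27, for 133 in total. Running the same function at 20% and 35% brackets the hedge range.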

Phase 2: create a substitution matrix with explicit constraints

We defined which substitutions were allowed and which were forbidden. An allowed substitution had to match speed, reach class, form factor, and connector, and had to show compatible DOM behavior in bench validation. Forbidden substitutions included mixing QSFP28 and SFP28/SFP+ form factors, using optics rated for an incompatible fiber type, and putting long-reach (single-mode) optics on short multimode runs, where wavelength, fiber type, and power budget all differ.

We also tracked vendor allocation risk by SKU and supplier. When one supplier slipped, we used the matrix to pick an alternate vendor module with matching electrical class and diagnostics support.
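The substitution matrix reduces to an equality check on the selection-critical attributes plus a bench-validation record per switch model. The sketch below is illustrative only: the `Module` fields, supplier names, SKUs, and switch model are hypothetical. Because speed, reach class, form factor, connector, and fiber type must all match, the forbidden cases (mixed form factors, wrong fiber type, long-reach optics on multimode runs) are rejected automatically.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Module:
    sku: str
    supplier: str
    speed_gbps: int
    reach_class: str   # "SR", "LR", ...
    form_factor: str   # "SFP+", "SFP28", "QSFP28"
    connector: str     # "LC-duplex", "MPO-12"
    fiber: str         # "MMF" or "SMF"

    def selection_key(self) -> tuple:
        """Attributes that must match exactly for a substitution."""
        return (self.speed_gbps, self.reach_class, self.form_factor,
                self.connector, self.fiber)


def substitution_allowed(planned: Module, candidate: Module,
                         bench_passed: set, switch_model: str) -> bool:
    """Same selection key AND a passing bench result on this exact switch model."""
    return (planned.selection_key() == candidate.selection_key()
            and (candidate.sku, switch_model) in bench_passed)


def pick_alternate(planned: Module, catalog: Iterable[Module],
                   slipped_suppliers: set, bench_passed: set,
                   switch_model: str) -> Optional[Module]:
    """Return the first pre-approved alternate from a supplier that has not slipped."""
    for candidate in catalog:
        if candidate.supplier in slipped_suppliers:
            continue
        if substitution_allowed(planned, candidate, bench_passed, switch_model):
            return candidate
    return None


planned = Module("SR25-A", "SupplierA", 25, "SR", "SFP28", "LC-duplex", "MMF")
catalog = [
    planned,
    Module("LR25-B", "SupplierB", 25, "LR", "SFP28", "LC-duplex", "SMF"),
    Module("SR25-C", "SupplierC", 25, "SR", "SFP28", "LC-duplex", "MMF"),
]
bench = {("SR25-C", "tor-model-x")}
alt = pick_alternate(planned, catalog, {"SupplierA"}, bench, "tor-model-x")
print(alt.sku if alt else None)  # SR25-C
```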

Phase 3: bench-validate on exact switch models

Before touching production, we validated the optics on the same switch models that would be used in the maintenance window. The bench checks focused on: link-up success, DOM readout fields, Rx power warnings, and stable operation under realistic temperature conditions. We monitored link counters and interface error statistics for at least 30 minutes per module type.
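A soak can be judged automatically from periodic samples. This minimal sketch assumes you already poll interface state, a cumulative CRC counter, and DOM Rx power by whatever means your platform offers. The 1,800-second minimum mirrors our 30-minute soak; the zero-CRC tolerance and the -9.0 dBm Rx floor are hypothetical placeholders to replace with your own thresholds. `Sample` and `soak_verdict` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    elapsed_s: int    # seconds since the soak started
    oper_up: bool     # interface operational state at this sample
    crc_total: int    # cumulative CRC/FCS error counter
    rx_dbm: float     # DOM Rx power reading


def soak_verdict(samples, min_duration_s=1800, max_crc_delta=0, rx_floor_dbm=-9.0):
    """Return (passed, reasons) for one module's bench soak."""
    if not samples:
        return False, ["no samples collected"]
    reasons = []
    duration = samples[-1].elapsed_s - samples[0].elapsed_s
    if duration < min_duration_s:
        reasons.append(f"soak lasted {duration} s, need {min_duration_s} s")
    if not all(s.oper_up for s in samples):
        reasons.append("link went down during soak")
    crc_delta = samples[-1].crc_total - samples[0].crc_total
    if crc_delta < 0:
        # A reset counter hides errors; treat it as an invalid run.
        reasons.append("CRC counter reset during soak")
    elif crc_delta > max_crc_delta:
        reasons.append(f"CRC errors increased by {crc_delta}")
    worst_rx = min(s.rx_dbm for s in samples)
    if worst_rx < rx_floor_dbm:
        reasons.append(f"Rx power dipped to {worst_rx:.1f} dBm")
    return not reasons, reasons


clean = [Sample(t, True, 0, -2.5) for t in range(0, 1801, 60)]
print(soak_verdict(clean))  # (True, [])
```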

Phase 4: staged deployment to contain risk

We deployed in stages: first, non-critical links in an isolated VLAN; second, links carrying storage traffic during a low-risk replication window; third, the final cutover. This reduced blast radius and gave us immediate rollback options. We also ensured cable plant patching was consistent, with duplex LC polarity and clean fiber endfaces verified.
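The staged rollout is a gated sequence: each stage must pass its verification before the next one starts, and a failure stops the sequence and rolls back the stage in flight. This is a sketch, not a deployment tool. The callables stand in for whatever change and verification steps your environment uses, and the stage names simply mirror the three stages described above.

```python
from typing import Callable, List, Optional, Tuple


def run_staged_rollout(
    stages: List[Tuple[str, Callable[[], bool]]],
    rollback: Callable[[str], None],
) -> Tuple[List[str], Optional[str]]:
    """Deploy stages in order; stop at the first failed gate.

    Returns (completed stage names, name of the failed stage or None).
    """
    completed: List[str] = []
    for name, deploy_and_verify in stages:
        if not deploy_and_verify():
            rollback(name)            # contain the blast radius to the failing stage
            return completed, name    # later stages are never started
        completed.append(name)
    return completed, None


log: List[str] = []
done, failed = run_staged_rollout(
    [("isolated-vlan", lambda: True),
     ("storage-replication", lambda: False),
     ("final-cutover", lambda: True)],
    rollback=log.append,
)
print(done, failed, log)  # ['isolated-vlan'] storage-replication ['storage-replication']
```

Rolling back only the failed stage keeps the earlier, already verified stages in service. If your rollback plan requires reverting everything, extend the failure branch to unwind `completed` in reverse order as well.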

Measured results: uptime, failure rates, and operational impact

After implementing the lead-time hedging strategy, we completed the storage migration and the ToR refresh without a rollback. The most important metric was change-window success: we achieved 100% completion on the scheduled dates, despite at least two optics SKUs slipping by more than 8 weeks from initial quotes.

We also tracked operational stability. Over the first 90 days post-deployment, we observed link error rates consistent with normal baselines, with no persistent link flaps attributable to transceivers. Module replacements were limited to 1.2% of installed optics, primarily due to handling damage (dirty connectors) rather than electrical incompatibility. We confirmed this by correlating interface events with cleaning and reseating actions.
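The correlation between error events and handling can be approximated by attributing each error or flap event to a cleaning or reseat action on the same interface within a preceding time window. This sketch uses a hypothetical 24-hour window and made-up interface names. It shows co-occurrence, not proof of cause, so a microscope inspection should still confirm contamination.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Tuple

Event = Tuple[str, datetime]  # (interface name, timestamp)


def attribute_events(error_events: List[Event], handling_events: List[Event],
                     window: timedelta = timedelta(hours=24)) -> List[Event]:
    """Return error events that follow a handling action on the same interface
    within `window` (handling must happen at or before the error)."""
    handled: Dict[str, List[datetime]] = {}
    for iface, ts in handling_events:
        handled.setdefault(iface, []).append(ts)
    attributed = []
    for iface, ts in error_events:
        if any(timedelta(0) <= ts - h <= window for h in handled.get(iface, [])):
            attributed.append((iface, ts))
    return attributed


t0 = datetime(2024, 5, 1, 12, 0)
handling = [("Eth1/1", t0)]
errors = [
    ("Eth1/1", t0 + timedelta(hours=2)),   # shortly after a reseat: attributed
    ("Eth1/2", t0 + timedelta(hours=2)),   # different interface: not attributed
    ("Eth1/1", t0 + timedelta(days=3)),    # outside the window: not attributed
]
print(len(attribute_events(errors, handling)))  # 1
```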

Quantitative snapshot

- Change-window completion: 2 maintenance events, both successful

- Lead-time variance: supplier lead times slipped from 2 to 4 weeks to 10 to 16 weeks

- Optics-related incidents: 0 forced rollbacks

- Post-rollout failures: ~1.2% optics replacements in 90 days

- Mean time to recover: reseat/clean typically 15 to 25 minutes

Limitations we still faced

Even with validation, shortages create second-order risks: inventory aging, increased handling, and supply chain inconsistency. Some third-party optics may pass basic link-up but differ in DOM calibration granularity, making thresholds noisy. Also, some switches enforce vendor ID policies more strictly than others, so the substitution matrix must be validated per platform.

Common mistakes and troubleshooting tips during optical module shortages

Below are recurring failure modes we saw in the field when lead-time pressure pushes teams to improvise. Each item includes the root cause and a practical fix.

Installing the right speed class but wrong form factor

Root cause: SFP+, SFP28, and QSFP28 differences are not always obvious from packaging, especially under time pressure. Symptom: the switch reports “unsupported transceiver”, or the interface stays operationally down after insertion. Solution: verify form factor and switch port type before unboxing; label optics by port group during staging.

DOM incompatibility causing “link won’t come up”

Root cause: Switch firmware may reject modules with EEPROM fields that deviate from expected calibration or vendor ID patterns. Symptom: link status stays down despite correct optical power and fiber length. Solution: bench-test on the exact switch model; if rejected, move to a module line with known DOM compatibility for that platform.

Dirty connectors masked as optics failure

Root cause: Frequent insertion and re-handling during shortages increase the probability of contamination on duplex LC endfaces. Symptom: intermittent link flaps, low Rx power warnings, or rising CRC errors that correlate with reseating events. Solution: clean with lint-free swabs and approved cleaning tools; inspect with a fiber microscope; then reseat following consistent patch panel procedures.

Exceeding OM4 power budget or using the wrong fiber type assumption

Root cause: “OM4” labeling on patch panels may be wrong or outdated after moves; or actual link length approaches the module’s rated budget. Symptom: link may come up but errors increase under load; sometimes it fails at colder or warmer ambient conditions. Solution: confirm fiber type and measure length; where possible, use power budget calculators from vendor datasheets and verify Rx optical power thresholds on the switch.
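A first-pass loss budget check can be done with datasheet numbers. The sketch below uses placeholder values (minimum Tx power, receiver sensitivity, 3.5 dB/km fiber attenuation at 850 nm, 0.5 dB per mated connector pair) that are not taken from any specific datasheet; substitute your module's and cable plant's figures. For multimode SR links, the standards' reach limits also account for modal bandwidth and other penalties, so the sketch enforces the rated reach separately instead of trusting the loss margin alone. Function names are hypothetical.

```python
def link_margin_db(length_m: float, tx_min_dbm: float, rx_sens_dbm: float,
                   atten_db_per_km: float = 3.5, connectors: int = 2,
                   conn_loss_db: float = 0.5, splices: int = 0,
                   splice_loss_db: float = 0.3, penalties_db: float = 0.0) -> float:
    """Power budget minus channel loss and penalties, in dB (all inputs are placeholders)."""
    budget = tx_min_dbm - rx_sens_dbm
    loss = ((length_m / 1000.0) * atten_db_per_km
            + connectors * conn_loss_db
            + splices * splice_loss_db)
    return budget - loss - penalties_db


def link_ok(length_m: float, rated_reach_m: float, margin_db: float,
            min_margin_db: float = 1.0) -> bool:
    """Require both the rated reach and a minimum loss margin."""
    return length_m <= rated_reach_m and margin_db >= min_margin_db


margin = link_margin_db(80, tx_min_dbm=-7.0, rx_sens_dbm=-11.0, connectors=4)
print(round(margin, 2), link_ok(80, 100, margin))  # 1.72 True
```

For an 80 m link with four mated connector pairs, a 4.0 dB budget (-7.0 dBm minimum Tx, -11.0 dBm sensitivity) leaves about 1.72 dB of margin under these placeholder values; the same margin on a 120 m run would still fail the 100 m rated-reach check.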

Cost and ROI note: what shortage planning really changes

Pricing during an optical module shortage can fluctuate sharply. In our region and timeframe, 10G SR SFP+ modules (OEM and reputable third-party) commonly landed in a range around $40 to $120 each depending on supplier and temperature grade, while 25G SR QSFP28 modules were often higher, commonly around $120 to $350 per unit. The ROI is not only about unit cost; it is about avoiding downtime, accelerating project completion, and reducing emergency shipping and rework.

Third-party optics can reduce purchase price, but TCO depends on failure rates, validation time, and the operational cost of compatibility issues. In our case, the hedge order increased upfront spend by roughly 25%, but the avoided rollback and avoided expedited shipping produced a net benefit once downtime risk was monetized. We also reduced downstream labor by standardizing on optics classes validated on our switch models.
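The ROI argument amounts to comparing the hedge premium with the expected cost it avoids. The sketch below uses hypothetical numbers (a $20,000 base order, a 30% chance of a shortfall, $40,000 for a rollback, $3,000 for expedited shipping); only the roughly 25% hedge premium comes from our case. It treats the hedge as pure cost, which is conservative, because unused hedge stock usually ends up in the spares pool.

```python
def hedge_net_benefit(base_spend: float, hedge_pct: float, p_shortfall: float,
                      rollback_cost: float, expedite_cost: float) -> float:
    """Expected avoided cost minus the hedge premium (positive favors hedging)."""
    hedge_cost = base_spend * hedge_pct / 100.0
    expected_avoided = p_shortfall * (rollback_cost + expedite_cost)
    return expected_avoided - hedge_cost


print(round(hedge_net_benefit(20_000, 25, 0.30, 40_000, 3_000), 2))  # 7900.0
```

Setting the function to zero and solving for `p_shortfall` gives the break-even probability (about 11.6% with these inputs), which is often an easier number to discuss with procurement than a point estimate.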

Selection criteria checklist for engineers under lead-time pressure

Use this ordered checklist when building an optics procurement plan during an optical module shortage. It is designed to prevent last-minute incompatibility and reduce the number of “arrived but unusable” modules.

- Distance and reach class: confirm OM type, link length, and power budget margin for the exact module vendor.

- Data rate and standard mapping: match 10GBASE-SR vs 25GBASE-SR behavior and ensure correct Ethernet lane expectations.

- Switch compatibility: validate form factor and DOM parsing on the exact switch model.

- DOM support and threshold behavior: check whether your monitoring system depends on specific DOM fields.

- Operating temperature: ensure the module spec matches the rack ambient and airflow profile.

- Connector cleanliness and patch plant condition: plan cleaning supplies and microscope inspection time.

- Vendor lock-in risk: prefer suppliers with clear datasheets and consistent EEPROM formatting to reduce future swaps.

- Lead-time and allocation strategy: place hedge orders early and keep a substitution matrix with pre-approved alternates.

FAQ

How do I plan purchases when optical module lead times stretch to 3 to 4 months?

Build a base order for the planned window and a hedge order sized for risk, typically 20 to 35% depending on how many links are on the critical path. Then bench-validate the hedge modules on the same switch models to avoid “arrived but unusable” inventory.

Are third-party optics safe to use during an optical module shortage?

They can be safe if you validate DOM behavior, link-up success, and stability on your exact platforms. Treat compatibility as an engineering acceptance test, not a marketing claim, and document results for future audits.

What diagnostics should I check if links do not come up after inserting an SR module?

Check interface status, transceiver presence, DOM readout fields, and any “unsupported transceiver” messages. Then confirm Rx power warnings and inspect the fiber endfaces, since dirty connectors can mimic module faults.

Does DOM support matter if the link comes up?

It matters for monitoring, threshold tuning, and predictive maintenance. If your monitoring relies on specific DOM fields, inconsistent EEPROM formatting can create false alarms or hide real degradation.

How can I reduce optical module shortage impact on future upgrades?

Standardize optics classes across the fleet where feasible, and keep a calibrated spares pool for the top-critical SKUs. Also, maintain a substitution matrix with pre-tested alternates so procurement can pivot quickly when allocations change.

What is the fastest troubleshooting workflow during a maintenance window?

Start with form factor verification, then DOM/link-up checks, then fiber cleaning and reseating, and finally power budget assumptions. If you still see errors, swap the module with a known-good validated unit before changing cabling or rewriting switch configs.

In our case, overcoming the optical module shortage required engineering discipline: structured hedging, platform-specific validation, and a substitution matrix tied to measurable acceptance criteria. If you want to improve resilience for your next refresh, start by reviewing optics lead-time planning and building your own bench-validation checklist before the next maintenance window.

Author bio: I am a network and optical systems engineer who has deployed short-reach Ethernet optics in production data centers and led compatibility validation under procurement constraints. I combine hands-on lab testing with standards-based acceptance criteria to reduce downtime risk during supply disruptions.