When an Open RAN rollout hits a fiber distance surprise or a vendor compatibility snag, the whole radio site schedule can slip. This guide helps field engineers and network planners select optical transceivers that actually work for fronthaul and midhaul, with practical checks for switch compatibility, DOM behavior, and environmental limits. You will leave with a distance-to-module mapping mindset, a troubleshooting playbook, and realistic cost expectations.

Why Open RAN fronthaul stresses optics differently

Open RAN splits functions across distributed units and radios, so your network carries tighter timing and higher operational sensitivity than many legacy backhaul links. In many deployments, fronthaul runs over dedicated fiber with strict latency budgets and continuous link monitoring, so marginal transceivers can “work” until temperature swings or connector contamination pushes them over the edge. I have seen this during live cutovers where a link passed OTDR checks but still degraded because of dirty LC endfaces and weak optical budget margin.

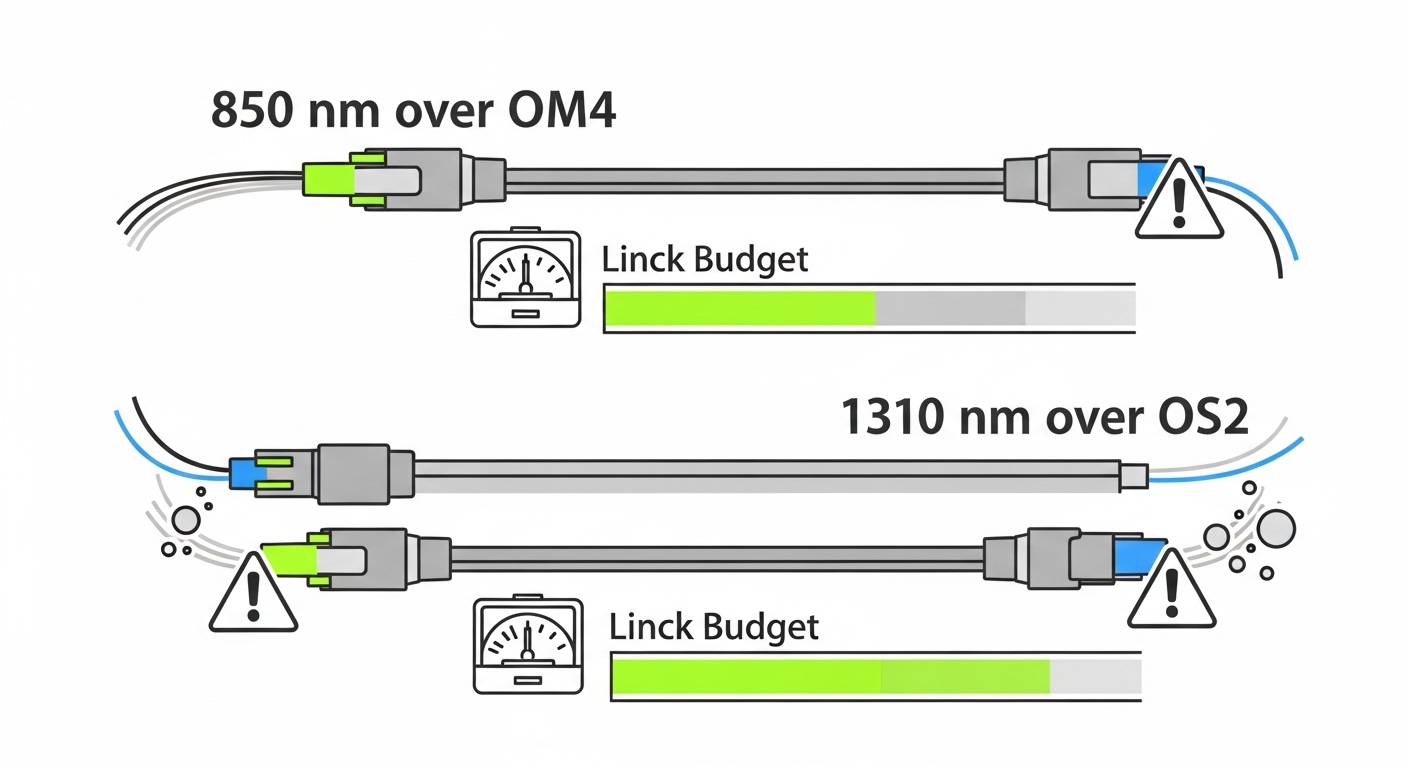

From an optics perspective, you are balancing wavelength, reach, link budget, and transceiver behavior (especially digital diagnostics like DOM). The switch port expects a module that meets the SFP/SFP+/SFP28/QSFP electrical interface for its configured speed, while the link itself needs stable optical power and a low bit-error rate. If you pair the wrong module class with the wrong port speed, you can get intermittent link flaps, even when the nominal wavelength matches.

Fronthaul vs midhaul: what changes in your module choice

Fronthaul commonly uses shorter-reach optics in controlled environments, but some sites still stretch distances with engineered budgets. Midhaul often aggregates higher counts of links and may tolerate different reach classes, as long as you keep consistent operational temperature and monitoring discipline. The practical difference is how aggressively you should de-rate optical margin and how carefully you should validate DOM and speed negotiation.

Pro Tip: In the field, “it links up” is not the same as “it is stable.” After installation, I measure receive power and error counters over a full temperature ramp (at least one hour) before declaring the Open RAN optics set “done.” Modules that pass on a cool morning can fail intermittently after enclosure heat soak.

Optical transceiver specs that matter for Open RAN ports

Start by matching your port optics standard to the transceiver type: 10G SR over multimode, 25G SR over multimode, 40G/100G SR4 over multimode, or LR/ER/ZR over single-mode. Then verify physical connector type (LC vs MPO), wavelength, and reach class, and finally confirm DOM support and temperature range. Vendor datasheets often show absolute maximums, but in real networks you should target stable operation with headroom.

Below is a compact spec comparison for common Ethernet transceiver families engineers see when planning Open RAN fronthaul and midhaul.

| Transceiver family | Wavelength | Typical reach class | Data rate | Connector | Optical tech | Operating temp | Common module examples |
|---|---|---|---|---|---|---|---|
| 10G SR | 850 nm | Up to 300 m (OM3) | 10G Ethernet | LC | MMF, VCSEL | 0 to 70 °C (typical) | Cisco SFP-10G-SR, Finisar FTLX8571D3BCL |
| 25G SR | 850 nm | Up to 100 m (OM4 typical) | 25G Ethernet | LC | MMF, VCSEL | -5 to 70 °C (typical) | FS.com SFP-25G-SR, Finisar variants |
| 40G SR4 | 850 nm | Up to 100 m (OM3) or 150 m (OM4) | 40G Ethernet | MPO | MMF, 4x10G lanes | 0 to 70 °C | QSFP-40G-SR4 style modules |
| 100G SR4 | 850 nm | Up to 100 m (OM4 typical) | 100G Ethernet | MPO | MMF, 4x25G lanes | 0 to 70 °C | QSFP28-100G-SR4 |
| 10G LR | 1310 nm | Up to 10 km | 10G Ethernet | LC | SMF, DFB | -5 to 70 °C (typical) | Finisar/FS LR10G variants |
| 25G LR | 1310 nm | Up to 10 km (class dependent) | 25G Ethernet | LC | SMF, DFB | -5 to 70 °C | 25G SFP28 LR families |

For standards grounding, validate against the relevant Ethernet transceiver electrical/optical behavior and port expectations. IEEE 802.3 defines Ethernet PHY behavior, while the SFP and QSFP electrical interfaces and DOM register maps come from the SFF specifications behind the module MSAs (for example, SFF-8472 for SFP/SFP+ diagnostics and SFF-8636 for QSFP+/QSFP28 management). Vendor datasheets then document each module's actual power levels, alarm thresholds, and optional fields. For optical safety and general interface expectations, also consult manufacturer documentation and relevant safety practices. [Source: IEEE 802.3 Ethernet PHY specifications] [Source: Cisco transceiver datasheets and compatibility notes] [Source: SFP and QSFP vendor product documentation]
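As a rough illustration of the distance-to-module mapping, the sketch below filters the families from the table by fiber type, data rate, and a derated reach. The `derate` factor of 0.8, the `candidate_modules` helper, and the OS2 label on the single-mode rows are assumptions made for illustration, not values from any standard. Real planning should use measured loss and datasheet figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReachClass:
    family: str
    fiber: str        # "OM3", "OM4", or "OS2" (OS2 assumed for the SMF rows)
    rate_gbps: int
    max_reach_m: int  # nominal reach, taken from the comparison table


# Nominal values copied from the table; verify against the module datasheet.
REACH_TABLE = [
    ReachClass("10G SR", "OM3", 10, 300),
    ReachClass("25G SR", "OM4", 25, 100),
    ReachClass("40G SR4", "OM4", 40, 150),
    ReachClass("100G SR4", "OM4", 100, 100),
    ReachClass("10G LR", "OS2", 10, 10_000),
    ReachClass("25G LR", "OS2", 25, 10_000),
]


def candidate_modules(fiber, rate_gbps, distance_m, derate=0.8):
    """Return families whose derated reach covers the run, shortest reach first."""
    fits = [
        r for r in REACH_TABLE
        if r.fiber == fiber
        and r.rate_gbps == rate_gbps
        and distance_m <= r.max_reach_m * derate
    ]
    return sorted(fits, key=lambda r: r.max_reach_m)


if __name__ == "__main__":
    for run in [("OM4", 25, 60), ("OM4", 25, 95), ("OS2", 10, 2000)]:
        names = [r.family for r in candidate_modules(*run)]
        print(run, "->", names or "no fit, re-plan the link")
```

An empty result is the useful signal here: it means the run only fits if you spend the margin you were trying to protect.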

Selection criteria checklist for Open RAN optics

Engineers often choose optics by reach alone, but Open RAN deployments fail when operational constraints are ignored. Use the following ordered checklist during design and before the final cutover.

- Distance and fiber type: confirm MMF vs SMF, and check OM3/OM4/OS2 class. Match the module reach class to the measured link loss, not the marketing maximum.

- Data rate and lane mapping: verify port speed (10G/25G/40G/100G) and whether the transceiver uses SR4 or SR2 lane aggregation. A 40G SR4 module will not behave like a 10G SR module even if both are “850 nm.”

- Switch compatibility: confirm the exact switch model and optics support list. Some platforms restrict optics by DOM behavior or mandate specific vendor EEPROM layouts. Check vendor compatibility matrices before ordering.

- DOM support and telemetry: ensure the transceiver exposes expected DOM fields (temperature, bias current, TX power, RX power). If your monitoring system expects certain thresholds, verify DOM presence and naming conventions in the vendor docs.

- Operating temperature and enclosure conditions: Open RAN cabinets can exceed room ambient, especially near DC power modules. Prefer modules rated for the environment and validate that the transceiver temperature stays within spec during heat soak.

- Connector discipline: LC vs MPO matters. For MPO, confirm polarity and guide pin alignment. Mis-polarity can make a link look “dead” while the fiber loss checks look fine.

- Vendor lock-in risk: third-party modules can reduce cost, but verify long-term compatibility. A firmware update on the switch can change acceptance behavior; plan a validation test window.

Decision shortcuts I use when the clock is running

If you have measured attenuation from OTDR or a certified loss test, compute an optical budget and keep margin. If you cannot measure yet, assume worst-case jumpers and patch cords, and choose the shortest reach class that still fits. For fronthaul, I bias toward conservative margins and modules with consistent DOM support, because operations teams will need telemetry to isolate faults quickly.
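The budget arithmetic can be sketched in a few lines. This is a minimal example, assuming you have the module's minimum transmit power and receiver sensitivity from its datasheet. The function name `link_margin_db` and every number in the usage line are hypothetical placeholders, not figures for any specific module.

```python
def link_margin_db(tx_min_dbm, rx_sens_dbm, fiber_km, fiber_db_per_km,
                   connectors, connector_loss_db, penalty_db=0.0):
    """Remaining margin in dB after subtracting estimated path loss.

    budget = worst-case TX power minus receiver sensitivity
    loss   = fiber attenuation plus per-connector loss
    """
    budget = tx_min_dbm - rx_sens_dbm
    loss = fiber_km * fiber_db_per_km + connectors * connector_loss_db
    return budget - loss - penalty_db


if __name__ == "__main__":
    # Placeholder inputs: -8 dBm TX min, -14 dBm sensitivity, 5 km at 0.4 dB/km,
    # four mated connector pairs at 0.5 dB each.
    margin = link_margin_db(-8.0, -14.0, 5.0, 0.4, 4, 0.5)
    print(f"margin: {margin:.1f} dB")
```

If the result is close to zero, treat the link as a heat-soak risk rather than a pass.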

Deployment scenario: fronthaul optics in a leaf-spine build

In a data center leaf-spine topology with 48-port ToR switches at each leaf and 2 spines per pod, I supported an Open RAN midhaul aggregation design where each leaf carried 16 fronthaul uplinks and 32 aggregation links. The fronthaul ran from a roadside cabinet to a nearby aggregation room over OM4 multimode patching engineered for 80 m average reach, with a 10 m worst-case jumper variance due to planned slack. We deployed 25G SR where the access switch ports were 25G capable, using LC connectors, and reserved 10G LR only for the few SMF runs that exceeded multimode limits.

Operationally, we required DOM telemetry for receive power thresholds so the NOC could alert before BER climbed. During acceptance testing, each link was verified for stable optical receive power and no incrementing CRC/FCS or input error counters over a 60-minute soak. The biggest schedule risk came from mismatched port speed settings on one leaf, where a 25G-capable port was provisioned for 10G, causing negotiation fallbacks and intermittent traffic loss.
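A soak check like this is easy to automate once you are polling the switch. The sketch below assumes you already collect periodic samples of RX power and a cumulative CRC counter. The `SoakSample` type, the `evaluate_soak` helper, and the 1.0 dB drift limit are illustrative assumptions, not the acceptance criteria used on that project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoakSample:
    minute: int       # minutes since the soak started
    rx_dbm: float     # DOM receive power
    crc_errors: int   # cumulative interface CRC counter


def evaluate_soak(samples, rx_floor_dbm, max_drift_db=1.0, min_minutes=60):
    """Return a list of problems; an empty list means the soak passed."""
    if len(samples) < 2:
        return ["need at least two samples"]
    problems = []
    if samples[-1].minute - samples[0].minute < min_minutes:
        problems.append("soak shorter than required duration")
    rx = [s.rx_dbm for s in samples]
    if min(rx) < rx_floor_dbm:
        problems.append("RX power dropped below floor")
    if max(rx) - min(rx) > max_drift_db:
        problems.append("RX power drifted more than allowed")
    if samples[-1].crc_errors != samples[0].crc_errors:
        problems.append("CRC counter incremented during soak")
    return problems


if __name__ == "__main__":
    run = [SoakSample(0, -5.1, 12), SoakSample(30, -5.3, 12), SoakSample(60, -5.4, 12)]
    print(evaluate_soak(run, rx_floor_dbm=-9.0) or "soak passed")
```

Comparing the first and last counter values, rather than checking for zero, lets the test run on ports that already carry historical errors.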

Common pitfalls and troubleshooting in the field

When Open RAN optics misbehave, the root cause is frequently mundane: connector contamination, polarity issues, incorrect module type, or DOM mismatch. Here are failure modes I have personally seen, with concrete fixes.

Link flaps after heat soak

Root cause: thin optical margin combined with enclosure temperature rise, or a transceiver already running near the top of its laser bias current range. Sometimes the module is rated for the temperature, but the cabinet airflow is insufficient and the transceiver reaches a higher internal temperature than expected.

Solution: measure RX power and error counters at cold start and again after 45 to 90 minutes. Replace the optics with a higher-margin reach class or improve cooling; also re-check fiber endface cleanliness.

“Dead” link even though OTDR looks fine

Root cause: MPO polarity reversal or wrong jumper pairing. OTDR on the fiber strand can show good attenuation while the active transmit/receive pair is swapped at the patch panel.

Solution: verify MPO polarity using the panel labeling and polarity method required by your cabling standard, then re-terminate or re-jumper. Confirm TX to RX mapping end-to-end and re-test with a known-good transceiver.
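To see why polarity can kill a link that tests clean for loss, it helps to model where each transmit fiber lands. The sketch below is a simplified model, assuming a 12-fiber MPO with the usual SR4 layout (positions 1 to 4 transmit, 9 to 12 receive) and the commonly described Type A (straight), Type B (reversed), and Type C (pair-flipped) position mappings. It ignores adapter keying and is not a substitute for your cabling standard; the function names are illustrative.

```python
def type_a(pos):
    """Straight-through: position n arrives at position n."""
    return pos


def type_b(pos):
    """Reversed: position 1 arrives at 12, 2 at 11, and so on."""
    return 13 - pos


def type_c(pos):
    """Pair-flipped: 1<->2, 3<->4, ... within each adjacent pair."""
    return pos + 1 if pos % 2 else pos - 1


SR4_TX = {1, 2, 3, 4}     # assumed transmit positions at the module
SR4_RX = {9, 10, 11, 12}  # assumed receive positions at the module


def channel_ok(segments):
    """True if every TX position lands on an RX position at the far end."""
    landed = set()
    for tx in SR4_TX:
        pos = tx
        for segment in segments:
            pos = segment(pos)
        landed.add(pos)
    return landed == SR4_RX


if __name__ == "__main__":
    print("single Type B trunk:", channel_ok([type_b]))
    print("single Type A trunk:", channel_ok([type_a]))
    print("two Type B segments:", channel_ok([type_b, type_b]))
```

In this model an even number of reversals cancels out, which is one way a well-intentioned extra patch cord turns a working channel into a dead one.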

Intermittent negotiation or speed mismatch

Root cause: using a transceiver that is electrically compatible but not aligned with the port speed configuration, or a platform that requires a specific module class for the target rate. This can appear as “up/down” events or unstable traffic under load.

Solution: lock port speed and verify the switch configuration matches the transceiver data rate. Check the switch optics compatibility list and firmware release notes for transceiver acceptance behavior.

DOM monitoring shows missing fields or wrong thresholds

Root cause: differences in how vendors populate the DOM registers (alarm and warning thresholds, internal versus external calibration, optional fields), or a module that exposes only partial telemetry. Monitoring systems may treat missing values as faults or fail to trigger early warnings.

Solution: confirm DOM availability and expected telemetry fields in the switch CLI or vendor diagnostics. If needed, adjust monitoring thresholds or standardize on one transceiver vendor for a given site.
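When the switch CLI and the monitoring system disagree, decoding the raw diagnostics yourself can settle it. The sketch below assumes an SFP/SFP+ module that follows SFF-8472 with internal calibration, where bytes 96 to 105 of the A2h page hold temperature, supply voltage, bias current, TX power, and RX power. How you obtain the raw page (the `a2_page` argument) is platform specific and left out; externally calibrated modules need extra slope and offset constants that this example does not handle.

```python
import math
import struct


def _to_dbm(raw):
    """Convert a 0.1 uW-per-LSB power reading to dBm; None if it reads zero."""
    milliwatts = raw / 10000.0
    return 10 * math.log10(milliwatts) if milliwatts > 0 else None


def decode_dom(a2_page):
    """Decode real-time diagnostics from bytes 96..105 of the A2h page."""
    temp, vcc, bias, tx, rx = struct.unpack(">hHHHH", bytes(a2_page[96:106]))
    return {
        "temperature_c": temp / 256.0,   # signed, 1/256 degC per LSB
        "vcc_v": vcc / 10000.0,          # 100 uV per LSB
        "tx_bias_ma": bias * 0.002,      # 2 uA per LSB
        "tx_power_dbm": _to_dbm(tx),
        "rx_power_dbm": _to_dbm(rx),
    }


def missing_fields(dom):
    """Names of fields the module reported as absent or zero power."""
    return sorted(name for name, value in dom.items() if value is None)


if __name__ == "__main__":
    page = bytearray(256)
    # Synthetic readings: 40 degC, 3.3 V, 6 mA bias, 1 mW TX, no RX light.
    page[96:106] = struct.pack(">hHHHH", 40 * 256, 33000, 3000, 10000, 0)
    dom = decode_dom(page)
    print(dom)
    print("missing:", missing_fields(dom))
```

A zero RX reading decodes to `None` here rather than negative infinity, so the monitoring side can tell "no light" from a parsing error.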

Cost and ROI: what you should budget for Open RAN optics

Optics cost varies widely by data rate, reach, and whether you choose OEM or third-party. In many real deployments, OEM modules can be roughly 1.3x to 2.0x the cost of comparable third-party optics, but OEM often reduces compatibility risk and shortens troubleshooting time. Third-party optics can still be cost-effective if you validate DOM behavior, switch acceptance, and thermal stability with a controlled test plan.

For TCO, include labor and downtime risk, not just the module purchase price. A single failed fronthaul link can stall radio integration work, and truck rolls are expensive. Also factor in failure rates influenced by connector contamination and handling practices; training and cleaning supplies often deliver higher ROI than chasing marginal price differences on transceivers.
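A back-of-envelope comparison makes the trade-off concrete. In the sketch below every number is a made-up placeholder (unit prices, failure rates, truck roll cost, validation cost), and the `site_tco` helper is illustrative. Plug in your own quotes and field history before drawing any conclusion.

```python
def site_tco(units, unit_price, expected_failure_rate, truck_roll_cost,
             validation_cost=0.0):
    """Purchase cost plus expected truck-roll cost plus one-off validation."""
    purchase = units * unit_price
    expected_rolls = units * expected_failure_rate
    return purchase + expected_rolls * truck_roll_cost + validation_cost


if __name__ == "__main__":
    # Placeholder scenario: 100 modules, OEM priced at 1.7x third-party.
    third_party = site_tco(100, 50.0, 0.02, 800.0, validation_cost=2000.0)
    oem = site_tco(100, 85.0, 0.01, 800.0)
    print(f"third-party: {third_party:.0f}  OEM: {oem:.0f}")
```

With these placeholder inputs the gap is small, which is the point: the answer depends far more on failure rate and truck roll cost than on the sticker price.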

If you are comparing vendors, consider running a pilot batch: 5 to 10 links installed in the same cabinet conditions, tested for stable RX power and error counters over a full day. That approach usually beats guessing based on spec sheets alone.

FAQ on Open RAN optical transceiver selection

What optical standard should I use for Open RAN fronthaul?

Use the standard that matches your port speed and fiber type: MMF SR at 850 nm for shorter runs with OM3/OM4, or SMF LR at 1310 nm for longer distances on OS2. Then verify the switch compatibility list and DOM telemetry expectations. [Source: IEEE 802.3 Ethernet PHY specifications]

Can I mix OEM and third-party transceivers in the same Open RAN switch?

Often you can, but you must validate acceptance and monitoring behavior. Some platforms restrict optics by EEPROM contents and may react differently after firmware upgrades. If you need predictable NOC workflows, standardize by site or at least by switch model and firmware version.

How do I check optical budget before ordering modules?

Measure end-to-end loss using certified fiber testing when possible, including the patch panels and jumpers. Then compare to the module’s documented transmit power and receiver sensitivity, and keep margin for aging and cleaning variability. If you cannot measure, conservative design and shorter reach classes reduce risk.

Why does MPO polarity cause “mystery” failures?

Because TX and RX fibers are physically swapped in the patching path while overall attenuation can still look acceptable. The link can appear dead even though OTDR results look good. Always verify polarity at the patch panel and confirm correct MPO orientation.

What temperature issues should I plan for in an Open RAN cabinet?

Cabinets near DC power and fans can raise internal temperatures beyond room conditions. Use transceivers rated for the expected ambient range, and test stability after heat soak. Monitoring RX power over time is more reliable than relying on initial link-up success.

Do I need DOM for Open RAN operations?

DOM is highly recommended because it enables early detection of degradation via RX power and temperature trends. Without DOM, you may only learn about problems after BER or traffic impact. Ensure your monitoring system understands the DOM fields your transceivers provide.

Open RAN optics selection is less about picking “the right wavelength” and more about matching reach, port speed, DOM behavior, and environmental reality. If you want the next step, review your switch optics compatibility list and run a small pilot batch before scaling across all radio sites in your Open RAN transport network.

Author bio: I am a field-focused photographer and network builder who documents real deployments, from fiber endface hygiene to transceiver telemetry validation. My work blends visual inspection discipline with hands-on optics troubleshooting in live Open RAN rollouts.