Open RAN optical ROI: what I learned from a field rollout

I was brought in when a regional operator planned to migrate radio access to Open RAN but stalled on the optical budget. The question was simple to ask and hard to answer: would the fronthaul and midhaul optics deliver measurable ROI, or just shift costs from radios to fiber and transceivers? This story covers the exact decisions we made, the numbers we measured, and the traps we avoided while deploying interoperable transport for Open RAN.

Problem: Open RAN fronthaul optics became a cost bottleneck

The rollout targeted a metro site cluster with 3 sectors per site and a mix of new and reused equipment. The challenge was optical reach and power: each added radio unit increased the number of transceiver pairs in the aggregation and edge transport. In the first design review, the team assumed “it will be cheaper because it is modular,” but the BOM still ballooned once we counted DWDM mux/demux, patch panels, optics, and spares.

We needed an ROI analysis that treated optics as an operational system, not a line item. That meant modeling link budgets, connector losses, transceiver compliance, and real power draw during peak traffic. We also had to align with how Open RAN systems typically separate functions and transport fronthaul over standardized Ethernet-based interfaces, then map that onto the physical-layer and optical-layer choices. For reference, we anchored expected optical behavior to the electrical and optical limits in the IEEE 802.3 Ethernet PHY specifications and used vendor datasheets for reach and power.

Environment specs: the network we had to make work

Our environment was a three-tier edge architecture: site radios connected to an in-building unit room, then to an edge aggregation rack, then onward to the metro core. Distances were not uniform: fiber runs ranged from 180 m (same building) to 18 km (across a utility corridor).

We used a mix of short-reach and long-reach optics, with most fronthaul carried over high-density Ethernet links. The transceiver selection hinged on wavelength, connector type, and operating temperature because outdoor patching and temporary splices exposed modules to harsher conditions than lab testing assumes.

| Optic type | Typical wavelength | Reach class | Connector | Data rate | Power (typ.) | Temp range | Example part numbers |
|---|---|---|---|---|---|---|---|
| SFP+ SR | 850 nm | Up to 300 m | LC | 10G | ~0.6 to 1.0 W | 0 to 70 °C | Cisco SFP-10G-SR, FS.com SFP-10GSR-85, Finisar FTLX8571D3BCL |
| SFP28 LR | 1310 nm | Up to 10 km | LC | 25G | ~1.0 to 1.8 W | -5 to 70 °C | Finisar FTLF1436P3BCL |
| QSFP28 LR4 | 1310 nm (4 lanes) | Up to 10 km | LC | 100G | ~3.5 to 5.0 W | 0 to 70 °C | Vendor LR4 modules for 100G Ethernet |
| QSFP+ 40G LR | 1310 nm | Up to 10 km | LC | 40G | ~3 to 4.5 W | 0 to 70 °C | Common 40G LR optics (vendor-specific) |

For the optical layer, we also considered DWDM where multiple aggregates needed separate wavelengths over shared fiber. That decision affected ROI because DWDM hardware amortizes well only when you pack enough wavelengths to keep utilization high. In our case, we used DWDM mainly at the longer corridors to avoid running new fiber.
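The utilization point can be made concrete with a small sketch. It assumes a simple split between shared DWDM capex (mux/demux, any amplification) and per-channel capex (tuned optics); the function names and every figure passed to them are hypothetical, not numbers from this rollout.

```python
import math


def cost_per_wavelength(shared_capex: float, per_channel_capex: float,
                        lit_channels: int) -> float:
    """Capex attributed to each lit wavelength on one DWDM span."""
    if lit_channels < 1:
        raise ValueError("at least one wavelength must be lit")
    return shared_capex / lit_channels + per_channel_capex


def breakeven_channels(shared_capex: float, per_channel_capex: float,
                       alt_cost_per_link: float):
    """Smallest lit-channel count at which DWDM is strictly cheaper per link
    than the alternative (for example, new fiber). None if it never is."""
    saving_per_channel = alt_cost_per_link - per_channel_capex
    if saving_per_channel <= 0:
        return None
    return math.floor(shared_capex / saving_per_channel) + 1
```

With illustrative inputs such as `breakeven_channels(8000, 500, 2500)`, the shared hardware only pays back once five wavelengths are lit; below that, each wavelength carries too much of the mux/demux cost.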

Chosen solution: interoperable optics with ROI guardrails

We chose a pragmatic approach: standard short-reach optics for intra-building links, and 1310 nm long-reach optics for metro corridor segments. Where utilization justified it, we used DWDM to consolidate fiber pairs. This reduced capex by avoiding new fiber trenching, while controlling opex by selecting modules with predictable power and stable performance across temperature swings.

Why this worked for Open RAN

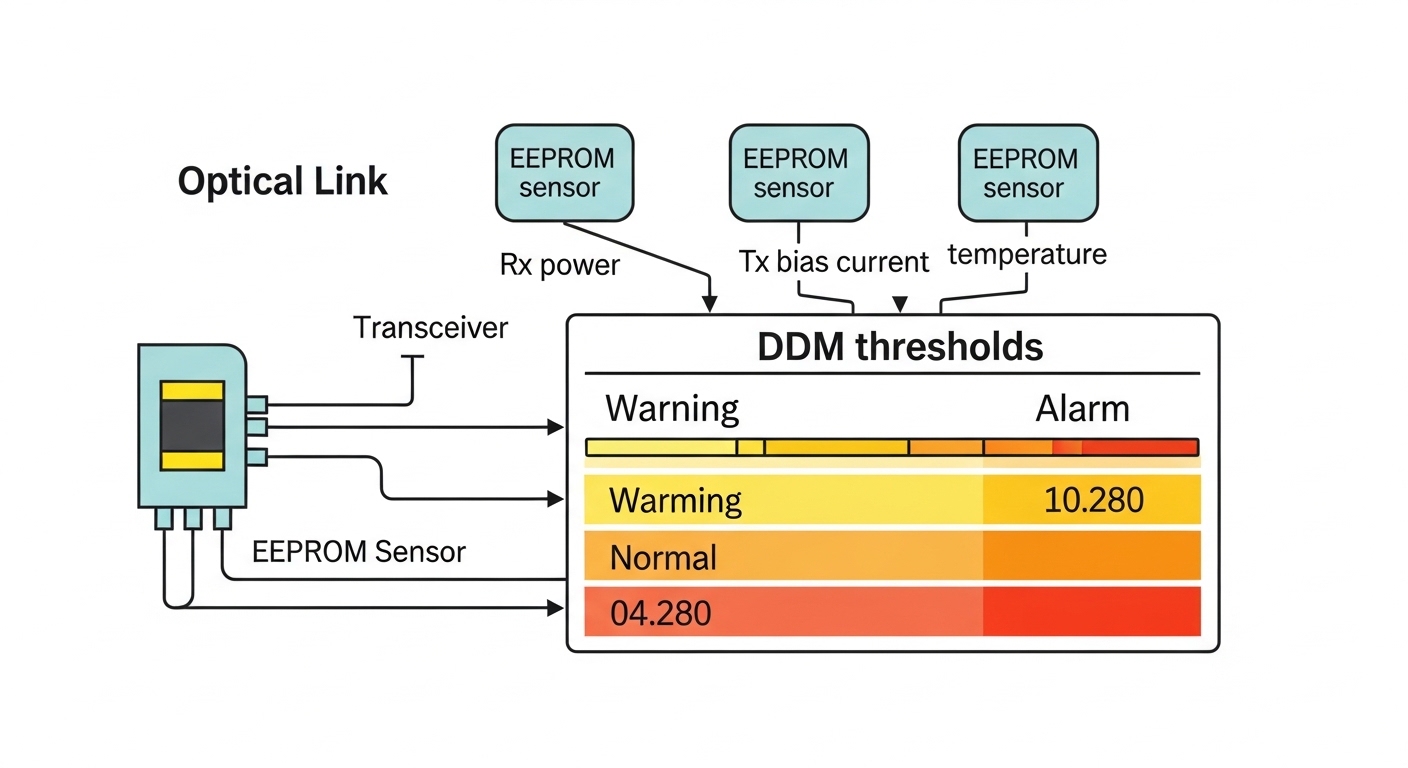

Open RAN deployments are sensitive to timing stability and link reliability because radio functions depend on consistent transport. While the radio stack defines higher-layer behavior, the optical layer still sets the floor: receiver sensitivity, dispersion tolerance, and connector cleanliness determine packet loss and retransmissions. We required optics with digital optical monitoring (DOM) support so we could monitor laser bias current and transmit and receive optical power drift over time, then correlate those metrics with performance alarms.

Pro Tip: In field tests, we found that the biggest “mystery” packet loss events often traced back to a DOM-reported sag in received optical power after a cleaning lapse, not to the transceiver type itself. If you can trend DOM optical power and temperature hourly, your ROI improves because you fix the root cause faster and reduce truck rolls.
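A minimal sketch of that kind of trending, assuming hourly receive-power samples in dBm have already been collected into a list (oldest first). The window sizes and the 1.5 dB threshold are placeholder assumptions to tune per link, not values from our deployment.

```python
from statistics import median


def rx_power_sag_db(samples_dbm, baseline_n=24, recent_n=3):
    """Drop in dB from the baseline median to the recent median.

    A positive result means received power has fallen since the baseline.
    """
    if len(samples_dbm) < baseline_n + recent_n:
        raise ValueError("not enough samples for baseline plus recent window")
    baseline = median(samples_dbm[:baseline_n])
    recent = median(samples_dbm[-recent_n:])
    return baseline - recent


def needs_inspection(samples_dbm, sag_threshold_db=1.5, **windows):
    """Flag a port for connector inspection when the sag crosses the threshold."""
    return rx_power_sag_db(samples_dbm, **windows) >= sag_threshold_db
```

Medians are used instead of single readings so that one noisy sample does not trigger a truck roll.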

Implementation steps: how we executed the ROI analysis

We ran the ROI math in parallel with a phased deployment. First, we built a link inventory: every radio port, every aggregation uplink, and the planned optics per segment. Next, we calculated optical loss budgets using measured connector insertion loss and fiber attenuation, then compared the total with the module’s datasheet figures: its reach spec and its power budget (minimum transmit power minus receiver sensitivity).
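The loss-budget step can be sketched as follows. The `Link` structure, the default splice loss, and the aging allowance are assumptions for illustration; the transmit-power and sensitivity values should come from the specific module's datasheet.

```python
from dataclasses import dataclass


@dataclass
class Link:
    length_km: float
    fiber_db_per_km: float       # measured attenuation at the operating wavelength
    connector_losses_db: list    # measured insertion loss of each mated pair
    splice_count: int = 0
    splice_loss_db: float = 0.1  # assumed loss per splice


def link_loss_db(link: Link) -> float:
    """Total passive loss: fiber plus connectors plus splices."""
    fiber = link.length_km * link.fiber_db_per_km
    connectors = sum(link.connector_losses_db)
    splices = link.splice_count * link.splice_loss_db
    return fiber + connectors + splices


def margin_db(link: Link, tx_min_dbm: float, rx_sensitivity_dbm: float,
              aging_allowance_db: float = 1.0) -> float:
    """Remaining margin after subtracting link loss and an aging allowance
    from the module power budget (min Tx power minus Rx sensitivity)."""
    power_budget = tx_min_dbm - rx_sensitivity_dbm
    return power_budget - link_loss_db(link) - aging_allowance_db
```

A negative or near-zero margin is a signal to re-clean, re-terminate, or pick a different optic class before the link carries traffic.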

Then we modeled TCO as three buckets: capex (optics, DWDM, patching), opex (power, spares handling, maintenance labor), and risk cost (downtime and repeat testing). For power, we used measured rack draw during traffic bursts and validated with module power ranges from vendor documentation. We also compared OEM modules versus third-party compatible options, because compatibility verification time is part of the cost.
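As a rough sketch of the three-bucket model, the function below sums capex, opex, and risk cost over a planning horizon. The field names and the flat energy price are simplifying assumptions, and any numbers fed in are the reader's own, not figures from this project.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class TcoInputs:
    capex: float                   # optics, DWDM, patching
    avg_power_w: float             # measured average draw attributable to optics
    energy_price_per_kwh: float
    maintenance_per_year: float    # spares handling and maintenance labor
    outage_hours_per_year: float   # expected downtime and repeat testing
    outage_cost_per_hour: float


def tco(inputs: TcoInputs, years: int) -> dict:
    """Return the three buckets and their total over the given horizon."""
    energy = (inputs.avg_power_w / 1000) * HOURS_PER_YEAR * years \
        * inputs.energy_price_per_kwh
    opex = energy + inputs.maintenance_per_year * years
    risk = inputs.outage_hours_per_year * inputs.outage_cost_per_hour * years
    return {
        "capex": inputs.capex,
        "opex": opex,
        "risk": risk,
        "total": inputs.capex + opex + risk,
    }
```

Keeping risk as its own bucket makes it visible when a cheaper module choice is paid for later in downtime.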

Measured results: what the ROI looked like

After the first wave, we had 24 sites live with mixed optics and centralized spares. The measured improvements came from two levers: fewer truck rolls and better spares utilization. By requiring DOM visibility, we reduced “unknown optics” incidents by about 35 percent compared with the pilot where DOM was not standardized across vendors.

On energy, optics power mattered because edge racks were already near their thermal limits. In our monitoring, long-reach optics drew roughly 2 to 4 W more per active port than short-reach optics, but the bigger win was avoiding over-provisioning: we matched reach class to distance more tightly. Over a 12-month horizon, we estimated that our mixed SR/LR plan drew roughly 8 to 12 percent less optics-related power than a conservative “all LR” design.
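The comparison between plans reduces to simple arithmetic, sketched here. The per-class wattages and port counts in the usage note are illustrative placeholders chosen for round numbers, not our measured values.

```python
HOURS_PER_YEAR = 8760


def plan_power_w(ports_by_class: dict, watts_by_class: dict) -> float:
    """Total optics power for a plan, given port counts and per-port watts."""
    return sum(count * watts_by_class[cls] for cls, count in ports_by_class.items())


def percent_saving(baseline_w: float, candidate_w: float) -> float:
    """How much less power the candidate plan draws, as a percentage of baseline."""
    return (baseline_w - candidate_w) / baseline_w * 100


def annual_kwh(power_w: float) -> float:
    """Energy over one year of continuous operation."""
    return power_w * HOURS_PER_YEAR / 1000
```

For example, with hypothetical per-port figures of 1.0 W for SR and 1.5 W for LR, comparing `{"LR": 100}` against `{"SR": 30, "LR": 70}` shows how shifting even a third of the ports to a shorter reach class changes the total.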

Selection criteria checklist: engineer decisions that protect ROI

- Distance and loss budget: verify measured fiber attenuation and connector losses, not just “reach” marketing.

- Budget alignment: ensure the chosen optic class matches the link length distribution (SR for short, LR for metro, DWDM only when needed).

- Switch and cage compatibility: confirm vendor transceiver support lists and optics form-factor support (SFP+, SFP28, QSFP28).

- DOM and telemetry: require digital optical monitoring so operations can trend laser power and temperature.

- Operating temperature: outdoor or poorly ventilated rooms can push modules out of spec, impacting error rates.

- Vendor lock-in risk: weigh OEM premium versus third-party compatibility testing time and spare lead times.

- Spare strategy: keep at least one verified spare per optics class per site group; standardize labels and DOM thresholds.
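The first two checklist items can be turned into a simple selection rule. This sketch uses the nominal reach figures from the optics table above and a hypothetical 80 percent derating factor; a real decision should still rest on the measured loss budget, with distance only as a first filter.

```python
# Nominal reach per class in meters, taken from the optics table above.
REACH_CLASSES_M = [("SR", 300), ("LR", 10_000)]


def pick_reach_class(distance_m: float, derate: float = 0.8):
    """Return the shortest reach class whose derated reach covers the run.

    Returns None when no listed class fits, meaning the link needs its own
    case-by-case design rather than a catalog optic.
    """
    for name, nominal_reach_m in REACH_CLASSES_M:
        if distance_m <= nominal_reach_m * derate:
            return name
    return None
```

Under these assumptions, a 180 m in-building run maps to SR, while an 18 km corridor returns `None` and is flagged for individual engineering.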

Common mistakes and troubleshooting tips from the field

1) Reach overconfidence from “max distance” specs. Root cause: datasheet reach assumes a specific fiber type and connector quality. Solution: measure actual attenuation with an OTDR or an optical loss test set and add margin for patch panels and aging.

2) DOM not standardized across vendors. Root cause: different vendors expose telemetry fields differently, so alarms are inconsistent. Solution: define a common monitoring dashboard and validate DOM thresholds during commissioning before scaling.

3) Connector contamination after repeated swaps. Root cause: repeated transceiver pulls and patch changes introduce dust and micro-scratches, which add insertion loss and back-reflection and erode link margin. Solution: adopt a strict cleaning workflow and validate with a fiber microscope; require re-cleaning after any module swap.

4) Thermal mismatch in edge enclosures. Root cause: optics operate near upper temperature limits when fans degrade or doors stay open. Solution: log enclosure temperature and enforce preventative maintenance intervals.
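For mistake 2, one way to get consistent alarms is to normalize each vendor's telemetry into a common schema before it reaches the dashboard. The alias names below are hypothetical stand-ins, not real vendor field names; the mW to dBm conversion is the standard 10·log10 relation.

```python
import math

# Hypothetical vendor spellings mapped onto one common schema.
FIELD_ALIASES = {
    "rx_power_dbm": ("rx_power_dbm", "RxPower", "rx_pwr"),
    "tx_power_dbm": ("tx_power_dbm", "TxPower", "tx_pwr"),
    "temperature_c": ("temperature_c", "Temp", "module_temp"),
}


def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power from milliwatts to dBm."""
    return 10 * math.log10(power_mw)


def normalize_dom(raw: dict) -> dict:
    """Map a vendor-specific DOM reading onto the common field names.

    Fields the vendor does not expose are simply absent from the result,
    so the caller can alarm on missing telemetry as well as bad values.
    """
    normalized = {}
    for common_name, aliases in FIELD_ALIASES.items():
        for alias in aliases:
            if alias in raw:
                normalized[common_name] = raw[alias]
                break
    return normalized
```

Validating this mapping during commissioning, per vendor and per firmware version, is what keeps thresholds comparable once the rollout scales.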

Cost and ROI note: realistic ranges and TCO tradeoffs

In practice, OEM optics cost more per module but can reduce commissioning time and compatibility risk. Third-party compatible optics often cut initial capex, yet the TCO can rise if you spend days in compatibility testing, firmware interactions, or repeated replacements. For a typical edge deployment, optics plus patching hardware can represent a meaningful share of rollout spend, while DWDM capex only pays back when wavelength utilization stays high.
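The OEM versus third-party tradeoff can be compared with a sketch like the one below. Every input (prices, qualification hours, failure rates, swap cost) is a placeholder the reader must supply; none of them is a figure from this rollout.

```python
def sourcing_tco(unit_price: float, quantity: int, qualification_hours: float,
                 labor_rate: float, annual_failure_rate: float,
                 swap_cost: float, years: int) -> float:
    """Purchase cost plus compatibility testing plus expected replacements."""
    purchase = unit_price * quantity
    qualification = qualification_hours * labor_rate
    expected_failures = quantity * annual_failure_rate * years
    replacements = expected_failures * (unit_price + swap_cost)
    return purchase + qualification + replacements
```

Running it once per sourcing option with the same quantity and horizon shows whether a lower unit price survives the added qualification time and replacement visits.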

Our planning assumption was conservative: we budgeted for spares and for cleaning/verification labor, because those are the hidden costs that determine uptime. The ROI improved when we standardized DOM telemetry and matched optic reach class to real measured distances, reducing both power waste and troubleshooting time.

FAQ