When a telecom operator faces rising capex for radio access upgrades or slow vendor lead times, the access network becomes the bottleneck. This article explains how Open RAN changes that reality by separating interfaces and enabling multi-vendor ecosystems. It is written for network architects, RAN engineers, and procurement teams evaluating disaggregation for 4G and 5G rollouts.

Why Open RAN matters when networks must evolve fast

Traditional RAN deployments often bundle radio units, baseband processing, and management software into tightly coupled vendor systems. That coupling can slow upgrades, inflate integration costs, and make it harder to introduce new capabilities across sites. Open RAN reframes the design by standardizing key interfaces so components can interoperate across vendors.

In practice, Open RAN centers on the functional split between the radio unit (RU), distributed unit (DU), and centralized unit (CU), with standardized fronthaul (RU to DU) and midhaul (DU to CU) interfaces. This lets operators scale capacity by choosing best-fit components rather than buying a full stack from a single supplier. For standards context, refer to IEEE 802.3 for Ethernet transport behaviors and timing support, IEEE 1588 for the Precision Time Protocol (PTP), and the O-RAN Alliance and 3GPP specifications for the RAN interfaces themselves. [Source: IEEE 802.3 series via IEEE Standards]

Pro Tip: In field deployments, the biggest “hidden” benefit of Open RAN is not just vendor flexibility; it is the ability to isolate integration risk. If your fronthaul is standardized and your DU/CU interfaces are consistent, you can trial a new vendor for a subset of sites and measure KPIs before scaling—reducing the blast radius of a failed upgrade.

Open RAN building blocks: interfaces, performance targets, and compatibility

To evaluate Open RAN credibly, you need to map the end-to-end chain: radio unit (RU), distributed unit (DU), centralized unit (CU), and transport (fronthaul and midhaul). The interfaces between these blocks define how signals are carried and how timing is maintained. Even if two vendors claim “Open” compliance, operational interoperability depends on configuration details, timing profiles, and software release maturity.

Engineers commonly focus on Ethernet transport characteristics (latency, jitter, packet loss), timing distribution (often via PTP in modern Ethernet-based transport), and radio software behavior. Many operators design for deterministic transport budgets and validate with packet-level telemetry and radio KPIs. For timing distribution guidance, consult vendor and standards documentation for PTP behavior and network timing constraints, and validate with a lab-to-field test plan.
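Packet-level telemetry only becomes useful once it is reduced to the three transport numbers named above. The sketch below shows one way to do that, assuming your probes report a sequence number and a one-way delay in microseconds. `ProbeSample` and `summarize_probes` are hypothetical names, not part of any vendor tool. Jitter is computed as the mean absolute difference between consecutive delays, a simple IPDV-style metric. Your test equipment may use a different definition.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class ProbeSample:
    seq: int           # probe sequence number assigned by the sender (hypothetical schema)
    one_way_us: float  # measured one-way delay in microseconds


def summarize_probes(samples: list[ProbeSample], sent: int) -> dict[str, float]:
    """Summarize one probe run: latency, IPDV-style jitter, and loss ratio."""
    # Order by sequence number so jitter compares consecutive probes.
    ordered = sorted(samples, key=lambda s: s.seq)
    delays = [s.one_way_us for s in ordered]
    # Jitter here = mean absolute delay difference between consecutive probes.
    diffs = [abs(b - a) for a, b in zip(delays, delays[1:])]
    # Count unique sequence numbers so duplicated probes cannot hide loss.
    received = len({s.seq for s in samples})
    return {
        "mean_latency_us": mean(delays) if delays else float("nan"),
        "max_latency_us": max(delays) if delays else float("nan"),
        "jitter_us": mean(diffs) if diffs else 0.0,
        "loss_ratio": 1 - received / sent if sent else 0.0,
    }


if __name__ == "__main__":
    run = [ProbeSample(0, 50.0), ProbeSample(1, 52.0),
           ProbeSample(3, 55.0), ProbeSample(2, 51.0)]
    print(summarize_probes(run, sent=5))
```

The example run has five probes sent and four received, so it reports a 20% loss ratio alongside the latency and jitter figures.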

| Spec category | Open RAN-related target | Typical implementation examples | Where it impacts you |

|---|---|---|---|

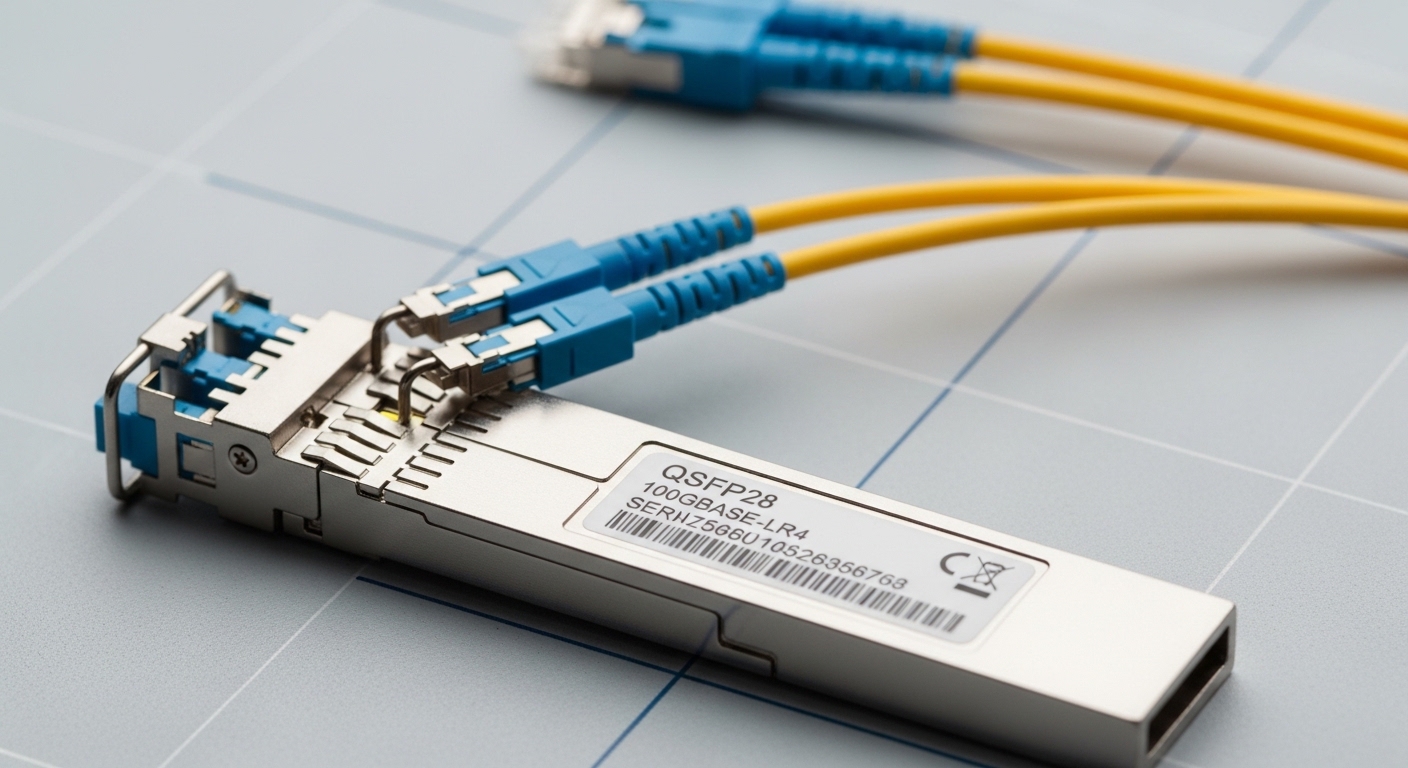

| Data rate (fronthaul) | High throughput; depends on functional split | 10G, 25G, 50G Ethernet links (site dependent) | Determines optics choice, switching capacity, and oversubscription risk |

| Latency budget | Strict bounds depending on split | Low-latency leaf-spine fabric or dedicated VLAN/traffic engineering | Impacts HARQ timing, scheduling, and user-plane responsiveness |

| Jitter and packet loss | Minimize jitter; prevent loss during congestion | QoS with priority queues; congestion management | Stabilizes radio processing and reduces retransmissions |

| Temperature range | Operational survivability at cell sites | Outdoor cabinets and controlled indoor equipment rooms | Prevents thermal throttling and optics/ASIC derating failures |

| Optical transport (example) | Wavelength and reach aligned to plant | 850 nm multimode short reach or 1310 nm single-mode reach | Reduces link outages and maintenance calls |

Real deployment scenario: Open RAN in a leaf-spine transport design

Consider a leaf-spine data center fabric supporting a regional radio footprint. A typical design uses 48-port 10G or 25G top-of-rack (ToR) switches at the leaf layer, with spine uplinks engineered for low congestion. In one rollout pattern, 60 sites are brought online in waves. Each wave provisions RU-to-DU fronthaul and DU-to-CU midhaul using VLAN segmentation and QoS policies.
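"Engineered for low congestion" can be made concrete with a leaf oversubscription ratio: total site-facing capacity divided by total spine-facing capacity. A ratio above 1.0 means the uplinks cannot carry every downlink at line rate at the same time. The sketch below computes the ratio and the minimum uplink count for a target ratio. The port counts and the 100G uplink speed in the example are hypothetical, and the function names are illustrative only.

```python
import math


def oversubscription_ratio(down_ports: int, down_gbps: float,
                           up_ports: int, up_gbps: float) -> float:
    """Downlink capacity divided by uplink capacity for one leaf (ToR) switch."""
    return (down_ports * down_gbps) / (up_ports * up_gbps)


def min_uplinks(down_ports: int, down_gbps: float, up_gbps: float,
                target_ratio: float = 1.0) -> int:
    """Smallest uplink count that keeps the ratio at or below target_ratio."""
    needed_gbps = down_ports * down_gbps / target_ratio
    return math.ceil(needed_gbps / up_gbps)


if __name__ == "__main__":
    # Hypothetical leaf: 48 x 25G site-facing ports, 6 x 100G spine uplinks.
    print(oversubscription_ratio(48, 25, 6, 100))
    print(min_uplinks(48, 25, 100))
```

In this hypothetical example, 6 x 100G uplinks give 2:1 oversubscription, and 12 uplinks would be needed for a non-blocking leaf. Which ratio is acceptable depends on your split and traffic profile.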

Engineers set a measurable transport budget before first RF activation: for example, allocate a conservative one-way latency target between DU and the relevant CU processing boundary, and enforce packet loss thresholds using monitored counters. During acceptance testing, field teams validate end-to-end performance by correlating interface counters (CRC errors, dropped frames), PTP offset stability (where applicable), and radio KPIs such as PRB utilization and RLC retransmissions. The outcome is not theoretical; it is a repeatable runbook that reduces time-to-service and makes upgrades safer.
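An acceptance check works best as a budget comparison that a script can run per site and per wave. The sketch below assumes the per-site measurements have already been collected into a dictionary. All limit values are hypothetical placeholders, not figures from any standard. `TransportBudget` and `acceptance_failures` are illustrative names. Radio KPIs such as RLC retransmission rate could be added to the same check list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransportBudget:
    # All limits are hypothetical placeholders; derive real ones from your design.
    max_one_way_us: float
    max_loss_ratio: float
    max_abs_ptp_offset_ns: float
    max_crc_errors: int


def acceptance_failures(measured: dict[str, float],
                        budget: TransportBudget) -> list[str]:
    """Return human-readable budget violations; an empty list means pass."""
    checks = [
        ("one_way_us", measured["one_way_us"], budget.max_one_way_us),
        ("loss_ratio", measured["loss_ratio"], budget.max_loss_ratio),
        # PTP offset can be negative, so compare its magnitude.
        ("abs_ptp_offset_ns", abs(measured["ptp_offset_ns"]),
         budget.max_abs_ptp_offset_ns),
        ("crc_errors", measured["crc_errors"], budget.max_crc_errors),
    ]
    return [f"{name}={value} exceeds limit {limit}"
            for name, value, limit in checks if value > limit]


if __name__ == "__main__":
    budget = TransportBudget(max_one_way_us=100.0, max_loss_ratio=1e-6,
                             max_abs_ptp_offset_ns=100.0, max_crc_errors=0)
    site = {"one_way_us": 80.0, "loss_ratio": 0.0,
            "ptp_offset_ns": -140.0, "crc_errors": 3}
    for failure in acceptance_failures(site, budget):
        print(failure)
```

Returning a list of violations, rather than a single boolean, gives field teams a record of why a site failed that they can attach to the wave's runbook.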

Selection criteria checklist: how teams choose Open RAN components

Buying Open RAN is not just a technology decision; it is a systems integration and lifecycle decision. Use the checklist below to evaluate vendor offerings and reduce integration surprises. It reflects how procurement and engineering teams typically work when they need predictable timelines and measurable outcomes.

- Distance and transport reach: confirm fronthaul and midhaul physical plant constraints, including fiber type and expected link lengths.

- Functional split compatibility: ensure RU/DU/CU components support the same split configuration and that timing assumptions match.

- Switch and optics compatibility: validate optics models and switch firmware behavior under your traffic profile; avoid “it works in the lab” assumptions.

- DOM and diagnostics support: require transceiver diagnostics compatibility (DOM readings, alarm thresholds) and confirm tooling integration.

- Operating temperature and enclosure constraints: verify equipment derating curves for outdoor cabinets and controlled indoor rooms.

- Release cadence and interoperability testing: check how vendors coordinate software releases; require a documented interoperability matrix.

- Vendor lock-in risk: quantify how much of the stack is actually replaceable; negotiate portability for orchestration and monitoring.
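A weighted scoring matrix is one way to turn this checklist into a comparable number per vendor. The sketch below assumes each criterion is scored 0 to 5 by the evaluation team. The criterion keys mirror the checklist, but the weights are hypothetical and should be set by your own team. The function names are illustrative.

```python
import math

# Hypothetical weights for the checklist criteria; they must sum to 1.0.
WEIGHTS = {
    "transport_reach": 0.15,
    "split_compatibility": 0.25,
    "optics_switch_compat": 0.15,
    "dom_diagnostics": 0.10,
    "thermal_enclosure": 0.10,
    "release_interop": 0.15,
    "lock_in_risk": 0.10,
}
assert math.isclose(sum(WEIGHTS.values()), 1.0)


def weighted_score(scores: dict[str, float]) -> float:
    """Weighted 0-5 score; every criterion must be scored."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"unscored criteria: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0.0 <= scores[name] <= 5.0:
            raise ValueError(f"{name} score must be between 0 and 5")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def rank_vendors(vendors: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Return (vendor, score) pairs sorted from best to worst."""
    scored = [(vendor, weighted_score(s)) for vendor, s in vendors.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    vendor_a = {name: 4.0 for name in WEIGHTS} | {"split_compatibility": 5.0}
    vendor_b = {name: 5.0 for name in WEIGHTS} | {"split_compatibility": 1.0}
    print(rank_vendors({"vendor_a": vendor_a, "vendor_b": vendor_b}))
```

A purely weighted score can hide a disqualifying gap. Teams may prefer to treat criteria such as split compatibility as pass/fail gates and only then rank the remaining vendors by weighted score.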

Advantages and limitations: where Open RAN delivers and where it still bites

The strongest advantages of Open RAN show up in lifecycle management. When interfaces are standardized, you can introduce new components without replacing the entire stack, and you can test upgrades on a subset of sites. This can reduce mean time to repair by aligning spare strategies across vendors and simplifying troubleshooting with consistent interface telemetry. It can also improve procurement leverage by enabling multi-vendor sourcing for DUs, CUs, and transport elements.

However, limitations are real. Integration quality depends on software maturity, configuration discipline, and the correctness of timing and transport engineering. Some operators experience initial performance variability during early waves until traffic engineering and radio parameter baselines are tuned. As a result, Open RAN projects succeed when they treat interoperability testing and automation as first-class workstreams, not afterthoughts.

Common pitfalls and troubleshooting tips in Open RAN projects

Open RAN failures often look like radio issues, but root causes are frequently transport, timing, or configuration. Below are concrete failure modes that field teams commonly encounter, with practical solutions.

“Intermittent link drops” that correlate with congestion

Root cause: QoS misconfiguration or insufficient buffer tuning causing drops under burst traffic, which then destabilizes fronthaul processing. Solution: enforce traffic classes with strict priority where required, validate queue occupancy with switch telemetry, and run controlled traffic tests that mimic peak radio load.
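To test whether drops really correlate with congestion, compare per-interval drop counts with queue occupancy sampled over the same intervals. The sketch below assumes cumulative drop counters that do not reset or wrap during the window, and one queue-occupancy sample (in percent) per counter interval. The 80% high-watermark default is a hypothetical choice, and the function names are illustrative.

```python
def interval_deltas(cumulative: list[int]) -> list[int]:
    """Per-interval increments from a cumulative counter (assumes no resets or wraps)."""
    return [b - a for a, b in zip(cumulative, cumulative[1:])]


def drops_during_congestion(queue_pct: list[float], cumulative_drops: list[int],
                            high_watermark_pct: float = 80.0) -> float:
    """Fraction of drop intervals whose queue occupancy was at or above the watermark."""
    drops = interval_deltas(cumulative_drops)
    if len(drops) != len(queue_pct):
        raise ValueError("need one queue sample per counter interval")
    drop_intervals = [i for i, d in enumerate(drops) if d > 0]
    if not drop_intervals:
        return 0.0
    congested = sum(1 for i in drop_intervals if queue_pct[i] >= high_watermark_pct)
    return congested / len(drop_intervals)


if __name__ == "__main__":
    # Five counter readings -> four intervals, each with one queue sample.
    print(drops_during_congestion([10.0, 85.0, 90.0, 20.0], [0, 0, 5, 9, 9]))
```

A fraction near 1.0 points toward queue or buffer tuning. A low fraction suggests the drops have another cause, such as physical-layer errors, which the CRC counters should confirm.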

“PTP offset drift” leading to radio KPI degradation

Root cause: timing distribution loops, incorrect grandmaster selection, or unstable network paths causing offset excursions. Solution: lock the timing hierarchy, validate PTP boundary conditions, and confirm that network paths used for timing are consistent and monitored for path changes.
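Offset excursions and grandmaster changes can both be counted from a time series of PTP client samples. The sketch below assumes each sample carries a timestamp, the reported offset from master in nanoseconds, and the grandmaster identity currently in use. `PtpSample` and `ptp_health` are hypothetical names, and the 100 ns limit in the example is a placeholder rather than a standards-derived value.

```python
from typing import NamedTuple


class PtpSample(NamedTuple):
    t_s: float          # sample time in seconds
    offset_ns: float    # reported offset from master in nanoseconds
    grandmaster: str    # grandmaster identity seen by this clock


def ptp_health(samples: list[PtpSample], max_abs_offset_ns: float) -> dict[str, float]:
    """Count offset excursions and grandmaster changes, and track the worst offset."""
    excursions = 0
    gm_changes = 0
    worst = 0.0
    in_excursion = False
    previous_gm = None
    for s in sorted(samples, key=lambda x: x.t_s):
        outside = abs(s.offset_ns) > max_abs_offset_ns
        if outside and not in_excursion:
            excursions += 1  # count each entry into an out-of-bound window once
        in_excursion = outside
        if previous_gm is not None and s.grandmaster != previous_gm:
            gm_changes += 1  # an unexpected change hints at a hierarchy or path issue
        previous_gm = s.grandmaster
        worst = max(worst, abs(s.offset_ns))
    return {"excursions": excursions,
            "grandmaster_changes": gm_changes,
            "worst_abs_offset_ns": worst}


if __name__ == "__main__":
    series = [PtpSample(0, 10, "gmA"), PtpSample(1, 150, "gmA"),
              PtpSample(2, 160, "gmA"), PtpSample(3, 20, "gmB"),
              PtpSample(4, -200, "gmB")]
    print(ptp_health(series, max_abs_offset_ns=100))
```

Counting excursions as windows, rather than as individual samples, keeps one long drift event from looking like many separate faults.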

“DOM alarms and monitoring gaps” after optic swaps

Root cause: transceiver diagnostics compatibility issues or missing threshold mappings in your monitoring stack. Solution: standardize on optics and firmware combinations that your operational tooling supports, verify DOM alarm thresholds, and run a post-change validation checklist for alarms, readings, and event logs.
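A post-swap validation can check two things: whether each DOM reading falls inside its alarm thresholds, and whether readings and threshold mappings line up at all. The sketch below assumes readings and thresholds have already been pulled into dictionaries keyed by metric name. The metric names, threshold values, and function names are hypothetical, and real modules expose their own alarm and warning thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlarmThresholds:
    low_alarm: float
    high_alarm: float


def dom_findings(readings: dict[str, float],
                 thresholds: dict[str, AlarmThresholds]) -> list[str]:
    """Compare DOM readings with mapped alarm thresholds and report gaps."""
    findings = []
    for name, value in sorted(readings.items()):
        limits = thresholds.get(name)
        if limits is None:
            # Reading arrives but the monitoring stack has nothing to compare it to.
            findings.append(f"{name}: no threshold mapped (monitoring gap)")
        elif not limits.low_alarm <= value <= limits.high_alarm:
            findings.append(f"{name}: {value} outside "
                            f"[{limits.low_alarm}, {limits.high_alarm}]")
    for name in sorted(set(thresholds) - set(readings)):
        # Threshold exists but the optic (or the poller) reports no value.
        findings.append(f"{name}: threshold mapped but no reading reported")
    return findings


if __name__ == "__main__":
    readings = {"temperature_c": 45.0, "rx_power_dbm": -15.0, "bias_ma": 7.5}
    thresholds = {"temperature_c": AlarmThresholds(-5.0, 75.0),
                  "rx_power_dbm": AlarmThresholds(-14.0, 2.0),
                  "vcc_v": AlarmThresholds(3.0, 3.6)}
    for finding in dom_findings(readings, thresholds):
        print(finding)
```

Reporting unmapped readings and missing readings separately mirrors the two failure modes described above: diagnostics the tooling cannot interpret, and alarms that can never fire.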

“Works at low load, fails during scale-up” after software updates

Root cause: resource contention in CU/DU compute (CPU pinning, NUMA locality, memory bandwidth) or mismatched configuration defaults after upgrade. Solution: capture baseline performance counters before upgrades, apply deterministic CPU and memory policies, and run load tests that match the target wave scale.
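Comparing pre-upgrade baselines with post-upgrade counters is easier to automate when each metric declares which direction counts as "worse". The sketch below flags metrics whose relative change worsened by more than a tolerance, and marks metrics that disappeared after the upgrade with NaN. The metric names and the 10% default tolerance are hypothetical, and `upgrade_regressions` is an illustrative name.

```python
import math


def upgrade_regressions(baseline: dict[str, float], after: dict[str, float],
                        higher_is_worse: dict[str, bool],
                        tolerance: float = 0.10) -> dict[str, float]:
    """Return metrics whose relative change worsened by more than `tolerance`."""
    flagged = {}
    for metric, before in baseline.items():
        if metric not in after:
            flagged[metric] = math.nan  # metric disappeared after the upgrade
            continue
        now = after[metric]
        if before == 0:
            # Avoid division by zero: any change from a zero baseline is unbounded.
            change = 0.0 if now == 0 else math.copysign(math.inf, now)
        else:
            change = (now - before) / abs(before)
        # Flip the sign for metrics where a decrease is the regression.
        worsening = change if higher_is_worse[metric] else -change
        if worsening > tolerance:
            flagged[metric] = worsening
    return flagged


if __name__ == "__main__":
    base = {"cpu_util_pct": 50.0, "throughput_mbps": 1000.0}
    post = {"cpu_util_pct": 60.0, "throughput_mbps": 950.0}
    direction = {"cpu_util_pct": True, "throughput_mbps": False}
    print(upgrade_regressions(base, post, direction))
```

Running the same comparison at each load step of the scale-up test helps separate regressions that only appear under load from those visible at idle.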

Cost and ROI note: how to estimate TCO beyond transceiver pricing

Open RAN ROI is best modeled as total cost of ownership across four areas: equipment procurement, integration labor, operational overhead, and upgrade risk. Transceivers and optics can vary widely by vendor and reach; for example, short-reach optics for data center links may be relatively inexpensive, while long-reach or ruggedized options cost more. In many deployments, the largest cost lever is integration and the number of sites affected by rollback risk.

Typical budgeting ranges vary by region and scope, but teams often see meaningful savings when multi-vendor sourcing reduces procurement premiums and when standardized interfaces lower integration effort. Third-party components can reduce unit cost, yet they may increase warranty complexity and require additional validation. A practical approach is to compare OEM vs third-party using a risk-adjusted model: include expected failure rates, spares logistics, and time-to-repair based on your maintenance history.
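The risk-adjusted comparison can be sketched as a small cost model. This one makes several simplifying assumptions: each failure consumes one replacement unit and incurs outage cost until it is repaired, spares are bought up front as a fraction of the fleet, and costs are not discounted over time. `SourcingOption` and `risk_adjusted_tco` are hypothetical names, and every number in the example is illustrative, not market data.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SourcingOption:
    unit_price: float            # purchase price per unit
    annual_failure_rate: float   # expected failures per unit per year
    spare_ratio: float           # spares bought up front, as a fraction of units
    repair_hours: float          # mean time to restore per failure
    validation_cost: float       # one-off interoperability and acceptance testing


def risk_adjusted_tco(opt: SourcingOption, units: int, years: int,
                      outage_cost_per_hour: float) -> float:
    """Undiscounted cost over `years`: capex, validation, and expected failures."""
    capex = units * opt.unit_price * (1 + opt.spare_ratio)
    expected_failures = units * opt.annual_failure_rate * years
    # Each failure consumes a replacement unit and incurs outage cost until repaired.
    cost_per_failure = opt.unit_price + opt.repair_hours * outage_cost_per_hour
    return capex + opt.validation_cost + expected_failures * cost_per_failure


if __name__ == "__main__":
    oem = SourcingOption(1000.0, 0.01, 0.05, 4.0, 0.0)
    third_party = SourcingOption(600.0, 0.02, 0.10, 8.0, 20000.0)
    for label, opt in (("OEM", oem), ("third-party", third_party)):
        print(label, math.floor(risk_adjusted_tco(opt, units=100, years=5,
                                                  outage_cost_per_hour=500.0)))
```

With these illustrative inputs, the lower third-party unit price is outweighed by higher failure, repair, and validation costs. Different failure rates or outage costs from your maintenance history could easily reverse the result.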

FAQ

How does Open RAN reduce vendor lock-in in real procurement?

Open RAN reduces lock-in by standardizing interfaces so you can source RU, DU, and CU from different vendors, or swap components during phased upgrades. Still, you must validate interoperability with the exact software releases you plan to deploy and document an acceptance test plan.

Is Open RAN always cheaper than traditional RAN?

Not automatically. Unit costs may drop, but integration labor and testing effort can rise during early waves. ROI becomes favorable when you scale across many sites, reuse integration playbooks, and reduce upgrade rollback risk.

What transport network features matter most for Open RAN?

Latency, jitter, packet loss control, and timing distribution are the main transport sensitivities. Engineers typically enforce QoS and monitor interface counters continuously, then correlate transport metrics with radio KPIs during commissioning.

Do I need specific optics or switch models for Open RAN fronthaul?

You need optics and switches that match your reach, speed, and diagnostic requirements, plus firmware behaviors that cooperate with your monitoring tooling. Validate DOM support and ensure your network design avoids congestion patterns that cause drops.

What is the biggest operational risk during Open RAN rollout?

The biggest risk is inconsistent configuration or insufficient interoperability testing across vendors and software releases. Mitigate it with a staged rollout, automated configuration management, and measurable acceptance criteria per wave.

How do I start an Open RAN pilot without disrupting service?

Start with a limited site set where you can isolate variables: use a controlled transport segment, define timing and QoS baselines, and run a full commissioning workflow before RF expansion. Measure KPIs and interface counters before and after each change, then scale once results are stable.

Open RAN can deliver faster upgrades, stronger sourcing flexibility, and operational repeatability when interfaces are treated as engineering contracts and transport is designed for deterministic behavior. If you want the next step, review Open RAN transport design to build a testable fronthaul and midhaul plan that holds up under real traffic.

Author bio: Dr. Elena Hart is a field-focused network scientist who has led RAN transport validation and timing engineering for disaggregated access networks. She designs measurable acceptance tests that connect interface telemetry to radio KPIs.