Open RAN deployments often fail not because components are weak, but because compatibility assumptions break under real traffic, timing, and environmental stress. This guide helps network engineers, reliability leads, and field teams build a practical compatibility test plan across radio units, distributed units, central units, and transport. You will get a measurable workflow, a specs comparison table, and troubleshooting steps aligned with ISO 9001-style verification and MTBF-aware risk control.

Prerequisites and acceptance criteria for compatibility testing

Before you connect anything, define what “compatible” means in testable terms. In Open RAN, compatibility spans protocol behavior (for example, O-RAN fronthaul mappings), timing alignment, management interfaces, and physical layer optics for fronthaul or midhaul. Treat this like a quality gate: document inputs, verify outputs, and record evidence for auditability.

What you need on day zero

- Inventory with part numbers: vendor, model, revision, and firmware build for RU, DU, CU, and switch/transport gear.

- Reference configs: expected band, numerology, supported split option (for example, functional split), and transport profiles.

- Timing plan: whether the system uses SyncE, 1588 PTP, GNSS, or internal holdover, plus the role (master or slave) of each node.

- Optics and cabling list: transceiver part numbers, wavelength, reach class, and connector type for any optical fronthaul links.

- Test instrumentation: packet capture for control/user plane, BER/optical power checks, and a traffic generator capable of stressing line rates.

Acceptance criteria you can measure

Set pass/fail thresholds that match your operational risk. For example, require no alarm escalation during 24 to 72 hours of continuous traffic, synchronization offsets that stay within a documented tolerance, and repeatable throughput under load. Derive the thresholds from the relevant IEEE standards and vendor datasheets, and document deviations with corrective actions to keep your process ISO 9001 friendly.
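
One way to make such thresholds testable is to encode them as data and evaluate each soak run against them. The sketch below is illustrative only: the class names, field names, and every numeric limit (including the 100 ns offset limit) are placeholder assumptions, not values taken from a standard or a datasheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriteria:
    # All limits are hypothetical placeholders; derive yours from risk analysis.
    min_soak_hours: float = 24.0
    max_abs_sync_offset_ns: float = 100.0
    max_escalated_alarms: int = 0
    min_throughput_ratio: float = 0.95  # measured / expected throughput


@dataclass(frozen=True)
class SoakResult:
    soak_hours: float
    worst_abs_sync_offset_ns: float
    escalated_alarms: int
    throughput_ratio: float


def evaluate(result: SoakResult, criteria: AcceptanceCriteria) -> list[str]:
    """Return the failed criteria; an empty list means the run passed."""
    failures = []
    if result.soak_hours < criteria.min_soak_hours:
        failures.append("soak duration too short")
    if result.worst_abs_sync_offset_ns > criteria.max_abs_sync_offset_ns:
        failures.append("sync offset out of tolerance")
    if result.escalated_alarms > criteria.max_escalated_alarms:
        failures.append("alarm escalation observed")
    if result.throughput_ratio < criteria.min_throughput_ratio:
        failures.append("throughput below target")
    return failures


run = SoakResult(soak_hours=48.0, worst_abs_sync_offset_ns=60.0,
                 escalated_alarms=0, throughput_ratio=0.99)
print(evaluate(run, AcceptanceCriteria()))  # prints []
```

Returning the list of failed criteria, rather than a single boolean, gives the deviation log something concrete to record.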

Primary standards to anchor your expectations

- IEEE 1588 for time synchronization behavior, and IEEE 802.3 for link layer characteristics.

- Vendor Open RAN implementation guides and transceiver compliance notes from module manufacturers.

- [Source: IEEE 802.3] Link layer electrical and optical considerations for Ethernet PHY behavior.

- [Source: IEEE 1588] Timing and synchronization message behavior for networked systems.

Pro Tip: In field tests, “it links at 10 seconds” is not the same as “it stays synchronized at 10 hours.” Many compatibility issues appear only after link renegotiation, PTP re-lock events, or traffic pattern changes that trigger buffer pressure and queue scheduling differences.

Step-by-step implementation guide for Open RAN compatibility

This section is a step-by-step test plan you can run in a lab or controlled field environment. Run the steps in the order given. The plan is designed to catch compatibility gaps early, before you scale capacity or sign off for production operations.

Build a compatibility matrix before cabling

Create a matrix that lists each pairing: RU-to-DU, DU-to-CU, and each transport segment between them. Include firmware versions, timing roles, and any required configuration knobs such as VLAN tagging, MTU, and QoS profiles. Expected outcome: a single document that predicts which combinations are allowed and which are “unknown risk.”

- Sign-off evidence: the test matrix approved with owners, dates, and rollback plans.
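
A minimal sketch of such a matrix, assuming a small inventory and a set of pairings already confirmed by a vendor interoperability list. The model names, firmware builds, and the `validated` set are made up for illustration.

```python
from itertools import product

# Hypothetical inventory entries: (model, firmware build).
rus = [("RU-A", "1.2.0"), ("RU-B", "3.0.1")]
dus = [("DU-X", "5.4"), ("DU-Y", "5.5")]

# Pairings confirmed by a vendor interoperability matrix (invented example).
validated = {(("RU-A", "1.2.0"), ("DU-X", "5.4"))}


def build_matrix(rus, dus, validated):
    """Classify every RU-DU pairing as 'allowed' or 'unknown risk'."""
    matrix = {}
    for ru, du in product(rus, dus):
        matrix[(ru, du)] = "allowed" if (ru, du) in validated else "unknown risk"
    return matrix


matrix = build_matrix(rus, dus, validated)
for (ru, du), status in matrix.items():
    print(f"{ru[0]} {ru[1]} <-> {du[0]} {du[1]}: {status}")
```

The same pattern extends to DU-to-CU pairings and transport segments by adding more inventory lists. Keying on the firmware build as well as the model means an upgrade automatically returns a pairing to "unknown risk" until it is retested.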

Validate timing and transport profiles in isolation

Start with the transport network and timing path only, without launching full radio traffic. Verify SyncE or PTP status, check that the DU and CU agree on timing hierarchy, and confirm link stability on any fronthaul optics. Expected outcome: stable synchronization indicators and no recurring alarms.

- Recommended checks: PTP lock state, SyncE holdover behavior, and Ethernet link error counters.
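
As a rough illustration of what "stable synchronization indicators" can mean in practice, the sketch below summarizes a series of time-offset samples against a tolerance. The sample values and the 50 ns tolerance are assumptions, and how you collect the offsets depends on your equipment.

```python
from statistics import mean


def summarize_offsets(offsets_ns, tolerance_ns):
    """Summarize time-offset samples (nanoseconds) against a tolerance."""
    worst = max(abs(x) for x in offsets_ns)
    violations = sum(1 for x in offsets_ns if abs(x) > tolerance_ns)
    return {
        "mean_ns": mean(offsets_ns),
        "worst_abs_ns": worst,
        "violations": violations,
        "stable": violations == 0,
    }


# Hypothetical samples taken during an isolated timing test.
samples = [12, -8, 15, -20, 9]
summary = summarize_offsets(samples, tolerance_ns=50)
print(summary["worst_abs_ns"], summary["stable"])  # prints 20 True
```

Recording the worst absolute offset alongside the mean matters because a well-centered mean can hide brief excursions.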

Bring up RU and DU with minimum functional load

Power on the RU, connect to the DU, and configure the split option and parameters according to vendor documentation. Use a minimal radio load profile that still exercises control paths and key data flows. Expected outcome: RU-DU functional establishment without repeated resets or inconsistent capability negotiation.

- Measured evidence: successful control-plane session establishment and stable application logs.

Exercise user-plane traffic with realistic load patterns

Run traffic that matches your expected cell load and scheduling behavior. For Open RAN, that means varying packet sizes, bursts, and timing alignment stress. Monitor throughput, jitter, packet loss, and any application-level alarms. Expected outcome: sustained performance with synchronization within defined tolerances.

- Example target: continuous traffic for 24 to 72 hours with stable error counters.
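
"Stable error counters" can be checked mechanically by comparing periodic snapshots. This is a minimal sketch that assumes you already export counters as dictionaries at fixed intervals; the counter names are hypothetical.

```python
def counter_deltas(snapshots):
    """Return per-interval increases for each counter across ordered snapshots."""
    deltas = {}
    for prev, curr in zip(snapshots, snapshots[1:]):
        for name, value in curr.items():
            deltas.setdefault(name, []).append(value - prev[name])
    return deltas


def unstable_counters(snapshots, max_increase_per_interval=0):
    """List counters that grew by more than the allowed amount in any interval."""
    deltas = counter_deltas(snapshots)
    return sorted(
        name for name, steps in deltas.items()
        if max(steps) > max_increase_per_interval
    )


# Hypothetical hourly snapshots from a switch port during the soak.
snapshots = [
    {"crc_errors": 0, "rx_drops": 2},
    {"crc_errors": 0, "rx_drops": 2},
    {"crc_errors": 3, "rx_drops": 2},
]
print(unstable_counters(snapshots))  # prints ['crc_errors']
```

Comparing deltas rather than absolute values keeps pre-existing counts, such as the two drops above, from failing the run.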

Perform optical and environmental verification for transport

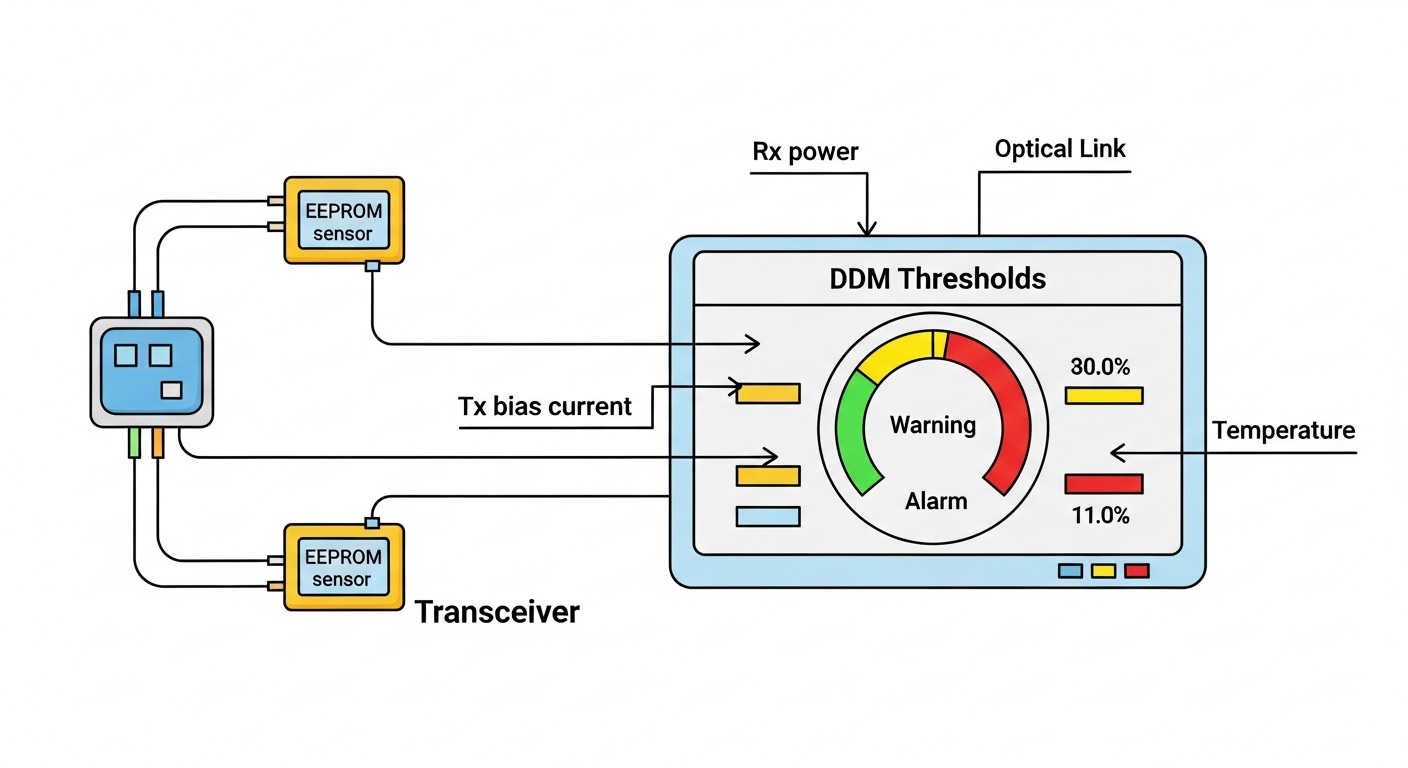

If your compatibility depends on optics, validate wavelength, link budget, and power levels at the actual operating temperature. Confirm transceiver DOM readings if supported, and ensure the switch accepts the module characteristics. Expected outcome: stable optical link with no BER degradation across your temperature range.

- Measured evidence: optical transmit power, receive power, and DOM alarms.
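
The check on DOM readings at different temperatures can be sketched as a simple range test. The limits and readings below are invented for illustration; real limits come from the module datasheet or from the thresholds the module itself reports.

```python
# Hypothetical alarm limits as (low, high) pairs.
LIMITS = {
    "temperature_c": (0.0, 70.0),
    "tx_power_dbm": (-7.0, -1.0),
    "rx_power_dbm": (-10.0, -1.0),
}


def dom_violations(reading, limits=LIMITS):
    """Return the sorted names of DOM fields outside their (low, high) limits."""
    return sorted(
        name for name, (low, high) in limits.items()
        if not low <= reading[name] <= high
    )


# Invented readings at the cold and hot ends of the operating range.
cold = {"temperature_c": 5.0, "tx_power_dbm": -2.5, "rx_power_dbm": -4.0}
hot = {"temperature_c": 68.0, "tx_power_dbm": -3.1, "rx_power_dbm": -10.6}
print(dom_violations(cold))  # prints []
print(dom_violations(hot))   # prints ['rx_power_dbm']
```

Here the hot reading passes on temperature but fails on receive power, a margin problem that a link-up check alone would not reveal.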

Fronthaul transport compatibility: optics, reach, and DOM behavior

Open RAN fronthaul and midhaul commonly rely on Ethernet transport with strict timing requirements. Optical transceivers are often the hidden compatibility gate: mismatched wavelength, insufficient reach, or DOM quirks can cause intermittent link instability. Even when Ethernet “links,” a marginal link budget can drive higher error rates under temperature drift.

Quick comparison table for common 10G SR optical modules

The table below shows typical parameters engineers compare when validating optical compatibility for Ethernet segments. Always confirm exact values in each vendor datasheet and match the switch transceiver support list.

| Module example | Wavelength | Reach | Data rate | Connector | DOM support | Operating temperature |
|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR | 850 nm | ~300 m (OM3 typical) | 10 Gb/s | LC | Yes (vendor-specific) | 0 to 70 °C (typical class) |
| Finisar FTLX8571D3BCL | 850 nm | ~300 m (OM3 typical) | 10 Gb/s | LC | Yes | 0 to 70 °C (typical class) |
| FS.com SFP-10GSR-85 | 850 nm | ~300 m (OM3 typical) | 10 Gb/s | LC | Varies by SKU | 0 to 70 °C (typical class) |

Limits to remember: reach is fiber type dependent (for example, OM3 versus OM4), and connector cleanliness can dominate performance. DOM support matters when switch firmware expects specific diagnostic behavior; some third-party modules can pass basic link but fail DOM polling or alarm thresholds.
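
A simplified link budget calculation shows how reach, patch loss, and connector condition combine into a single margin figure. Every number below is an assumption chosen for the arithmetic, not a datasheet value. The model covers attenuation only, whereas multimode links are also limited by modal bandwidth.

```python
def link_margin_db(tx_power_dbm, rx_sensitivity_dbm, fiber_km,
                   fiber_loss_db_per_km, connectors, loss_per_connector_db):
    """Simplified margin: power budget minus fiber and connector losses."""
    budget = tx_power_dbm - rx_sensitivity_dbm
    losses = fiber_km * fiber_loss_db_per_km + connectors * loss_per_connector_db
    return budget - losses


# Hypothetical values: 200 m of fiber, two mated connector pairs.
margin = link_margin_db(
    tx_power_dbm=-5.0,
    rx_sensitivity_dbm=-10.0,
    fiber_km=0.2,
    fiber_loss_db_per_km=3.0,
    connectors=2,
    loss_per_connector_db=0.5,
)
print(f"{margin:.1f} dB")  # prints 3.4 dB
```

In this example the two connectors account for 1.0 dB of the 1.6 dB total loss, which illustrates why connector cleanliness can dominate on short links.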

Pro Tip: If your switch does strict optical threshold enforcement, a compatible module by wavelength and reach can still be “incompatible” due to different DOM calibration or alarm default thresholds. Validate by reading DOM live during traffic, not just by observing link up.

Selection criteria checklist to prevent compatibility surprises

Use this ordered checklist to reduce rework. It is tuned for Open RAN projects where radio, compute, timing, and transport are all changing at once.

- Distance and fiber type: confirm OM3/OM4, patch loss, and expected link budget margin.

- Data rate and Ethernet profile: ensure the DU and switches support the required line rate and QoS behavior.

- Switch compatibility: verify the transceiver is in the switch vendor compatibility list, or confirm the switch accepts the module via permissive configuration.

- DOM and alarm behavior: confirm monitoring integration in your NMS and that DOM readings do not trigger false alarms.

- Operating temperature and airflow: check module temperature class and verify that the cage environment matches your thermal model.

- Vendor lock-in risk: decide early whether you accept OEM optics pricing or plan validated third-party modules with documented evidence.

- Firmware alignment: ensure RU, DU, and CU builds are within the vendor’s supported interoperability matrix.

For Open RAN specifically, also include split option compatibility and timing hierarchy behavior. A system can pass link and still fail radio session stability if timing offsets exceed tolerances or if PTP message handling differs between vendors.

Common pitfalls and troubleshooting fixes for compatibility

Below are the most frequent failure modes seen in mixed-gear Open RAN trials. Each includes root cause and a practical solution that field teams can apply immediately.

Failure mode 1: Link flaps under traffic bursts

Root cause: marginal optical budget from dirty connectors, underestimated patch loss, or incorrect fiber grade assumption (OM3 vs OM4). Under burst traffic, the system reveals higher error rates that trigger PHY resets. Solution: clean connectors, re-measure receive power, verify link budget margin, and replace with a module SKU validated for the switch and fiber type.

Failure mode 2: RU-DU session establishes, then degrades over time

Root cause: timing instability due to SyncE/PTP role mismatch, clock holdover behavior, or re-lock events after network changes. This can cause drift that radio control loops tolerate briefly but not long term. Solution: confirm PTP domain settings, ensure a single timing master source, and run a 24 to 72 hour soak with recorded synchronization offsets.
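
To quantify re-lock events during a soak, you can count how often the clock returns to a locked state after leaving it. The sketch assumes a time-ordered log of servo states. The labels `LOCKED`, `HOLDOVER`, and `FREERUN` are hypothetical and need mapping to whatever your equipment reports.

```python
def count_relocks(states):
    """Count transitions back into LOCKED after a lock was previously held."""
    relocks = 0
    locked_before = False
    prev = None
    for state in states:
        if state == "LOCKED":
            if locked_before and prev != "LOCKED":
                relocks += 1
            locked_before = True
        prev = state
    return relocks


# Hypothetical state log sampled during a soak test.
log = ["FREERUN", "LOCKED", "LOCKED", "HOLDOVER", "LOCKED",
       "LOCKED", "HOLDOVER", "HOLDOVER", "LOCKED"]
print(count_relocks(log))  # prints 2
```

The initial lock is not counted, so a clean run reports zero and any non-zero value points to an event worth correlating with network changes.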

Failure mode 3: Monitoring shows alarms even though traffic seems fine

Root cause: DOM threshold mismatches or NMS polling expectations that differ between OEM and third-party optics. Some environments treat benign DOM differences as critical, leading to automated remediation that disrupts the network. Solution: compare DOM readings against vendor baselines, adjust alarm thresholds in your monitoring system, and document approved optics SKUs with evidence.

Cost and ROI note: balancing compatibility, MTBF, and total cost

OEM optics and vendor-certified modules usually cost more, but they shorten integration time and lower the chance of opaque compatibility failures. Market pricing varies by speed and contract; in many enterprise and carrier budgets, transceivers range from $50 to $300 per module depending on brand and certification, while failed optics and the labor to diagnose them can dominate TCO during ramp. For ROI, focus on MTBF and mean time to repair: a single module failure during a critical deployment window can cost more than the price difference.

For Open RAN, include compute, power, and operational overhead in TCO. If third-party components require repeated requalification, the “savings” can vanish in engineering hours. A pragmatic approach is staged qualification: start with OEM for the first cells, then introduce validated third-party optics only after DOM, BER, and long-soak evidence meets your acceptance criteria.
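
The trade-off can be made concrete with a rough expected-cost comparison. All inputs below (module counts, requalification hours, hourly rate, failure probabilities, and cost per failure) are invented for the arithmetic. Only the $50 to $300 price range comes from this article.

```python
def expected_cost(unit_price, modules, requal_hours, hourly_rate,
                  failure_prob, cost_per_failure):
    """Purchase cost + requalification labor + expected failure cost."""
    purchase = unit_price * modules
    labor = requal_hours * hourly_rate
    failures = failure_prob * modules * cost_per_failure
    return purchase + labor + failures


# Hypothetical scenario: 100 modules, each failure costing 2000 to resolve.
oem = expected_cost(300, 100, requal_hours=0, hourly_rate=150,
                    failure_prob=0.01, cost_per_failure=2000)
third_party = expected_cost(50, 100, requal_hours=120, hourly_rate=150,
                            failure_prob=0.03, cost_per_failure=2000)
print(round(oem), round(third_party))  # prints 32000 29000
```

With these assumed inputs, a 25,000 purchase saving shrinks to a 3,000 difference once requalification and expected failures are included. Changing any one assumption can reverse the result, which is why measured evidence should replace the guesses.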

FAQ

How do I prove compatibility between RU and DU beyond basic link up?

Run a controlled bring-up and confirm stable session establishment, then apply realistic traffic patterns for at least 24 hours. Capture evidence from logs, alarms, and synchronization status, not just Ethernet link state. If available, validate against the vendor interoperability matrix and repeat the test after any firmware change.

Does compatibility depend on optics even when Open RAN is mostly compute and software?

Yes, because fronthaul and midhaul carry time-sensitive data and control traffic. A marginal optical link can create packet loss, increased latency, and timing instability that only appears under load. Validate optics with live DOM readings and sustained traffic, and confirm fiber reach and link budget margin.

Can third-party transceivers be compatible with Open RAN deployments?

They can, but compatibility is not guaranteed by wavelength and reach alone. You must verify switch acceptance behavior, DOM polling, alarm thresholds, and long-soak BER stability. Use a qualification plan that documents tested SKUs and firmware combinations.

What timing issues most often cause compatibility failures?

Role mismatch for PTP masters, incorrect domain settings, and unexpected holdover behavior after network events are common. These lead to gradual drift or re-lock events that degrade radio stability. Ensure a single authoritative timing source and validate offsets over long durations.

What should we include in our compatibility sign-off report?

Include part numbers and firmware builds, transport topology, timing configuration, test duration, traffic profiles, and measured outcomes such as error counters and synchronization stability. Add a deviation log with corrective actions for any nonconformities. This makes audits and future upgrades much faster.
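
A completeness check for the report can be as small as the sketch below. The field names mirror the items listed in this answer, while the structure and the sample values are assumptions.

```python
REQUIRED_FIELDS = [
    "part_numbers", "firmware_builds", "transport_topology",
    "timing_configuration", "test_duration_hours", "traffic_profiles",
    "measured_outcomes", "deviation_log",
]


def missing_fields(report):
    """Return required fields that are absent from the report."""
    return [field for field in REQUIRED_FIELDS if field not in report]


# Hypothetical draft report with two sections still to be written.
draft = {
    "part_numbers": {"RU": "RU-A", "DU": "DU-X"},
    "firmware_builds": {"RU": "1.2.0", "DU": "5.4"},
    "transport_topology": "RU -> switch -> DU",
    "timing_configuration": {"source": "GNSS", "ptp_domain": 24},
    "test_duration_hours": 72,
    "measured_outcomes": {"crc_errors": 0, "worst_offset_ns": 60},
}
print(missing_fields(draft))  # prints ['traffic_profiles', 'deviation_log']
```

An empty deviation log still counts as present, since a run with no nonconformities should record that fact explicitly.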

How often should we re-run compatibility tests during the project lifecycle?

Re-run tests after any firmware upgrade, major topology change, or optics refresh. For reliability, also re-test during seasonal thermal changes or after maintenance events that impact cabling and airflow. If you use continuous integration for configurations, align test triggers with your change management process.

Open RAN compatibility is achievable when you treat it like a controlled reliability program: define measurable acceptance criteria, validate timing and transport in isolation, then run long soak tests under realistic load. Next, use Open RAN reliability and MTBF planning to build an evidence-backed upgrade and maintenance strategy that protects uptime during scale-out.

Author bio: I have hands-on experience validating Open RAN and Ethernet transport in production-like labs, including optics DOM verification and 72-hour traffic soak testing. As a reliability engineer, I design compatibility gates that reduce MTTR and keep deployment risk measurable and auditable.