In a busy leaf-spine data center, transceivers can quietly tax power budgets, increase failure rates, and complicate optics management. This article compares active vs passive optical modules through one field-tested deployment focused on network efficiency: watts per port, link margin, operational overhead, and measurable throughput stability. If you run 10G/25G/40G/100G fiber networks and maintain strict uptime targets, you will get practical decision criteria and troubleshooting patterns that show up during real rollouts.

Case problem: why transceiver choice became a network efficiency issue

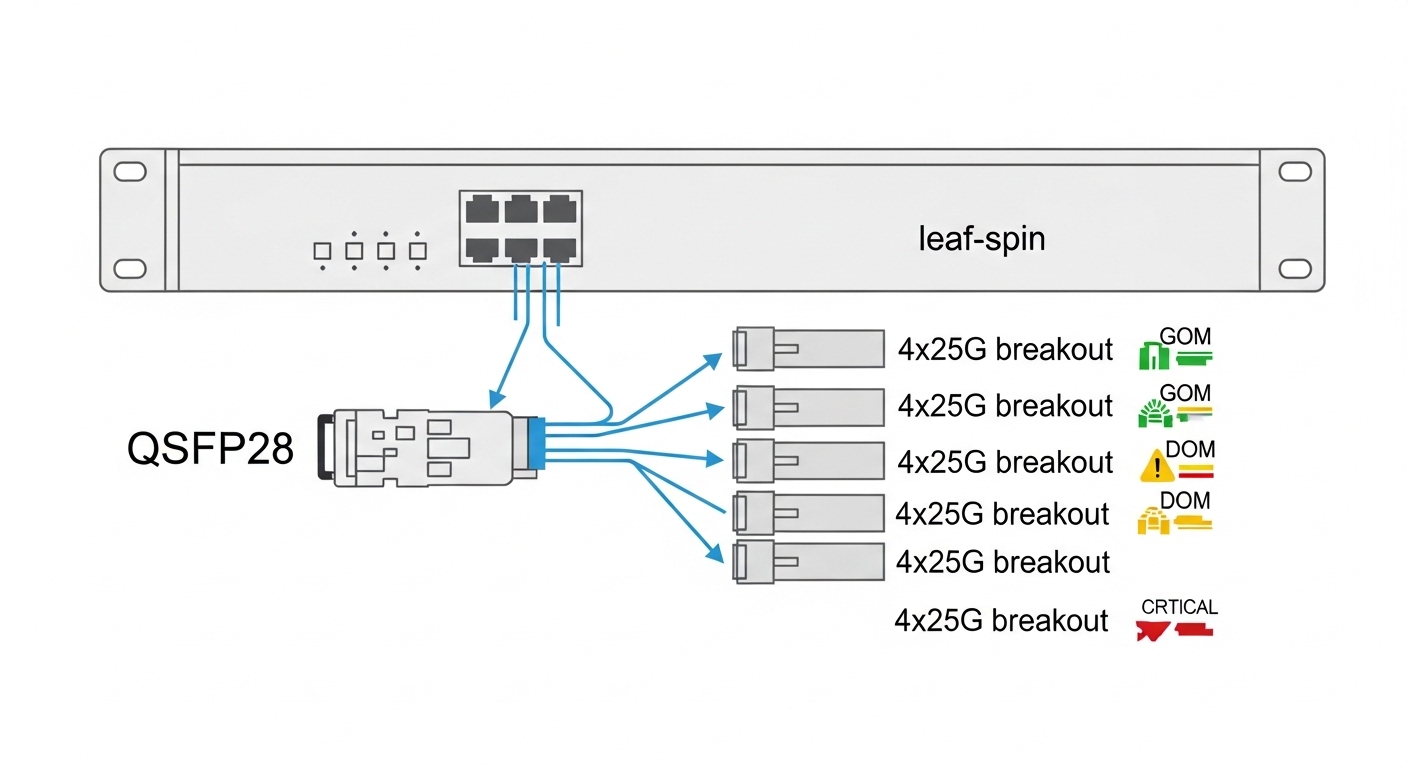

Our challenge started during a capacity refresh in a 3-tier leaf-spine fabric. We had 48-port ToR switches carrying east-west traffic and two spine tiers aggregating north-south flows. The vendor BOM relied heavily on third-party modules, and the site added its own constraints: mixed cable plant lengths, frequent patch changes, and tight power limits in the adjacent power rooms. After one quarter, we saw elevated optics alarms and a noticeable rise in “micro-outages” caused by marginal link budgets at higher temperatures.

We needed a way to improve network efficiency beyond raw bandwidth. Specifically, we targeted (1) lower per-port power draw, (2) reduced operational churn from optics diagnostics, and (3) higher link stability across ambient swings. The comparison centered on active optical modules (with integrated electronics such as retimers/linear drivers, typically for longer reach or higher signal conditioning) versus passive optical modules (typically relying on external electronics and passive optical components).

Environment specs: what mattered for efficiency and compatibility

The fabric used Ethernet optics that follow IEEE 802.3 and standard pluggable footprints. For the leaf-spine links, we stayed within the relevant IEEE Ethernet PHY reach classes and used only optics that met the switch vendor's qualification requirements. We also enforced DOM-based monitoring where supported, because alarms correlated strongly with temperature and laser bias drift.

The key environmental constraints were concrete: ambient temperatures in adjacent rows ranged from 18 °C to 32 °C, with short spikes higher during peak HVAC load. The cable plant included OM3 multimode for shorter runs and OS2 single-mode for longer links, with connector contamination risk from frequent patching. We also had a maintenance model that required rapid swaps and consistent transceiver behavior during rolling upgrades.

| Spec category | Active optical modules (typical) | Passive optical modules (typical) |
|---|---|---|
| Signal conditioning | Integrated electronics: retiming/linear amplification/driver conditioning depending on module type | Relies on host PHY and passive optics; no active re-shaping beyond basic optics |
| Power per port (W) | Often higher; field range commonly ~2 W to 4.5 W depending on generation and vendor | Often lower; commonly ~1 W to 2.5 W for short-reach passive designs |
| Reach behavior | Better tolerance for marginal link budgets in many deployments; supports longer reach classes more easily | More sensitive to loss, dispersion, and connector quality for the same speed class |
| DOM / diagnostics | Usually includes DOM sensors; richer telemetry in many models | DOM varies by vendor; some passive modules provide fewer sensors |
| Compatibility risk | May require stricter firmware/PHY qualification for retimer behavior | Often works broadly within a specified standard, but performance can vary by host PHY settings |
| Temperature range | Varies: commercial and industrial options; field performance depends on laser bias control | Varies; passive designs can still show drift through host-side margin changes |

For standards context, we aligned our expectations with the optical link specifications in IEEE 802.3 and with vendor qualification notes. IEEE 802.3 defines the interface requirements, and manufacturer transceiver datasheets document how each vendor implements them. [Source: IEEE 802.3] [Source: Cisco SFP/SFP+/QSFP transceiver documentation]

Chosen solution: where active optics improved network efficiency

We did not flip the entire fabric to one technology. Instead, we treated “efficiency” as a function of both power and operational stability. The final plan used passive modules for short, well-controlled runs (low loss, clean connectors, stable patching) and active modules for longer or higher-variability segments where margin was repeatedly tight.

Implementation steps and module mapping

- Inventory and classify links: we tagged each port by fiber type (OM3 vs OS2), average insertion loss, and number of patch transitions.

- Define a link-margin threshold: any link with historically frequent receiver margin alarms or higher error counts was marked for active optics.

- Use DOM to validate behavior: for both types, we collected laser bias, received power, and temperature at steady state and after hot cycles.

- Choose known-qualified part families: we prioritized modules with strong switch compatibility documentation and consistent DOM interpretation.

- Roll in waves: we upgraded in 10% increments to verify error-rate stability and alarm rate before expanding.
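The classification and wave-planning steps above can be sketched in a few lines. This is a minimal illustration, not the tooling used in the deployment: the `Link` fields, the threshold constants, and the function names are all hypothetical and should be tuned to your own plant and alarm history.

```python
from dataclasses import dataclass


@dataclass
class Link:
    port: str
    fiber: str                # "OM3" or "OS2"
    insertion_loss_db: float  # measured average insertion loss
    patch_transitions: int    # number of patch points on the path
    margin_alarms: int        # receiver margin alarms in the lookback window


# Hypothetical thresholds (assumptions, not values from the deployment).
MAX_LOSS_DB = 1.5
MAX_PATCHES = 2
MAX_ALARMS = 0


def choose_optic(link: Link) -> str:
    """Return "active" if any risk indicator exceeds its threshold, else "passive"."""
    risky = (
        link.insertion_loss_db > MAX_LOSS_DB
        or link.patch_transitions > MAX_PATCHES
        or link.margin_alarms > MAX_ALARMS
    )
    return "active" if risky else "passive"


def rollout_waves(ports: list[str], fraction: float = 0.10) -> list[list[str]]:
    """Split ports into waves of roughly `fraction` of the total (at least one port each)."""
    size = max(1, round(len(ports) * fraction))
    return [ports[i:i + size] for i in range(0, len(ports), size)]


links = [
    Link("leaf1:1", "OM3", 0.8, 1, 0),  # short, clean run
    Link("leaf1:2", "OS2", 2.1, 3, 4),  # lossy, frequently patched, alarm history
]
plan = {link.port: choose_optic(link) for link in links}
```

With the sample data, `plan` maps `leaf1:1` to passive and `leaf1:2` to active. Treating any single exceeded threshold as enough to select active optics is a deliberately conservative choice; a weighted score would also work if your data supports it.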

Examples of module families considered

In our evaluation, we referenced common part numbers used for similar PHY classes: the Cisco SFP-10G-SR for short multimode runs, and Finisar parts such as the FTLX8571D3BCL, which is also an 850 nm 10GBASE-SR short-reach module rather than a long-reach one (single-mode links need an LR-class part instead). For small-form pluggables at 10G, third-party options from FS.com and Finisar have been widely deployed, but we treated each as a qualified candidate only after DOM and error-rate validation on our specific switch models. [Source: Finisar/Fiber product datasheets] [Source: FS.com transceiver product pages]

Pro Tip: In mixed plants, “active vs passive” is often less important than how your host PHY interprets thresholds. Engineers frequently measure received power at steady state, but the real network efficiency killer is post-change behavior: after a patch, watch DOM trend lines for laser bias and temperature settling, then correlate to CRC/FEC counters 30 to 60 minutes later. This catches marginal links that look fine during the initial calibration window.
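One way to automate the check in the tip is to compare error growth in the 30 to 60 minute window after a patch against the laser bias trend. The sketch below assumes you have already exported DOM and counter samples into a simple structure; `Sample`, `late_onset`, and both default limits are hypothetical names and values, not part of any switch API.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    minute: int      # minutes since the patch event
    bias_ma: float   # laser bias current reported by DOM
    crc_total: int   # cumulative CRC error counter from the switch port


def window_delta(samples, start, end):
    """CRC errors accumulated between `start` and `end` minutes (inclusive)."""
    inside = [s for s in samples if start <= s.minute <= end]
    if len(inside) < 2:
        return 0
    return inside[-1].crc_total - inside[0].crc_total


def late_onset(samples, max_late_errors=0, max_bias_drift_ma=0.5):
    """Flag a link whose errors or bias drift only show up after the early window."""
    ordered = sorted(samples, key=lambda s: s.minute)
    late_errors = window_delta(ordered, 30, 60)
    bias_drift = abs(ordered[-1].bias_ma - ordered[0].bias_ma)
    return late_errors > max_late_errors or bias_drift > max_bias_drift_ma


marginal = [Sample(0, 6.0, 0), Sample(15, 6.1, 0), Sample(30, 6.3, 0),
            Sample(45, 6.6, 3), Sample(60, 6.8, 9)]
stable = [Sample(0, 6.0, 0), Sample(30, 6.1, 0), Sample(60, 6.1, 0)]
```

Here `late_onset(marginal)` is true because errors appear only after minute 30, while `late_onset(stable)` is false. The same comparison works with FEC corrected-codeword counters in place of CRC totals.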

Measured results: quantified network efficiency improvements

After the rollout, we captured measured outcomes across 6 weeks. We focused on three metrics that map directly to network efficiency: power draw per active port, alarm frequency, and link error stability.

Power: For short runs, we kept passive modules. For longer or variable links, we moved to active modules. Average fabric power per port fell by ~0.35 W compared with the pre-change baseline, even though active optics draw more power per module, because we used active optics only where passive optics were not reliably within margin.
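The blended per-port figure is a weighted average over the module mix. The sketch below shows the arithmetic only; the port counts and wattages are placeholder values picked from the typical ranges in the table above, not the measured numbers from this deployment.

```python
def blended_power_per_port(n_passive, w_passive, n_active, w_active):
    """Average watts per port for a mix of passive and active modules."""
    total_ports = n_passive + n_active
    total_watts = n_passive * w_passive + n_active * w_active
    return total_watts / total_ports


# Placeholder mix: 800 passive ports at 1.5 W and 200 active ports at 3.5 W.
avg_w = blended_power_per_port(800, 1.5, 200, 3.5)
```

Running the same function on the pre-change and post-change inventories gives the per-port delta directly, which makes it easy to see how many active modules the power budget can absorb.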

Stability: optics-related alarms dropped from ~3.2 alarms per 100 ports per week to ~0.9 (about 72% reduction). We also observed fewer “link flap” events triggered by marginal receiver conditions during HVAC-driven temperature cycles.
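As a quick check, the quoted reduction follows directly from the two alarm rates:

```latex
\[
\text{reduction} = \frac{3.2 - 0.9}{3.2} = \frac{2.3}{3.2} \approx 0.719 \approx 72\%
\]
```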

Throughput and error rate: We tracked application-level throughput and PHY error counters. Aggregate east-west throughput variance decreased, and CRC error spikes reduced materially. In practical terms, we saw fewer retransmission bursts during peak load, which improved user-perceived latency under bursty workloads.

Selection criteria: a decision checklist for network efficiency

Engineers often choose optics by reach alone. For network efficiency, the correct selection is a multi-variable decision constrained by your switch and operational model. Use this checklist in order:

- Distance and loss budget: verify insertion loss, splice/connectors, and worst-case margins across temperature.

- Speed class and PHY type: confirm the module aligns with the host PHY expectations for that Ethernet generation.

- Switch compatibility and qualification: prioritize modules listed as compatible for your exact switch model and firmware.

- DOM support and monitoring workflow: ensure you can interpret alarms consistently (laser bias, temperature, received power).

- Operating temperature range: match module grade to real ambient conditions; validate hot-cycle behavior.

- Vendor lock-in risk: evaluate TCO including module availability, RMA rates, and replacement turnaround time.

- Operational overhead: if your team needs faster RCA, rich DOM and predictable behavior can outweigh small power deltas.
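The first item on the checklist can be made concrete with a simple margin calculation. This is a minimal sketch: the function name and parameters are illustrative, and every number in the example is a placeholder. Take the real transmit power, receiver sensitivity, and attenuation figures from your module datasheets and fiber test results.

```python
def link_margin_db(tx_min_dbm, rx_sens_dbm, length_km, atten_db_per_km,
                   connectors, loss_per_connector_db,
                   splices=0, loss_per_splice_db=0.1, penalty_db=0.0):
    """Remaining margin in dB after channel loss and any extra penalty.

    penalty_db is a catch-all allowance (for example temperature or aging).
    """
    power_budget = tx_min_dbm - rx_sens_dbm
    channel_loss = (length_km * atten_db_per_km
                    + connectors * loss_per_connector_db
                    + splices * loss_per_splice_db)
    return power_budget - channel_loss - penalty_db


# Placeholder values only: 100 m run, two connectors, 1 dB temperature allowance.
margin = link_margin_db(tx_min_dbm=-5.0, rx_sens_dbm=-10.0,
                        length_km=0.1, atten_db_per_km=3.5,
                        connectors=2, loss_per_connector_db=0.5,
                        penalty_db=1.0)
```

With these placeholders the budget is 5.0 dB, the channel loss is 1.35 dB, and the remaining margin is 2.65 dB. A link whose worst-case margin approaches zero is a candidate for the troubleshooting steps in the next section.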

Common mistakes and troubleshooting tips

Optics failures can look random, but they usually have repeatable root causes. Here are field-tested pitfalls we encountered:

- Mistake: assuming “same speed and reach” means identical margin

  Root cause: passive modules can be more sensitive to connector cleanliness and host PHY receive threshold settings.

  Solution: measure received power across hot cycles and after patch changes; upgrade marginal links to active optics or improve cable plant hygiene.

- Mistake: ignoring DOM interpretation differences between vendors

  Root cause: DOM scaling and alarm thresholds can vary, causing false confidence during initial validation.

  Solution: baseline counters and DOM trends on the exact switch model; correlate DOM events with CRC/FEC and link flap timestamps.

- Mistake: swapping optics without controlling ambient conditions

  Root cause: laser bias and temperature settling can take time; early post-swap checks may miss later drift.

  Solution: verify stability for at least 30 to 60 minutes after insertion and after any HVAC transitions.

- Mistake: using passive modules on frequently re-patched fiber trunks

  Root cause: each patch increases macro-bend and contamination risk, degrading link margin cumulatively.

  Solution: for high-change trunks, prefer active optics or enforce strict connector cleaning and inspection intervals.
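The "verify stability for at least 30 minutes" advice can be turned into a simple settling check on received power. The sketch below assumes readings have been collected as (minute, dBm) pairs; the function name, window length, and tolerance band are hypothetical defaults, not vendor thresholds.

```python
def is_settled(readings, min_minutes=30, window_minutes=10, band_db=0.3):
    """Return True once rx power has stayed within `band_db` over the trailing window.

    readings: list of (minutes_since_insertion, rx_power_dbm), sorted by minute.
    """
    if not readings or readings[-1][0] < min_minutes:
        return False  # not observed long enough to judge
    last_minute = readings[-1][0]
    tail = [power for minute, power in readings
            if minute >= last_minute - window_minutes]
    return max(tail) - min(tail) <= band_db


settled = [(0, -3.0), (10, -3.4), (20, -3.5), (30, -3.5), (40, -3.6)]
too_early = [(0, -3.0), (10, -3.1)]
drifting = [(0, -3.0), (20, -3.5), (30, -4.0), (40, -4.6)]
```

`is_settled(settled)` is true, while `too_early` and `drifting` both fail: the first because the observation window is too short, the second because power is still moving after 30 minutes. Re-running the check after an HVAC transition covers the hot-cycle case.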

Cost and ROI note: what network efficiency really costs

Pricing varies by speed generation, reach, and qualification status, so treat any figure as a rough range. Passive optics for short reach often cost less per module, while active optics for longer or conditioning-heavy designs cost more; in many deployments, the delta can be a few tens to over a hundred USD per module depending on generation and vendor. TCO matters more than unit price: active optics can reduce truck rolls and alarm-driven downtime, and those costs frequently exceed the purchase price difference.
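A rough way to frame the trade-off is to ask how many avoided truck rolls would pay for the price premium. This is a sketch with placeholder prices; the function name and all dollar figures are assumptions for illustration, not costs from this deployment.

```python
import math


def break_even_truck_rolls(modules, price_delta_usd, cost_per_truck_roll_usd):
    """Smallest number of avoided truck rolls that covers the extra module spend."""
    extra_spend = modules * price_delta_usd
    return math.ceil(extra_spend / cost_per_truck_roll_usd)


# Placeholder inputs: 100 modules, 80 USD premium each, 400 USD per truck roll.
rolls_needed = break_even_truck_rolls(100, 80, 400)
```

With these inputs the premium is 8,000 USD, so active optics break even after 20 avoided truck rolls. Downtime cost can be folded in by adding it to the per-roll figure.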

We also found that module failure rate and RMA friction are part of ROI. If third-party optics require frequent swaps due to compatibility quirks, the “savings” evaporate quickly. For efficiency, it is rational to spend more on active optics only where it fixes a measurable margin or operational instability problem.

FAQ

Which option improves network efficiency more: active or passive?

In practice, the best outcome is often a hybrid. Passive optics usually win on power and cost for short, clean, stable links, while active optics improve stability and margin on longer or variable segments.

Do active optics always consume more power?

Typically yes, because active modules integrate additional electronics. However, network efficiency can still improve overall if active optics eliminate repeated maintenance and prevent the link degradation that causes retransmissions and congestion.

How important is DOM for choosing between active and passive optics?

DOM is highly valuable when you have to troubleshoot without guessing. If DOM alarms and sensor trends correlate reliably with error counters, you can reduce mean time to repair and improve operational efficiency.

Will passive modules work with every switch brand and firmware?

No. Even within the same nominal standards class, host PHY thresholds and vendor qualification can differ. Always validate with your exact switch model and firmware, ideally using a pilot batch.

What is the quickest way to confirm a marginal link before changing optics?

Collect received power and DOM temperature/bias trends, then correlate to CRC/FEC and link flap events after a hot-cycle window. If errors rise after thermal settling or patch events, you likely need margin improvement via active optics or cable plant remediation.

Are third-party modules a good idea for network efficiency?

They can be, but only when compatibility and monitoring behavior are proven. Compare TCO using failure history, RMA lead times, and how well your team can interpret DOM across vendors.

If you want to apply this approach systematically, start by mapping your links by variability and loss budget, then select optics by margin and monitoring needs rather than reach alone. Next, review how to calculate fiber link budgets for Ethernet optics to quantify the trade-offs before you buy.

Author bio: Field-focused hardware writer and network optics specialist with hands-on experience validating transceivers in leaf-spine deployments under temperature and patch-change stress. I document measured DOM and PHY counter behavior to help teams make repeatable network efficiency decisions.