In multi-cloud ecosystems, one wrong optical choice can strand production traffic during peak change windows. This article helps network engineers, architects, and field ops teams select the right fiber optics and transceivers for leaf-spine and campus links across vendors. You will get a practical Top 8 list, a spec comparison table, a decision checklist, troubleshooting pitfalls, and a realistic cost and ROI view.

10G SR over OM3: the workhorse for dense leaf-spine links

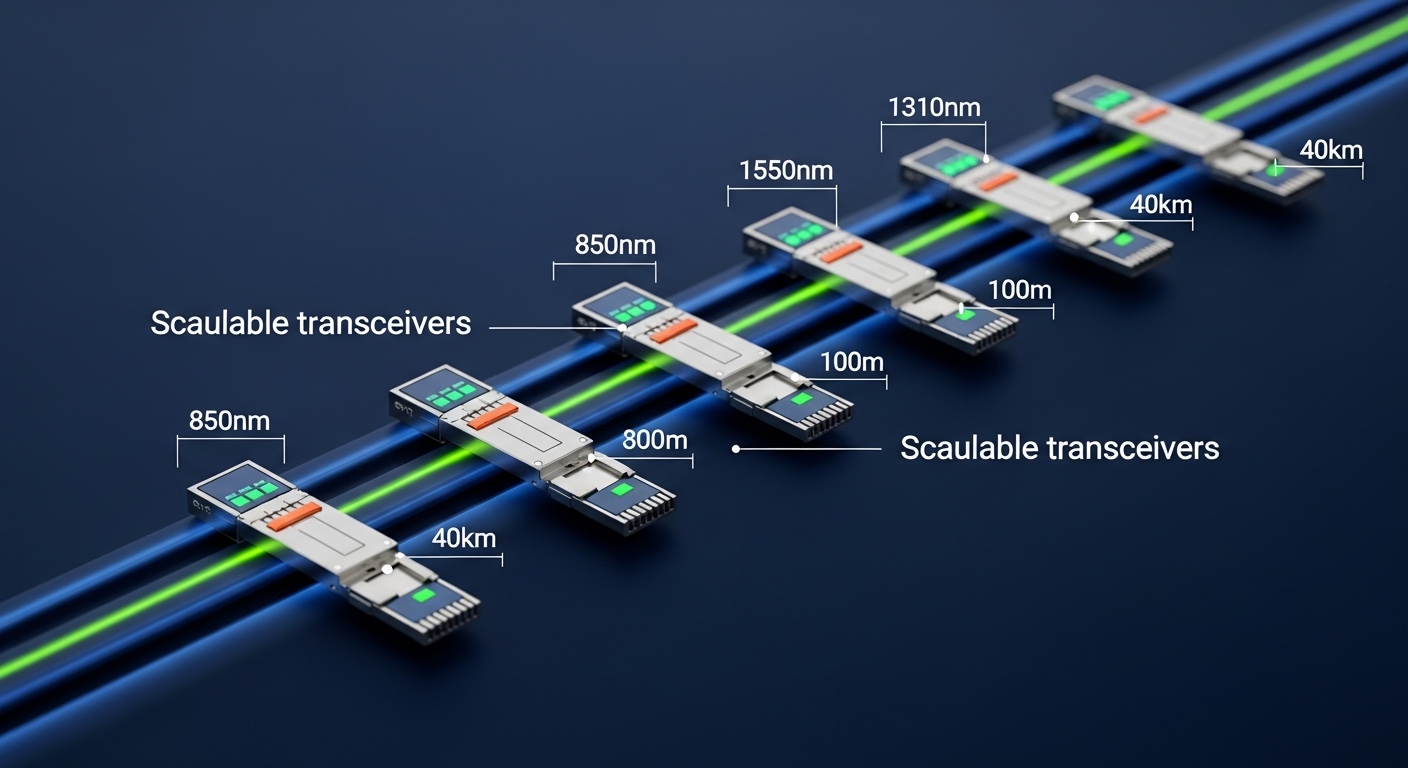

For many multi-cloud ecosystems, 10G SR over multimode fiber is the default because it is inexpensive, widely supported, and fast to deploy. SR modules use 850 nm VCSEL transmitters and typically target short-reach data center runs where patching and spares matter more than long distance. In practice, SR optics pair naturally with 10GBASE-SR compliant switch ports that expect standard electrical characteristics (per IEEE 802.3) and standard optical power budgets.

Key specs to check: data rate (10.3125 Gbps nominal for 10G Ethernet), wavelength (850 nm), reach on OM3/OM4, connector type (LC duplex is common), and DOM support (Digital Optical Monitoring). Typical DOM implementations expose module temperature, supply voltage, laser bias current, and received power.

Best-fit scenario: In a leaf-spine data center with 48-port 10G ToR switches and 2x 10G uplinks per server NIC, you may run 120 to 220 meters on OM3 between ToR and the aggregation (spine) layer, which stays inside the roughly 300 m that 10G SR supports on OM3. SR reduces cost per port and keeps spares manageable across racks and pods.

- Pros: low cost, broad compatibility, quick field swap, strong vendor availability

- Cons: limited distance vs LR/ER, multimode plant quality matters, higher modal effects on older OM3

10G LR over OS2: when you need budget-friendly reach

When multi-cloud ecosystems expand into inter-building connectivity or longer spine runs, 10G LR over single-mode OS2 becomes a pragmatic upgrade. LR uses 1310 nm optics and is specified for up to 10 km (10GBASE-LR in IEEE 802.3). Because single-mode fiber carries only one mode, OS2 does not suffer the modal dispersion that limits multimode reach, which simplifies long-run reliability.

Key specs to check: wavelength (1310 nm), reach (often 10 km class), transmitter power and receiver sensitivity ranges, and whether the vendor provides calibrated DOM thresholds. Also verify that the switch supports SFP+ or other form factor and that the module is on the switch vendor’s compatibility list when required.

Best-fit scenario: A hybrid multi-cloud environment may link a campus edge router to a regional core switch over 8 km of OS2 fiber. LR keeps the existing optics and fiber in service while the organization stages a future 25G/100G migration.

- Pros: strong reach, OS2 stability, common across vendors

- Cons: OS2 fiber and splicing costs, higher module cost than SR, careful cleaning needed for OS2 LC endfaces
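The transmitter power and receiver sensitivity check described above comes down to simple arithmetic. The following is a minimal sketch, not a vendor method: the function name, the per-connector and per-splice losses, and the power figures in the example are illustrative assumptions that should be replaced with values from the module datasheet and the measured fiber plant.

```python
def link_margin_db(tx_min_dbm, rx_sens_dbm, fiber_km, atten_db_per_km,
                   connectors, loss_per_connector_db,
                   splices=0, loss_per_splice_db=0.1):
    """Return the remaining optical margin in dB (positive means the link closes)."""
    # Power budget: worst-case launch power minus worst-case receiver sensitivity.
    power_budget = tx_min_dbm - rx_sens_dbm
    # Plant loss: fiber attenuation plus every connector and splice in the path.
    plant_loss = (fiber_km * atten_db_per_km
                  + connectors * loss_per_connector_db
                  + splices * loss_per_splice_db)
    return power_budget - plant_loss


# Illustrative (assumed) numbers for an 8 km OS2 run with 4 connectors and 2 splices.
margin = link_margin_db(tx_min_dbm=-8.2, rx_sens_dbm=-14.4, fiber_km=8,
                        atten_db_per_km=0.4, connectors=4,
                        loss_per_connector_db=0.5, splices=2)
print(f"margin: {margin:.1f} dB")
```

With these assumed inputs the margin is under 1 dB, which is a reminder that a link inside the nominal 10 km class can still be tight once connectors and splices are counted. The sketch ignores dispersion and aging penalties.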

25G SR over OM4: modernizing without redesigning the plant

As multi-cloud ecosystems adopt higher east-west throughput, 25G SR over OM4 is a frequent stepping stone. It leverages 850 nm optics but with higher signaling rates, which means you must validate your multimode plant performance rather than assuming “it worked at 10G.” Field teams often run link validation by checking fiber attenuation, connector loss, and patch cord quality.

Key specs to check: data rate (25.78125 Gbps class), wavelength (850 nm), reach target (commonly 100 m on OM4 for 25G SR), and whether the module uses a form factor your switches support (often SFP28). DOM is strongly recommended for proactive monitoring and faster incident triage.

Best-fit scenario: In a Kubernetes-heavy multi-cloud environment, you might upgrade a ToR from 10G to 25G while keeping the existing OM4 trunk. If your average patch-to-patch distance is 60 to 90 meters, 25G SR avoids immediate OS2 conversion.

- Pros: better density, lower migration friction, good performance for typical data center distances

- Cons: multimode plant must be clean and validated, not all OM3 supports 25G SR reliably
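To see why a plant that "worked at 10G" may not pass at 25G, it helps to compare the same estimated channel loss against two different limits. The sketch below assumes insertion-loss limits of 2.6 dB for 10G SR and 1.9 dB for 25G SR, a fiber attenuation of 3.0 dB/km at 850 nm, and 0.5 dB per mated connector pair; all of these are placeholder assumptions to confirm against the standard, the datasheet, and your own measurements.

```python
# Assumed channel insertion loss limits in dB (confirm before relying on them).
LOSS_LIMIT_DB = {"10G-SR": 2.6, "25G-SR": 1.9}


def channel_loss_db(length_m, connector_pairs,
                    loss_per_pair_db=0.5, atten_db_per_km=3.0):
    """Estimate end-to-end loss: fiber attenuation plus mated connector pairs."""
    return length_m / 1000 * atten_db_per_km + connector_pairs * loss_per_pair_db


def passes(rate, length_m, connector_pairs, **kwargs):
    """True if the estimated loss fits within the assumed limit for this rate."""
    return channel_loss_db(length_m, connector_pairs, **kwargs) <= LOSS_LIMIT_DB[rate]


# A 90 m channel with four connector pairs: fine at 10G, over budget at 25G.
print(passes("10G-SR", 90, 4), passes("25G-SR", 90, 4))
# The same length with two low-loss pairs fits the 25G limit.
print(passes("25G-SR", 90, 2, loss_per_pair_db=0.3))
```

In this sketch the connectors, not the fiber length, consume most of the budget, which matches the emphasis on patch cord quality and cleanliness.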

25G LR over OS2: a bridge for longer rack-to-core runs

25G LR over OS2 supports longer reach at 25G rates, making it suitable for multi-cloud ecosystems that span multiple buildings, floors, or zones. Using 1310 nm optics, LR modules often target around 10 km depending on class and vendor design. In practice, this is a strong choice for aggregation links where patching discipline is good and you want fewer spares across distance tiers.

Key specs to check: reach class, optical budget (tx power and rx sensitivity), and whether your switch expects 25G Ethernet electrical compliance for SFP28. If you plan to mix vendor optics, confirm that DOM alarms and thresholds are consistent enough for your monitoring stack.

Best-fit scenario: A multi-cloud SaaS provider might connect a regional DR site to a main core with 6 to 7 km OS2. 25G LR provides headroom without rushing to 40G/100G optics.

- Pros: longer reach at higher throughput, stable OS2 behavior, fewer distance tiers

- Cons: cost and lead time vs SR, OS2 cleaning and connector inspection remain mandatory

40G QSFP+ SR4: when you must stay on 40G aggregation

Some multi-cloud ecosystems still run 40G aggregation in the real world, especially where refresh cycles are staggered. 40G SR4 uses 850 nm multimode optics with four 10G lanes aggregated into a single link. It is deployed in QSFP+ ports and normally uses an MPO/MTP-12 connector with parallel fiber (four transmit and four receive fibers), not LC duplex; LC duplex at 40G over multimode applies to BiDi or SWDM variants, which are different modules.

Key specs to check: wavelength (850 nm), reach (varies by OM3/OM4 and vendor class), and lane behavior (since a single lane issue can degrade the link). DOM support is valuable because it can help identify whether the fault is transmit-side, receive-side, or temperature-related.

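Best-fit scenario: In a mixed fleet where core switches are being replaced later, you may need 40G from aggregation to spine for 150 to 300 meters on OM3/OM4. Standard 40GBASE-SR4 is specified for about 100 m on OM3 and 150 m on OM4, so runs in this range generally need an extended-reach SR4 variant (often rated around 300 m on OM3 and 400 m on OM4); check the datasheet before committing. Either way, SR4-class optics can buy time for a future 100G transition.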

- Pros: leverages existing 40G chassis investments, good performance in short distances

- Cons: lane-level troubleshooting complexity, multimode plant quality becomes critical
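Because a single weak lane can degrade the whole link, per-lane receive power from DOM is worth comparing lane against lane. The sketch below is a hypothetical helper, not a switch API: it takes four receive-power readings in dBm however you collect them, and the 2 dB deviation and -9.5 dBm floor are assumed thresholds to tune for your modules.

```python
from statistics import median


def suspect_lanes(rx_dbm, max_delta_db=2.0, floor_dbm=-9.5):
    """Return indexes of lanes that are far below the median or under the floor."""
    mid = median(rx_dbm)
    return [lane for lane, power in enumerate(rx_dbm)
            if power < floor_dbm or mid - power > max_delta_db]


# Lane 2 reads several dB below its neighbours, pointing at one fiber or one laser.
print(suspect_lanes([-2.1, -2.4, -6.8, -2.0]))
# All lanes uniformly low suggests a shared cause such as a dirty MPO endface.
print(suspect_lanes([-10.1, -10.3, -10.0, -10.2]))
```

A single outlier usually points at one fiber position or one transmitter, while a uniform drop across lanes points at something shared by all of them.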

100G SR4/DR4: high-density east-west throughput with careful budget math

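For multi-cloud ecosystems pushing heavy east-west traffic, 100G SR4 or 100G DR4 can deliver large throughput without redesigning the entire fabric. SR4 typically uses 850 nm multimode and DR4 uses 1310 nm single-mode. Vendors use "DR" labels loosely at 100G: IEEE 100GBASE-DR is a single 100G PAM4 lane, while four 25G lanes over parallel single-mode fiber are usually sold as PSM4, so confirm which one a part number refers to. The critical part is that 100G optics are less forgiving of excess loss, so you must validate link budgets and connector cleanliness.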

Key specs to check: form factor (QSFP28 or CFP depending on platform), lane count (4 lanes at 25G each), wavelength (850 nm or 1310 nm), connector type (parallel optics such as SR4 use MPO rather than LC), and DOM and alarm thresholds. Also verify how your switch handles “DOM present but out-of-range” events, because some platforms may log the alarm but keep forwarding traffic.

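Best-fit scenario: A leaf-spine fabric with 100G uplinks from ToR to spine may run 70 to 120 meters on OM4 for SR4. Standard 100G SR4 is specified for about 100 m on OM4 (70 m on OM3), so the longest runs in that range need an extended-reach module or a move to single-mode. If your cabling discipline is strong and your patch loss is low, SR4 can be cost-effective.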

- Pros: massive bandwidth per port, strong for data center topologies

- Cons: higher sensitivity to fiber loss, more expensive optics, more complex incident response

100G LR4: long-reach backbone without stepping into 400G complexity

When multi-cloud ecosystems require long reach at high speed, 100G LR4 over OS2 is a common solution. LR4 multiplexes four 25G lanes on separate wavelengths between roughly 1295 nm and 1310 nm (a LAN-WDM plan with about 800 GHz between lanes) and carries them over one duplex fiber pair as a 100G link. This option is popular for spine-to-core or site-to-site segments where you want to keep the migration path straightforward.

Key specs to check: wavelength plan (ITU-T grid compliance), maximum reach class (10 km for LR4; 40 km modules belong to the ER4 class), and whether your optics are specified for your fiber type and link budget. Some vendors provide stronger DOM telemetry and more consistent alarm behavior, which matters for automated monitoring and incident triage.

Best-fit scenario: A multi-cloud provider might connect two data halls over 15 km of OS2 and want to avoid intermediate regenerators. That distance is beyond the 10 km LR4 class, so it calls for a 40 km class (ER4) module; for spans up to 10 km, LR4 fits directly and stays within typical “transceiver-to-transceiver” reach classes.

- Pros: long reach, mature ecosystem support, scalable for backbone upgrades

- Cons: cost, wavelength plan compatibility, fiber and splice quality still matters
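The wavelength plan is easier to reason about in frequency than in nanometers. The sketch below converts four nominal LR4 lane centers to frequency and prints the gap between adjacent lanes. The lane values are an assumption taken from commonly published 100GBASE-LR4 figures; confirm them against IEEE 802.3 or the module datasheet.

```python
C_NM_THZ = 299_792.458  # speed of light expressed in nm * THz

# Nominal lane center wavelengths in nm (assumed; verify against the datasheet).
LR4_LANES_NM = [1295.56, 1300.05, 1304.58, 1309.14]


def to_thz(wavelength_nm):
    """Convert a vacuum wavelength in nm to an optical frequency in THz."""
    return C_NM_THZ / wavelength_nm


def lane_spacings_ghz(lanes_nm):
    """Frequency gap in GHz between each pair of adjacent lanes."""
    freqs = [to_thz(w) for w in lanes_nm]
    return [(a - b) * 1000 for a, b in zip(freqs, freqs[1:])]


spacings = lane_spacings_ghz(LR4_LANES_NM)
print([round(s, 1) for s in spacings])
```

Each gap comes out close to 800 GHz, which is far wider than dense WDM spacing and is part of why LR4 modules can use less tightly controlled lasers.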

BiDi optics and WDM pairs: squeezing more capacity into the same fiber

In mature multi-cloud ecosystems, fiber scarcity often becomes the bottleneck rather than switching capacity. BiDi (bidirectional) optics use different wavelengths for send and receive on the same strand, reducing the number of fibers needed. This is especially helpful when you already have OS2 fiber installed but spare strands are limited.

Key specs to check: bidirectional wavelength pair (for example 1310 nm send / 1490 nm receive on one end and the reverse on the other; 10G-class BiDi modules often use a 1270/1330 nm pair instead, so check the datasheet), reach class, and strict pairing rules (each link needs one module of each type, so that one end's transmit wavelength is the other end's receive wavelength). DOM support is useful, but monitoring will not fix a wavelength pairing error; the pairing must be correct at install time.

Best-fit scenario: In a multi-tenant building with limited fiber runs between floors, you may reuse existing OS2 fibers to upgrade from 10G to 25G. BiDi can enable the upgrade while keeping downtime low and avoiding new conduit.

- Pros: saves fiber strands and installation cost, useful for constrained retrofits

- Cons: pairing complexity, stricter install procedures, can increase troubleshooting time if modules are swapped
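The pairing rule can be captured in a pre-install check. This is a minimal sketch with a hypothetical `BidiModule` record; the wavelengths are the 1310/1490 nm example pair mentioned above, and in practice the values would come from the module label or inventory system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BidiModule:
    tx_nm: int  # transmit wavelength in nm
    rx_nm: int  # receive wavelength in nm


def is_valid_pair(a, b):
    """A BiDi link works only if each end transmits what the other end receives."""
    return a.tx_nm == b.rx_nm and a.rx_nm == b.tx_nm and a.tx_nm != a.rx_nm


upstream = BidiModule(tx_nm=1310, rx_nm=1490)
downstream = BidiModule(tx_nm=1490, rx_nm=1310)

print(is_valid_pair(upstream, downstream))  # complementary modules
print(is_valid_pair(upstream, upstream))    # two modules of the same type
```

Running a check like this against the spares list before a change window catches the common mistake of pulling two modules of the same type from the shelf.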

Optical spec comparison you can use during procurement

Buying decisions in multi-cloud ecosystems get easier when you compare the core parameters that actually drive compatibility and link performance. The table below focuses on the most common Ethernet transceiver categories used across modern data centers and hybrid deployments.

| Option (Typical Form Factor) | Wavelength | Medium | Target Reach Class | Connector | DOM | Typical Use in Ecosystems | Operating Temperature Range |
|---|---|---|---|---|---|---|---|
| 10G SR (SFP+) | 850 nm | OM3/OM4 MMF | ~300 m (OM3 class), ~400 m (OM4 class) | LC duplex | Common | ToR to aggregation | 0 to 70 °C (typical) |
| 10G LR (SFP+) | 1310 nm | OS2 SMF | Up to ~10 km | LC duplex | Common | Campus or inter-building | -40 to 85 °C (available) |
| 25G SR (SFP28) | 850 nm | OM4 MMF | Up to ~100 m (class varies) | LC duplex | Recommended | 10G to 25G modernization | -5 to 70 °C (common) |
| 25G LR (SFP28) | 1310 nm | OS2 SMF | Up to ~10 km | LC duplex | Recommended | Longer rack-to-core | -40 to 85 °C (available) |
| 40G SR4 (QSFP+) | 850 nm | OM3/OM4 MMF | ~100 m (OM3), ~150 m (OM4); extended-reach variants go further | MPO-12 (parallel fiber) | Common | 40G aggregation holdover | 0 to 70 °C (typical) |
| 100G SR4 or DR4 (QSFP28) | 850 nm or 1310 nm | MMF or SMF | ~70 m (OM3) to ~100 m (OM4) for SR4; up to ~500 m for DR (class varies) | MPO-12 for SR4; check datasheet for DR | Common | High-density east-west | -5 to 70 °C (common) |
| 100G LR4 (QSFP28) | Four wavelengths around 1310 nm | OS2 SMF | ~10 km (40 km is the ER4 class) | LC duplex | Common | Backbone between sites | -40 to 85 °C (available) |
| BiDi (SFP+/SFP28 class) | 1270/1330 nm or 1310/1490 nm pair (examples) | OS2 SMF | Class dependent | LC simplex or duplex per design | Varies | Fiber-scarce retrofits | -40 to 85 °C (available) |

For standards grounding, Ethernet optics generally align with IEEE 802.3 transceiver specifications and vendor datasheets. For wavelength and link behavior, also consult transceiver vendor documentation and ITU-T guidance for LR4 channel spacing. [Source: IEEE 802.3 Working Group] and [Source: vendor transceiver datasheets]
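During procurement it can help to encode the reach column as data and filter on it. The sketch below covers only the SFP+ and SFP28 rows, uses the nominal reach figures from the table, and applies a hypothetical 10% headroom factor so that runs close to the limit are not shortlisted; both the option labels and the headroom value are assumptions.

```python
# Nominal reach in meters, keyed by (option, fiber type).
NOMINAL_REACH_M = {
    ("10G-SR", "OM3"): 300,
    ("10G-SR", "OM4"): 400,
    ("10G-LR", "OS2"): 10_000,
    ("25G-SR", "OM4"): 100,
    ("25G-LR", "OS2"): 10_000,
}


def candidates(distance_m, fiber, headroom=0.9):
    """List options whose derated reach covers the worst-case run on this fiber."""
    return sorted(option
                  for (option, fiber_type), reach in NOMINAL_REACH_M.items()
                  if fiber_type == fiber and distance_m <= reach * headroom)


print(candidates(85, "OM4"))     # both SR options fit with headroom
print(candidates(95, "OM4"))     # 25G SR drops out once headroom is applied
print(candidates(8_000, "OS2"))  # both LR options fit
```

A lookup like this only narrows the list; switch compatibility and measured loss still decide the final choice.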

Pro Tip: Before you blame “bad optics,” measure end-to-end patch loss with a certified light source and power meter (or an OTDR for fiber plant). In high-rate multimode links like 25G SR, connector contamination and excess patch loss often dominate, and DOM will only tell you symptoms, not root cause.

Selection criteria checklist for multi-cloud ecosystems

Use this ordered checklist to avoid expensive rework and to reduce change-window risk across multi-cloud ecosystems. The goal is to make compatibility and link performance decisions early, then validate with field measurements.

- Distance and fiber type: confirm OM3 vs OM4 vs OS2, then validate patch cord lengths and worst-case run.

- Data rate and lane format: match interface type (SFP+, SFP28, QSFP+, QSFP28) and Ethernet speed expectations.

- Switch compatibility: check vendor support statements; some platforms enforce optical parameter constraints and can reject non-matching modules.

- DOM behavior: ensure DOM is supported by the switch and your monitoring system; confirm alarm thresholds match your operational playbooks.

- Operating temperature and airflow: pick modules with sufficient temperature range for the enclosure; validate with thermal maps if you operate near limits.

- Budget and TCO: compare price per module plus expected failure rate, spares strategy, and downtime cost during replacements.

- Vendor lock-in risk: decide whether to standardize on OEM only or allow third-party with a qualification matrix and acceptance testing.

- Security and supply chain: ensure you can trace serial numbers and purchase through channels with warranty and documentation.

Common mistakes and troubleshooting wins

Optical incidents can be noisy, but most are predictable. Here are frequent failure modes engineers see in multi-cloud ecosystems, with root causes and practical solutions.

“Link down” after a clean swap: mismatched wavelength or wrong BiDi pairing

Root cause: BiDi optics require correct wavelength pairing. If both ends hold the same module type, each receiver sees a wavelength it is built to filter out, so the link stays down even though both lasers are transmitting. Some teams also mix LR and ER classes accidentally during spares rotation.

Solution: verify module labels and vendor wavelength pair documentation before insertion. In the field, confirm with a fiber power meter and check received power on the switch DOM if available.

Flapping at 25G SR: multimode plant loss or fiber contamination

Root cause: high-rate multimode links are sensitive to connector cleanliness, patch cord quality, and excess insertion loss. Old patch panels and scratched or contaminated LC endfaces can cause intermittent link drops.

Solution: clean with lint-free approved methods, re-seat connectors, and re-test with a certified link budget measurement. If possible, compare DOM receive power trends during flaps to detect margin collapse.
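Comparing receive power trends can be as simple as two checks on a series of DOM samples. The sketch below is a hypothetical helper: the samples are assumed to be ordered oldest first, and the 2 dB guard band and 1.5 dB drift limit are placeholder thresholds rather than vendor values.

```python
def margin_collapse(samples_dbm, sensitivity_dbm, guard_db=2.0, drop_db=1.5):
    """Return reasons for concern based on receive-power samples (oldest first)."""
    baseline, latest = samples_dbm[0], samples_dbm[-1]
    reasons = []
    # Too close to the receiver sensitivity floor.
    if latest - sensitivity_dbm < guard_db:
        reasons.append("low_margin")
    # Power has fallen noticeably since the first sample.
    if baseline - latest > drop_db:
        reasons.append("drifting_down")
    return reasons


print(margin_collapse([-5.0, -5.2, -6.1, -8.9], sensitivity_dbm=-10.3))
print(margin_collapse([-4.0, -4.1, -4.0], sensitivity_dbm=-10.3))
```

A link that reports both conditions shortly before a flap is a strong hint that the plant, not the module, is losing margin.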

“DOM present but alarms”: thermal or power constraints in the enclosure

Root cause: operating temperature outside module spec can raise bias current or change optical output power, triggering DOM warnings. In high-density racks, airflow can shift after maintenance or fan replacements.

Solution: check switch fan status, confirm airflow baffles are in place, and validate module temperature readings during steady state. If needed, move the link to a lower temperature slot or replace with a module specified for the harsher temperature range.
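DOM readings are normally judged against four thresholds per parameter: low alarm, low warning, high warning, and high alarm. The sketch below shows that comparison for a temperature reading. The threshold values are invented for illustration; real ones are read from the module itself.

```python
def classify(value, low_alarm, low_warn, high_warn, high_alarm):
    """Map a DOM reading to 'ok', 'warning', or 'alarm' using four thresholds."""
    if value <= low_alarm or value >= high_alarm:
        return "alarm"
    if value <= low_warn or value >= high_warn:
        return "warning"
    return "ok"


# Hypothetical temperature thresholds in degrees Celsius for a commercial module.
TEMP_THRESHOLDS = dict(low_alarm=-5, low_warn=0, high_warn=70, high_alarm=75)

for reading in (45, 72, 76):
    print(reading, classify(reading, **TEMP_THRESHOLDS))
```

Logging the warning state separately from the alarm state gives operations time to check airflow before the module leaves its specified range.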

Works on one vendor switch, fails on another: parameter enforcement differences

Root cause: different switch vendors may enforce stricter optical/electrical requirements or interpret DOM fields differently. Third-party modules can be electrically compatible but fail vendor-specific compliance thresholds.

Solution: maintain a qualification matrix per switch model and module part number. Use a controlled acceptance test that checks link stability, error counters, and DOM alarm behavior.
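A qualification matrix can start as a small keyed record of acceptance results. The sketch below is one possible shape, with hypothetical switch model and part number strings, and acceptance criteria (no flaps, no CRC errors, no DOM alarms during a soak test) that are assumptions to adapt to your own playbook.

```python
from dataclasses import dataclass


@dataclass
class SoakResult:
    link_flaps: int   # link state changes during the soak period
    crc_errors: int   # increase in CRC/FCS error counters
    dom_alarms: int   # DOM alarms raised during the soak period


def accept(result, max_crc=0):
    """Pass only if the link stayed up, error counters stayed flat, and DOM was quiet."""
    return (result.link_flaps == 0
            and result.crc_errors <= max_crc
            and result.dom_alarms == 0)


matrix = {}  # (switch model, module part number) -> status


def record(switch_model, part_number, result):
    matrix[(switch_model, part_number)] = "qualified" if accept(result) else "rejected"


def status(switch_model, part_number):
    """Anything never tested is reported as such rather than assumed to work."""
    return matrix.get((switch_model, part_number), "untested")


record("switch-model-a", "example-10g-sr", SoakResult(0, 0, 0))
record("switch-model-b", "example-10g-sr", SoakResult(3, 120, 0))
print(status("switch-model-a", "example-10g-sr"))
print(status("switch-model-b", "example-10g-sr"))
print(status("switch-model-c", "example-10g-sr"))
```

Keying on both switch model and part number reflects the point above: the same module can pass on one platform and fail on another.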

Cost and ROI note: OEM vs third-party with measurable tradeoffs

In multi-cloud ecosystems, optical spend is recurring because spares and refresh cycles matter. OEM modules often cost more but may reduce qualification time and vendor escalations. Third-party modules can be significantly cheaper, but you need a qualification program to manage compatibility risk.

Realistic price ranges (typical market observations): 10G SR SFP+ modules often fall in the low tens of USD for third-party and higher for OEM; 25G SR SFP28 modules are commonly higher; 100G LR4 QSFP28 modules can be several hundred USD to over a thousand USD depending on reach class and vendor. TCO should include: module cost, expected MTBF/return rate, labor time for troubleshooting, downtime impact, and qualification testing. Field teams often find that the ROI of third-party optics improves when you standardize part numbers and use consistent acceptance tests rather than ad-hoc swaps.

For accuracy, always consult current vendor pricing and warranty terms. Also align with your organization’s procurement and security policies for supply chain assurance. [Source: vendor datasheets and warranty documentation]
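The TCO components listed above can be combined into a rough per-port figure. The sketch below is a simplified model under stated assumptions: failures are independent, a failed module is replaced at the same unit price, and every number in the example is hypothetical rather than market data.

```python
def tco_per_port(unit_price, annual_failure_rate, years,
                 swap_labor_cost, downtime_cost_per_failure,
                 qualification_cost_per_port=0.0):
    """Expected cost of one port over the period, including replacements."""
    expected_failures = annual_failure_rate * years
    cost_per_failure = unit_price + swap_labor_cost + downtime_cost_per_failure
    return (unit_price + qualification_cost_per_port
            + expected_failures * cost_per_failure)


# Hypothetical inputs, in USD, over five years.
oem = tco_per_port(unit_price=300, annual_failure_rate=0.01, years=5,
                   swap_labor_cost=50, downtime_cost_per_failure=200)
third_party = tco_per_port(unit_price=40, annual_failure_rate=0.02, years=5,
                           swap_labor_cost=50, downtime_cost_per_failure=200,
                           qualification_cost_per_port=15)
print(f"OEM: {oem:.2f}  third-party: {third_party:.2f}")
```

The result is very sensitive to the downtime cost per failure, so that input deserves the most scrutiny when you replace these placeholders with your own figures.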

Summary ranking table: pick the best option for your ecosystem

The table below ranks the top optical choices by typical ecosystem fit, not by absolute performance. Use it as a quick starting point, then confirm with distance, fiber plant validation, and switch compatibility checks.

| Rank | Optical Choice | Best When | Main Constraint | Operational Complexity |
|---|---|---|---|---|
| 1 | 10G SR (SFP+) | Dense data center runs on OM3/OM4 | Multimode distance and plant quality | Low |
| 2 | 25G SR (SFP28) | Modernizing east-west within 100 m class | Strict multimode loss and cleanliness | Medium |
| 3 | 10G LR (SFP+) | Campus and inter-building OS2 links | OS2 build and connector discipline | Low |
| 4 | 25G LR (SFP28) | Longer reach at 25G without redesign | Higher module cost | Medium |
| 5 | 100G LR4 (QSFP28) | Backbone between sites over OS2 | Cost and wavelength plan correctness | Medium |