A 400G upgrade often fails not because the optics are unavailable, but because the migration strategy was not engineered end-to-end across optics selection, forward error correction (FEC) behavior, digital optical monitoring (DOM) handling, and traffic cutover. This article is for network engineers and platform teams planning a leaf-spine refresh in production environments where downtime is constrained and rollback must be deterministic. It walks through a concrete deployment case with measured link performance, operational steps, and troubleshooting patterns tied to IEEE 802.3 and vendor datasheets. Update date: 2026-05-02.

Problem / Challenge: Why 400G Migration Strategies Fail in Production

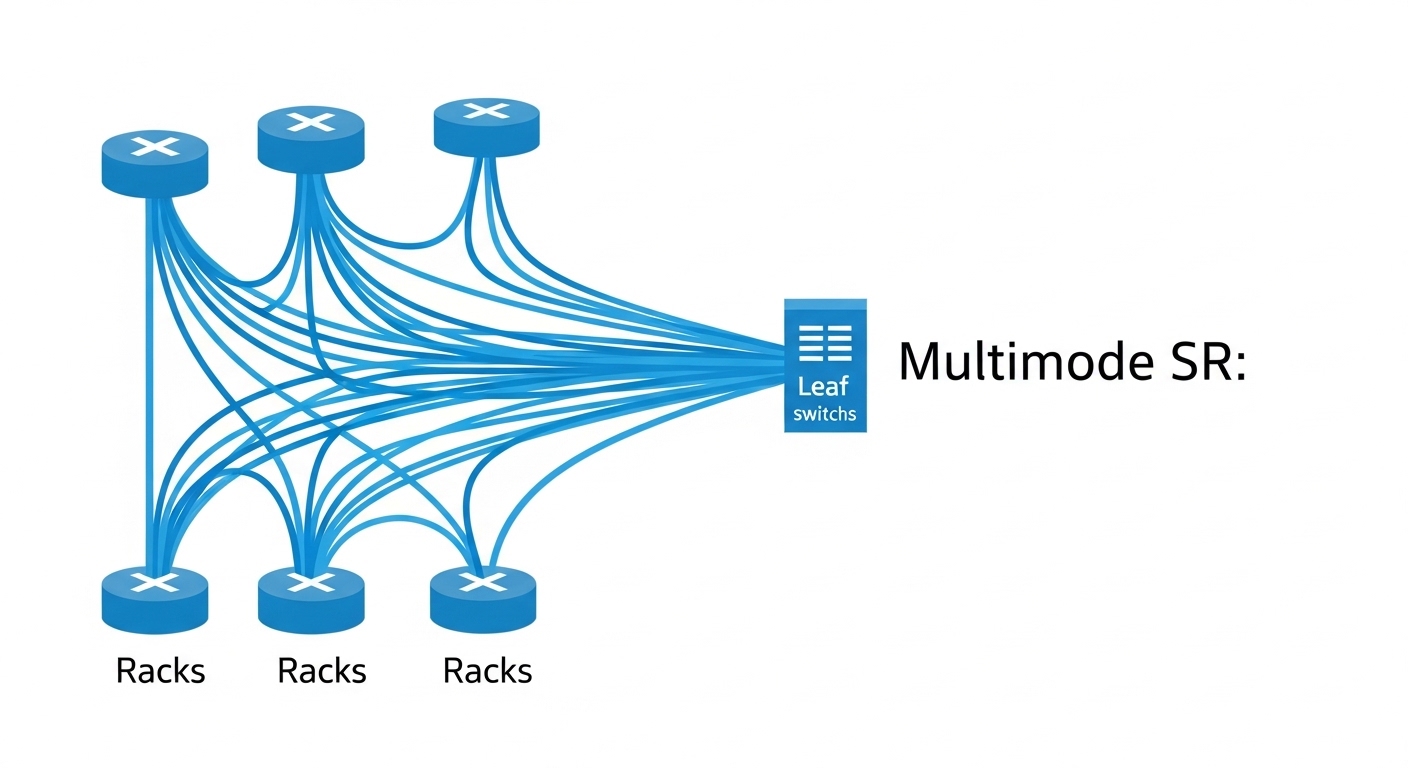

In our case, the enterprise ran a 3-tier leaf-spine fabric with 10G/25G edges and 100G spine uplinks, and needed 400G capacity for a new analytics workload. The primary challenge was to expand uplink bandwidth while preserving application latency, without triggering link flaps during optics initialization or during FEC mode negotiation. We also had to meet strict power and thermal constraints at 40 °C ambient and avoid unexplained incompatibilities between optics vendors and switch PHY implementations.

At the physical layer, 400G options map to coherent or non-coherent transport depending on module type. For short-reach data center deployments, engineers typically use QSFP-DD 400G SR8 or OSFP 400G SR8 over multimode MPO-16 (or two-row MPO-24) cabling, because SR8 needs 16 fibers: eight transmit and eight receive. FEC is active on these links; IEEE 802.3 builds RS(544,514) FEC into the 400GBASE-R PCS. The PHY behavior is defined by the IEEE 802.3 Ethernet specifications for 400GBASE-R and related reaches, while module electrical interfaces and DOM behavior are governed by SFF and module management specifications plus vendor implementation details. See [Source: IEEE 802.3].

Environment Specs: Link Budget, Cabling, and Hardware Constraints

Before picking optics, we measured the real plant. The environment included 48-port top-of-rack leaf switches uplinking to a pair of spine switches using 100G today, with the target to replace specific uplink groups with 400G. We verified cabling type, connectors, and polarity, then calculated link budget using measured insertion loss rather than nameplate estimates. For example, we confirmed MPO trunk assemblies with typical mated connector loss and patch panel loss, then validated that the total loss stayed within the SR8 operating envelope at the specified temperature profile.
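The link budget check described above can be sketched as a small calculation. This is a minimal illustration, not the tooling we used: the channel name, the per-segment loss figures, the 1.9 dB allowance, and the 0.3 dB minimum margin are all hypothetical placeholders. Take the real allowance from your module datasheet and the real losses from your OLTS records.

```python
from dataclasses import dataclass


@dataclass
class ChannelMeasurement:
    """Measured insertion loss for one uplink channel, in dB."""
    name: str
    segment_losses_db: list[float]  # trunks, patch cords, panels (measured values)


def loss_margin_db(channel: ChannelMeasurement, max_channel_loss_db: float) -> float:
    """Return remaining margin: allowed channel loss minus total measured loss."""
    total = sum(channel.segment_losses_db)
    return max_channel_loss_db - total


# Hypothetical values for illustration only.
MAX_LOSS_DB = 1.9    # assumed allowance; use the figure from the module datasheet
MIN_MARGIN_DB = 0.3  # assumed site policy threshold

channel = ChannelMeasurement("leaf12-spine1", [0.45, 0.35, 0.40, 0.30])
margin = loss_margin_db(channel, MAX_LOSS_DB)
status = "OK" if margin >= MIN_MARGIN_DB else "REMEDIATE"
print(f"{channel.name}: margin {margin:.2f} dB -> {status}")
```

Running the check per channel, rather than per corridor, makes it easier to find the single worst patch assembly instead of averaging it away.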

400G short-reach optics comparison (what we actually selected)

| Parameter | 400G SR8 (QSFP-DD) | 400G SR8 (OSFP) | 400G DR4 (if used) |
|---|---|---|---|
| Nominal wavelength | ~850 nm, 8 parallel lanes | ~850 nm, 8 parallel lanes | ~1310 nm, 4 parallel lanes |
| Reach class | 70 m on OM3, 100 m on OM4/OM5 (confirm in vendor datasheet) | 70 m on OM3, 100 m on OM4/OM5 (confirm in vendor datasheet) | 500 m on single-mode fiber |
| Fiber interface | MPO-16 or MPO-24, multimode (16 fibers active) | MPO-16 or MPO-24, multimode (16 fibers active) | MPO-12, single-mode (8 fibers active) |
| Data rate | 400G aggregate | 400G aggregate | 400G aggregate |
| Typical power class | ~7–12 W per module (datasheet dependent) | ~12–15 W per module (datasheet dependent) | ~6–10 W per module |
| Operating temperature | 0 to 70 °C common for enterprise | -5 to 70 °C or 0 to 70 °C common | 0 to 70 °C common |
| Connector cleaning requirement | High (MPO endfaces, inspect every patch) | High (MPO endfaces, inspect every patch) | High (MPO endfaces, inspect every patch) |

We primarily used SR8 for the leaf-spine hop because the measured plant loss fit within the SR8 power budget, and SR8 kept us on multimode fiber instead of requiring the single-mode cabling that DR4 needs. For reference, the optics we considered included Cisco-compatible 400G SR8 families and third-party options such as Finisar/FS.com style SR8 modules; exact part numbers vary by switch vendor and DOM behavior. Always validate against the optics compatibility matrix the switch vendor publishes for your specific platform, and against the module vendor datasheet. [Source: vendor switch QSFP-DD/OSFP compatibility matrix; vendor module datasheets].

Chosen Solution & Why: Engineering the Optics and Cutover Path

Our chosen migration strategy assigned module types by physical location: QSFP-DD SR8 for leaf uplinks where the switch ports were rated and validated for that cage, and OSFP SR8 where the chassis supported higher power classes and had better airflow design. This prevented port-level incompatibility and reduced the risk of marginal thermal behavior during peak traffic. We also enforced one multimode fiber grade (OM3 or OM4, not a mix) in the affected corridors to minimize modal and insertion loss variability across patch panels.

Implementation steps (deterministic cutover)

- Pre-validation in a staging rack: we installed the exact optics SKUs in the same switch models, then ran BER and link stability tests at line rate using traffic generators. We confirmed that DOM telemetry (temperature, laser bias, supply voltage) populated correctly and that the switch accepted vendor-specific DOM fields without raising “unsupported module” faults.

- Link loss verification with OTDR/OLTS: we used a calibrated OLTS procedure on MPO trunks and patch panels, recording worst-case insertion loss per hop. If the margin was under the vendor-recommended threshold, we remediated by replacing the highest-loss patch assemblies.

- Polarity and cleanliness enforcement: we cleaned MPO endfaces with lint-free wipes and approved inspection tools, then re-terminated any channel that showed contamination signatures. For MPO, we verified polarity with a known-good reference jumper and documented the polarity mapping in the change ticket.

- Controlled enablement of 400G interfaces: we enabled interfaces in maintenance windows with traffic drained from the target uplink group. We brought links up with conservative interface settings first (default FEC behavior as per switch defaults) and only then shifted traffic.

- Rollback plan: if any interface showed excessive FEC correction counts, CRC spikes, or link retrains, we reverted to the prior 100G uplink set within the change window and captured logs for vendor escalation.

Pro Tip: In many 400G Ethernet implementations, “link up” can occur even when the optics are marginal; the real indicator shows up later as rising FEC corrected-codeword counts, then uncorrectable codewords and CRC errors under load. During cutover, monitor FEC counters and interface error states at steady-state traffic, not only at initial link bring-up.
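A minimal sketch of the counter check behind this tip follows. It compares two polls of cumulative interface counters and reports what moved. The field names, the rate limit, and the sample values are hypothetical; real counter names and sensible limits depend on your switch platform, and the sketch ignores counter wrap and resets.

```python
from dataclasses import dataclass


@dataclass
class CounterSample:
    """One poll of cumulative interface counters (field names are hypothetical)."""
    timestamp_s: float
    fec_corrected: int    # cumulative FEC corrected codewords
    fec_uncorrected: int  # cumulative FEC uncorrectable codewords
    crc_errors: int       # cumulative frames received with CRC errors


def evaluate_interval(prev: CounterSample, curr: CounterSample,
                      max_corrected_per_s: float) -> list[str]:
    """Compare two samples; return findings (an empty list means healthy)."""
    dt = curr.timestamp_s - prev.timestamp_s
    if dt <= 0:
        raise ValueError("samples must be in increasing time order")
    findings = []
    corrected_rate = (curr.fec_corrected - prev.fec_corrected) / dt
    if corrected_rate > max_corrected_per_s:
        findings.append(f"corrected codeword rate {corrected_rate:.1f}/s exceeds limit")
    if curr.fec_uncorrected > prev.fec_uncorrected:
        findings.append("uncorrectable codewords increased")
    if curr.crc_errors > prev.crc_errors:
        findings.append("CRC errors increased")
    return findings


# Hypothetical polls taken 60 s apart under steady-state load.
idle = CounterSample(0.0, fec_corrected=1_000, fec_uncorrected=0, crc_errors=0)
loaded = CounterSample(60.0, fec_corrected=601_000, fec_uncorrected=3, crc_errors=2)
for finding in evaluate_interval(idle, loaded, max_corrected_per_s=5_000.0):
    print(finding)
```

Working with deltas rather than absolute values matters here, because a link that has been up for weeks can carry a large cumulative corrected count and still be perfectly healthy.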

Measured Results: What Improved After the 400G Migration

After completing the leaf-spine uplink swap, we observed measurable improvements in throughput headroom and reduced congestion at the aggregation layer. In our environment, each leaf had 48 downlinks at 25G and uplinks migrated from 4 x 100G to 4 x 400G for targeted corridors, effectively increasing uplink capacity by about 4x per leaf. During peak analytical scans, we saw reduced queue depth on spine-facing ports and lower tail latency at the application gateway.
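The capacity change is easy to check with arithmetic. The sketch below computes the leaf oversubscription ratio before and after the swap, assuming all 48 downlinks run at 25G and all four uplinks on the leaf were migrated. The function name is ours, and leaves outside the targeted corridors would keep the original ratio.

```python
def oversubscription(downlinks: int, downlink_gbps: float,
                     uplinks: int, uplink_gbps: float) -> float:
    """Ratio of total downlink capacity to total uplink capacity."""
    return (downlinks * downlink_gbps) / (uplinks * uplink_gbps)


before = oversubscription(48, 25, 4, 100)  # 1200G down / 400G up
after = oversubscription(48, 25, 4, 400)   # 1200G down / 1600G up

print(f"before: {before:.2f}:1, after: {after:.2f}:1")
```

Under these assumptions the leaf moves from 3:1 oversubscribed to 0.75:1, meaning the uplinks can carry more than the downlinks can offer.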

Operationally, we learned that migration strategies must include telemetry-based acceptance criteria. For example, we defined success as stable link operation for 24 hours with no link flaps, sustained BER/packet error rates within vendor-recommended ranges, and DOM telemetry within nominal thresholds. We also tracked failure rates of optics and patch components; in the first week, the majority of early “issues” were traced to dirty MPO endfaces rather than module electronics, reinforcing the need for inspection gating.
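The acceptance criteria above can be encoded as a simple gate so that sign-off does not depend on someone eyeballing dashboards. This is a hedged sketch: the `SoakResult` fields, the frame error ratio limit, and the temperature threshold are hypothetical stand-ins for whatever your vendor recommends and your telemetry exposes.

```python
from dataclasses import dataclass


@dataclass
class SoakResult:
    """Summary of one link's soak period (field names are hypothetical)."""
    hours_observed: float
    link_flaps: int
    frame_error_ratio: float   # errored frames / total frames received
    max_module_temp_c: float   # highest DOM temperature seen during the soak


def passes_acceptance(r: SoakResult,
                      min_hours: float = 24.0,
                      max_frame_error_ratio: float = 1e-12,  # assumed limit
                      temp_warn_c: float = 65.0              # assumed threshold
                      ) -> tuple[bool, list[str]]:
    """Return (passed, reasons); reasons lists every criterion that failed."""
    reasons = []
    if r.hours_observed < min_hours:
        reasons.append(f"soak too short: {r.hours_observed:.1f} h < {min_hours:.1f} h")
    if r.link_flaps > 0:
        reasons.append(f"{r.link_flaps} link flap(s) during soak")
    if r.frame_error_ratio > max_frame_error_ratio:
        reasons.append("frame error ratio above limit")
    if r.max_module_temp_c >= temp_warn_c:
        reasons.append("module temperature reached warning threshold")
    return (not reasons, reasons)


good = SoakResult(hours_observed=24.5, link_flaps=0,
                  frame_error_ratio=0.0, max_module_temp_c=58.0)
bad = SoakResult(hours_observed=24.5, link_flaps=1,
                 frame_error_ratio=0.0, max_module_temp_c=66.0)
print(passes_acceptance(good))
print(passes_acceptance(bad))
```

Returning every failed criterion, not just the first, gives the change ticket a complete record when a link is rejected.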

Selection Criteria Checklist for 400G Migration Strategies

Below is the ordered checklist engineers should use when designing migration strategies for 400G short-reach Ethernet. We used it to reduce vendor churn and avoid late-stage rework.

- Distance and plant loss: confirm measured insertion loss and connector loss for each MPO trunk and patch channel; ensure margin against the module vendor’s SR8 power budget.

- Switch compatibility: confirm optics support per port and platform, including whether the switch enforces vendor OUI checks or specific DOM fields.

- Data rate and FEC mode behavior: validate whether the platform uses RS-FEC or another scheme and how it reports correction counters; confirm expected stability at line rate.

- DOM support and telemetry granularity: verify temperature and laser bias telemetry availability; ensure your monitoring stack can ingest DOM fields without throttling or parsing failures.

- Operating temperature and airflow: validate module operating range against measured chassis inlet temperatures; consider worst-case seasonal ramp.

- Connector and cleaning workflow fit: MPO endfaces require a disciplined inspection and cleaning SOP; ensure your team has the tools and time.

- Vendor lock-in risk and RMA process: compare OEM vs third-party TCO with realistic RMA lead times, warranty terms, and whether the vendor provides compatibility guidance for your exact switch model.

Common Mistakes / Troubleshooting in 400G Migrations

Even teams with strong fiber discipline can run into trouble during a 400G migration. Here are the specific failure modes we encountered and how we addressed them.

Link flaps that correlate with temperature spikes

Root cause: module temperature reached upper operational limits due to constrained airflow around specific cage zones, especially when multiple high-power OSFP modules were installed in adjacent ports. Solution: measure inlet temperature per zone, adjust fan profiles or airflow baffles, and re-seat modules to ensure proper heat transfer.

High FEC correction counts under load with “stable” link state

Root cause: marginal optical power caused by a single high-loss patch assembly, often from contaminated MPO endfaces or a damaged ferrule. Solution: isolate by swapping patch segments and running per-link diagnostics; inspect MPO endfaces and replace the worst-loss component rather than the entire trunk.

“Unsupported module” or intermittent DOM parsing faults

Root cause: switch firmware expecting specific DOM field formatting or a monitoring collector failing due to unexpected DOM scaling units. Solution: validate DOM compatibility in staging with the same switch firmware version; update monitoring parsers and confirm that interface bring-up succeeds with no module warnings.
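Scaling mistakes in a collector are easy to reproduce. The sketch below decodes two raw 16-bit DOM values using the scaling conventions we understand SFF-8472 and CMIS to share: temperature as a signed value in 1/256 °C steps, and optical power as an unsigned value in 0.1 µW steps. The function names are ours, and you should confirm the scaling against the management specification your modules implement before relying on it.

```python
import math
import struct


def decode_temperature_c(raw: bytes) -> float:
    """Decode a signed 16-bit big-endian temperature, 1/256 degC per LSB."""
    (value,) = struct.unpack(">h", raw)
    return value / 256.0


def decode_power_dbm(raw: bytes) -> float:
    """Decode an unsigned 16-bit big-endian optical power, 0.1 uW per LSB, to dBm."""
    (value,) = struct.unpack(">H", raw)
    if value == 0:
        return float("-inf")  # no light detected; log10(0) is undefined
    milliwatts = value / 10000.0  # 0.1 uW per LSB = 1e-4 mW per LSB
    return 10.0 * math.log10(milliwatts)


# Hypothetical raw register contents.
print(decode_temperature_c(bytes([0x28, 0x80])))  # 0x2880 = 10368 -> 40.5
print(decode_power_dbm(bytes([0x27, 0x10])))      # 0x2710 = 10000 -> 0.0
```

A collector that treats the temperature field as unsigned, or the power field as already being in dBm, produces plausible-looking but wrong values, which is why we compared decoded telemetry against the switch CLI in staging.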

Polarity errors that only appear on certain lanes

Root cause: MPO polarity mapping mismatch between trunk and patch panel, especially when using different jumper types across corridors. Solution: verify polarity using a known-good reference jumper and document polarity maps in the change record; then re-terminate only the affected patch panel rows.

Cost & ROI Note: Budgeting for Optics, Power, and Risk

In real deployments, 400G optics cost varies widely by vendor, reach class, and whether you buy OEM-branded modules. Typical enterprise street pricing ranges roughly from $800 to $2,000 per 400G SR8 module depending on SKU and availability; OSFP variants can sit toward the higher end due to package and thermal design. TCO should include power and cooling impact: a 400G SR8 module may draw on the order of 7–15 W, and multiplying by dozens of ports plus cooling overhead can meaningfully affect annual operating cost.
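A rough operating cost estimate follows from the module power figure. In the sketch below, the module count, the PUE of 1.5, and the electricity price of $0.12 per kWh are hypothetical inputs chosen only to show the calculation; substitute your own facility figures.

```python
HOURS_PER_YEAR = 8760


def annual_power_cost_usd(modules: int, watts_per_module: float,
                          pue: float, usd_per_kwh: float) -> float:
    """Estimate yearly electricity cost for optics, including cooling overhead via PUE."""
    it_kw = modules * watts_per_module / 1000.0
    facility_kw = it_kw * pue  # PUE scales IT load up to total facility load
    return facility_kw * HOURS_PER_YEAR * usd_per_kwh


# Hypothetical inputs: 64 modules at 12 W, PUE 1.5, $0.12 per kWh.
cost = annual_power_cost_usd(modules=64, watts_per_module=12.0,
                             pue=1.5, usd_per_kwh=0.12)
print(f"estimated annual power cost: ${cost:,.2f}")
```

At these inputs the power cost is small next to the purchase price of the modules, so the more important thermal question is usually cage-level cooling headroom rather than the electricity bill.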

ROI comes from capacity relief and operational stability. If the migration reduces oversubscription and avoids emergency upgrades, the avoided downtime and reduced incident tickets can outweigh the incremental optics cost. Third-party optics can be cost-effective, but only if switch compatibility and DOM behavior are validated for your exact platform and firmware release. [Source: vendor datasheets; published RMA and warranty policies from optics and switch vendors].

FAQ: 400G Migration Strategies Engineers Actually Ask

What migration strategies minimize downtime during 400G cutovers?

Use a parallel uplink approach: validate the optics in a staging rack, bring up the 400G interfaces in a maintenance window while traffic is drained from the target group, then shift traffic in controlled increments. Preserve rollback by keeping the previous 100G links cabled and ready to carry traffic until 400G stability is proven under load.

Which optics type is best for typical enterprise leaf-spine distances?

For short-reach data center links, SR8 (QSFP-DD or OSFP) is usually the best fit if the measured plant loss fits the vendor power budget. For longer reach or different cabling plants, DR4 or other reach classes may be viable, but you must re-evaluate connector types, fiber counts, and polarity workflows.

How do I validate DOM support before rolling to production?

Install the exact module SKUs into the same switch model with the same firmware version, then verify that DOM telemetry populates and that the switch does not raise module compatibility warnings. Confirm your telemetry pipeline ingests DOM fields without parsing errors and that counters for FEC and CRC behave normally under load.

What are the fastest troubleshooting steps when 400G links misbehave?

Start with physical layer checks: inspect and clean MPO endfaces, verify polarity mapping, and confirm insertion loss on the failing hop. Then correlate interface counters (FEC correction, CRC errors, link retrains) with load patterns to determine whether the issue is marginal optics power or a configuration mismatch.

Is it safe to mix optics vendors during a 400G migration?

Mixing can work, but only after compatibility testing with your switch platform and firmware. Differences in DOM formatting, FEC implementation details, and electrical interface tolerances can cause intermittent issues that only appear under certain temperature or traffic patterns.

How should I plan the rollout timeline for migration strategies?

Plan for at least two phases: a staging validation phase and a production cutover phase with a rollback window. Include time for fiber inspection and remediation because many early failures trace to patch components rather than the transceivers themselves.

If you want to replicate this outcome, treat the migration as an engineering program: validate optics and DOM behavior, prove the link budget with measured loss, and execute cutover with telemetry-based acceptance gates. Next, review 400G optics compatibility and DOM validation to operationalize a repeatable validation workflow.

Author bio: Field-focused network performance engineer specializing in Ethernet PHY bring-up, optics qualification, and telemetry-driven cutover validation in enterprise data centers. Helps teams reduce migration risk by aligning IEEE PHY expectations, vendor DOM behavior, and measurable link acceptance criteria.